- LDAvis is an interactive visualization tool built using R and D3 to help users interpret topics estimated using Latent Dirichlet Allocation (LDA).

- It aims to answer three questions: what each topic means, how prevalent each topic is, and how topics relate to each other.

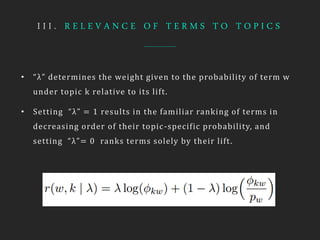

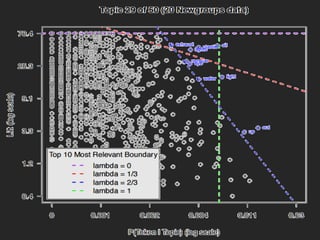

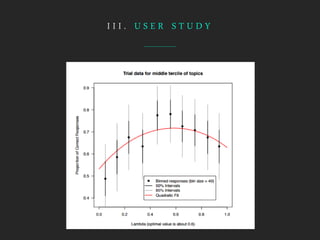

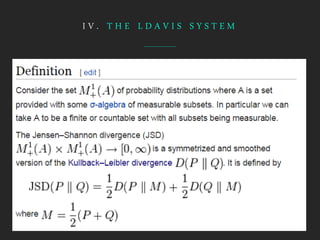

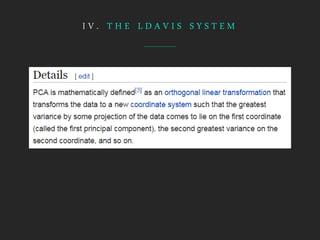

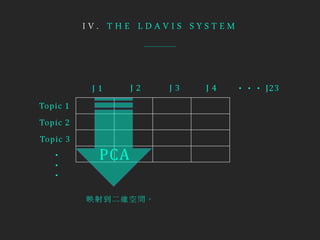

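- The tool visualizes term relevance (which terms best characterize a topic), topic prevalence (how much of the corpus each topic accounts for), and inter-topic distances (how similar topics are to one another) to help users understand the topics in a corpus.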
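The three quantities above can be sketched in a few lines. This is a minimal sketch using the Python standard library, assuming a fitted LDA model has already produced a topic-term matrix `phi` (p(w | k)), document-topic proportions `theta`, document lengths, and corpus-wide term probabilities `p`. The relevance score λ log φ_kw + (1 − λ) log(φ_kw / p_w) is the measure LDAvis uses to rank terms for a topic. Jensen-Shannon divergence between topic-term distributions is its default inter-topic distance, which it then projects to two dimensions with multidimensional scaling (omitted here). The function names and toy numbers are hypothetical, and this is not the package's own code.

```python
import math


def relevance(phi_kw, p_w, lam):
    """Relevance of term w to topic k: lam * log p(w|k) + (1 - lam) * log lift.

    phi_kw: probability of the term within the topic, p(w | k)
    p_w:    marginal probability of the term in the whole corpus, p(w)
    lam:    weight in [0, 1]; 1 ranks by within-topic probability, 0 by lift
    """
    return lam * math.log(phi_kw) + (1 - lam) * math.log(phi_kw / p_w)


def top_terms(phi_k, p, vocab, lam, n=10):
    """Return the n terms of one topic with the highest relevance."""
    scores = [relevance(phi_k[i], p[i], lam) for i in range(len(vocab))]
    order = sorted(range(len(vocab)), key=lambda i: scores[i], reverse=True)
    return [vocab[i] for i in order[:n]]


def kl_divergence(a, b):
    """KL(a || b) in nats; terms with a_i == 0 contribute nothing."""
    return sum(x * math.log(x / y) for x, y in zip(a, b) if x > 0)


def jensen_shannon(a, b):
    """Jensen-Shannon divergence between two term distributions (nats, <= log 2)."""
    m = [(x + y) / 2 for x, y in zip(a, b)]
    return 0.5 * kl_divergence(a, m) + 0.5 * kl_divergence(b, m)


def distance_matrix(phi):
    """Pairwise inter-topic divergences; an MDS step would project these to 2-D."""
    return [[jensen_shannon(a, b) for b in phi] for a in phi]


def topic_prevalence(theta, doc_lengths):
    """Token-weighted share of the corpus assigned to each topic."""
    n_topics = len(theta[0])
    totals = [
        sum(theta[d][k] * doc_lengths[d] for d in range(len(theta)))
        for k in range(n_topics)
    ]
    total = sum(totals)
    return [t / total for t in totals]


# Toy example: two topics over a four-term vocabulary (made-up numbers).
vocab = ["model", "topic", "the", "gene"]
phi = [[0.30, 0.20, 0.45, 0.05],   # topic 0: p(w | k=0)
       [0.05, 0.10, 0.45, 0.40]]   # topic 1: p(w | k=1)
p = [0.175, 0.15, 0.45, 0.225]     # corpus-wide p(w), assumed given

print(top_terms(phi[0], p, vocab, lam=1.0, n=2))  # ['the', 'model']
print(top_terms(phi[0], p, vocab, lam=0.0, n=2))  # ['model', 'topic']
```

With λ = 1 the common word "the" tops the list because it is frequent in the topic; with λ = 0 it drops out because it is equally frequent everywhere, and terms that are distinctive for the topic rise instead.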