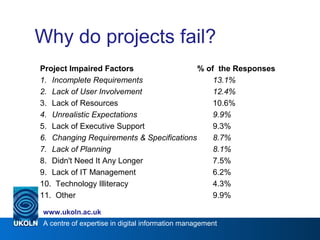

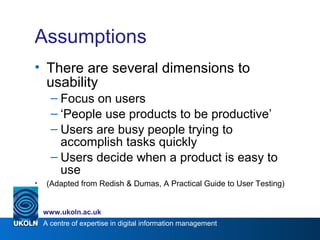

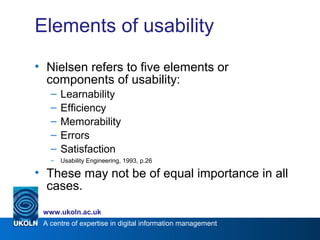

The document discusses usability testing in digital information management. It identifies factors that contribute to project failure and stresses the importance of involving users. It outlines the components of usability and methods for testing, highlights the need for effective task scenarios for assessing how users interact with a system, and explains the value of applying heuristics during evaluation. Finally, it describes how a structured testing process can improve the user experience.

![A centre of expertise in digital information management

www.ukoln.ac.uk

Introducing usability

• Definition: the measure of a product’s potential to accomplish the goals of a user

• How easy a user interface is to understand and use

• Ability of a system to be used [easily? efficiently? quickly?]

• The people who use the project can accomplish their tasks quickly and easily](https://image.slidesharecdn.com/iwmw2007usabilitytestingforthewww-160308203651/85/IWMW-2007-Usability-Testing-for-the-WWW-4-320.jpg)
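The definition slide frames usability as whether people can accomplish their tasks quickly and easily. One common way to put numbers on that after a test session is to record, for each task scenario, whether each participant completed it and how long it took. The sketch below assumes that record format; `TaskAttempt`, `summarise` and the sample task are hypothetical names chosen for illustration, not part of the original material.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class TaskAttempt:
    """One participant's attempt at one task scenario (hypothetical record format)."""
    participant: str
    task: str
    completed: bool
    seconds: float  # time on task, as noted by the facilitator


def summarise(attempts: list[TaskAttempt]) -> dict[str, dict[str, float]]:
    """Per task: share of attempts completed, and median time of the successful ones."""
    by_task: dict[str, list[TaskAttempt]] = {}
    for attempt in attempts:
        by_task.setdefault(attempt.task, []).append(attempt)

    summary: dict[str, dict[str, float]] = {}
    for task, rows in by_task.items():
        # Only successful attempts count towards "quickly"; failures would skew the time.
        done = [row.seconds for row in rows if row.completed]
        summary[task] = {
            "completion_rate": len(done) / len(rows),
            "median_seconds": median(done) if done else float("nan"),
        }
    return summary


if __name__ == "__main__":
    attempts = [
        TaskAttempt("P1", "find contact page", True, 42.0),
        TaskAttempt("P2", "find contact page", False, 120.0),
        TaskAttempt("P3", "find contact page", True, 58.0),
    ]
    print(summarise(attempts))
    # {'find contact page': {'completion_rate': 0.6666666666666666, 'median_seconds': 50.0}}
```

Using the median rather than the mean keeps one participant who wandered off-task from dominating the time figure, which matters with the small participant counts typical of a usability test.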

![Applying the results

Bug fixes

Feature requests

Major objections

Misnamed element

Confusing colours

It would be much easier if…

…‘this textbox autocompleted’

…‘the system remembered my email preferences’

I don’t like [type of application]

I prefer [totally different type of application]

…(Oh)

Strange interaction flow

‘Low-hanging fruit’?](https://image.slidesharecdn.com/iwmw2007usabilitytestingforthewww-160308203651/85/IWMW-2007-Usability-Testing-for-the-WWW-23-320.jpg)
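The results slide sorts what participants say into bug fixes, feature requests and major objections, and asks whether some of them are ‘low-hanging fruit’. A minimal triage sketch, assuming each finding has already been tagged with one of those three categories, given a rough effort estimate, and counted by how many participants ran into it. The `effort` and `participants` fields, the `max_effort=2` cut-off, and the rule that only cheap bug fixes count as low-hanging fruit are all assumptions made for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Category(Enum):
    BUG_FIX = "bug fix"                  # e.g. misnamed element, confusing colours
    FEATURE_REQUEST = "feature request"  # "It would be much easier if..."
    MAJOR_OBJECTION = "major objection"  # "I don't like [type of application]"


@dataclass
class Finding:
    note: str
    category: Category
    effort: int        # hypothetical scale: 1 (trivial) to 5 (large)
    participants: int  # hypothetical: how many participants hit this issue


def low_hanging_fruit(findings: list[Finding], max_effort: int = 2) -> list[Finding]:
    """Cheap bug fixes only (an assumed rule), most widely encountered first."""
    picks = [
        f for f in findings
        if f.category is Category.BUG_FIX and f.effort <= max_effort
    ]
    return sorted(picks, key=lambda f: f.participants, reverse=True)


if __name__ == "__main__":
    findings = [
        Finding("Misnamed element", Category.BUG_FIX, 1, 4),
        Finding("Confusing colours", Category.BUG_FIX, 2, 2),
        Finding("Textbox should autocomplete", Category.FEATURE_REQUEST, 3, 1),
        Finding("Prefers a different type of application", Category.MAJOR_OBJECTION, 5, 1),
    ]
    print([f.note for f in low_hanging_fruit(findings)])
    # ['Misnamed element', 'Confusing colours']
```

In this sketch feature requests and major objections never reach the shortlist, on the reasoning that a request such as autocompletion needs design work and an objection to the whole type of application cannot be met by a quick fix; a real triage might weigh them differently.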