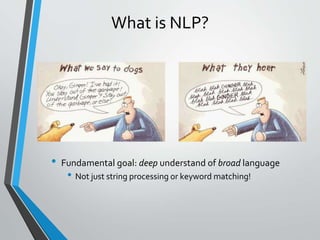

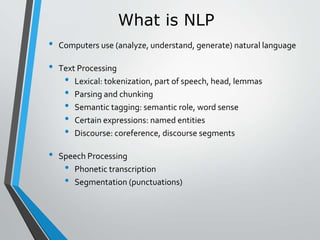

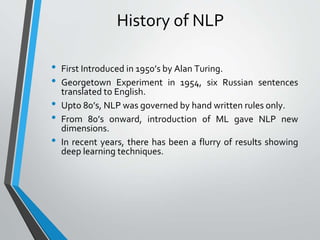

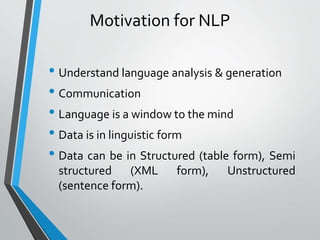

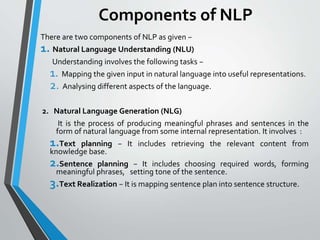

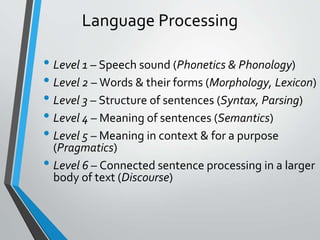

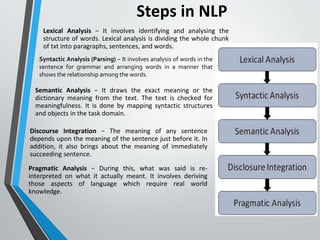

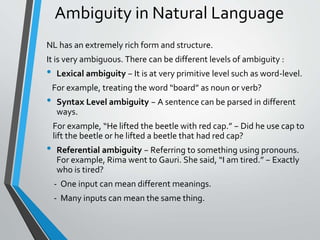

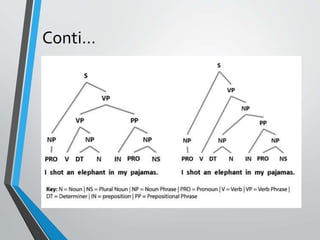

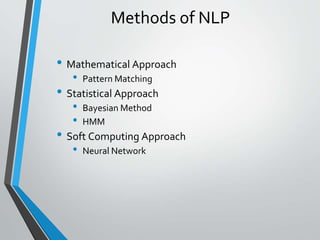

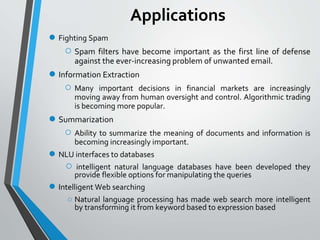

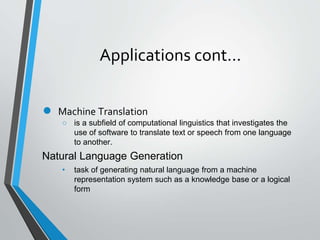

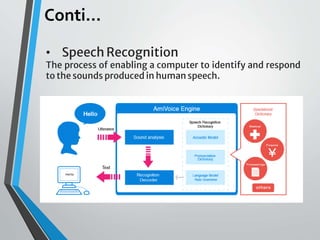

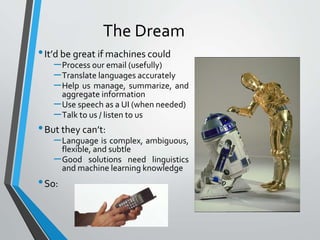

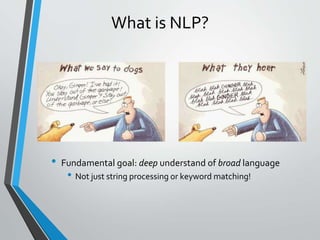

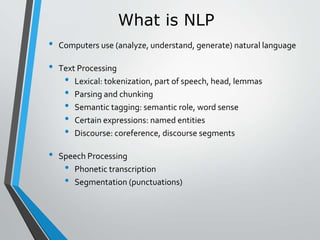

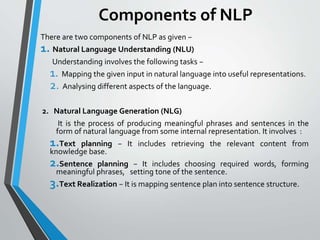

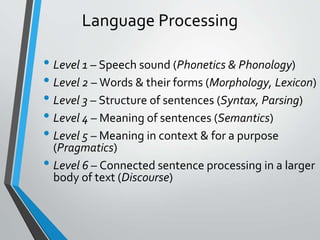

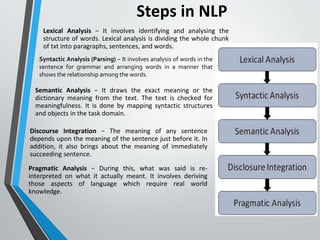

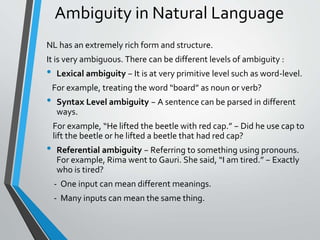

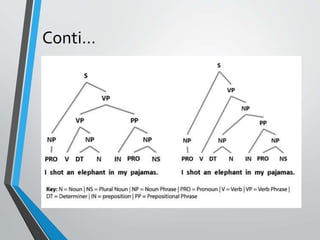

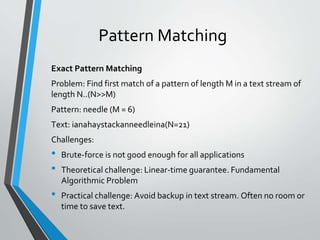

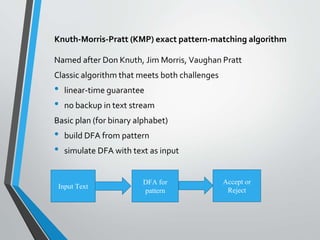

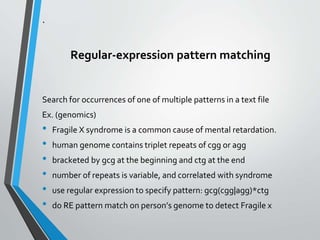

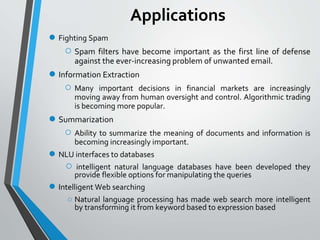

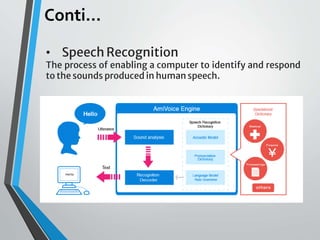

This document introduces natural language processing (NLP), covering its history, goals, challenges, and applications. It discusses how NLP aims to help machines process human language for tasks such as translation, summarization, and question answering. Although language is complex, NLP draws on techniques from linguistics, machine learning, and computer science to build tools that analyze, understand, and generate human language.