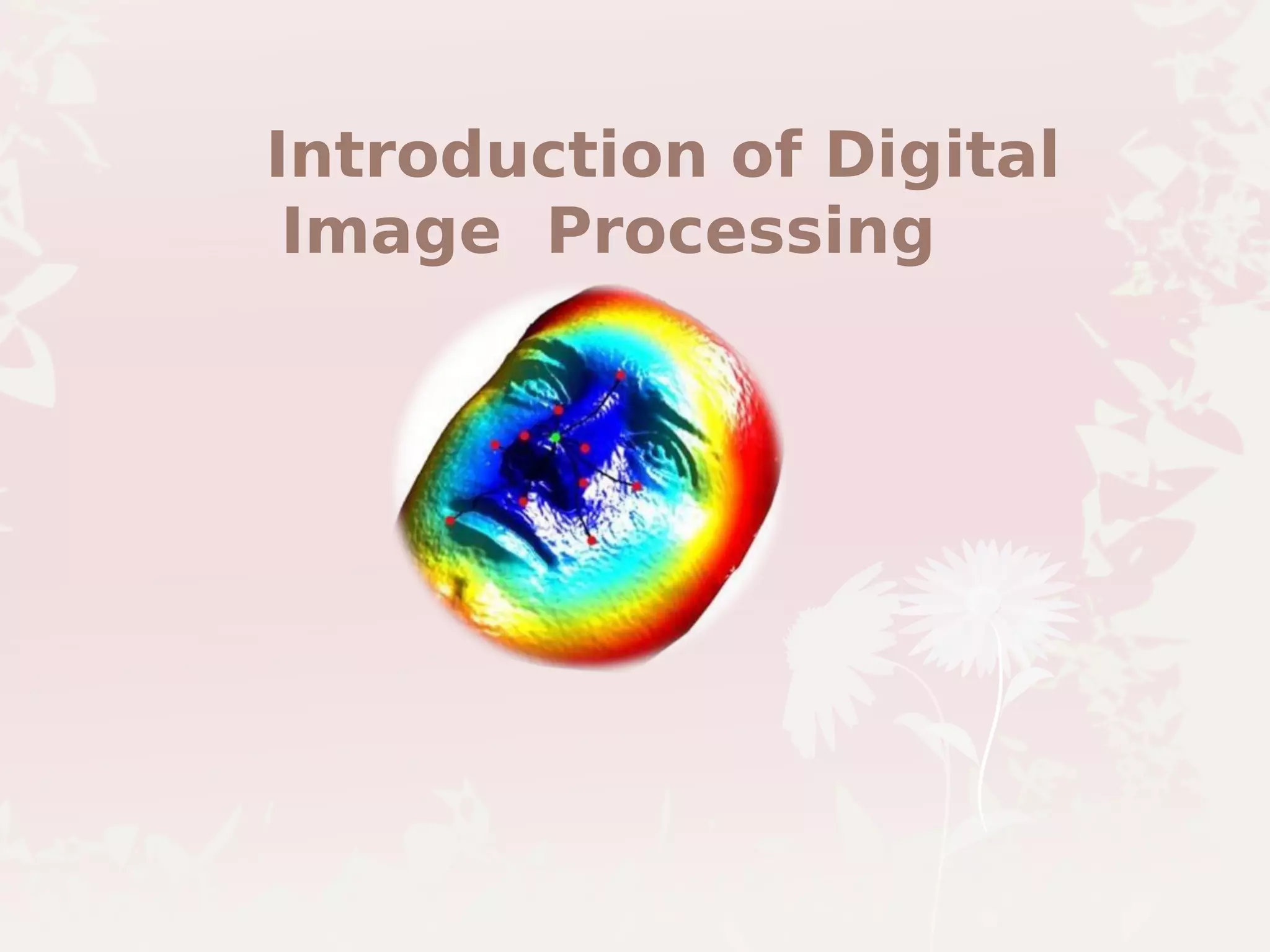

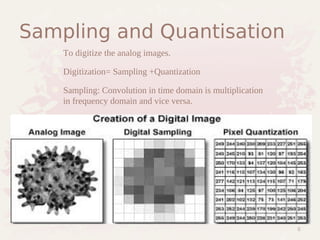

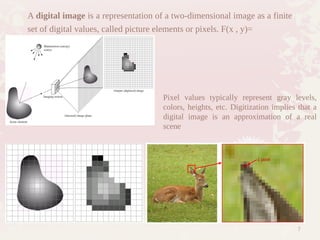

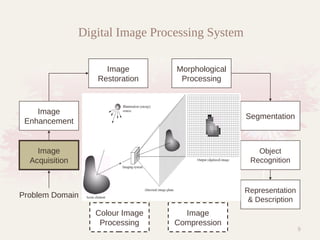

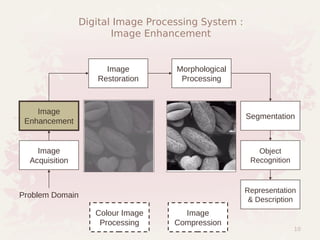

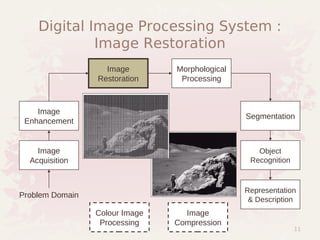

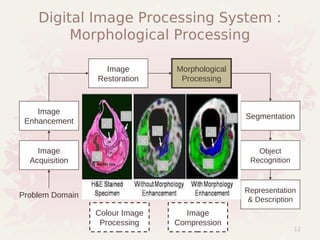

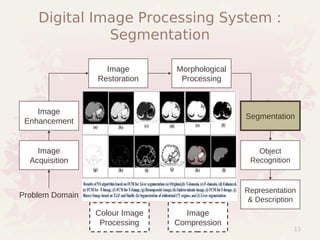

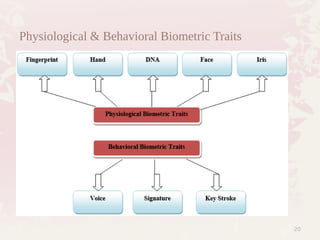

This document gives an overview of digital image processing and its key components: image acquisition, storage, processing, display, and transmission. It classifies images as monochrome, grayscale, or color and describes how a digital image is represented as a grid of pixels. It also surveys applications, particularly in biometrics, medicine, and space, and breaks image processing into levels ranging from low-level noise removal to high-level scene understanding.
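
To make the three image categories and the pixel representation concrete, here is a minimal sketch in plain Python. It is an illustration, not the document's own method: the 2x2 sample image, the function names `to_grayscale` and `to_monochrome`, the BT.601 luma weights (0.299, 0.587, 0.114), and the threshold of 128 are all assumptions chosen for the example.

```python
# Hypothetical 2x2 color image: each pixel is an (R, G, B) tuple,
# with every channel stored as an integer from 0 to 255.
color_image = [
    [(255, 0, 0), (0, 255, 0)],
    [(0, 0, 255), (255, 255, 255)],
]


def to_grayscale(image):
    """Collapse each RGB pixel to a single intensity in 0-255.

    Uses the BT.601 luma weights, an assumed (but common) choice.
    """
    return [
        [round(0.299 * r + 0.587 * g + 0.114 * b) for (r, g, b) in row]
        for row in image
    ]


def to_monochrome(gray, threshold=128):
    """Map each intensity to 1 (white) or 0 (black).

    The threshold of 128 is an assumed midpoint, not a prescribed value.
    """
    return [[1 if value >= threshold else 0 for value in row] for row in gray]


gray_image = to_grayscale(color_image)   # one number per pixel
mono_image = to_monochrome(gray_image)   # one bit per pixel
```

With this sample, `gray_image` is `[[76, 150], [29, 255]]` and `mono_image` is `[[0, 1], [0, 1]]`: the same grid of pixels holds three values per pixel in color, one in grayscale, and a single bit in monochrome.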