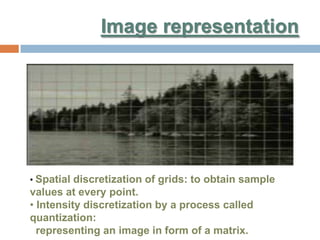

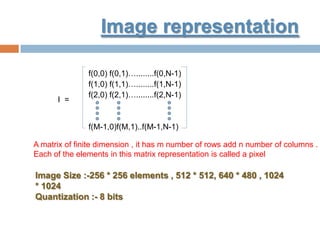

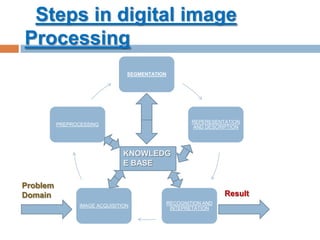

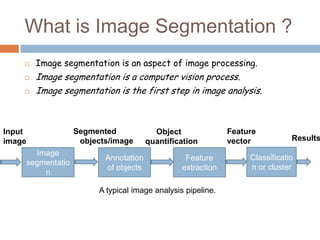

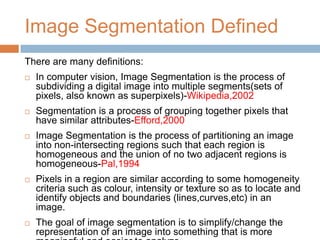

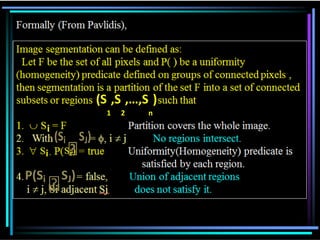

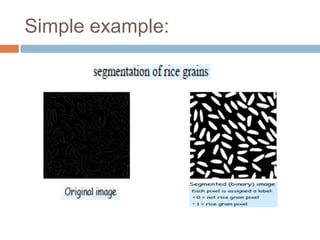

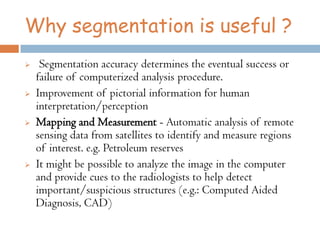

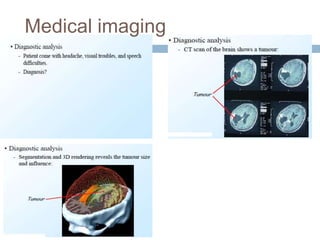

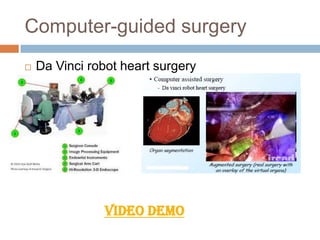

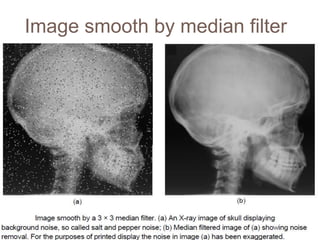

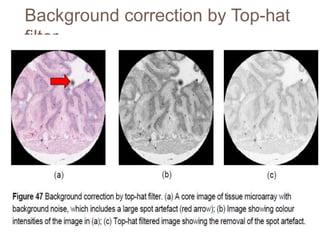

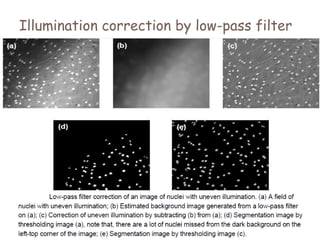

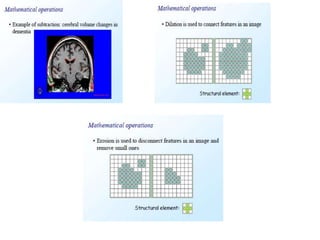

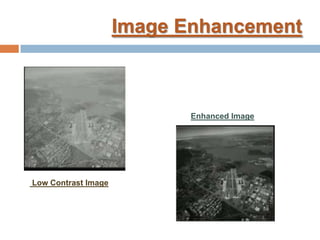

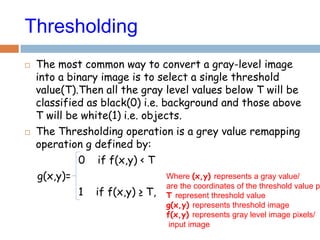

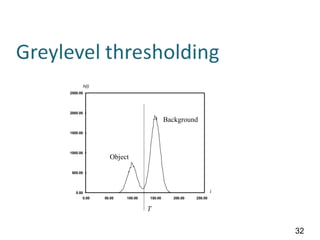

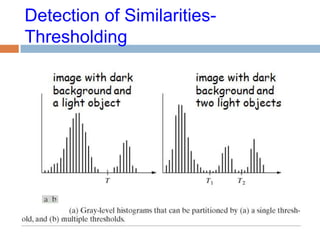

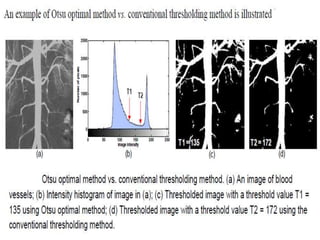

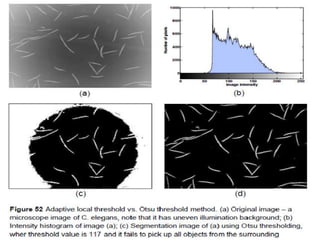

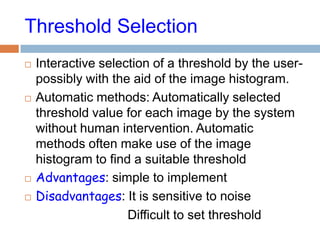

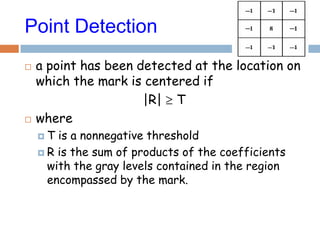

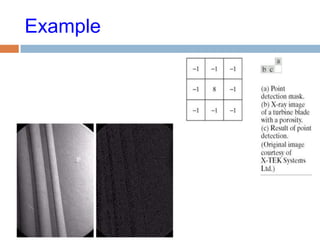

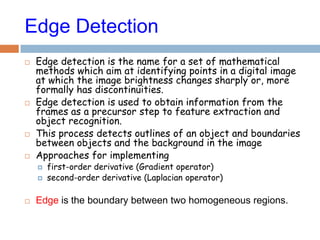

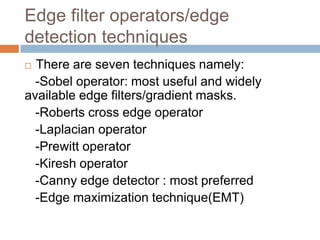

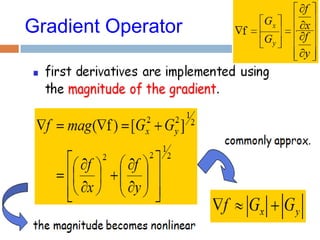

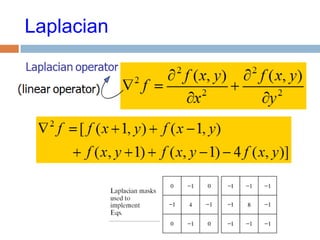

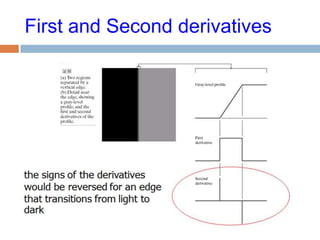

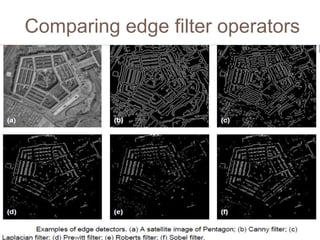

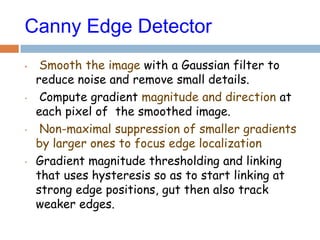

This document presents a seminar on image segmentation by Tawose Olamide Timothy. It defines image segmentation, discusses its challenges, and explains its significance in image processing and computer vision. It covers methods and algorithms for segmentation, including thresholding and edge detection, along with applications in medical imaging and automated systems. The focus is on improving image analysis through effective segmentation, and the seminar highlights the complexity of the process and the need for continued research.