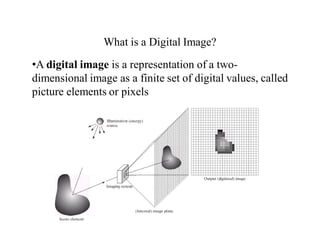

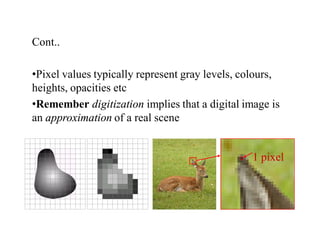

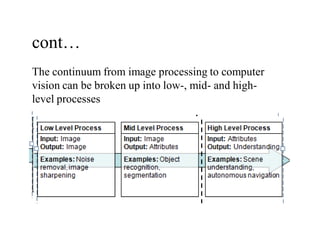

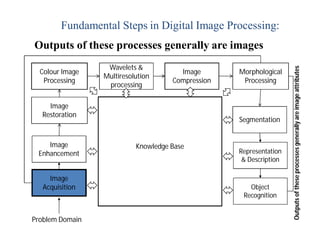

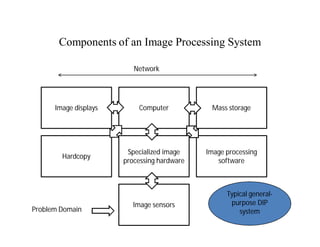

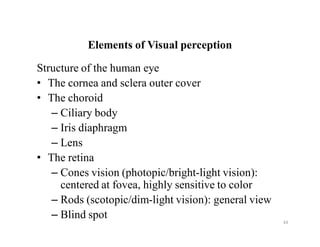

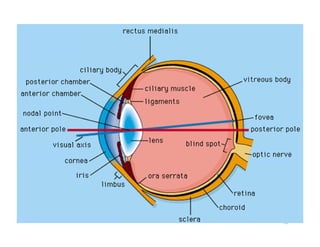

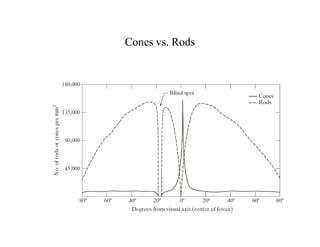

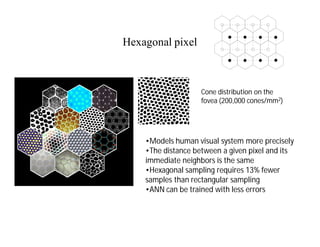

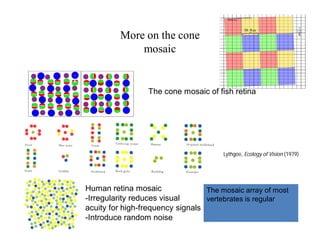

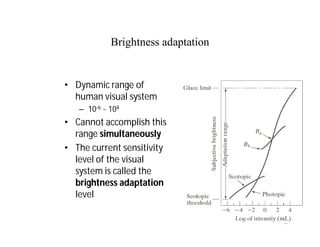

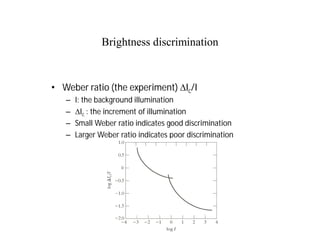

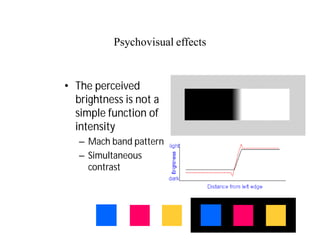

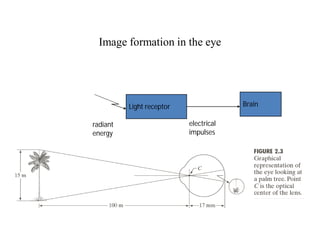

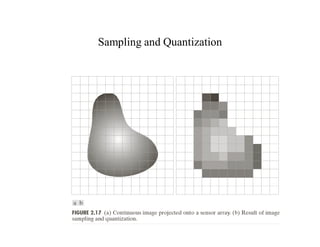

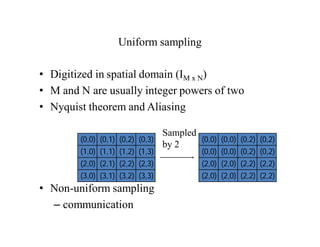

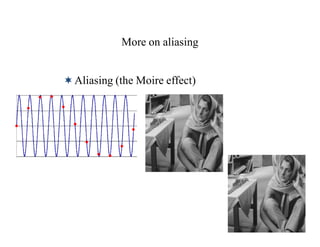

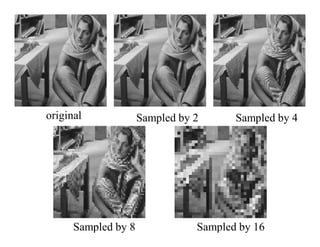

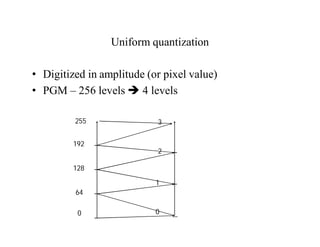

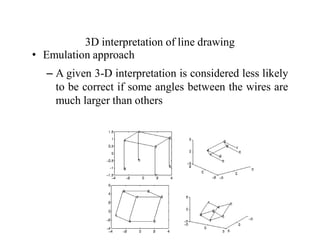

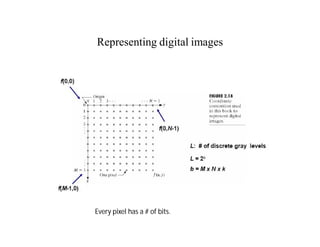

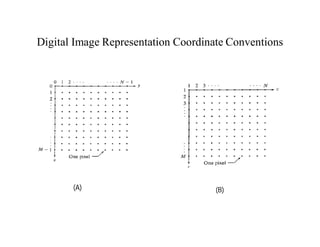

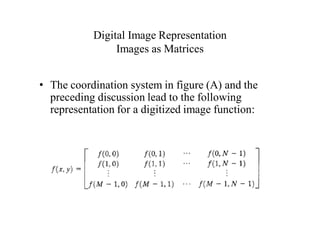

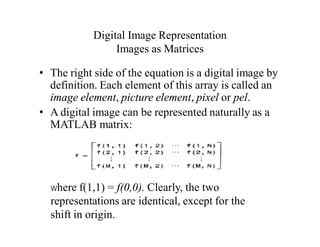

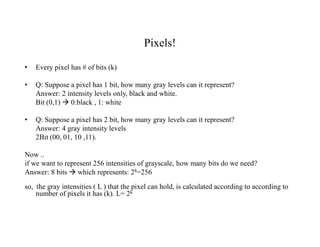

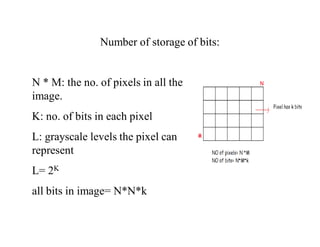

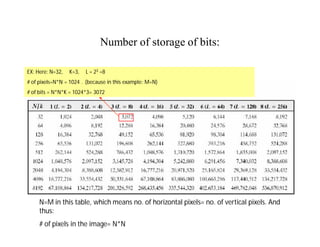

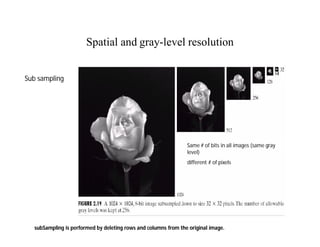

This document covers the fundamentals of digital image processing. It begins with an overview of digital image fundamentals, including image sampling and quantization. It then describes the components of an image processing system: image sensors, hardware, software, displays, and storage. Finally, it covers image formation in the eye, brightness adaptation, and the representation of digital images through sampling and quantization.
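As a rough illustration of the sampling and quantization mentioned in the summary, the sketch below converts a continuous intensity function into a small grid of integer gray levels. The function names (`sample`, `quantize`, `scene`), the grid size, and the 2-bit depth are assumptions chosen for illustration; none of them come from the document itself.

```python
def sample(f, width, height):
    """Sample a continuous image f(x, y), with x and y in [0, 1),
    at the centre of each cell of a width x height grid."""
    return [[f((c + 0.5) / width, (r + 0.5) / height) for c in range(width)]
            for r in range(height)]


def quantize(samples, bits):
    """Map intensities in [0.0, 1.0] to integer gray levels 0 .. 2**bits - 1."""
    levels = 2 ** bits
    # Clamp so that an intensity of exactly 1.0 falls in the top level.
    return [[min(int(v * levels), levels - 1) for v in row] for row in samples]


def scene(x, y):
    # Hypothetical continuous scene: intensity ramps from dark (left) to bright (right).
    return x


# Sampling fixes the spatial resolution (8 x 4 pixels);
# quantization fixes the number of gray levels (2 bits -> 4 levels).
image = quantize(sample(scene, 8, 4), bits=2)
for row in image:
    print(row)
```

Increasing `width` and `height` refines the spatial sampling, while increasing `bits` adds gray levels; the two choices are independent, which is why they are usually treated as separate steps.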