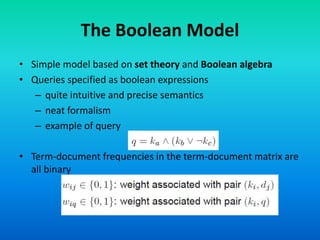

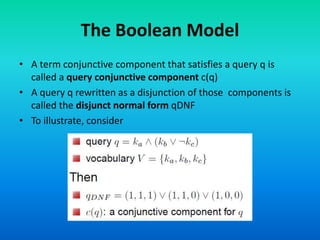

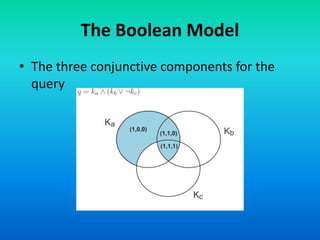

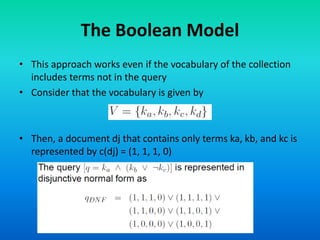

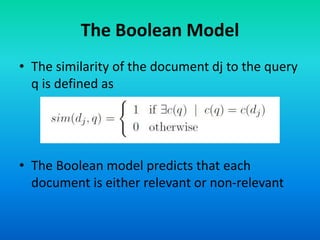

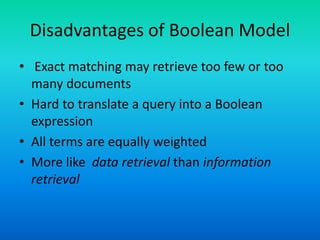

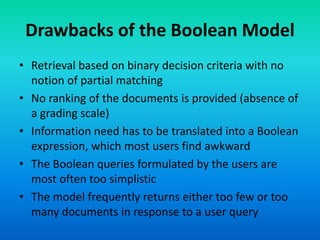

The Boolean model is a classical information retrieval model based on set theory and Boolean logic. Queries are written as Boolean expressions that combine terms with operators such as AND, OR, and NOT, and each document either satisfies the expression or does not. Term weights are binary: a term is either present in a document or absent, so how often it occurs has no effect. Documents are retrieved only on an exact match to the query. The model has two main limitations. It does not rank the retrieved documents, and users find it difficult to translate an information need into a Boolean expression, so queries often return too few or too many results.
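As a rough illustration of the set-theoretic view described above, the following Python sketch builds a small inverted index and evaluates a Boolean query with set operations. The three sample documents and the helper names `build_index` and `postings` are hypothetical, chosen only for this example; they are not taken from the slides.

```python
def build_index(docs):
    """Map each term to the set of ids of the documents that contain it.

    Presence is binary: repeated occurrences of a term in a document
    are collapsed, so term frequency plays no role.
    """
    index = {}
    for doc_id, text in docs.items():
        for term in set(text.lower().split()):
            index.setdefault(term, set()).add(doc_id)
    return index


def postings(index, term):
    """Return the set of document ids containing `term` (empty if unseen)."""
    return index.get(term.lower(), set())


# Hypothetical toy collection, for illustration only.
docs = {
    1: "boolean retrieval uses set theory",
    2: "vector space model ranks documents",
    3: "boolean logic and set operations",
}
index = build_index(docs)
all_ids = set(docs)

# Query: boolean AND set AND NOT logic
# AND is set intersection, NOT is the complement within the collection.
result = (postings(index, "boolean") & postings(index, "set")) & (
    all_ids - postings(index, "logic")
)

# Query: boolean AND vector
# No document contains both terms, so the exact match returns nothing.
too_strict = postings(index, "boolean") & postings(index, "vector")

# Query: boolean OR vector
# OR is set union; here it matches the entire collection.
too_loose = postings(index, "boolean") | postings(index, "vector")

print(sorted(result))
print(sorted(too_strict))
print(sorted(too_loose))
```

The result of each query is an unordered set, which reflects the lack of ranking: every returned document matches equally. The last two queries hint at why results tend to be too few or too many, since a conjunction can easily match nothing while a disjunction can match everything.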