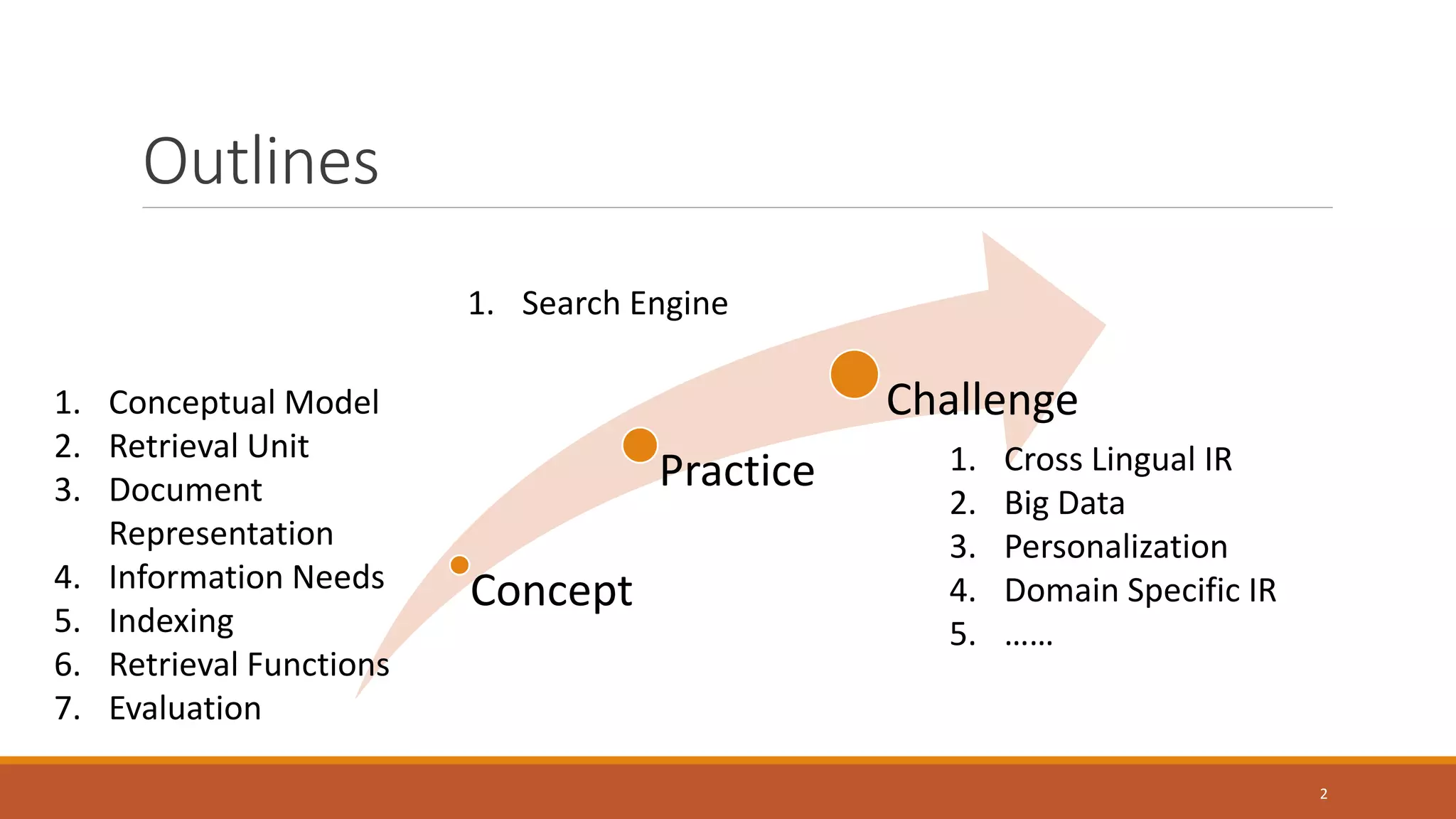

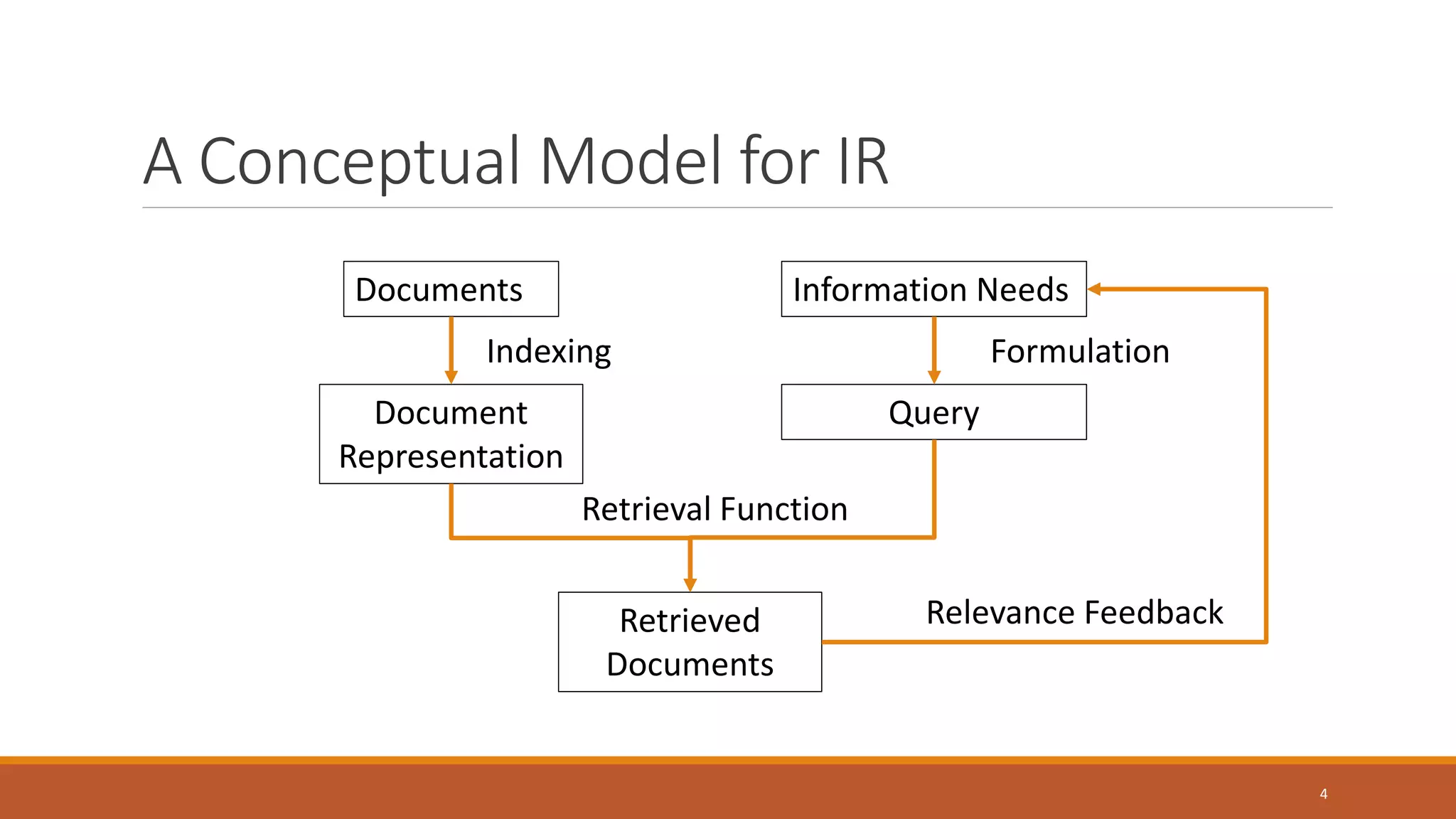

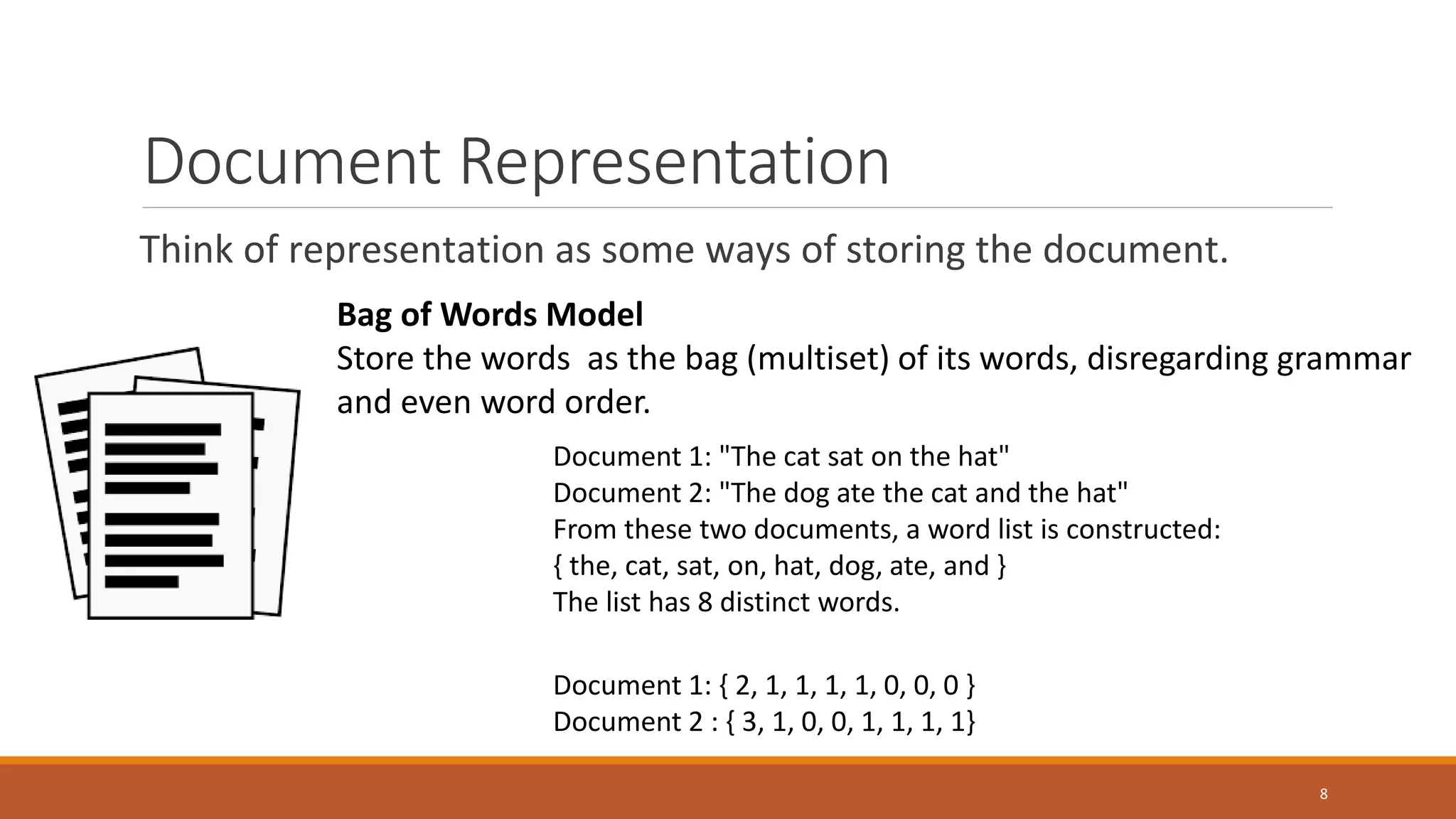

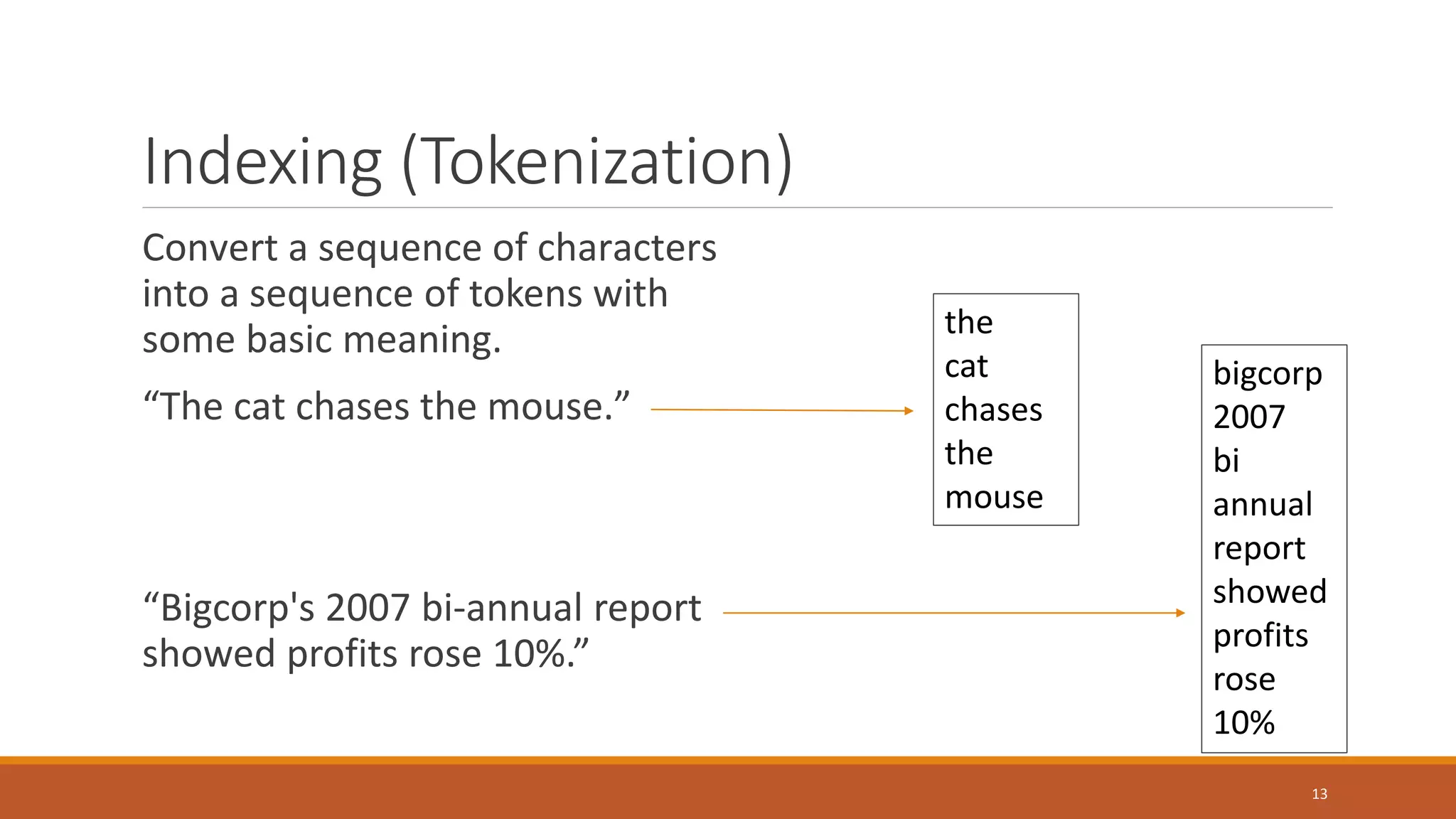

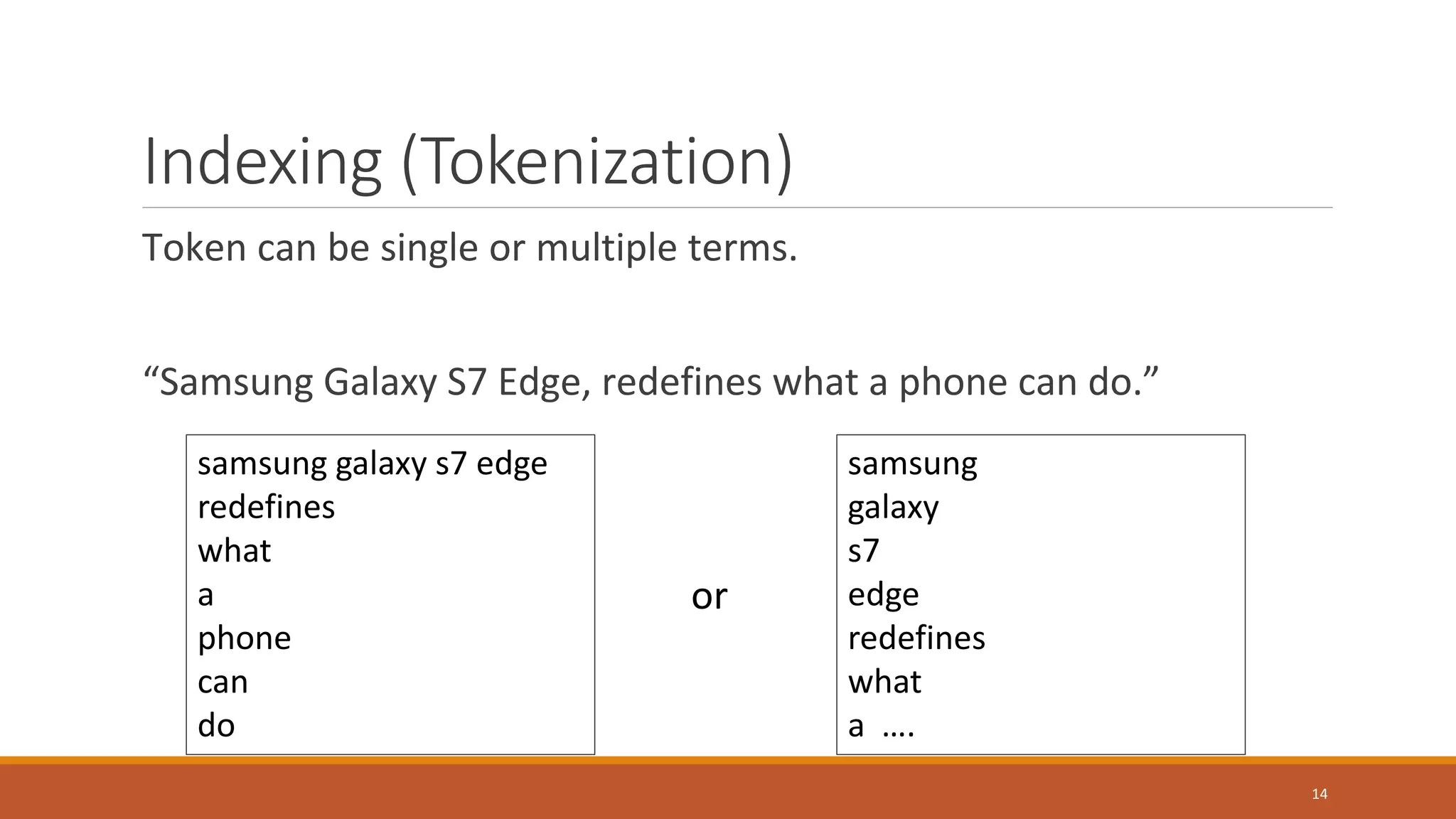

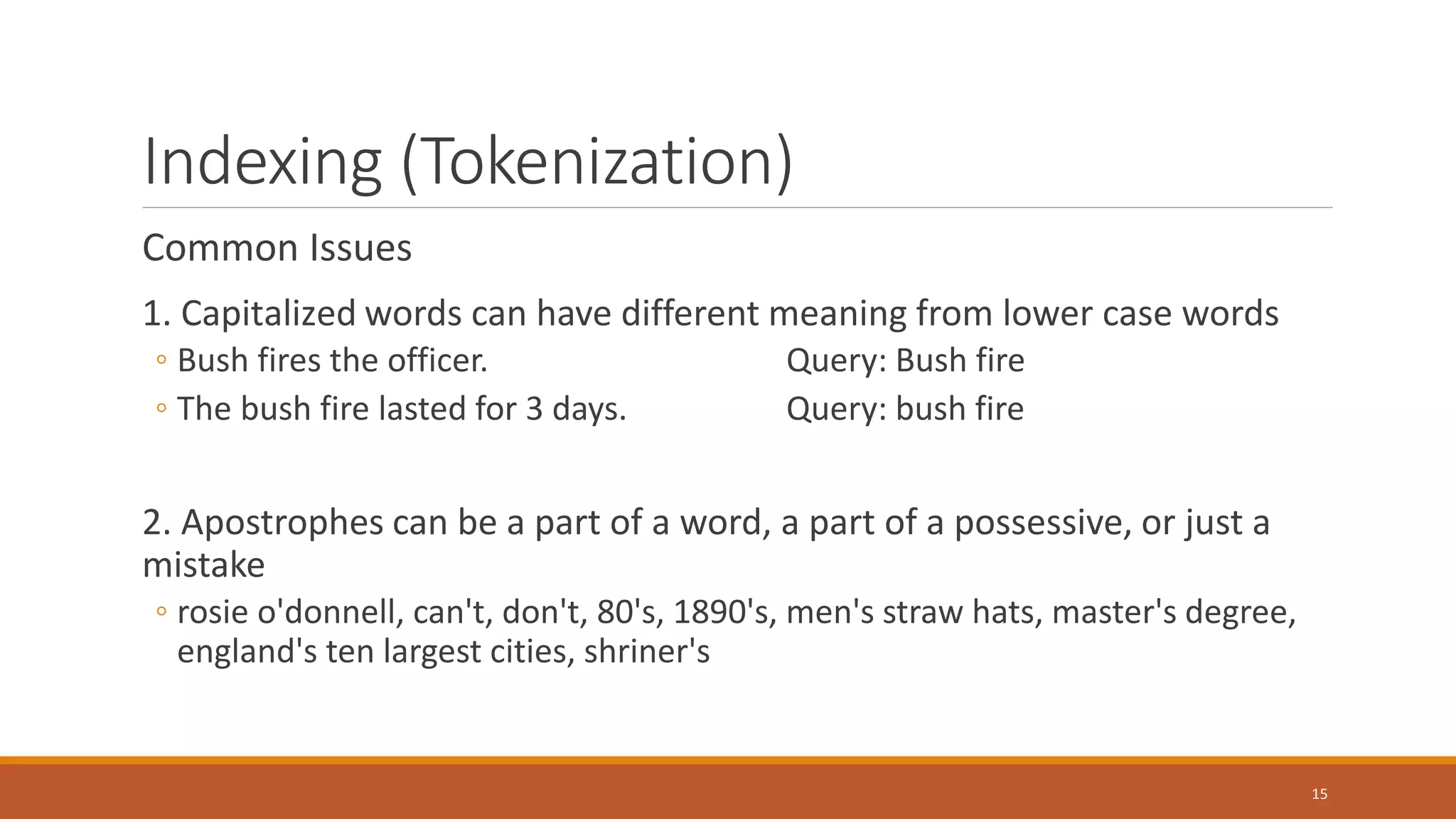

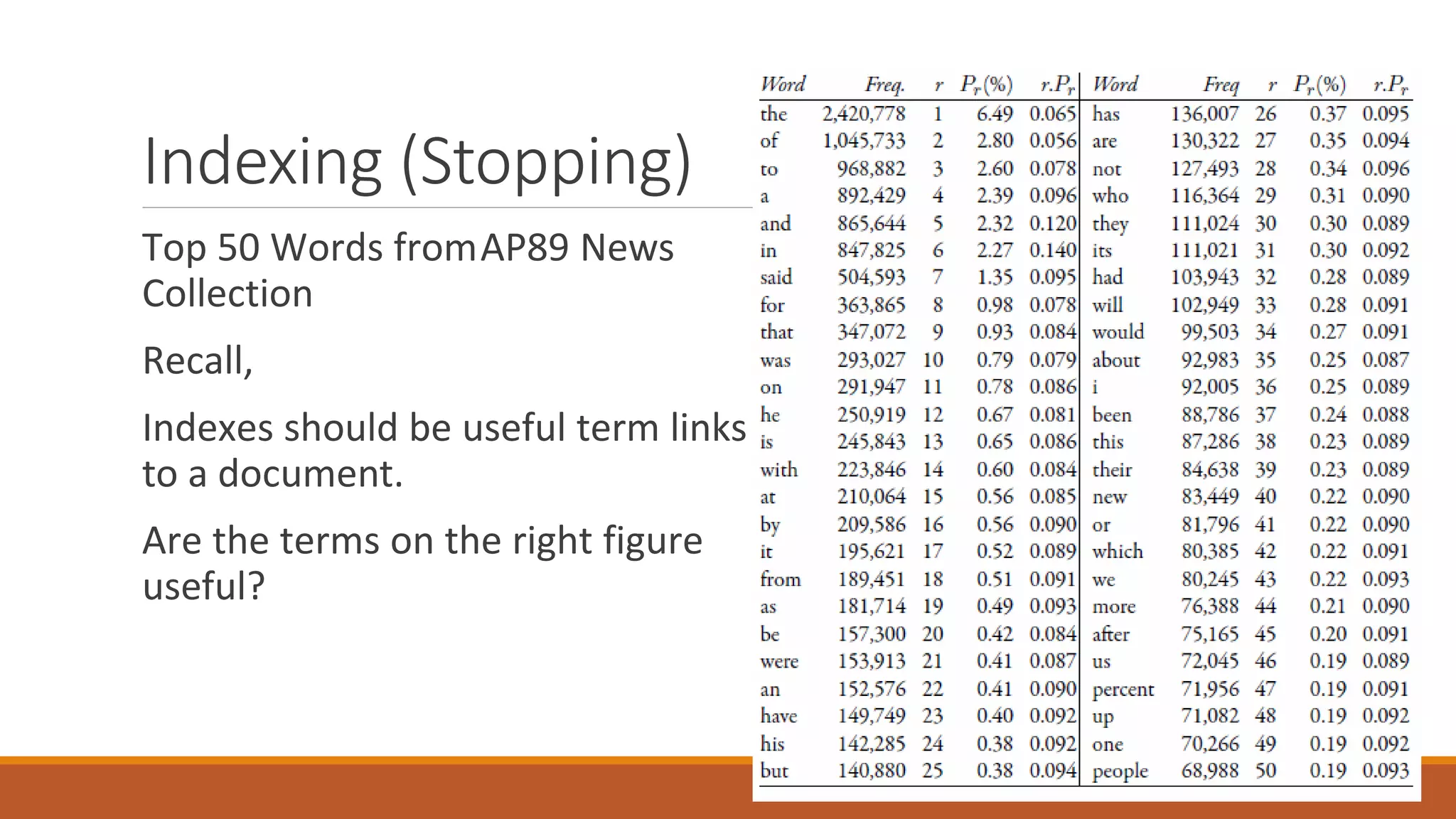

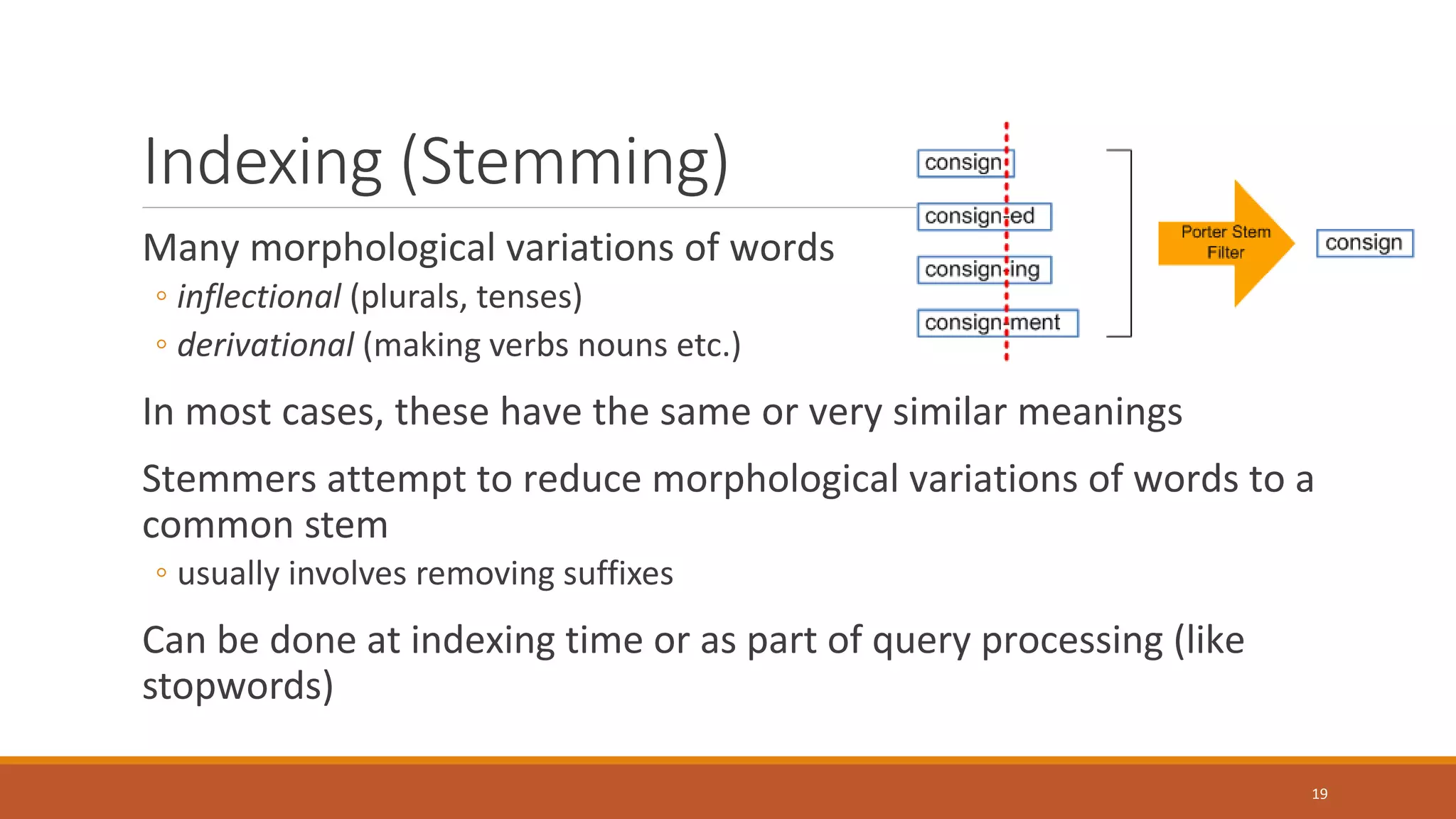

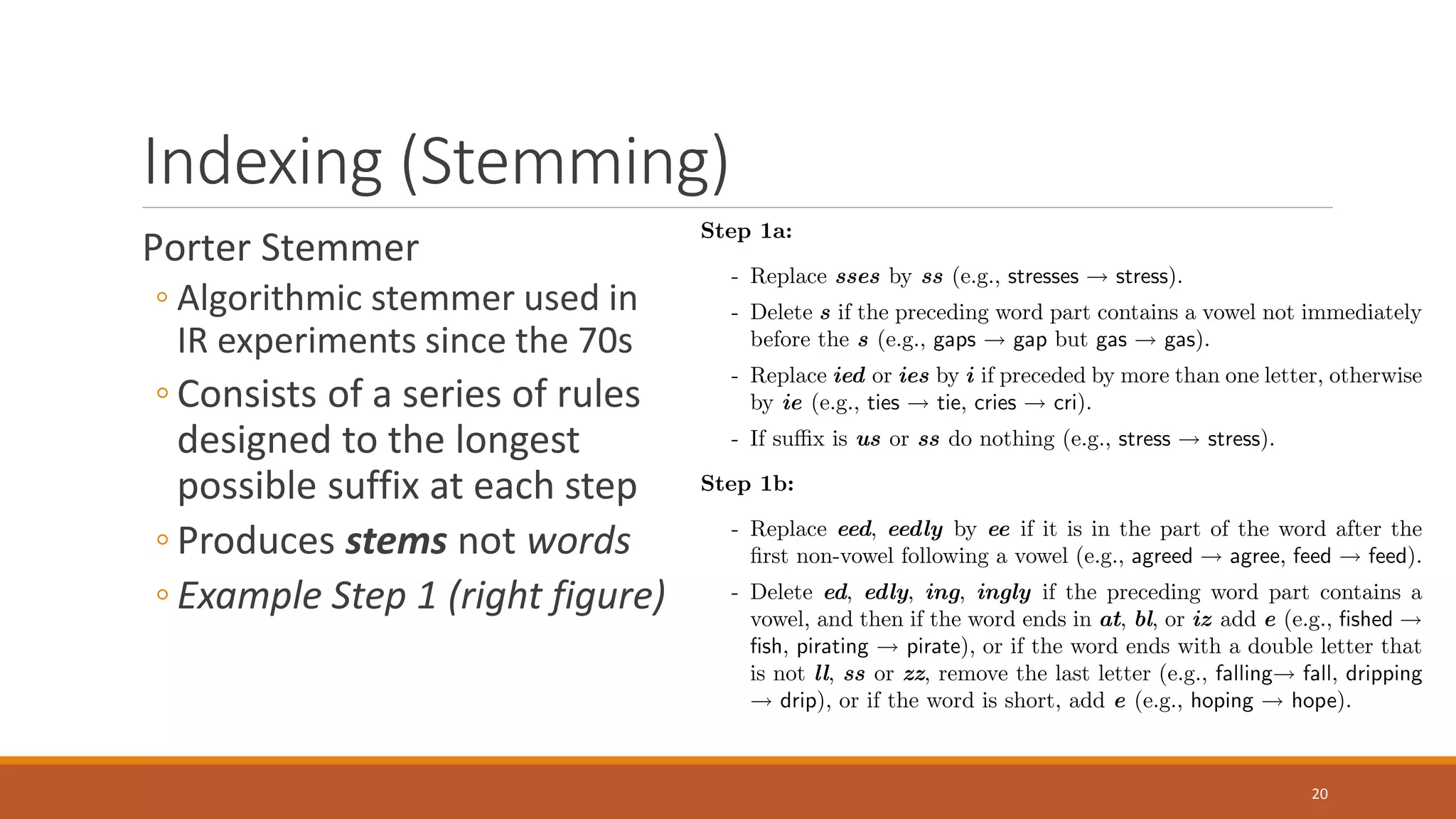

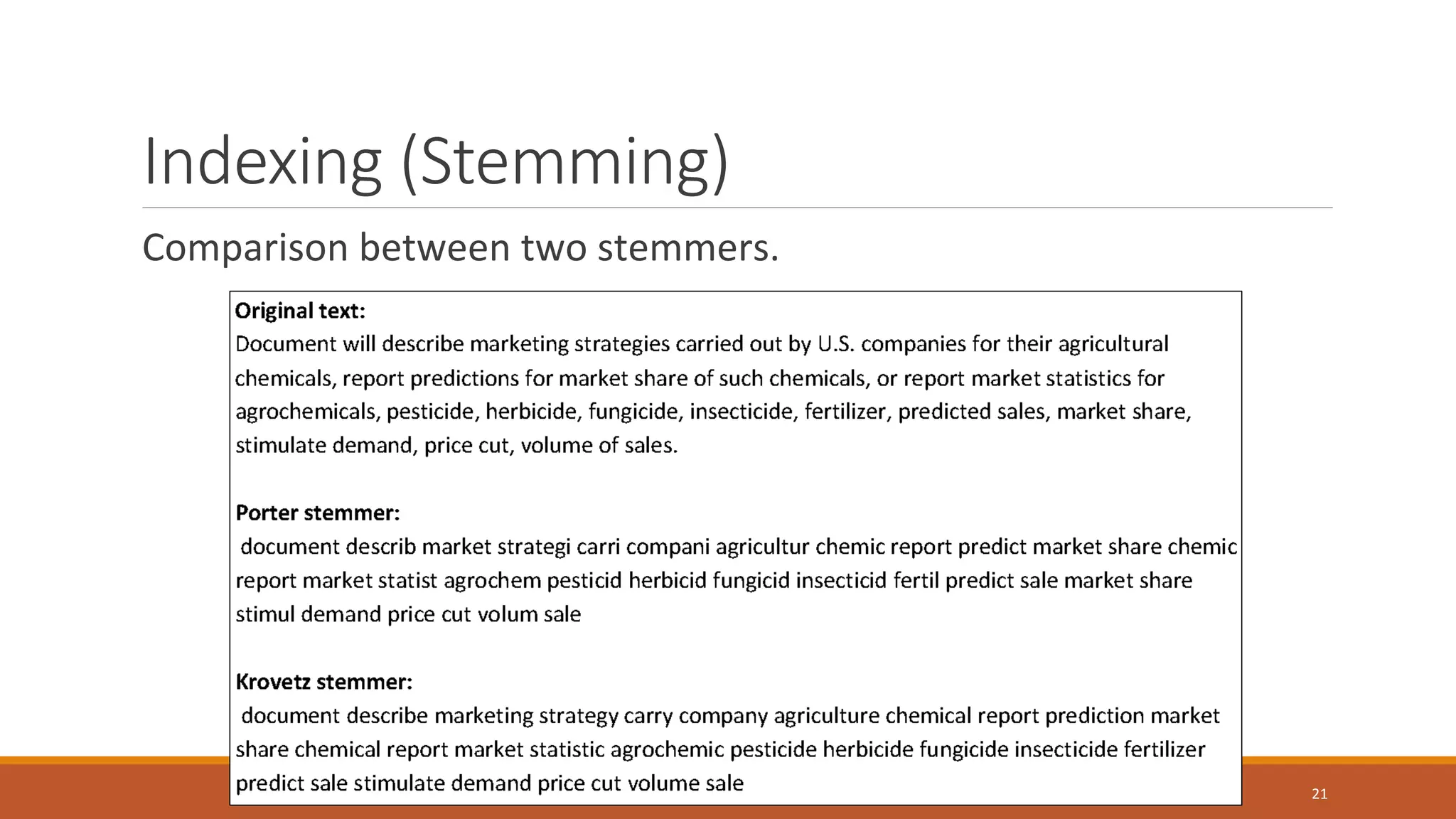

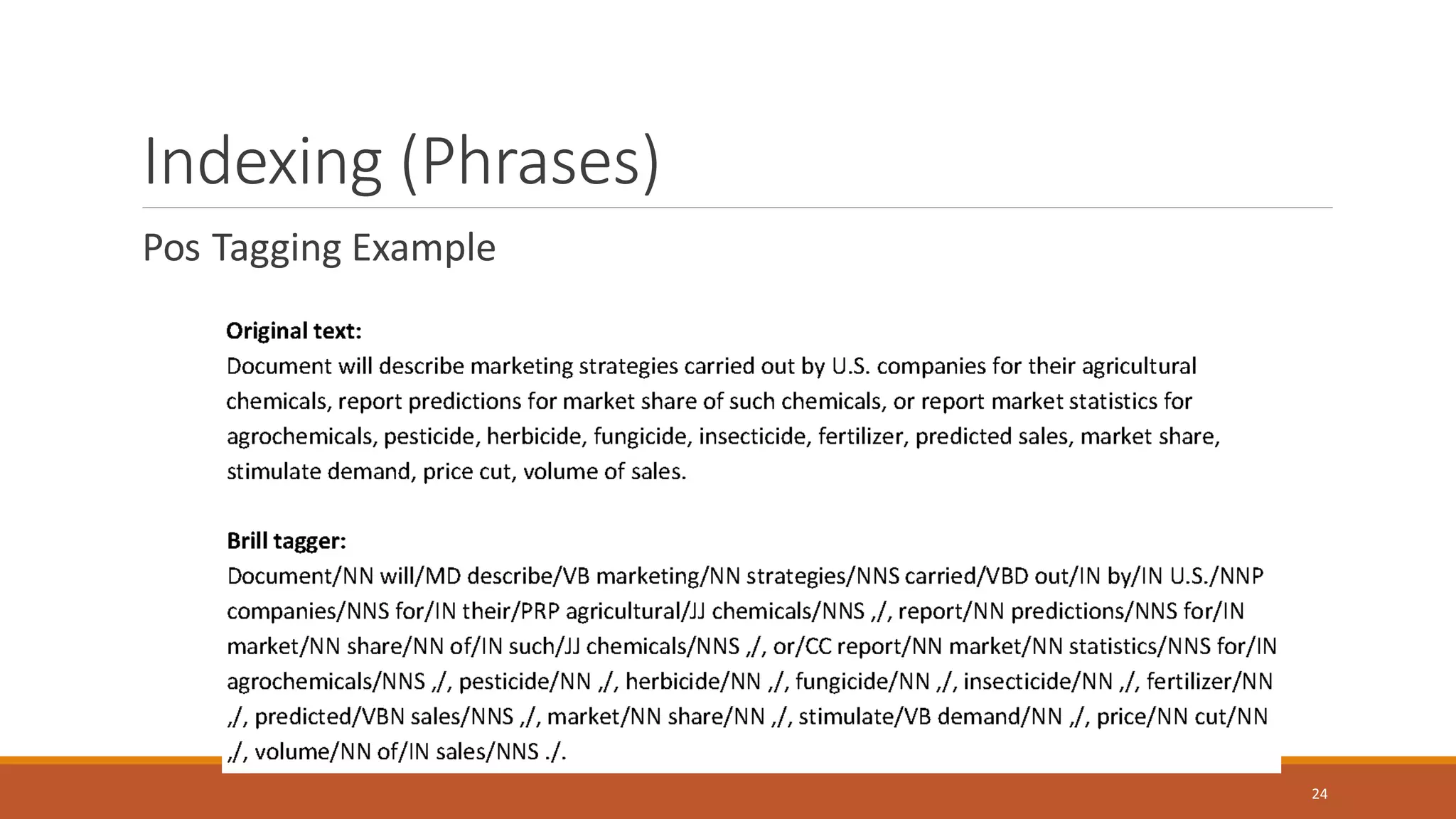

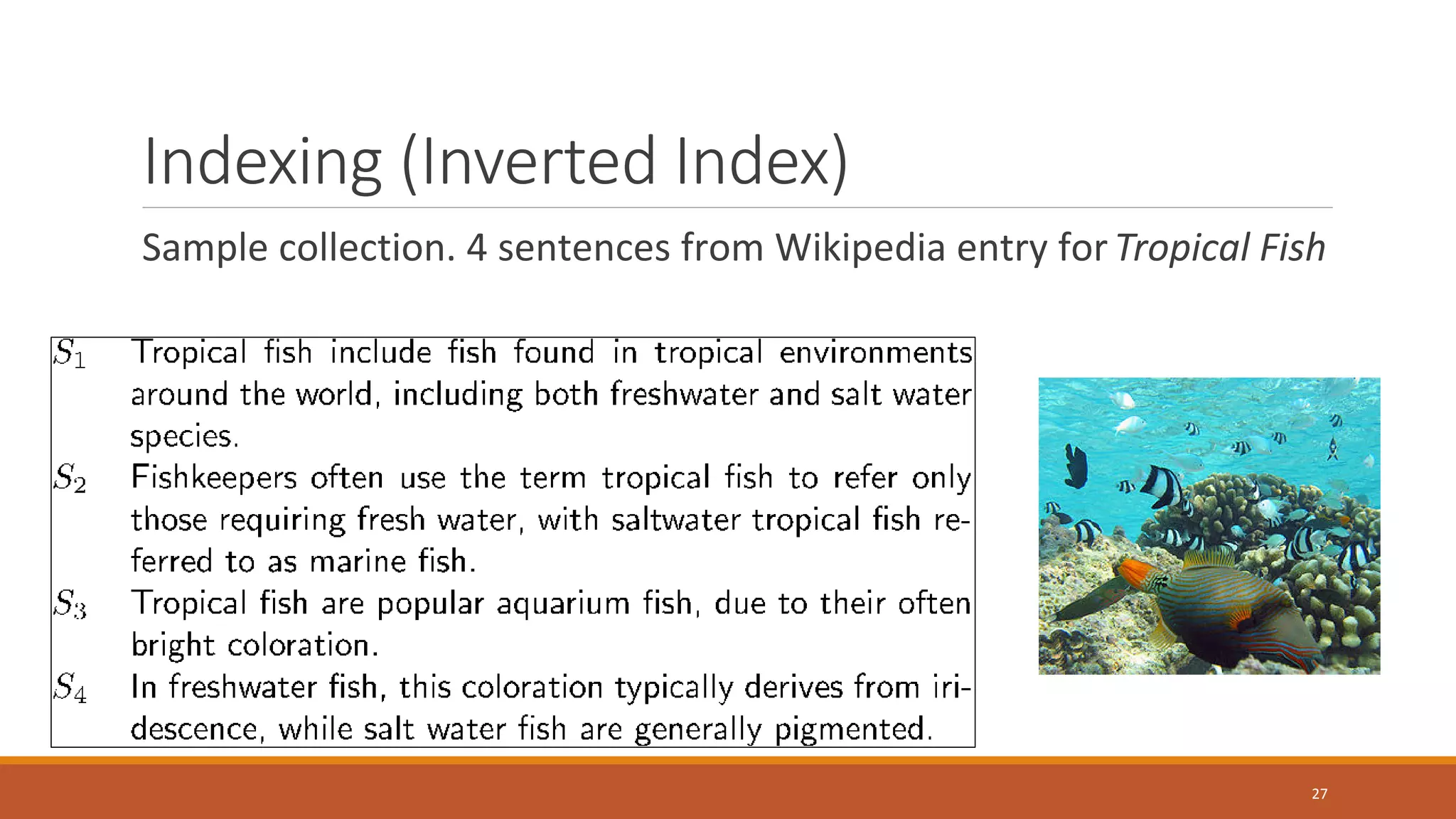

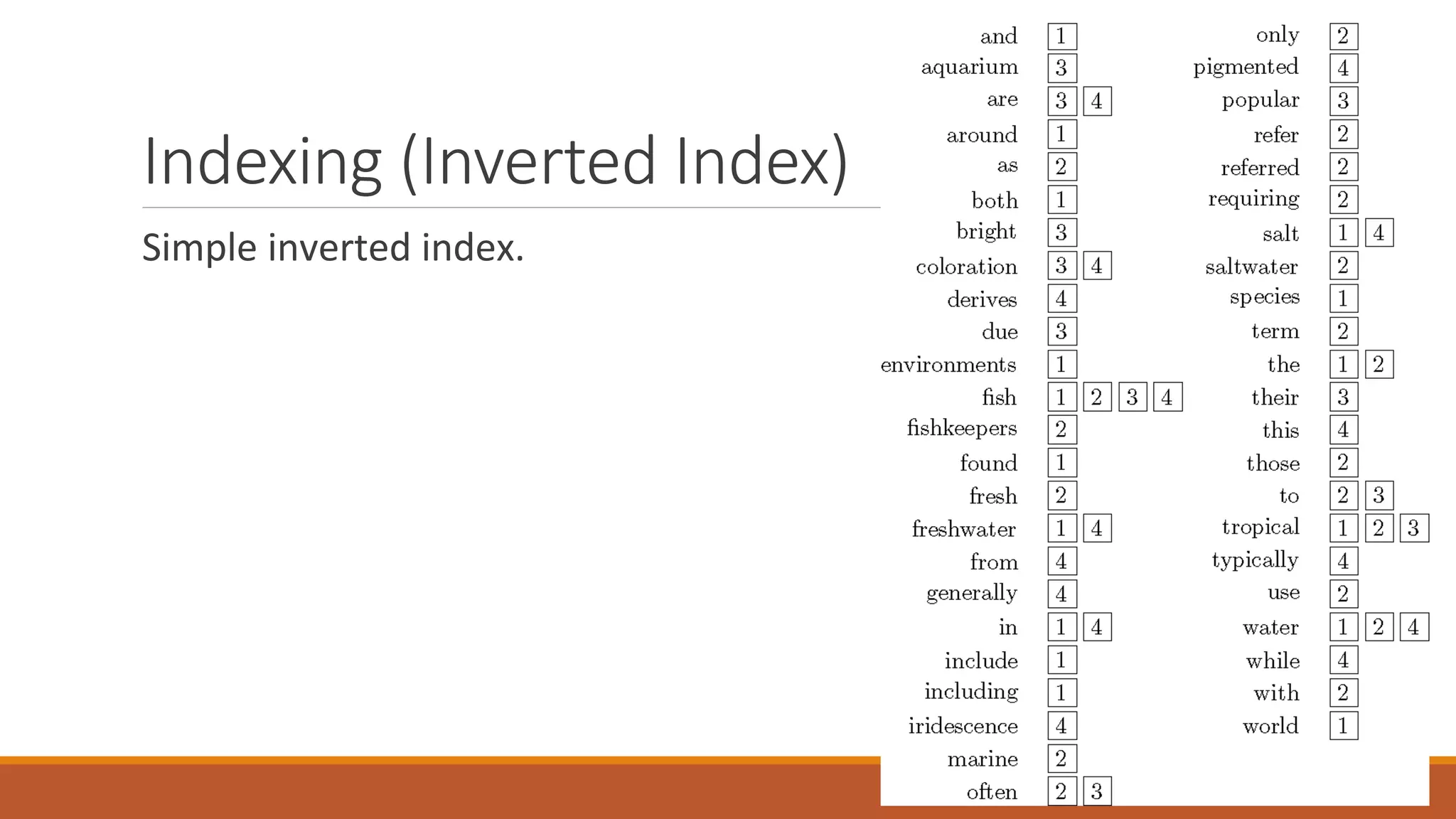

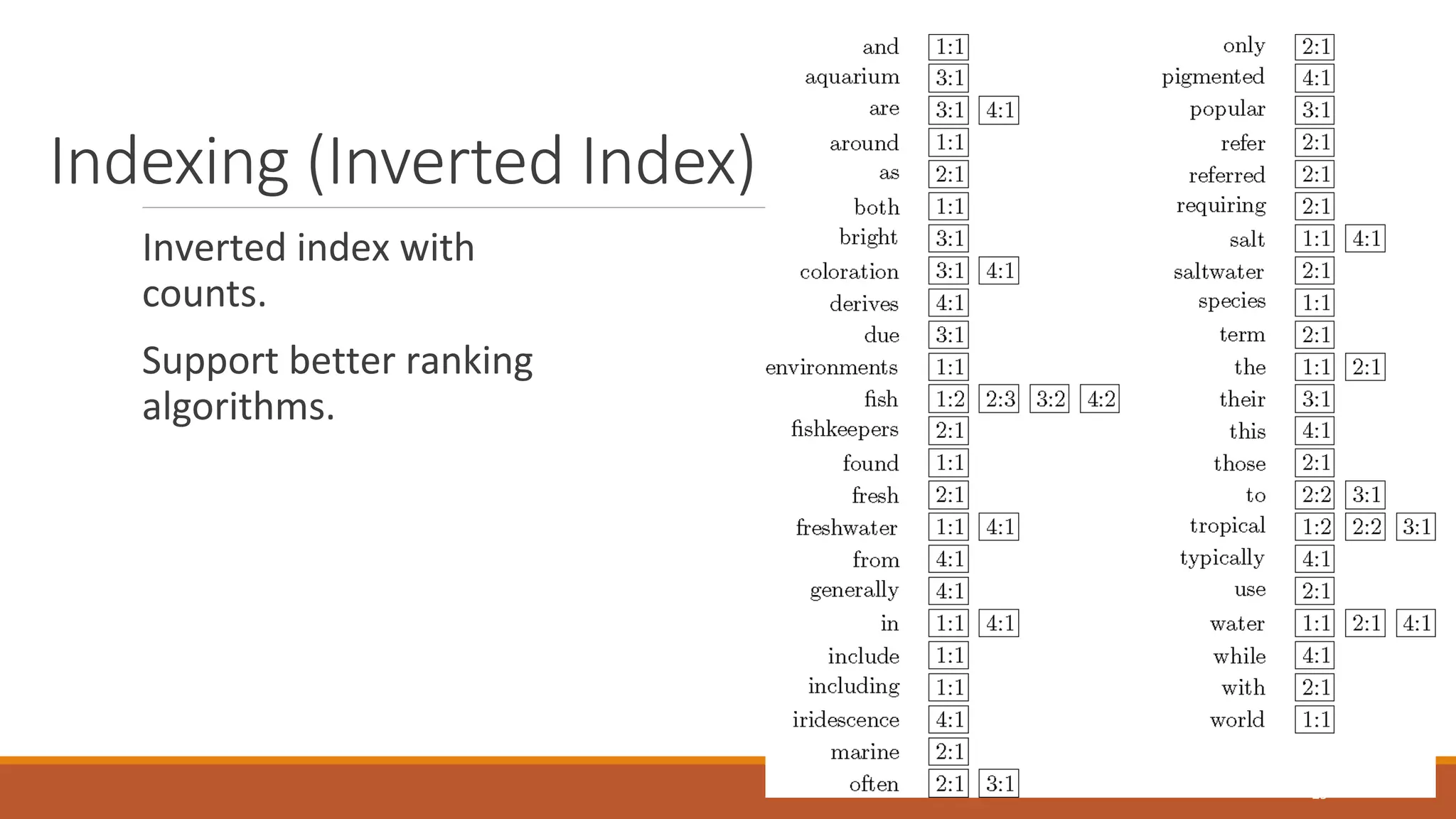

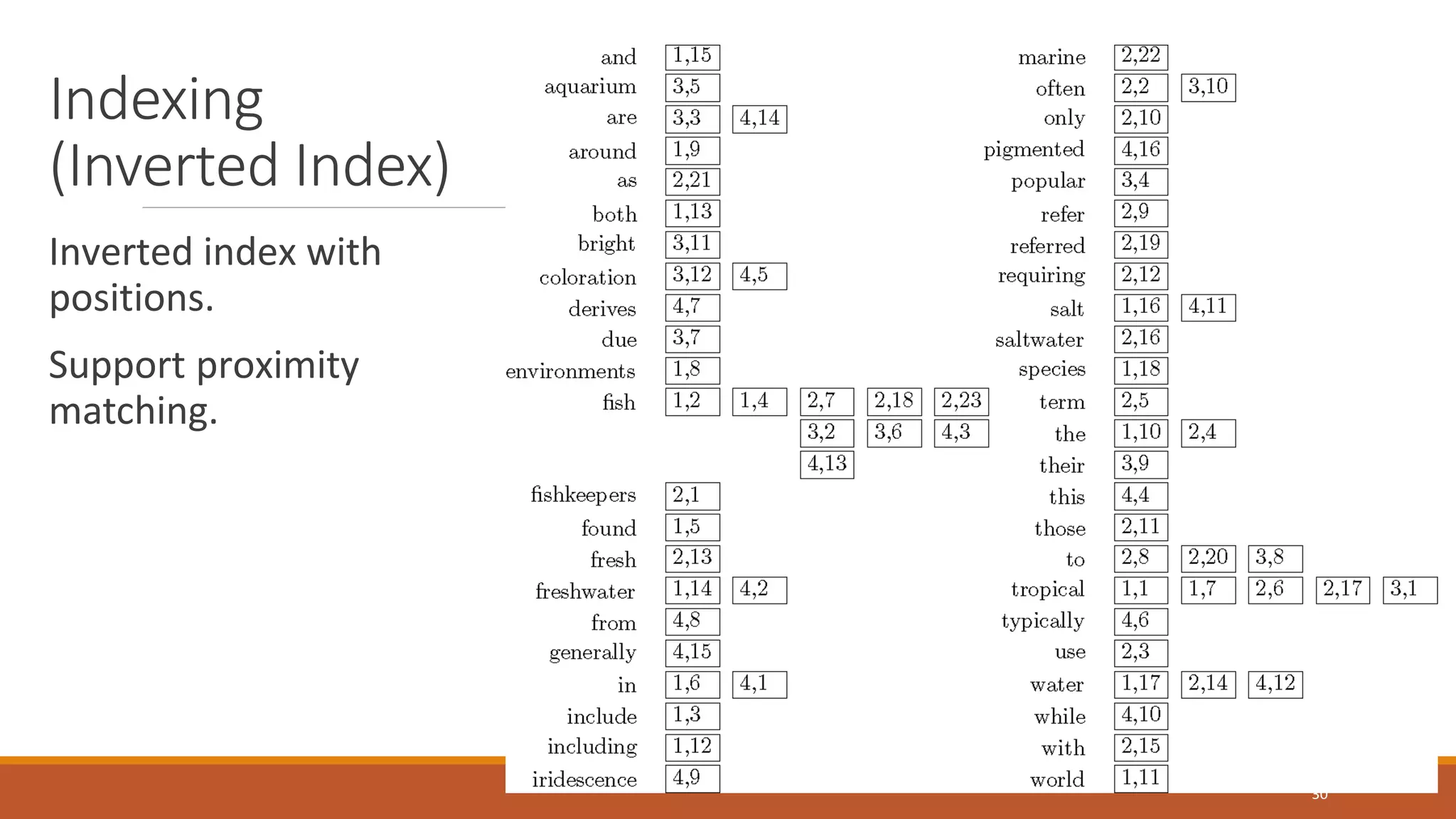

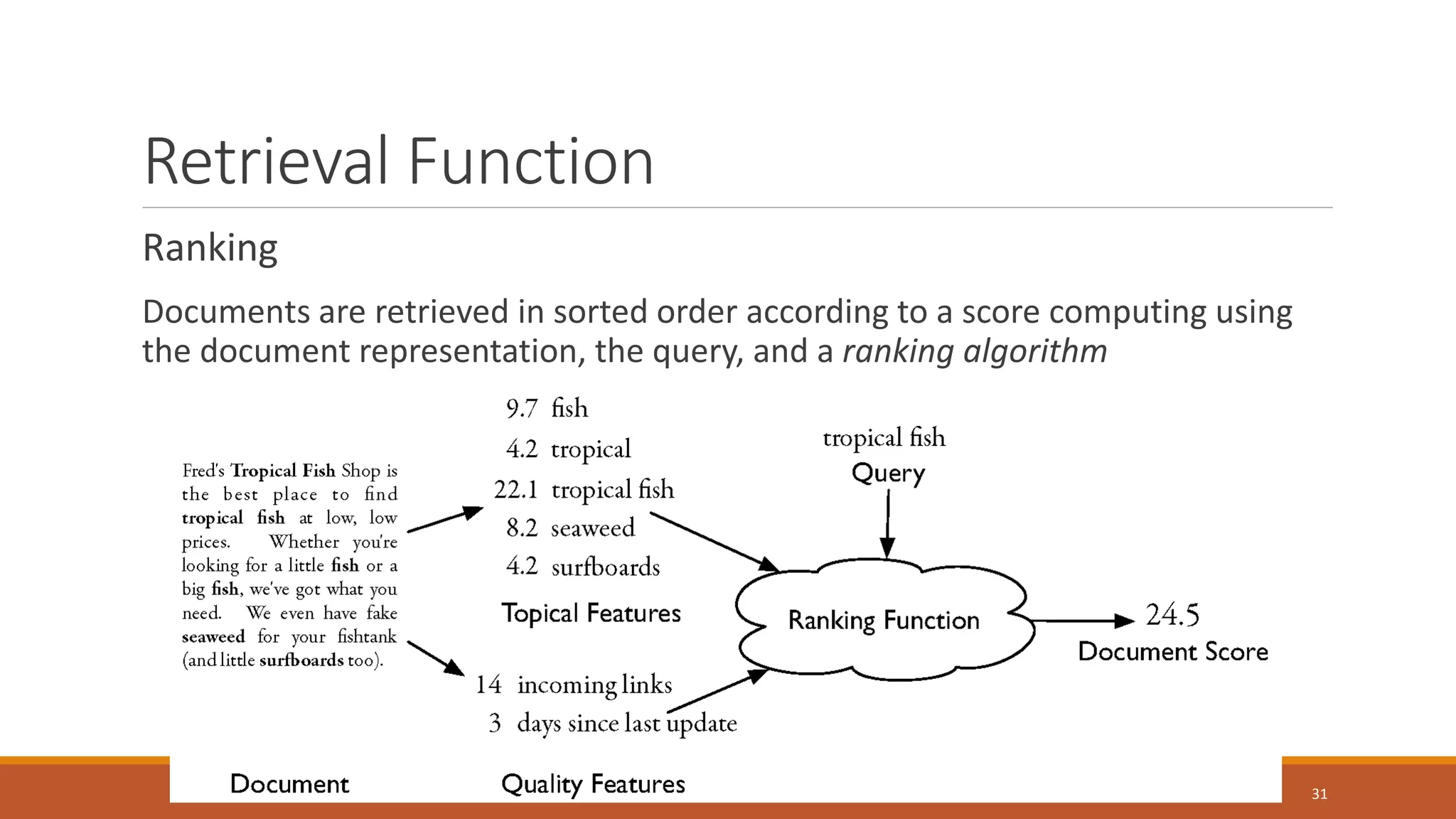

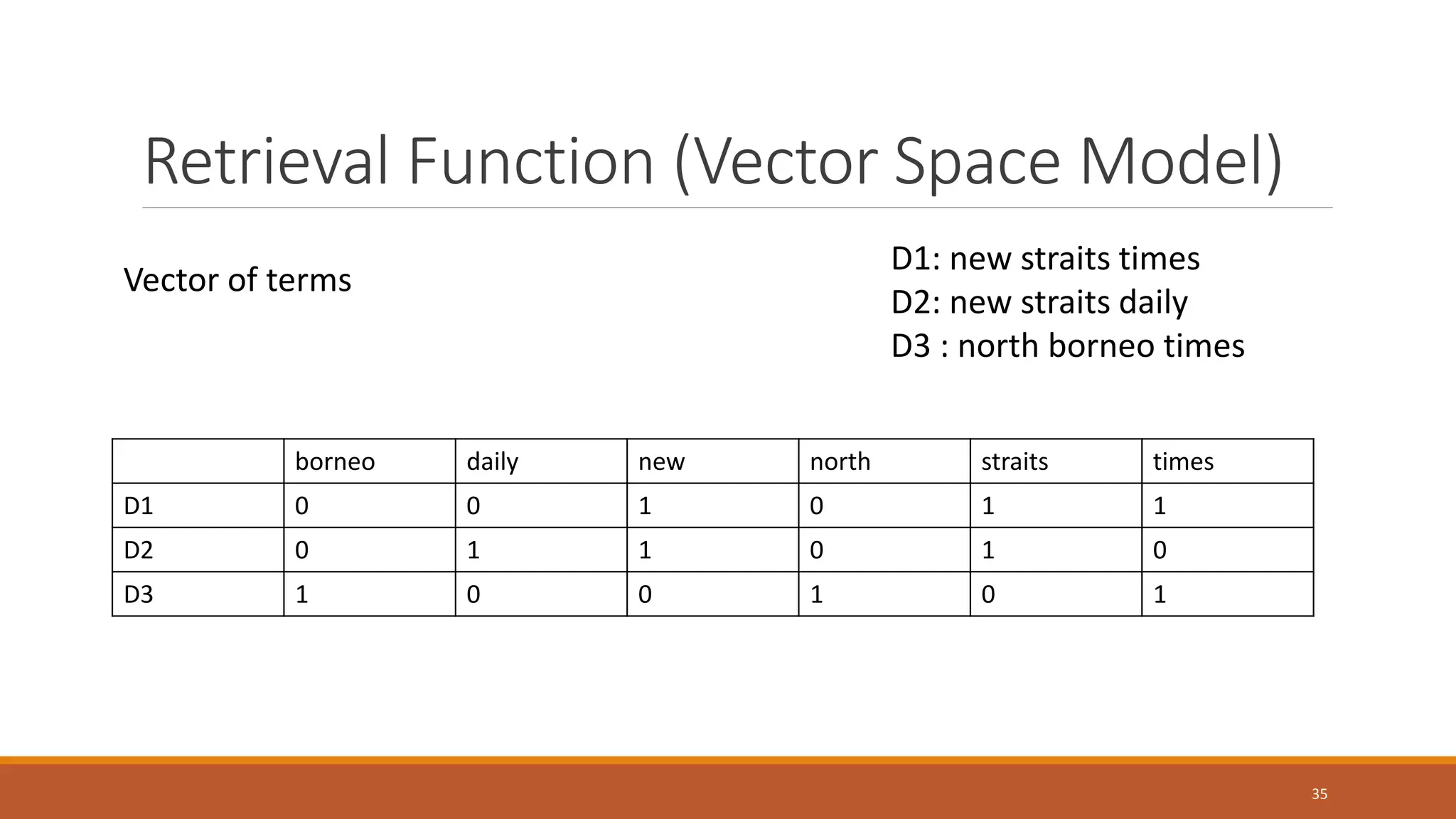

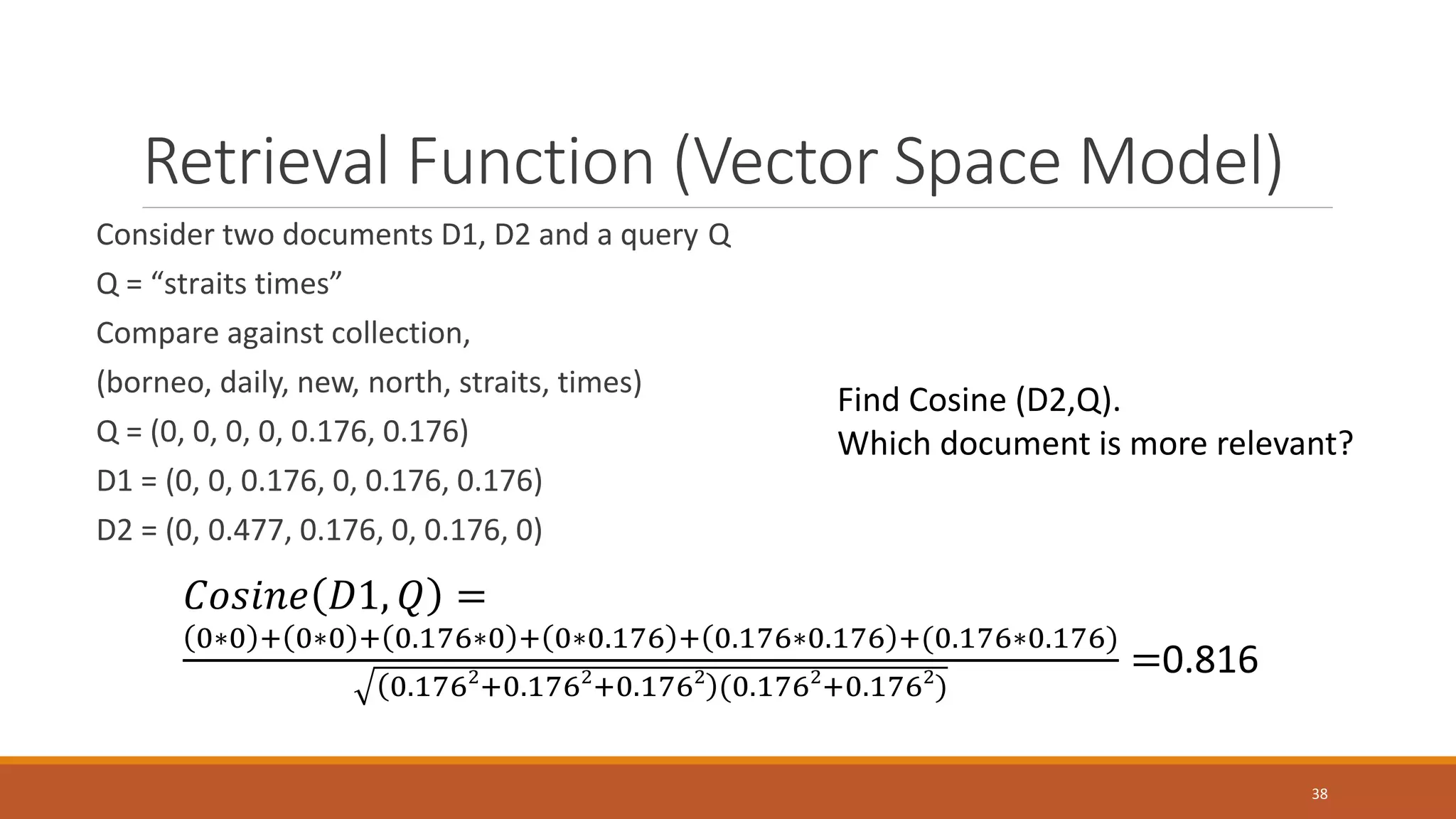

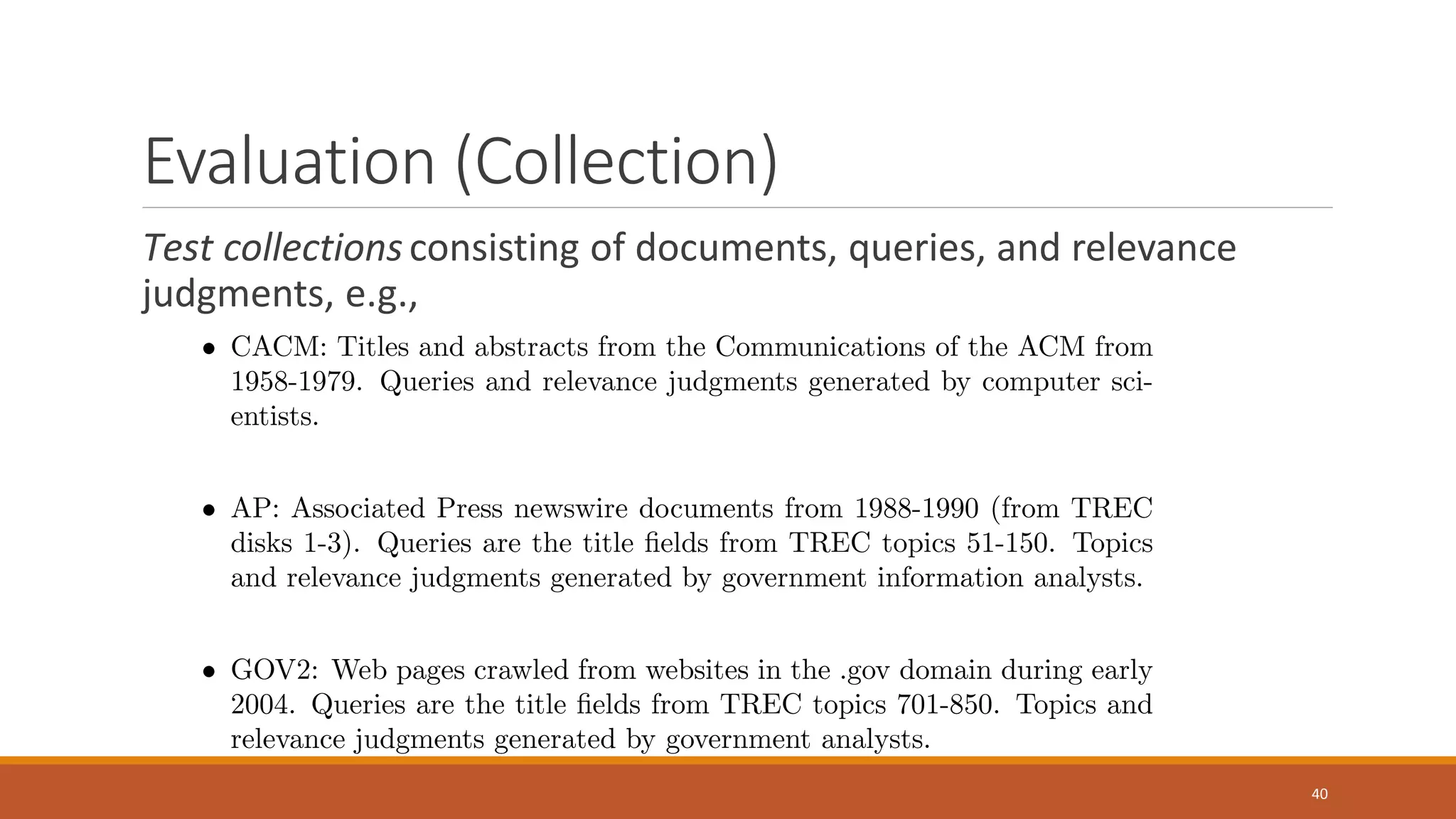

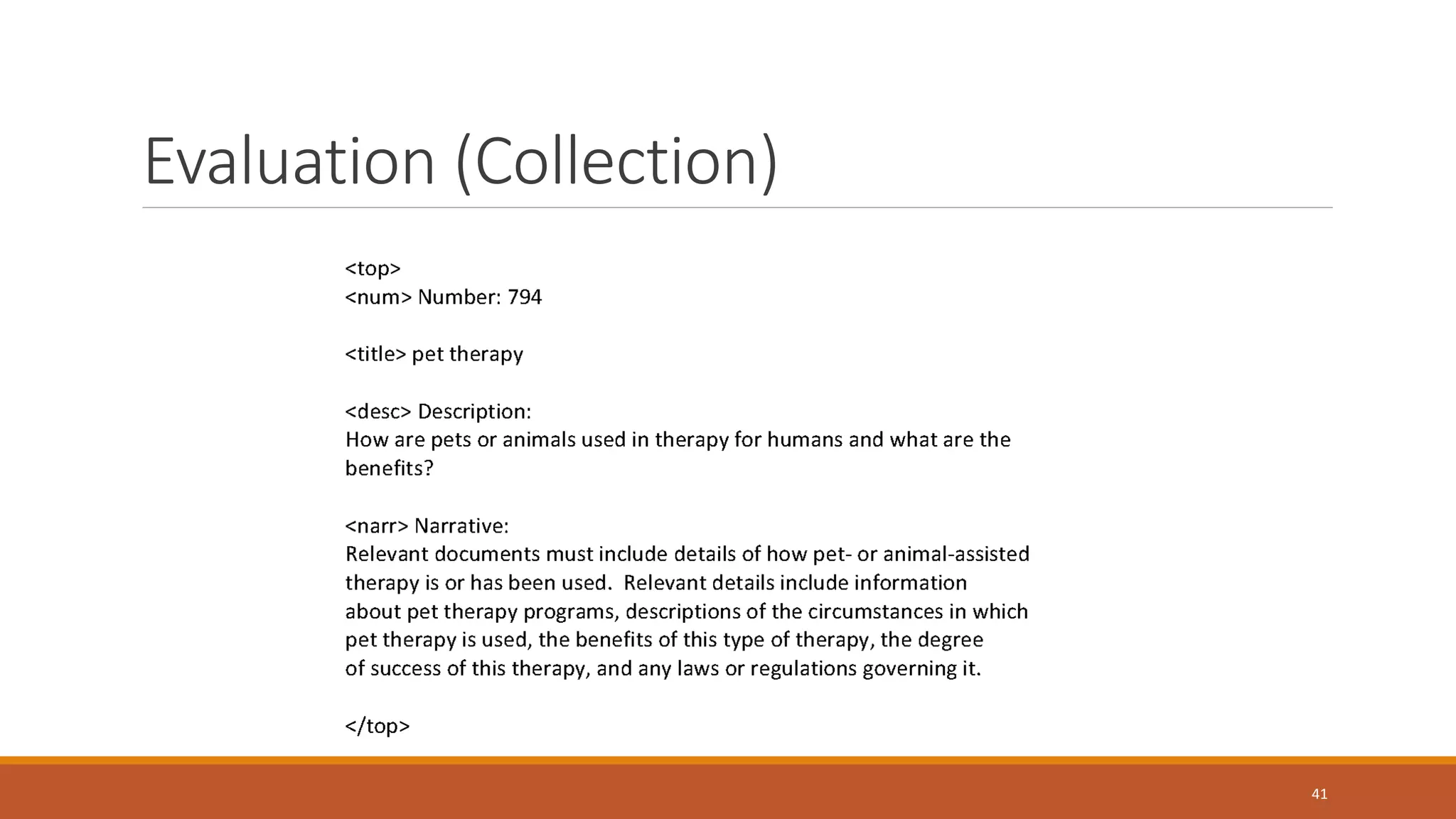

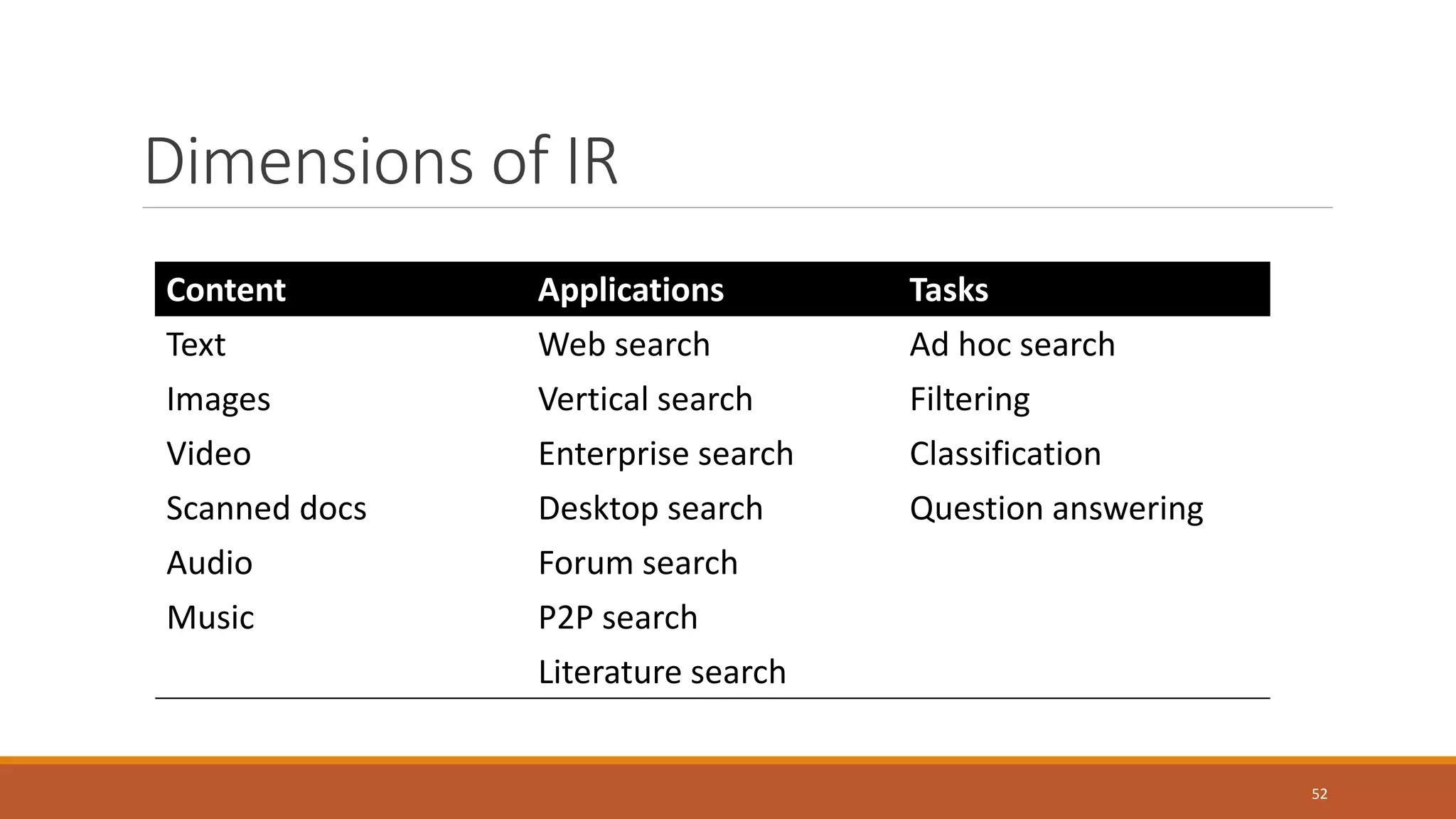

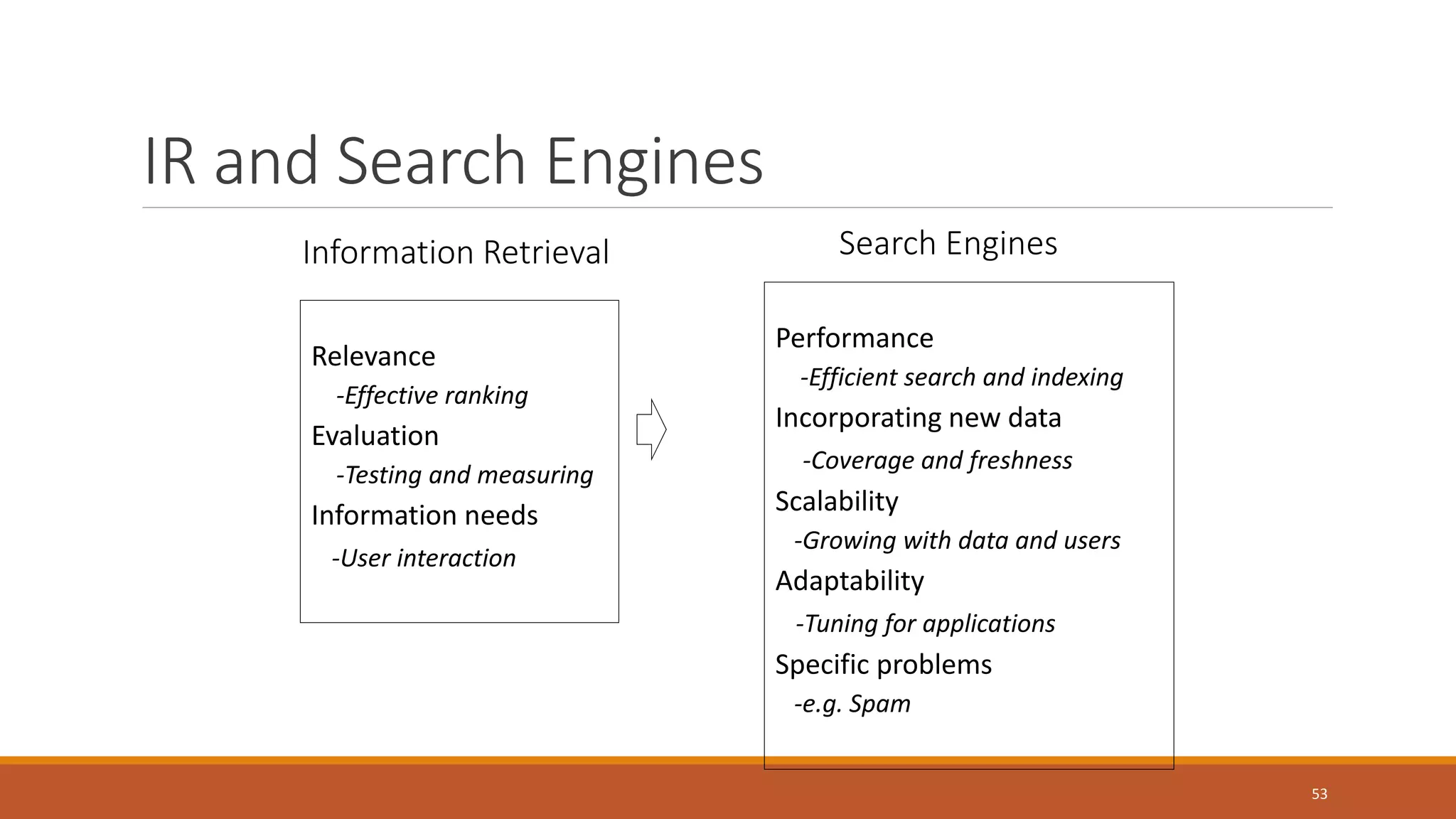

This document is a guest lecture on information retrieval (IR). It covers document representation, indexing methods, retrieval functions, and evaluation metrics, and it discusses open challenges in the field, including personalization and handling big data. Key definitions and practical applications of IR in search engines are highlighted throughout.