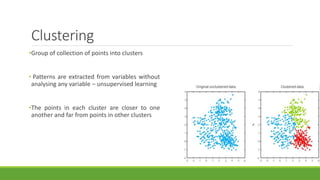

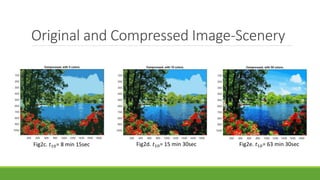

This document discusses image compression using the k-means clustering method, detailing the algorithm's process, cost function, and application in reducing image size by grouping similar pixel colors. It highlights the effectiveness and limitations of k-means, noting its utility in various fields such as medical imaging and remote sensing. The results and performance metrics of the compression on different images are also presented.