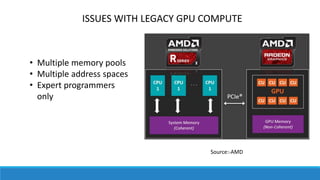

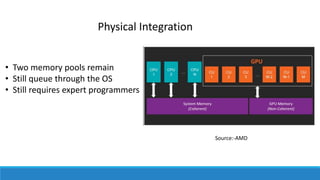

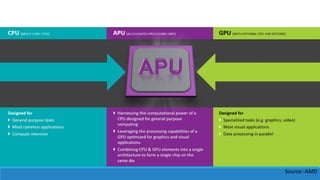

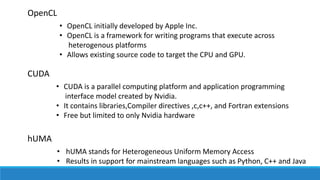

Heterogeneous computing refers to systems that use more than one type of processor or core, such as CPUs and GPUs. The Heterogeneous System Architecture (HSA) integrates CPUs and GPUs on the same bus so that they share both memory and tasks. HSA aims to reduce communication latency between devices and to make them easier to program together. Programming models used on heterogeneous systems include OpenCL and CUDA, while hUMA (heterogeneous Uniform Memory Access) is the shared-memory design that lets the CPU and GPU address the same memory. Heterogeneous computing is used in platforms such as smartphones, laptops, game consoles, and AMD APUs. Compared with traditional CPU-only designs, it can offer higher performance, lower cost, and better battery life. However, discrete CPUs and GPUs can still deliver more raw computing power, and new software models are needed to take advantage of heterogeneous hardware.