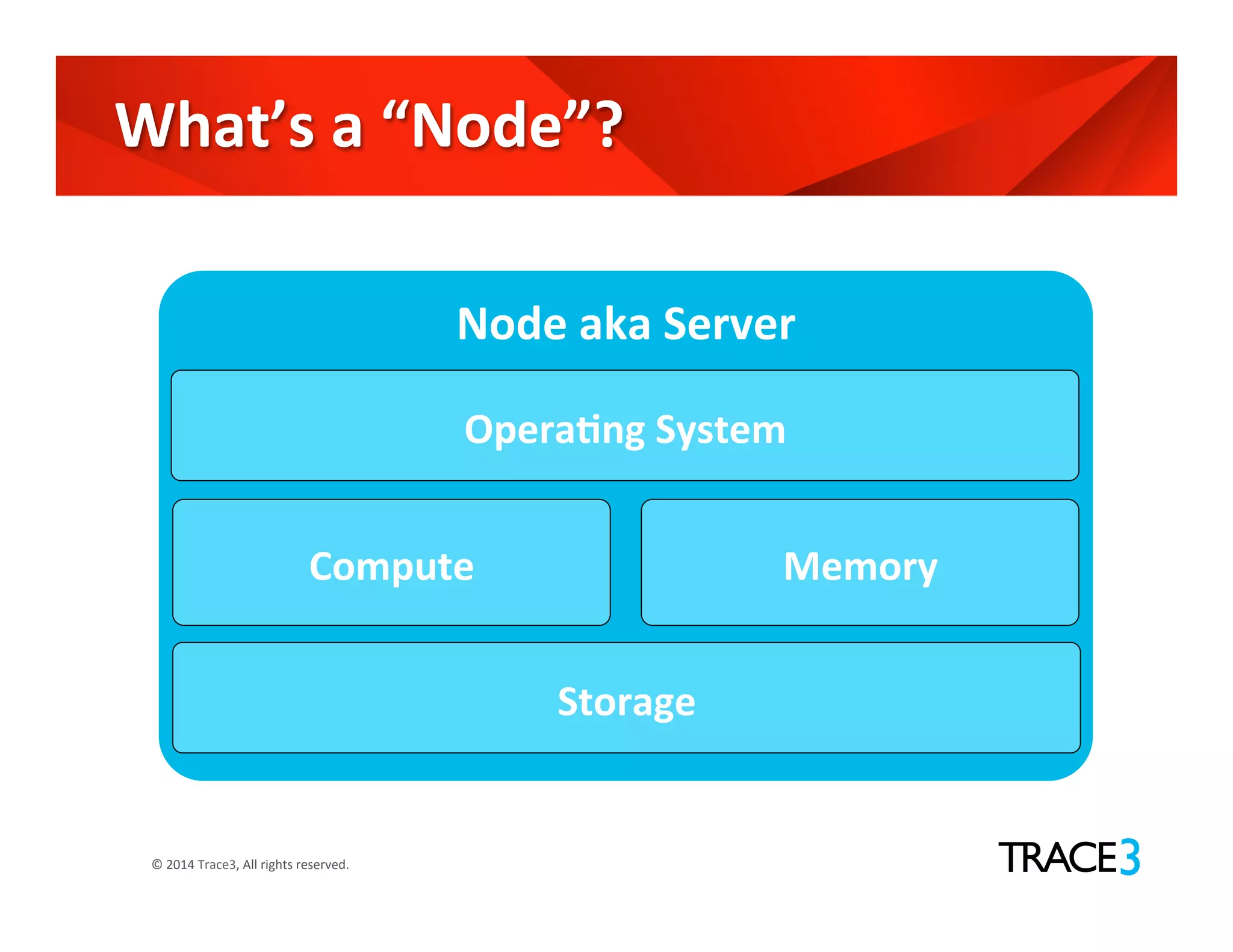

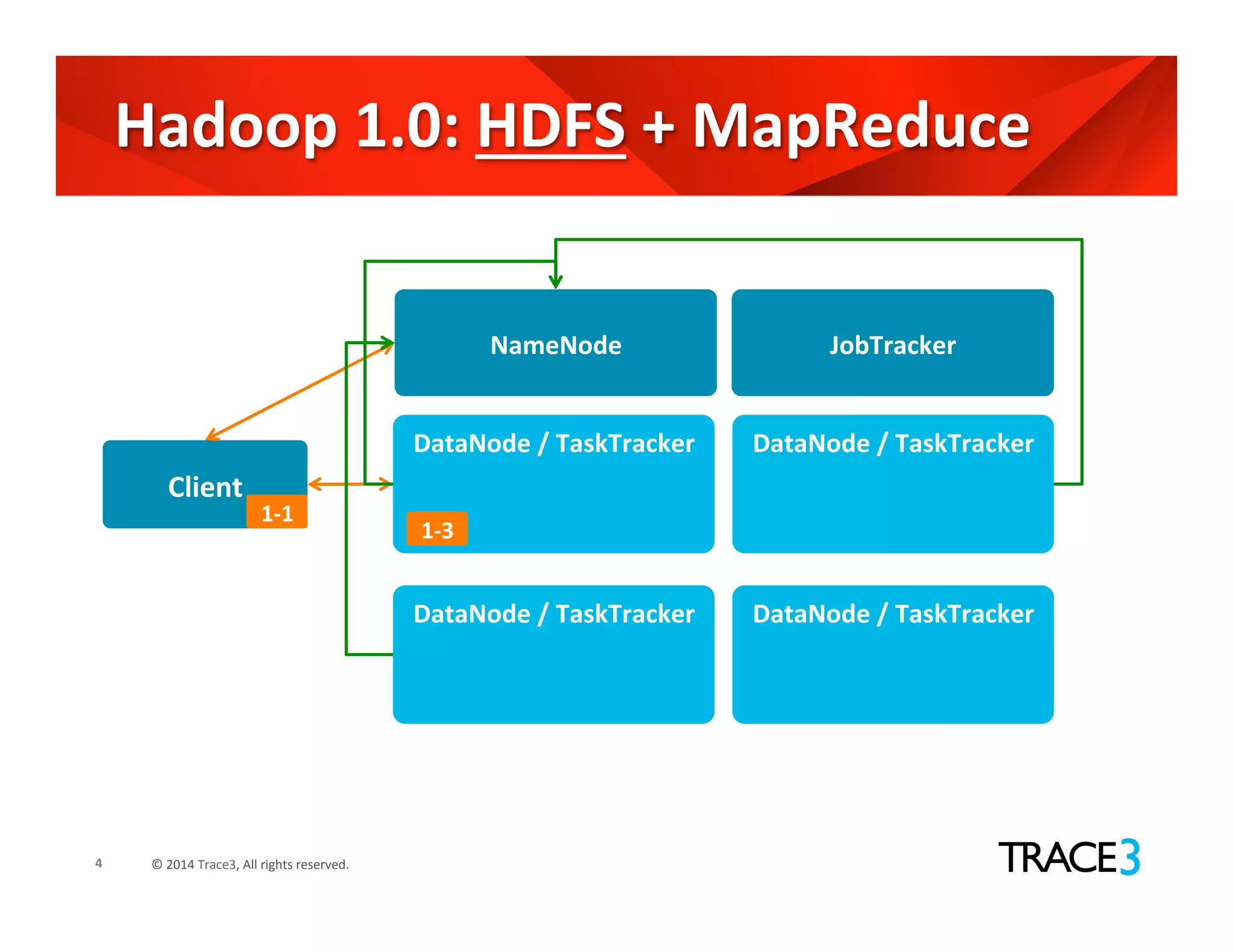

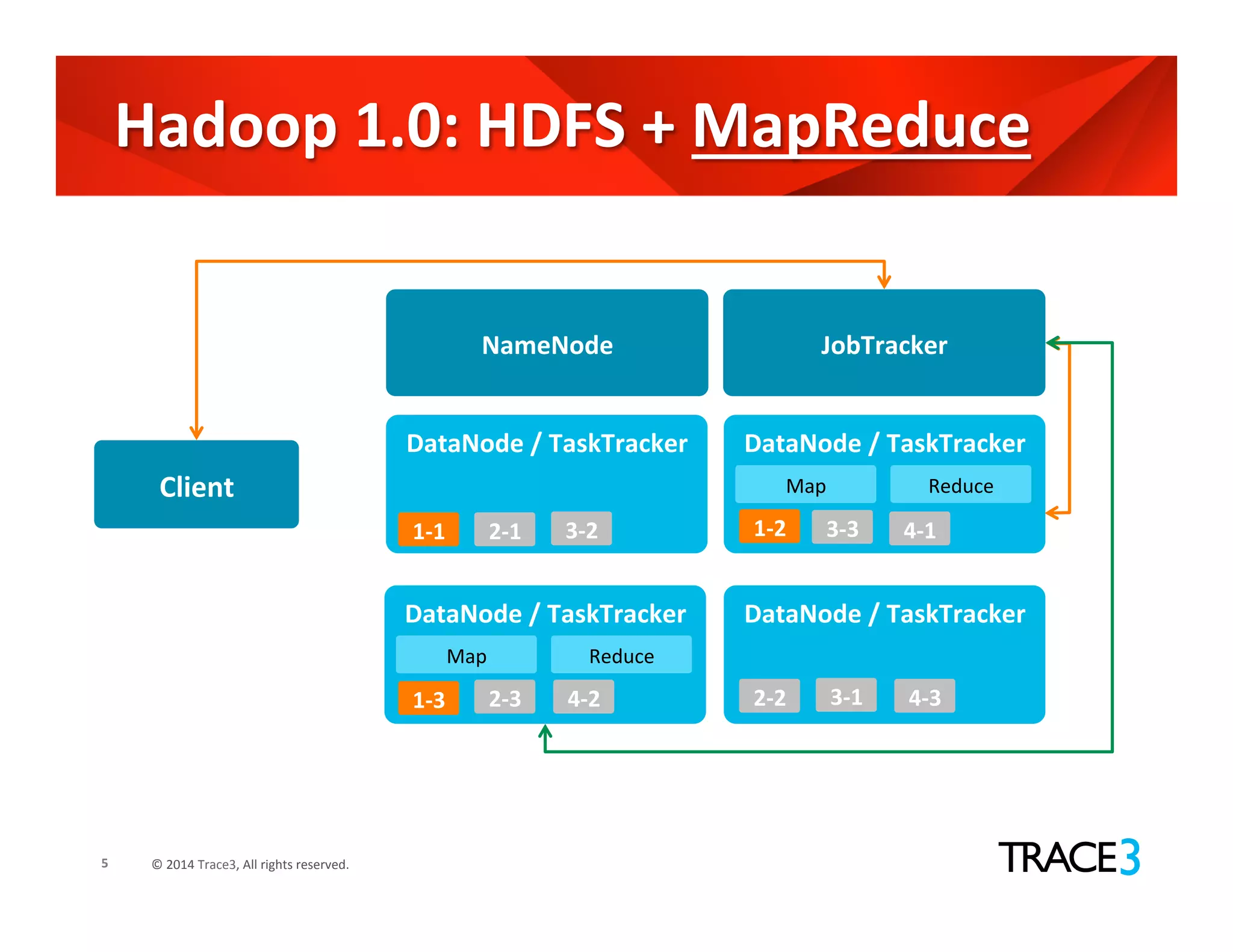

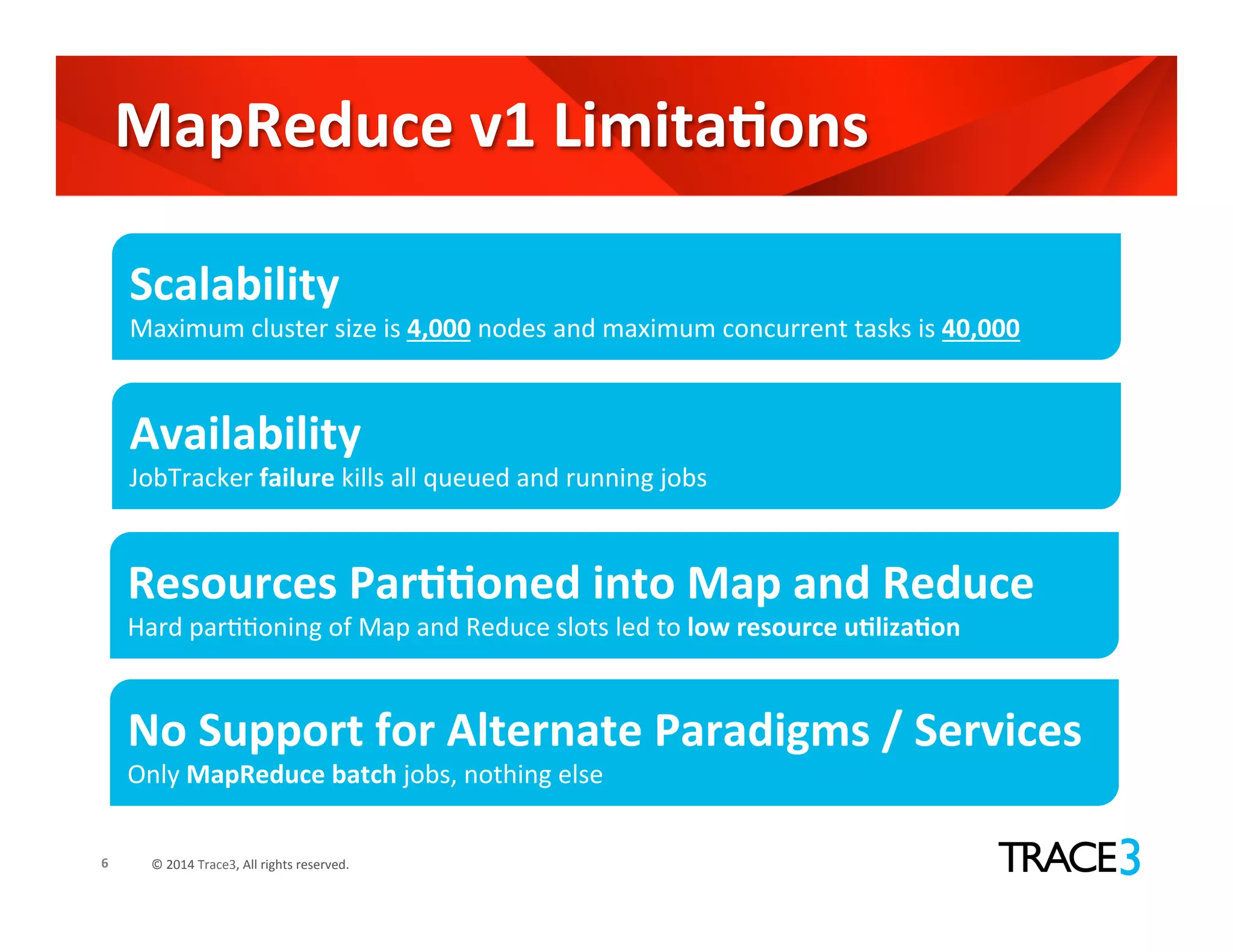

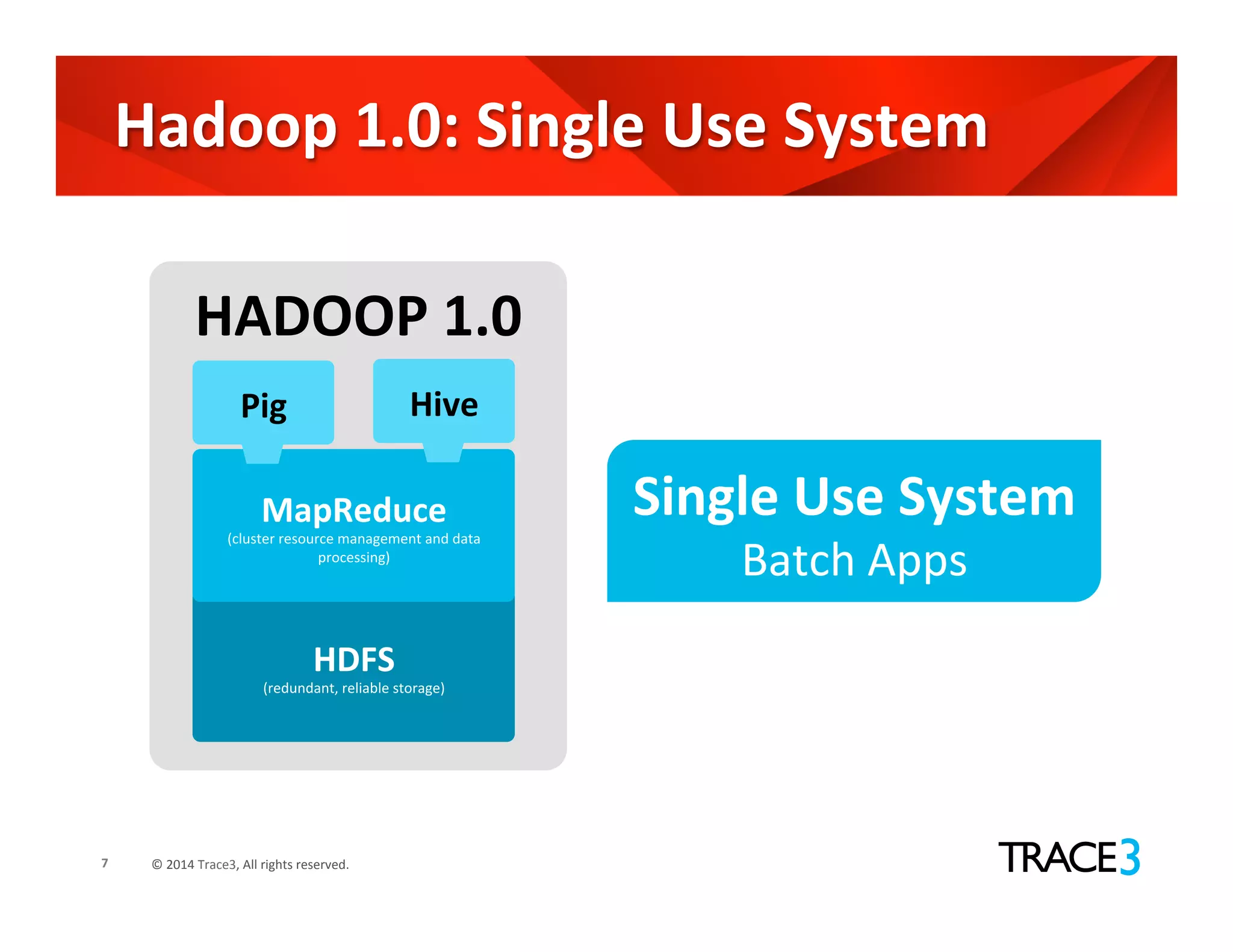

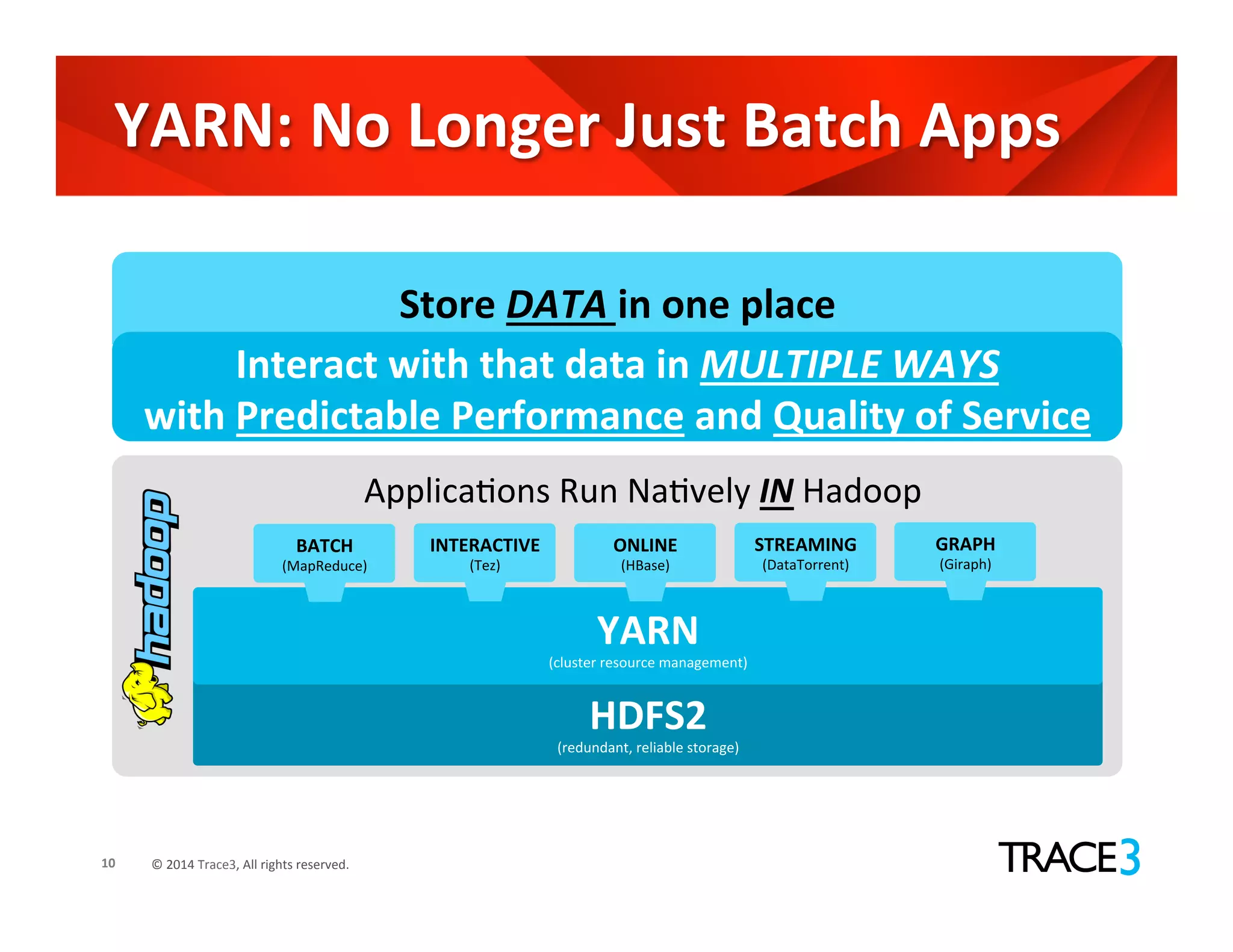

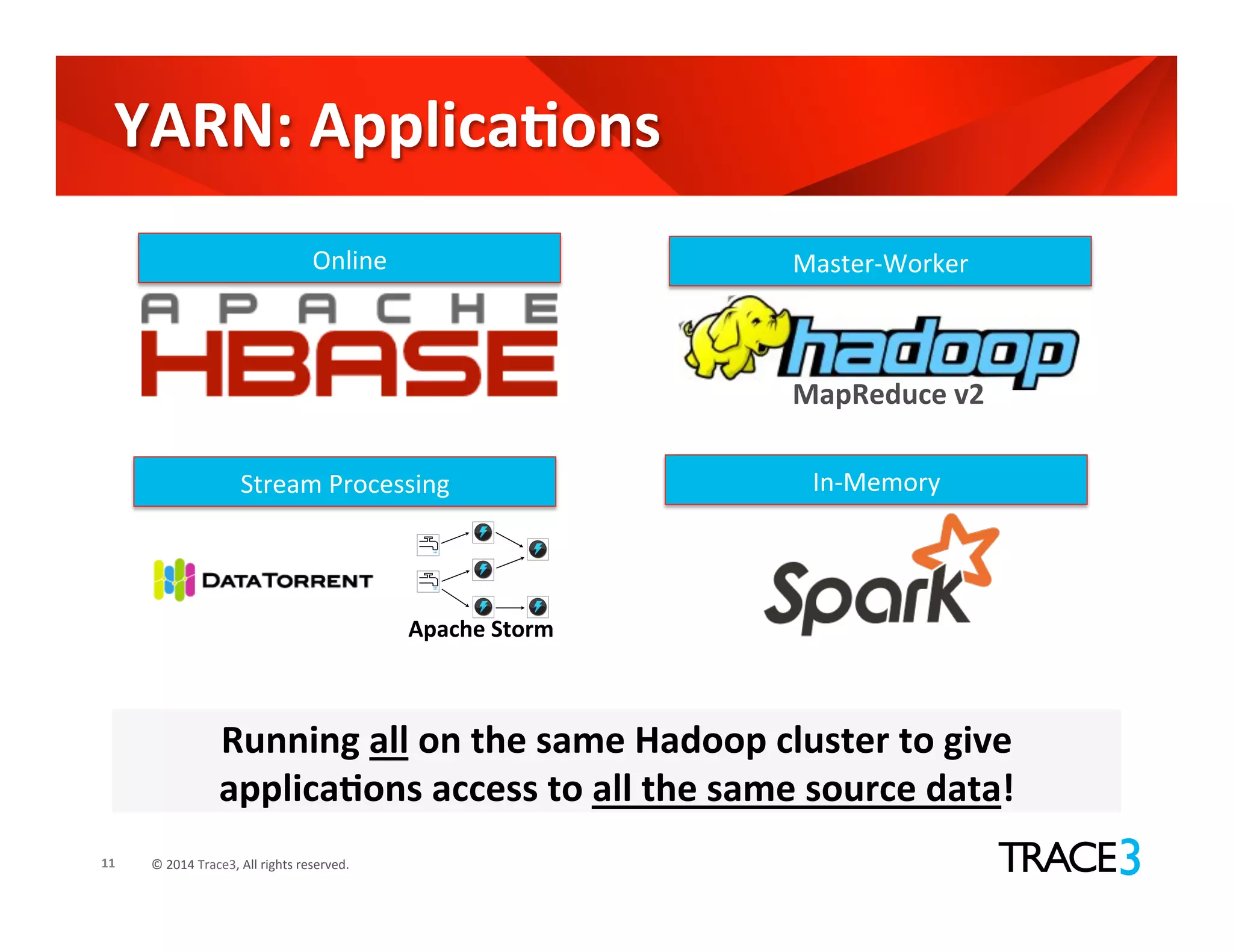

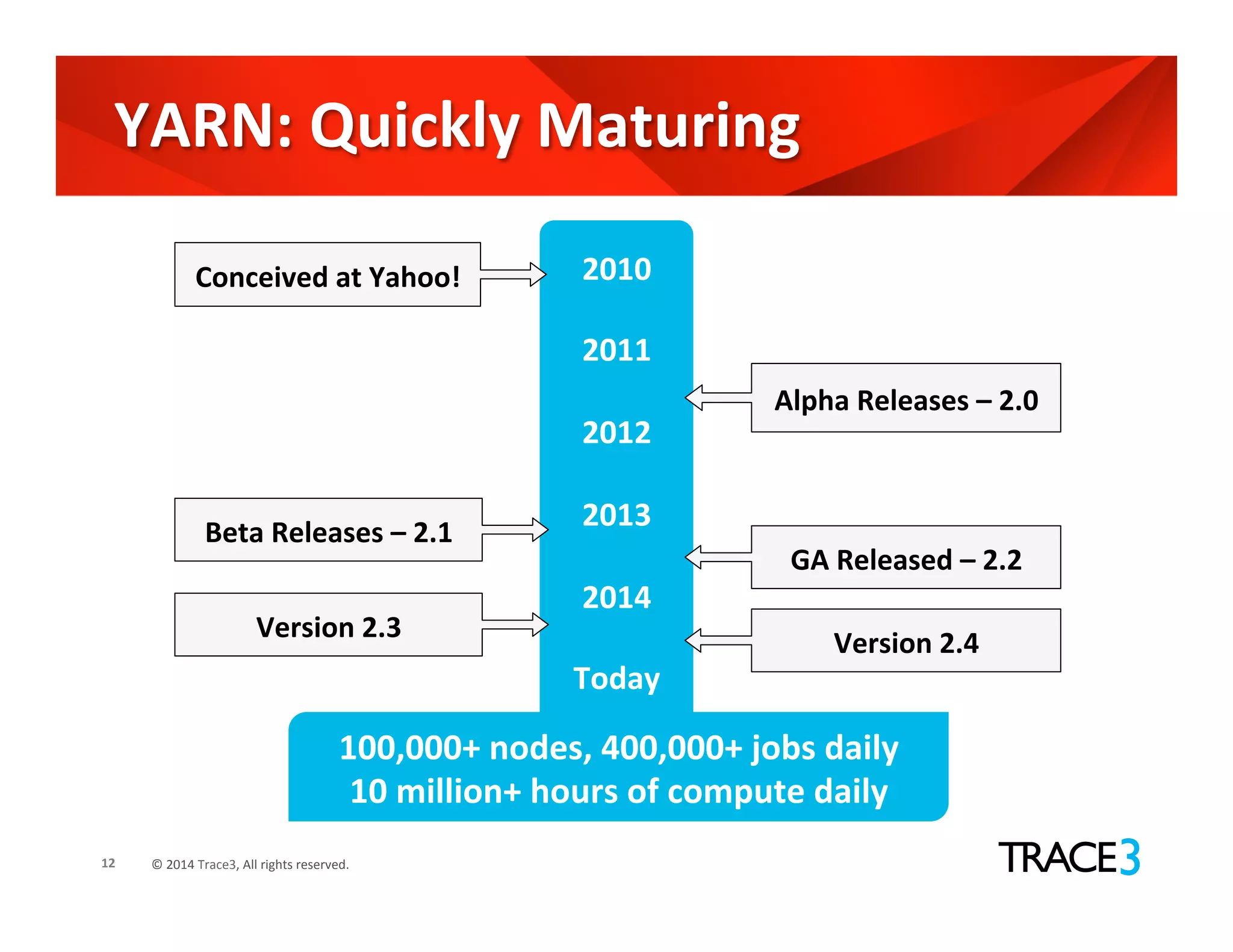

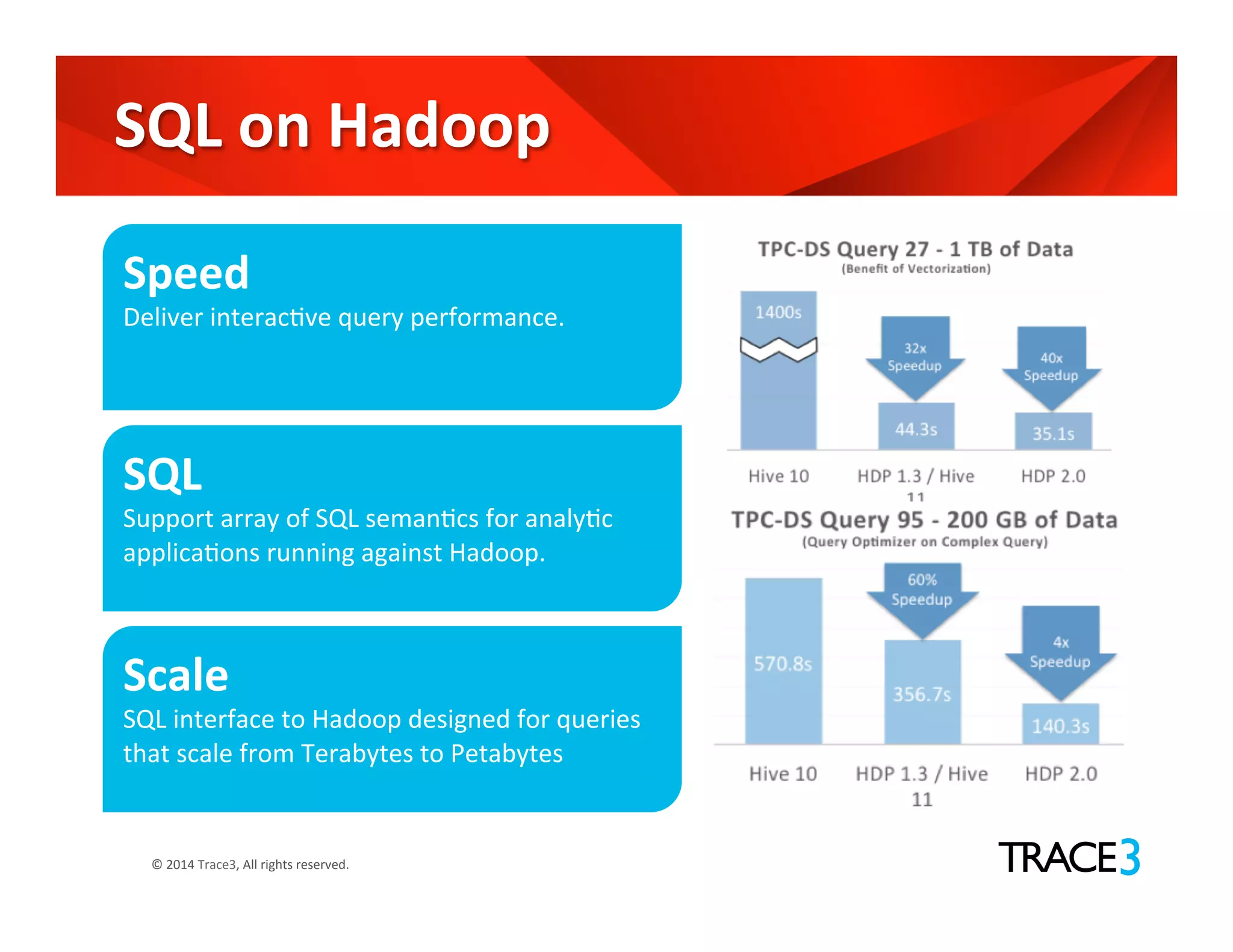

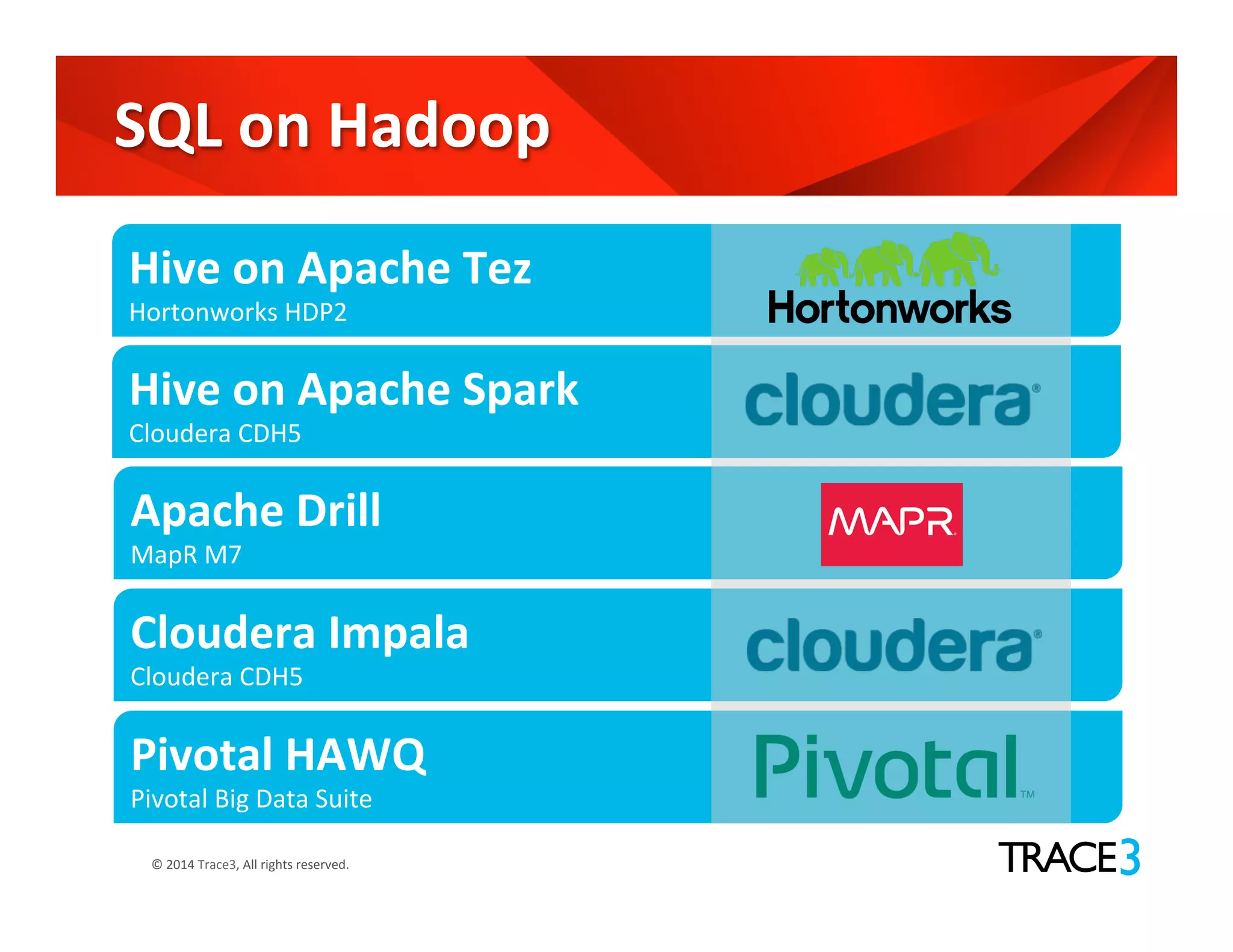

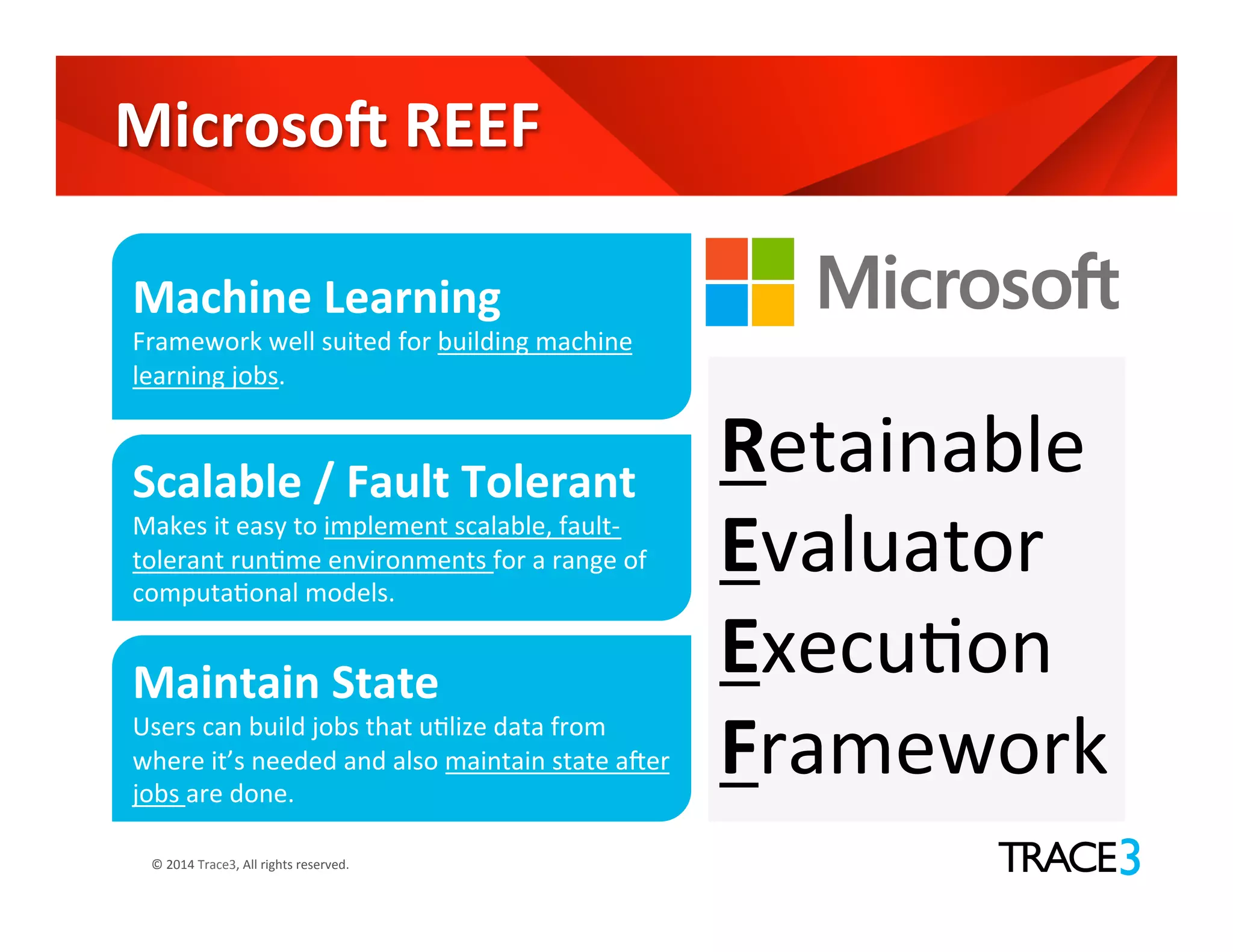

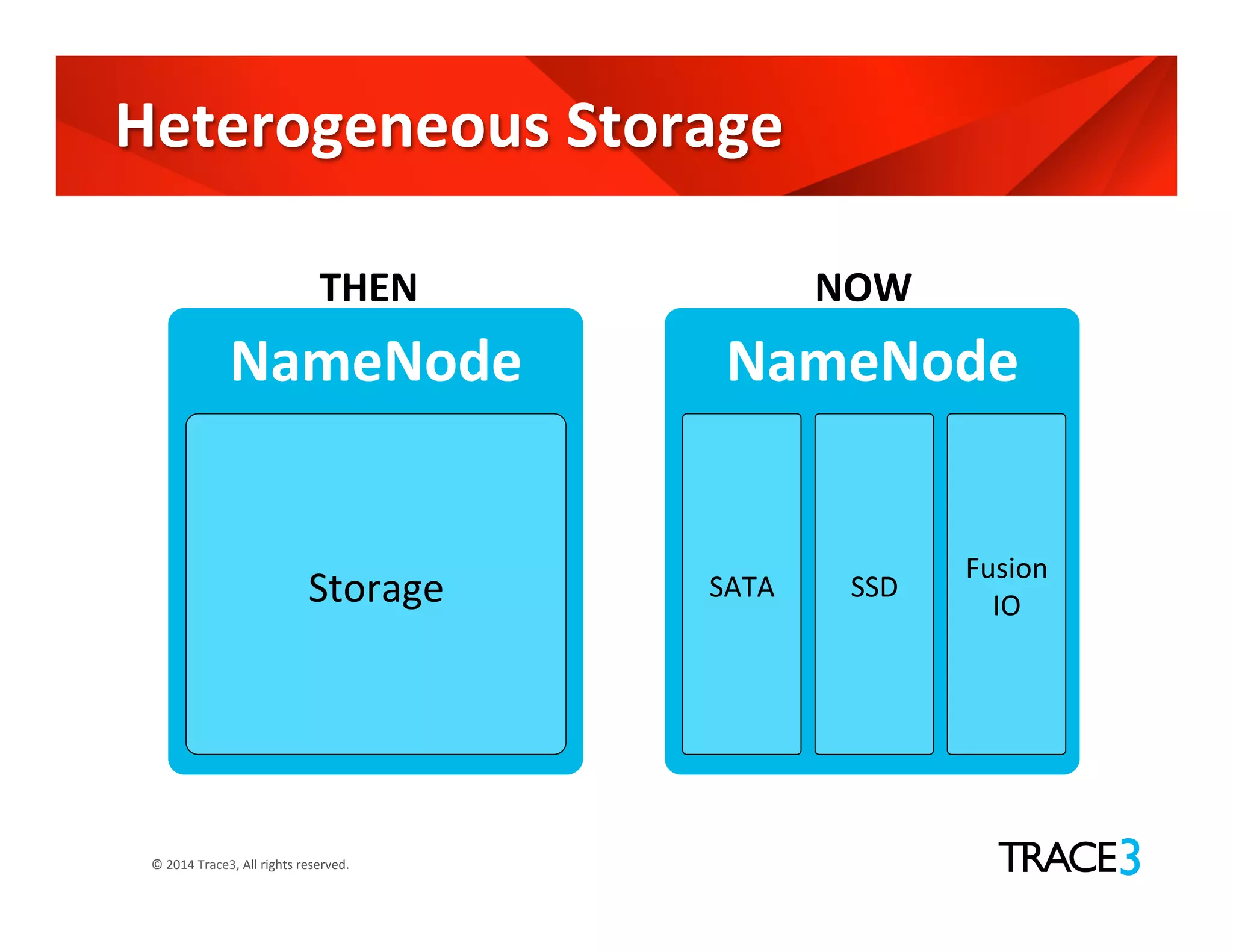

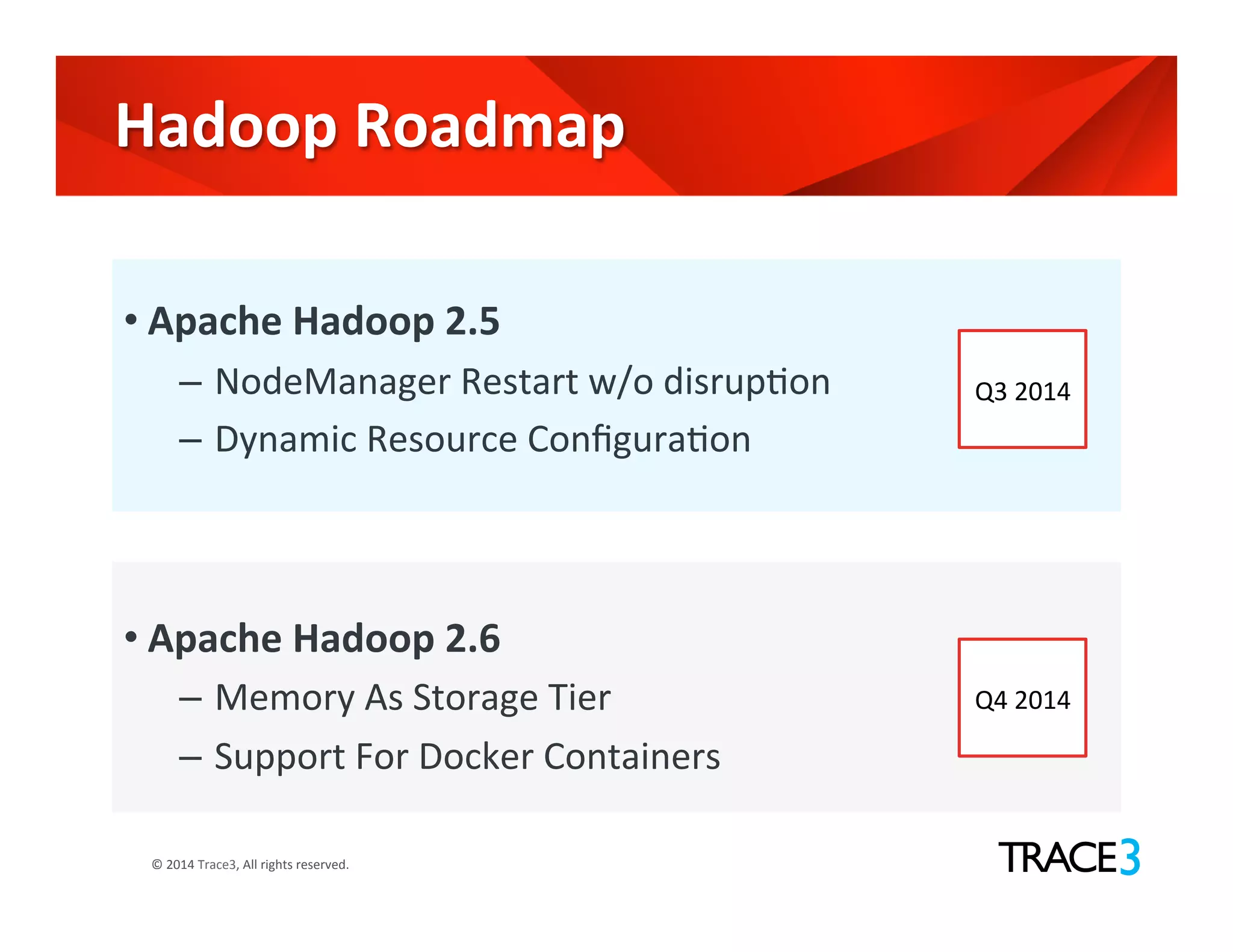

The document traces the evolution of the Hadoop platform and highlights the differences between Hadoop 1.x and 2.x, chiefly the introduction of YARN (Yet Another Resource Negotiator), which separates cluster resource management from the MapReduce processing engine. It outlines the limitations of Hadoop 1.x and the new capabilities and programming models that Hadoop 2.x makes available. It also looks ahead to future directions for Hadoop, such as SQL support and integration with machine learning frameworks.