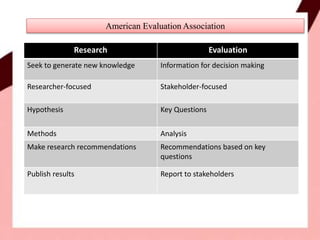

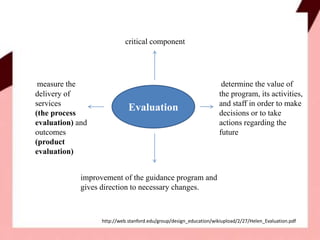

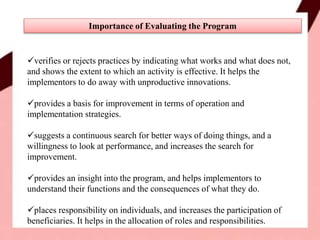

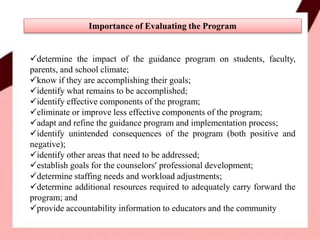

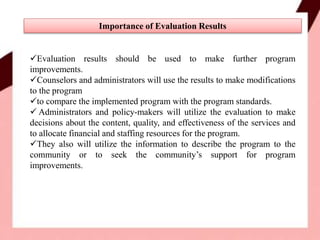

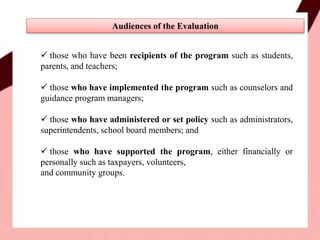

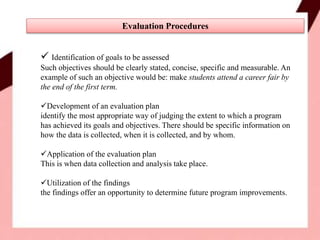

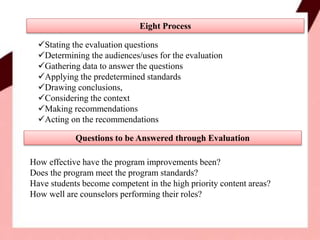

The document discusses the importance of evaluating guidance programs. It defines evaluation as determining a program's value and effectiveness by measuring both its outcomes and its processes. Evaluation matters because it helps improve programs, verify practices, identify which components are effective and which are not, and provide accountability information. Counselors, administrators, and policymakers should use evaluation results to modify programs and allocate resources.