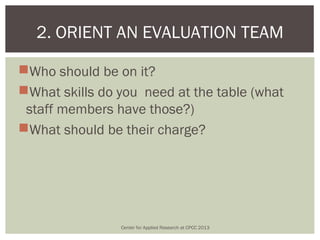

An orientation for an evaluation team should cover the following key points:

- Purpose and scope of the evaluation

- Roles and responsibilities of team members

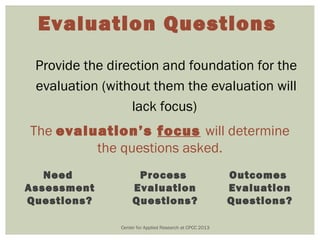

- Evaluation questions and intended uses of findings

- Important stakeholders and how they will be engaged

- Evaluation design, methodology, and data collection procedures

- Analysis plan and timeline for delivering findings

- Resources and support available to the team

- Expectations for team communication and collaboration

The team should include staff with skills in research design, data collection and management, and statistical analysis, as well as experience with the program or population being evaluated. Regular team meetings are important for tracking progress and addressing issues as they arise.

Center for Applied Research at CPCC 2013

3. DEVELOP A