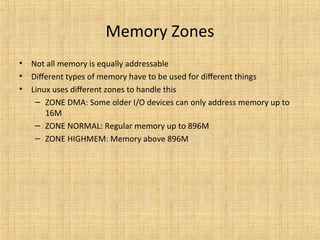

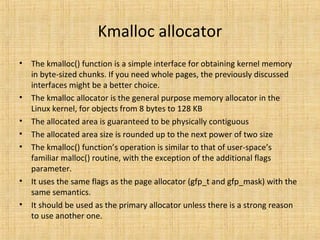

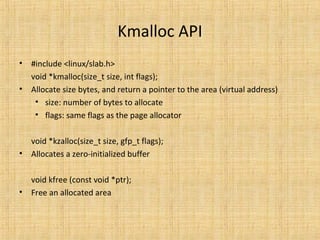

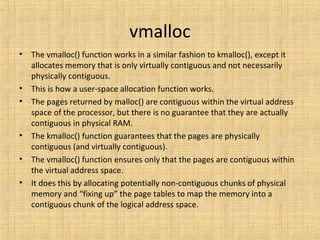

1. The document covers how the Linux kernel manages memory: physical and virtual memory, page tables, and the kernel's allocators (kmalloc, vmalloc, and the SLAB allocator) used to obtain memory inside the kernel.

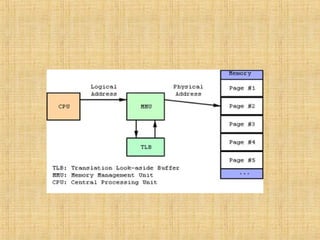

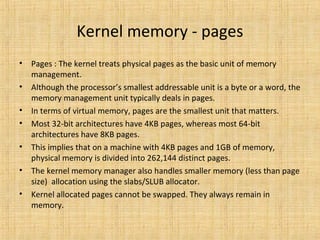

2. It explains physical versus virtual addresses, the page tables that map virtual addresses to physical memory, and the Memory Management Unit (MMU), the hardware that performs virtual-to-physical address translation using those page tables.
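To make the translation step concrete, here is a toy Python model of what the MMU does on a lookup. It assumes a 32-bit address space with 4 KiB pages and a two-level table (10-bit directory index, 10-bit table index, 12-bit offset), roughly like classic 32-bit x86 without PAE. The class and method names are hypothetical, and real 64-bit kernels use four or five levels plus permission bits that this sketch omits.

```python
# Toy model of MMU address translation (assumed layout: 32-bit addresses,
# 4 KiB pages, 10-bit directory index | 10-bit table index | 12-bit offset).
# Names are illustrative, not kernel APIs.
PAGE_SHIFT = 12
PAGE_SIZE = 1 << PAGE_SHIFT
INDEX_BITS = 10
INDEX_MASK = (1 << INDEX_BITS) - 1


class PageFault(Exception):
    """Raised when no valid mapping exists for a virtual address."""


class TwoLevelPageTable:
    def __init__(self):
        # Page directory: directory index -> page table,
        # where each page table maps table index -> physical frame number.
        self.directory = {}

    def map_page(self, vaddr: int, pfn: int) -> None:
        """Map the virtual page containing vaddr to physical frame pfn."""
        dir_index = vaddr >> (PAGE_SHIFT + INDEX_BITS)
        table_index = (vaddr >> PAGE_SHIFT) & INDEX_MASK
        self.directory.setdefault(dir_index, {})[table_index] = pfn

    def translate(self, vaddr: int) -> int:
        """Walk directory -> table -> frame, then re-attach the page offset."""
        dir_index = vaddr >> (PAGE_SHIFT + INDEX_BITS)
        table_index = (vaddr >> PAGE_SHIFT) & INDEX_MASK
        offset = vaddr & (PAGE_SIZE - 1)
        table = self.directory.get(dir_index)
        if table is None or table_index not in table:
            raise PageFault(hex(vaddr))
        return (table[table_index] << PAGE_SHIFT) | offset


pt = TwoLevelPageTable()
pt.map_page(0x00403000, pfn=0x1A2)
print(hex(pt.translate(0x00403ABC)))  # 0x1a2abc
```

Only the page number is translated; the low 12 offset bits pass through unchanged. An unmapped address raises `PageFault`, which is roughly the point where a real kernel's page-fault handler would take over.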

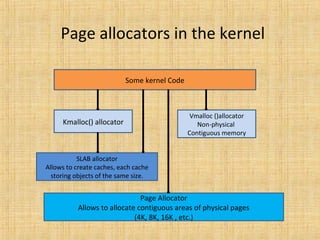

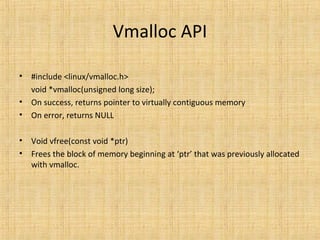

3. The allocator is chosen according to the size and properties of the memory needed: kmalloc and the SLAB allocator return physically contiguous memory, while vmalloc guarantees only virtual contiguity, mapping physical pages that may be scattered into one contiguous virtual range.
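The contiguity difference can be sketched with a toy frame allocator in Python. The names (`FrameAllocator`, `alloc_contiguous`, `vmalloc_like`) and the virtual base address are hypothetical, and the contiguous path uses a simple first-fit scan rather than the kernel's buddy allocator. The point is only that fragmented physical memory can refuse a physically contiguous request while still satisfying a virtually contiguous one.

```python
# Toy model contrasting physical vs. virtual contiguity. Frame numbers,
# function names and the virtual base address are illustrative only,
# not the kernel's real API; first-fit stands in for the buddy allocator.
PAGE_SIZE = 4096


class FrameAllocator:
    def __init__(self, nframes: int, used=()):
        self.free = [True] * nframes
        for pfn in used:
            self.free[pfn] = False

    def alloc_contiguous(self, count: int):
        """kmalloc-style: needs `count` physically adjacent free frames."""
        run = 0
        for pfn, is_free in enumerate(self.free):
            run = run + 1 if is_free else 0
            if run == count:
                start = pfn - count + 1
                for p in range(start, pfn + 1):
                    self.free[p] = False
                return list(range(start, pfn + 1))
        return None  # enough free frames may exist, but no long enough run

    def alloc_scattered(self, count: int):
        """vmalloc-style backing: any free frames will do."""
        picked = [pfn for pfn, is_free in enumerate(self.free) if is_free][:count]
        if len(picked) < count:
            return None
        for pfn in picked:
            self.free[pfn] = False
        return picked


def vmalloc_like(frames: FrameAllocator, count: int, virt_base: int):
    """Map scattered frames to consecutive virtual pages; returns {vaddr: pfn}."""
    pfns = frames.alloc_scattered(count)
    if pfns is None:
        return None
    return {virt_base + i * PAGE_SIZE: pfn for i, pfn in enumerate(pfns)}


# 8 frames with every other one in use: 4 frames free, none adjacent.
frames = FrameAllocator(8, used=(0, 2, 4, 6))
print(frames.alloc_contiguous(2))  # None
mapping = vmalloc_like(frames, 2, virt_base=0x10000000)
print({hex(v): p for v, p in mapping.items()})  # {'0x10000000': 1, '0x10001000': 3}
```

The trade-off runs the other way too: each vmalloc-style allocation needs its own page-table entries, whereas kmalloc memory is already reachable through the kernel's direct mapping, which is why vmalloc is typically reserved for large buffers that do not need physical contiguity.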