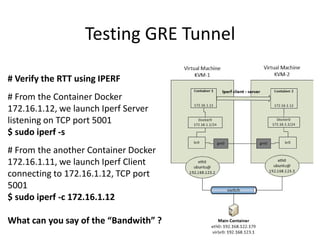

This document introduces Docker containers and provides examples of using Docker for networking containers across virtual machines. It discusses setting up a GRE tunnel between two VMs to connect their Docker interfaces and allow containers running on different VMs to communicate. Specific commands are provided to configure the Docker and overlay networks on each VM, establish the GRE tunnel, and run a sample container to test the connectivity.
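The tunnel step described above could be sketched as follows. This is one possible approach using an iproute2 `gretap` interface attached to Docker's default `docker0` bridge, not necessarily the commands used in the slides. The IP addresses and the interface name `gretap1` are hypothetical placeholders. The script only prints the commands unless `DRY_RUN=0` is set, since the real commands need root and change host networking.

```shell
#!/bin/sh
# Sketch only: addresses and the tunnel interface name are hypothetical.
LOCAL_IP="${LOCAL_IP:-192.0.2.10}"    # this VM's address (assumed)
REMOTE_IP="${REMOTE_IP:-192.0.2.20}"  # the peer VM's address (assumed)
TUNNEL_IF="${TUNNEL_IF:-gretap1}"     # hypothetical tunnel interface name
BRIDGE="${BRIDGE:-docker0}"           # Docker's default bridge

# Print each command by default; set DRY_RUN=0 to execute it (requires root).
run() {
    if [ "${DRY_RUN:-1}" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

setup_gre() {
    # Create a layer-2 GRE (gretap) interface pointing at the peer VM.
    run ip link add "$TUNNEL_IF" type gretap local "$LOCAL_IP" remote "$REMOTE_IP"
    # Bring the tunnel interface up.
    run ip link set "$TUNNEL_IF" up
    # Attach the tunnel to the Docker bridge so container traffic can cross it.
    run ip link set "$TUNNEL_IF" master "$BRIDGE"
}

setup_gre
```

On the second VM the same sketch would run with `LOCAL_IP` and `REMOTE_IP` swapped. Because this bridges the two hosts at layer 2, it assumes the containers on both VMs sit in the same subnet with addresses that do not collide.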

![Working with Containers](https://image.slidesharecdn.com/docker-final-intrig-20141210-150228124430-conversion-gate02/85/Docker-2014-28-320.jpg)

$ sudo docker .... [version]

– What the docker client can do

– More: https://docs.docker.com/reference/commandline/cli/

$ sudo docker run -d -P training/webapp python app.py

– Let’s inspect, stop, rm, top, ps, …
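The subcommands named on this slide can be strung together into a short container lifecycle. The sketch below assumes a hypothetical container name, `web`, passed with `--name` so that later commands can refer to the container without copying its ID. It prints the commands by default; set `DRY_RUN=0` to run them against a real Docker daemon.

```shell
#!/bin/sh
NAME="${NAME:-web}"   # hypothetical container name, not from the slides

# Print each command by default; set DRY_RUN=0 to execute it.
run() {
    if [ "${DRY_RUN:-1}" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

lifecycle() {
    # Start the sample web app detached (-d), publishing exposed ports (-P).
    run sudo docker run -d -P --name "$NAME" training/webapp python app.py
    run sudo docker ps               # list running containers
    run sudo docker top "$NAME"      # show processes inside the container
    run sudo docker inspect "$NAME"  # dump the container's configuration as JSON
    run sudo docker stop "$NAME"     # stop the container
    run sudo docker rm "$NAME"       # remove the stopped container
}

lifecycle
```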

![Authoring images with a Dockerfile](https://image.slidesharecdn.com/docker-final-intrig-20141210-150228124430-conversion-gate02/85/Docker-2014-39-320.jpg)

FROM ubuntu

RUN apt-get -y update

RUN apt-get install -y g++

RUN apt-get install -y make wget

EXPOSE 80

CMD ["/bin/ping"]

docker build -t AUTHOR/DOCKER-NAME .
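As a hedged variation on the slide's Dockerfile, the sketch below writes the file to disk and then builds it. It merges the three `RUN` lines into one so that the package index update and the installs share a layer. It also adds `iputils-ping` on the assumption that the base image may not ship `ping`. The image name `author/docker-name` is a lowercase stand-in for the slide's placeholder (Docker repository names must be lowercase), and the build directory is hypothetical. The build command is only printed unless `DRY_RUN=0` is set.

```shell
#!/bin/sh
IMAGE="${IMAGE:-author/docker-name}"              # placeholder image name
BUILD_DIR="${BUILD_DIR:-./docker-build-example}"  # hypothetical build directory

# Print each command by default; set DRY_RUN=0 to execute it.
run() {
    if [ "${DRY_RUN:-1}" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

mkdir -p "$BUILD_DIR"

# Write the Dockerfile; the quoted 'EOF' prevents shell expansion inside it.
cat > "$BUILD_DIR/Dockerfile" <<'EOF'
FROM ubuntu
# A single RUN keeps the index update and the installs in the same layer.
# iputils-ping is an addition, assumed necessary for the CMD below.
RUN apt-get update && apt-get install -y g++ make wget iputils-ping
EXPOSE 80
CMD ["/bin/ping"]
EOF

# Build the image from the directory that holds the Dockerfile.
run sudo docker build -t "$IMAGE" "$BUILD_DIR"
```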