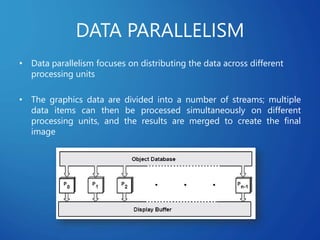

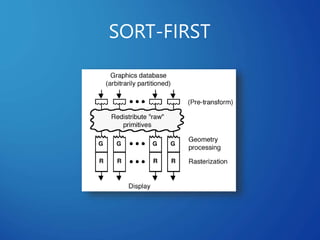

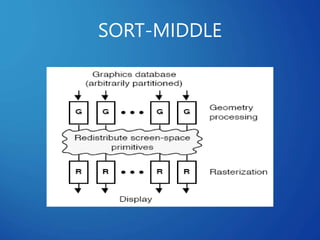

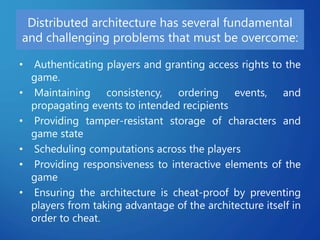

This document discusses distributed systems applications in real life, including three key areas: distributed rendering in computer graphics, peer-to-peer networks, and massively multiplayer online gaming. It describes how distributed rendering parallelizes graphics processing across multiple computers. Peer-to-peer networks are defined as decentralized networks where nodes act as both suppliers and consumers of resources. Examples of peer-to-peer applications include file sharing and content delivery networks. The document also outlines the challenges of designing multiplayer online games using a distributed architecture rather than a traditional client-server model.