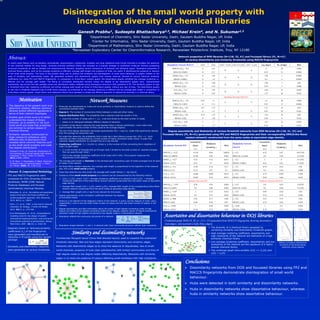

Disintegration of the small world property with increasing diversity of chemical libraries


Authors: Ganesh Prabhu, Sudeepto Bhattacharya, Michael Krein, N. Sukumar (ORCID: 0000-0002-2724-9944). Full paper in J. Math. Chem. 54(10), 1916-1941 (2016).


Recommended

Usage of word sense disambiguation in concept identification in ontology cons... (Innovation Quotient Pvt Ltd)

The document discusses using word sense disambiguation (WSD) in concept identification for ontology construction. It describes implementing an approach that forms concepts from terms by meeting certain criteria, such as having an intentional definition and instances. WSD is needed to identify the sense of terms related to the domain when forming concepts. The Lesk algorithm is discussed as one method for WSD and concept disambiguation, involving calculating similarity between terms and WordNet senses. Evaluation shows the approach identified domain-specific concepts with reasonable precision and recall compared to other methods. Choosing the best WSD algorithm depends on factors like the problem nature and performance metrics.

CORRELATION AND REGRESSION ANALYSIS FOR NODE BETWEENNESS CENTRALITY

In this paper, we seek a computationally light centrality metric that could serve as an alternative to the computationally heavy betweenness centrality (BWC) metric. In the first half of the paper, we evaluate the correlation coefficient between BWC and other commonly used centrality metrics such as Degree Centrality (DEG), Closeness Centrality (CLC), Farness Centrality (FRC), Clustering Coefficient Centrality (CCC) and Eigenvector Centrality (EVC). We observe BWC to be highly correlated with DEG for synthetic networks generated with the Erdos-Renyi model (for random networks) and the Watts-Strogatz model (for small-world networks). In the second half of the paper, we conduct a regression analysis of BWC against a recently proposed centrality metric called the localized clustering coefficient complement-based degree centrality (LCC'DC) for a suite of 47 real-world networks. The R-squared metric and correlation coefficient for the LCC'DC-BWC regression are observed to be appreciably greater than those for the DEG-BWC regression. We also observe that the LCC'DC-BWC regression incurs a relatively lower standard error of residuals for a majority of the real-world networks.
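The kind of comparison this abstract describes can be illustrated with a minimal sketch, assuming an unweighted, undirected graph stored as an adjacency dictionary. The function names `betweenness` and `pearson` are hypothetical and not taken from the paper; the betweenness routine follows Brandes' algorithm, and the example graph is a toy path rather than an Erdos-Renyi or Watts-Strogatz network.

```python
from collections import deque
from math import sqrt


def betweenness(adj):
    """Unnormalised betweenness via Brandes' algorithm.

    adj maps each node to a list of neighbours (undirected, unweighted).
    Each unordered pair of endpoints is counted once.
    """
    bc = {v: 0.0 for v in adj}
    for s in adj:
        # Breadth-first search from s: shortest-path counts and predecessors.
        dist = {s: 0}
        sigma = {v: 0 for v in adj}
        sigma[s] = 1
        preds = {v: [] for v in adj}
        order = []
        queue = deque([s])
        while queue:
            v = queue.popleft()
            order.append(v)
            for w in adj[v]:
                if w not in dist:
                    dist[w] = dist[v] + 1
                    queue.append(w)
                if dist[w] == dist[v] + 1:
                    sigma[w] += sigma[v]
                    preds[w].append(v)
        # Accumulate pair dependencies in reverse BFS order.
        delta = {v: 0.0 for v in adj}
        for w in reversed(order):
            for v in preds[w]:
                delta[v] += sigma[v] / sigma[w] * (1.0 + delta[w])
            if w != s:
                bc[w] += delta[w]
    # Every unordered pair was seen from both endpoints, so halve the totals.
    return {v: b / 2.0 for v, b in bc.items()}


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    if vx == 0 or vy == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / sqrt(vx * vy)


if __name__ == "__main__":
    # Toy example: a path graph on five nodes.
    path = {0: [1], 1: [0, 2], 2: [1, 3], 3: [2, 4], 4: [3]}
    bc = betweenness(path)
    degrees = [len(path[v]) for v in sorted(path)]
    print(pearson(degrees, [bc[v] for v in sorted(path)]))
```

Degree costs one pass over the adjacency lists, whereas betweenness needs a full breadth-first search from every node, which is why a cheap metric that correlates well with it is attractive.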

Textual Data Partitioning with Relationship and Discriminative Analysis

Data partitioning methods are used to partition data values by similarity. Similarity measures are used to estimate transaction relationships. The hierarchical clustering model produces tree-structured results, while partitioned clustering produces results in grid format. Text documents are unstructured data values with high-dimensional attributes. Document clustering groups unlabeled text documents into meaningful clusters. Traditional clustering methods require a cluster count (K) for the document grouping process, and clustering accuracy degrades drastically when an unsuitable cluster count is used.

Textual data elements are divided into two types: discriminative words and nondiscriminative words. Only discriminative words are useful for grouping documents. The involvement of nondiscriminative words confuses the clustering process and leads to a poor clustering solution.

A variational inference algorithm is used to infer the document collection structure and the partition of document words at the same time. The Dirichlet Process Mixture (DPM) model is used to partition documents. The DPM clustering model uses both the data likelihood and the clustering property of the Dirichlet Process (DP). The Dirichlet Process Mixture Model for Feature Partition (DPMFP) is used to discover the latent cluster structure based on the DPM model. DPMFP clustering is performed without requiring the number of clusters as input.

Document labels are used to guide the discriminative word identification process. Concept relationships are analyzed with ontology support. A semantic weight model is used for the document similarity analysis. The system improves scalability by using labels and concept relations in the dimensionality reduction process.

An Opportunistic AODV Routing Scheme : A Cognitive Mobile Agents Approach

In MANETs, dynamics and robustness are the key features of the nodes and are governed by routing protocols such as AODV and DSR. However, growing resource demand in the network leads to resource scarcity. Node mobility often leads to link breakages and high routing overhead, decreasing the stability and reliability of network connectivity. In this context, the paper proposes a novel opportunistic AODV routing scheme that uses a cognitive-agent-based intelligent technique to set up stable connectivity over the MANET. The scheme computes the routing metric (rf) from the collaboration sensitivity levels of the nodes, obtained through a knowledge-based decision. This routing metric is then used to set up a stable path for network connectivity, which minimizes route overhead and increases the stability of the path. The performance of the scheme is evaluated and validated in comparison with the AODV and sleep AODV routing protocols.

An Entity-Driven Recursive Neural Network Model for Chinese Discourse Coheren...

Chinese discourse coherence modeling remains a challenging task in the Natural Language Processing field. Existing approaches mostly focus on feature engineering, adopting sophisticated features to capture the logical, syntactic, or semantic relationships across sentences within a text. In this paper, we present an entity-driven recursive deep model for Chinese discourse coherence evaluation, based on a current English discourse coherence neural network model. Specifically, to overcome the current model's shortcoming in identifying entity (noun) overlap across sentences, our combined model successfully integrates entity information into the recursive neural network framework. Evaluation results on both sentence ordering and machine translation coherence rating tasks show the effectiveness of the proposed model, which significantly outperforms the existing strong baseline.

Important spreaders in networks: exact results on small graphs

To be able to control spreading phenomena (like the spreading of diseases and information) in networks, it is important to identify influential spreaders. What "important" means depends on what is spreading and what kinds of countermeasures are available. In this work, we let the susceptible-infected-removed (SIR) model represent the spreading dynamics and contrast three different definitions of importance: influence maximization (the expected outbreak size given a set of seed nodes), the effect of vaccination (how much deleting nodes would reduce the expected outbreak size) and sentinel surveillance (how early an outbreak could be detected with sensors at a set of nodes). We calculate the exact expressions of these quantities, as functions of the SIR parameters, for all connected graphs of three to seven nodes. We obtain the smallest graphs where the optimal node sets are not overlapping. We find that node separation is more important than centrality for more than one active node, that vaccination and influence maximization are the most different aspects of importance, and that the three aspects are more similar when the infection rate is low. Furthermore, we discuss similar approaches to study the extinction times in the susceptible-infected-susceptible model.

NAMED ENTITY RECOGNITION IN TURKISH USING ASSOCIATION MEASURES

Named Entity Recognition, an important subject of Natural Language Processing, is a key technology for information extraction, information retrieval, question answering and other text processing applications. In this study, we evaluate well-established association measures as an initial attempt to extract two-word named entities in a Turkish corpus. Furthermore, we propose a new association measure and compare it with the other methods. The methods are evaluated by precision and recall.

TOPIC EXTRACTION OF CRAWLED DOCUMENTS COLLECTION USING CORRELATED TOPIC MODEL...

The tremendous increase in the amount of available research documents impels researchers to propose topic models to extract the latent semantic themes of a document collection. However, extracting the hidden topics of a document collection has become a crucial task for many topic model applications. Moreover, conventional topic modeling approaches suffer from a scalability problem as the size of the document collection increases. In this paper, the Correlated Topic Model (CTM) with the variational Expectation-Maximization algorithm is implemented in the MapReduce framework to solve the scalability problem. The proposed approach uses a dataset crawled from a public digital library. In addition, the full texts of the crawled documents are analysed to enhance the accuracy of MapReduce CTM. Experiments are conducted to demonstrate the performance of the proposed algorithm. In the evaluation, the proposed approach performs comparably, in terms of topic coherence, with LDA implemented in the MapReduce framework.

SIMILARITY ANALYSIS OF DNA SEQUENCES BASED ON THE CHEMICAL PROPERTIES OF NUCL...

DNA sequence similarity analysis approaches have been based on the representation and frequency of sequence components; however, the position inside the sequence is also important information for sequence data. Insufficient information in sequence representations is an important cause of poor similarity results. Based on three classifications of the DNA bases according to their chemical properties, the frequencies and average positions of group mutations are collected into two twelve-component vectors, and the Euclidean distances among these vectors are used to compare the coding sequences of the first exon of the beta-globin gene of 11 species.

Dimensionality Reduction Techniques for Document Clustering- A Survey

Dimensionality reduction techniques are applied to get rid of inessential terms, such as redundant and noisy terms, in documents. In this paper, a systematic study is conducted of seven dimensionality reduction methods: Latent Semantic Indexing (LSI), Random Projection (RP), Principal Component Analysis (PCA), CUR decomposition, Latent Dirichlet Allocation (LDA), Singular Value Decomposition (SVD), and Linear Discriminant Analysis (LDA).

Important spreaders in networks: Exact results for small graphs

The document discusses exact calculations of node importance in network epidemiology for small graphs. It examines three types of node importance: influence maximization, vaccination, and sentinel surveillance. The goal is to find the smallest graph where all three notions of importance differ for different nodes. Symbolic algebra and fast computational methods are used to efficiently calculate outbreak probabilities and expected times for all small graph topologies to identify cases where the important nodes are not the same.

A Novel Dencos Model For High Dimensional Data Using Genetic Algorithms

Subspace clustering is an emerging task that aims at detecting clusters entrenched in subspaces. Recent approaches fail to reduce results to relevant subspace clusters. Their results are typically highly redundant, and they do not consider a critical problem, “the density divergence problem,” in discovering the clusters: they use an absolute density value as the density threshold to identify the dense regions in all subspaces. Considering the varying region densities in different subspace cardinalities, we note that a more appropriate way to determine whether a region in a subspace should be identified as dense is to compare its density with the region densities in that subspace. Based on this idea, and because previous techniques cannot feasibly be applied to this novel clustering model, we devise an innovative algorithm, referred to as DENCOS (DENsity COnscious Subspace clustering), which adopts a divide-and-conquer scheme to efficiently discover clusters satisfying different density thresholds in different subspace cardinalities. DENCOS can discover the clusters in all subspaces with high quality, and its efficiency significantly outperforms previous works, as validated by our extensive experiments on a retail dataset, thus demonstrating its practicability for subspace clustering. We extend our work with a clustering technique based on genetic algorithms that is capable of optimizing the number of clusters for tasks with well-formed and well-separated clusters.

A paradox of importance in network epidemiology

Talk at the International Conference on Computational Social Science, Helsinki, June 9, 2015. On YouTube here (Plenary II): https://www.youtube.com/channel/UCUGsbLwL4G2CQQfk95oZjVw

2018 algorithms for the minmax regret path problem with interval data

This document summarizes a research paper that studies the Minmax Regret Path Problem with interval data. The paper presents a new exact branch and cut algorithm for solving this problem and also proposes new heuristics, including a local search heuristic and a simulated annealing metaheuristic that uses a novel neighborhood structure. Computational experiments on benchmark instances are conducted to analyze the performance of the different algorithms and approaches. The results provide an assessment of the algorithms and show the superiority of the simulated annealing approach for finding good solutions to large problem instances.

Spreading processes on temporal networks

This document discusses temporal networks and how temporal structures can impact dynamical processes on networks. It begins by describing different types of temporal networks including person-to-person communication, information dissemination, physical proximity, and cellular biology networks. It then discusses methods for analyzing temporal network structures like inter-event times and how bursty or heavy-tailed distributions can slow spreading compared to memory-less processes. The document also presents examples of how neutralizing temporal structures like inter-event times or beginning/end times can impact spreading simulations. Finally, it discusses how different temporal network datasets exhibit diverse temporal structures.

Exploiting rhetorical relations to

Much previous research has shown that rhetorical relations can enhance many applications such as text summarization, question answering and natural language generation. This work proposes an approach that extends the benefit of rhetorical relations to address the redundancy problem in cluster-based text summarization of multiple documents. We exploit the rhetorical relations that exist between sentences to group similar sentences into multiple clusters and so identify themes of common information. The candidate summaries are extracted from these clusters. Cluster-based text summarization is then performed using the Conditional Markov Random Walk Model to measure the saliency scores of the candidate summaries. We evaluate our method by measuring the cohesion and separation of the clusters constructed by exploiting rhetorical relations, and the ROUGE score of the generated summaries. The experimental results show that our method performs well, which indicates the promise of applying rhetorical relations in text clustering to benefit text summarization of multiple documents.

2224d_final

This document summarizes research applying deep learning techniques to predict epigenomic enhancer regions from DNA sequences. Four models - variations of Basset, DeepSea, DanQ, and a custom Greenside-Basset model - were trained on a dataset from the NIH Roadmap Epigenomics Mapping Consortium to label 1000 base pair sequences as active or inactive for 57 cell types. The Basset variation achieved the best balanced accuracy of 72.8% and had the highest precision and F1 scores, though it still weighted negative examples heavily, as precision was not strong. Experimenting with different embeddings, loss functions, and architectures helped narrow the models best suited for this sequence labeling task.

Identifying the Coding and Non Coding Regions of DNA Using Spectral Analysis

This paper presents a new method for exon detection in DNA sequences based on multi-scale parametric spectral analysis. Identification and analysis of hidden features of coding and non-coding regions of DNA sequences is a challenging problem in genomics. The objective of this paper is to estimate and compare the spectral content of coding and non-coding segments of a DNA sequence by both parametric and non-parametric methods. In this context, identification of protein-coding regions (exons) in DNA sequences has attracted great interest over the past few decades. These coding regions can be identified by exploiting the period-3 property present in them. The discrete Fourier transform has commonly been used as a spectral estimation technique to extract the period-3 patterns present in a DNA sequence. Consequently, an attempt has been made to bring to light some hidden internal properties of the DNA sequence in order to distinguish coding regions from non-coding ones. In this approach, DNA sequences from various Homo sapiens genes were selected as test samples and assigned numerical values based on weak-strong hydrogen bonding (WSHB) before digital signal analysis techniques were applied.
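The period-3 idea can be illustrated with a minimal sketch of the non-parametric (DFT) side only. It assumes a simple mapping of the sequence to four binary indicator sequences, one per base, rather than the WSHB mapping used in the paper; the function names `spectral_power` and `period3_power` are hypothetical.

```python
import cmath


def spectral_power(seq, k):
    """Sum over the bases A, C, G, T of |X_base[k]|^2.

    X_base[k] is the DFT coefficient at frequency index k of the binary
    indicator sequence that is 1 wherever `seq` contains that base.
    """
    n = len(seq)
    total = 0.0
    for base in "ACGT":
        coeff = sum(
            cmath.exp(-2j * cmath.pi * k * i / n)
            for i, b in enumerate(seq)
            if b == base
        )
        total += abs(coeff) ** 2
    return total


def period3_power(seq):
    """Spectral power at frequency index N/3, i.e. a period of three bases."""
    return spectral_power(seq, len(seq) / 3)


if __name__ == "__main__":
    periodic = "ATG" * 30  # artificial sequence with exact period 3
    print(period3_power(periodic))      # large peak at N/3
    print(spectral_power(periodic, 7))  # close to zero away from N/3
```

In practice the power at N/3 is usually computed in a sliding window along the sequence, so that windows with a pronounced peak can be flagged as candidate coding regions.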

A SURVEY ON SIMILARITY MEASURES IN TEXT MINING

The volume of text resources in digital libraries and on the internet has been increasing, and organizing these text documents has become a practical need. Clustering techniques are used to organize a large number of objects automatically into a small number of coherent groups. These documents are widely used for information retrieval and natural language processing tasks. Clustering algorithms require a metric for quantifying how dissimilar two given documents are. This difference is often measured with a similarity measure such as Euclidean distance or cosine similarity. The similarity measurement process in text mining can be used to identify the suitable clustering algorithm for a specific problem. This survey discusses existing work on text similarity by partitioning it into three significant approaches: string-based, knowledge-based and corpus-based similarities.
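The two measures named in this abstract, Euclidean distance and cosine similarity, can be illustrated with a minimal sketch. It assumes documents are represented as raw term-count vectors obtained by whitespace tokenisation, a simplification chosen for illustration and not something the survey prescribes; the function names are hypothetical.

```python
from collections import Counter
from math import sqrt


def term_counts(text):
    """Lower-case, whitespace-tokenised bag of words."""
    return Counter(text.lower().split())


def cosine_similarity(a, b):
    """Cosine of the angle between two term-count vectors (0.0 if either is empty)."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = sqrt(sum(v * v for v in a.values()))
    norm_b = sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def euclidean_distance(a, b):
    """Straight-line distance between two term-count vectors."""
    # Counter returns 0 for terms missing from one of the documents.
    return sqrt(sum((a[t] - b[t]) ** 2 for t in a.keys() | b.keys()))


if __name__ == "__main__":
    d1 = term_counts("graph theory graph")
    d2 = term_counts("graph theory")
    print(cosine_similarity(d1, d2), euclidean_distance(d1, d2))
```

Cosine similarity ignores document length and compares only the direction of the vectors, whereas Euclidean distance grows with differences in raw counts, which is one reason the choice of measure interacts with the choice of clustering algorithm.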

Graphical Structure Learning accelerated with POWER9

Prof. Arghya Das of the University of Wisconsin - Platteville presented this as part of the 3-day international summit using OpenPOWER systems.

International Journal of Computer Science and Security Volume (2) Issue (5)

The document proposes a new method to calculate the distance matrix for phylogenetic tree construction in less computational time compared to traditional multiple sequence alignment methods. The method estimates the score of aligning two sequences in O(m+n) time using a 4-step scanning algorithm, where m and n are the lengths of the sequences. It then generates the distance matrix from the score matrix using the Feng-Doolittle formula. A phylogenetic tree is constructed from the distance matrix using the neighbor-joining algorithm. The method is tested on datasets of BChE sequences from mammals and bacteria, and the trees are compared to those generated by ClustalX.

A Novel Framework for Short Tandem Repeats (STRs) Using Parallel String Matching

This document presents a novel framework for identifying Short Tandem Repeats (STRs) using parallel string matching. It begins with background on STRs and challenges with existing sequential algorithms. It then describes a two-phase methodology - first applying a basic improved right prefix algorithm sequentially, then applying it in parallel using multi-threading on multicore processors. Results show the basic algorithm outperforms Boyer-Moore, Knuth-Morris-Pratt and brute force algorithms sequentially. When applied in parallel, processing time is reduced from 80ms sequentially to 40ms in parallel on multicore systems. The parallel STR identification framework allows efficient searching of repeats in large genomes.

Temporal Networks of Human Interaction

Temporal networks provide a framework for modeling systems of interactions that occur between nodes over time. These networks capture both the topological structure of connections as well as the timing of interactions. Three key aspects of temporal networks discussed in the document are:

1) Temporal networks can be represented using contact sequences that capture when interactions occur between nodes, unlike static networks which only represent connections.

2) The temporal structure of interactions, such as patterns in the timing of contacts, can impact dynamical processes unfolding on the network like information or disease spreading.

3) Randomizing the timing of contacts in empirical temporal network data can alter dynamical processes, highlighting the importance of temporal structure beyond just topology.

Improving the data recovery for short length LT codes

The Luby Transform (LT) code is considered an efficient erasure fountain code. The construction of the coded symbols is based on the degree distribution, which plays a significant role in ensuring a smooth decoding process. In this paper, we propose a new encoding scheme for LT code generation. This encoding uses deterministic degree generation (DDG) with a time-hopping pattern, which is found to be suitable for short data lengths, where the well-known Robust Soliton Distribution (RSD) suffers severe performance degradation. Computer simulations show that the proposed DDG leaves the fewest unrecovered data packets when compared with random degree distributions such as RSD and with non-uniform data selection (NUDS). This success translates into a decrease in the overhead required for data recovery to the order of 25% for a data length of 32 packets.

JMM_Poster_2015

The document describes a research project that aims to model the effects of latency on thalamocortical fast-spiking interneurons in schizophrenia. Specifically, it seeks to: 1) Model a thalamocortical feed-forward inhibitory circuit in response to spike latency; 2) Review neuron models to incorporate biological details; 3) Develop a mathematical model incorporating latency; 4) Simulate the latency model using MATLAB; and 5) Predict how properties of the circuit change with latency. The project develops a latency model based on existing neuron models and simulations, but notes that further model development is needed to fully capture latency effects.

Interpretation of the biological knowledge using networks approach

This document discusses using biological networks to analyze and interpret biological knowledge. It begins with an overview of networks as tools to reduce complexity and integrate data. Key properties of networks are described, including nodes, edges, degree distribution, clustering coefficient, and centrality measures. Methods for analyzing networks like community detection and network motifs are also covered. The document emphasizes that biological networks must be analyzed and interpreted based on their properties and by mapping relevant biological data to provide meaningful insights.

Application of graph theory in drug design

Graph theory is a branch of mathematics that studies networks and their structures. It began in 1736 when Euler solved the Konigsberg bridges problem. Graphs represent objects called nodes connected by relationships called edges. They are used to model many real-world applications. Similarity searching uses graph representations of molecules to identify structurally or pharmacologically similar compounds. Various molecular descriptors and similarity coefficients are used with substructure, reduced graph, and 3D methods. Data fusion combines results from multiple search methods to improve accuracy. Graph theory continues to be important for modeling complex systems and networks in science.

9517cnc01

"Kurtosis" has long been considered an appropriate measure to quantify the extent of fat-tailedness of the degree distribution of a complex real-world network. However, the Kurtosis values for more than one real-world network have not been studied in conjunction with other statistical measures that also capture the extent of variation in node degree. Also, the Kurtosis values of the distributions of other commonly studied centrality metrics for real-world networks have not been analyzed. In this paper, we determine the Kurtosis values for a suite of 48 real-world networks along with measures such as SPR(K), Max(K)-Min(K), Max(K)-Avg(K), and SD(K)/Avg(K), wherein SPR(K), Max(K), Min(K), Avg(K) and SD(K) represent the spectral radius ratio for node degree, maximum node degree, minimum node degree, average node degree and standard deviation of node degree, respectively. Contrary to the conceived notion in the literature, we observe that real-world networks whose degree distribution is Poisson in nature (characterized by lower values of SPR(K), Max(K)-Min(K), Max(K)-Avg(K), SD(K)/Avg(K)) could have Kurtosis values that are larger than those of real-world networks whose degree distribution is scale-free in nature (characterized by larger values of SPR(K), Max(K)-Min(K), Max(K)-Avg(K), SD(K)/Avg(K)). We also observe the Kurtosis values of the betweenness centrality distributions of the real-world networks to be more likely the largest among the Kurtosis values with respect to the commonly studied centrality metrics.

Socialnetworkanalysis (Tin180 Com)

Some key models of social network generation are discussed, including random graph models, Watts-Strogatz models, and scale-free networks. Scale-free networks can generate networks with few components, small diameters, and heavy-tailed degree distributions, but do not capture high clustering. Biological networks like metabolic and protein interaction networks also tend to be scale-free.

An Opportunistic AODV Routing Scheme : A Cognitive Mobile Agents Approach

1) The document proposes a novel opportunistic AODV routing scheme called COAODV that uses cognitive mobile agents to intelligently route packets and establish stable connectivity in mobile ad hoc networks (MANETs).

2) COAODV aims to minimize routing overhead and increase path stability by computing a routing metric based on nodes' collaboration sensitivity levels determined through knowledge-based decision making.

3) It evaluates the performance of COAODV compared to standard AODV and a sleep AODV protocol, finding that COAODV reduces route overhead and increases path stability.

More Related Content

What's hot

SIMILARITY ANALYSIS OF DNA SEQUENCES BASED ON THE CHEMICAL PROPERTIES OF NUCL...

Approaches to DNA sequence similarity analysis have been based on the representation and frequency of sequence components; however, position within the sequence is also important information for sequence data. Insufficient information in sequence representations is an important cause of poor similarity results. Based on three classifications of the DNA bases according to their chemical properties, the frequencies and average positions of group mutations are grouped into two twelve-component vectors, and the Euclidean distances among these vectors are applied to compare the coding sequences of the first exon of the beta-globin gene of 11 species.

Dimensionality Reduction Techniques for Document Clustering- A Survey

Abstract— Dimensionality reduction is applied to remove inessential terms, such as redundant and noisy terms, from documents. In this paper a systematic study is conducted of seven dimensionality reduction methods: Latent Semantic Indexing (LSI), Random Projection (RP), Principal Component Analysis (PCA), CUR decomposition, Latent Dirichlet Allocation (LDA), Singular Value Decomposition (SVD), and Linear Discriminant Analysis (LDA).

Important spreaders in networks: Exact results for small graphs

The document discusses exact calculations of node importance in network epidemiology for small graphs. It examines three types of node importance: influence maximization, vaccination, and sentinel surveillance. The goal is to find the smallest graph where all three notions of importance differ for different nodes. Symbolic algebra and fast computational methods are used to efficiently calculate outbreak probabilities and expected times for all small graph topologies to identify cases where the important nodes are not the same.

A Novel Dencos Model For High Dimensional Data Using Genetic Algorithms

Subspace clustering is an emerging task that aims at detecting clusters entrenched in subspaces. Recent approaches fail to reduce results to relevant subspace clusters. Their results are typically highly redundant and fail to consider a critical problem, "the density divergence problem," in discovering the clusters, because they utilize an absolute density value as the density threshold to identify the dense regions in all subspaces. Considering the varying region densities in different subspace cardinalities, we note that a more appropriate way to determine whether a region in a subspace should be identified as dense is by comparing its density with the region densities in that subspace. Based on this idea and due to the infeasibility of applying previous techniques in this novel clustering model, we devise an innovative algorithm, referred to as DENCOS (DENsity COnscious Subspace clustering), which adopts a divide-and-conquer scheme to efficiently discover clusters satisfying different density thresholds in different subspace cardinalities. DENCOS can discover the clusters in all subspaces with high quality, and its efficiency significantly outperforms previous works, thus demonstrating its practicability for subspace clustering, as validated by our extensive experiments on a retail dataset. We extend our work with a clustering technique based on genetic algorithms that is capable of optimizing the number of clusters for tasks with well-formed and separated clusters.

A paradox of importance in network epidemiology

Talk at the International Conference on Computational Social Science, Helsinki, June 9, 2015. On YouTube here (Plenary II): https://www.youtube.com/channel/UCUGsbLwL4G2CQQfk95oZjVw

2018 algorithms for the minmax regret path problem with interval data

This document summarizes a research paper that studies the Minmax Regret Path Problem with interval data. The paper presents a new exact branch and cut algorithm for solving this problem and also proposes new heuristics, including a local search heuristic and a simulated annealing metaheuristic that uses a novel neighborhood structure. Computational experiments on benchmark instances are conducted to analyze the performance of the different algorithms and approaches. The results provide an assessment of the algorithms and show the superiority of the simulated annealing approach for finding good solutions to large problem instances.

Spreading processes on temporal networks

This document discusses temporal networks and how temporal structures can impact dynamical processes on networks. It begins by describing different types of temporal networks including person-to-person communication, information dissemination, physical proximity, and cellular biology networks. It then discusses methods for analyzing temporal network structures like inter-event times and how bursty or heavy-tailed distributions can slow spreading compared to memory-less processes. The document also presents examples of how neutralizing temporal structures like inter-event times or beginning/end times can impact spreading simulations. Finally, it discusses how different temporal network datasets exhibit diverse temporal structures.

Exploiting rhetorical relations to

Much previous research has shown that rhetorical relations can enhance many applications, such as text summarization, question answering, and natural language generation. This work proposes an approach that extends the benefit of rhetorical relations to address the redundancy problem in cluster-based text summarization of multiple documents. We exploit the rhetorical relations that exist between sentences to group similar sentences into multiple clusters and identify themes of common information. The candidate summaries are extracted from these clusters. Then, cluster-based text summarization is performed using the Conditional Markov Random Walk Model to measure the saliency scores of the candidate summaries. We evaluate our method by measuring the cohesion and separation of the clusters constructed by exploiting rhetorical relations and the ROUGE scores of the generated summaries. The experimental results show that our method performs well, indicating the promising potential of applying rhetorical relations in text clustering to benefit multi-document text summarization.

2224d_final

This document summarizes research applying deep learning techniques to predict epigenomic enhancer regions from DNA sequences. Four models - variations of Basset, DeepSea, DanQ, and a custom Greenside-Basset model - were trained on a dataset from the NIH Roadmap Epigenomics Mapping Consortium to label 1000 base pair sequences as active or inactive for 57 cell types. The Basset variation achieved the best balanced accuracy of 72.8% and had the highest precision and F1 scores, though it still weighted negative examples heavily, as precision was not strong. Experimenting with different embeddings, loss functions, and architectures helped narrow the models best suited for this sequence labeling task.

Identifying the Coding and Non Coding Regions of DNA Using Spectral Analysis

This paper presents a new method for exon detection in DNA sequences based on multi-scale parametric spectral analysis. Identification and analysis of hidden features of coding and non-coding regions of a DNA sequence is a challenging problem in genomics. The objective of this paper is to estimate and compare the spectral content of coding and non-coding segments of a DNA sequence by both parametric and non-parametric methods. In this context, identification of protein-coding regions (exons) in DNA sequences has attracted great interest in recent decades. These coding regions can be identified by exploiting the period-3 property present in them. The discrete Fourier transform has commonly been used as a spectral estimation technique to extract the period-3 patterns present in a DNA sequence. Consequently, an attempt has been made to bring some hidden internal properties of the DNA sequence to light in order to distinguish coding regions from non-coding ones. In this approach, DNA sequences from various Homo sapiens genes have been selected as test samples and assigned numerical values based on weak-strong hydrogen bonding (WSHB) before the application of digital signal analysis techniques.

A SURVEY ON SIMILARITY MEASURES IN TEXT MINING

The volume of text resources in digital libraries and on the internet has been increasing, and organizing these text documents has become a practical need. Clustering is used to organize a large number of objects automatically into a small number of coherent groups. These documents are widely used for information retrieval and natural language processing tasks. Clustering algorithms require a metric for quantifying how dissimilar two given documents are. This difference is often measured by a similarity measure such as Euclidean distance or cosine similarity. The choice of similarity measure in text mining can be used to identify the suitable clustering algorithm for a specific problem. This survey discusses the existing work on text similarity by partitioning it into three significant approaches: string-based, knowledge-based and corpus-based similarities.

Graphical Structure Learning accelerated with POWER9

Prof. Arghya Das from the University of Wisconsin - Platteville presented as part of the 3-day international summit using OpenPOWER systems.

International Journal of Computer Science and Security Volume (2) Issue (5)

The document proposes a new method to calculate the distance matrix for phylogenetic tree construction in less computational time compared to traditional multiple sequence alignment methods. The method estimates the score of aligning two sequences in O(m+n) time using a 4-step scanning algorithm, where m and n are the lengths of the sequences. It then generates the distance matrix from the score matrix using the Feng-Doolittle formula. A phylogenetic tree is constructed from the distance matrix using the neighbor-joining algorithm. The method is tested on datasets of BChE sequences from mammals and bacteria, and the trees are compared to those generated by ClustalX.

A Novel Framework for Short Tandem Repeats (STRs) Using Parallel String Matching

This document presents a novel framework for identifying Short Tandem Repeats (STRs) using parallel string matching. It begins with background on STRs and challenges with existing sequential algorithms. It then describes a two-phase methodology - first applying a basic improved right prefix algorithm sequentially, then applying it in parallel using multi-threading on multicore processors. Results show the basic algorithm outperforms Boyer-Moore, Knuth-Morris-Pratt and brute force algorithms sequentially. When applied in parallel, processing time is reduced from 80ms sequentially to 40ms in parallel on multicore systems. The parallel STR identification framework allows efficient searching of repeats in large genomes.

Temporal Networks of Human Interaction

Temporal networks provide a framework for modeling systems of interactions that occur between nodes over time. These networks capture both the topological structure of connections as well as the timing of interactions. Three key aspects of temporal networks discussed in the document are:

1) Temporal networks can be represented using contact sequences that capture when interactions occur between nodes, unlike static networks which only represent connections.

2) The temporal structure of interactions, such as patterns in the timing of contacts, can impact dynamical processes unfolding on the network like information or disease spreading.

3) Randomizing the timing of contacts in empirical temporal network data can alter dynamical processes, highlighting the importance of temporal structure beyond just topology.

Similar to Disintegration of the small world property with increasing diversity of chemical libraries

An Opportunistic AODV Routing Scheme : A Cognitive Mobile Agents Approach

In MANETs, dynamics and robustness are key features of the nodes and are governed by several routing protocols such as AODV and DSR. However, growing resource demand in the network leads to resource scarcity. Node mobility often leads to link breakages and high routing overhead, decreasing the stability and reliability of network connectivity. In this context, the paper proposes a novel opportunistic AODV routing scheme that implements a cognitive-agent-based intelligent technique to set up stable connectivity over the MANET. The scheme computes the routing metric (rf) based on the collaboration sensitivity levels of the nodes, obtained through knowledge-based decision making. This routing metric is subsequently used to set up a stable path for network connectivity, which minimizes the route overhead and increases the stability of the path. The performance evaluation is conducted in comparison with the AODV and sleep AODV routing protocols and validated.

Using spectral radius ratio for node degree

In this paper, we show that the spectral radius ratio for node degree could be used to analyze the variation of node degree during the evolution of complex networks. We focus on three commonly studied models of complex networks: random networks, scale-free networks and small-world networks. The spectral radius ratio for node degree is defined as the ratio of the principal (largest) eigenvalue of the adjacency matrix of a network graph to the average node degree. During the evolution of each of the above three categories of networks (using the appropriate evolution model for each category), we observe the spectral radius ratio for node degree to exhibit a high to very high positive correlation (0.75 or above) with the coefficient of variation of node degree (the ratio of the standard deviation of node degree to the average node degree). We show that the spectral radius ratio for node degree could be used as the basis to tune the operating parameters of the evolution models for each of the three categories of complex networks as well as to analyze the impact of specific operating parameters for each model.
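
The ratio defined in this abstract can be illustrated with a minimal sketch. It assumes a connected, undirected graph given as a dense 0/1 adjacency matrix, and it estimates the principal eigenvalue by plain power iteration on A + I. That is an illustrative choice, not necessarily the authors' method, and the function names are hypothetical.

```python
import math


def spectral_radius(adj, iters=1000, tol=1e-12):
    """Estimate the largest adjacency eigenvalue of a connected undirected graph.

    Power iteration is run on A + I rather than A so that bipartite graphs,
    whose spectrum is symmetric about zero, still converge. The shift of 1 is
    subtracted again before returning.
    """
    n = len(adj)
    v = [1.0 / math.sqrt(n)] * n
    lam = 0.0
    for _ in range(iters):
        # w = (A + I) v, then normalise to unit length
        w = [v[i] + sum(adj[i][j] * v[j] for j in range(n)) for i in range(n)]
        norm = math.sqrt(sum(x * x for x in w))
        w = [x / norm for x in w]
        # Rayleigh quotient of A + I at the normalised vector w
        aw = [w[i] + sum(adj[i][j] * w[j] for j in range(n)) for i in range(n)]
        new_lam = sum(w[i] * aw[i] for i in range(n))
        converged = abs(new_lam - lam) < tol
        lam, v = new_lam, w
        if converged:
            break
    return lam - 1.0


def spectral_radius_ratio(adj):
    """Principal eigenvalue of the adjacency matrix divided by the average node degree."""
    n = len(adj)
    avg_degree = sum(sum(row) for row in adj) / n
    return spectral_radius(adj) / avg_degree


# Complete graph on 4 nodes (every node has degree 3)
complete = [[0 if i == j else 1 for j in range(4)] for i in range(4)]
# Star graph: node 0 is linked to 4 leaves
star = [[0, 1, 1, 1, 1]] + [[1, 0, 0, 0, 0] for _ in range(4)]

print(spectral_radius_ratio(complete))
print(spectral_radius_ratio(star))
```

For a regular graph such as the complete graph, the principal eigenvalue equals the common degree, so the ratio is 1. For the star, the principal eigenvalue is 2 and the average degree is 1.6, giving a ratio of 1.25, which reflects the larger variation in node degree.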

Quantum persistent k cores for community detection

PPT overview of paper accepted for 2019 Southeastern International Conference on Combinatorics, Graph Theory & Computing. Details a persistence approach to community detection and a new quantum persistence-based algorithm based on the coloring problem.

Use of eigenvalues and eigenvectors to analyze bipartivity of network graphs

This paper presents the applications of eigenvalues and eigenvectors (as part of spectral decomposition) to analyze the bipartivity index of graphs as well as to predict the set of vertices that will constitute the two partitions of graphs that are truly bipartite and those that are close to being bipartite. Though the largest eigenvalue and the corresponding eigenvector (called the principal eigenvalue and principal eigenvector) are typically used in the spectral analysis of network graphs, we show that the smallest eigenvalue and its corresponding eigenvector (called the bipartite eigenvalue and the bipartite eigenvector) could be used to predict the bipartite partitions of network graphs. For each of the predictions, we hypothesize an expected partition for the input graph and compare that with the predicted partitions. We also analyze the impact of the number of frustrated edges (edges connecting the vertices within a partition) and their location across the two partitions on the bipartivity index. We observe that for a given number of frustrated edges, if the frustrated edges are located in the larger of the two partitions of the bipartite graph (rather than the smaller of the two partitions or equally distributed across the two partitions), the bipartivity index is likely to be relatively larger.

Topology ppt

This document discusses network topology and modeling of internet structure. It begins by explaining why network topology is important for tasks like routing, simulation, and analysis. It then covers models for representing internet topology at the router and domain level. Common models discussed include Waxman, Barabasi-Albert, and transit-stub models. The document also addresses concepts of complex networks, scale-free networks, and power laws observed in real-world networks like the web. It provides an example of search in peer-to-peer networks like Gnutella and how their power law structure can be leveraged. Finally, it outlines the hierarchical structure of the internet and components within an ISP like points of presence.

Paper Explained: Understanding the wiring evolution in differentiable neural ...

Read my Explanation of the Paper here: https://medium.com/@devanshverma425/why-and-how-is-neural-architecture-search-is-biased-778763d03f38?sk=e16a3e54d6c26090a6b28f7420d3f6f7

Abstract: Controversy exists on whether differentiable neural architecture search methods discover wiring topology effectively. To understand how wiring topology evolves, we study the underlying mechanism of several existing differentiable NAS frameworks. Our investigation is motivated by three observed searching patterns of differentiable NAS: 1) they search by growing instead of pruning; 2) wider networks are more preferred than deeper ones; 3) no edges are selected in bi-level optimization. To anatomize these phenomena, we propose a unified view on searching algorithms of existing frameworks, transferring the global optimization to local cost minimization. Based on this reformulation, we conduct empirical and theoretical analyses, revealing implicit inductive biases in the cost's assignment mechanism and evolution dynamics that cause the observed phenomena. These biases indicate strong discrimination towards certain topologies. To this end, we pose questions that future differentiable methods for neural wiring discovery need to confront, hoping to evoke a discussion and rethinking on how much bias has been enforced implicitly in existing NAS methods.

An Efficient Algorithm to Calculate The Connectivity of Hyper-Rings Distribut...

The aim of this paper is to develop a software module to test the connectivity of various odd-sized hyper-rings (HRs) and to address the open question of whether the node connectivity of an odd-sized HR is equal to its degree. We attempt to answer this question by explicitly testing the node connectivity of various odd-sized HRs. In this paper, we also study the properties, constructions, and connectivity of hyper-rings. A graph is commonly used to represent the architecture of an interconnection network, where nodes represent processors and edges represent communication links between pairs of processors. Although the number of edges in a hyper-ring is roughly twice that of a hypercube with the same number of nodes, the diameter and the connectivity of the hyper-ring are shorter and larger, respectively, than those of the corresponding hypercube. These properties make hyper-rings desirable interconnection networks. This paper also discusses reliability in hyper-rings. One of the major goals in network design is to find the best way to increase the system's reliability. The reliability of a distributed system depends on the reliabilities of its communication links and computer elements.

Topology ppt

This document discusses network topology and modeling of internet structure. It begins by explaining why network topology is important for tasks like routing, simulation, and analysis. It then describes several models that are commonly used to represent internet topology, including graph models at the router and domain level. Specific topology generation models are also summarized, such as the Barabasi-Albert, Waxman, and transit-stub models. The document concludes by discussing concepts like complex networks, scale-free networks, and power laws that are observed in real-world internet topologies.

TopologyPPT.ppt

This document discusses network topology and complex networks. It begins by outlining topics related to modeling internet topology, including scale-free networks and power laws. It then discusses modeling approaches like the Barabasi-Albert model and Waxman model. Finally, it examines complex networks and scale-free properties, including examples like the world wide web and Gnutella peer-to-peer network.

Medicinal Applications of Quantum Computing

Here, I study how computational and mathematical models are used to describe EEG readings of the brain, in order to assess whether these methods can be extended into further research efforts to create better antidepressants.

EVOLUTIONARY CENTRALITY AND MAXIMAL CLIQUES IN MOBILE SOCIAL NETWORKS

This paper introduces an evolutionary approach to enhance the process of finding central nodes in mobile networks. Finding these nodes can provide essential information for important applications in mobile and social networks. The evolutionary approach considers the dynamics of the network and takes into account the central nodes from previous time slots. We also study the applicability of maximal clique algorithms in mobile social networks and how they can be used to find the central nodes based on the discovered maximal cliques. The experimental results are promising and show a significant enhancement in finding the central nodes.

Simplicial closure & higher-order link prediction

The document discusses higher-order link prediction in networks. It summarizes previous work representing higher-order interactions as tensors, hypergraphs, etc. It then proposes evaluating models of higher-order data using "higher-order link prediction" to predict which groups of more than two nodes will interact based on past data. The authors analyze dynamics of triadic closure in several real-world networks and propose methods to predict closure based on structural properties like edge weights.

SIMILARITY ANALYSIS OF DNA SEQUENCES BASED ON THE CHEMICAL PROPERTIES OF NUCL...

DNA sequence similarity analysis approaches have been based on the representation and frequency of sequence components; however, the position inside the sequence is also important information for sequence data. Insufficient information in sequence representations is an important cause of poor similarity results. Based on three classifications of the DNA bases according to their chemical properties, the frequencies and average positions of group mutations have been grouped into two twelve-component vectors, and the Euclidean distances among these vectors are applied to compare the coding sequences of the first exon of the beta-globin gene of 11 species.

Similar to Disintegration of the small world property with increasing diversity of chemical libraries (20)

Interpretation of the biological knowledge using networks approach

An Opportunistic AODV Routing Scheme : A Cognitive Mobile Agents Approach

AN OPPORTUNISTIC AODV ROUTING SCHEME: A COGNITIVE MOBILE AGENTS APPROACH

Quantum persistent k cores for community detection

Use of eigenvalues and eigenvectors to analyze bipartivity of network graphs

Paper Explained: Understanding the wiring evolution in differentiable neural ...

An Efficient Algorithm to Calculate The Connectivity of Hyper-Rings Distribut...

EVOLUTIONARY CENTRALITY AND MAXIMAL CLIQUES IN MOBILE SOCIAL NETWORKS

SIMILARITY ANALYSIS OF DNA SEQUENCES BASED ON THE CHEMICAL PROPERTIES OF NUCL...

Recently uploaded

Cytokines and their role in immune regulation.pptx

This presentation covers cytokines and their role in immune regulation.

ESR spectroscopy in liquid food and beverages.pptx

With an increasing population, people need to rely on packaged foodstuffs. Packaging food materials requires that the food be preserved. There are various treatment methods for preserving food, and irradiation is one of them. It is the most common and most harmless method of food preservation, as it does not alter the necessary micronutrients of food materials. Although irradiated food does not harm human health, quality assessment is still required to provide consumers with the necessary information about the food. ESR spectroscopy is the most sophisticated way to investigate the quality of food and the free radicals induced during its processing. The ESR spin trapping technique is useful for detecting highly unstable radicals in food. The antioxidant capability of liquid food and beverages is mainly assessed by the spin trapping technique.

SAR of Medicinal Chemistry 1st by dk.pdf

This presentation covers the SAR of prototype drugs, with examples.

Syllabus of Second Year B. Pharmacy

2019 PATTERN.

Describing and Interpreting an Immersive Learning Case with the Immersion Cub...

Current descriptions of immersive learning cases are often difficult or impossible to compare. This is due to a myriad of different options on what details to include, which aspects are relevant, and on the descriptive approaches employed. Also, these aspects often combine very specific details with more general guidelines or indicate intents and rationales without clarifying their implementation. In this paper we provide a method to describe immersive learning cases that is structured to enable comparisons, yet flexible enough to allow researchers and practitioners to decide which aspects to include. This method leverages a taxonomy that classifies educational aspects at three levels (uses, practices, and strategies) and then utilizes two frameworks, the Immersive Learning Brain and the Immersion Cube, to enable a structured description and interpretation of immersive learning cases. The method is then demonstrated on a published immersive learning case on training for wind turbine maintenance using virtual reality. Applying the method results in a structured artifact, the Immersive Learning Case Sheet, that tags the case with its proximal uses, practices, and strategies, and refines the free text case description to ensure that matching details are included. This contribution is thus a case description method in support of future comparative research of immersive learning cases. We then discuss how the resulting description and interpretation can be leveraged to change immersion learning cases, by enriching them (considering low-effort changes or additions) or innovating (exploring more challenging avenues of transformation). The method holds significant promise to support better-grounded research in immersive learning.

Randomised Optimisation Algorithms in DAPHNE

Slides from talk:

Aleš Zamuda: Randomised Optimisation Algorithms in DAPHNE .

Austrian-Slovenian HPC Meeting 2024 – ASHPC24, Seeblickhotel Grundlsee in Austria, 10–13 June 2024

https://ashpc.eu/

The binding of cosmological structures by massless topological defects

Assuming spherical symmetry and weak field, it is shown that if one solves the Poisson equation or the Einstein field equations sourced by a topological defect, i.e. a singularity of a very specific form, the result is a localized gravitational field capable of driving flat rotation (i.e. Keplerian circular orbits at a constant speed for all radii) of test masses on a thin spherical shell without any underlying mass. Moreover, a large-scale structure which exploits this solution by assembling concentrically a number of such topological defects can establish a flat stellar or galactic rotation curve, and can also deflect light in the same manner as an equipotential (isothermal) sphere. Thus, the need for dark matter or modified gravity theory is mitigated, at least in part.

The use of Nauplii and metanauplii artemia in aquaculture (brine shrimp).pptx

Although Artemia has been known to man for centuries, its use as a food for the culture of larval organisms apparently began only in the 1930s, when several investigators found that it made an excellent food for newly hatched fish larvae (Litvinenko et al., 2023). As aquaculture developed in the 1960s and '70s, the use of Artemia also became more widespread, due both to its convenience and to its nutritional value for larval organisms (Arenas-Pardo et al., 2024). The fact that Artemia dormant cysts can be stored for long periods in cans, and then used as an off-the-shelf food requiring only 24 h of incubation, makes them the most convenient, least labor-intensive live food available for aquaculture (Sorgeloos & Roubach, 2021). The nutritional value of Artemia, especially for marine organisms, is not constant, but varies both geographically and temporally. During the last decade, however, both the causes of Artemia nutritional variability and methods to improve poor-quality Artemia have been identified (Loufi et al., 2024).

Brine shrimp (Artemia spp.) are used in marine aquaculture worldwide. Annually, more than 2,000 metric tons of dry cysts are used for cultivation of fish, crustacean, and shellfish larvae. Brine shrimp are important to aquaculture because newly hatched brine shrimp nauplii (larvae) provide a food source for many fish fry (Mozanzadeh et al., 2021). Culture and harvesting of brine shrimp eggs represent another aspect of the aquaculture industry. Nauplii and metanauplii of Artemia, commonly known as brine shrimp, play a crucial role in aquaculture due to their nutritional value and suitability as live feed for many aquatic species, particularly in larval stages (Sorgeloos & Roubach, 2021).

Immersive Learning That Works: Research Grounding and Paths Forward

We will metaverse into the essence of immersive learning, into its three dimensions and conceptual models. This approach encompasses elements from teaching methodologies to social involvement, through organizational concerns and technologies. Challenging the perception of learning as knowledge transfer, we introduce a 'Uses, Practices & Strategies' model operationalized by the 'Immersive Learning Brain' and ‘Immersion Cube’ frameworks. This approach offers a comprehensive guide through the intricacies of immersive educational experiences and spotlighting research frontiers, along the immersion dimensions of system, narrative, and agency. Our discourse extends to stakeholders beyond the academic sphere, addressing the interests of technologists, instructional designers, and policymakers. We span various contexts, from formal education to organizational transformation to the new horizon of an AI-pervasive society. This keynote aims to unite the iLRN community in a collaborative journey towards a future where immersive learning research and practice coalesce, paving the way for innovative educational research and practice landscapes.

THEMATIC APPERCEPTION TEST(TAT) cognitive abilities, creativity, and critic...
