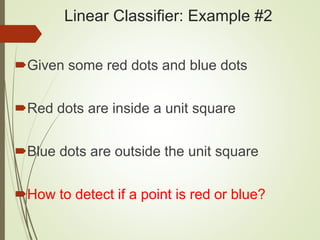

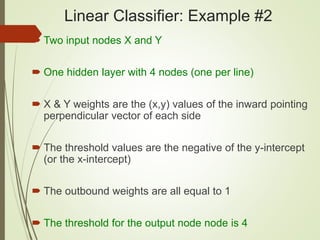

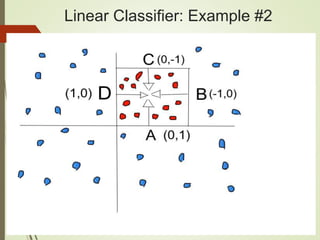

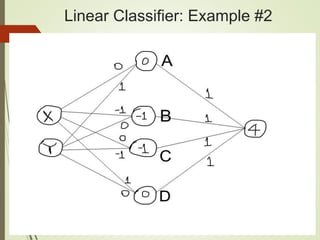

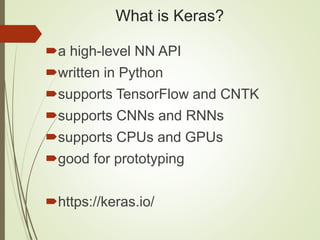

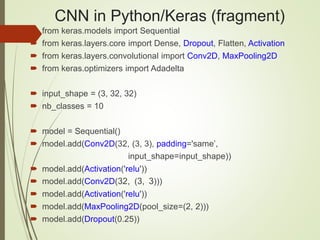

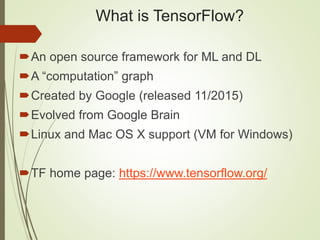

This document gives an overview of deep learning concepts with a focus on Keras and TensorFlow. It covers neural networks, activation functions, hyperparameters, and several model types, including linear regression and convolutional neural networks (CNNs). It also includes code examples that apply TensorFlow to machine learning tasks.

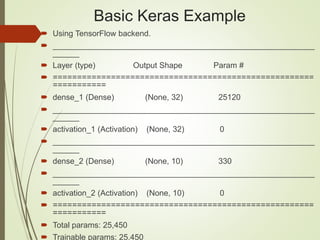

![Basic Keras Example

from keras.models import Sequential

from keras.layers import Dense, Activation

model = Sequential([

Dense(32, input_shape=(784,)),

Activation('relu'),

Dense(10),

Activation('softmax'),

])

model.summary()](https://image.slidesharecdn.com/h2o-dl-180314050645/85/Deep-Learning-Keras-and-TensorFlow-51-320.jpg)
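The parameter counts that `model.summary()` reports for this model can be checked by hand: a `Dense` layer with `n_in` inputs and `n_out` units holds `n_in * n_out` weights plus `n_out` biases, and the `Activation` layers add none. A minimal sketch in plain Python, assuming the layer sizes shown on the slide (the helper name `dense_params` is hypothetical and not part of Keras):

```python
def dense_params(n_in, n_out):
    """Trainable parameters of a fully connected layer: weights plus biases."""
    return n_in * n_out + n_out


# Layer sizes taken from the Sequential model above: 784 -> 32 -> 10.
layer_sizes = [784, 32, 10]

# Pair each layer's input size with its output size.
per_layer = [dense_params(n_in, n_out)
             for n_in, n_out in zip(layer_sizes, layer_sizes[1:])]
total_params = sum(per_layer)

print(per_layer)     # [25120, 330]
print(total_params)  # 25450
```

Almost all of the parameters sit in the first layer, because its input is the flattened 784-value vector.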

![TensorFlow and Linear Regression

import tensorflow as tf

# W and x are 1d arrays

W = tf.constant([10,20], name='W')

x = tf.placeholder(tf.int32, name='x')

b = tf.placeholder(tf.int32, name='b')

Wx = tf.multiply(W, x, name='Wx')

y = tf.add(Wx, b, name='y')](https://image.slidesharecdn.com/h2o-dl-180314050645/85/Deep-Learning-Keras-and-TensorFlow-67-320.jpg)

![TensorFlow fetch/feed_dict

with tf.Session() as sess:

    print("Result 1: Wx = ",

          sess.run(Wx, feed_dict={x:[5,10]}))

    print("Result 2: y = ",

          sess.run(y, feed_dict={x:[5,10], b:[15,25]}))

Result 1: Wx = [50 200]

Result 2: y = [65 225]](https://image.slidesharecdn.com/h2o-dl-180314050645/85/Deep-Learning-Keras-and-TensorFlow-68-320.jpg)
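Note that `tf.multiply` is element-wise, so `Wx` here is a vector and not a dot product. The arithmetic behind the two results can be reproduced in plain Python as a quick sanity check. This is a sketch of the math only, not of the TensorFlow API; the variable names mirror the slide:

```python
W = [10, 20]
x = [5, 10]   # value fed for placeholder x
b = [15, 25]  # value fed for placeholder b

# Element-wise product, as tf.multiply computes for equal-shaped 1-D inputs.
Wx = [w_i * x_i for w_i, x_i in zip(W, x)]

# Element-wise sum, as tf.add computes.
y = [wx_i + b_i for wx_i, b_i in zip(Wx, b)]

print("Wx =", Wx)  # Wx = [50, 200]
print("y =", y)    # y = [65, 225]
```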

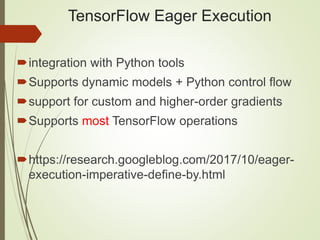

![TensorFlow Eager Execution

import tensorflow as tf # tf-eager1.py

import tensorflow.contrib.eager as tfe

tfe.enable_eager_execution()

x = [[2.]]

m = tf.matmul(x, x)

print(m)

# tf.Tensor([[4.]], shape=(1, 1), dtype=float32)](https://image.slidesharecdn.com/h2o-dl-180314050645/85/Deep-Learning-Keras-and-TensorFlow-72-320.jpg)
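The contrast with the session-based examples is that eager execution evaluates each operation immediately, without first building a graph and then running it. The toy sketch below illustrates that difference in plain Python. The `Placeholder` and `Square` classes are hypothetical stand-ins invented for this illustration and are not TensorFlow types. Also note that the `tensorflow.contrib.eager` import above applies to TensorFlow 1.x only: in TensorFlow 2.x, `tf.contrib` was removed and eager execution is the default.

```python
class Placeholder:
    """Toy graph node (hypothetical): looks its value up at run time."""

    def __init__(self, name):
        self.name = name

    def evaluate(self, feed):
        return feed[self.name]


class Square:
    """Toy graph node (hypothetical): squares its operand when evaluated."""

    def __init__(self, operand):
        self.operand = operand

    def evaluate(self, feed):
        value = self.operand.evaluate(feed)
        return value * value


# Deferred ("graph") style: describe the computation first...
x = Placeholder("x")
m_graph = Square(x)  # nothing has been computed yet
# ...then run it with concrete inputs, loosely analogous to sess.run(..., feed_dict=...).
deferred_result = m_graph.evaluate({"x": 2.0})

# Eager style: the value is computed as soon as the expression is executed.
x_value = 2.0
eager_result = x_value * x_value

print(deferred_result, eager_result)  # 4.0 4.0
```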

![TensorFlow and CNNs (#1)

def cnn_model_fn(features, labels, mode):

    # Input layer

    input_layer = tf.reshape(features["x"], [-1, 28, 28, 1])

    conv1 = tf.layers.conv2d( # Conv layer #1

        inputs=input_layer,

        filters=32,

        kernel_size=[5,5],

        padding="same",

        activation=tf.nn.relu)

    # Pooling Layer #1

    pool1 = tf.layers.max_pooling2d(inputs=conv1,

        pool_size=[2, 2], strides=2)](https://image.slidesharecdn.com/h2o-dl-180314050645/85/Deep-Learning-Keras-and-TensorFlow-73-320.jpg)

![TensorFlow and CNNs (#2)

    # Conv Layer #2 and Pooling Layer #2

    conv2 = tf.layers.conv2d(

        inputs=pool1,

        filters=64,

        kernel_size=[5,5],

        padding="same",

        activation=tf.nn.relu)

    pool2 = tf.layers.max_pooling2d(inputs=conv2,

        pool_size=[2, 2], strides=2)](https://image.slidesharecdn.com/h2o-dl-180314050645/85/Deep-Learning-Keras-and-TensorFlow-74-320.jpg)
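It can help to trace how the tensor shape changes through these four layers. With `padding="same"` and the default stride of 1, each convolution keeps the 28x28 (then 14x14) spatial size and only changes the channel count, while each 2x2 pooling layer with stride 2 halves height and width. A minimal sketch in plain Python, assuming the layer settings shown on the two slides (the helper functions are hypothetical and not part of TensorFlow):

```python
def conv2d_same(shape, filters):
    """Output shape of a stride-1 convolution with padding='same'."""
    height, width, _ = shape
    return (height, width, filters)


def max_pool(shape, pool_size=2, strides=2):
    """Output shape of max pooling without padding ('valid')."""
    height, width, channels = shape
    return ((height - pool_size) // strides + 1,
            (width - pool_size) // strides + 1,
            channels)


def conv2d_params(kernel, in_channels, filters):
    """Weights (kernel x kernel x in_channels per filter) plus one bias per filter."""
    return kernel * kernel * in_channels * filters + filters


input_shape = (28, 28, 1)             # one grayscale 28x28 image
conv1_shape = conv2d_same(input_shape, 32)
pool1_shape = max_pool(conv1_shape)
conv2_shape = conv2d_same(pool1_shape, 64)
pool2_shape = max_pool(conv2_shape)

print(conv1_shape, pool1_shape)       # (28, 28, 32) (14, 14, 32)
print(conv2_shape, pool2_shape)       # (14, 14, 64) (7, 7, 64)

conv1_params = conv2d_params(5, 1, 32)
conv2_params = conv2d_params(5, 32, 64)
print(conv1_params, conv2_params)     # 832 51264
```

After the second pooling layer each image is a 7x7x64 volume, which is 3136 values if it is flattened for a dense layer.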