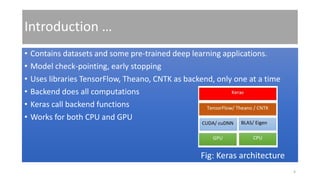

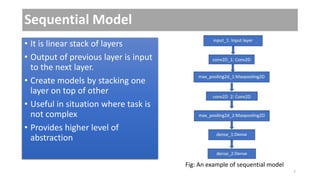

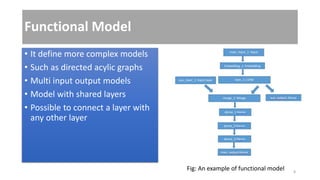

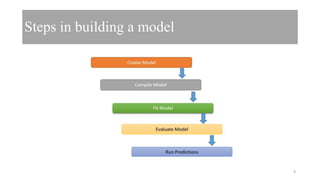

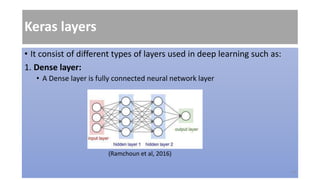

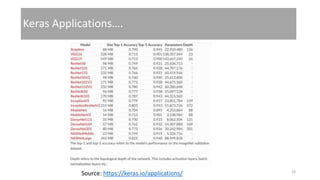

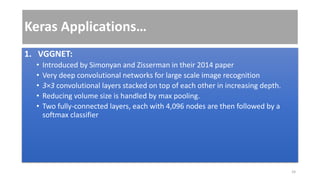

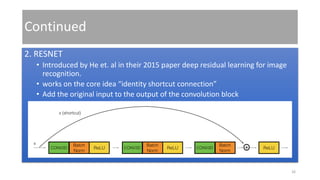

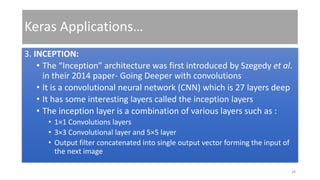

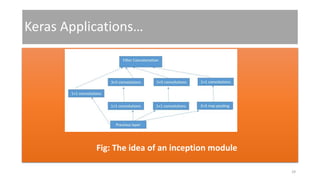

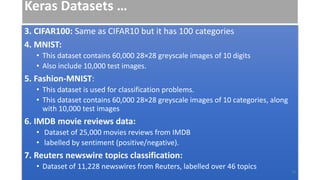

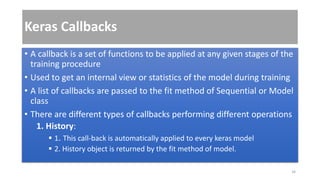

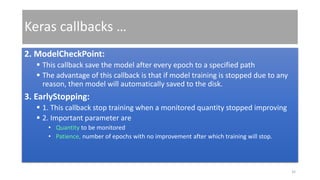

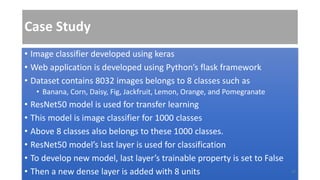

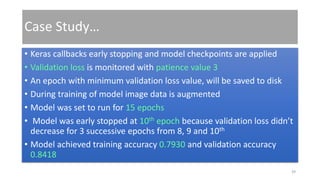

Keras is an open-source deep learning framework for Python that enables fast experimentation with neural networks, including convolutional (CNN) and recurrent (RNN) architectures. It supports rapid prototyping, two model-building styles (the Sequential and functional APIs), image data augmentation, and callbacks that monitor and control training. It also provides pre-trained models and built-in datasets, which can be used for tasks such as image classification through transfer learning.
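As a rough illustration of the two model-building styles and of callbacks, the sketch below builds the same small CNN with the Sequential API and with the functional API, then trains one of them briefly with an `EarlyStopping` callback. It assumes Keras is used through TensorFlow (`tensorflow.keras`); the layer sizes, the 28x28 grayscale input shape, and the random placeholder data are illustrative choices, not taken from the slides.

```python
import numpy as np
from tensorflow import keras
from tensorflow.keras import layers

# Sequential API: a plain stack of layers, one input and one output.
seq_model = keras.Sequential([
    keras.Input(shape=(28, 28, 1)),          # assumed input: 28x28 grayscale images
    layers.Conv2D(8, 3, activation="relu"),
    layers.MaxPooling2D(),
    layers.Flatten(),
    layers.Dense(10, activation="softmax"),  # assumed 10 output classes
])

# Functional API: the same network written as a graph of layer calls.
# This style also allows multiple inputs/outputs and shared layers.
inputs = keras.Input(shape=(28, 28, 1))
x = layers.Conv2D(8, 3, activation="relu")(inputs)
x = layers.MaxPooling2D()(x)
x = layers.Flatten()(x)
outputs = layers.Dense(10, activation="softmax")(x)
fn_model = keras.Model(inputs, outputs)

fn_model.compile(
    optimizer="adam",
    loss="sparse_categorical_crossentropy",
    metrics=["accuracy"],
)

# Random placeholder data standing in for a real dataset.
rng = np.random.default_rng(0)
x_train = rng.random((64, 28, 28, 1)).astype("float32")
y_train = rng.integers(0, 10, size=64)

# Callback: stop training once validation loss stops improving.
early_stop = keras.callbacks.EarlyStopping(monitor="val_loss", patience=1)

history = fn_model.fit(
    x_train,
    y_train,
    validation_split=0.25,
    epochs=3,
    batch_size=16,
    callbacks=[early_stop],
    verbose=0,
)
```

Both models have identical layers and parameter counts; the functional form only becomes necessary once the architecture is no longer a simple stack. With random labels the accuracy is meaningless, so the run only demonstrates the training and callback mechanics.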