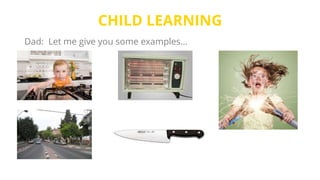

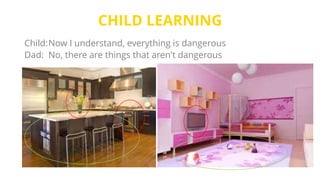

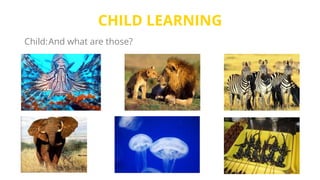

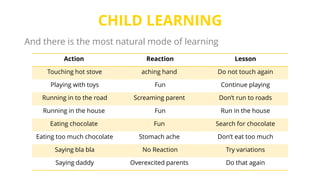

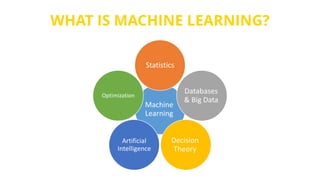

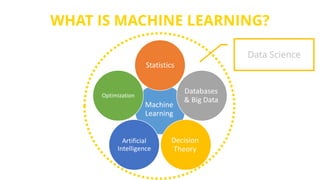

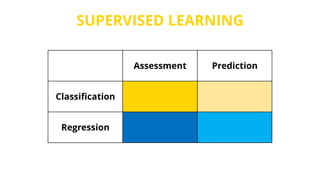

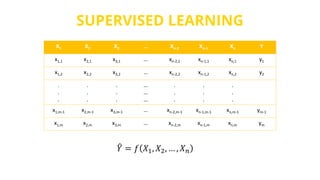

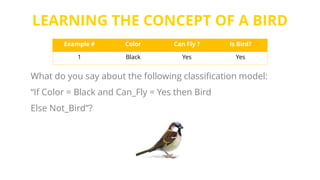

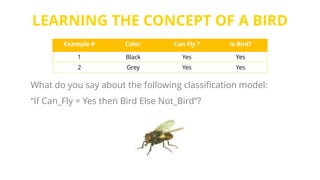

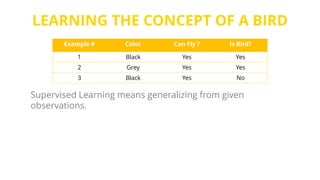

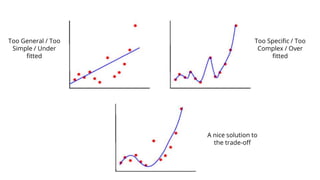

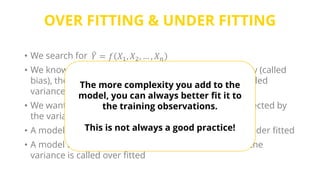

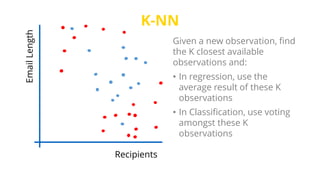

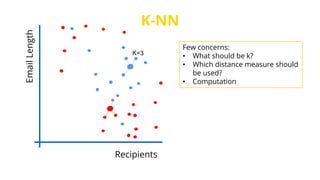

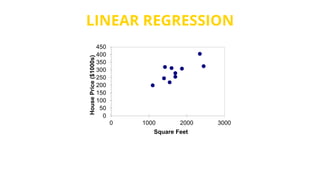

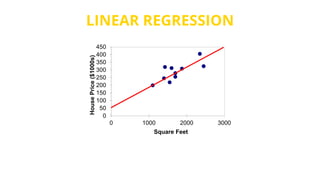

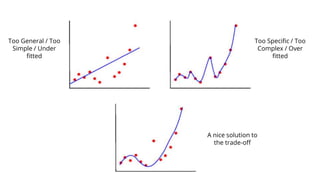

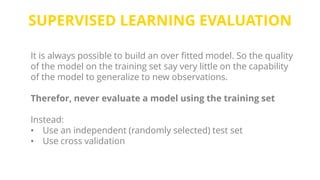

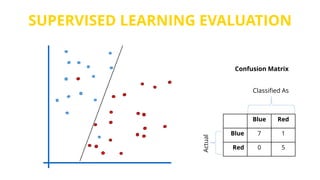

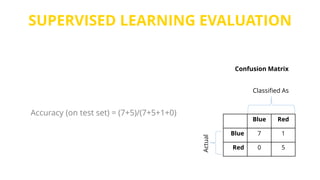

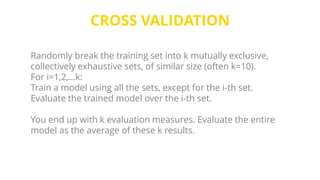

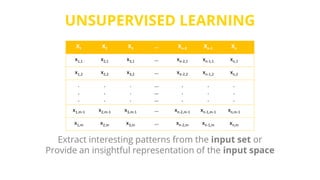

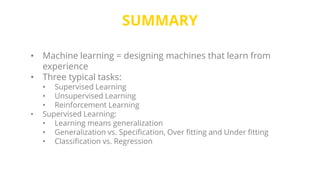

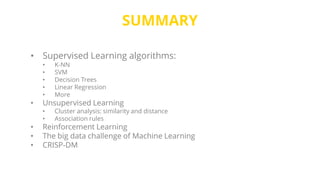

This document introduces machine learning. It opens with how children learn: from their parents' explanations, from examples, and through reinforcement. It then defines machine learning as the study of programs whose performance on a task improves with experience. The document outlines the typical kinds of machine learning task (supervised learning, unsupervised learning, and reinforcement learning), gives examples of each, and discusses how supervised learning models are evaluated.
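The definition and the evaluation step can be made concrete with a small sketch. The slides themselves are not reproduced here, so everything below is an illustrative assumption rather than the deck's own example: the synthetic dataset, the 1-nearest-neighbour classifier, and the helper names `make_data`, `predict_1nn`, and `accuracy` are all hypothetical. The task is classifying 2-D points, the experience is a set of labelled training examples, and performance is accuracy on held-out test points.

```python
import random


def make_data(n, seed):
    """Generate n labelled 2-D points (synthetic, for illustration only).

    A point (x, y) gets label 1 if x + y > 0, otherwise label 0.
    """
    rng = random.Random(seed)
    data = []
    for _ in range(n):
        x, y = rng.uniform(-1, 1), rng.uniform(-1, 1)
        data.append(((x, y), 1 if x + y > 0 else 0))
    return data


def predict_1nn(train, point):
    """Return the label of the training example closest to `point`."""
    nearest = min(
        train,
        key=lambda ex: (ex[0][0] - point[0]) ** 2 + (ex[0][1] - point[1]) ** 2,
    )
    return nearest[1]


def accuracy(train, test):
    """Fraction of test points whose predicted label matches the true label."""
    correct = sum(predict_1nn(train, p) == label for p, label in test)
    return correct / len(test)


if __name__ == "__main__":
    data = make_data(300, seed=0)
    # Holdout evaluation: the model never sees the test points during training.
    train, test = data[:200], data[200:]
    # Give the learner more and more experience and measure performance each time.
    for n in (5, 50, 200):
        print(f"{n:3d} training examples -> test accuracy {accuracy(train[:n], test):.2f}")
```

Measuring accuracy on points that were kept out of training is what makes the number meaningful: a 1-nearest-neighbour model always scores perfectly on its own training set, so only held-out data shows whether more experience actually improves performance.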