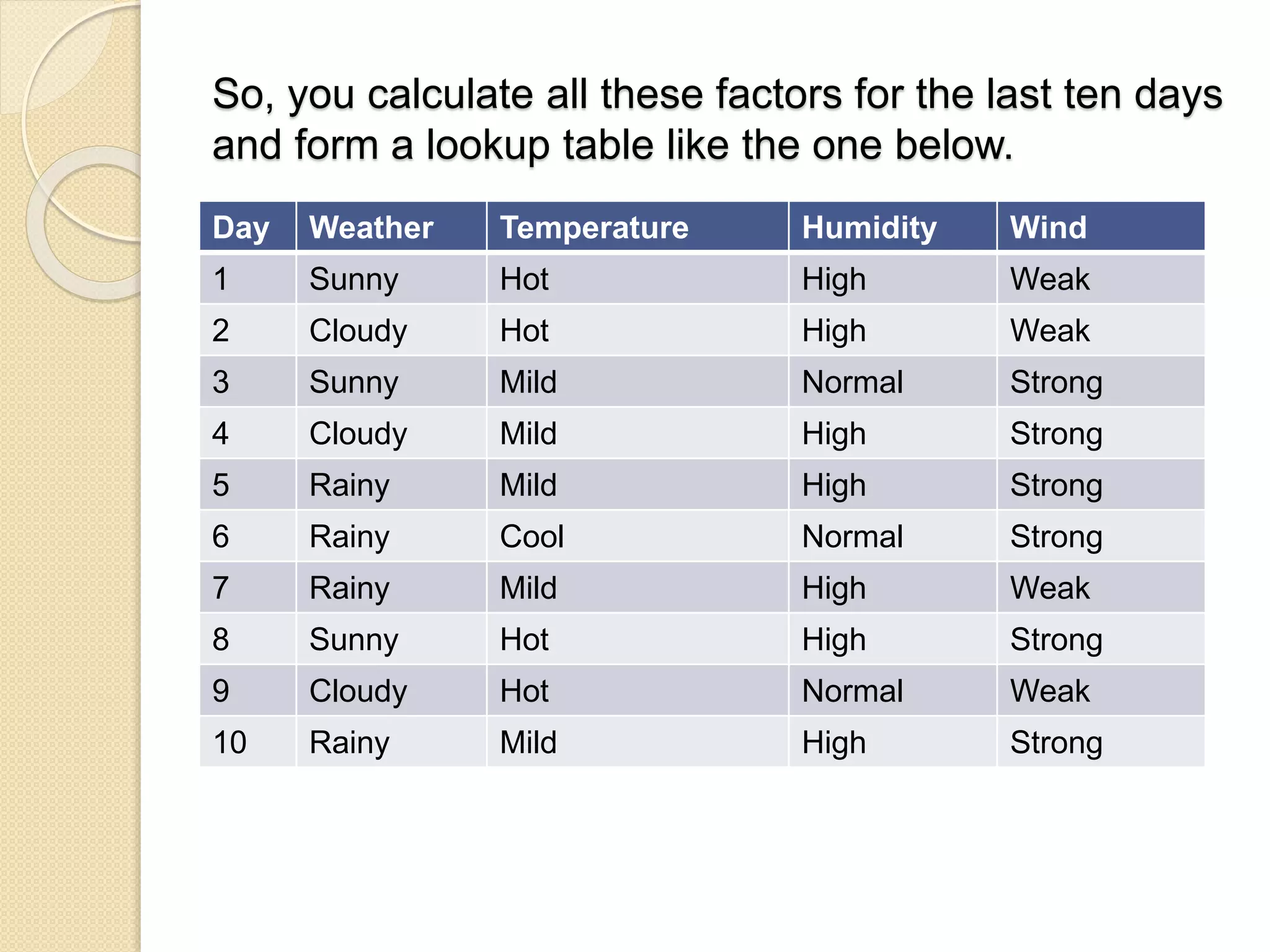

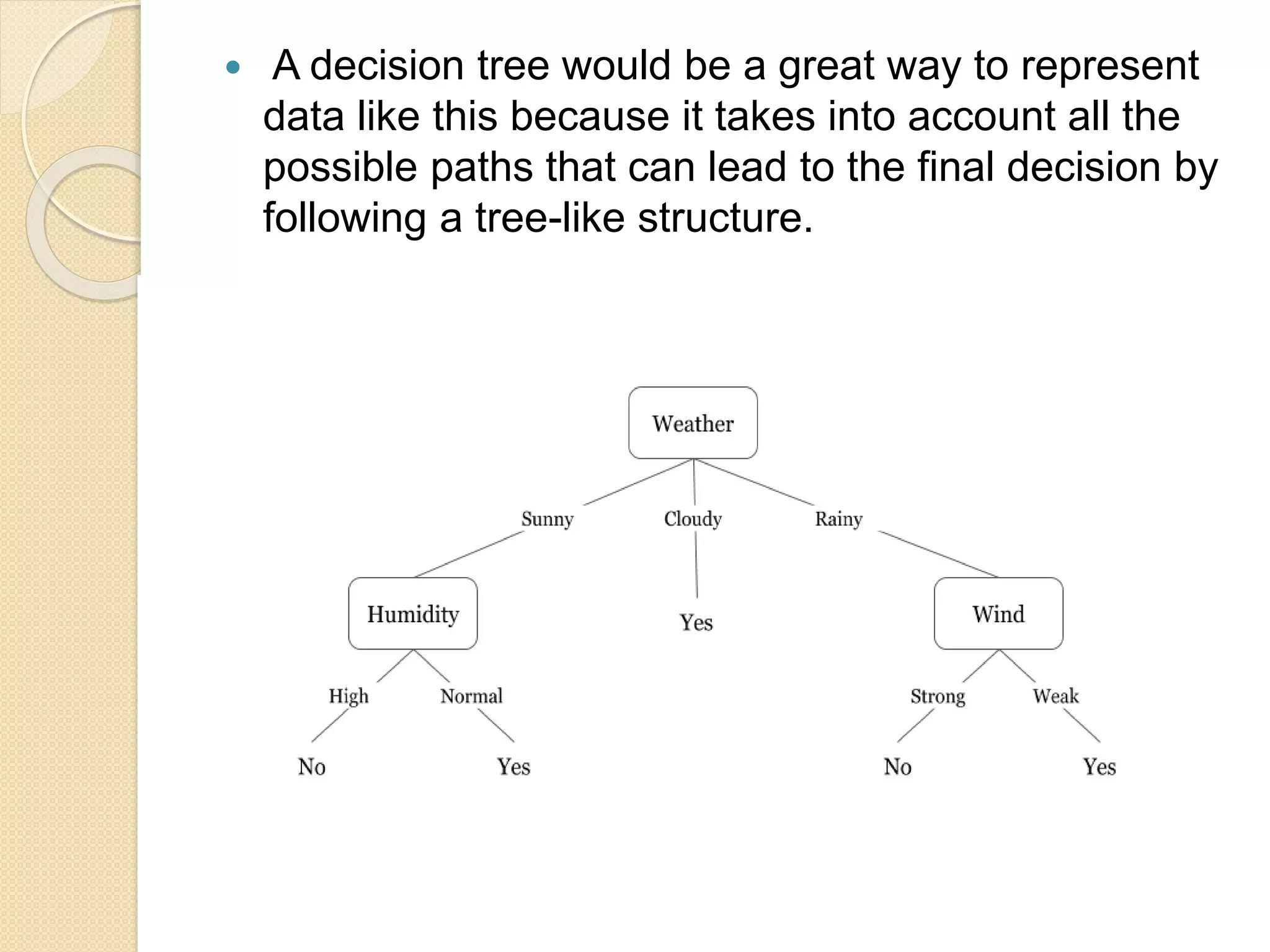

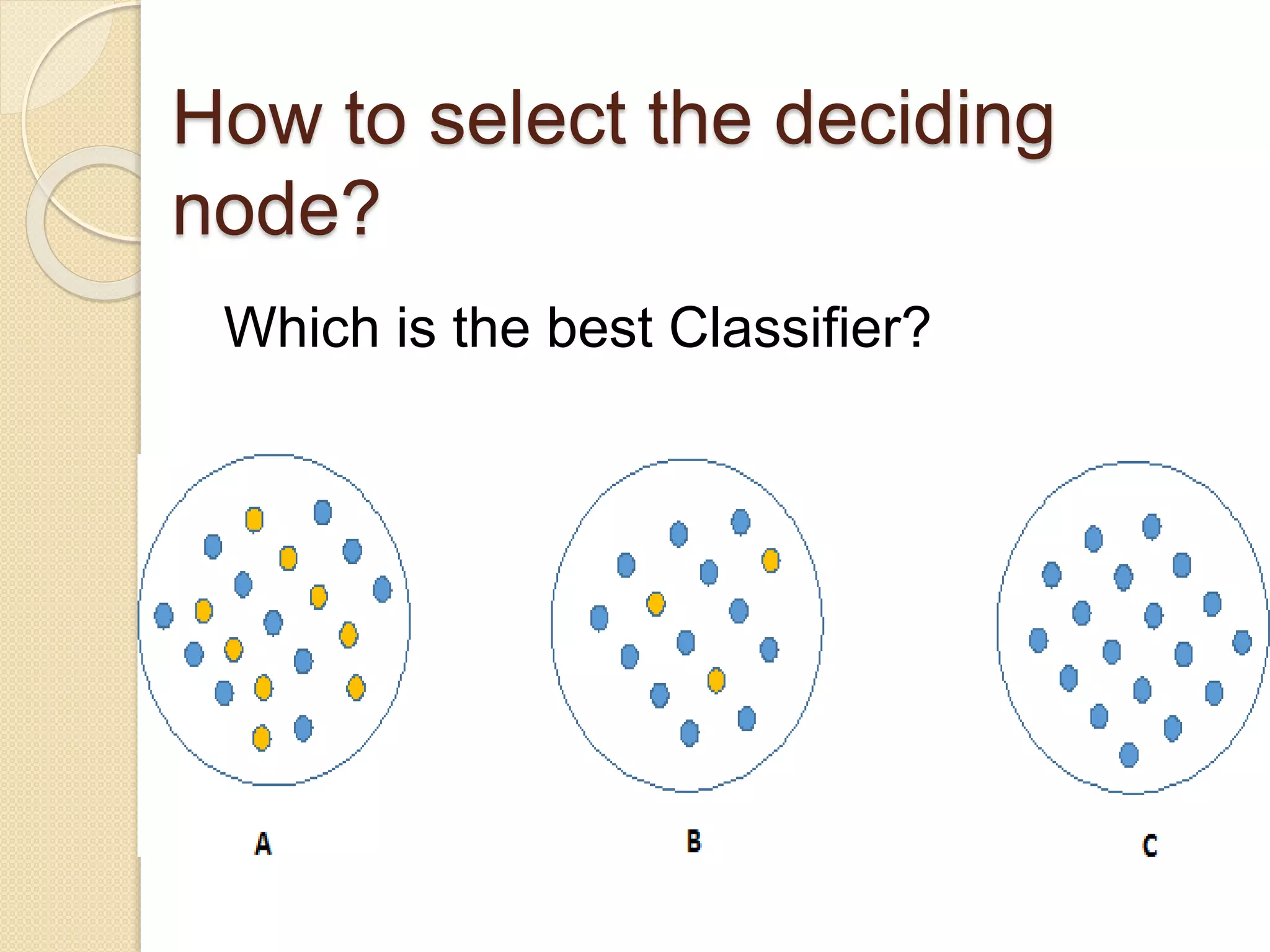

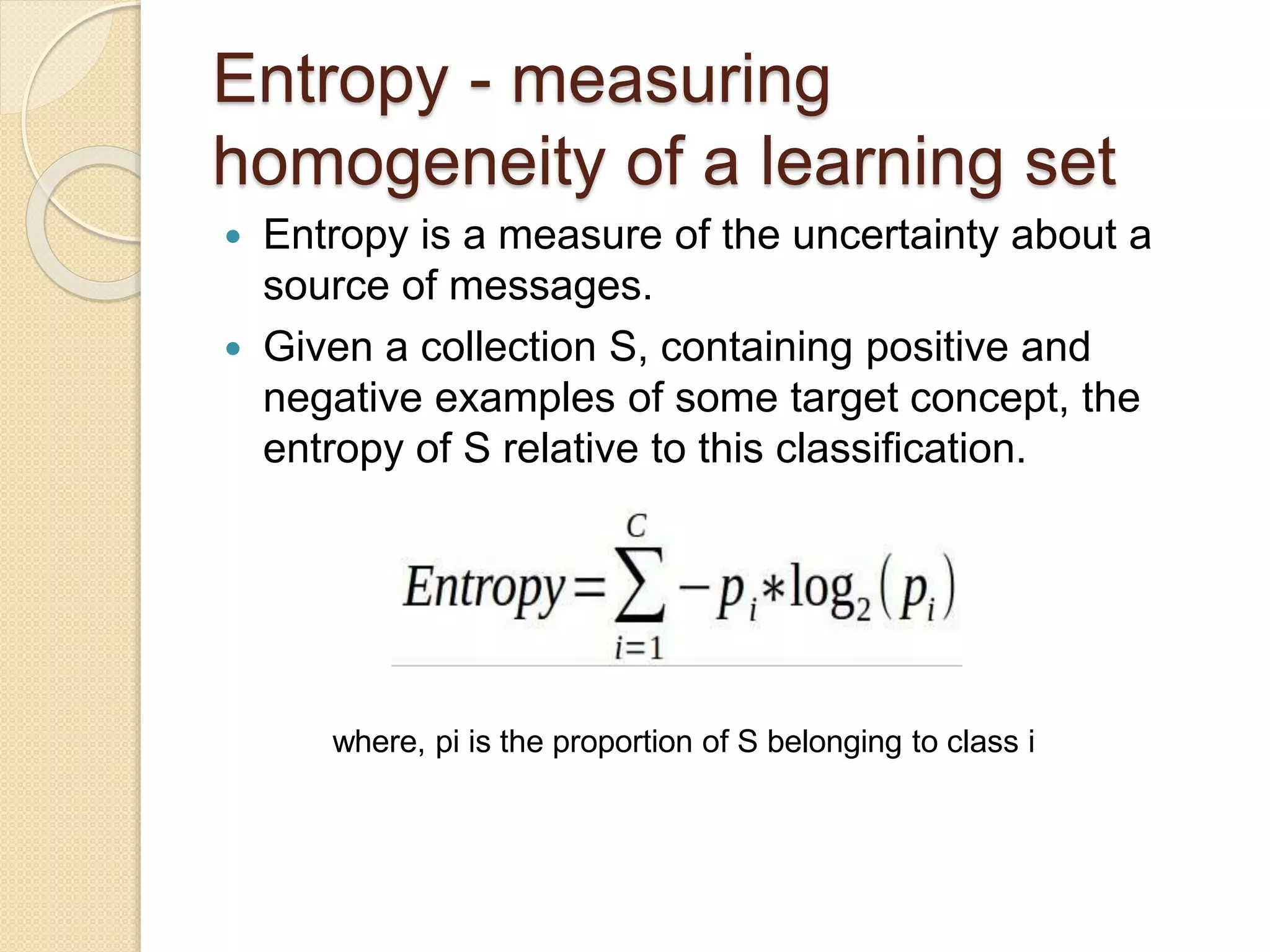

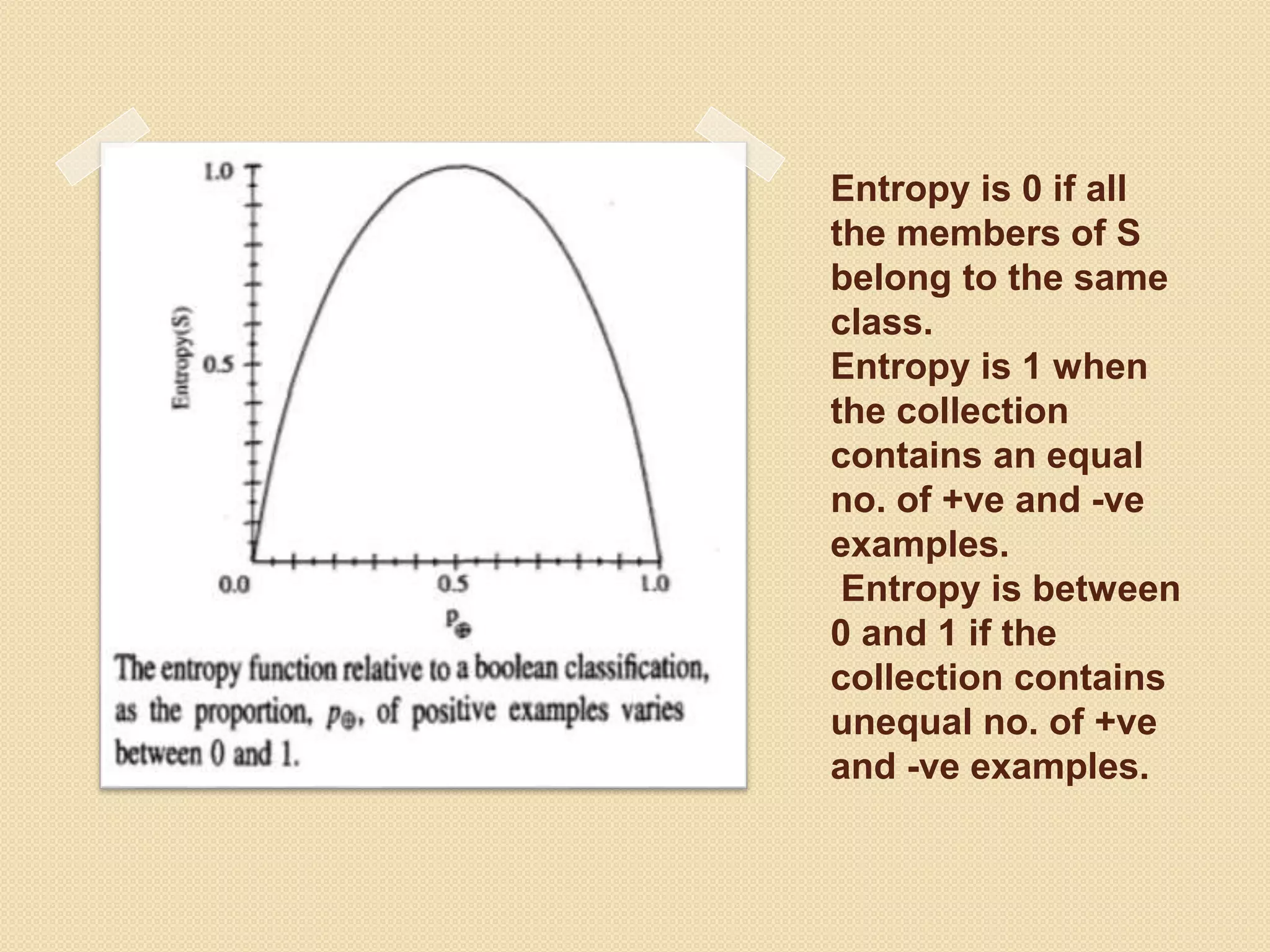

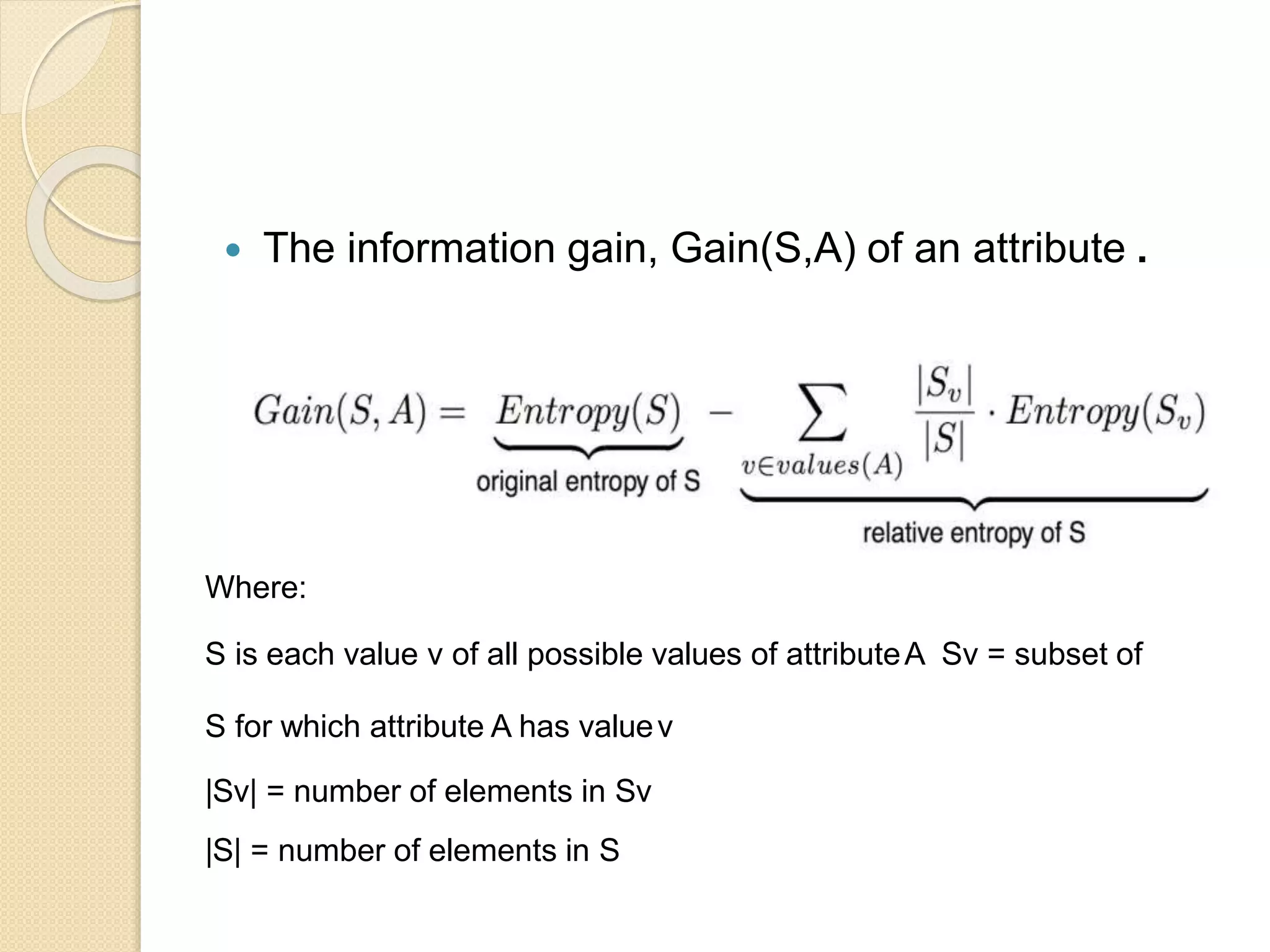

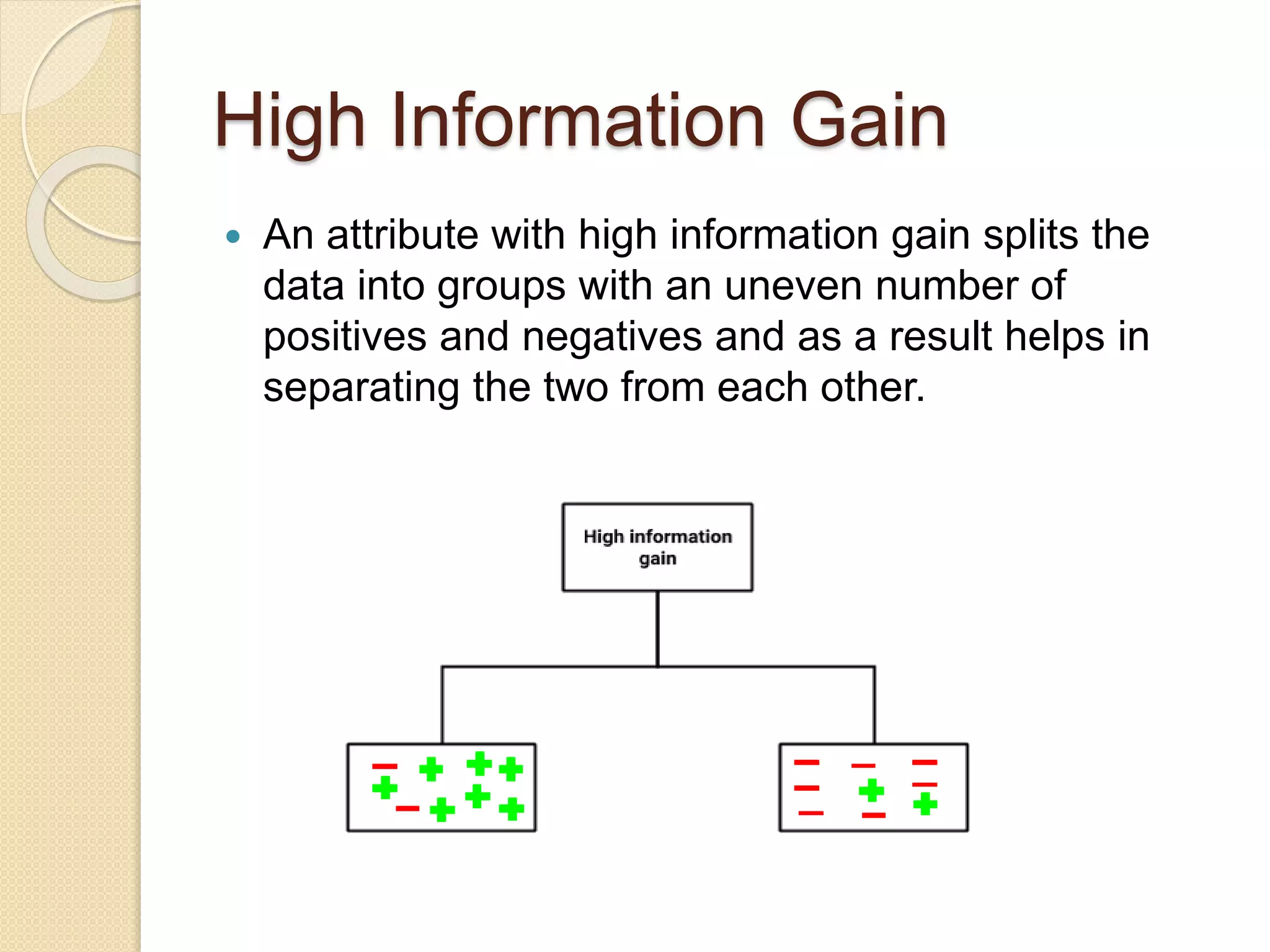

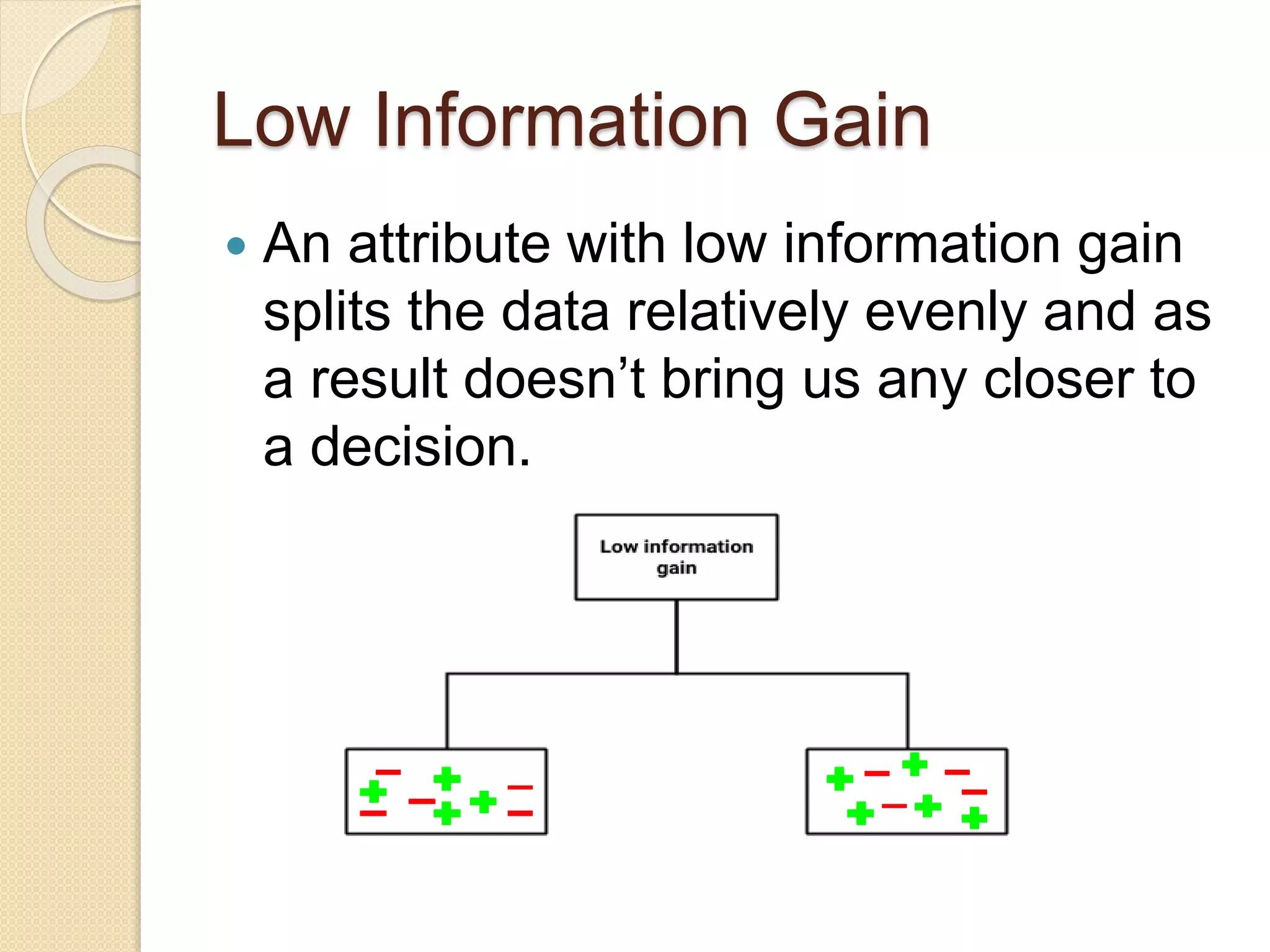

A decision tree is a popular supervised learning tool for classification and prediction, represented as a flowchart-like structure. The document covers how to select the deciding (splitting) node, how to measure uncertainty with entropy and information gain, and how Gini impurity is used. It also outlines the steps for building a decision tree and lists its pros and cons.
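As a rough illustration of the measures named above, the following minimal sketch computes entropy, Gini impurity, and information gain for a list of class labels. It uses the standard textbook definitions, which may differ in notation from the slides. The function names (`entropy`, `gini`, `information_gain`) and the toy labels are assumptions made for this example and do not come from the original deck.

```python
from collections import Counter
from math import log2


def entropy(labels):
    """Shannon entropy (in bits) of a list of class labels."""
    n = len(labels)
    return -sum((c / n) * log2(c / n) for c in Counter(labels).values())


def gini(labels):
    """Gini impurity: chance of mislabeling a randomly drawn sample."""
    n = len(labels)
    return 1.0 - sum((c / n) ** 2 for c in Counter(labels).values())


def information_gain(parent, children):
    """Entropy of the parent minus the size-weighted entropy of the child groups."""
    n = len(parent)
    weighted = sum(len(child) / n * entropy(child) for child in children)
    return entropy(parent) - weighted


# Hypothetical toy data: four samples, evenly split between two classes.
parent = ["yes", "yes", "no", "no"]
# A candidate split that separates the classes perfectly.
split = [["yes", "yes"], ["no", "no"]]

print(entropy(parent))                  # uncertainty before the split
print(gini(parent))                     # impurity before the split
print(information_gain(parent, split))  # uncertainty removed by the split
```

Under these definitions, the deciding node would typically be the feature whose split gives the highest information gain, or equivalently the lowest weighted impurity.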