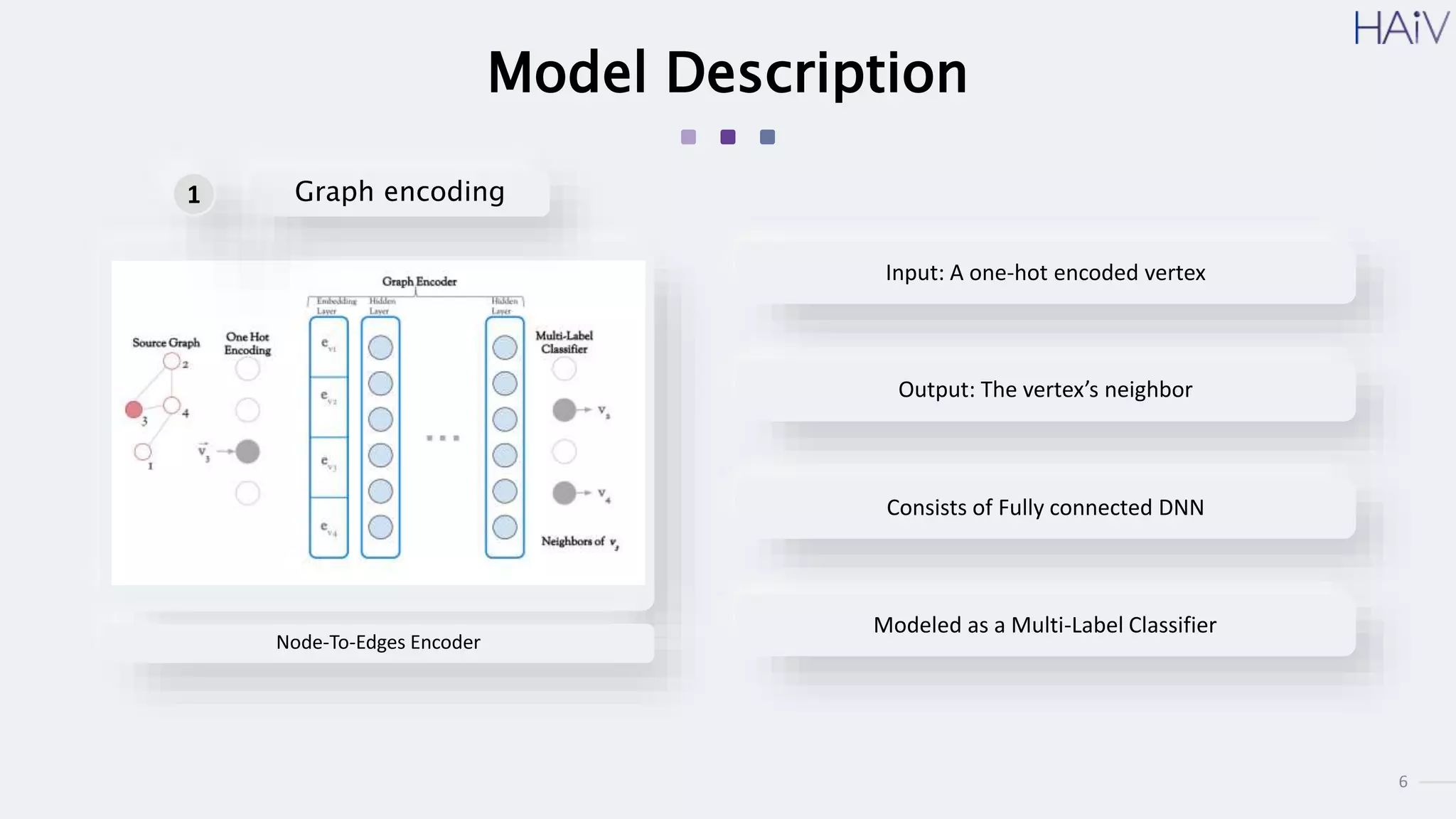

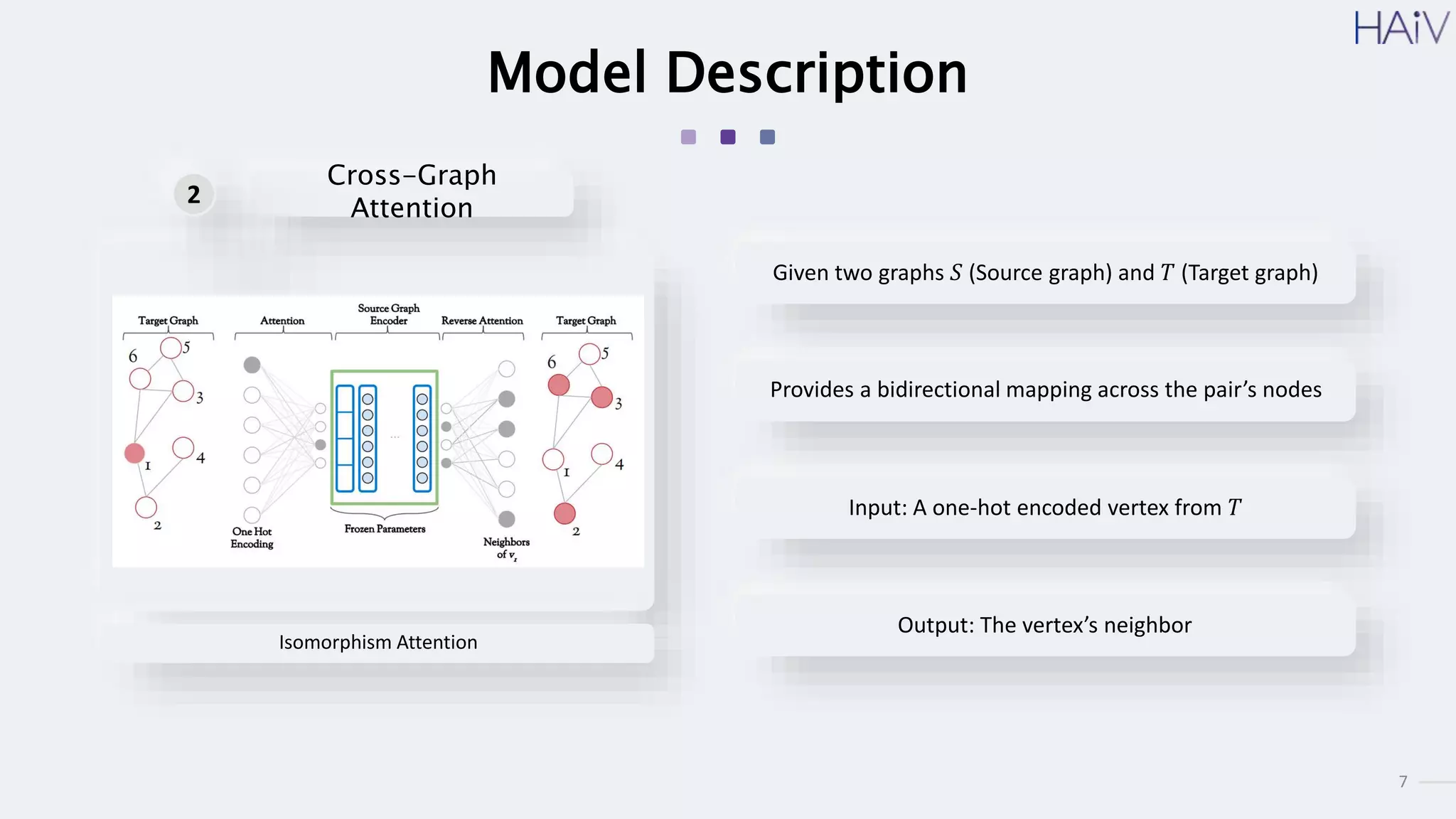

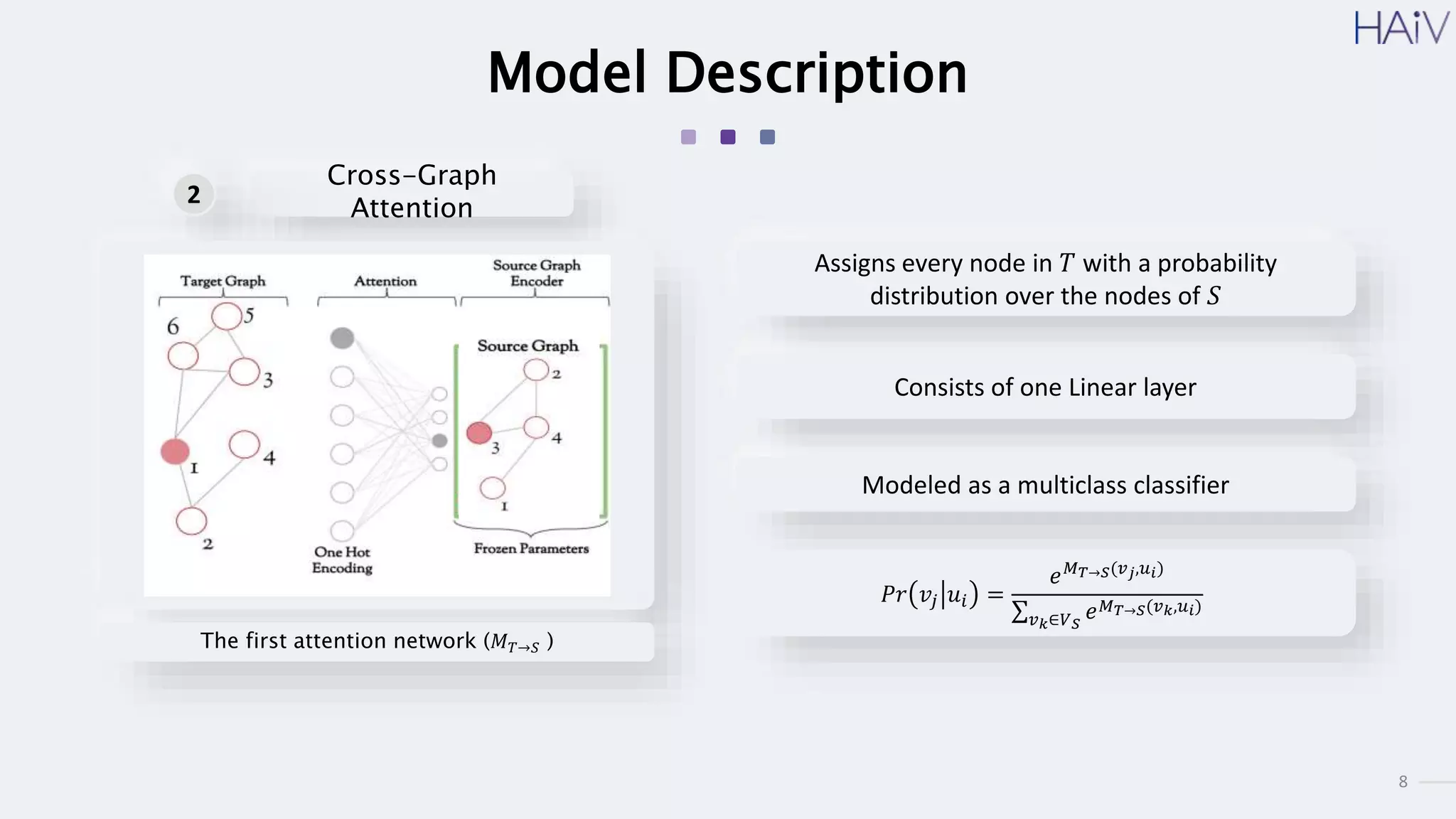

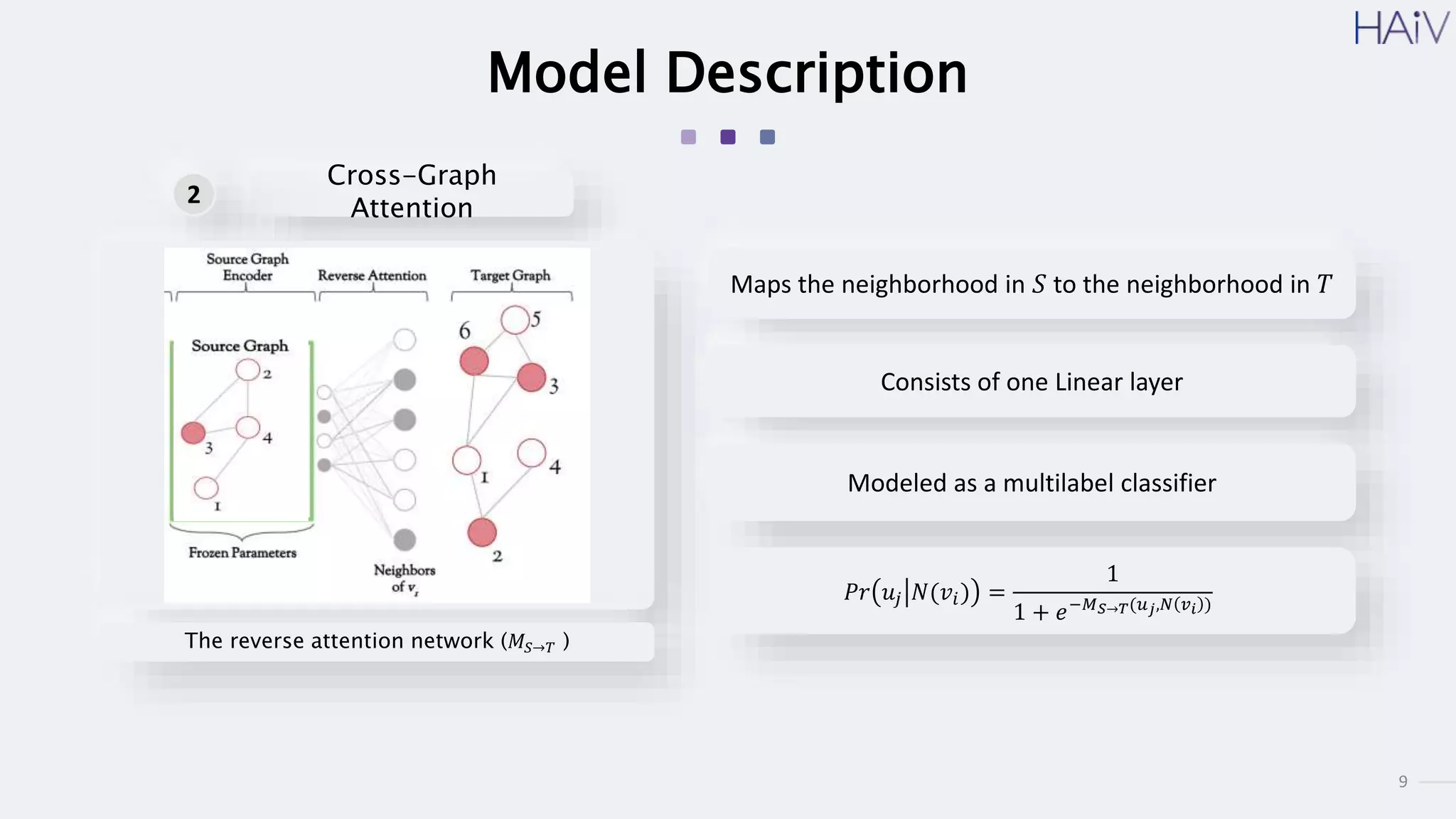

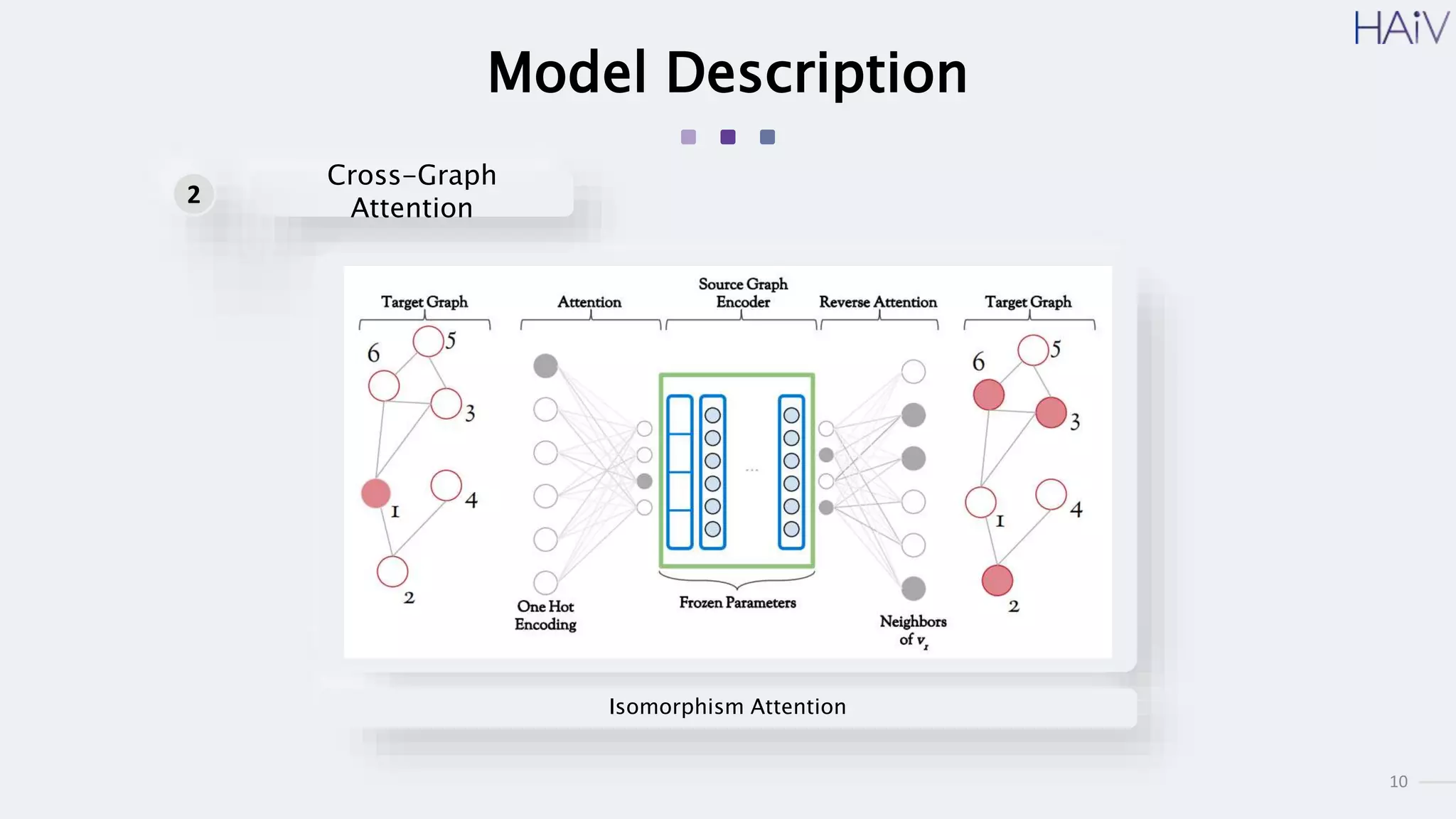

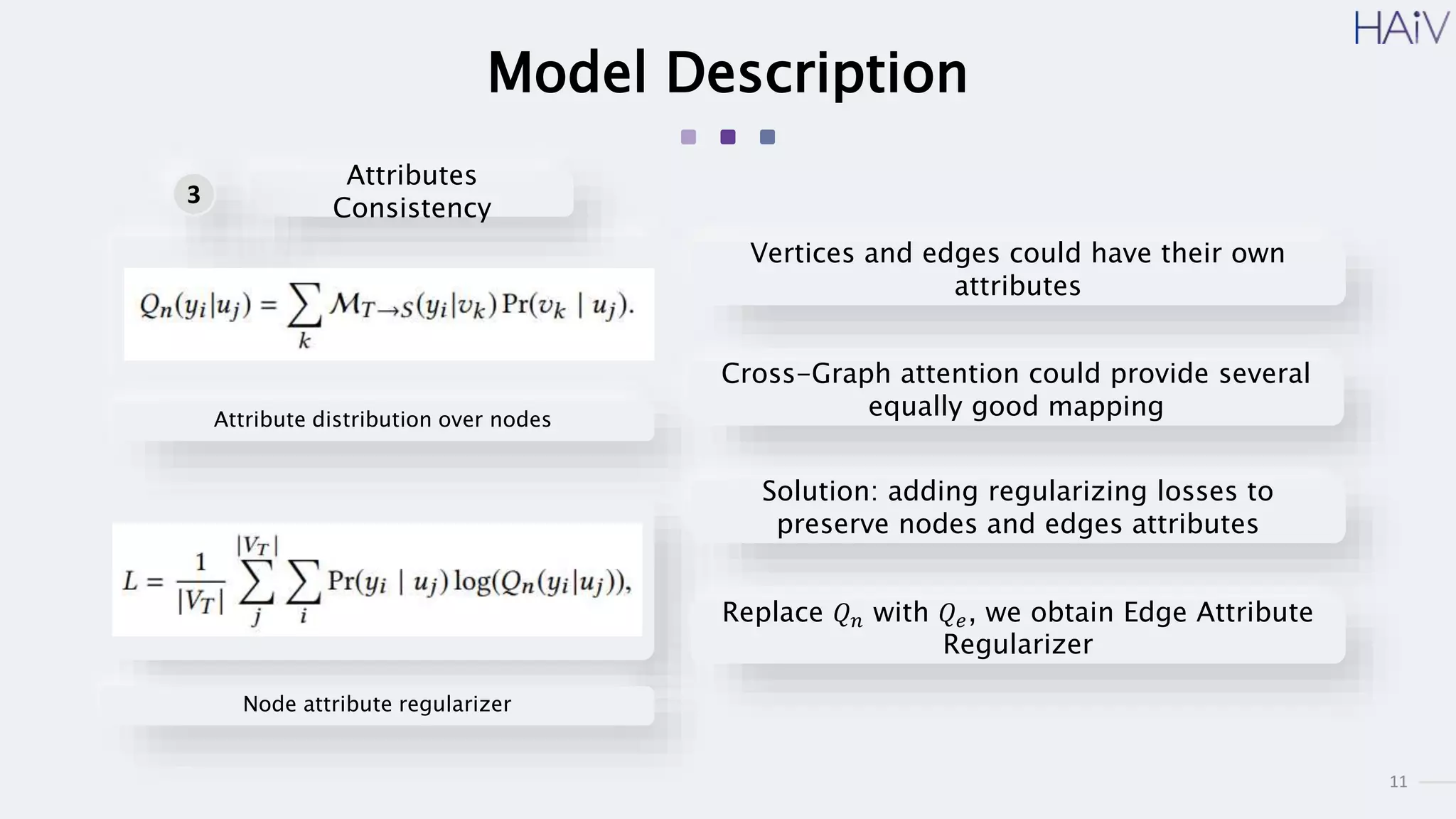

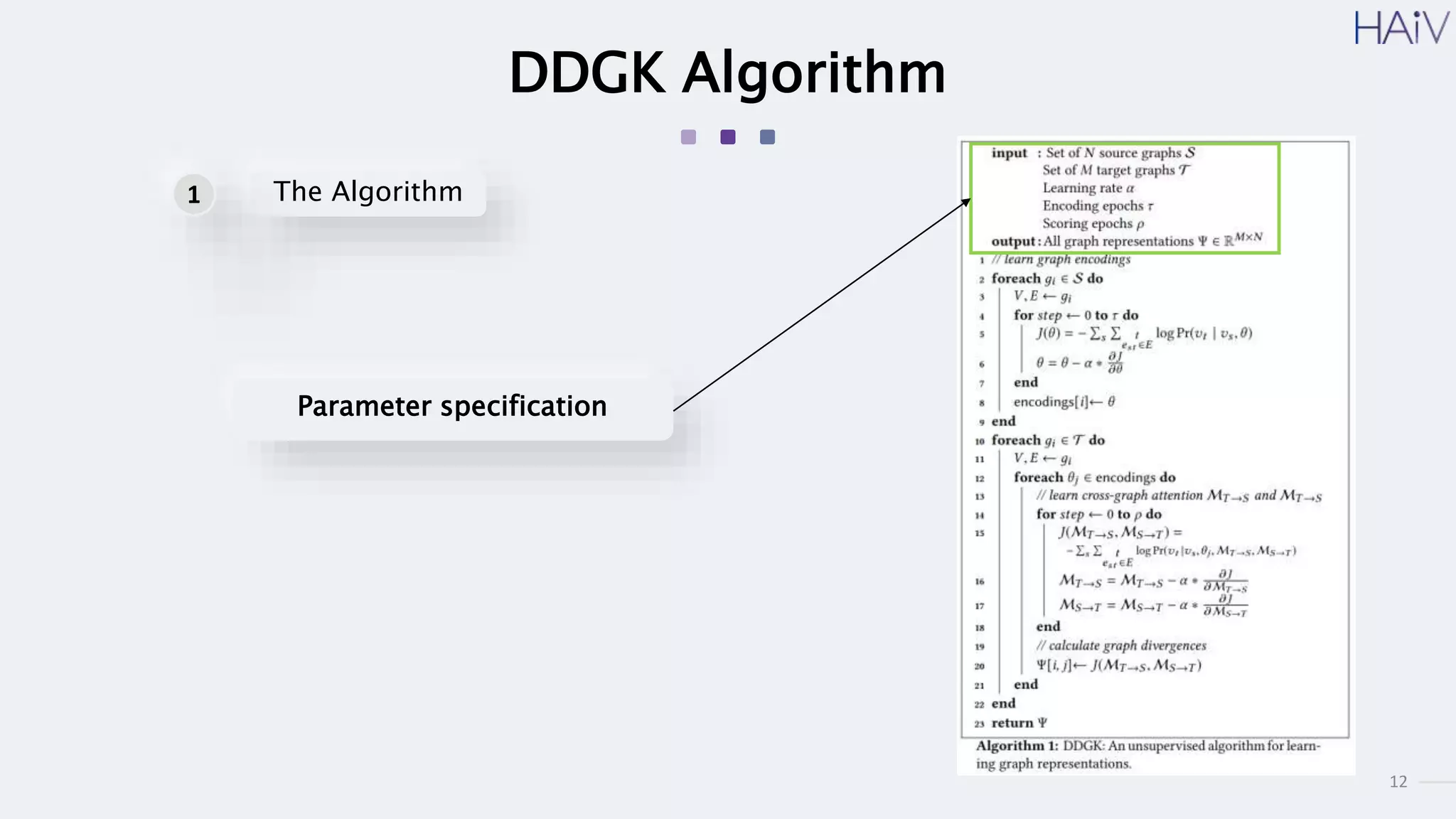

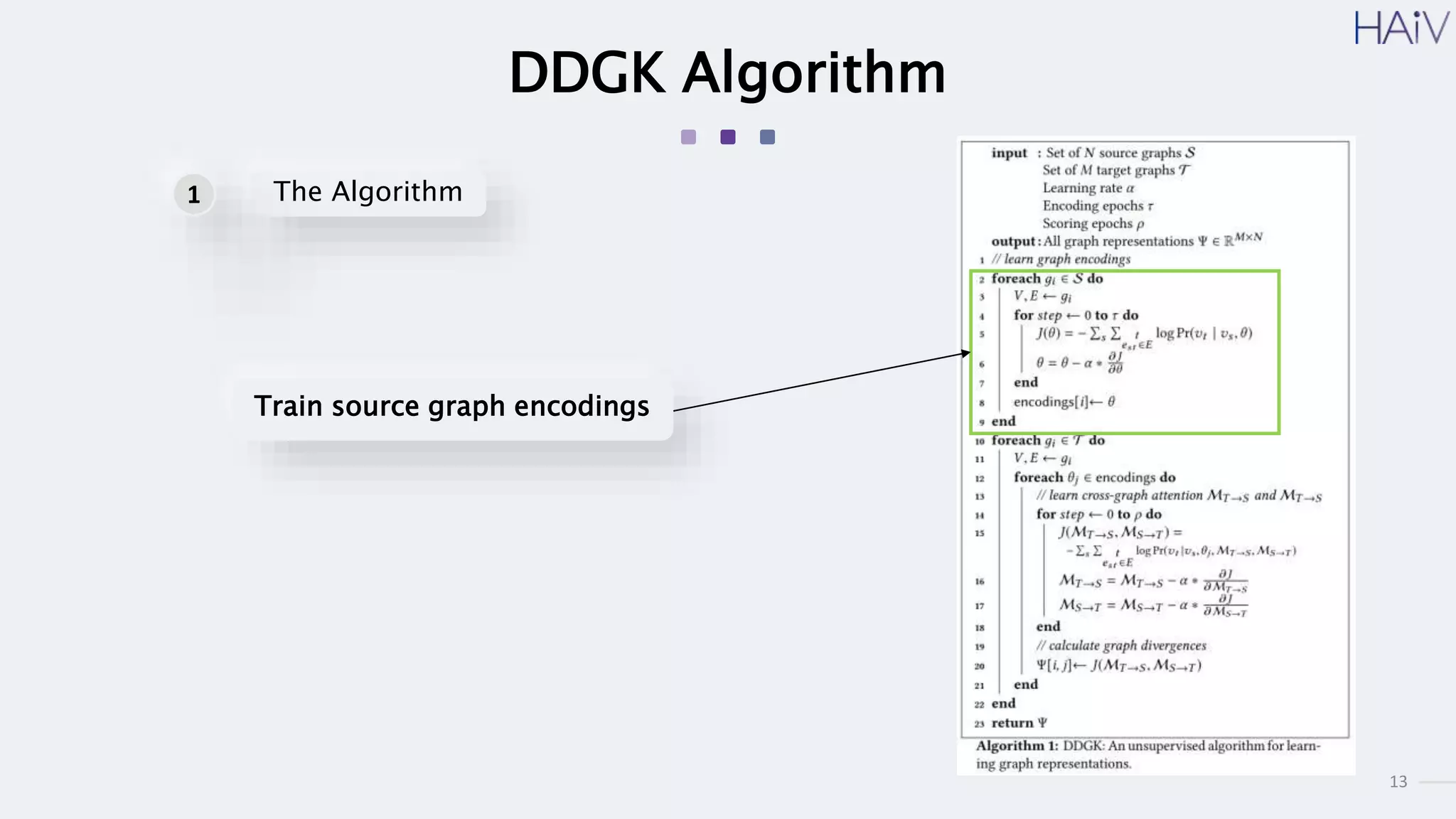

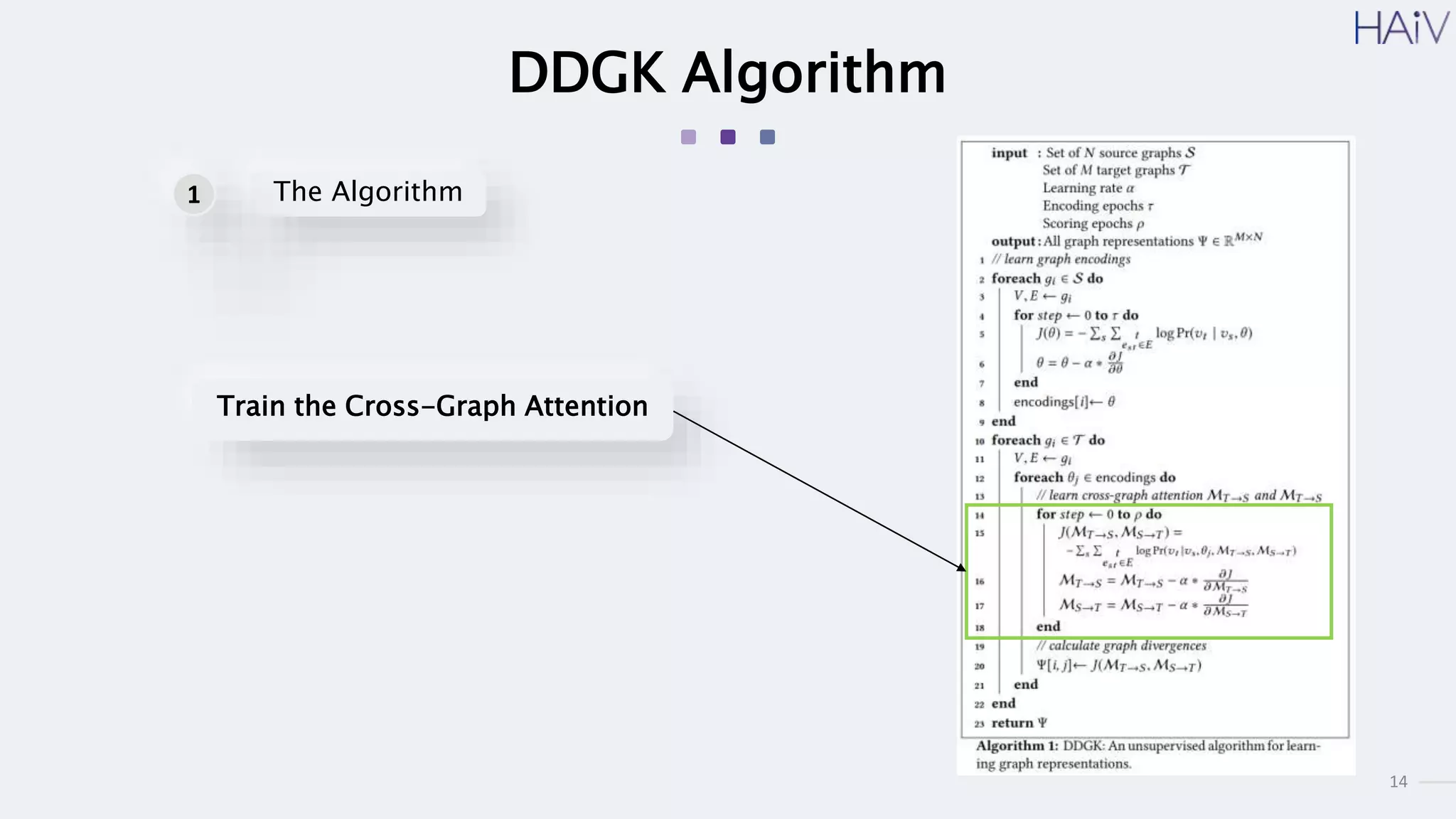

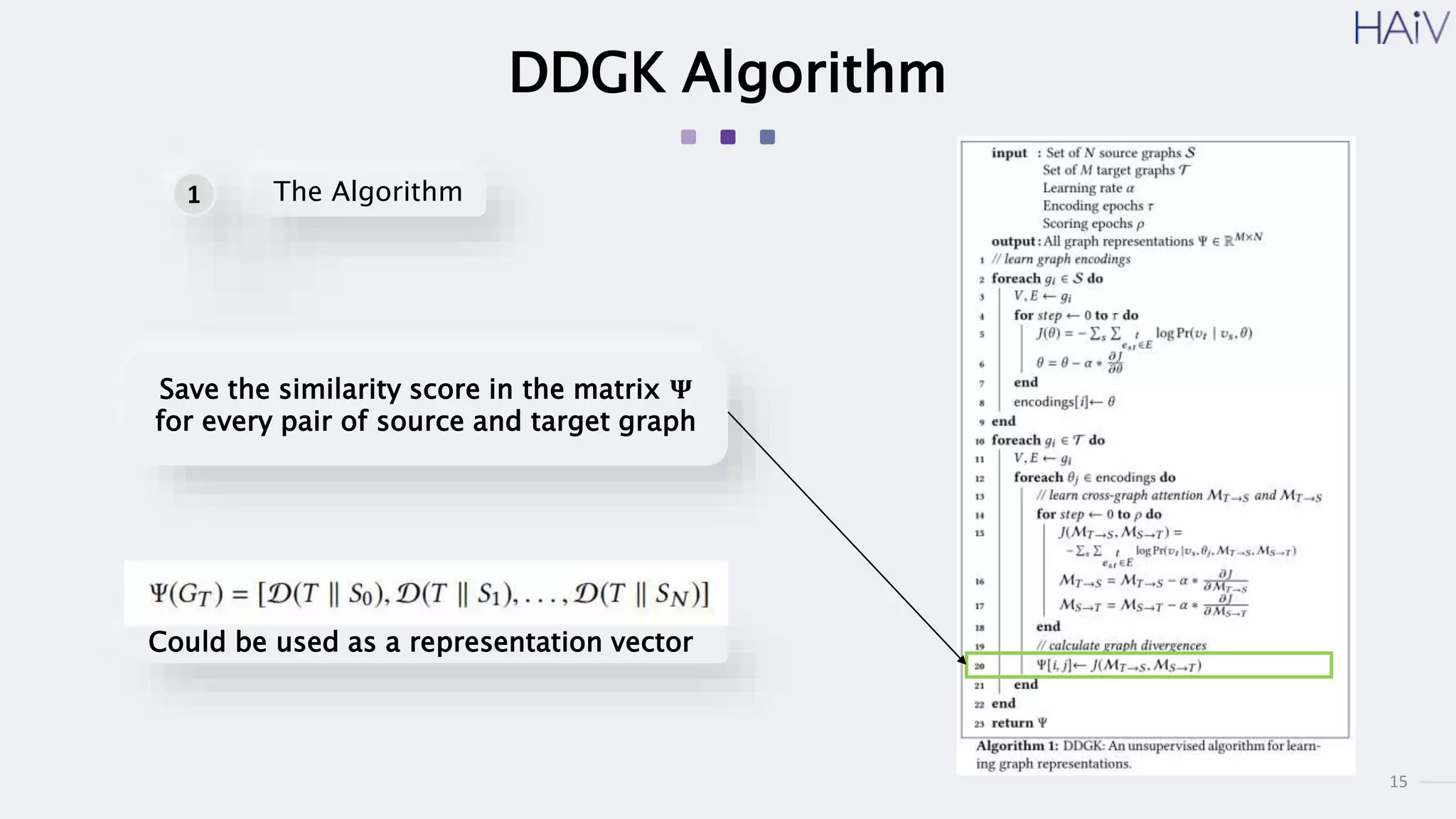

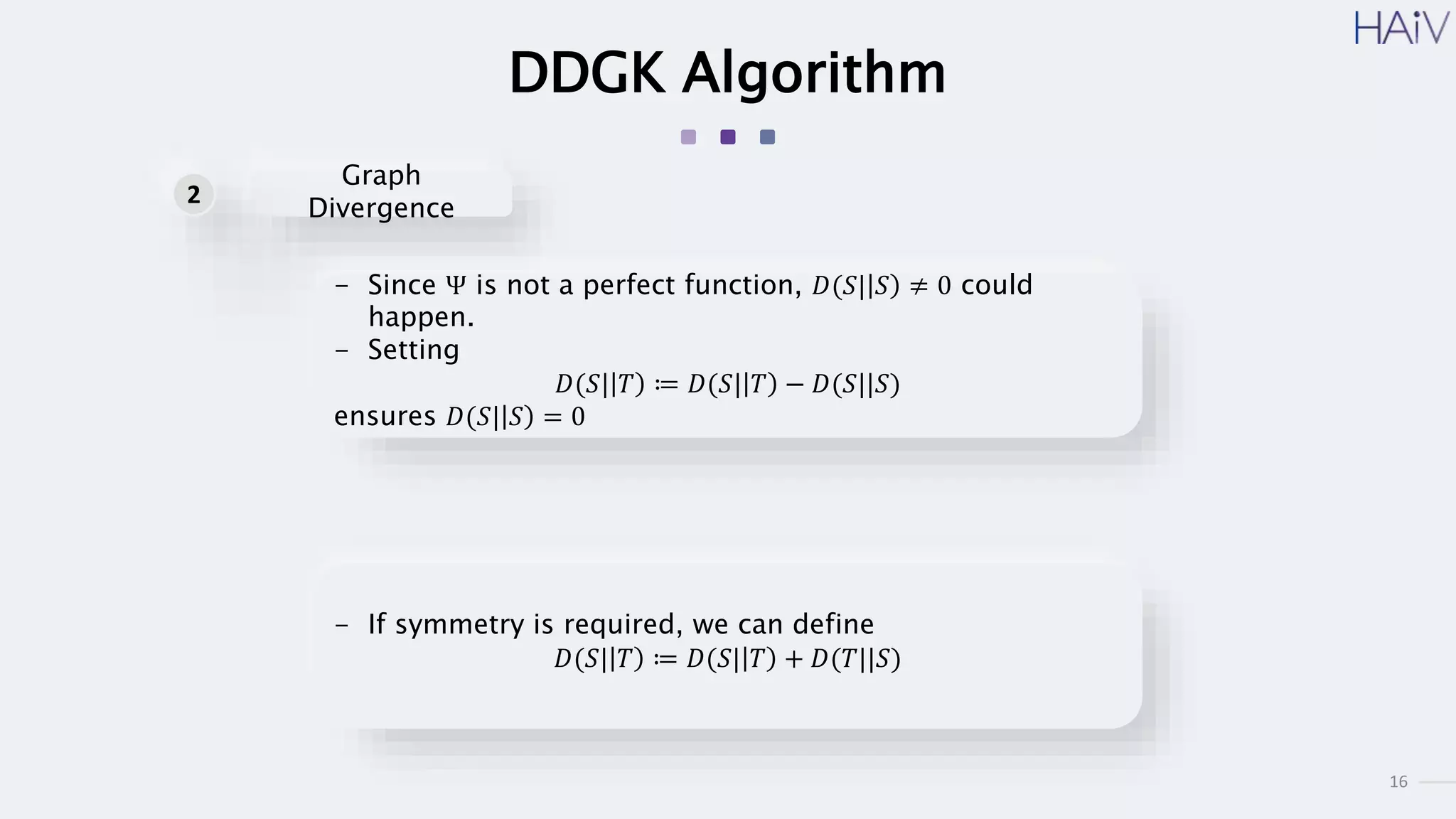

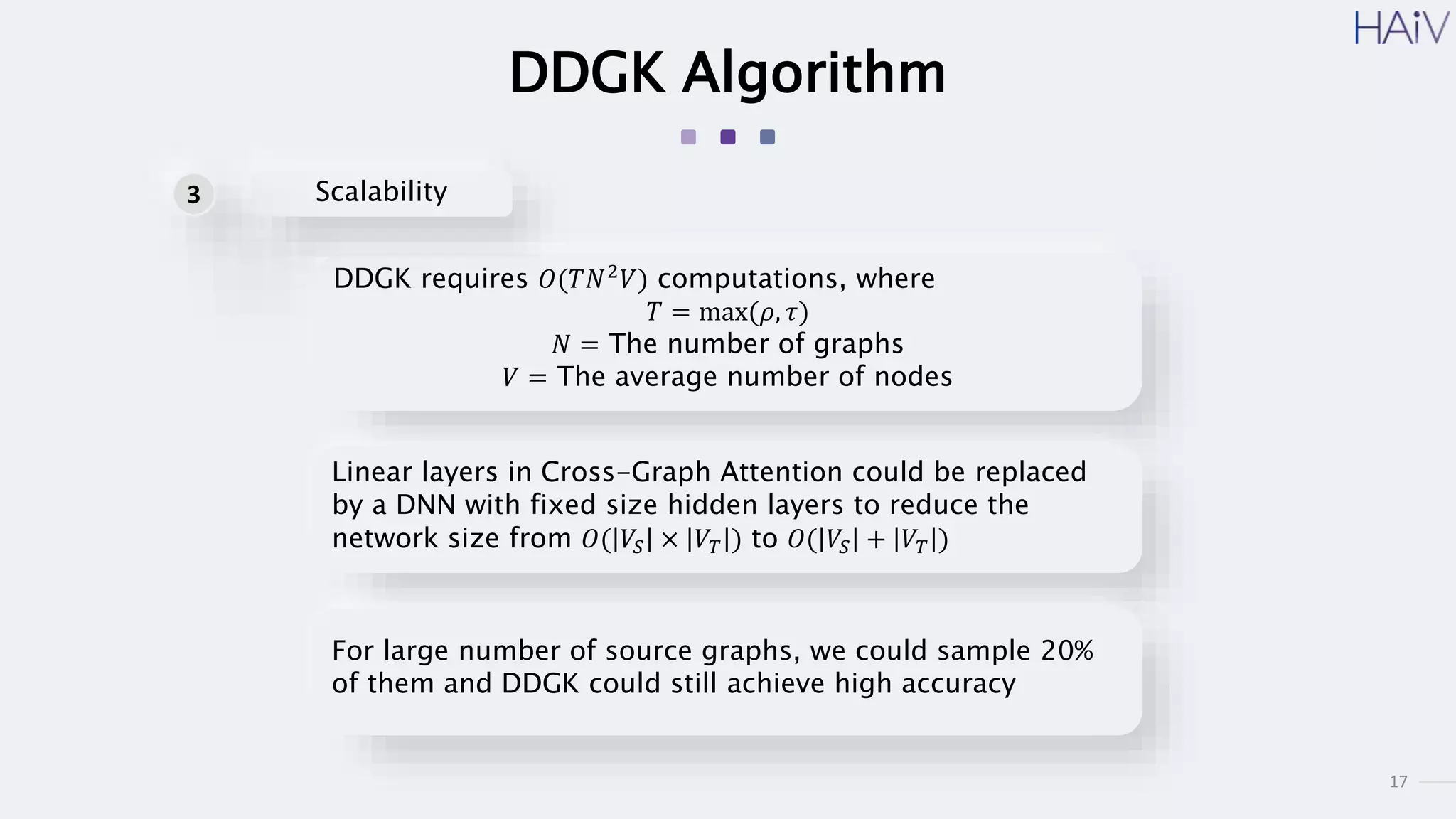

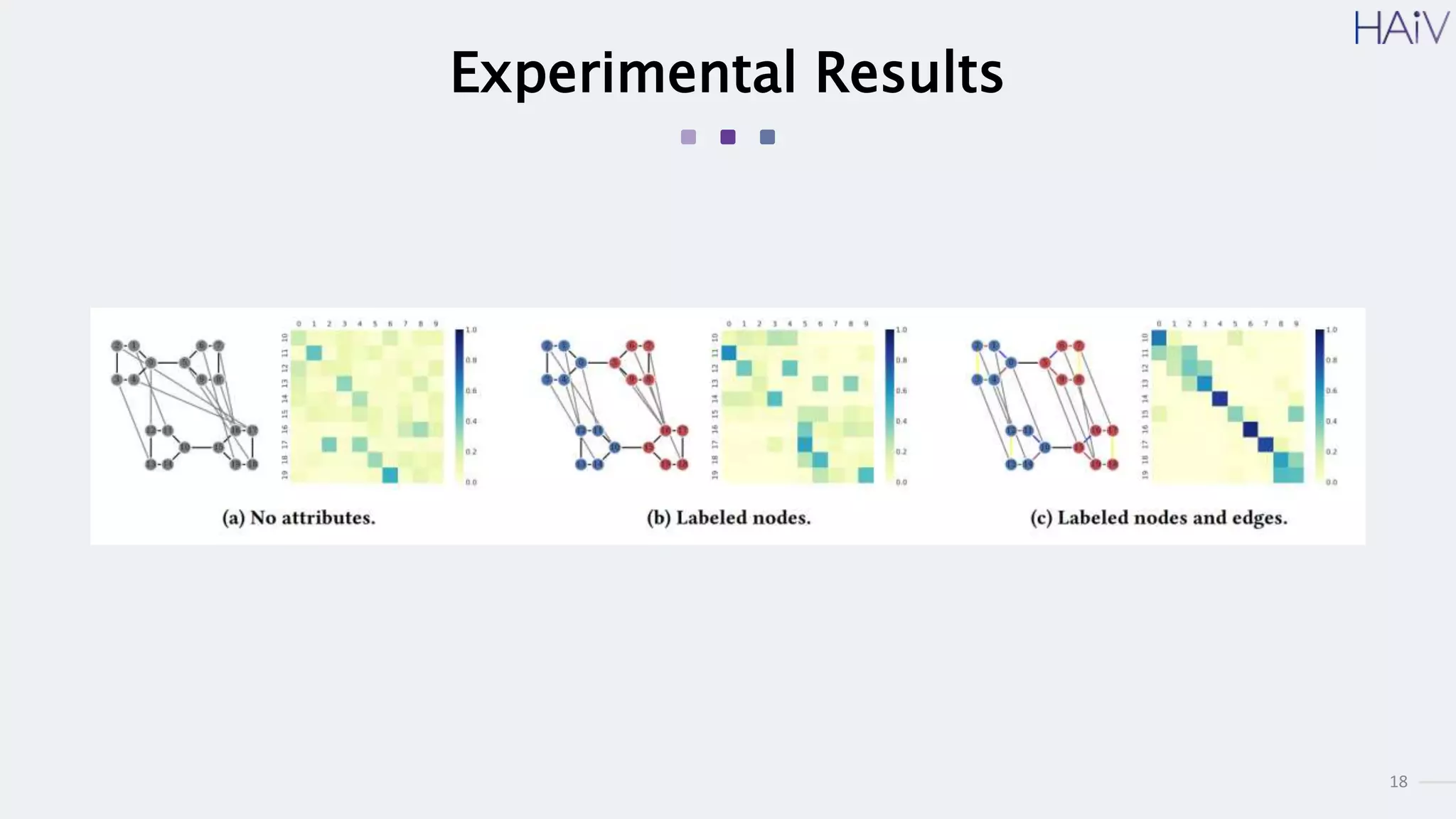

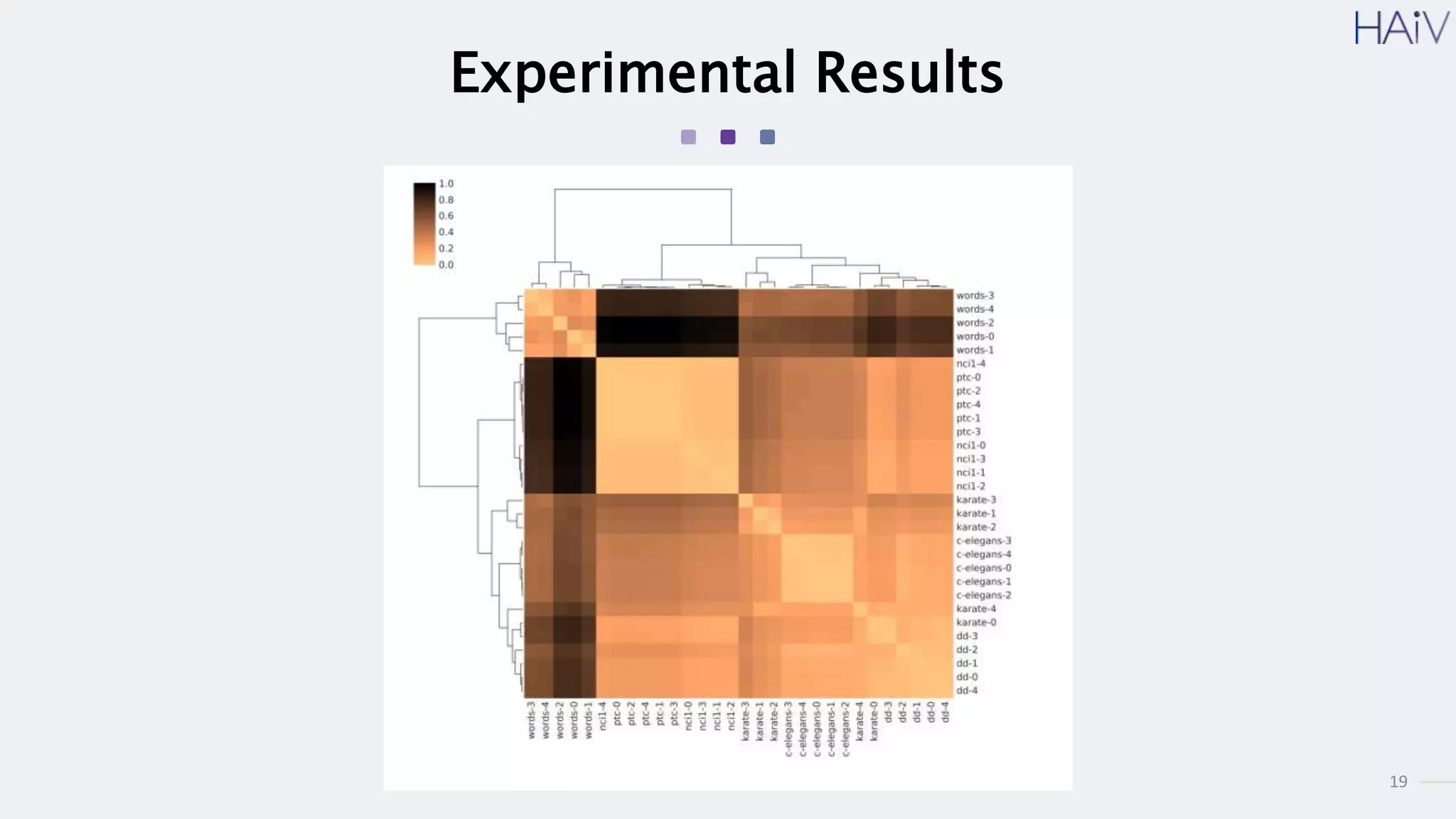

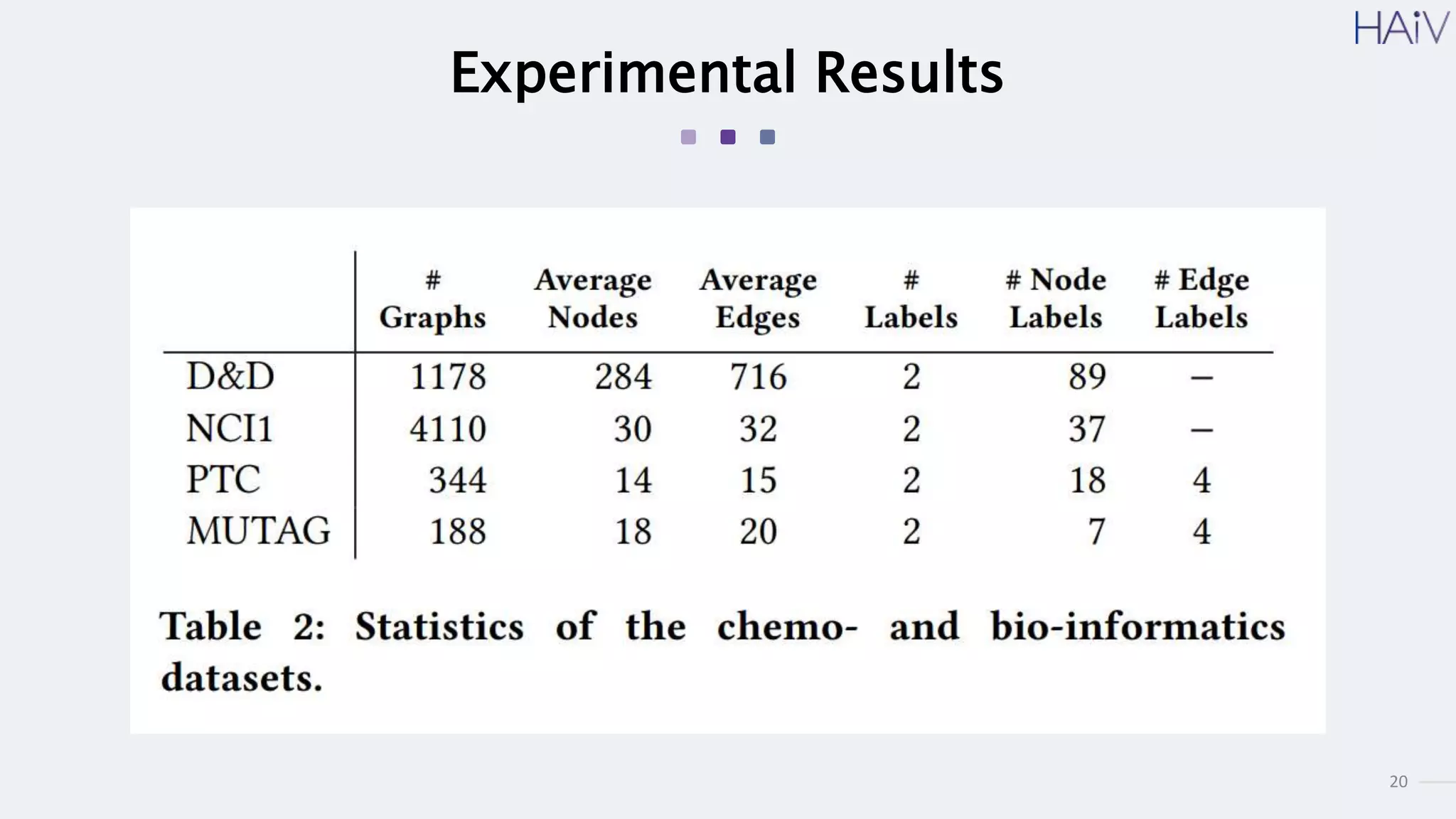

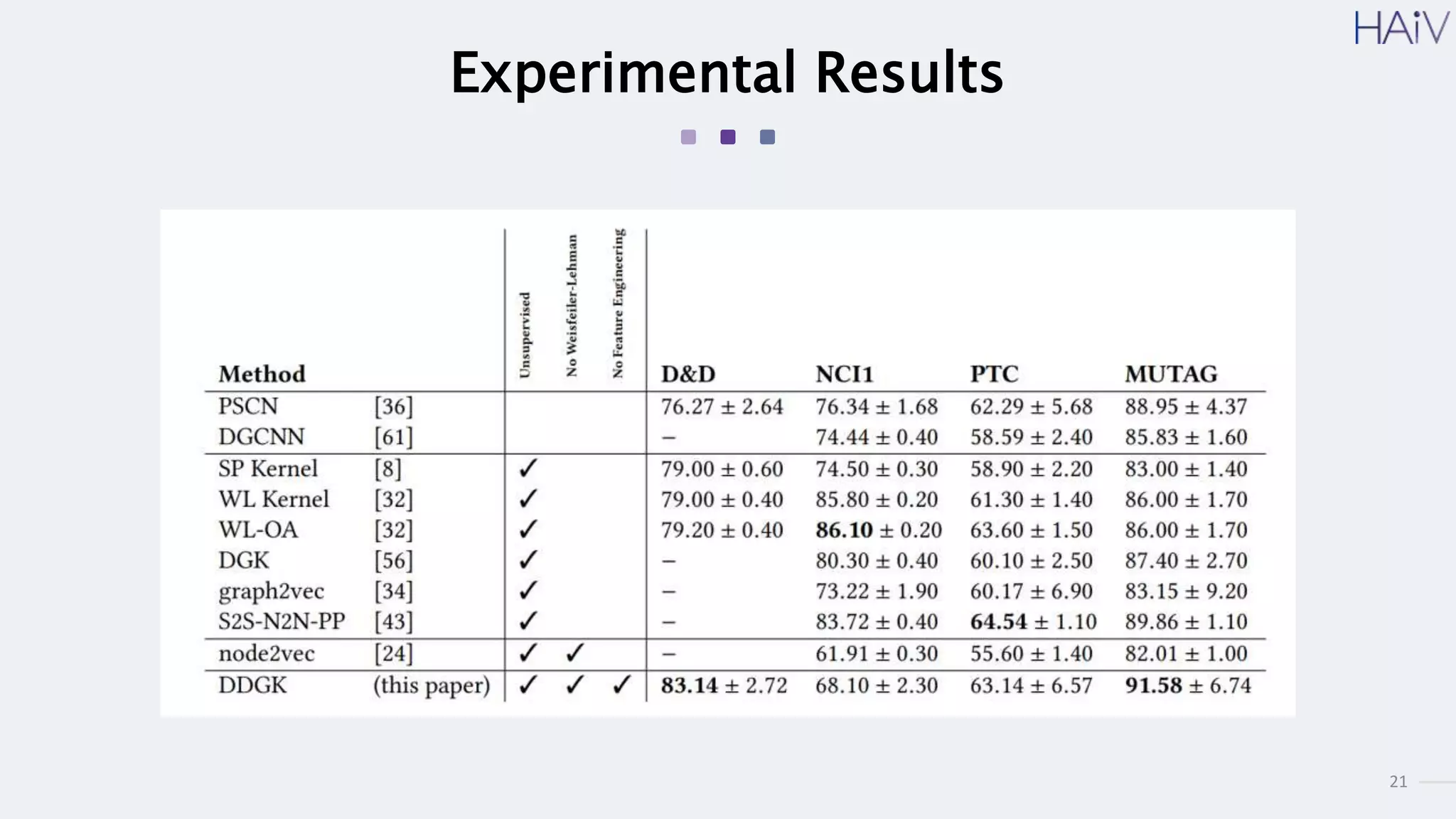

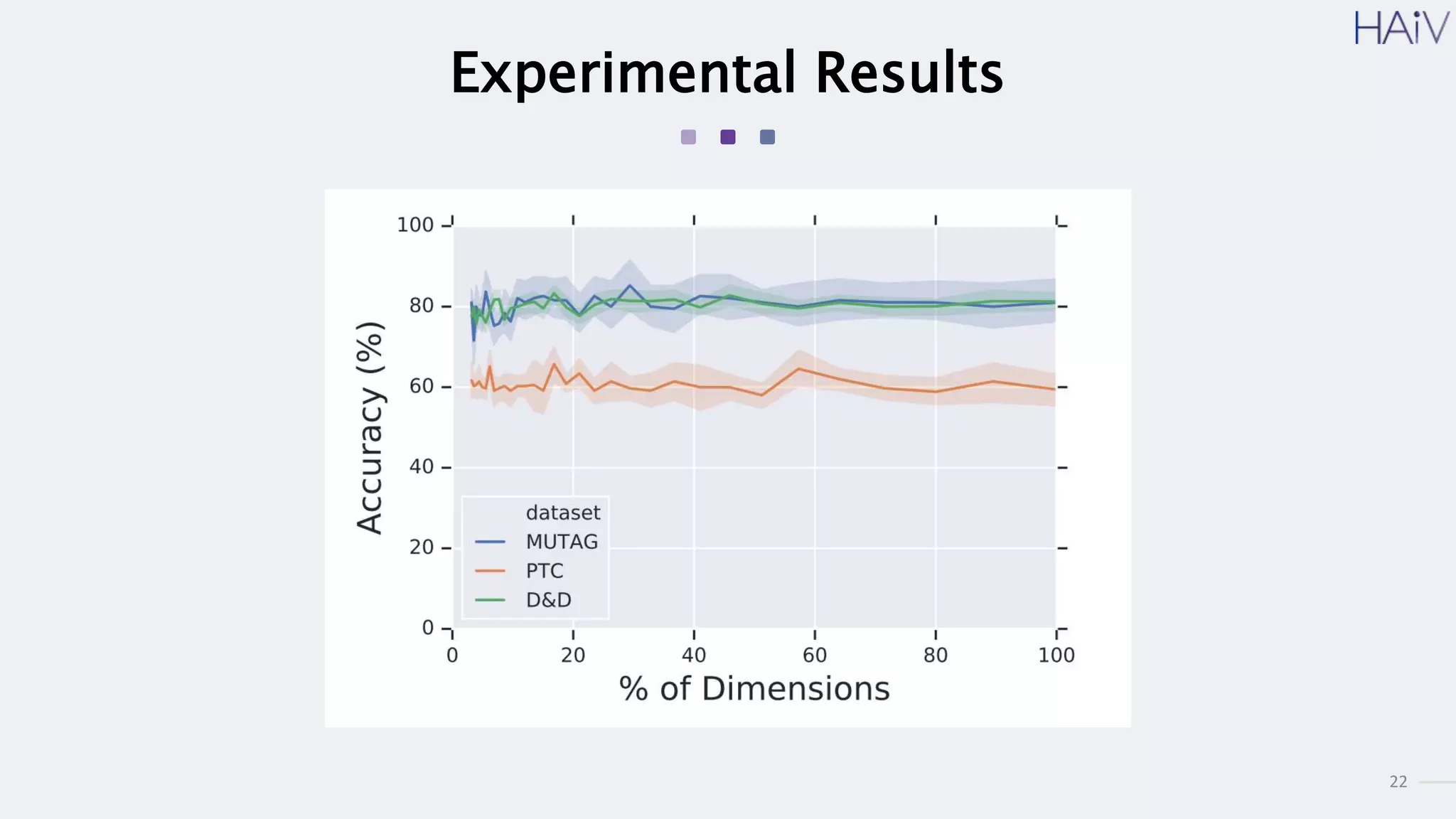

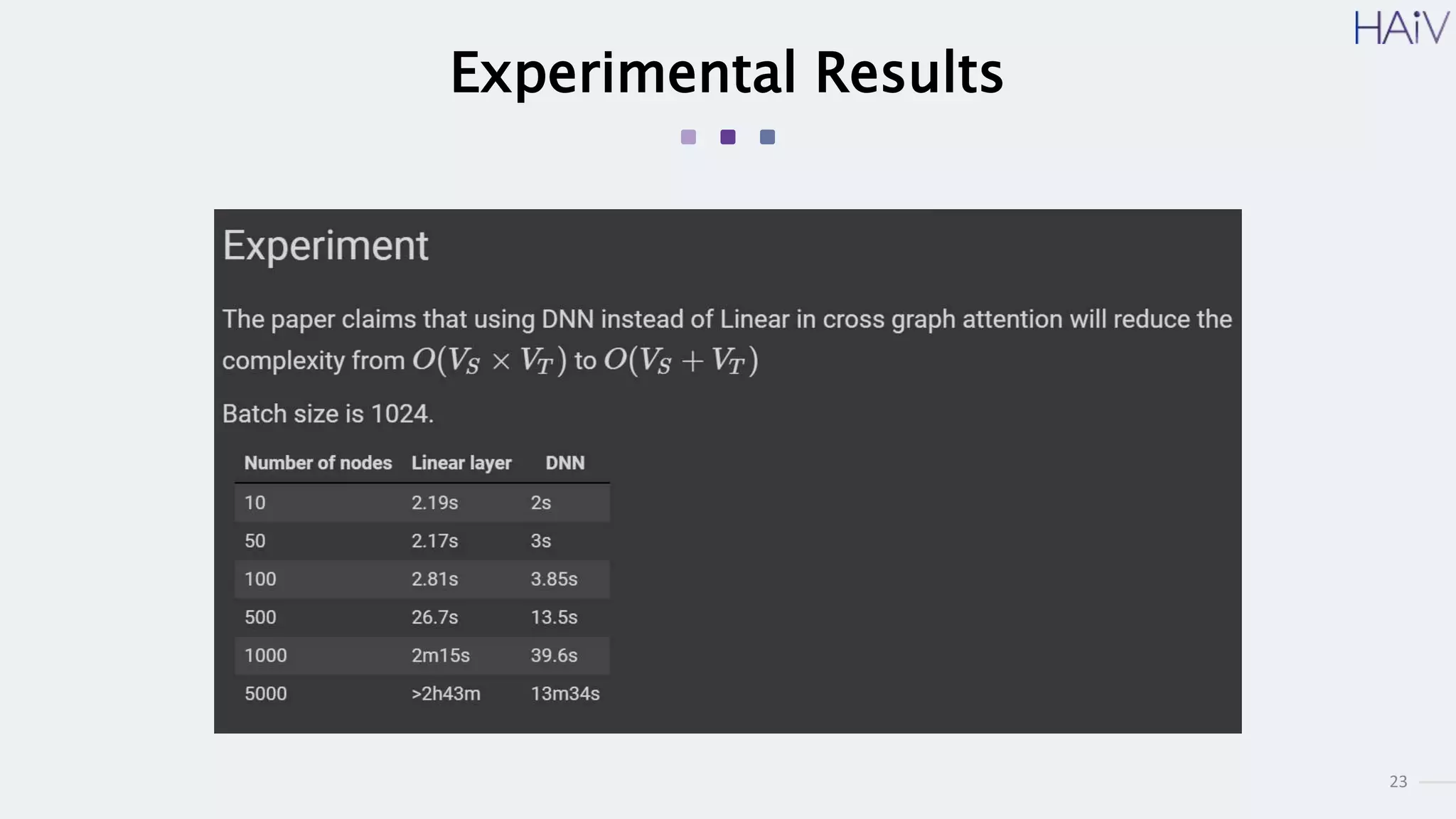

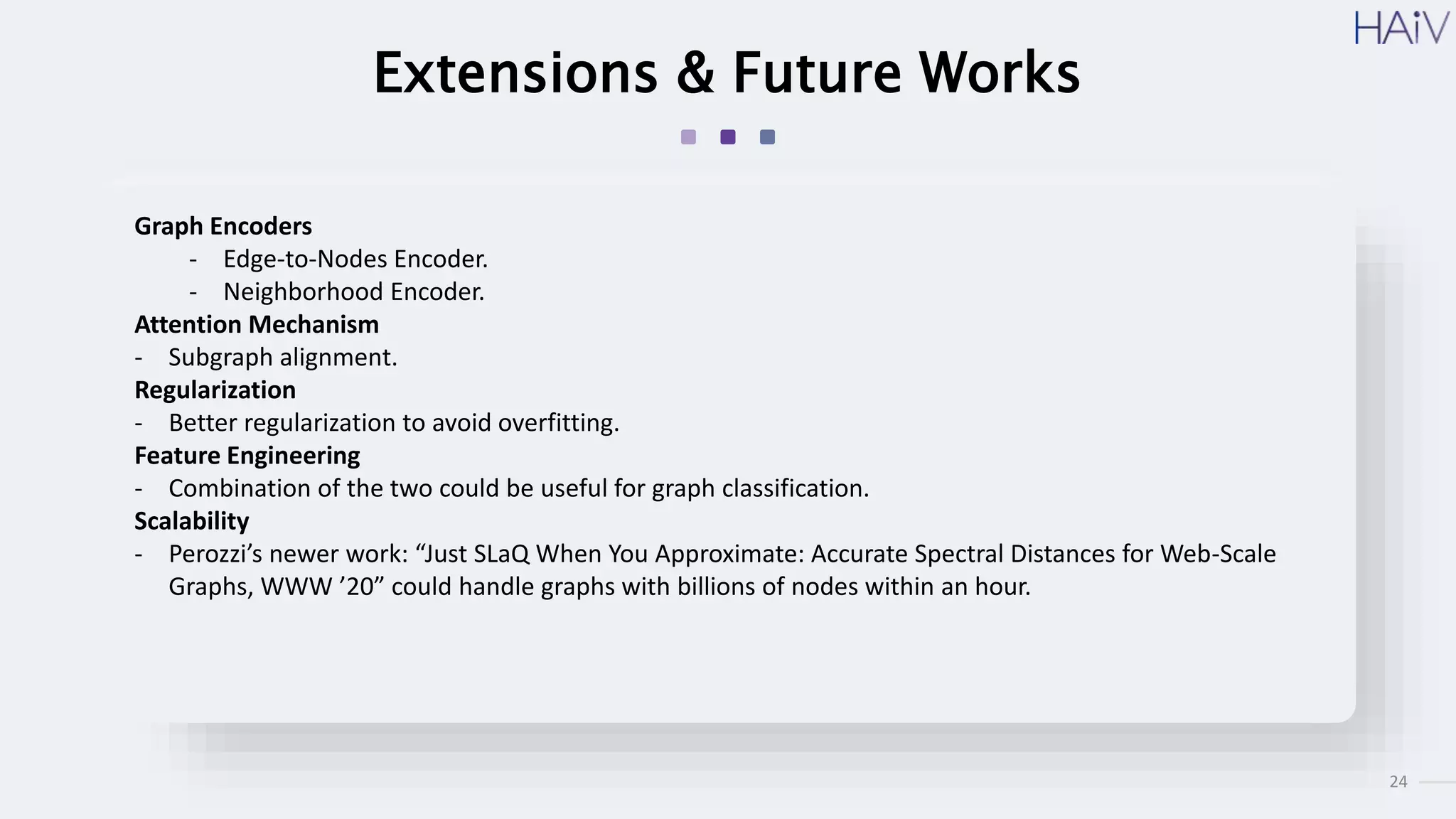

This document summarizes a research paper on Deep Divergence Graph Kernels (DDGK), a method for learning graph representations. DDGK learns these representations without supervision or domain knowledge, using a node-to-edges encoder and isomorphism attention. The isomorphism attention provides a bidirectional mapping between the nodes of two graphs. DDGK then computes a divergence score between a source graph and a target graph as a measure of their (dis)similarity. In the paper's experiments, DDGK produces representations competitive with other graph kernel baselines. The paper also proposes several extensions, including alternative graph encoders and attention mechanisms, as well as improved regularization and scalability.
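The divergence idea can be made concrete with a small NumPy sketch. This is an illustration under strong simplifying assumptions, not the paper's implementation: the trained node-to-edges encoder is swapped for a smoothed, row-normalised adjacency matrix, and the two attention mappings (`fwd`, `rev`) are supplied by hand rather than learned. All function and variable names here are hypothetical.

```python
import numpy as np

def neighbor_distribution(adj, eps=1e-3):
    """Stand-in for a node-to-edges encoder (assumption, not the paper's model):
    P[i, j] approximates the probability that j is a neighbour of i."""
    smoothed = adj.astype(float) + eps  # smoothing keeps every probability > 0
    return smoothed / smoothed.sum(axis=1, keepdims=True)

def divergence(src_adj, tgt_adj, fwd, rev, eps=1e-3):
    """Average negative log-likelihood of the target graph's edges under the
    source graph's neighbour model.

    fwd: (n_target, n_source) row-stochastic map, target nodes -> source nodes
    rev: (n_source, n_target) row-stochastic map, source nodes -> target nodes
    """
    src_pred = neighbor_distribution(src_adj, eps)
    # Map a target node into the source graph, predict its neighbours there,
    # then map the prediction back onto target nodes.
    tgt_pred = fwd @ src_pred @ rev
    rows, cols = np.nonzero(tgt_adj)  # observed target edges
    return float(-np.log(tgt_pred[rows, cols]).mean())

# Two 4-node graphs: a path 0-1-2-3 and a star centred on node 0.
path = np.array([[0, 1, 0, 0],
                 [1, 0, 1, 0],
                 [0, 1, 0, 1],
                 [0, 0, 1, 0]])
star = np.array([[0, 1, 1, 1],
                 [1, 0, 0, 0],
                 [1, 0, 0, 0],
                 [1, 0, 0, 0]])

identity = np.eye(4)
self_score = divergence(path, path, identity, identity)
cross_score = divergence(path, star, identity, identity)

# A relabelled copy of the path, with the matching permutation as the mapping.
perm = np.array([2, 0, 3, 1])           # target node i corresponds to source node perm[i]
relabelled = path[np.ix_(perm, perm)]
fwd = np.eye(4)[perm]                   # hard (one-hot) target -> source mapping
relabelled_score = divergence(path, relabelled, fwd, fwd.T)
```

In this sketch the path graph scores lower against itself than against the star, and a relabelled copy of the path scores the same as the original once the mapping aligns the nodes. That is the role the summary attributes to isomorphism attention, except that DDGK learns the mapping instead of being given it.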