Dataviz Products throughout the Evaluation Lifecycle

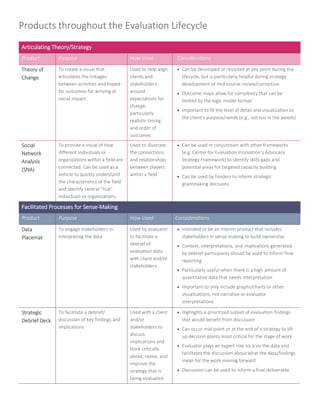

Innovation Network's Veena Pankaj and ORS Impact's Mel Howlett share dataviz products that can be used throughout the evaluation lifecycle, including theory of change, social network analysis, data placemat, strategic debrief deck, H-form, visual report deck, visual executive summary, and timeline.

Recommended

Evaluation Plan Workbook

Innovation Network's own workbook on evaluation planning. Can be used alone or in conjunction with the Evaluation Plan Builder at the Point K Learning Center.

Coalition Assessment: Approaches for Measuring Capacity and Impact

Innovation Network

by Veena Pankaj, Kat Athanasiades, and Ann Emery

February 2014

Download the paper here: www.innonet.org/research

Why assess coalition capacity? How should a coalition be assessed? How can coalition assessment data be analyzed and used?

Coalitions are an important tool in the advocacy and policy change toolbox. They can be used to promote an issue, increase visibility, and eventually propel an issue to the forefront of a political or social agenda. They can provide a lot of horsepower—harnessing the combined power and expertise of many entities all at once. And they are a valuable technique for crafting more durable solutions generated by a broad constituency. For all of these reasons, developing and strengthening coalitions is a common strategy among advocates and advocacy funders.

For evaluators, coalition assessment is a growing field of experimentation and learning. Innovation Network has been evaluating coalitions since 2006, beginning with the Coalition for Comprehensive Immigration Reform, a national effort to secure passage by the U.S. Congress for comprehensive immigration reform. Over the years, we have evaluated many different types of coalitions throughout the United States. Our coalition partners have worked at national, state, regional, and local levels on a variety of advocacy and policy change goals, such as healthy community design or childhood nutrition. This white paper provides practitioners and funders with insights into the coalition assessment process along with concrete examples and lessons we’ve learned from our own work.

Logic Model Workbook

Innovation Network's own workbook (revised in 2010), offering an introduction to the processes and concepts of the logic model. This workbook can be used alone or in conjunction with the Logic Model Builder at the Point K Learning Center.

IDS Impact, Innovation and Learning Workshop March 2013: Day 2, Keynote 2 Pat...

Institute of Development Studies

Program logic models: design and use

Presentation on what a program logic model (PLM) is, how PLMs differ from theory-based models, and how program logic models are used.

Introduction to logic models

Jennifer Kuschner, Program Development and Evaluation Specialist, UW-Extension

Kerry Zaleski, Monitoring and Evaluation Project Coordinator, UW-Extension

This interactive session gave participants an overview of what a logic model is and how to use one for planning, implementing, evaluating, or communicating about co-curricular community service activities. Participants also worked in teams to create their own logic models.

CSU Extension, Engagement and the Logic model

Presentation delivered to graduate class Principles of Extension.

Much of the material in this lecture on Extension, the logic model, and the scholarship of engagement was taken from the University of Wisconsin-Extension Program Development and Evaluation program.

http://www.uwex.edu/ces/pdande/evaluation/evallogicmodel.html

A Guide to Logic Models – Grant Writing

Federal, state, provincial, and foundation grant applications in both the United States and Canada increasingly require logic models. Depending on the level of complexity required, these can prove a major stumbling block, especially with looming deadlines. The purpose of this seminar is to unlock the mystery surrounding their development and use. We won't promise that you will like them any better by the end, only that you will understand them and fear them less.

2. grantseeking creating a program logic model

Grants for beginners. Module 2 of the grant seeking series covers how to develop a program logic model for grant development. Building a basic program logic model involves highlighting the situation and priorities; developing the overall program goal; determining program outcomes, outputs, and inputs; identifying any assumptions and external factors in play; and developing a program evaluation plan.
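The components listed in this module can be captured in a simple data structure, which makes it easy to check whether any part of the model is still blank before a deadline. This is a minimal Python sketch, not taken from the module itself; the `LogicModel` class, its field names, and the example values are hypothetical, and the evaluation plan is left out for brevity.

```python
from dataclasses import dataclass, field
from typing import List


# Hypothetical structure: field names mirror the components named in the module
# description (situation, goal, inputs, outputs, outcomes, assumptions, external factors).
@dataclass
class LogicModel:
    situation: str
    goal: str
    inputs: List[str] = field(default_factory=list)
    outputs: List[str] = field(default_factory=list)
    outcomes: List[str] = field(default_factory=list)
    assumptions: List[str] = field(default_factory=list)
    external_factors: List[str] = field(default_factory=list)

    def missing_components(self) -> List[str]:
        """Return the names of list components that have not been filled in yet."""
        names = ["inputs", "outputs", "outcomes", "assumptions", "external_factors"]
        return [name for name in names if not getattr(self, name)]

    def render(self) -> str:
        """Render the model as text, reading left to right from inputs to outcomes."""
        def join(items: List[str]) -> str:
            return "; ".join(items) if items else "(none yet)"

        chain = " -> ".join(join(part) for part in (self.inputs, self.outputs, self.outcomes))
        return "\n".join([
            f"Situation: {self.situation}",
            f"Goal: {self.goal}",
            f"Inputs -> Outputs -> Outcomes: {chain}",
            f"Assumptions: {join(self.assumptions)}",
            f"External factors: {join(self.external_factors)}",
        ])


if __name__ == "__main__":
    # Hypothetical example values, for illustration only.
    model = LogicModel(
        situation="Few adults in the county use the literacy center",
        goal="Improve adult literacy",
        inputs=["two tutors", "grant funding"],
        outputs=["weekly tutoring sessions"],
        outcomes=["participants read more confidently"],
    )
    print(model.render())
    print("Still missing:", model.missing_components())
```

Keeping the components as plain lists means the same object can be rendered for a grant application and re-checked each time the model is revised.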

Evaluating Communication Programmes, Products and Campaigns: Training workshop

A one-day workshop on evaluating communication programmes, products and campaigns. It covers the main steps and methods, with real-life examples. This workshop was originally conducted by Glenn O'Neil of Owl RE for Gellis Communications in Brussels in October

United Way Logic model presentation

A presentation for agencies that wanted to apply for United Way funds in the spring of 2011.

Non-Profit Program Planning and Evaluation

Presentation for Dallas Center for Nonprofit Management for the Nonprofit Management Certificate Program - Part 1 of Program Planning and Evaluation.

Program Evaluation In the Non-Profit Sector

Three-session presentation to students in the Non-Profit Certificate Program at Rhode Island College, focused specifically on program evaluation.

Two Examples of Program Planning, Monitoring and Evaluation

Presented by Laili Irani, Senior Policy Analyst for the Population Reference Bureau, as part of the Measuring Success Toolkit webinar in September 2012.

HOW TO CARRY OUT MONITORING AND EVALUATION OF PROJECTS

M&E frameworks indicate the scope of the evaluation, the details involved, and the level of involvement by stakeholders.

Make Your Data Count: Tips & Tools for Visual Reporting

In their session at YNPNdc's 2015 Annual Conference in Washington, DC, Johanna Morariu and Katherine Haugh presented on helpful tips and tools for visual reporting. This handout includes their suggestions, great examples of visual reports, and tools anyone can access to create powerful visual reports.

Putting Data in Context: Timelining for Evaluators (HANDOUT)

Creating a timeline is a method for picturing or seeing events as they take place over time. The full PowerPoint slides of this presentation are also available on SlideShare. Search for the title "Putting Data in Context: Timelining for Evaluators".

[Link: http://www.slideshare.net/InnoNet_Eval/putting-data-in-context-timelining-for-evaluators ]

Viewers also liked

Real Time Evaluation Handout

This handout accompanies the slides on real time evaluation (http://bit.ly/1Py1ME4).

Do-It-Yourself Logic Models: Examples, Templates, and Checklists

Logic models are nonprofit road maps: they help you diagram where you are now and where you hope to be in the future. They are used for program planning, program management, fundraising, communications, consensus-building, and evaluation planning.

Want to make a logic model, but not sure where to start? In this 90-minute webinar, Johanna Morariu and Ann Emery taught the nuts and bolts of logic models: what they are, how to make them, who should be involved in the process, and how often to update them. They provided tools such as a logic model template, a free online logic model builder, and a logic model checklist, and shared several examples from real nonprofits so that you're ready to hit the ground running.

To learn more, please visit www.innonet.org.

#JAGUnity2014: DataViz for Philanthropists! Tips, Tools, and How-Tos for Comm...

Presentation by Innovation Network's Johanna Morariu and Ann K. Emery at the Joint Affinity Groups Unity Conference, held June 6, 2014 in Washington, DC.

Similar to Dataviz Products throughout the Evaluation Lifecycle

Final Class Presentation on Direct Problem-solving Intervention Projects.pptx

Your proposal is an important document

concept of evaluation "Character insight"

Evaluation is a systematic process to understand what a program does and how well the program does it. Evaluation results can be used to maintain or improve program quality and to ensure that future planning can be more evidence-based.

In this topic I cover SWOT analysis, milestones, Gantt charts, PERT, CPM, Bennett's hierarchy of evaluation, and the logical framework approach.

Training pm jan 11 part b

Presentation for Fast Forward and Connect trainees, Saxion, 14 and 28 January 2011.

Writing evaluation report of a project

How to write a development project evaluation report, with a format and key guidelines for mid-term and completed projects. This format can be used for any kind of development project.

Measuring the Impact and ROI of community/social investment programs

Next Generation Consultants: Reana Rossouw

Getting better at understanding community/social investment programs and strategies: what works, how it works, and why it works.

PMO Proposal For Global Sales Operations

Proposal to create a Worldwide Project Management Office for Hewlett Packard Sales Operations function

Fountain Park Strategy Navigator

Taking strategy to the bottom line: How to make the organisation understand and accept the strategy and act accordingly – and fast?

Elaborating on Process and Results: A Comprehensive Analysis and Interpretation

Join us on an insightful journey as we dive deep into the process and results of a comprehensive analysis, providing a thorough understanding and interpretation of the findings. In this engaging SlideShare presentation, we will explore the intricacies of conducting a rigorous analysis, deciphering the outcomes, and deriving meaningful insights.

In this presentation, we will introduce the importance of conducting a comprehensive analysis in various fields and disciplines. Understand the value of robust research methodologies, data collection techniques, and analytical tools in gaining a deeper understanding of complex phenomena. Explore how the analysis process illuminates patterns, relationships, and trends that inform decision-making, shape strategies, and drive improvements.

We will delve into the key steps involved in conducting a comprehensive analysis. Explore the stages of data collection, data cleaning, and data transformation to ensure the integrity and quality of the dataset. Understand various analysis methods, such as quantitative analysis, qualitative analysis, or mixed-method approaches, depending on the nature of the research questions and available data.

Furthermore, we will discuss the interpretation and presentation of the results. Learn how to interpret statistical findings, identify significant patterns, and extract meaningful insights from the data. Explore effective visualization and communication techniques for presenting the results clearly and compellingly to different audiences and stakeholders.

Through case studies, examples, and practical insights, we will showcase the importance of interpreting the results in the context of the research objectives and real-world implications. Discuss the process of drawing conclusions, making recommendations, and translating findings into actionable strategies. Explore the ethical considerations of data analysis and the responsible use of findings.

Whether you are a researcher, analyst, decision-maker, or simply interested in the analytical process, this presentation will provide you with a comprehensive understanding of analysis methods and interpretation techniques. Let us embark on a journey of analysis, unraveling the complexities, and deriving meaningful insights that drive informed decisions and impactful outcomes.

Project evaluation

Summary of the Part III. Project Evaluation of the book “DESIGNING EDUCATION PROJECTS”

Program governance Structure

How to set up a Governance model for your IT Programs and how to set up and manage a PMO

feseability report in economic management.pptx

Feasibility reports: their structure and components.

Best practiceoverview productlifecycle_b_yelland

Becky Yelland's best practice guide to product lifecycle management.

What is the importance of communication skills for a business analyst.docx

Communication skills are crucial for business analysts, aiding in stakeholder engagement, requirement gathering, and project success. Effective facilitation of workshops and defining project scope further underscores their importance in driving collaboration and meeting objectives.

More from Innovation Network

Refreshing Evaluation in Support of the Social Movements Revival

There is a growing social consciousness in America and a revival of social movements as a vehicle for social change, with increasing nonprofit involvement and philanthropic funding support. Since the mid-2000s, several notable movements have taken hold of the public consciousness: the immigration reform movement and DREAMers, the Occupy movement, gay marriage, the climate change movement, Black Lives Matter, and a nascent, potential movement developing in protest of the Trump Administration. While evaluating movements has some parallels to established evaluation practice, it also presents some thorny challenges. In a session presented at the American Evaluation Association Conference on November 10, 2017, we explored and shared what we were learning about evaluating social movements, including what we know about social movements, their components, characteristics, and types; what aspects of social movements are ripe for evaluation; and what existing evaluation approaches are well suited to evaluating social movements.

10-Year Evaluation of Connecticut Health Foundation's Leadership Program

This presentation shares findings from an outcomes evaluation conducted by Innovation Network for the Connecticut Health Foundation in 2014-2015.

Evaluation Theory Tree: Evaluation Approaches

During the 2015 American Evaluation Association Annual Conference in Chicago, Katherine Haugh and Deborah Grodzicki conducted a real time data mini-study to see which evaluation approaches evaluators at #eval15 use most frequently in their work. Basing their mini-study on Marvin C. Alkin's "Evaluation Roots: A Wider Perspective of Theorists’ Views and Influences," they asked evaluators to vote for the two approaches they used most often. This handout accompanied the mini-study and provides more information about the formation of the evaluation theory tree, its three branches, and definitions of the evaluation approaches associated with each branch.

Putting Data in Context: Timelining for Evaluators

Creating a timeline is a method for picturing or seeing events as they take place over time. By documenting major occurrences in chronological order, evaluators are able to identify patterns, themes, or trends that they may not have seen otherwise. A timeline allows evaluators to “zoom out” and look at the broader landscape, so that they are better positioned to think through and understand the context in which events occur. Having a timeline is especially useful for complex, multi-year evaluation projects with several threads of evaluation, where documenting the process is just as important as measuring the outcome itself. Creating a timeline has three key components: planning, populating, and revising. This presentation shows how to incorporate a timeline into a report, how to use a timeline to track progress internally, and how to utilize data visualization principles to create a visual timeline.
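As a rough illustration of the "populating" step, a timeline can be kept as a list of dated events and re-sorted whenever new events are added (the "revising" step). This minimal Python sketch assumes each event carries a date, the evaluation thread it belongs to, and a short description; the `Event` type, `build_timeline`, `render`, and the sample events are hypothetical, not part of the presentation.

```python
from dataclasses import dataclass
from datetime import date
from itertools import groupby
from typing import Dict, List


@dataclass(frozen=True)
class Event:
    """One major occurrence to place on the timeline (hypothetical structure)."""
    when: date
    thread: str        # which evaluation thread the event belongs to
    description: str


def build_timeline(events: List[Event]) -> Dict[int, List[Event]]:
    """Sort events chronologically, then group them by calendar year."""
    ordered = sorted(events, key=lambda e: (e.when, e.thread))
    # groupby only merges adjacent items, which is safe because `ordered` is sorted by date.
    return {year: list(group) for year, group in groupby(ordered, key=lambda e: e.when.year)}


def render(timeline: Dict[int, List[Event]]) -> str:
    """Produce a plain-text timeline with one heading per year."""
    lines: List[str] = []
    for year, events in timeline.items():
        lines.append(str(year))
        for e in events:
            lines.append(f"  {e.when.isoformat()}  [{e.thread}] {e.description}")
    return "\n".join(lines)


if __name__ == "__main__":
    # Hypothetical events from two evaluation threads, entered out of order.
    events = [
        Event(date(2015, 3, 1), "policy", "Bill introduced"),
        Event(date(2014, 6, 1), "coalition", "Coalition formed"),
        Event(date(2015, 1, 15), "coalition", "First joint campaign"),
    ]
    print(render(build_timeline(events)))
```

Tagging each event with a thread keeps several evaluation strands on one chronology, which is where the "zoom out" view described above tends to reveal patterns.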

Highlights of this presentation are also available in our handout titled "Putting Data in Context: Timelining for Evaluators (HANDOUT)".

[Link: http://www.slideshare.net/InnoNet_Eval/putting-data-in-context-timelining-for-evaluators-handout ]

Data Placemats: Construction and Practical Design Tips

Increasing stakeholder involvement throughout the evaluation lifecycle not only enhances stakeholder buy-in to the final evaluation results but also ensures that the evaluator considers multiple viewpoints and can provide a more comprehensive picture of a program or initiative. Data placemats, a dataviz technique to improve stakeholder understanding of data, can be used to communicate preliminary evaluation results during the analysis phase of the evaluation lifecycle. When done correctly, they offer stakeholders an opportunity to form their own judgments about the data and weigh in before the final report. In this session, the presenter will review the concept of data placemats, focusing specifically on the nuts and bolts of constructing one.

Real Time Evaluation: Tips, Tools, and Tricks of the Trade

How can an evaluator meaningfully convey findings to stakeholders based on data collected that same day? How can real time evaluation really be done in real time? This Ignite talk is based on Innovation Network’s experiences with facilitating real time evaluation in health policy settings, and will introduce AEA participants to three tools that can be part of any evaluator’s real time evaluation toolbox: surveys, H-forms, and timelines. Yuqi Wang from Innovation Network will provide an overview of each tool, show how these tools can aid data collection, analysis, and communication of findings in real time, and share lessons learned from Innovation Network’s experiences with these three tools during the evaluation process.

Real Time Survey_Long Method

Collecting and analyzing data in real time doesn't have to be as stressful or hard as it sounds, especially if you want to collect real time data using surveys. There is a short way and a long way to collect real time survey data. The short way is to use software that can collect and analyze survey data when embedded in PowerPoint presentations or webinars. The long way is to collect data on hard-copy surveys and analyze it in Excel. This document shows you, step by step, how to collect and analyze survey data the long way.
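The handout does its analysis in Excel. As a swapped-in alternative, the same tallying can be sketched in Python once the hard-copy responses have been typed in, one dictionary per completed survey. The function names, the question keys, and the text bar chart are illustrative assumptions, not the handout's method.

```python
from collections import Counter
from typing import Dict, List


def tally(responses: List[Dict[str, str]], question: str) -> Dict[str, int]:
    """Count how many respondents chose each answer to one question; blank or missing answers are skipped."""
    return dict(Counter(r[question] for r in responses if r.get(question)))


def percentages(counts: Dict[str, int]) -> Dict[str, float]:
    """Convert answer counts into percentages of those who answered, rounded to one decimal."""
    total = sum(counts.values())
    if total == 0:
        return {}
    return {answer: round(100 * n / total, 1) for answer, n in counts.items()}


def text_bars(pcts: Dict[str, float], width: int = 20) -> str:
    """Draw one bar of '#' per answer, scaled so that 100% fills `width` characters."""
    return "\n".join(
        f"{answer:<10} {'#' * round(pct / 100 * width)} {pct}%"
        for answer, pct in pcts.items()
    )


if __name__ == "__main__":
    # Hypothetical responses transcribed from paper surveys; "Q1" is an invented question key.
    responses = [{"Q1": "Agree"}, {"Q1": "Agree"}, {"Q1": "Disagree"}, {"Q1": ""}]
    print(text_bars(percentages(tally(responses, "Q1"))))
```

Skipping blank answers keeps the percentages based only on people who responded, which matches how a manual tally sheet is usually totaled.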

Make Your Data Count: New, Visual Approaches to Evaluation Reporting

Charts and graphs built on dataviz principles are transforming evaluation reporting and increasing the evaluator's communication power. At YNPNdc's 2015 Annual Conference in Washington DC, Johanna Morariu and Katherine Haugh will provide principles and case studies of new, visual approaches to evaluation reporting. The session will provide examples, such as incorporating a wealth of visuals in text reports, using large format hand-outs to emphasize findings, and designing slide reports.

Success and Failure in the Evaluation Process

What do the terms “success” and “failure” really mean in the philanthropic world? Funders have taken different approaches to learning from initiatives that haven’t gone quite as they had hoped. Some funders want to learn from their mistakes, some provide technical assistance to lagging grantees, and some want to focus their light on “bright spots” and grantee successes. In this session, Kat Athanasiades from Innovation Network will discuss how and when her organization uses grant reports in evaluation; how and why getting good evaluation data from grant reports is difficult; and potential ways to make it easier for grantees to report on failure in a way that could be useful to evaluators.

Session participants will:

• Know how funders can embed “failure reporting” into grant reports in ways that are useful to evaluators.

• Learn ways a foundation can combat some of the "structural" impediments, e.g., trust and communication, that may prevent proper reporting on failure.

• Gain ideas from fellow participants on how to understand and appreciate grantmaking "failures" as well as successes.

Evaluating Adaptive Capacity and Skills + Resources

Since its inception in 2000, the Missouri Foundation for Health (MFH) has invested in policy change in Missouri. Recognizing a dearth of organizations with the capacity to advocate for Missouri health consumers, MFH broadened its grantmaking vision to building a field of consumer health advocates. Using the Framework for Evaluating Advocacy Field Building, Innovation Network and the Center for Evaluation Innovation are currently gauging how MFH shapes this field through its grantmaking. This presentation will focus on evaluating two dimensions of the Framework: Adaptive Capacity and Skills & Resources. A discussion of data collection activities will give the audience ideas about how to evaluate these dimensions, lessons learned from the process, and what the evaluation has revealed about Skills & Resources and Adaptive Capacity in a field.

#Eval14 - Make Your Data Count: New, Visual Approaches to Reporting

5 elements of visual reporting and 5 lessons learned

Data Placemats (handout)

Handout for the session, "Data Placemats: A DataViz Technique to Improve Stakeholder Understanding of Evaluation Results" presented at the American Evaluation Association's 2014 conference.

#JAGUnity2014: Innovations in Evaluating Social Movements

Today, social movement organizers are grappling with big questions: What is the long-term impact we are hoping to make? How can we measure the progress we've made thus far? How can we learn from past practice?

On June 7, 2014, Innovation Network's William Fenn spoke on a panel with Deepak Pateriya and Sian O'Faolain of the Center for Community Change and Hillary Klein of Make the Road New York to try to answer some of these questions. The presentation highlighted specific ways in which social movement organizers can evaluate the impact of their work.

Evaluation Essentials for Nonprofits: Terms, Tips, and Trends

These slides are an excerpt from an evaluation session for the Young Nonprofit Professionals Network (YNPN), which was held in June 2014 in Washington, DC.

#JAGUnity2014: DataViz for Philanthropists! Tips, Tools, and How-Tos for Comm...

Presentation by Innovation Network's Johanna Morariu and Ann K. Emery at the Joint Affinity Groups Unity Conference, held June 6, 2014 in Washington, DC.

#YNPNdc14: DataViz! Tips, Tools, and How-tos for Visualizing Your Data (Slides)

Innovation Network's Johanna Morariu and Ann K. Emery presented at the Young Nonprofit Professionals Network 2014 Annual Leadership Conference, which was held on May 9, 2014 in Washington, DC.

#YNPNdc14: DataViz! Tips, Tools, and How-tos for Visualizing Your Data (Handout)

Innovation Network's Johanna Morariu and Ann K. Emery presented at the Young Nonprofit Professionals Network 2014 Annual Leadership Conference, which was held on May 9, 2014 in Washington, DC.

Assessing the Capacity of Community Coalitions to Advocate for Change (Presen...

Research has shown that high-capacity coalitions are more successful in effecting community change. But what does “high capacity” mean? Evaluators have developed tools to provide an answer, but documentation is scarce regarding how they are implemented, how the results are used, and whether they predict coalition success in collaborative community change efforts. This breakfast talk will focus on a coalition assessment tool designed by Innovation Network to assess changes in coalition capacity over time.

Developed for a health promotion initiative of the Kansas Health Foundation, this tool is designed to assess coalition progress in seven key areas across twelve different community coalitions, over the course of a four-year initiative. The Innovation Network team will share lessons learned from the first year of the initiative about developing and deploying the assessment tool, as well as what these tools can—and can’t—tell us about a coalition’s capacity to conduct community change work. They will also present some data visualization techniques for effectively communicating results back to coalitions.

#14ntcdataviz: DataViz! Tips, Tools, and How-tos for Visualizing Your Data [R...

Presentation by Ann K. Emery, Johanna Morariu, and Andrew Means at the 2014 Nonprofit Technology Conference in Washington, DC.

#14ntcdataviz: DataViz! Tips, Tools, and How-tos for Visualizing Your Data

Presentation by Ann K. Emery, Johanna Morariu, and Andrew Means at the 2014 Nonprofit Technology Conference in Washington, DC.



Dataviz Products throughout the Evaluation Lifecycle

- 1. Products throughout the Evaluation Lifecycle

Articulating Theory/Strategy

Theory of Change

- Purpose: To create a visual that articulates the linkages between activities and hoped-for outcomes for arriving at social impact.
- How Used: To help align clients and stakeholders around expectations for change, particularly the realistic timing and order of outcomes.
- Considerations:
  - Can be developed or revisited at any point during the lifecycle, but is particularly helpful during strategy development or mid-course review/correction.
  - Outcome maps allow for complexity that the logic model format can limit.
  - It is important to fit the level of detail and visualization to the client’s purpose and needs (e.g., not too far in the weeds).

Social Network Analysis (SNA)

- Purpose: To provide a visual of how different individuals or organizations within a field are connected. It can be used to quickly understand the characteristics of the field and to identify central “hub” individuals or organizations.
- How Used: To illustrate the connections and relationships between players within a field.
- Considerations:
  - Can be used in conjunction with other frameworks (e.g., the Center for Evaluation Innovation’s Advocacy Strategy Framework) to identify skills gaps and potential areas for targeted capacity building.
  - Can be used by funders to inform strategic grantmaking decisions.

Facilitated Processes for Sense-Making

Data Placemat

- Purpose: To engage stakeholders in interpreting the data.
- How Used: By the evaluator to facilitate a debrief of evaluation data with the client and/or stakeholders.
- Considerations:
  - Intended to be an interim product that includes stakeholders in sense-making to build ownership.
  - Context, interpretations, and implications generated by debrief participants should be used to inform final reporting.
  - Particularly useful when there is a large amount of quantitative data that needs interpretation.
  - Placemats should include only graphs, charts, or other visualizations, not narrative or evaluator interpretations.

Strategic Debrief Deck

- Purpose: To facilitate a debrief/discussion of key findings and implications.
- How Used: With a client and/or stakeholders to discuss implications and to think critically about, revise, and improve the strategy being evaluated.
- Considerations:
  - Highlights a prioritized subset of evaluation findings that would benefit from discussion.
  - Can occur at the midpoint or at the end of a strategy to lift up the decision points most critical for the current stage of work.
  - The evaluator plays an expert role vis-à-vis the data and facilitates the discussion about what the data and findings mean for the work moving forward.
  - The discussion can be used to inform a final deliverable.
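The Social Network Analysis product relies on spotting central “hub” individuals or organizations. The slides do not say which metric defines a hub. The sketch below assumes degree centrality, one common choice: the share of other actors in the field that each actor is directly connected to. The organization names and connections are hypothetical. Dedicated libraries such as NetworkX offer this measure (`degree_centrality`) along with many others.

```python
from collections import defaultdict


def degree_centrality(edges):
    """Normalized degree centrality for an undirected list of (a, b) ties."""
    neighbors = defaultdict(set)
    for a, b in edges:
        if a == b:
            continue  # ignore self-loops
        neighbors[a].add(b)  # sets collapse duplicate ties
        neighbors[b].add(a)
    n = len(neighbors)
    if n < 2:
        return {node: 0.0 for node in neighbors}
    # Fraction of the other n - 1 actors each actor is tied to.
    return {node: len(adj) / (n - 1) for node, adj in neighbors.items()}


def find_hubs(edges, top_k=1):
    """Return the top_k most-connected actors (ties broken alphabetically)."""
    scores = degree_centrality(edges)
    ranked = sorted(scores.items(), key=lambda item: (-item[1], item[0]))
    return [node for node, _ in ranked[:top_k]]


# Hypothetical field: which organizations report working together.
field_edges = [
    ("Coalition Hub", "Org A"),
    ("Coalition Hub", "Org B"),
    ("Coalition Hub", "Org C"),
    ("Org A", "Org B"),
    ("Org C", "Funder X"),
]

if __name__ == "__main__":
    for org, score in sorted(degree_centrality(field_edges).items(), key=lambda kv: -kv[1]):
        print(f"{org}: {score:.2f}")
    print("Hub:", find_hubs(field_edges))
```

In this made-up field, "Coalition Hub" is tied to three of the four other actors (0.75) and would be flagged as the hub. Degree is only a first pass. Other measures, such as betweenness, can surface brokers that connect otherwise separate clusters.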

- 2. H-Forms

- Purpose: To capture the degree to which stakeholders agree or disagree with a selected concept, and why. The process of publicly rating each concept promotes discussion, identifies areas of consensus and divergence among stakeholders, and allows for the interpretation of information.
- How Used: As a facilitative tool to gather and document stakeholder opinions and capture in-the-moment reflections.
- Considerations:
  - The evaluator plays a facilitative role during this process.
  - H-Forms can be used to gather perspectives on multiple concepts simultaneously; the evaluator creates an H-Form display for each concept being rated.
  - H-Forms should include a rating scale (preferably a 5-point scale) with clearly labeled anchor points.
  - Dot stickers can be used to enable stakeholders to rate each concept.

Communication and Reporting to Stakeholders

Visual Report Deck

- Purpose: To share findings from an evaluation, using data visualization to enhance understanding and promote evaluation use.
- How Used: As a communication tool to share evaluation findings.
- Considerations:
  - Visual reports take time to create.
  - The evaluator needs to follow basic dataviz guidelines when creating a visual report and to ensure that the report is well-organized.
  - Visual reports need to work as a stand-alone product.

Visual Executive Summary

- Purpose: To complement a traditional evaluation report.
- How Used: As a communication tool to share evaluation findings with audiences that have a limited appetite for data or limited time.
- Considerations:
  - Data are highly synthesized; the summary should be no more than 1-2 pages.
  - The product may have a life of its own and should tell a story of the highest-priority data and findings.

Timeline

- Purpose: To chronologically document events related to an initiative or program, helping stakeholders identify patterns, themes, and trends that may otherwise be difficult to see, and to promote a shared understanding of the contextual factors that may have contributed to or detracted from a desired outcome.
- How Used: As a communication tool to help stakeholders digest multiple levels of information across a specified timeline.
- Considerations:
  - It is important to identify the purpose, audience, and time horizon of the timeline at the beginning of the process.
  - The evaluator needs to be selective about the categories of information included in the timeline.
  - It is important to be purposeful in selecting the format and visualization style for the timeline.
  - Timelines are especially relevant when evaluating advocacy and policy change initiatives, where contextual elements play a role in shaping policy outcomes.
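The H-Form process uses dot-sticker ratings on a labeled 5-point scale to show where stakeholders converge or diverge on each concept. The minimal sketch below tallies those dots after a session. Several parts are assumptions, not part of the slides: the anchor labels, the use of population standard deviation as the measure of divergence, and the 1.0 threshold. The concepts and ratings are hypothetical. In practice, the written reasons captured on each side of the H-Form matter as much as the numbers.

```python
from collections import Counter
from statistics import mean, pstdev

# Hypothetical anchor labels for a 5-point scale (1 = lowest, 5 = highest).
ANCHORS = {
    1: "Strongly disagree",
    2: "Disagree",
    3: "Neutral",
    4: "Agree",
    5: "Strongly agree",
}


def summarize_concept(ratings, divergence_threshold=1.0):
    """Summarize one H-Form concept's dot-sticker ratings.

    divergence_threshold is a hypothetical cutoff on the population
    standard deviation; above it, the concept is flagged as divergent.
    """
    if not ratings:
        raise ValueError("no ratings recorded")
    invalid = [r for r in ratings if r not in ANCHORS]
    if invalid:
        raise ValueError(f"ratings must be on the 1-5 scale, got {invalid}")
    counts = Counter(ratings)
    spread = pstdev(ratings)
    return {
        # Dots per anchor point, including points nobody chose.
        "distribution": {ANCHORS[p]: counts.get(p, 0) for p in sorted(ANCHORS)},
        "mean": round(mean(ratings), 2),
        "spread": round(spread, 2),
        "pattern": "divergence" if spread > divergence_threshold else "consensus",
    }


# Hypothetical dots placed by stakeholders on two concepts.
sessions = {
    "Coalition has a shared policy agenda": [4, 5, 4, 4, 5, 4],
    "Funding is sufficient for the campaign": [1, 5, 2, 4, 1, 5],
}

if __name__ == "__main__":
    for concept, ratings in sessions.items():
        print(concept, summarize_concept(ratings))
```

The second concept has a middling mean of 3 but dots at both ends of the scale. That pattern is exactly the kind of divergence an H-Form discussion is meant to surface. The mean alone would hide it.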