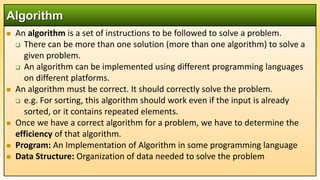

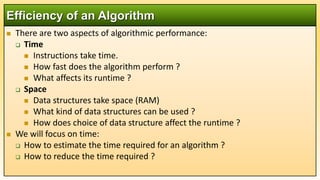

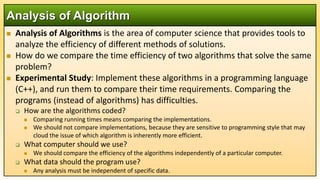

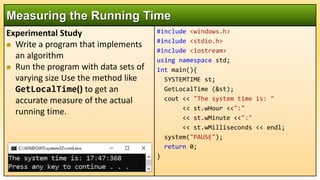

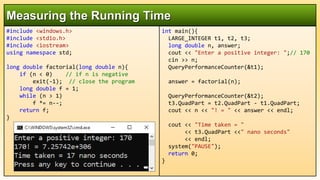

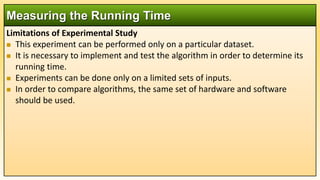

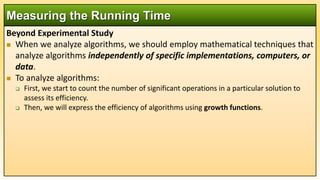

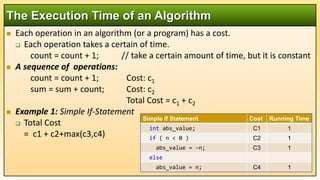

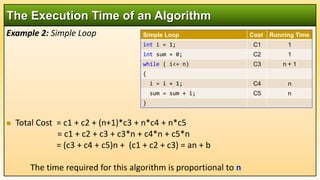

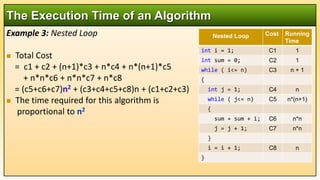

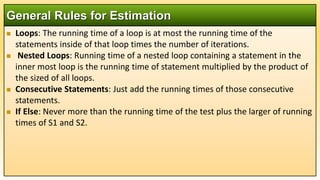

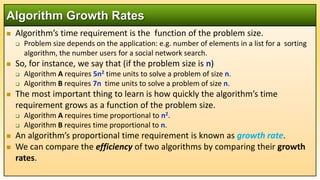

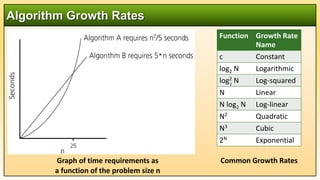

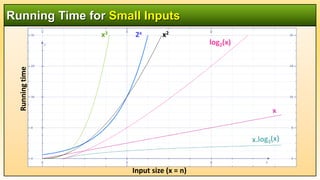

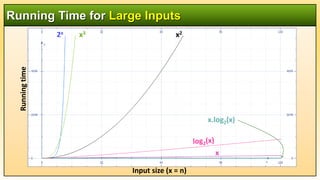

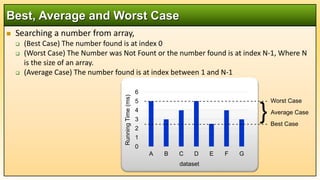

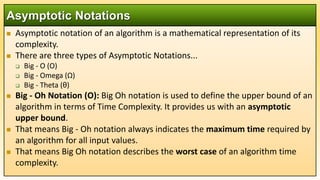

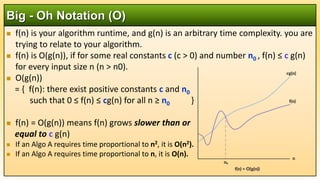

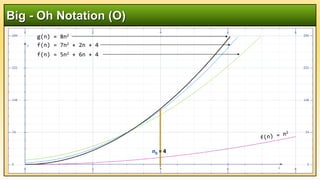

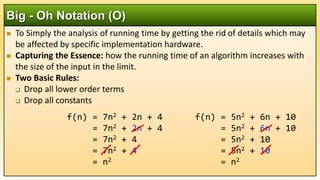

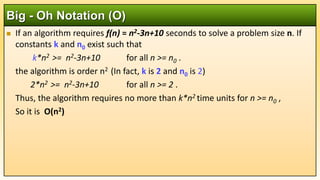

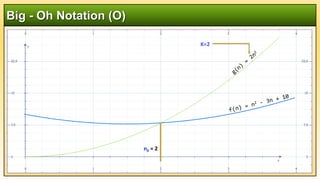

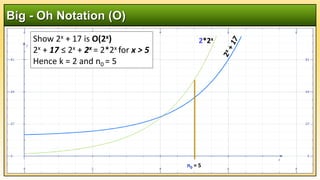

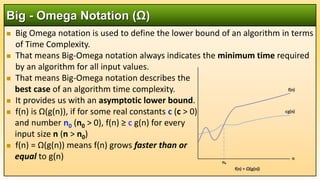

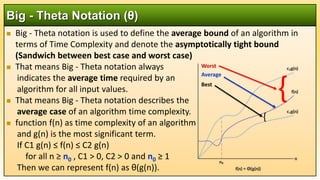

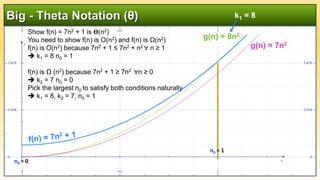

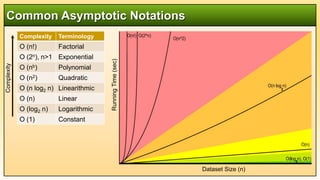

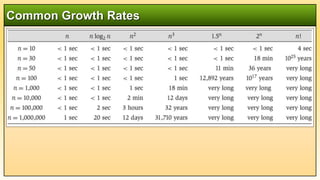

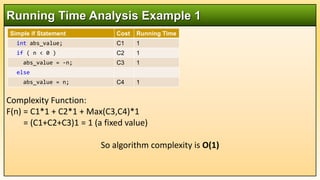

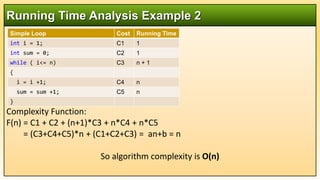

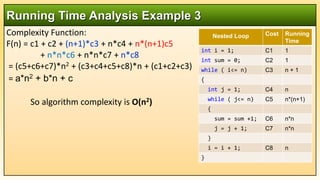

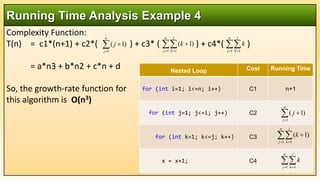

This lecture covers the analysis of algorithms, focusing on their efficiency and performance in terms of time and space complexity. It introduces key concepts: what makes an algorithm correct, data structures, growth rates, and asymptotic notation (Big O, Big Omega, and Big Theta). It emphasizes that both mathematical analysis and experimental studies are needed to evaluate and compare algorithms objectively.
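As a small illustration of how growth rates can be observed experimentally, the sketch below counts the steps taken by two search routines as the input size grows. Linear and binary search are chosen here only as familiar examples and are not taken from the slides; the function names (`linear_search_steps`, `binary_search_steps`, `growth_table`) are hypothetical. Counting steps rather than measuring wall-clock time keeps the comparison independent of hardware.

```python
def linear_search_steps(items, target):
    """Return the number of comparisons a left-to-right scan makes."""
    steps = 0
    for value in items:
        steps += 1
        if value == target:
            break
    return steps


def binary_search_steps(items, target):
    """Return the number of loop iterations binary search makes on sorted items."""
    steps = 0
    lo, hi = 0, len(items) - 1
    while lo <= hi:
        steps += 1
        mid = (lo + hi) // 2
        if items[mid] == target:
            break
        if items[mid] < target:
            lo = mid + 1
        else:
            hi = mid - 1
    return steps


def growth_table(sizes):
    """For each n, search for the last element of range(n) with both routines."""
    rows = []
    for n in sizes:
        items = list(range(n))  # already sorted
        target = n - 1          # worst case for the linear scan
        rows.append((n,
                     linear_search_steps(items, target),
                     binary_search_steps(items, target)))
    return rows


if __name__ == "__main__":
    print(f"{'n':>8} {'linear':>8} {'binary':>8}")
    for n, linear, binary in growth_table([10, 100, 1000, 10000]):
        print(f"{n:>8} {linear:>8} {binary:>8}")
```

In this setup the linear count grows in proportion to n, which is consistent with O(n), while the binary count grows roughly with log2(n), which is consistent with O(log n). A mathematical analysis would confirm these bounds for all inputs; the experiment only samples a few sizes.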