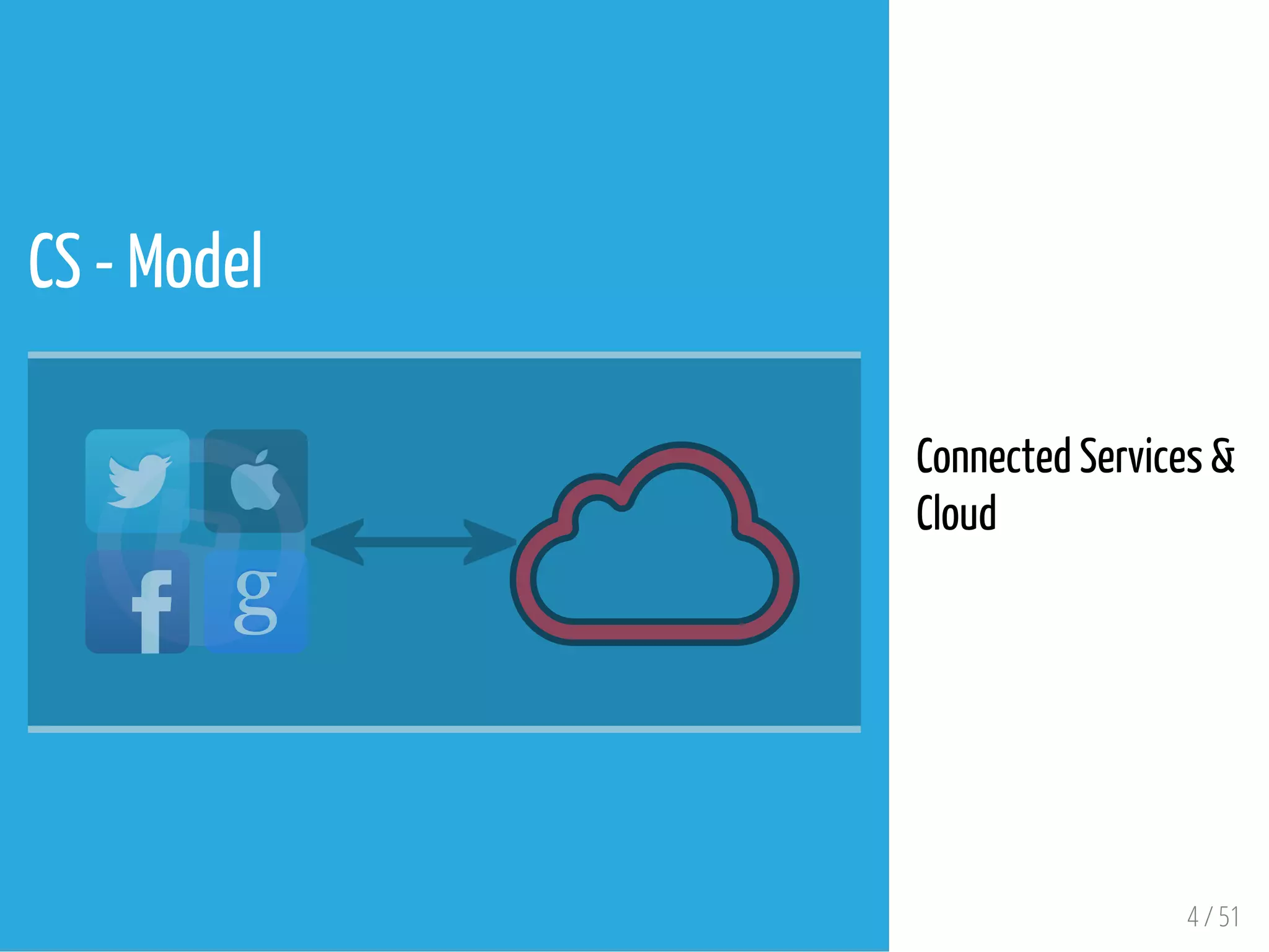

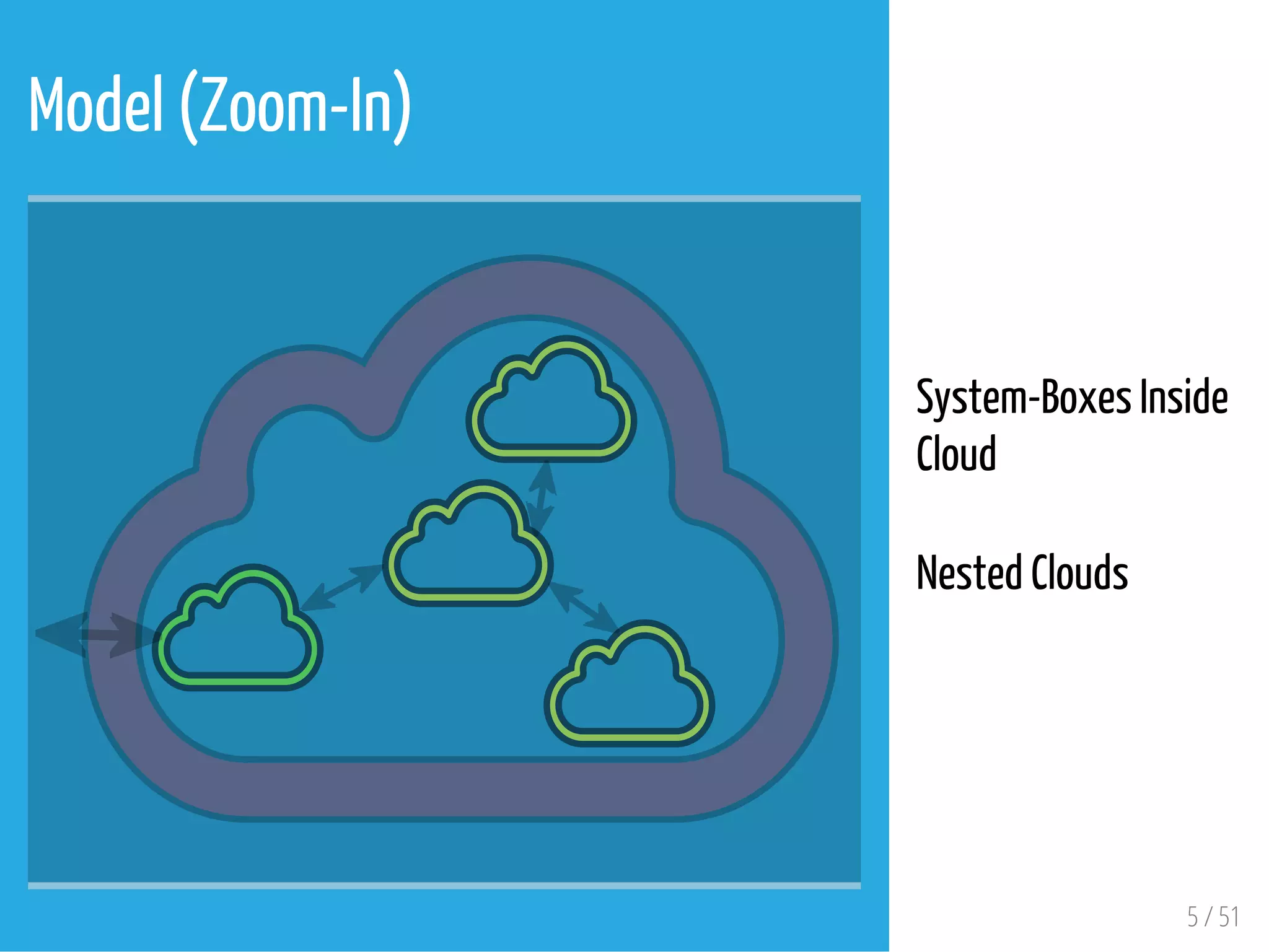

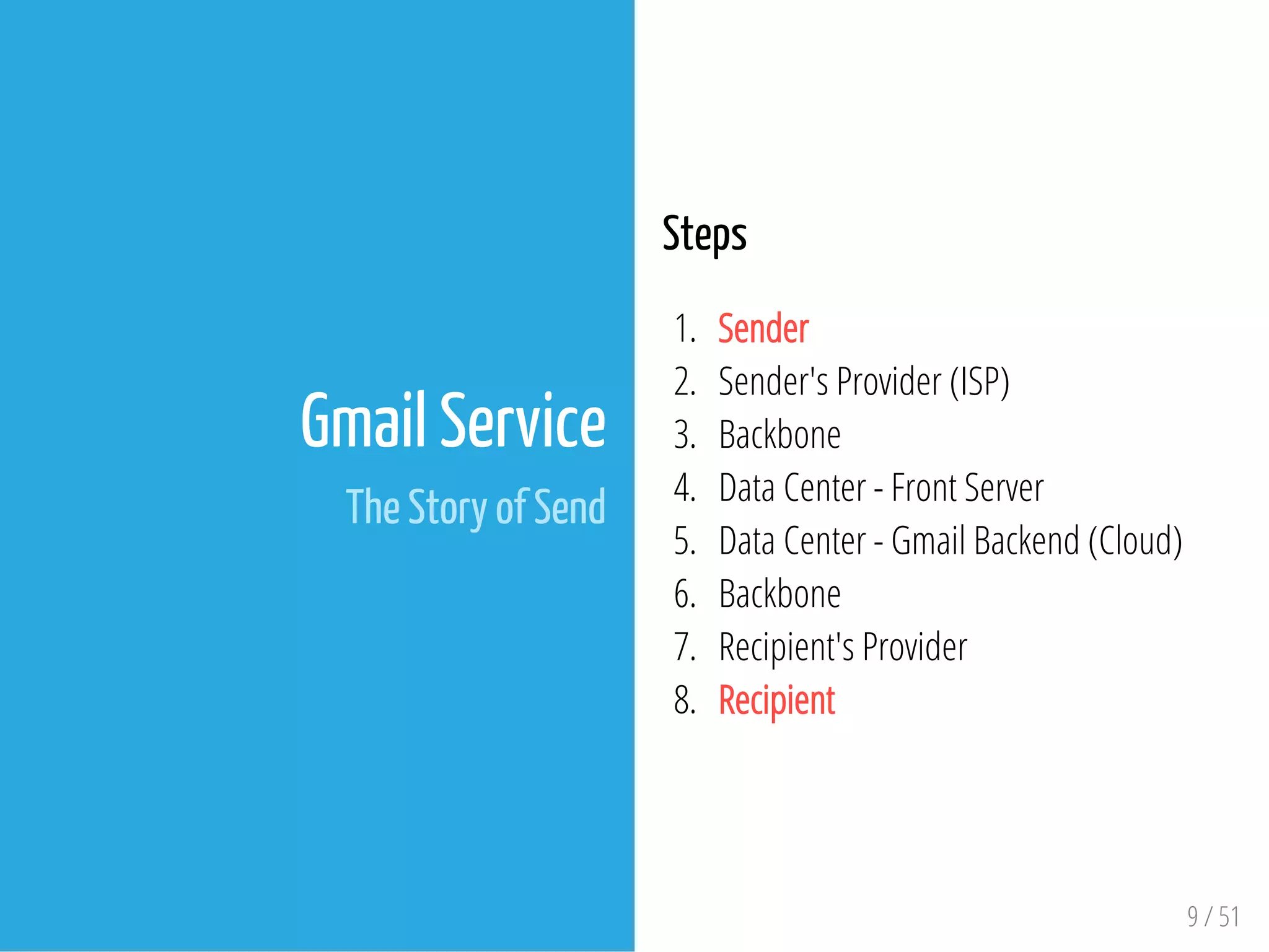

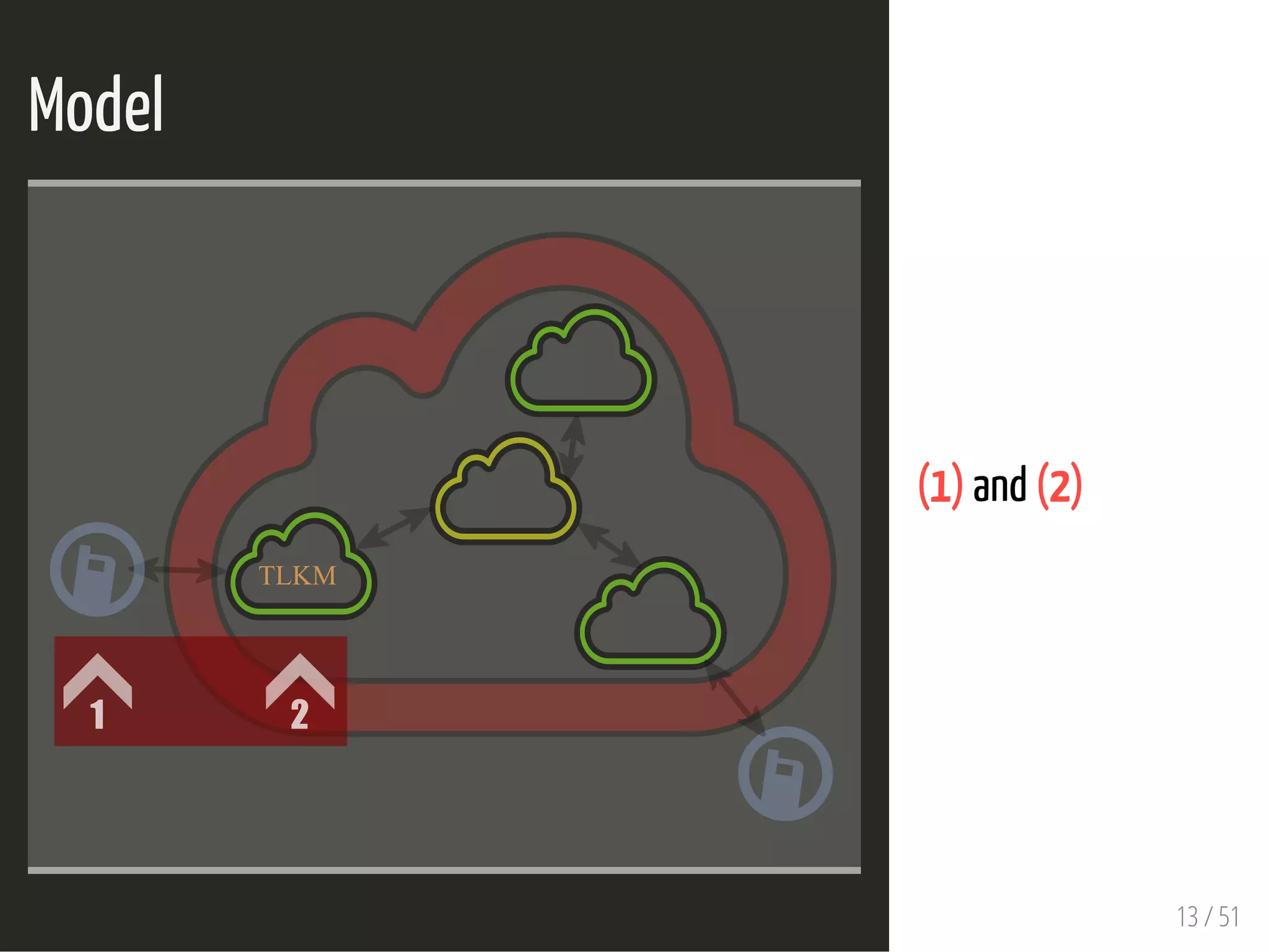

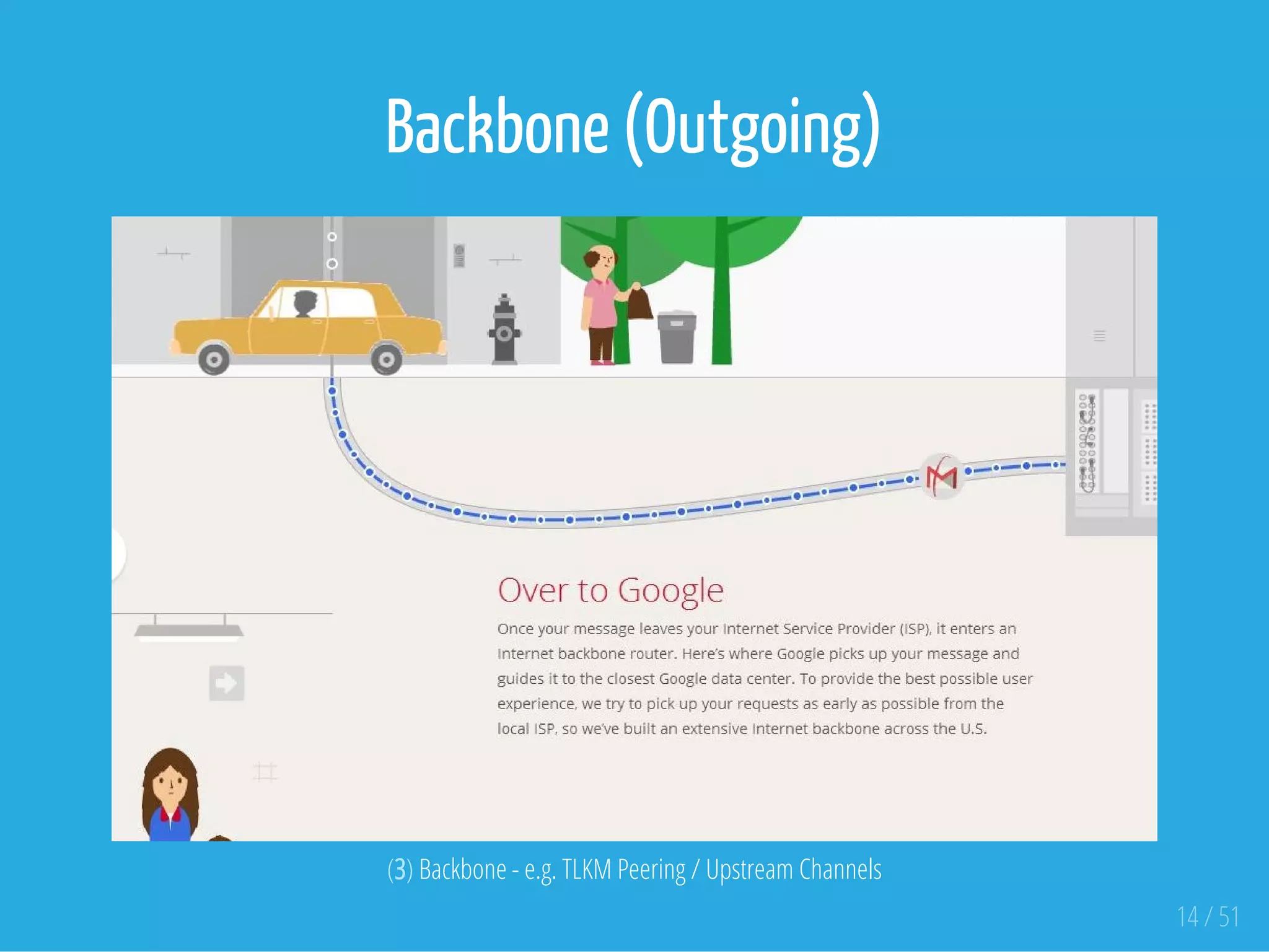

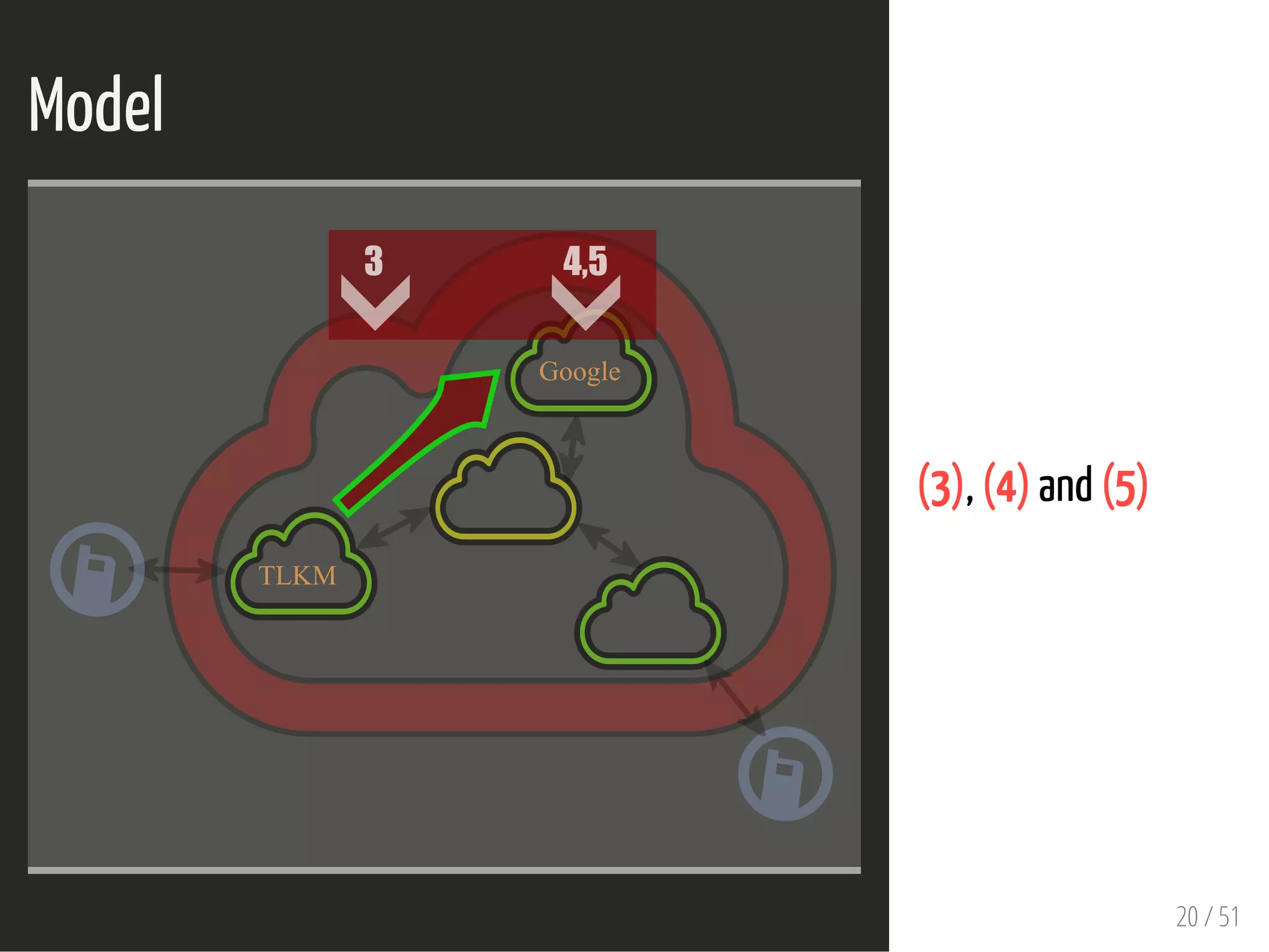

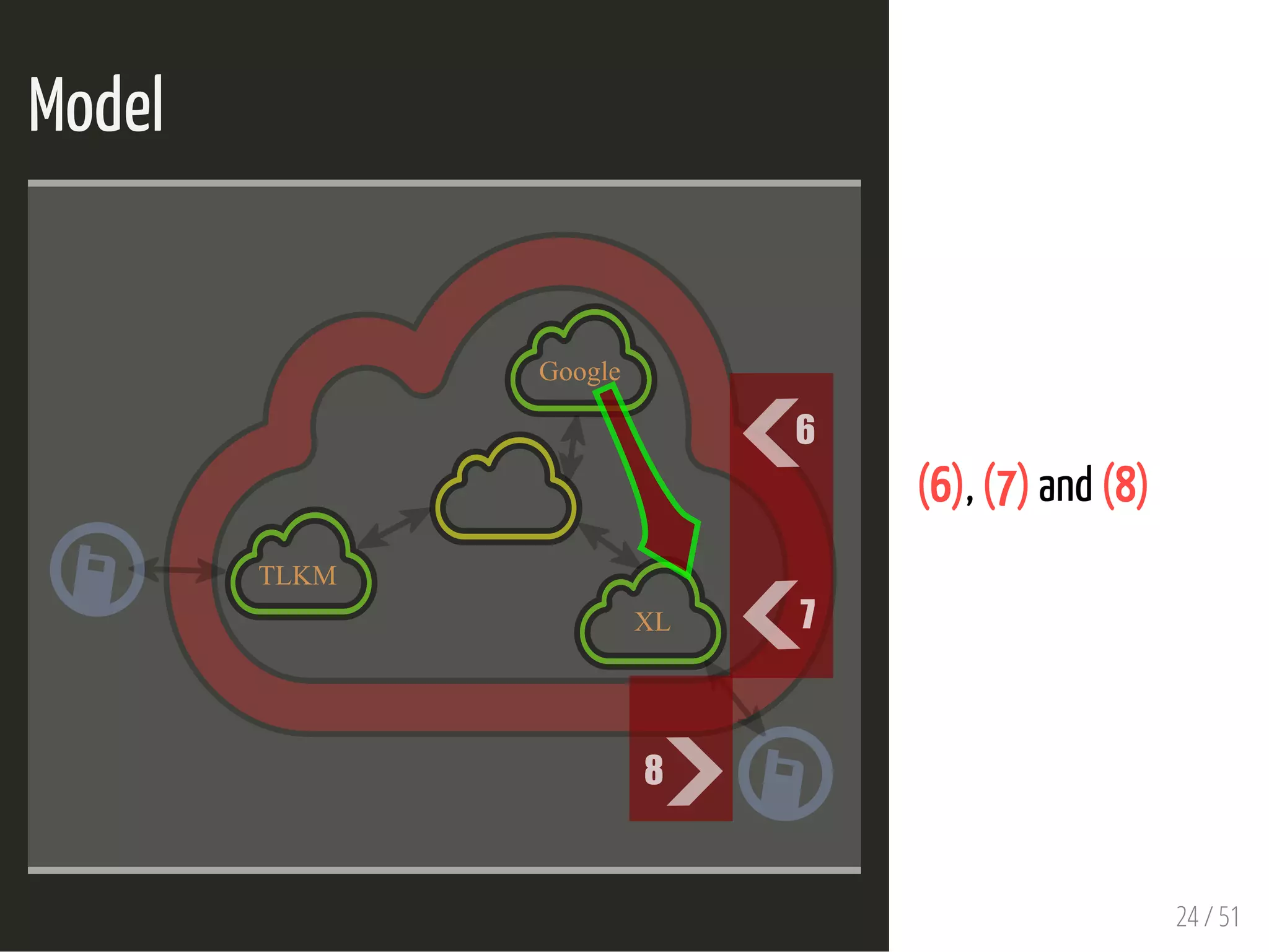

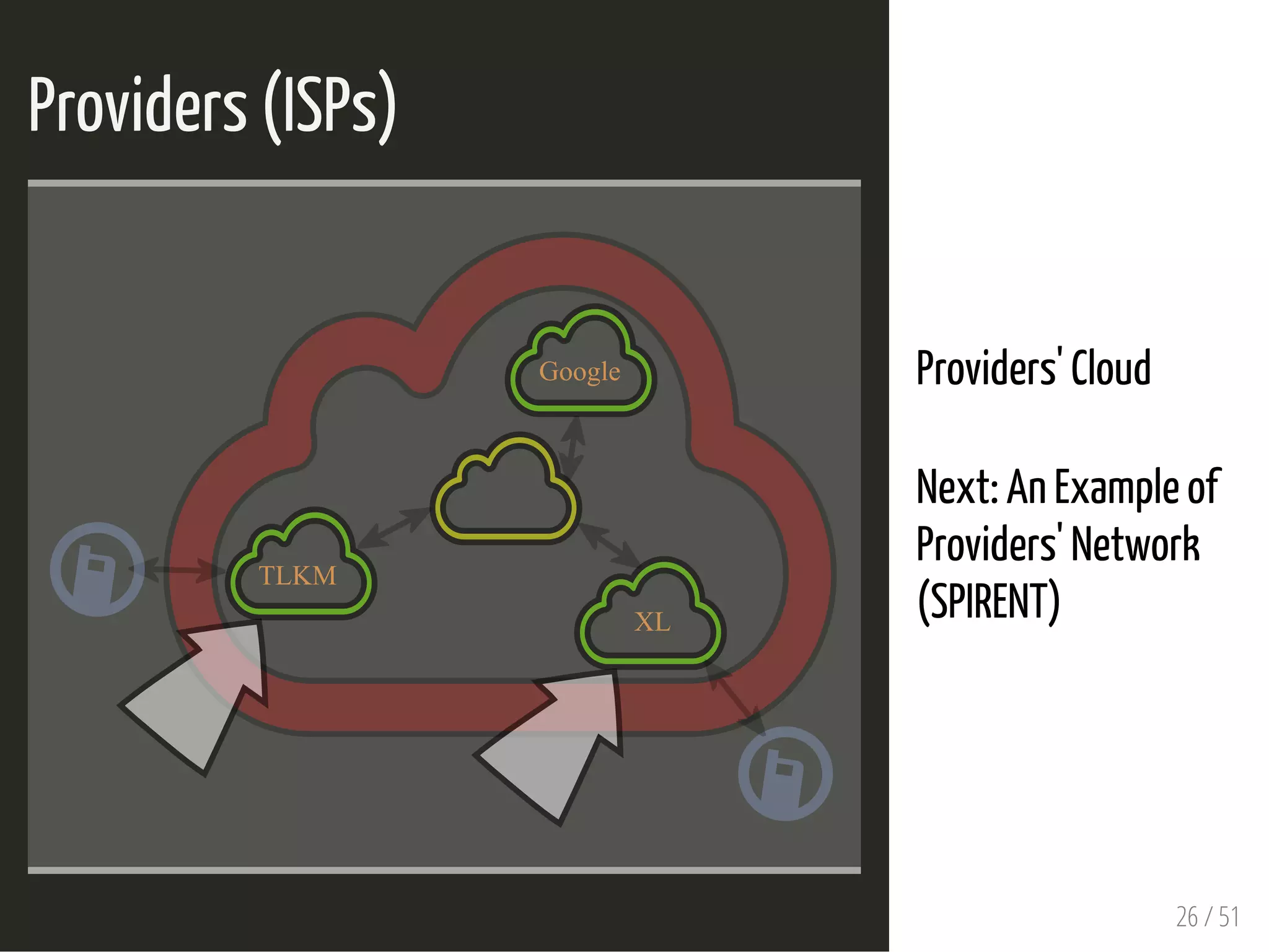

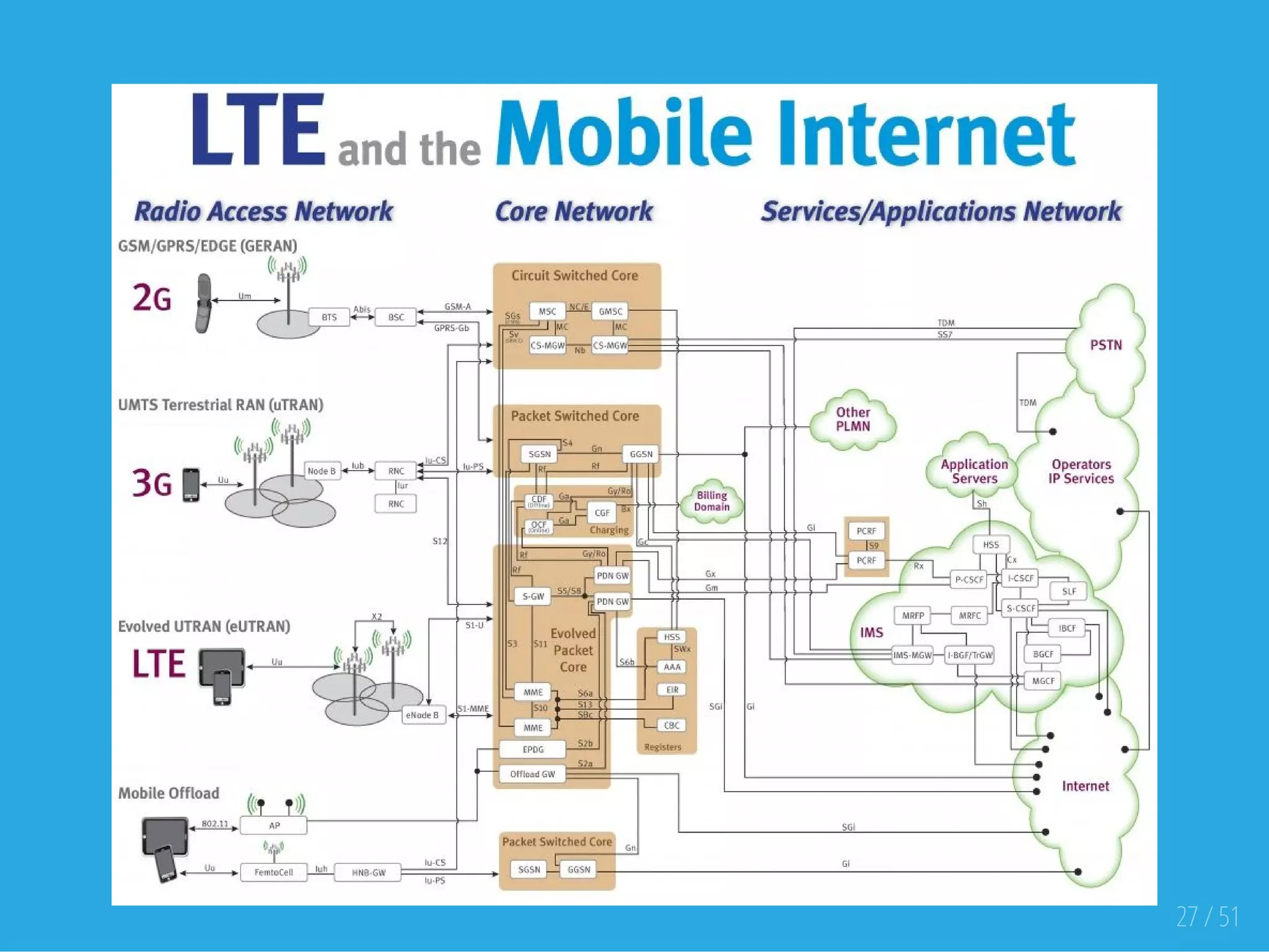

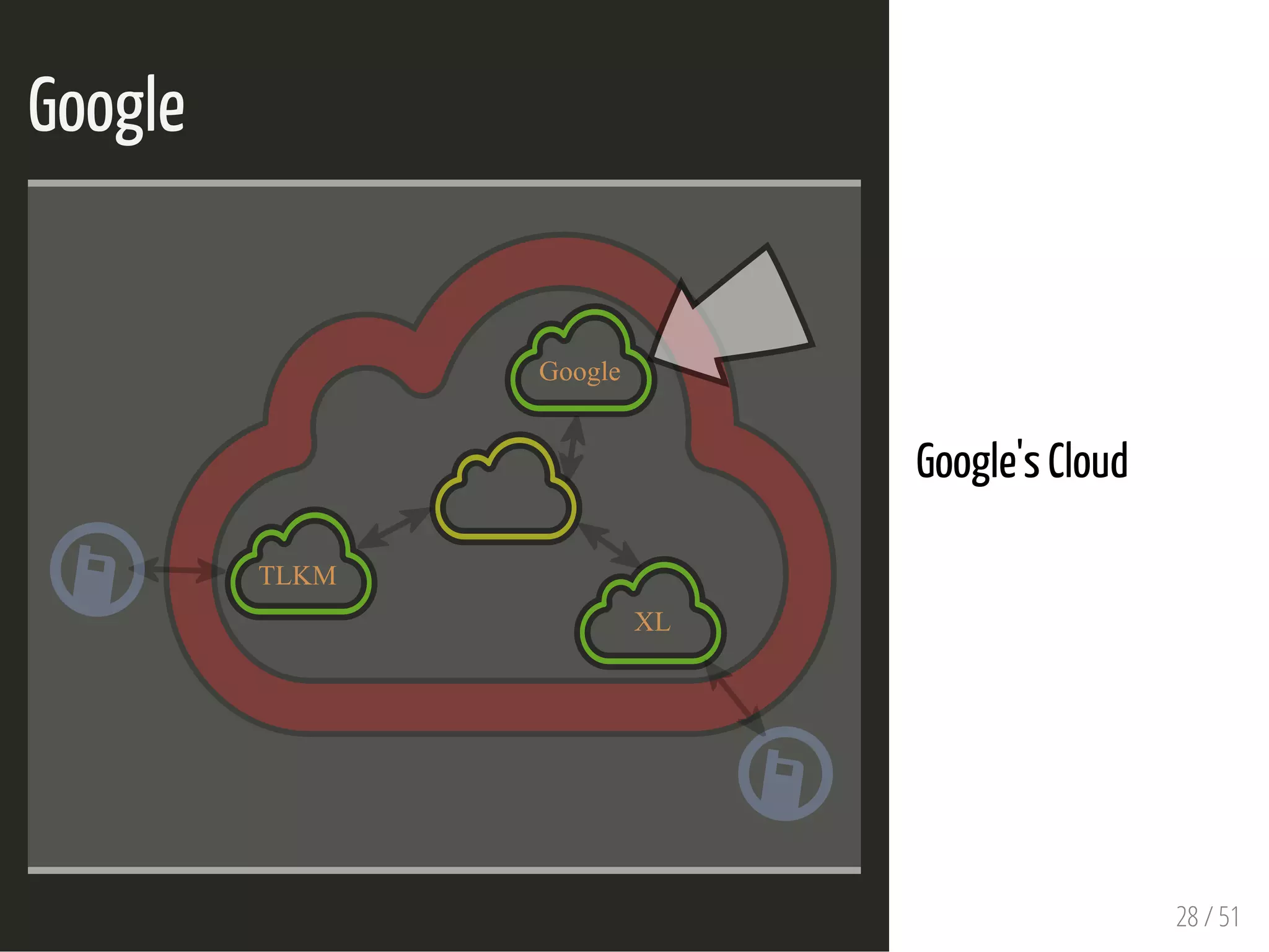

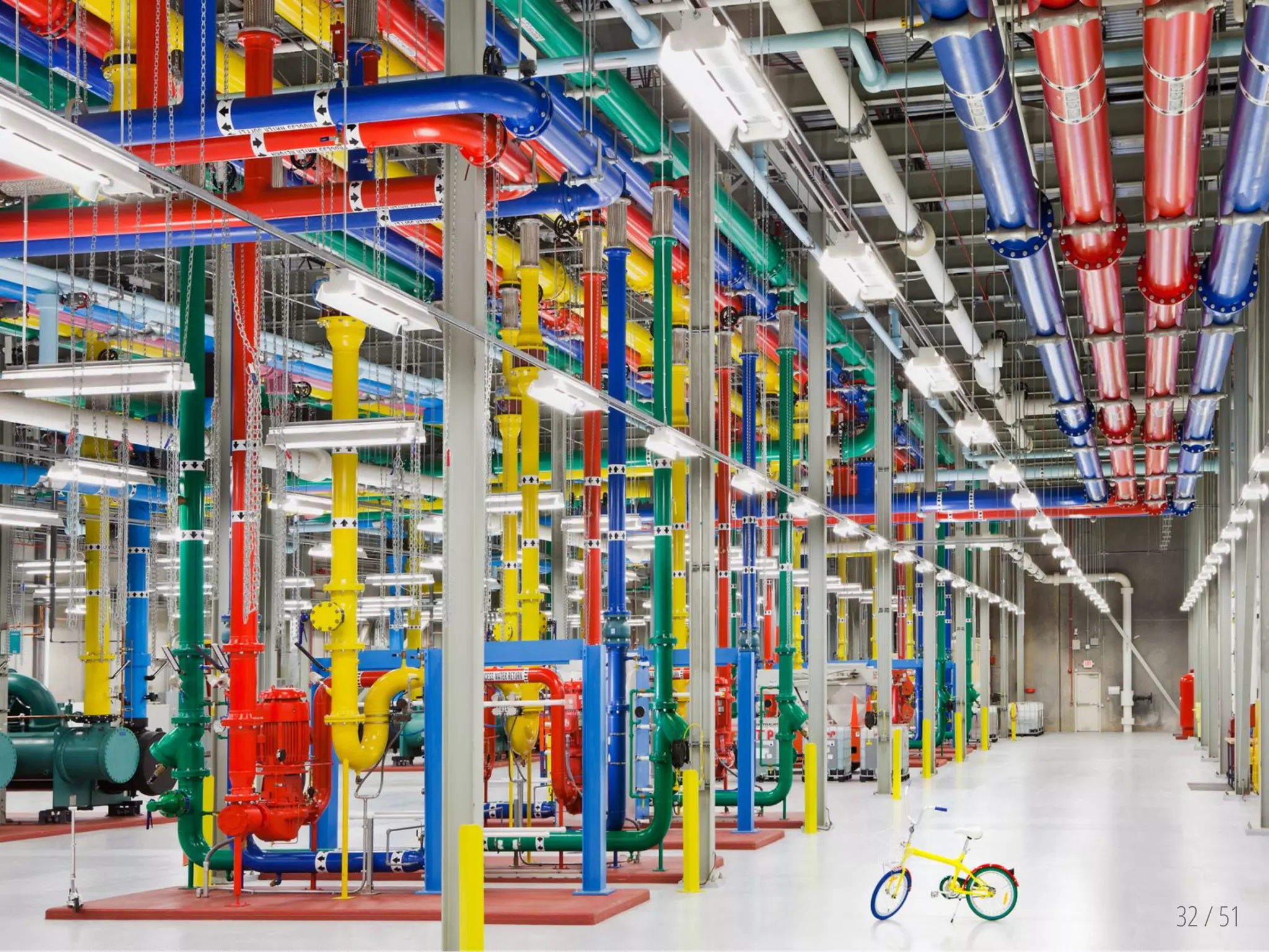

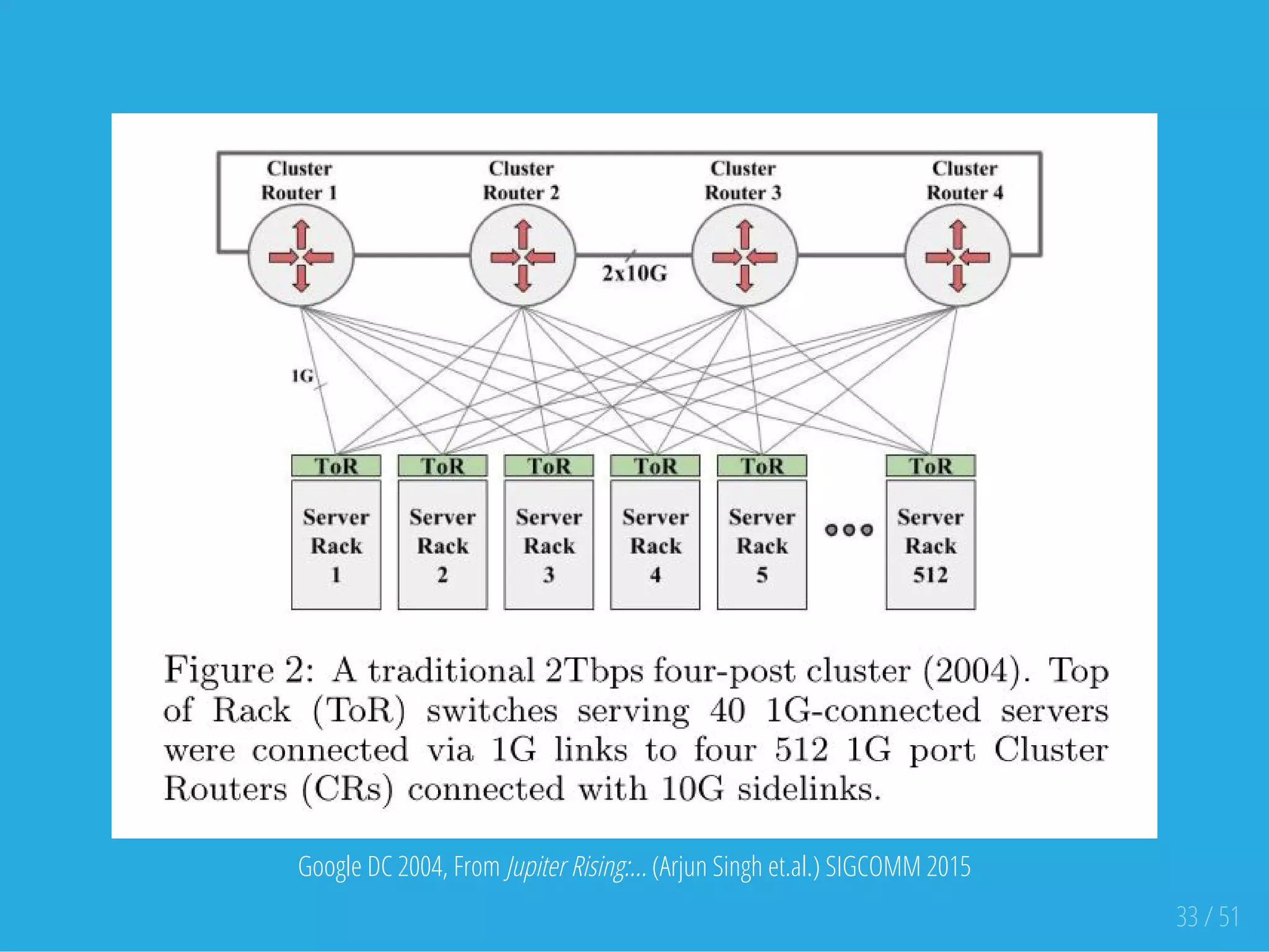

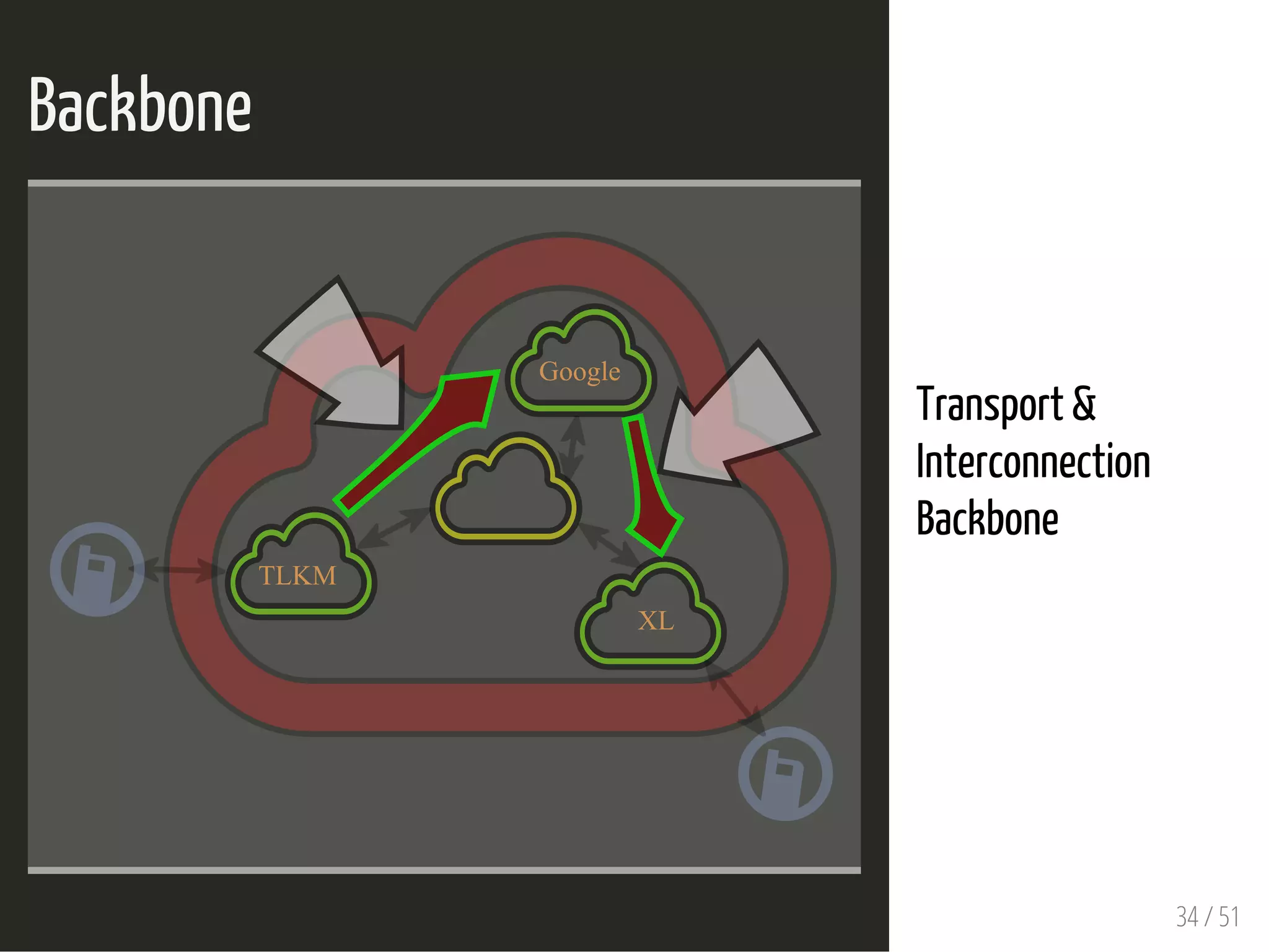

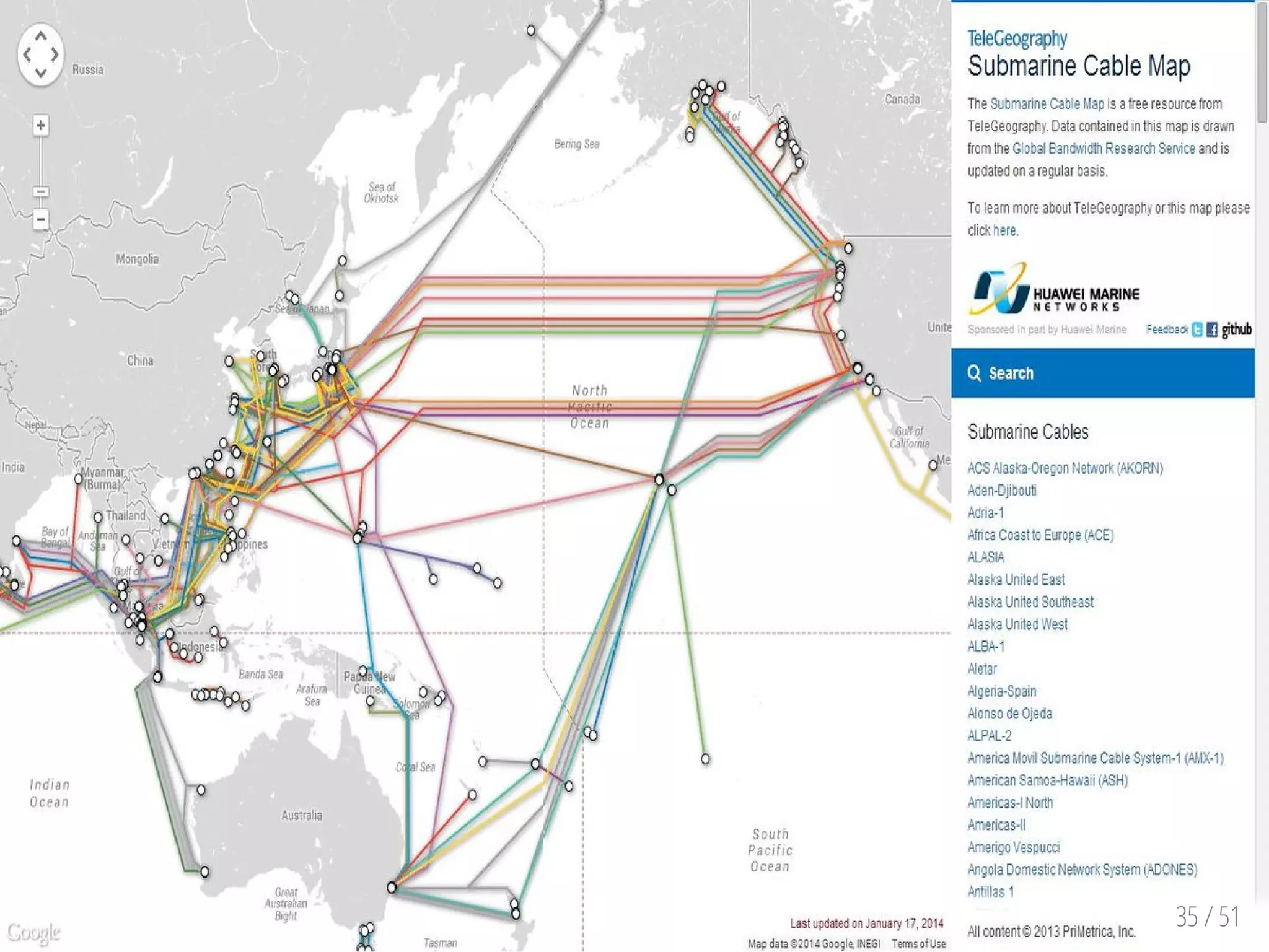

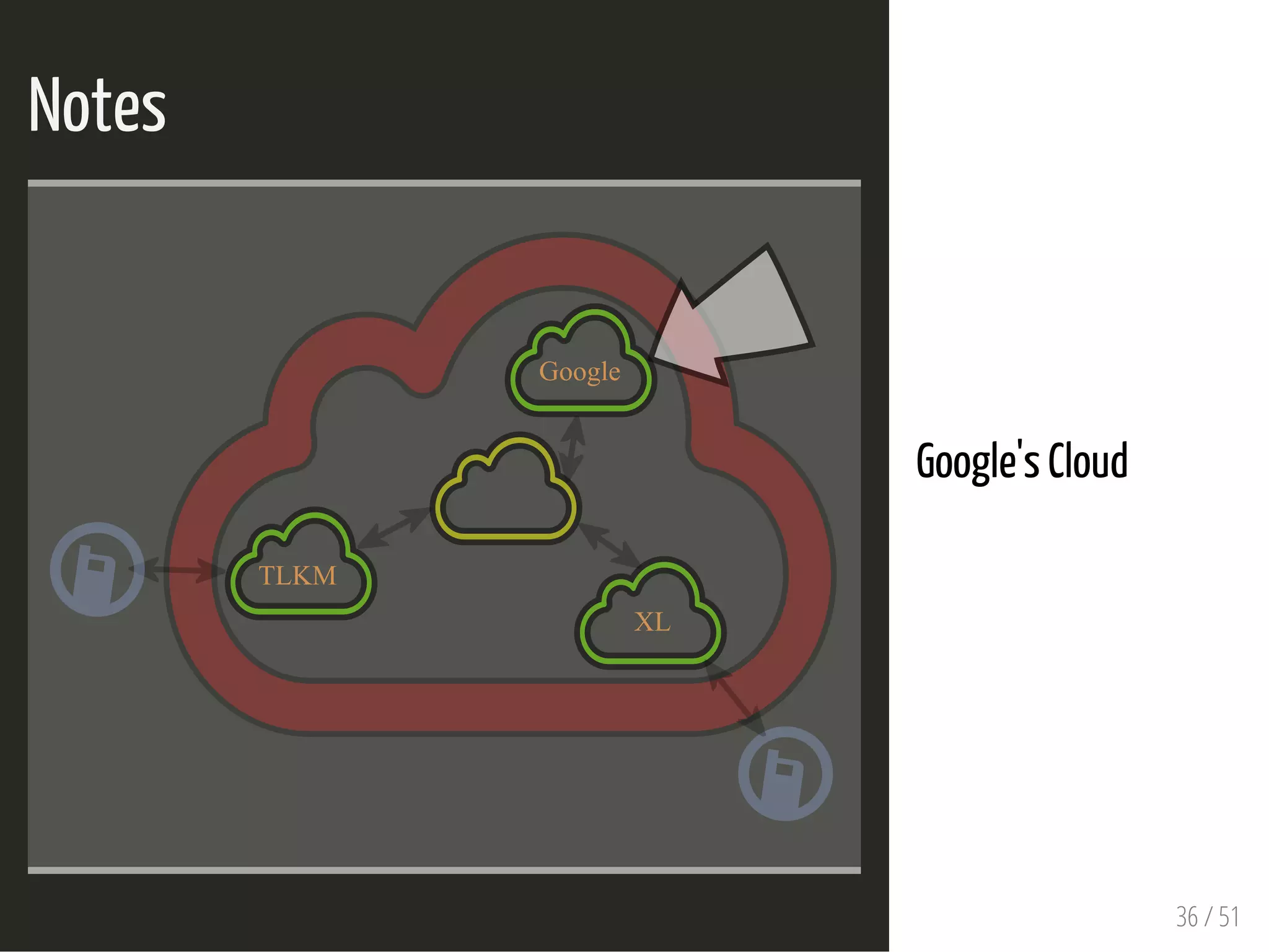

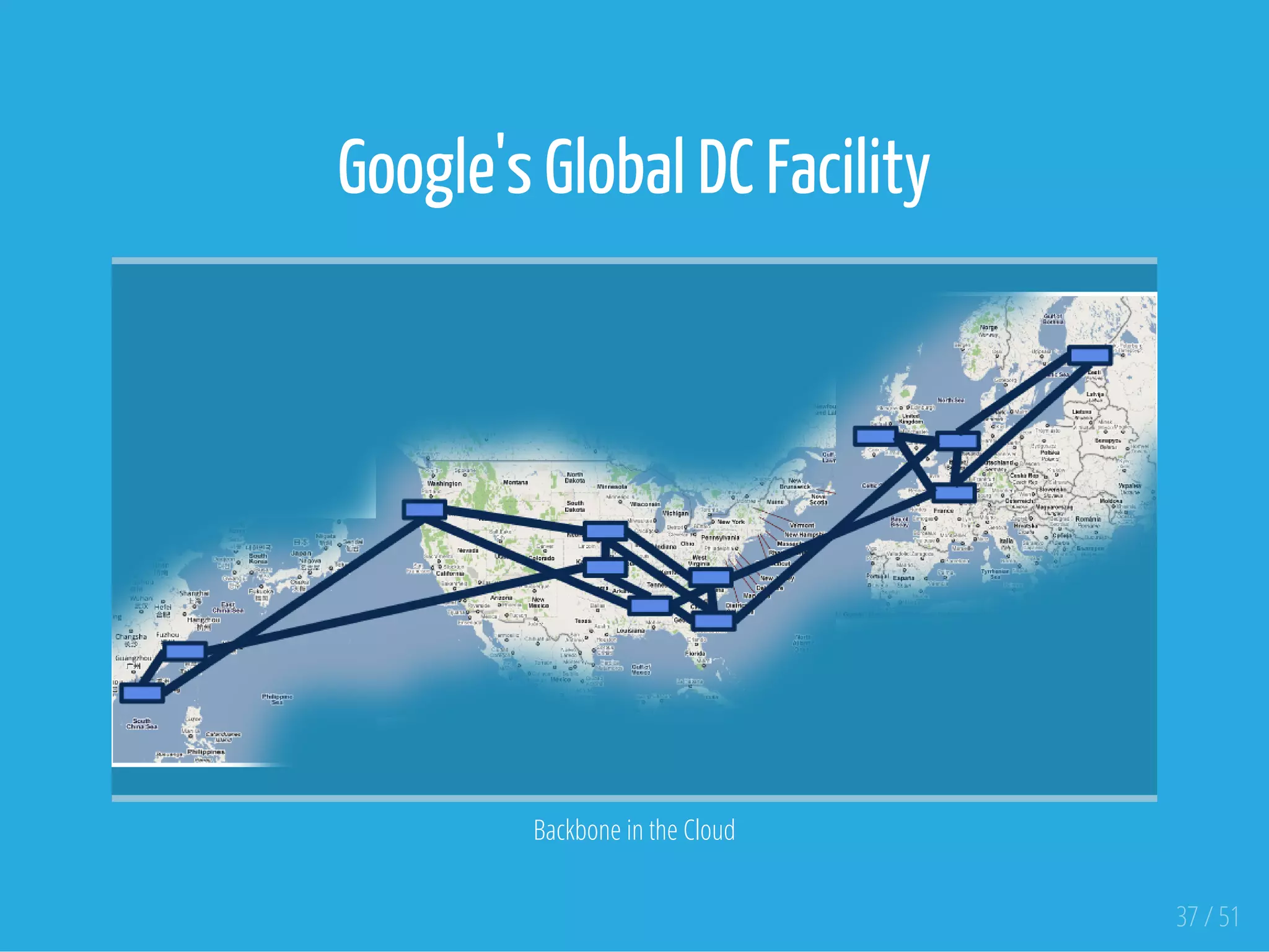

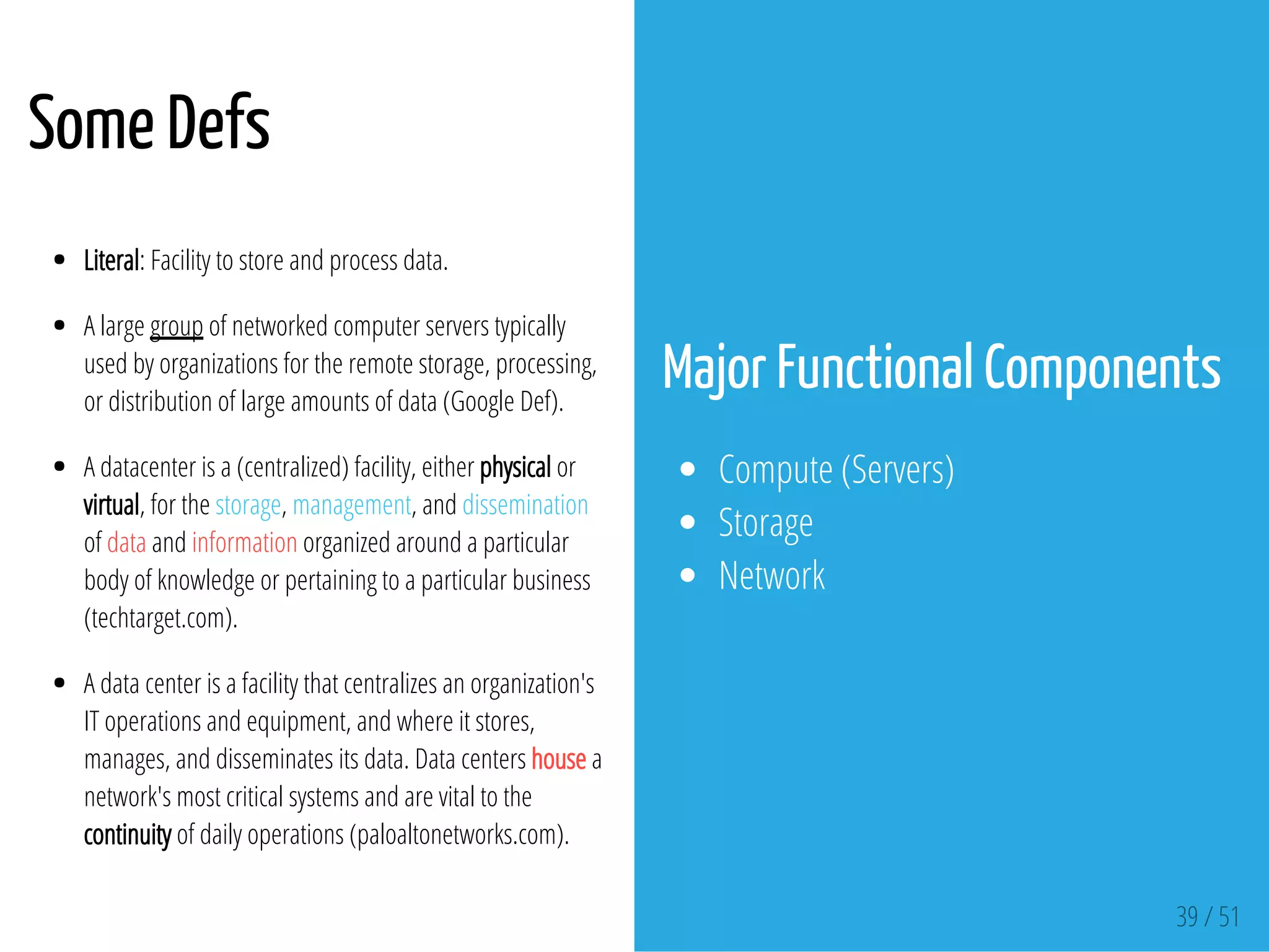

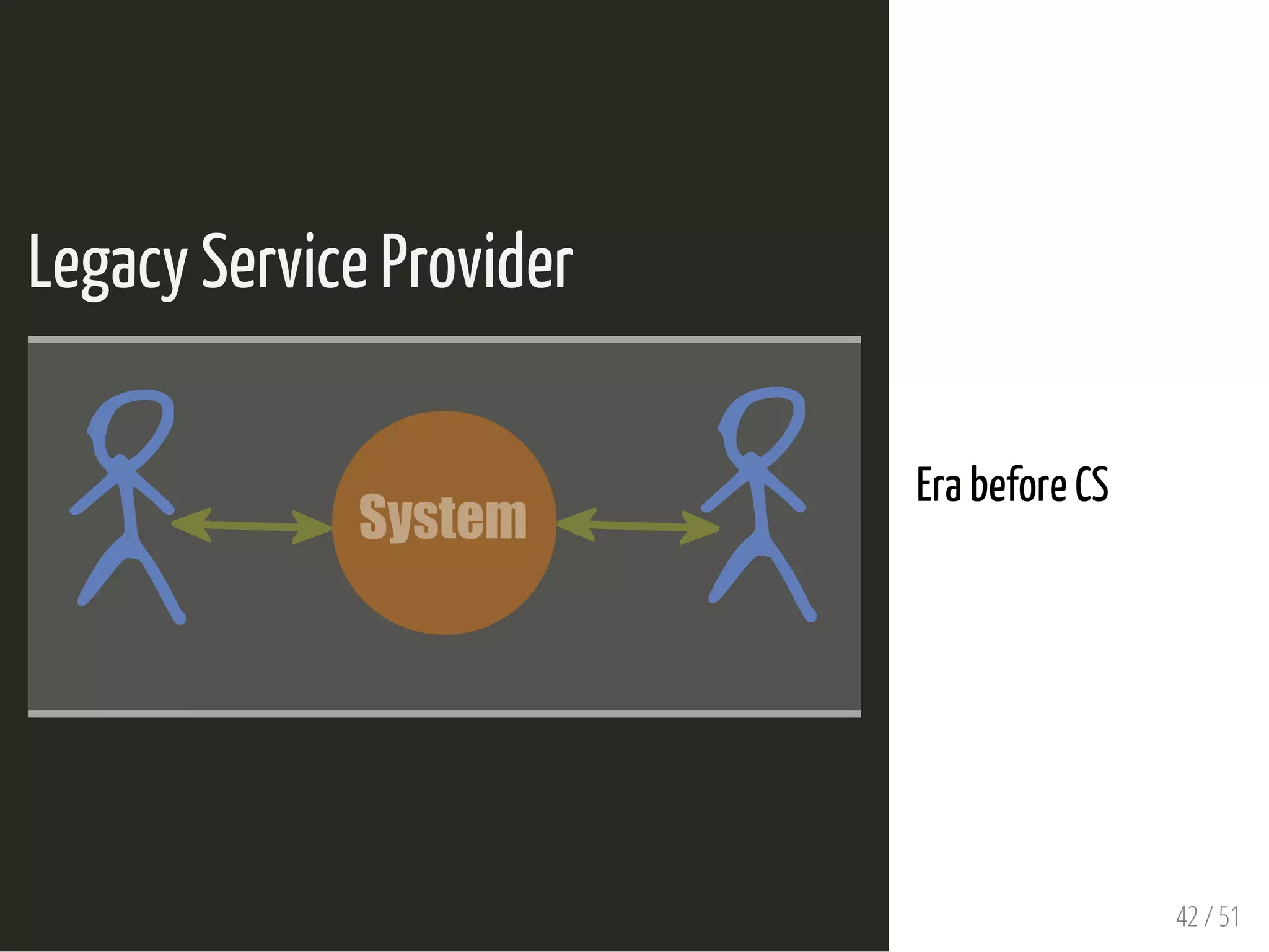

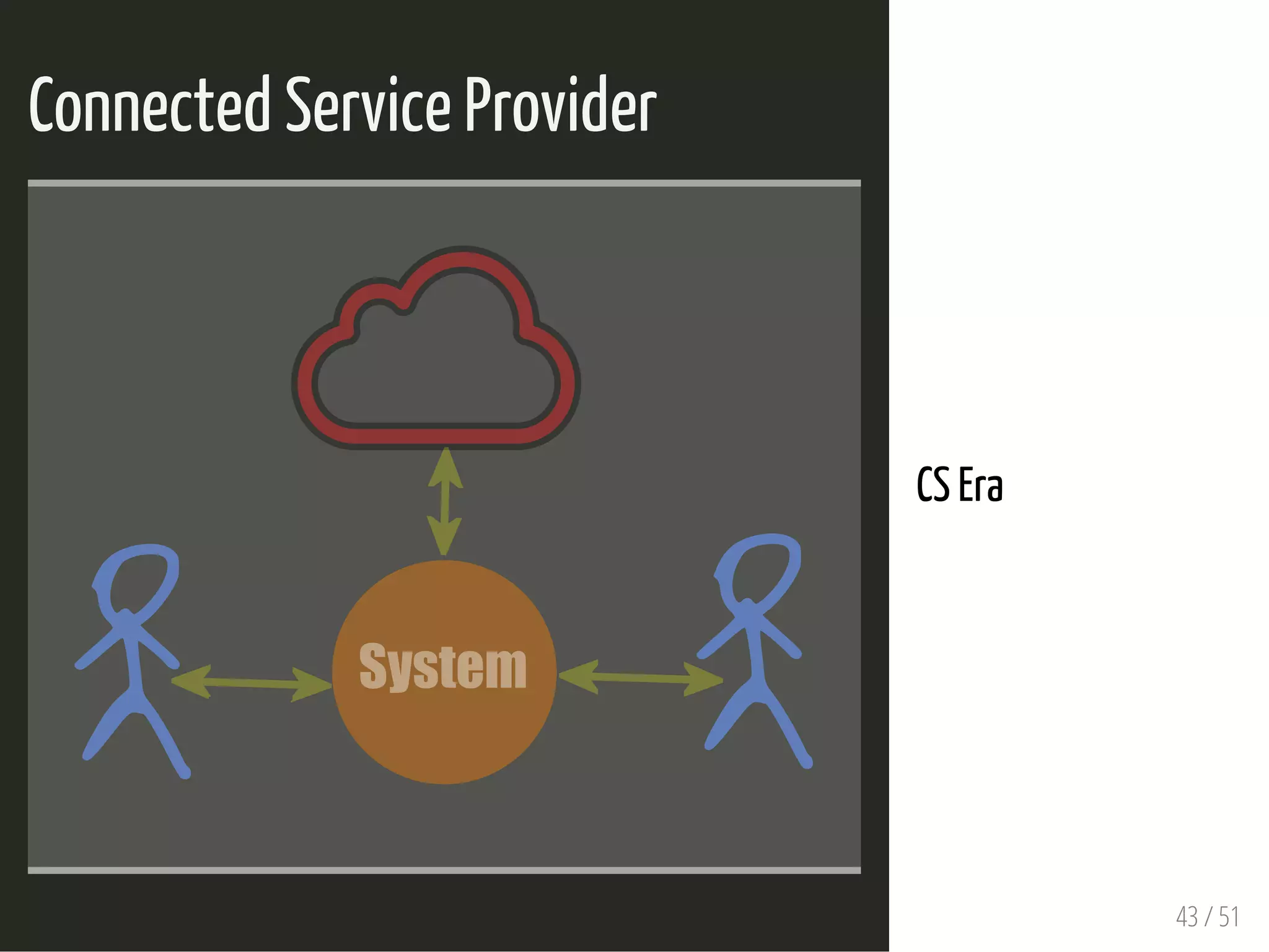

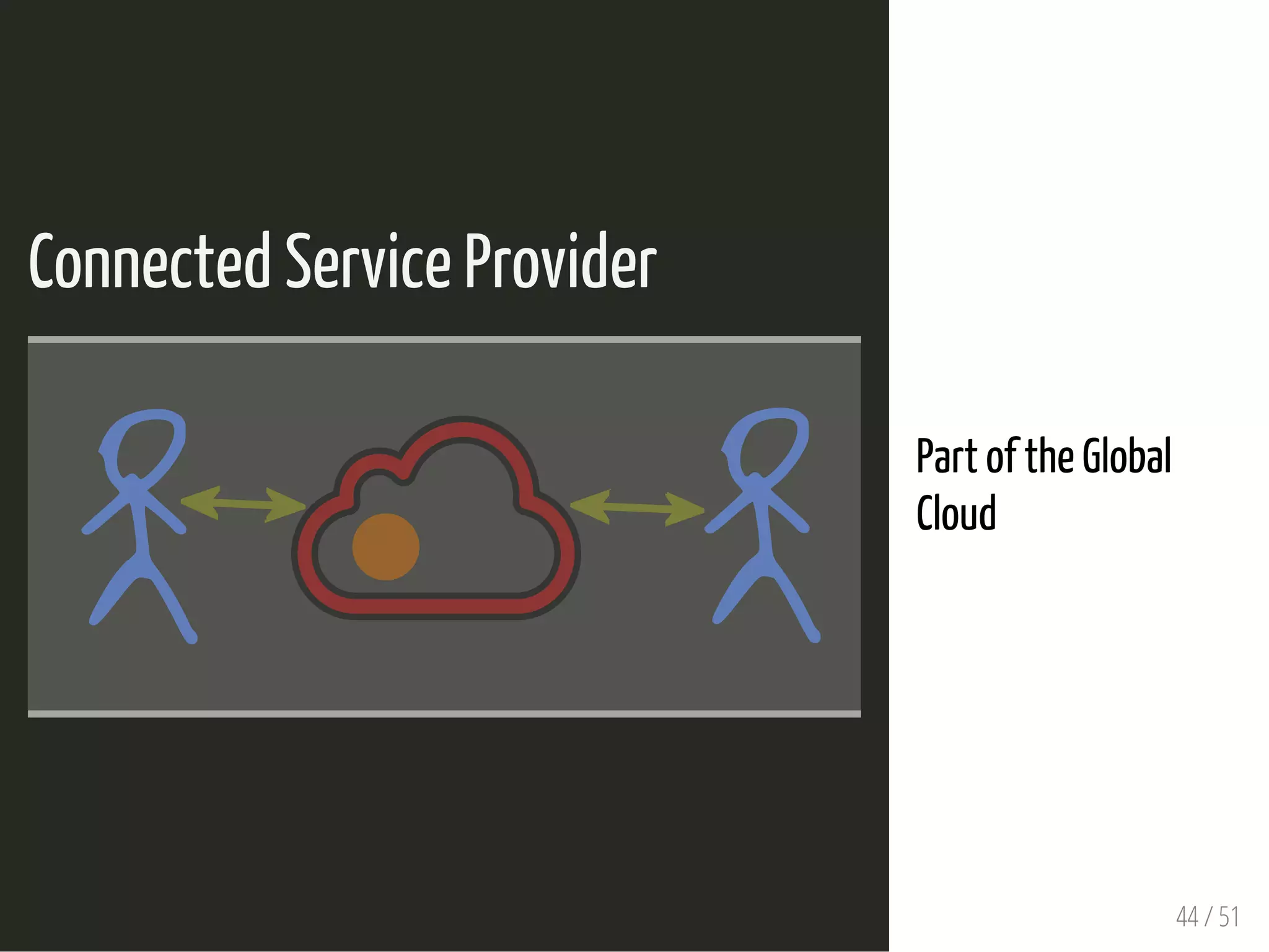

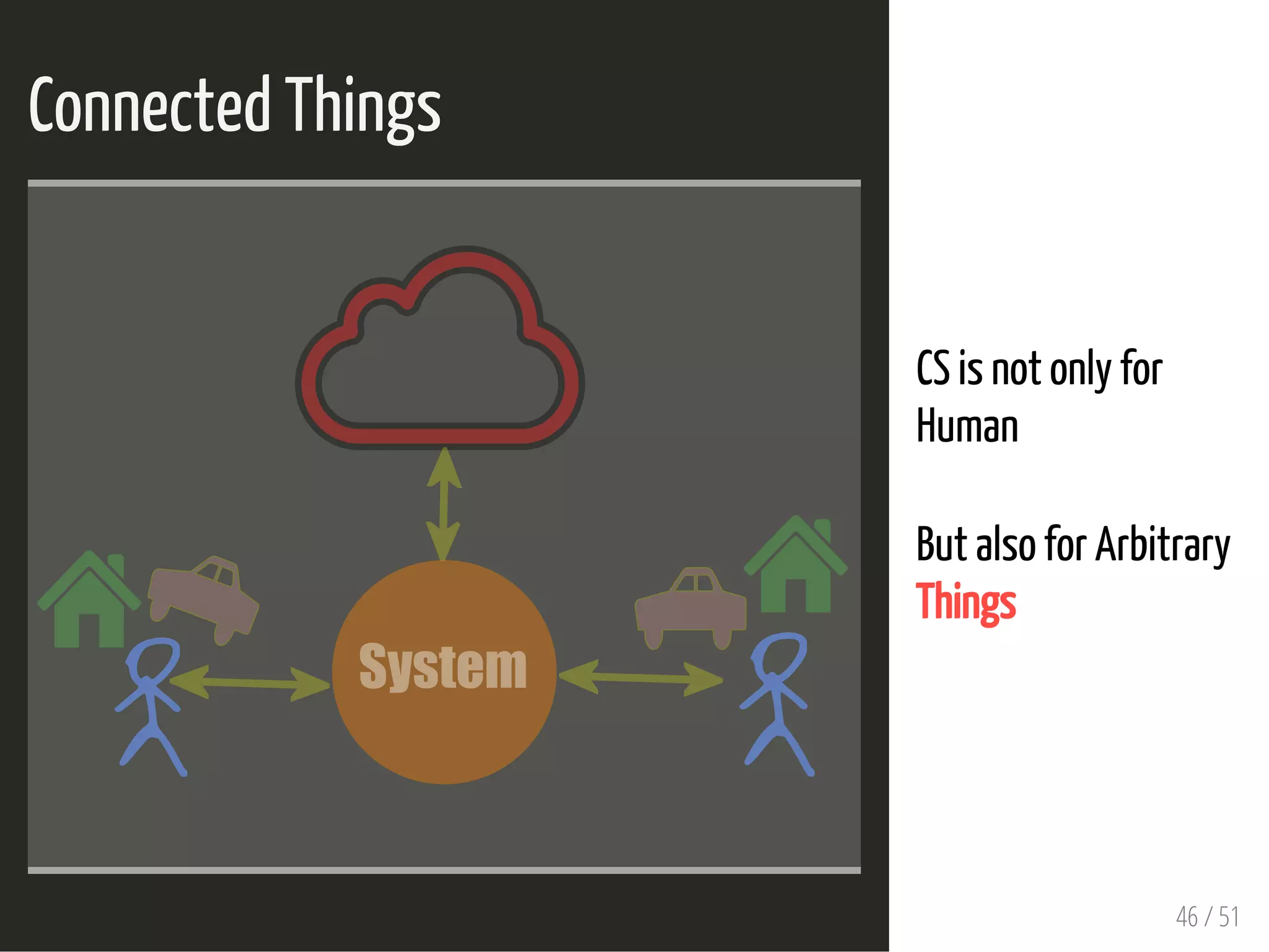

The document discusses the architecture and models of connected services and cloud computing, highlighting key components such as data centers and the flow of information in services like Gmail. It emphasizes the transition from traditional network systems to modern cloud-based systems, including the interactions between the various service providers involved. It also defines what a data center is, explains how data centers function, and describes the role of connected things within these environments.