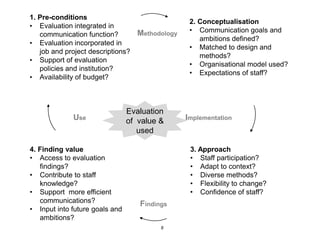

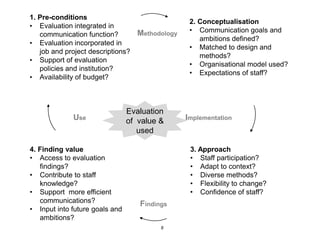

The document discusses the complexities and challenges of evaluating communication, emphasizing the need to assess outputs, impacts, and audience engagement. It highlights the importance of integrating evaluation into communication practice, defining clear goals, and using a range of methodological approaches. It also stresses the value of using evaluation findings to improve future communication strategies.