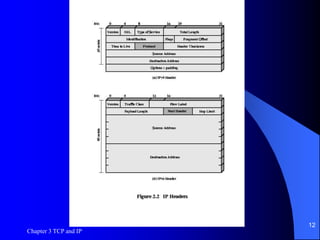

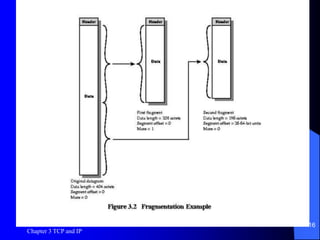

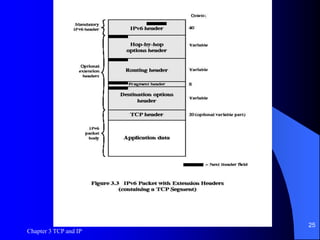

This document covers the TCP, UDP, and IP protocols: their specifications, functions, and use. It explains how TCP segments data, protects it with checksums, and applies flow control, and it presents UDP as a simpler, connectionless alternative. It also covers IP operation and fragmentation, as well as the transition to IPv6, highlighting new features such as an expanded address space and support for differentiated services.
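As a rough illustration of the checksums mentioned above, the following is a minimal sketch of the 16-bit one's-complement Internet checksum described in RFC 1071, which is the arithmetic TCP, UDP, and the IPv4 header all rely on. The function name `internet_checksum` is an assumption made for this example, and the sketch only sums the bytes it is given: a real TCP or UDP checksum also covers a pseudo-header built from the IP addresses, protocol number, and length, which is omitted here.

```python
def internet_checksum(data: bytes) -> int:
    """Return the 16-bit one's-complement checksum of data (RFC 1071 style).

    Hypothetical helper for illustration; it does not build the TCP/UDP
    pseudo-header, so it is not a drop-in TCP checksum routine.
    """
    # Pad odd-length input with a zero byte so it splits into 16-bit words.
    if len(data) % 2:
        data += b"\x00"

    # Sum the data as big-endian 16-bit words.
    total = 0
    for i in range(0, len(data), 2):
        total += (data[i] << 8) | data[i + 1]

    # Fold any carries above 16 bits back into the low 16 bits.
    while total >> 16:
        total = (total & 0xFFFF) + (total >> 16)

    # The checksum is the one's complement of the folded sum.
    return ~total & 0xFFFF


# Example words taken from the numerical example in RFC 1071.
payload = bytes([0x00, 0x01, 0xF2, 0x03, 0xF4, 0xF5, 0xF6, 0xF7])
checksum = internet_checksum(payload)

# A receiver recomputes the checksum over data plus the transmitted
# checksum; a result of zero means no error was detected.
verified = internet_checksum(payload + checksum.to_bytes(2, "big")) == 0
```

The receiver-side check works because adding a value to its own one's complement yields all ones, whose complement is zero. The checksum catches many transmission errors cheaply, but it is not a cryptographic integrity check.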