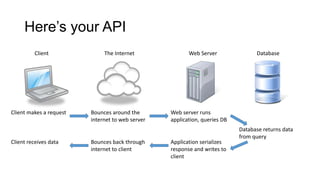

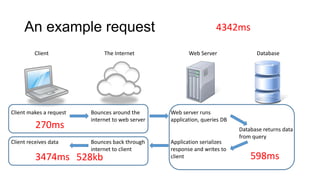

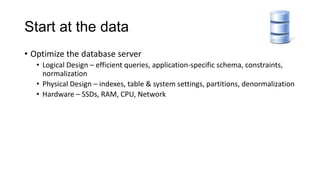

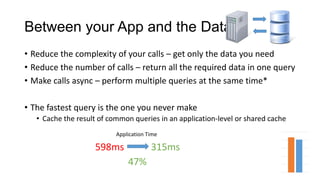

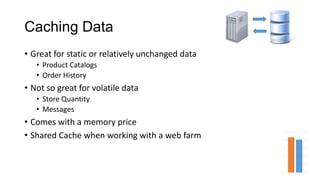

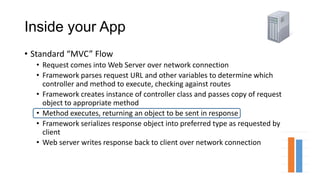

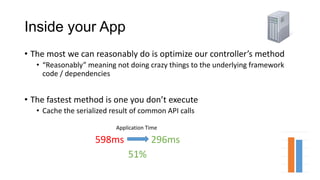

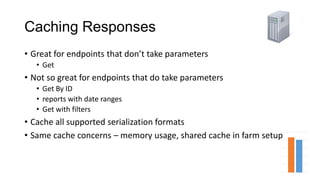

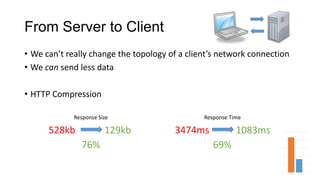

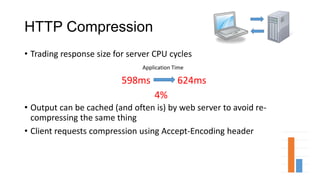

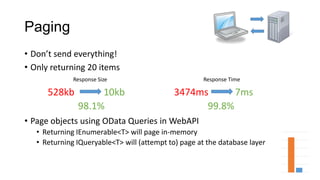

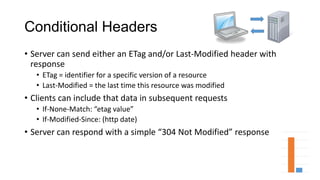

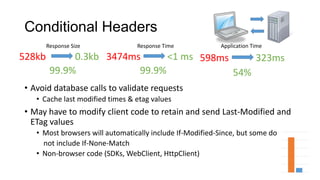

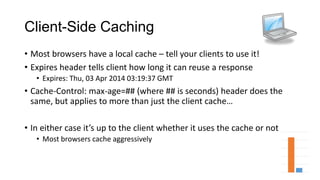

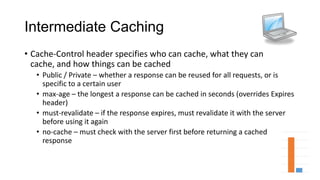

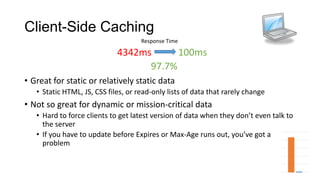

The document discusses strategies for improving web service performance: optimizing database design, reducing the complexity of API calls, and implementing caching. It outlines HTTP compression, conditional headers that let a client revalidate a cached response instead of downloading it again, and client-side caching, all of which reduce server load and response times.
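As a rough illustration of how conditional headers and HTTP compression can work together, here is a minimal sketch in Python. It is not taken from the document: the function names `make_etag` and `respond`, the 60-second `max-age`, and the plain `dict` used for request headers are all assumptions made for the example. It also simplifies real HTTP behavior: it compares `If-None-Match` as a single exact value and reuses one ETag for both the compressed and uncompressed representation.

```python
import gzip
import hashlib


def make_etag(body: bytes) -> str:
    # Hypothetical helper: derive a validator from the response body.
    return '"' + hashlib.sha256(body).hexdigest()[:16] + '"'


def respond(body: bytes, request_headers: dict) -> tuple:
    """Return (status, headers, payload) for a GET request.

    Assumes request_headers uses the exact header names shown below.
    """
    etag = make_etag(body)
    headers = {
        "ETag": etag,
        "Cache-Control": "max-age=60",  # assumed lifetime for client-side caching
        "Vary": "Accept-Encoding",
    }

    # Conditional request: the client already holds this version,
    # so answer 304 Not Modified with an empty payload.
    if request_headers.get("If-None-Match") == etag:
        return 304, headers, b""

    # HTTP compression: gzip the payload only if the client advertises support.
    if "gzip" in request_headers.get("Accept-Encoding", ""):
        headers["Content-Encoding"] = "gzip"
        body = gzip.compress(body)

    headers["Content-Length"] = str(len(body))
    return 200, headers, body
```

A first request with `Accept-Encoding: gzip` would receive the compressed body along with an `ETag`. A later request that sends that value back in `If-None-Match` would receive only a 304 status, so the server skips both the compression work and the transfer.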