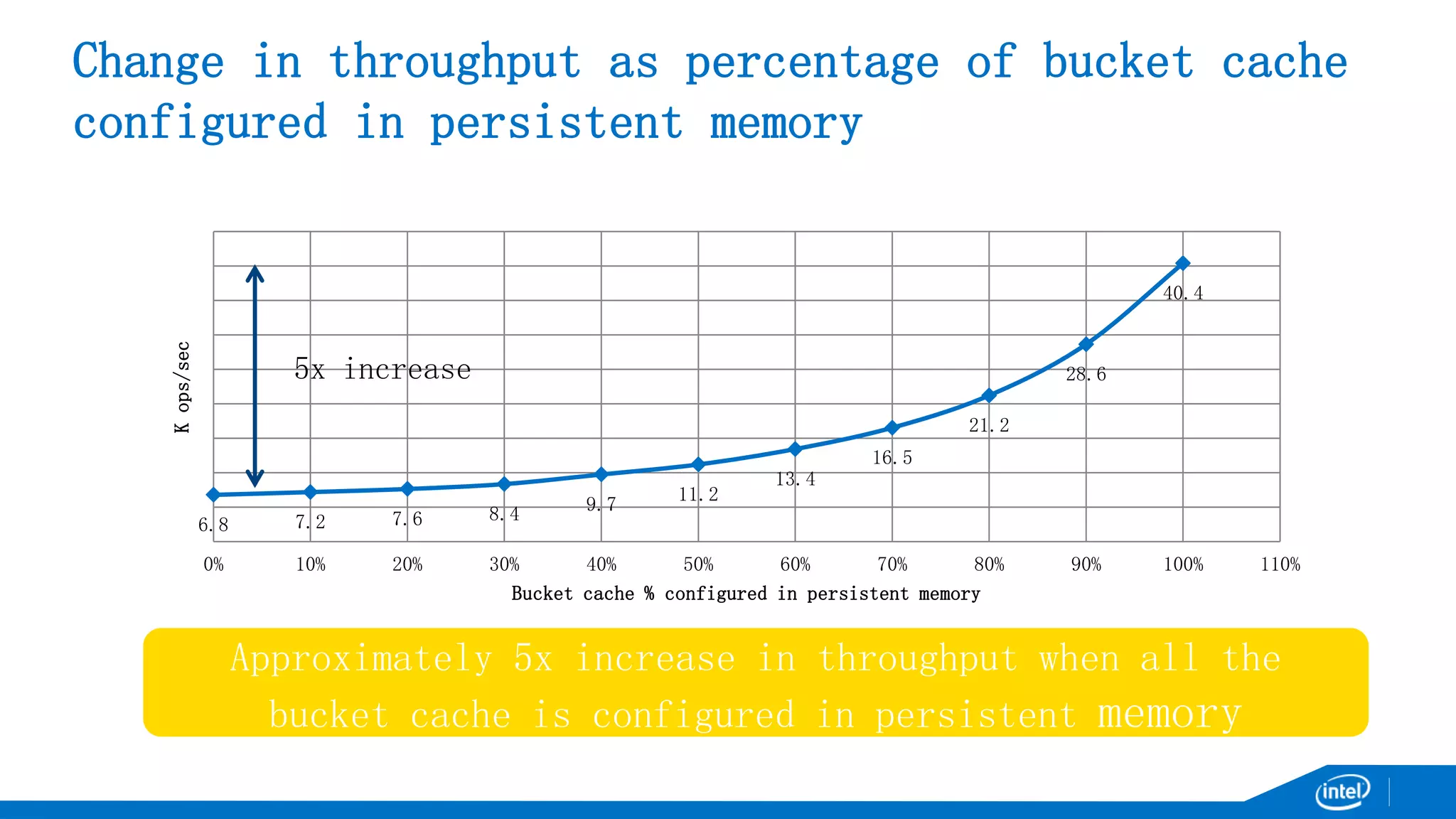

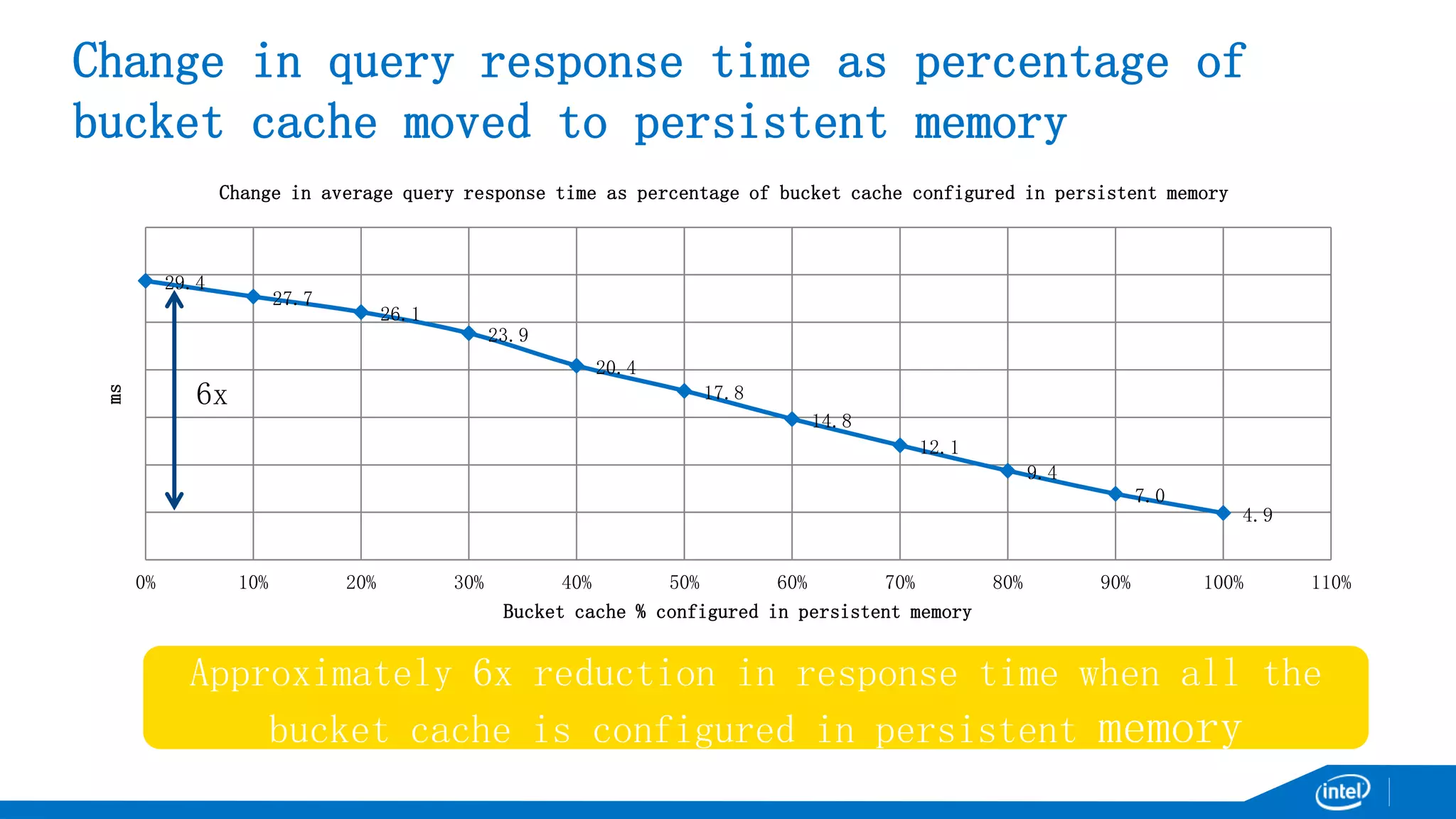

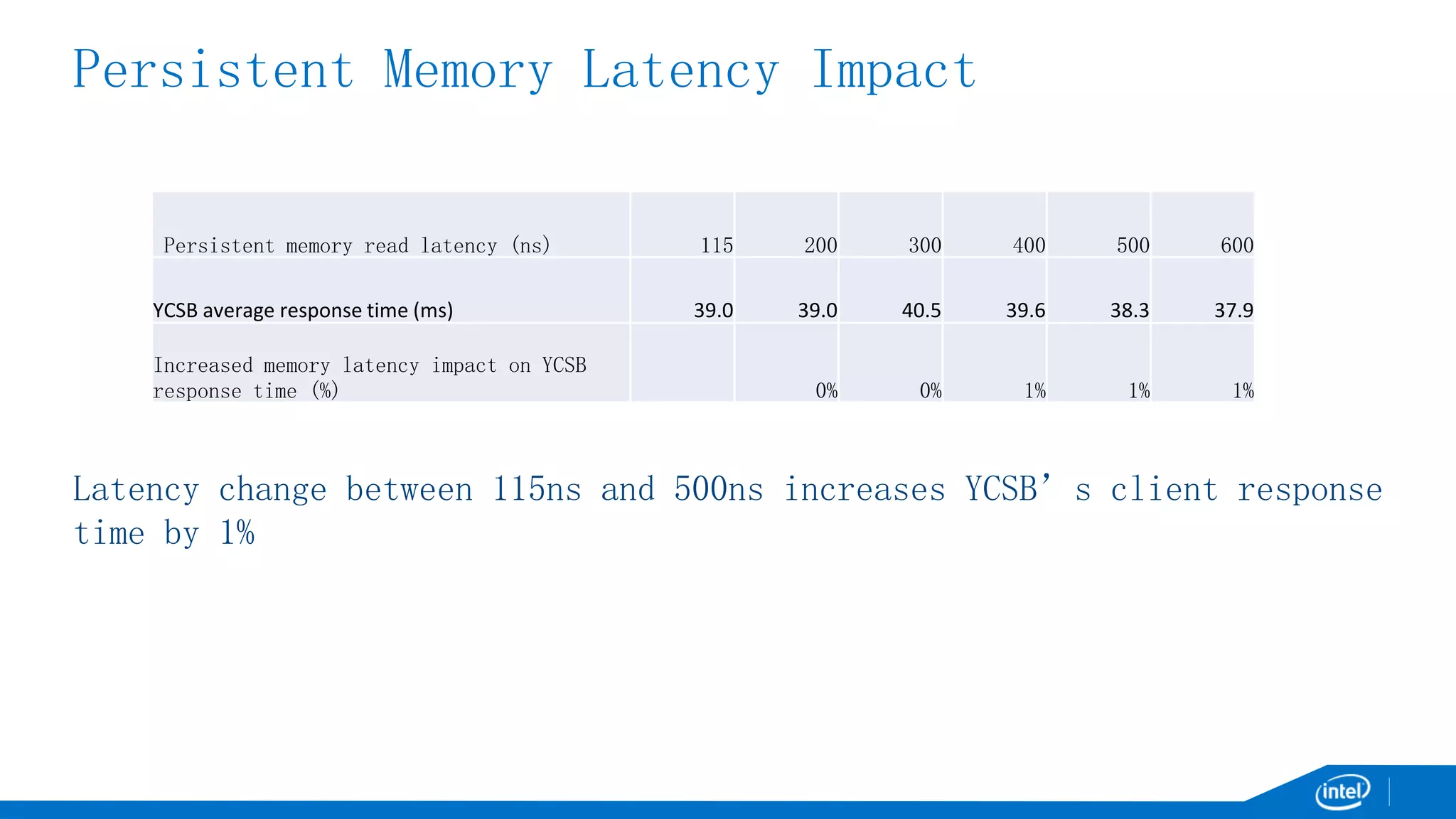

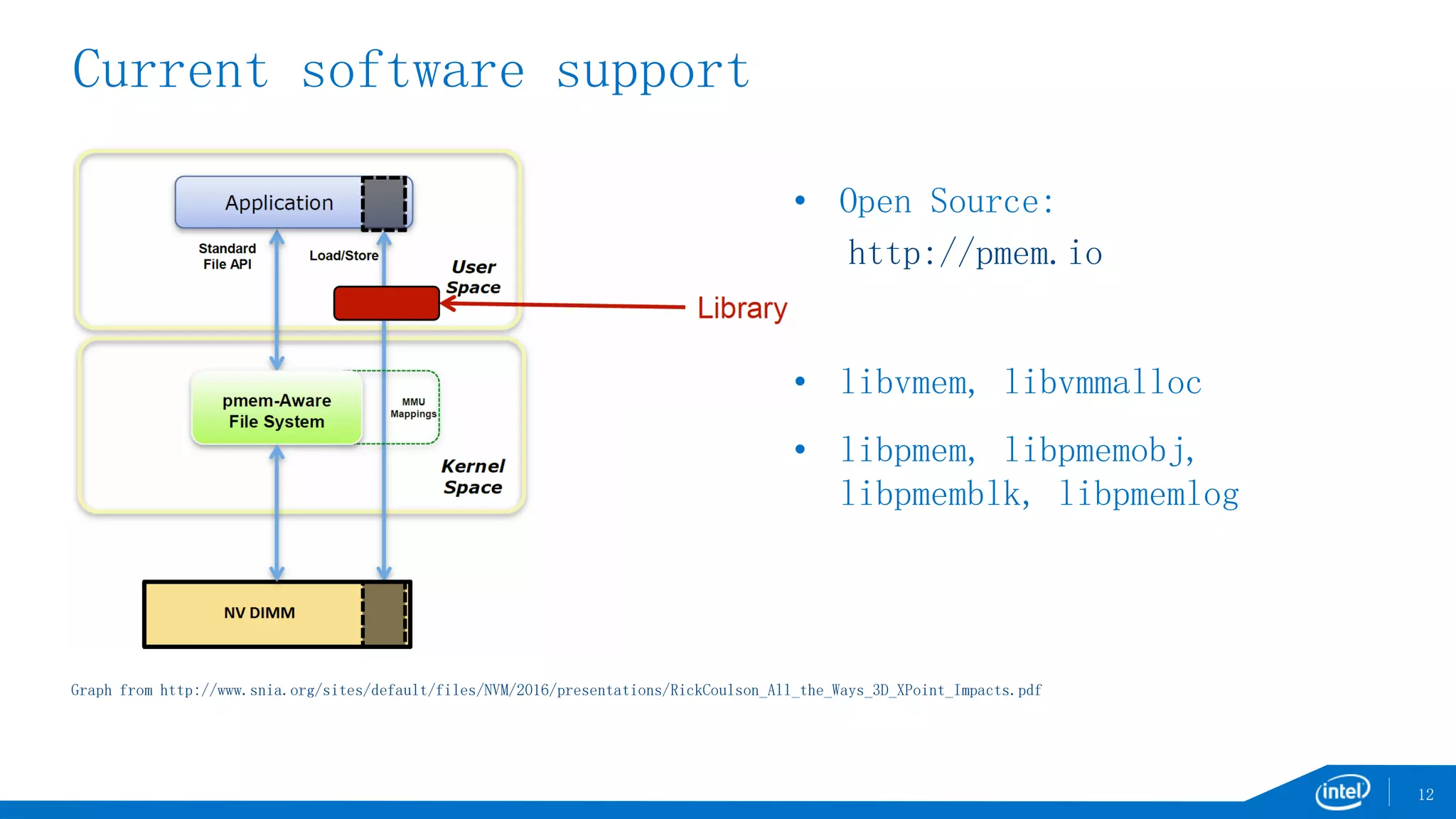

The document discusses how persistent memory can overcome performance limits imposed by disk writes and data management. It presents experimental results showing a significant increase in throughput and a reduction in response time when persistent memory is used, and highlights the potential advantages for systems such as HBase. It also reviews current software support and outlines possible approaches to achieving higher bandwidth and lower latency in data transactions.