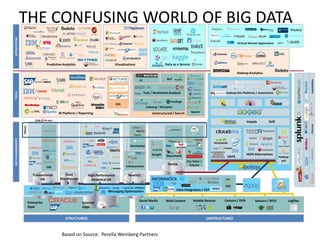

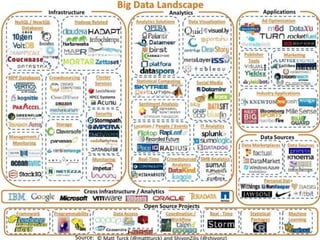

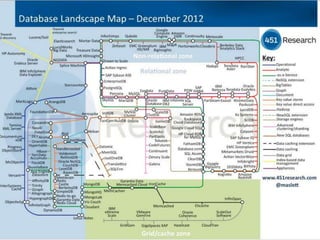

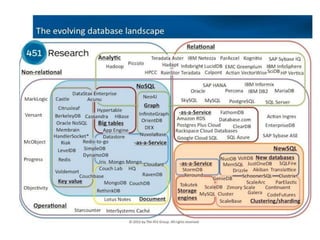

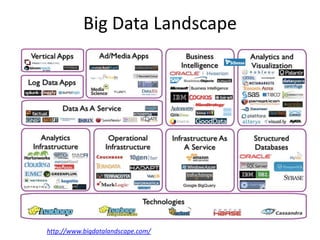

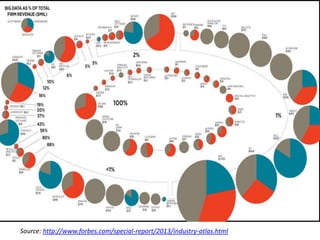

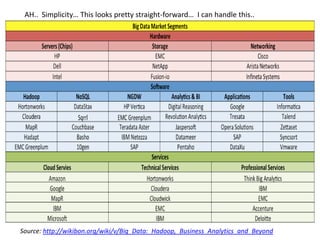

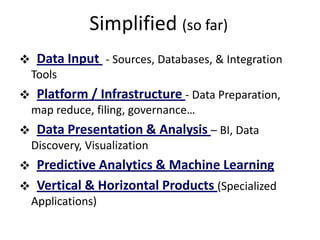

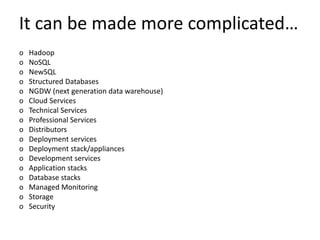

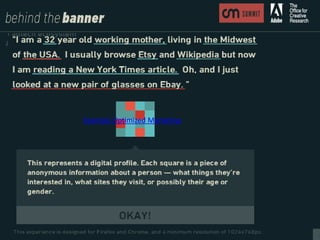

The document provides an agenda for a meeting on big data. It begins by acknowledging attendees from various companies. It then lists the agenda items, which include an overview of big data connections, a presentation on demystifying big data, and success stories from SAP HANA startups. The meeting also includes a quick poll of attendees and a networking session with Q&A. Additional slides give background on the American Institute of Big Data Professionals and its mission to provide education on big data solutions. Diagrams map the big data landscape and the key vendors in related areas such as analytics, visualization, and predictive analytics.