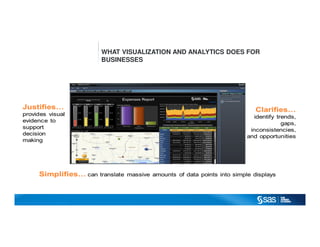

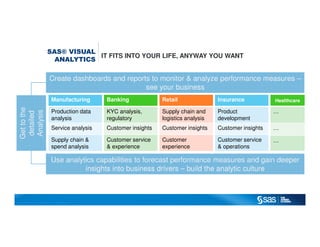

SAS® Visual Analytics is a solution that supports business decision-making through visualizations and analytics. Its self-service capabilities let users explore data ad hoc, which helps organizations adopt an analytics-driven culture. The platform is applied across sectors including retail, healthcare, and finance, where it delivers insights for a range of operational needs.