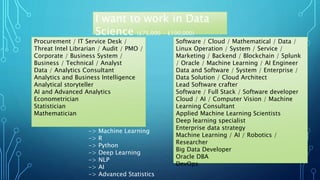

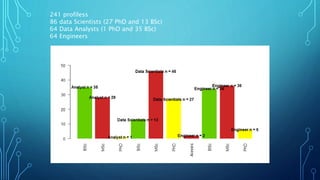

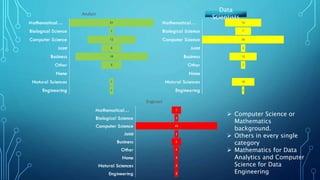

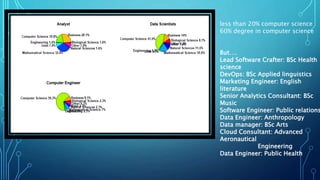

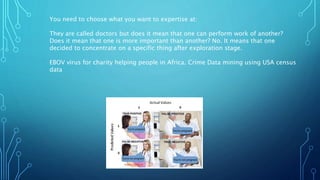

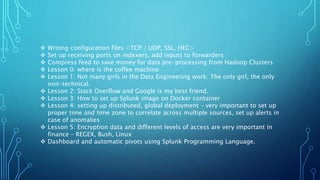

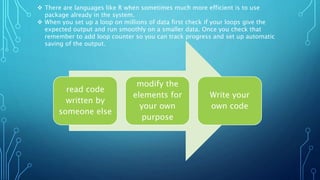

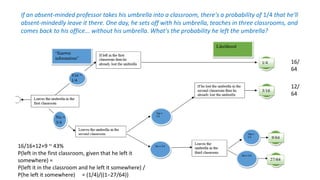

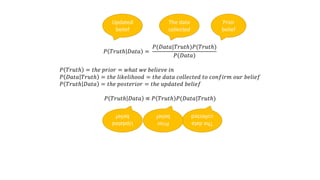

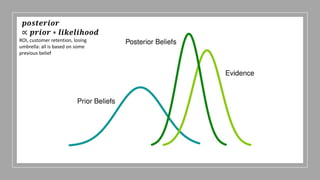

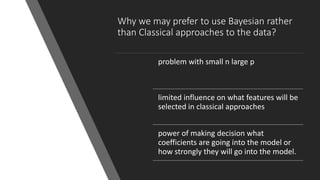

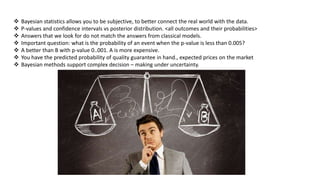

The document presents insights from an analysis of LinkedIn profiles of data professionals, covering the range of roles and backgrounds in the field and the importance of specialization. It also highlights lessons learned in data engineering and analytics, including the use of Bayesian reasoning for decision-making under uncertainty. Finally, it contrasts approaches to static and real-time data analytics, stressing the value of statistical thinking and of hands-on work with tools such as R and Python.