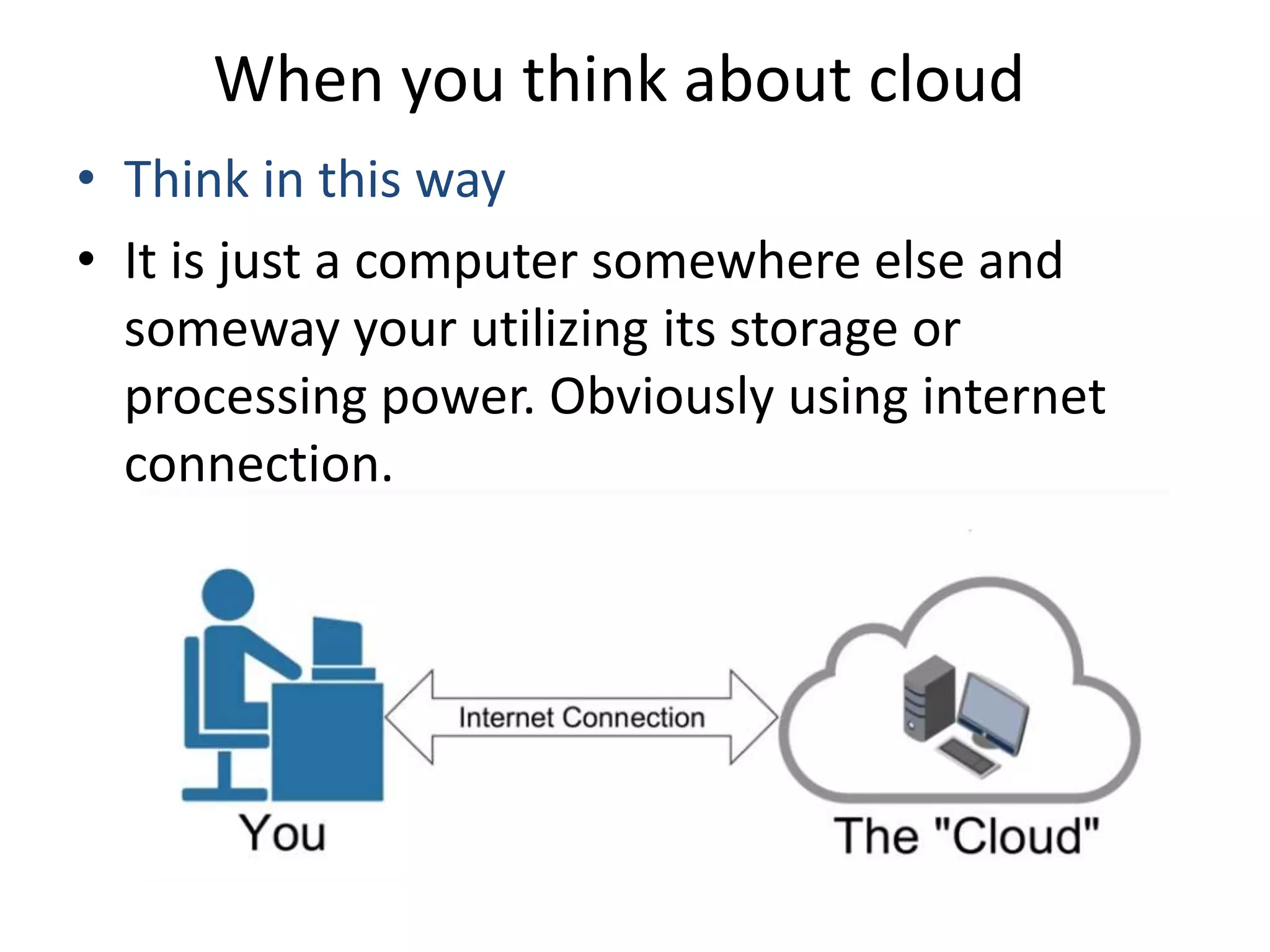

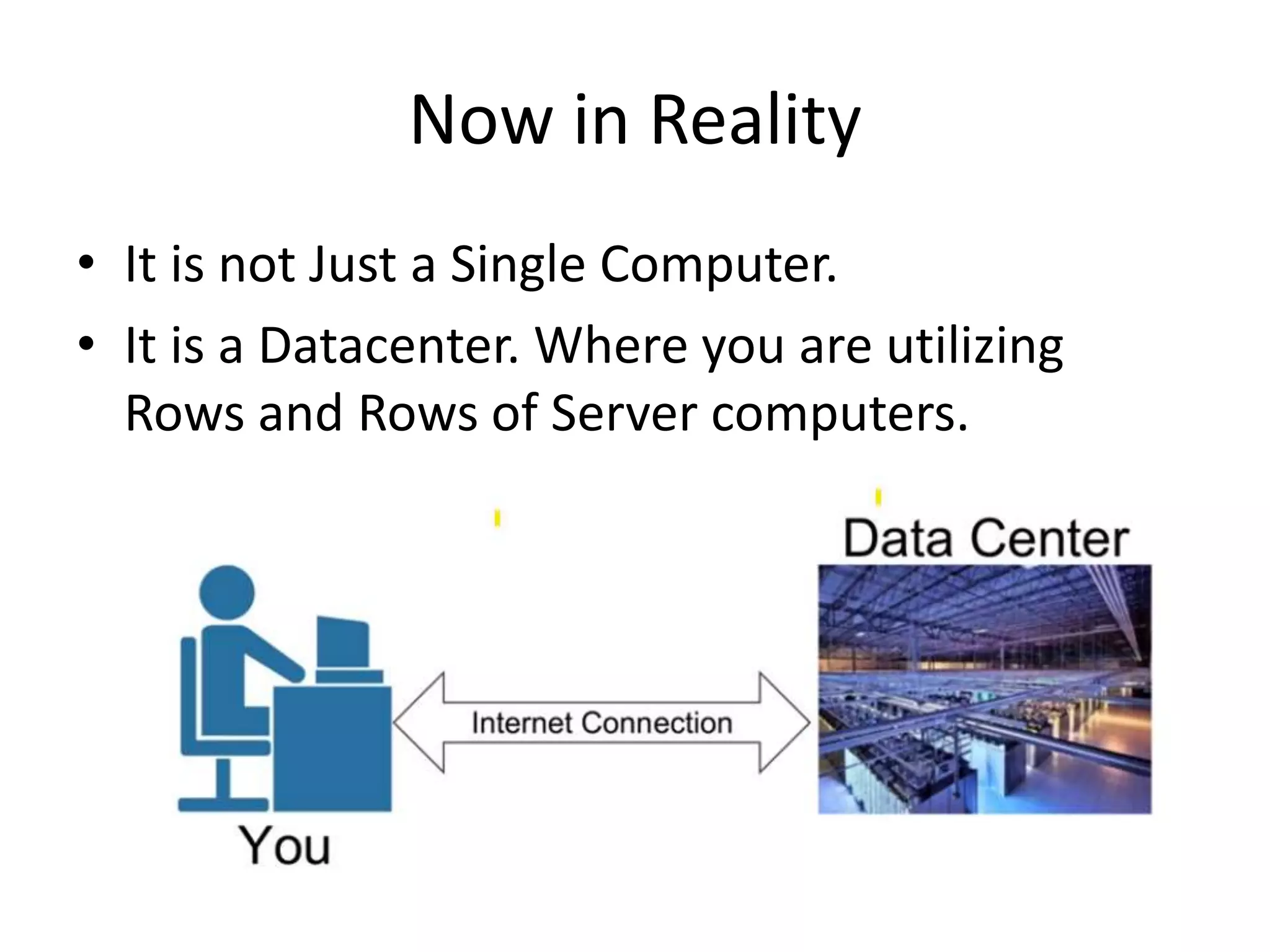

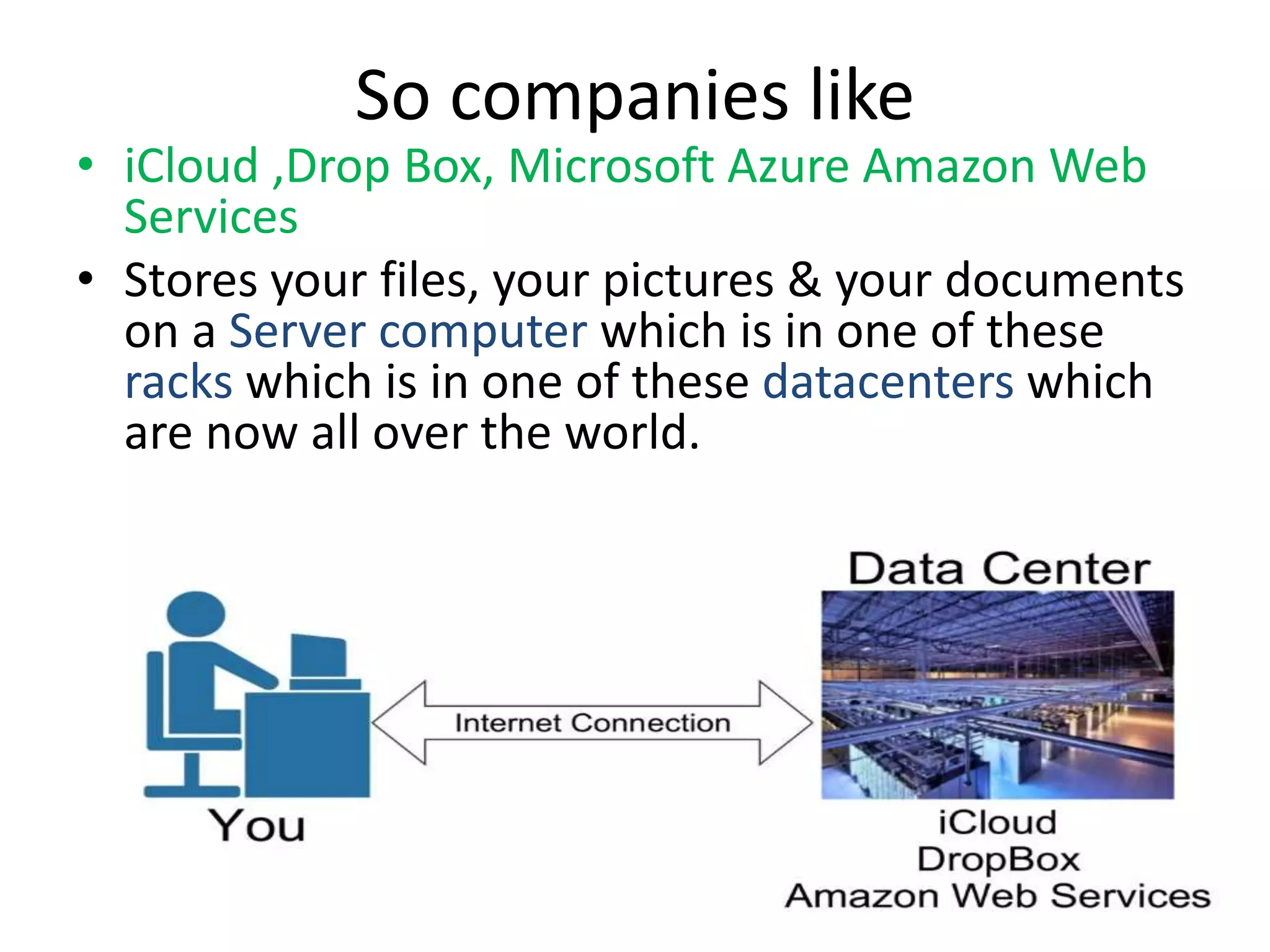

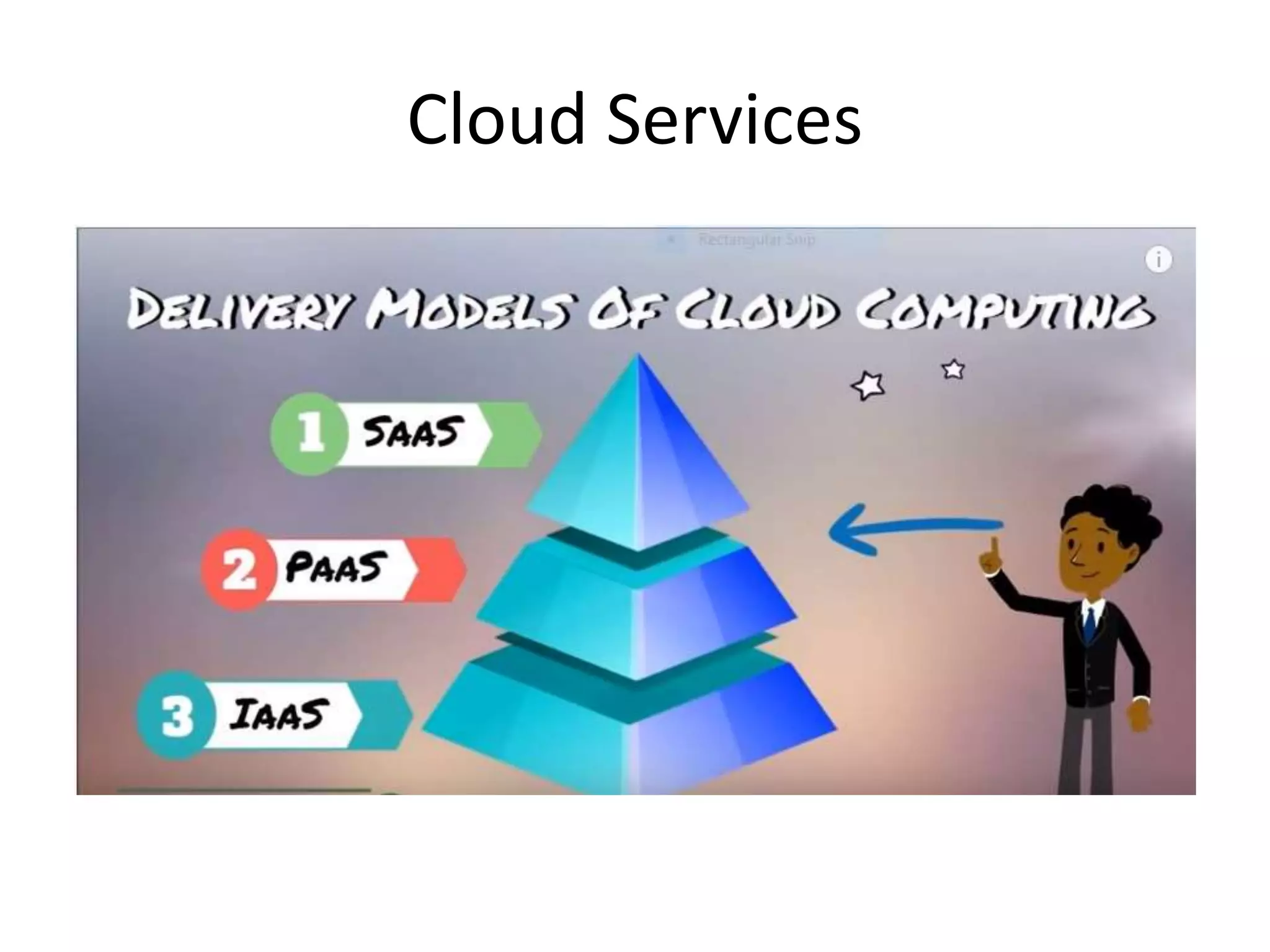

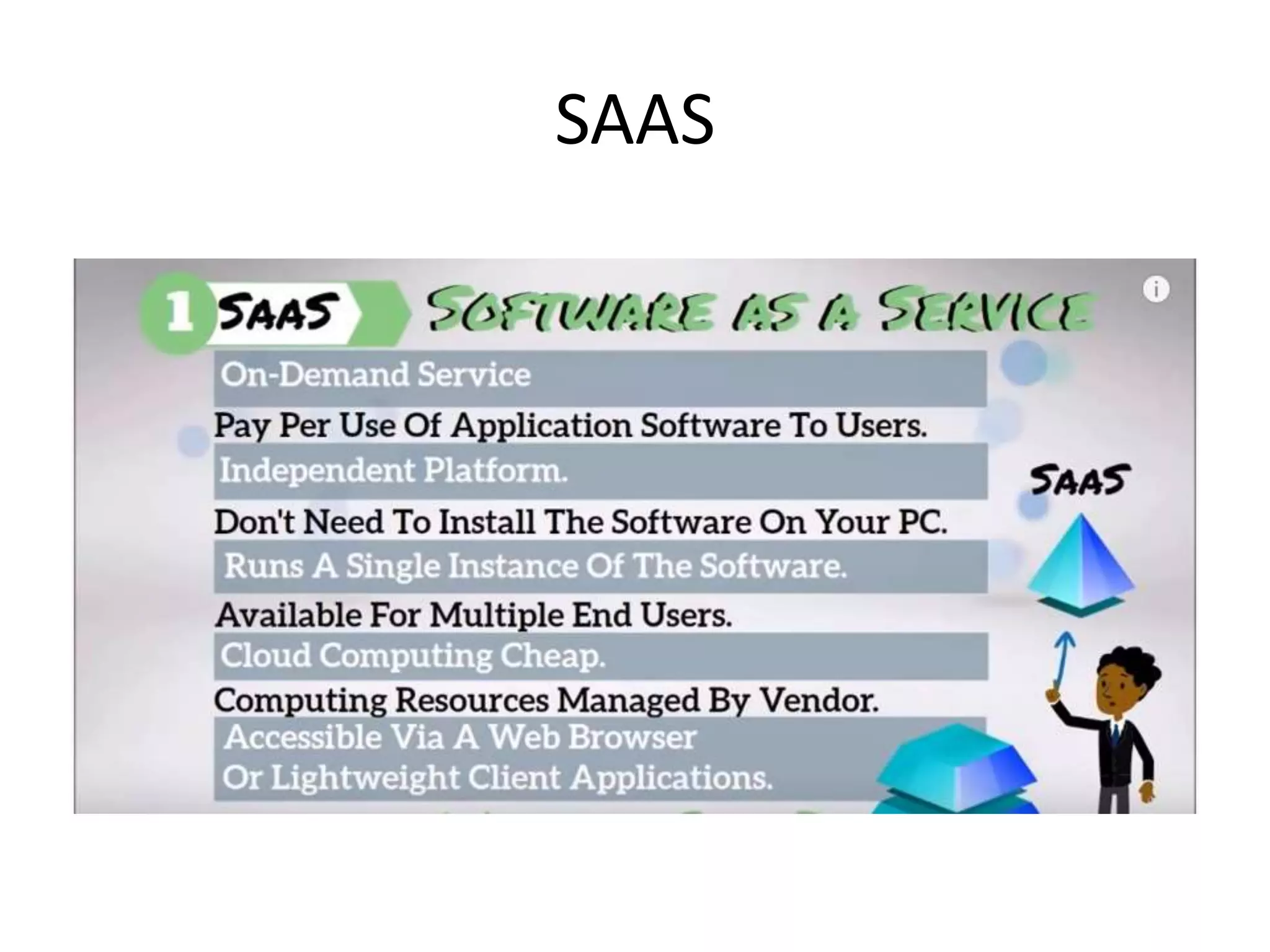

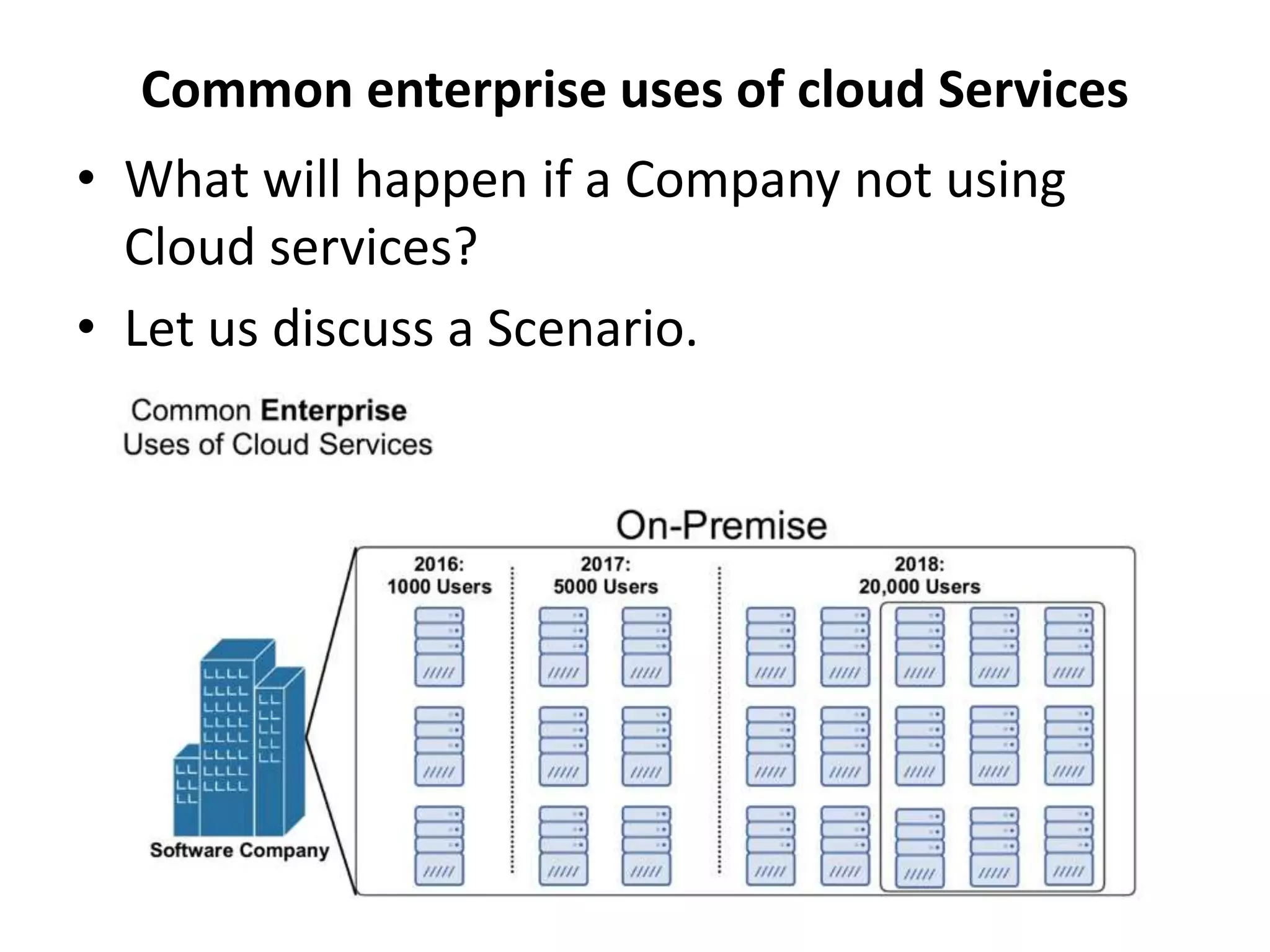

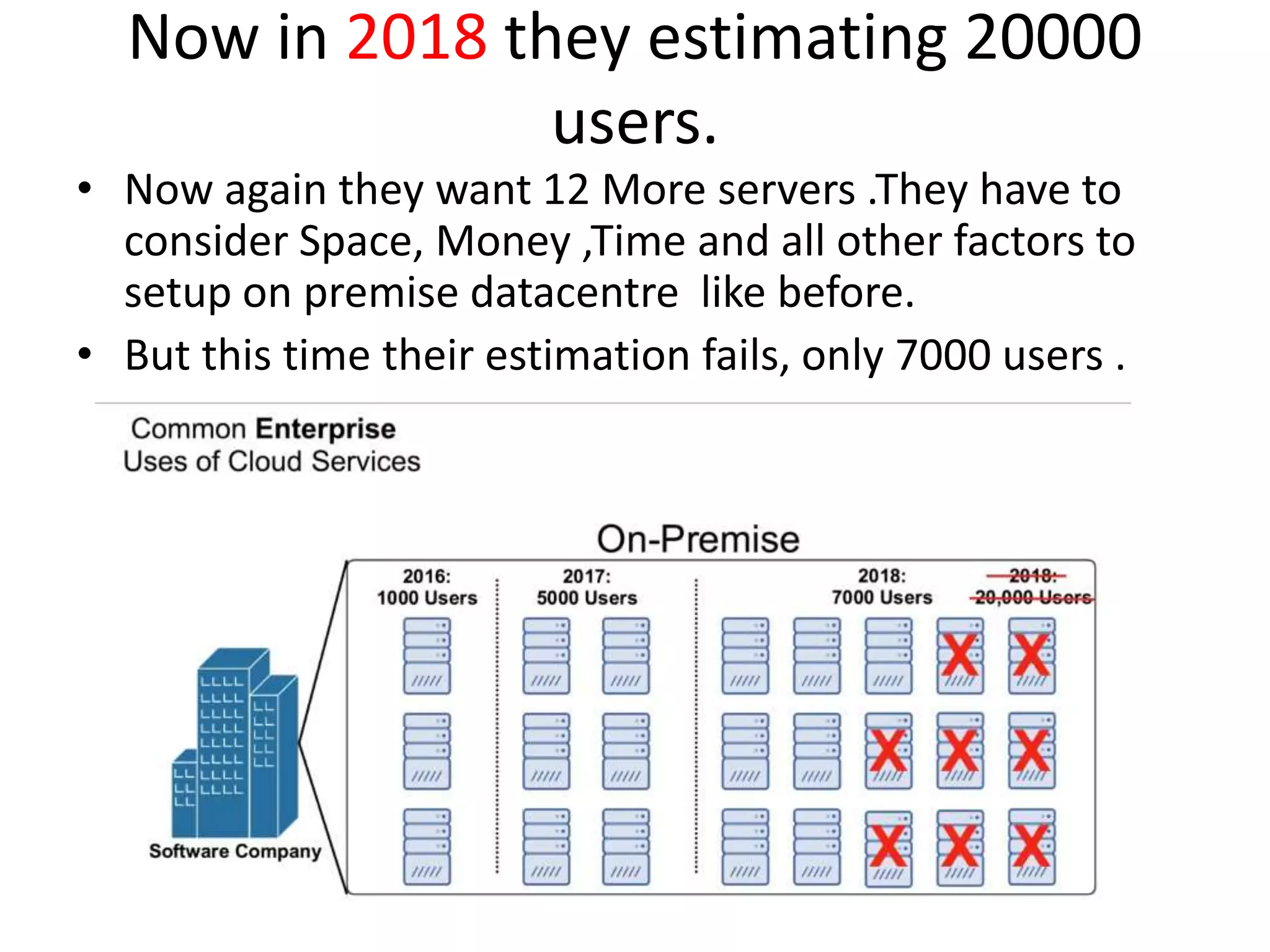

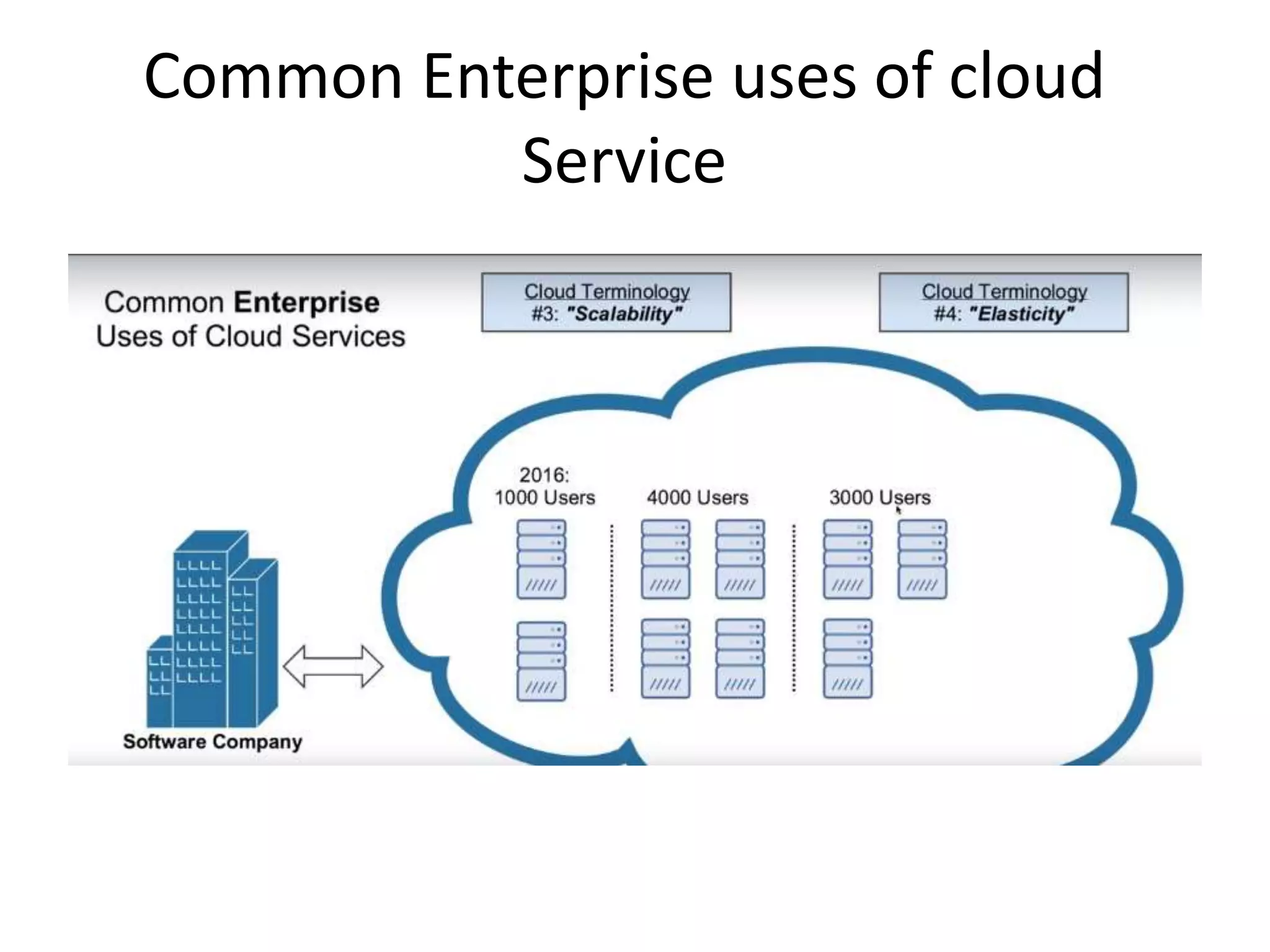

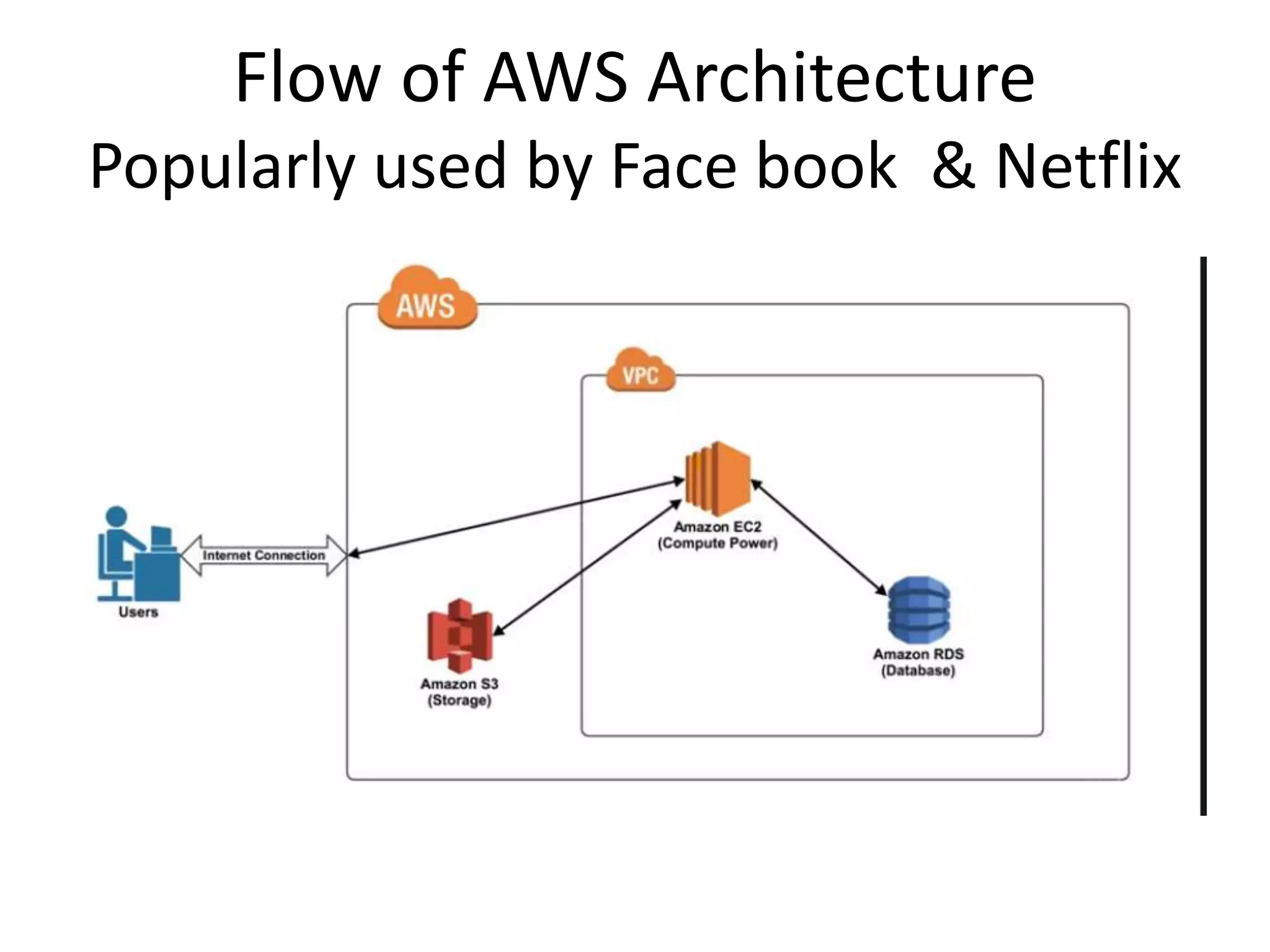

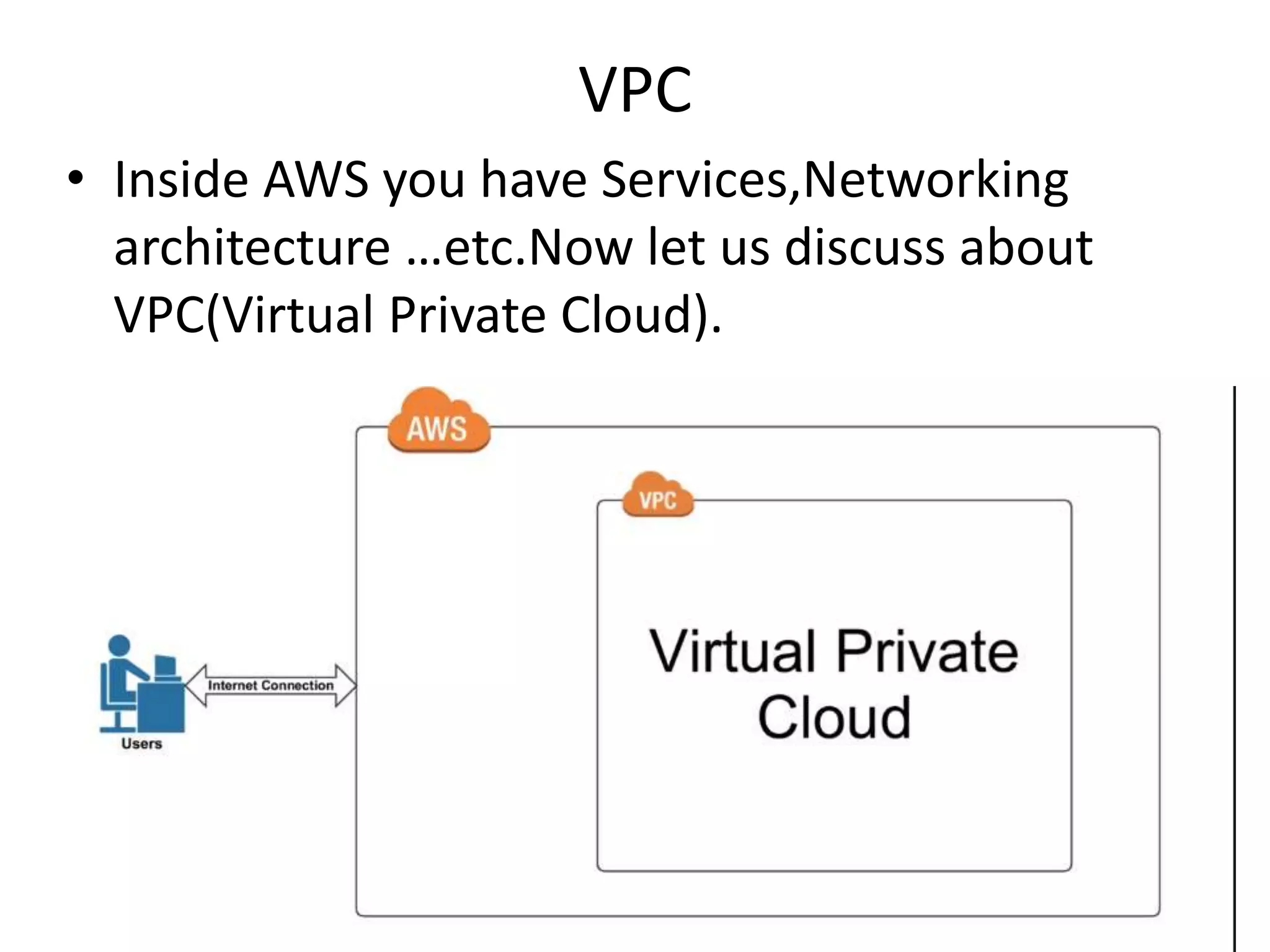

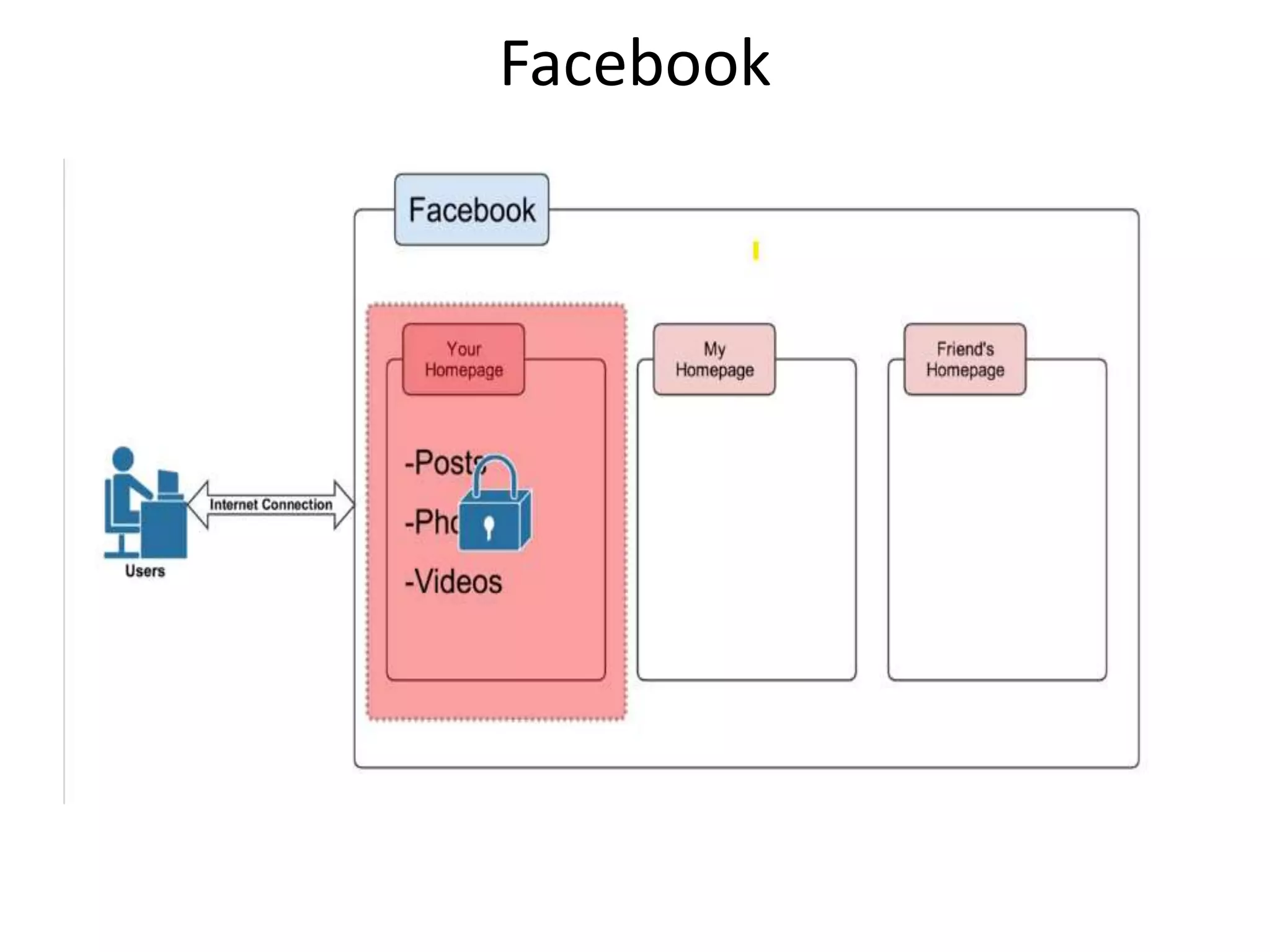

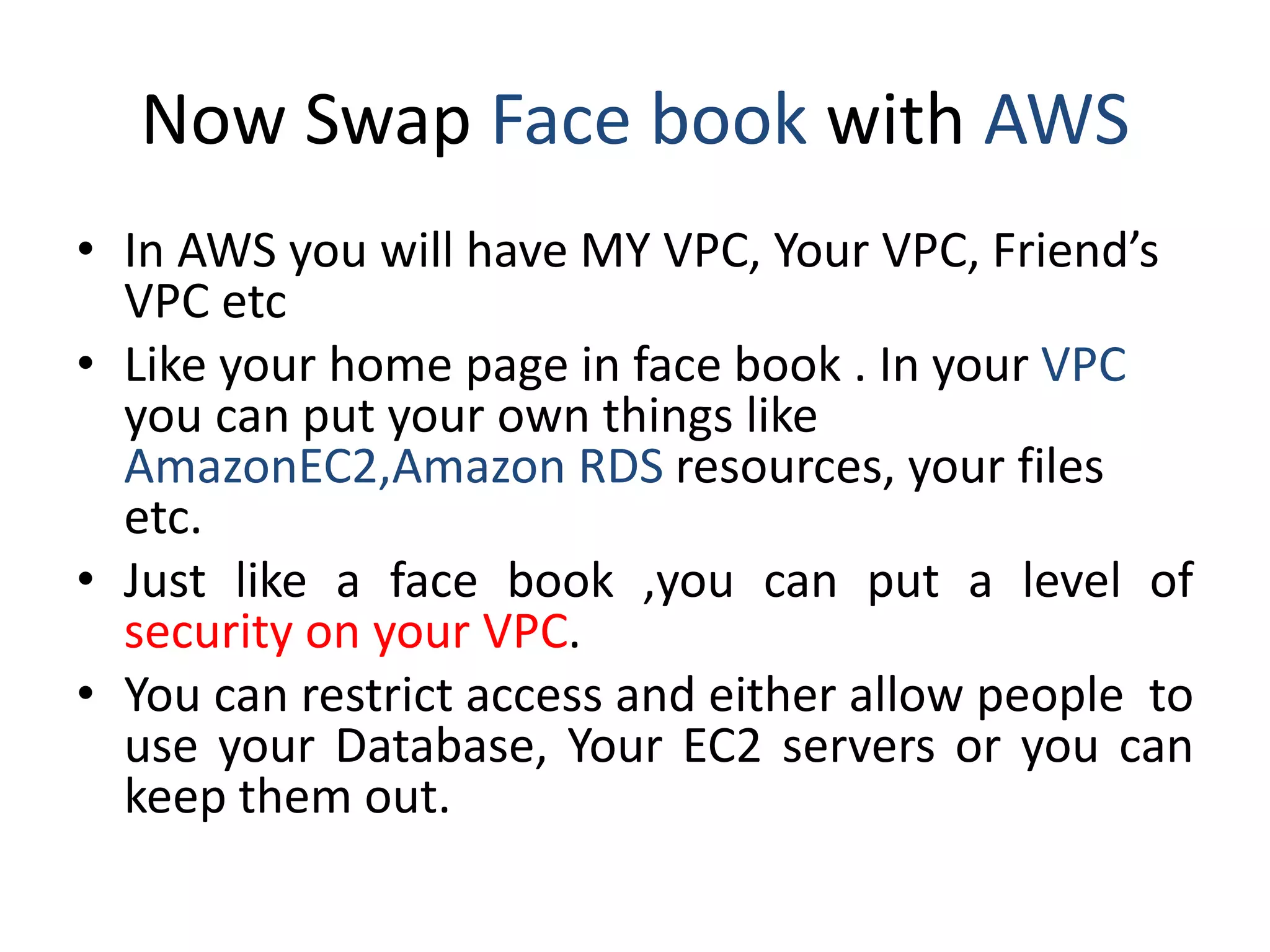

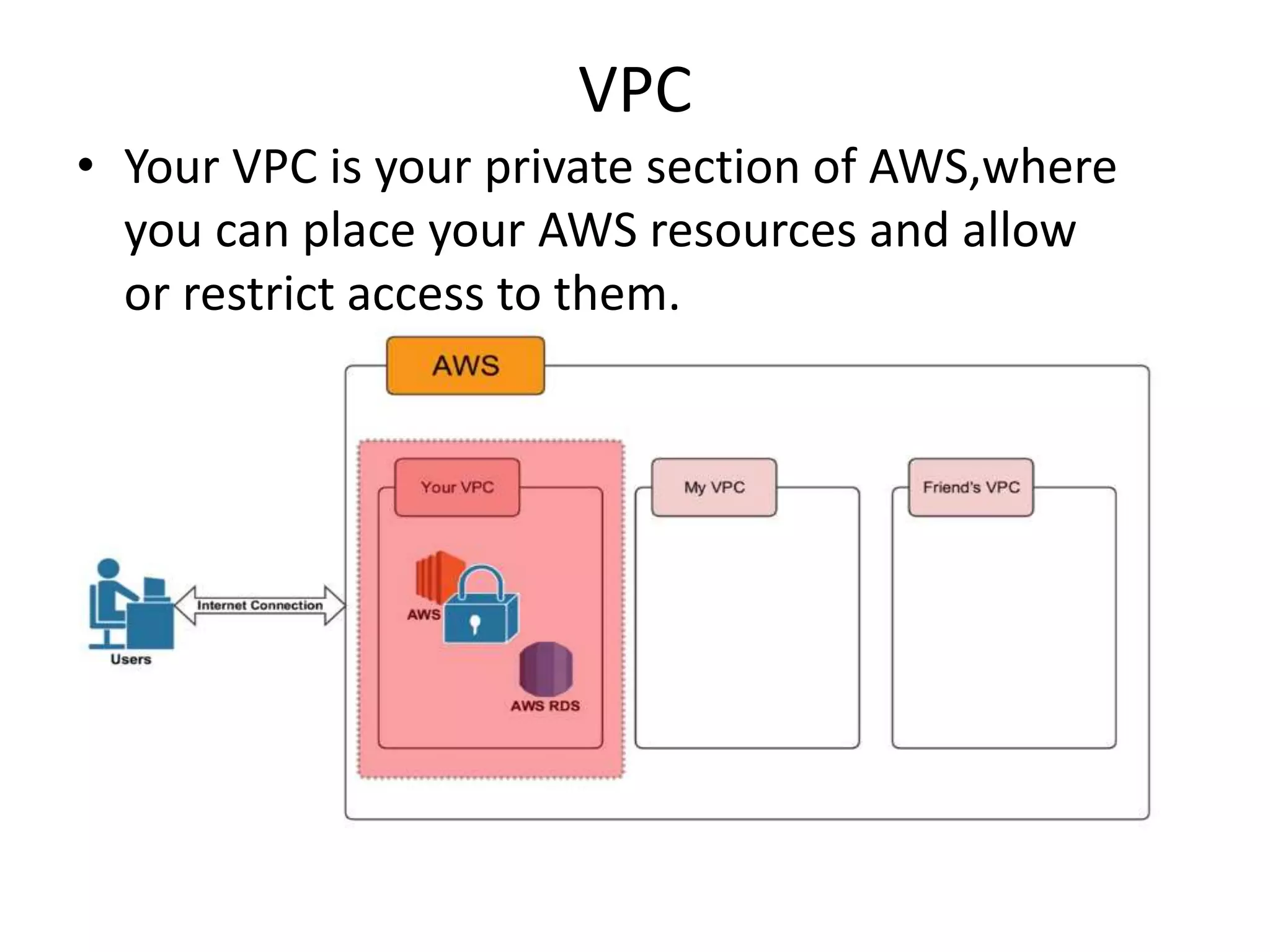

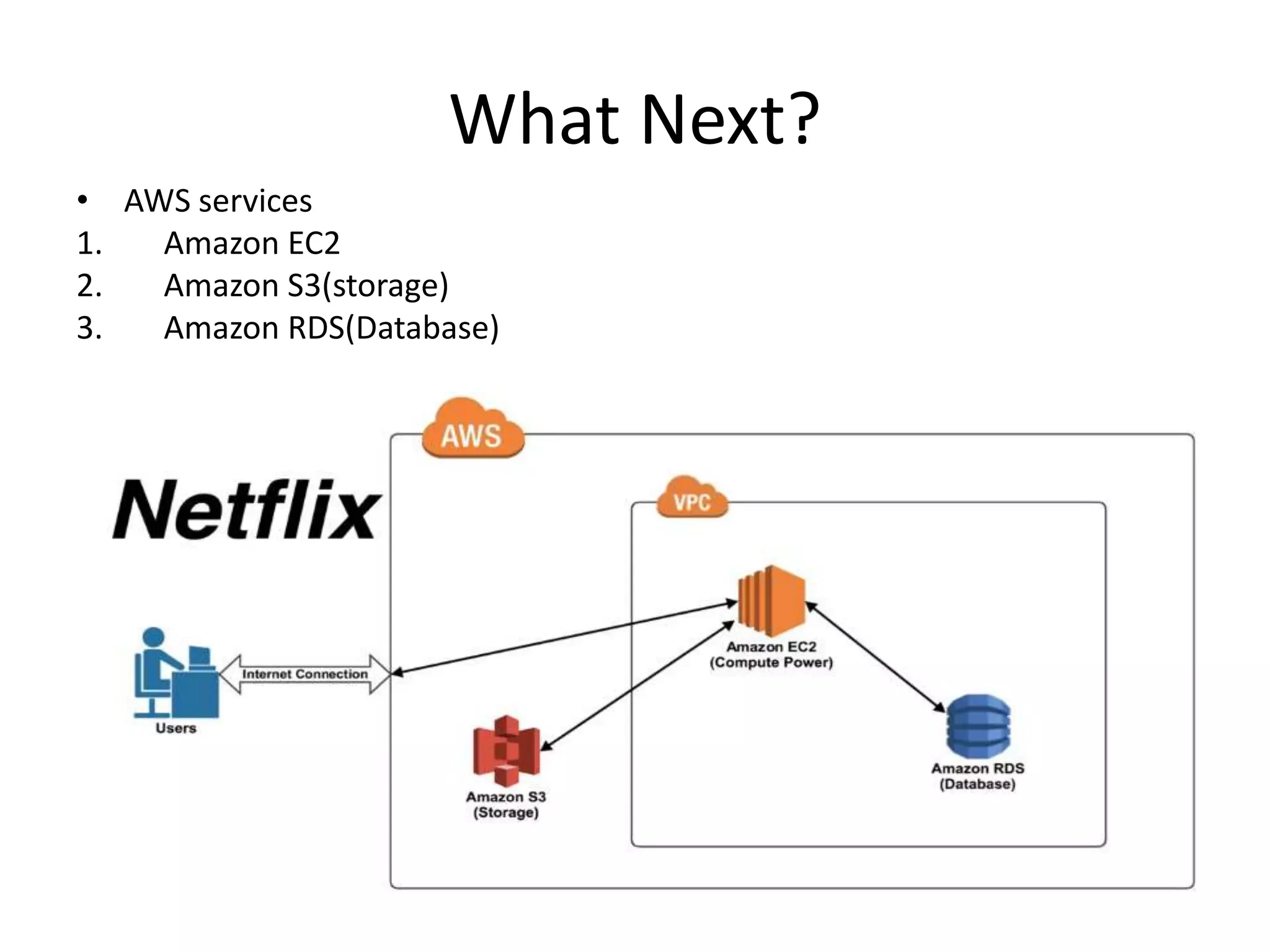

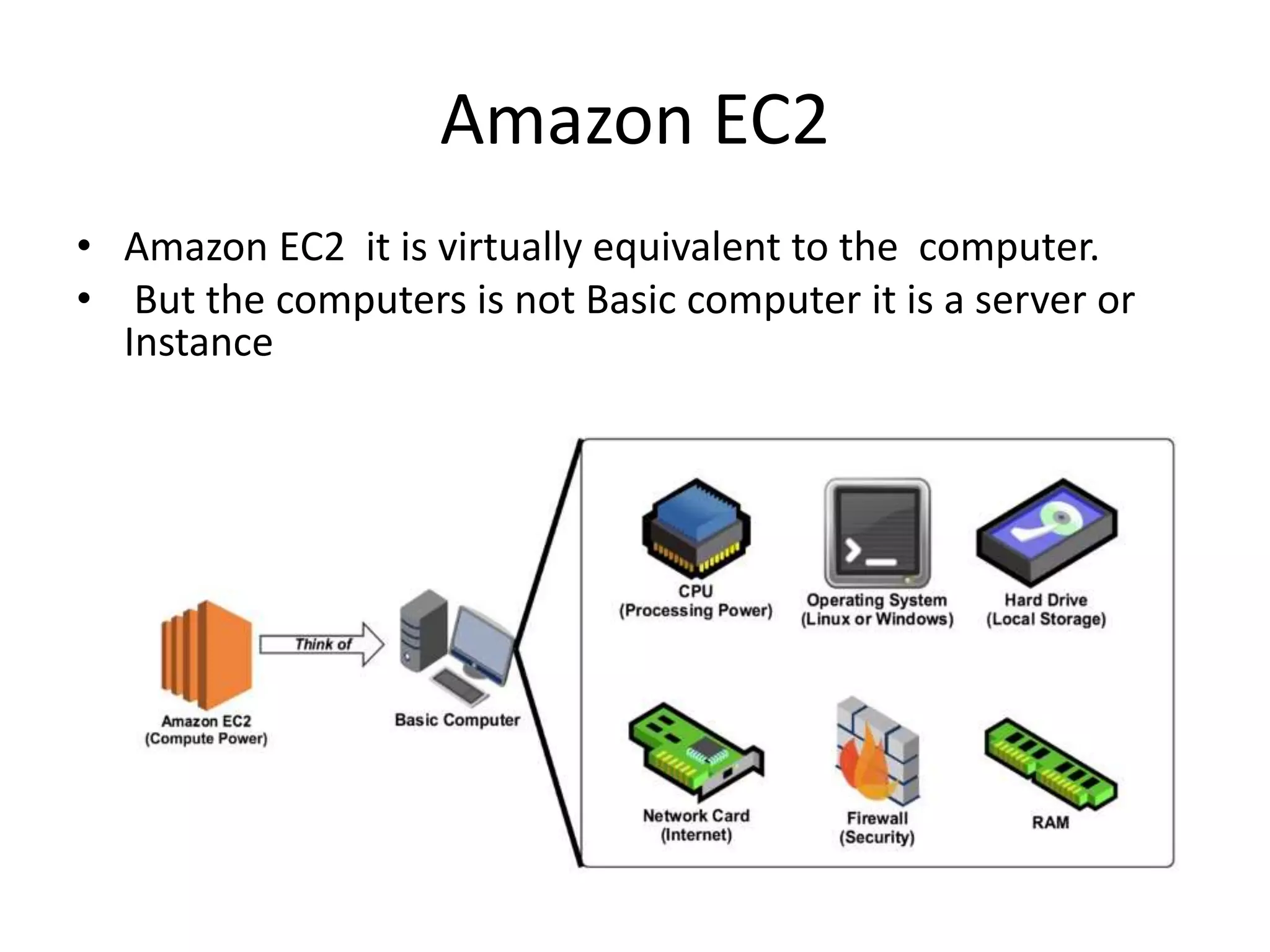

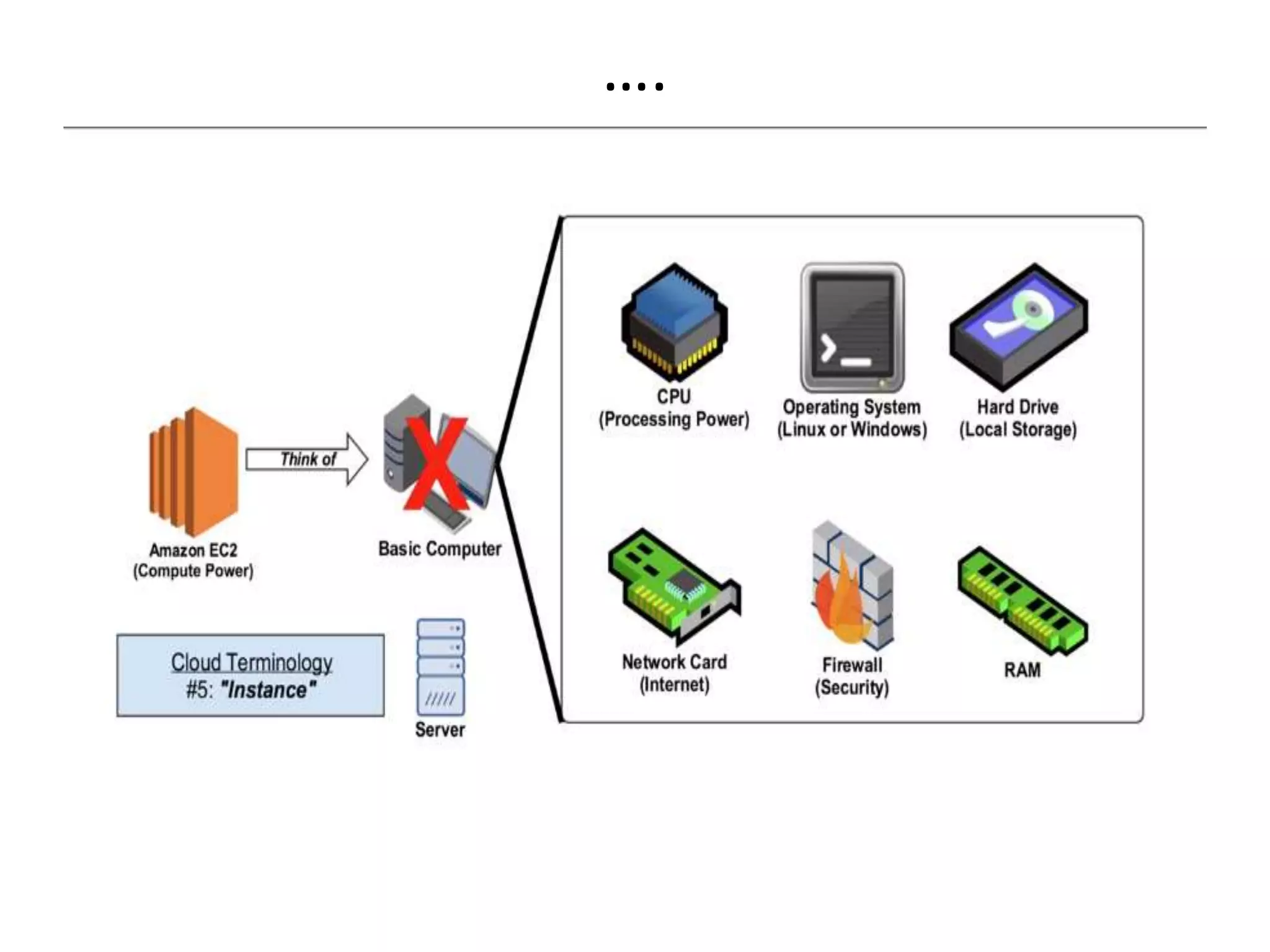

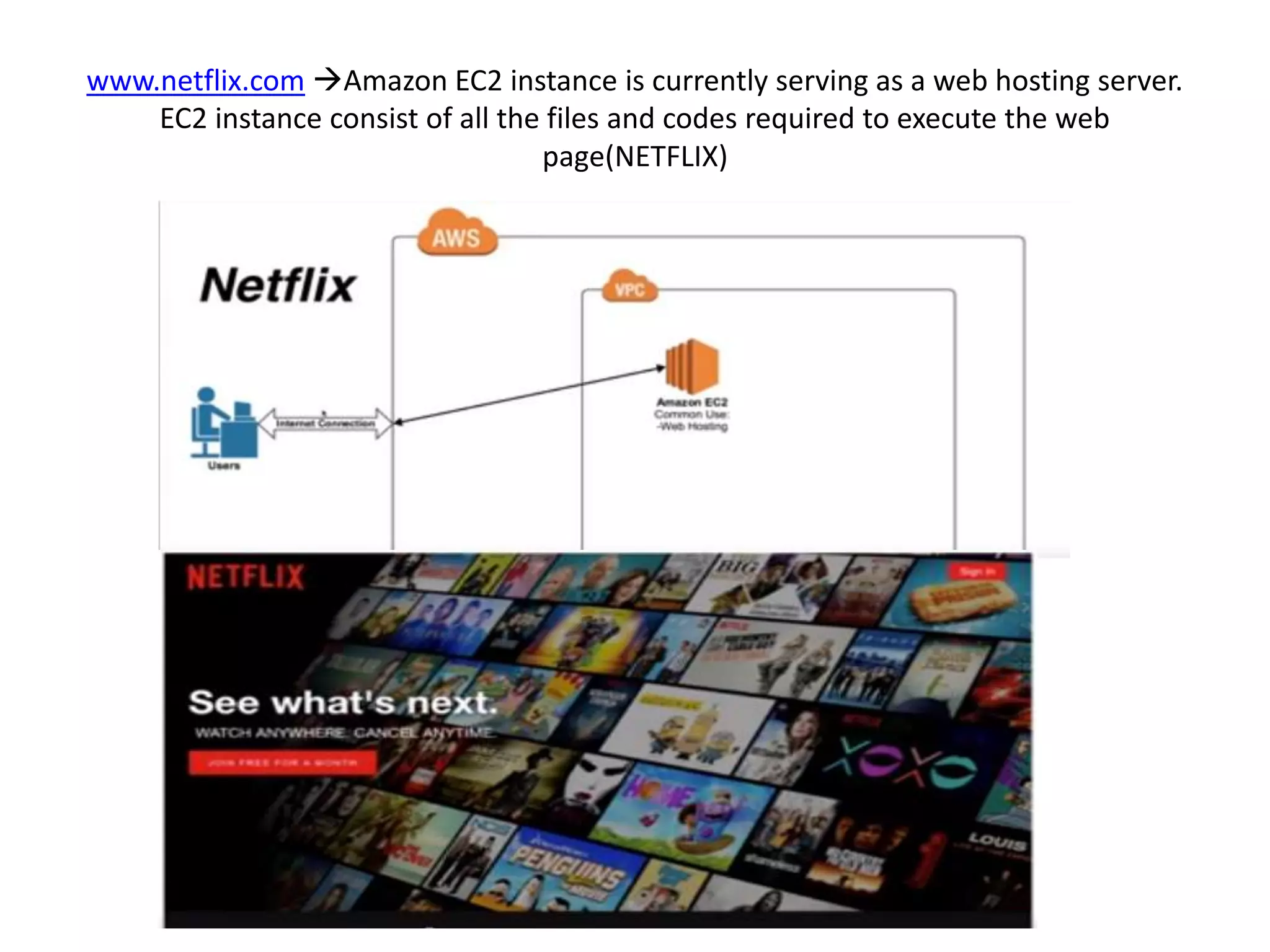

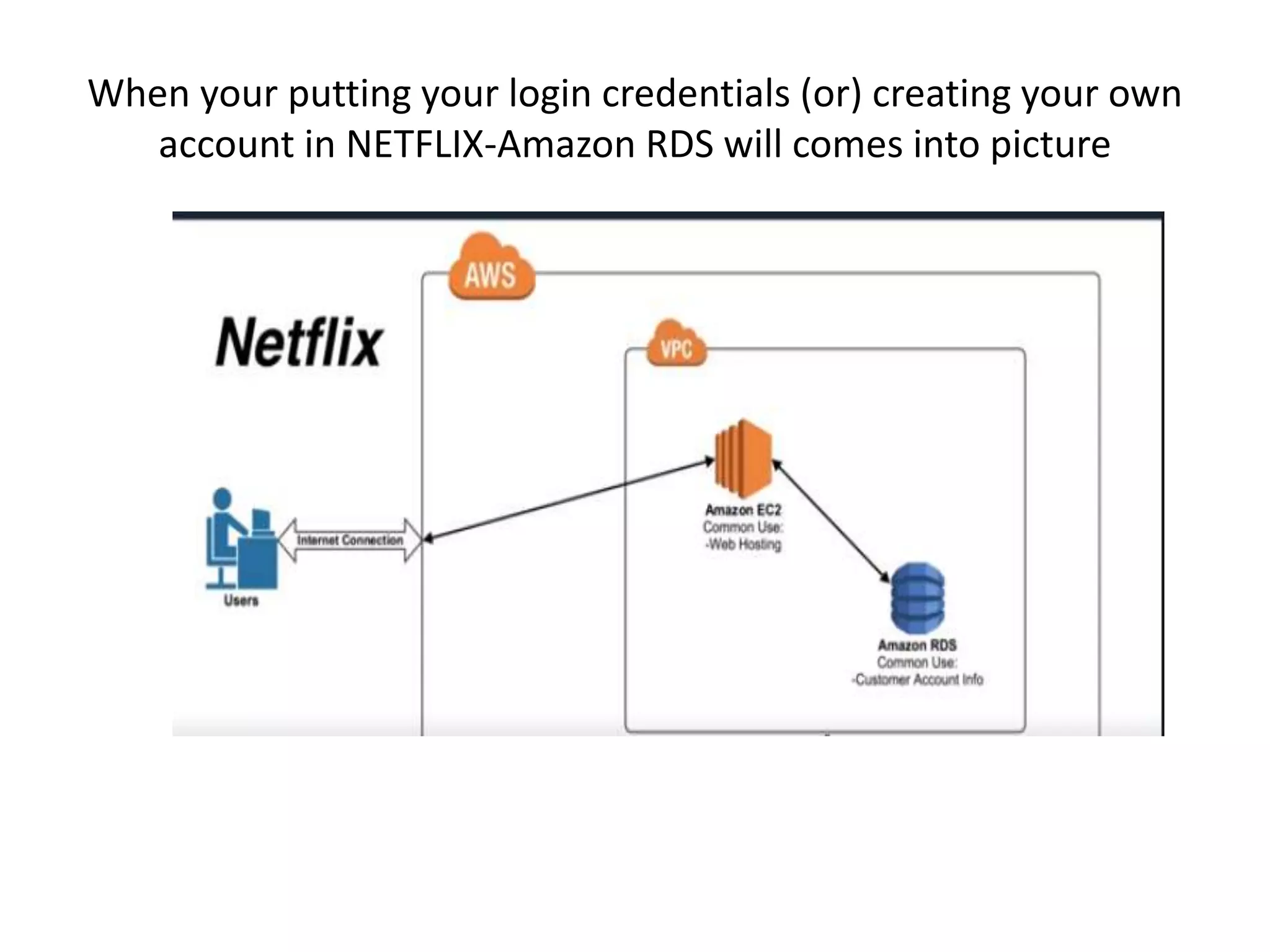

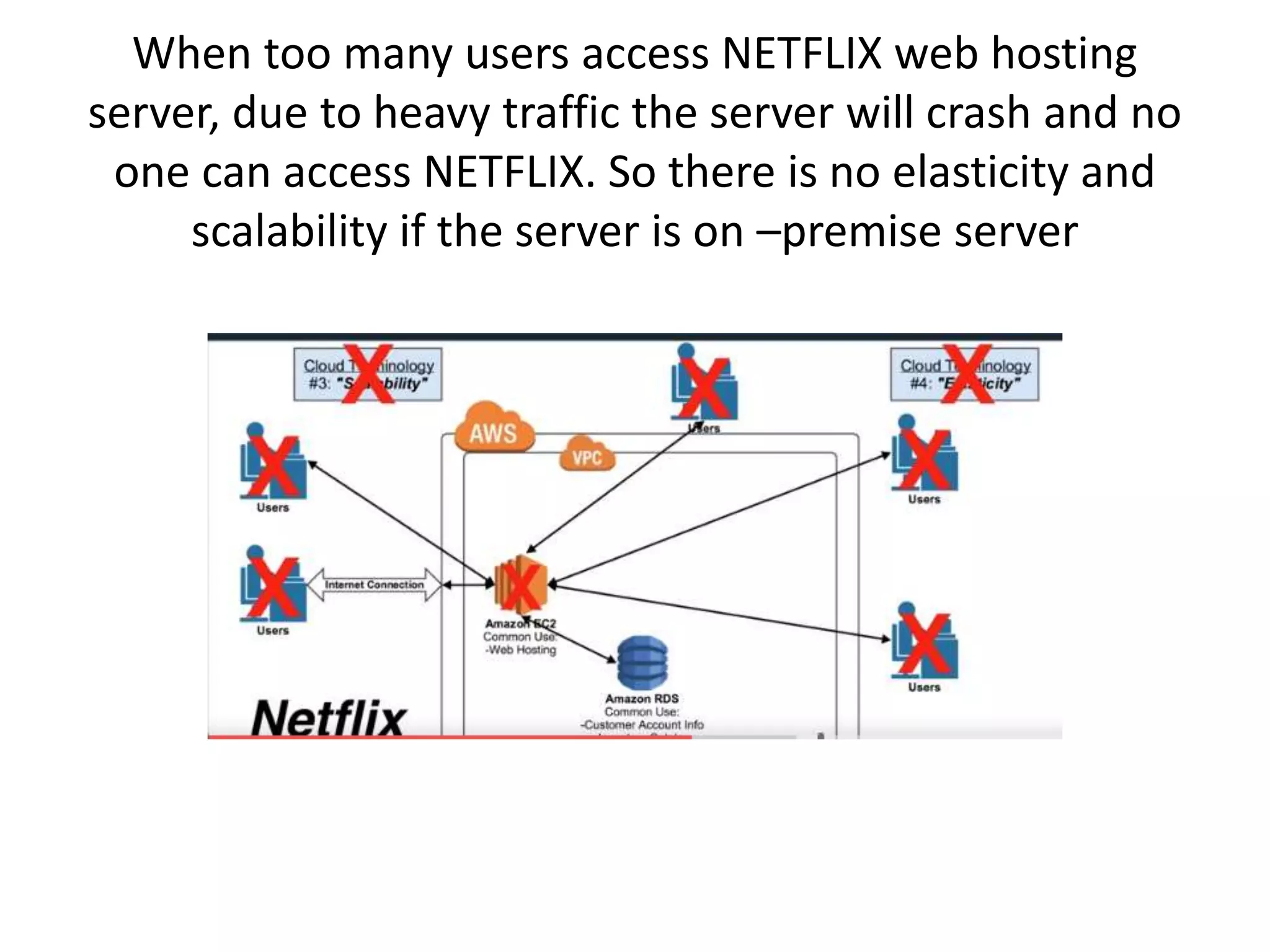

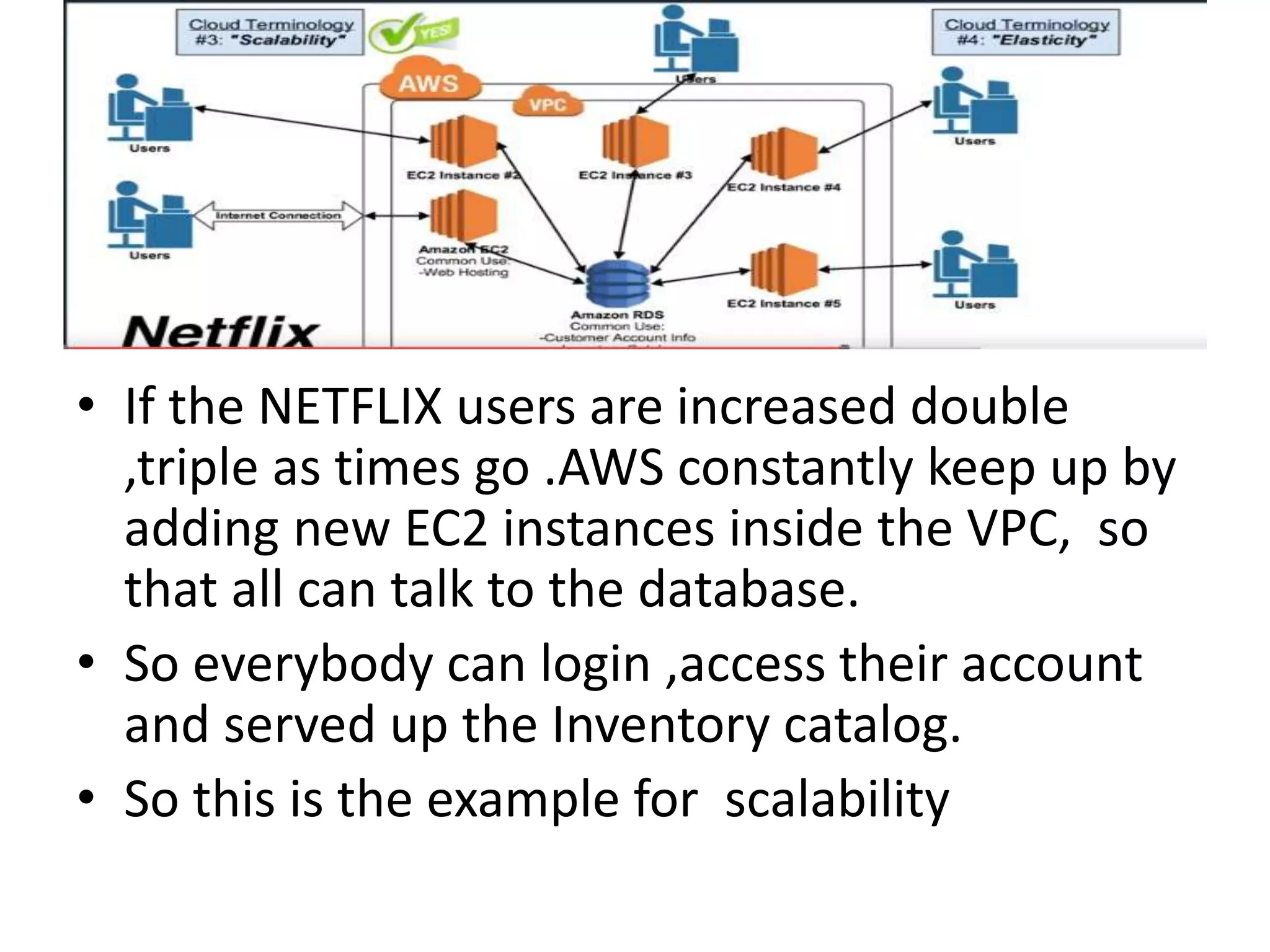

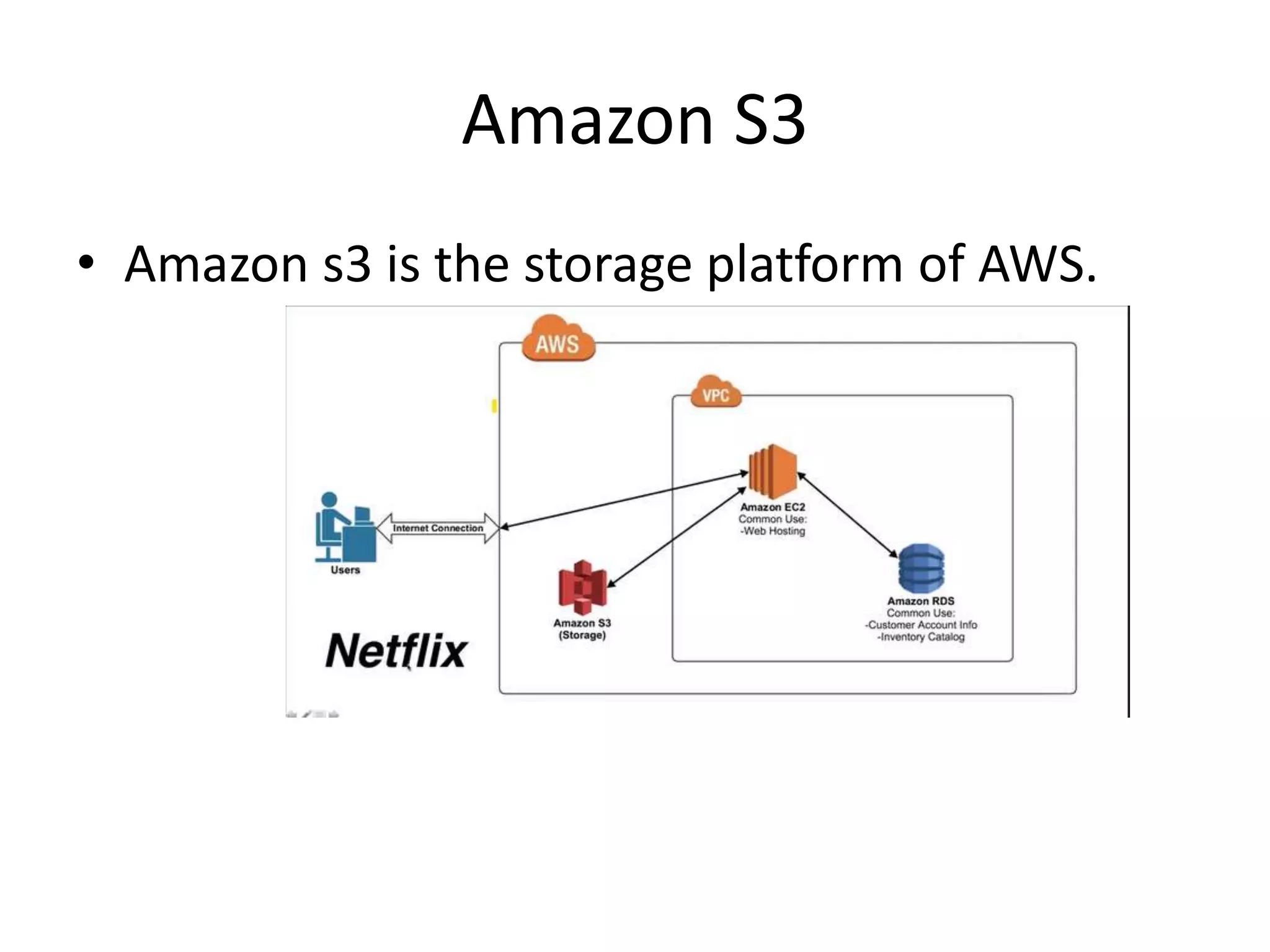

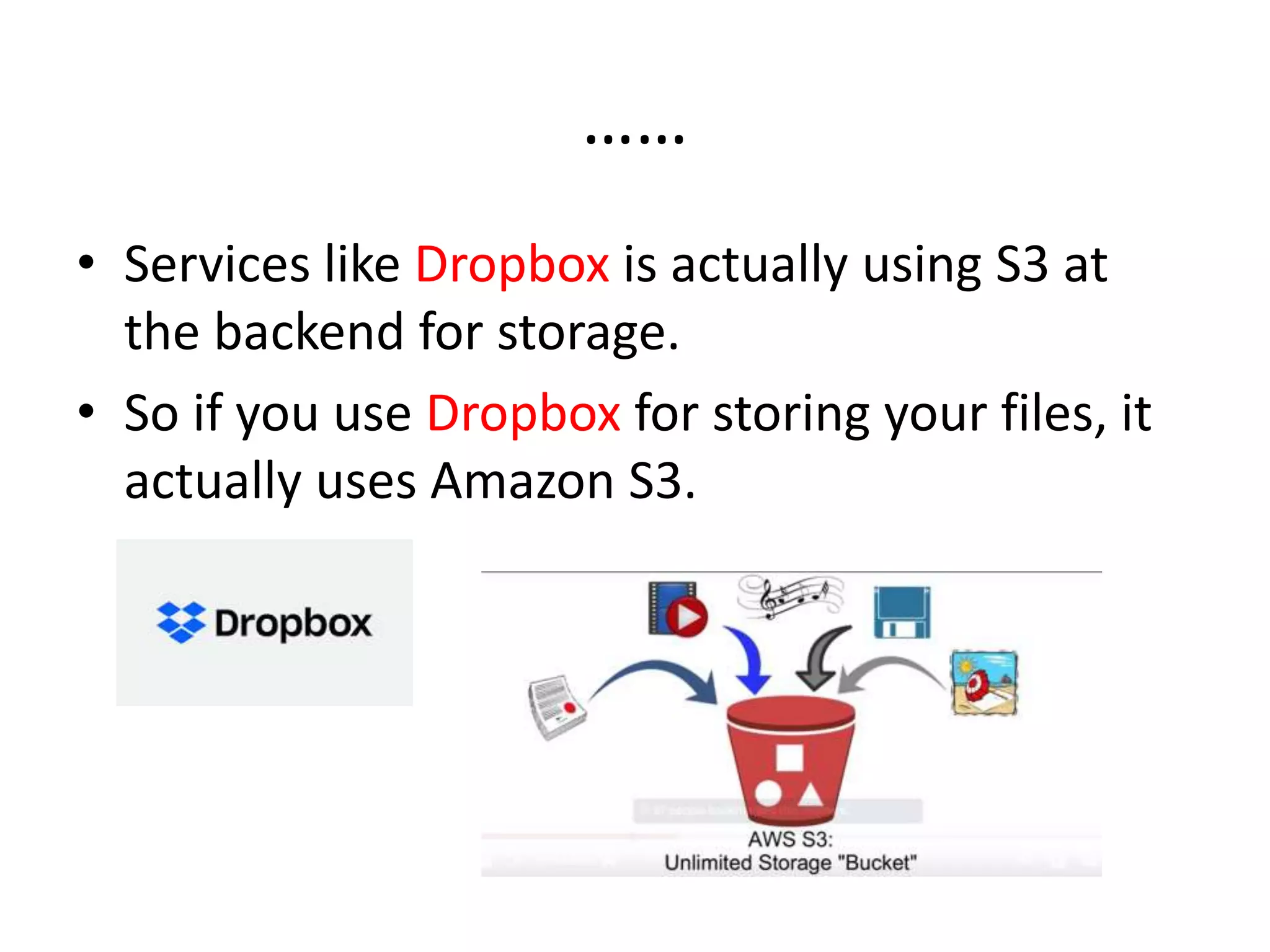

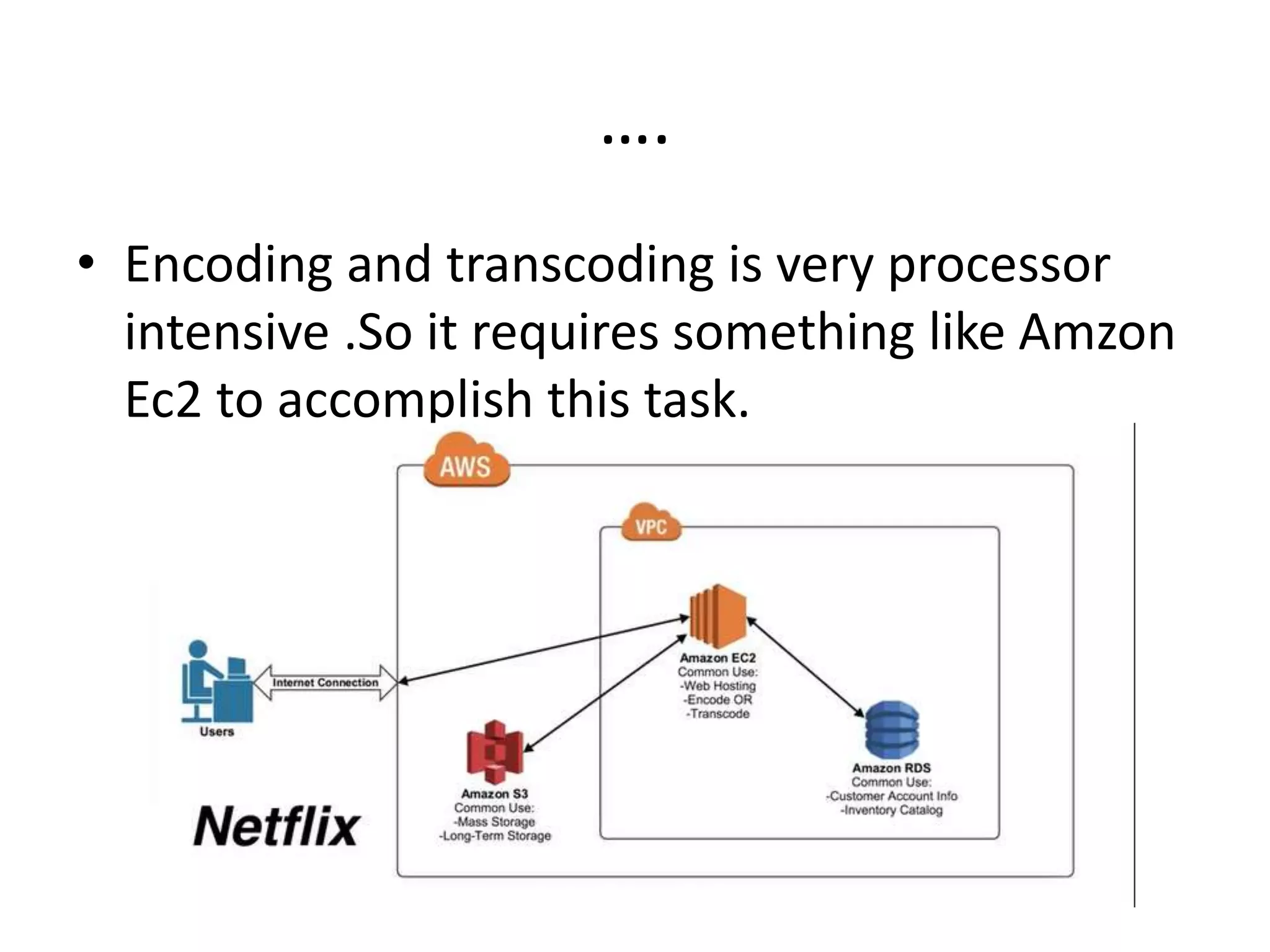

This document gives an overview of cloud computing and Amazon Web Services (AWS), explaining how cloud infrastructure such as AWS offers businesses high availability, fault tolerance, scalability, and elasticity. It discusses the advantages of cloud services over on-premises data centers, including easy resource allocation and cost savings. It then details specific AWS services (Amazon EC2, Amazon S3, and Amazon RDS), particularly how companies like Netflix use them for web hosting, storage, and database management.