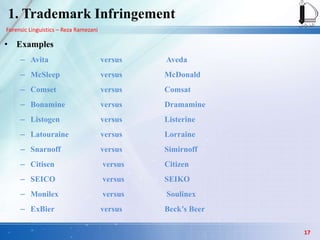

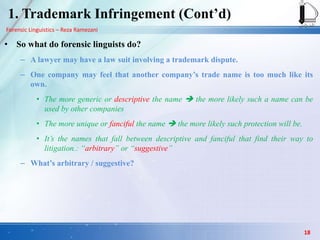

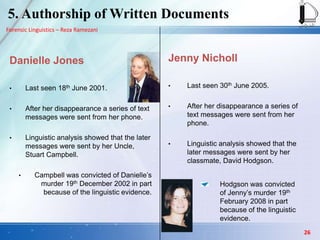

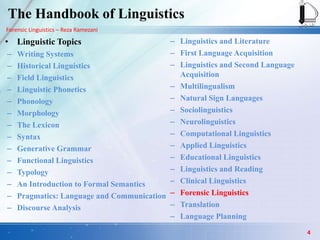

This document introduces forensic linguistics, which it defines as the application of linguistic analysis to legal issues and problems. It covers four areas of application in more detail: trademark infringement, product liability, speaker identification, and authorship analysis of written documents. Each area is illustrated with an example of how linguistic analysis can be used: comparing trademark names, analyzing warning labels, comparing voices in recordings, and profiling authors from linguistic patterns in their written texts.

![Forensic Linguistics – Reza Ramezani

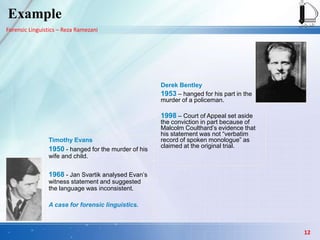

Derek Bentley statement

Bentley was hanged 28th January, 1953, for his part in the murder of a policeman. On 30th July, 1998 he was pardoned, partly on the basis of the evidence of Malcolm Coulthard who demonstrated linguistic anomalies in his statement.

In the original trial it was claimed by the prosecution that the statement was produced by Bentley as a monologue and in response to a simple request for his account of events.

[…] The policeman then pushed me down the stairs and I did not see any more. I knew we were going to break into the place, I did not know what we were going to get - just anything that was going. I did not have a gun and I did not know Chris had one until he shot. I now know that the policeman in uniform is dead. I should have mentioned that after the plainclothes policeman got up the drainpipe and arrested me, another policeman in uniform followed and I heard someone call him 'Mac'. He was with us when the other policeman was killed.

Example

13](https://image.slidesharecdn.com/anintroductiontoforensiclinguistics-130923011358-phpapp02/85/An-introduction-to-forensic-linguistics-13-320.jpg)

![Forensic Linguistics – Reza Ramezani

Derek Bentley statement

‘then’ occurs

- 1 in 500 words in general language,
- 1 in every 930 words in undisputed witness statements,
- 1 in every 78 words in police witness statements and
- 1 in 57 words in this statement.

‘I then’ occurs

- 1 in 16500 words in general language,
- 1 in 5700 words in undisputed witness statements,
- 1 in 100 words in police witness statements and
- 1 in every 190 words in this statement.

[…] My mother told me that they had called and I then ran after them. […] We all talked together and then Norman Parsley and Frank Fasey left. Chris Craig and I then caught a bus to Croydon. […] There was a little iron gate at the side. Chris then jumped over and I followed. Chris then climbed up the drainpipe to the roof and I followed. Up to then Chris had not said anything. We both got out on to the flat roof at the top. Then someone in the garden on the opposite side…

Example

14](https://image.slidesharecdn.com/anintroductiontoforensiclinguistics-130923011358-phpapp02/85/An-introduction-to-forensic-linguistics-14-320.jpg)
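The "1 in N words" rates quoted on the slide above can be computed for any text by dividing the total word count by the number of times a word or phrase occurs. The following is a minimal sketch of that calculation, not the procedure Coulthard actually used: the function names `tokenize` and `words_per_occurrence` are hypothetical, the tokenizer is a deliberately simple assumption, and the three-sentence excerpt is far too short to reproduce the figures on the slide.

```python
import re


def tokenize(text):
    """Lowercase the text and split it into word tokens (letters and apostrophes only)."""
    return re.findall(r"[a-z']+", text.lower())


def words_per_occurrence(text, phrase):
    """Return N such that `phrase` occurs about once every N words, or None if it is absent."""
    tokens = tokenize(text)
    target = tokenize(phrase)
    n = len(target)
    # Slide a window of len(target) tokens across the text and count exact matches.
    hits = sum(1 for i in range(len(tokens) - n + 1) if tokens[i:i + n] == target)
    return len(tokens) / hits if hits else None


# Illustrative excerpt only: three sentences taken from the statement quoted above.
excerpt = (
    "My mother told me that they had called and I then ran after them. "
    "We all talked together and then Norman Parsley and Frank Fasey left. "
    "Chris Craig and I then caught a bus to Croydon."
)

for phrase in ("then", "I then"):
    rate = words_per_occurrence(excerpt, phrase)
    print(f"'{phrase}' occurs about once every {rate} words in the excerpt")
```

Applying the same function to a reference corpus and to a disputed text gives directly comparable rates, which is the kind of comparison the slide reports for general language, witness statements, police statements, and the Bentley statement.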