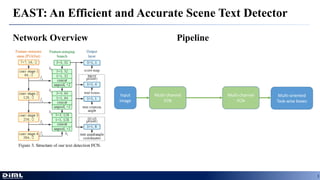

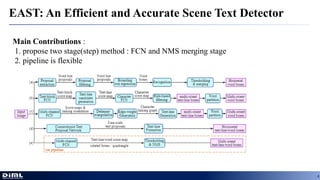

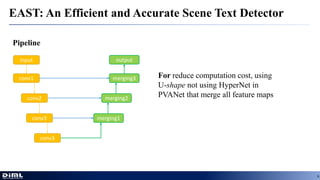

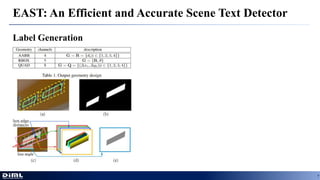

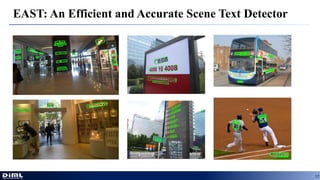

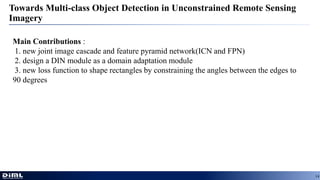

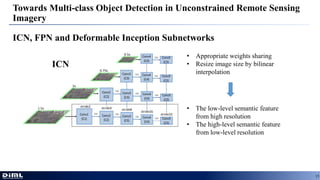

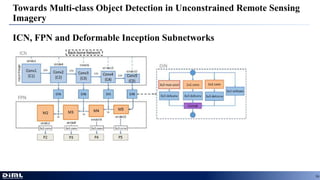

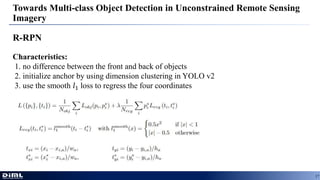

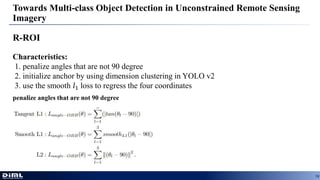

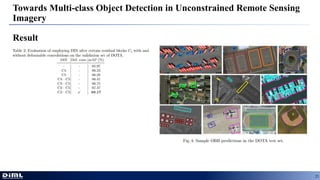

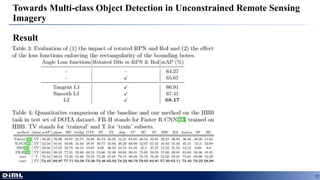

The document discusses advances in aerial object detection and draws on two main approaches: EAST, an efficient and accurate scene text detector, and a multi-class object detection framework for unconstrained remote sensing imagery. For EAST, it details the two-stage pipeline (a fully convolutional network followed by non-maximum suppression), the label generation scheme, and a locality-aware NMS that merges neighbouring predictions row by row, weighted by their scores, before running standard NMS. For remote sensing, it introduces a joint image cascade and feature pyramid network, together with a domain adaptation module and specialized loss functions for optimizing rectangular box predictions.
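To make the locality-aware NMS step more concrete, here is a minimal sketch in Python. It assumes a few simplifications that are not taken from the source. Boxes are axis-aligned rather than the rotated boxes or quadrilaterals EAST predicts. The input list is assumed to already be in row-first order. The IoU threshold of 0.3 is an illustrative default. The `Box` type and all function names are hypothetical. Following the scheme summarized above, consecutive boxes that overlap enough are merged by a score-weighted average of their coordinates, with the merged box's score set to the sum of the two scores. The surviving candidates then go through ordinary greedy NMS.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Box:
    # Hypothetical axis-aligned box (EAST itself predicts rotated boxes/quads).
    x1: float
    y1: float
    x2: float
    y2: float
    score: float


def iou(a: Box, b: Box) -> float:
    """Intersection-over-union of two axis-aligned boxes."""
    ix1, iy1 = max(a.x1, b.x1), max(a.y1, b.y1)
    ix2, iy2 = min(a.x2, b.x2), min(a.y2, b.y2)
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a.x2 - a.x1) * (a.y2 - a.y1)
    area_b = (b.x2 - b.x1) * (b.y2 - b.y1)
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def weighted_merge(g: Box, p: Box) -> Box:
    """Score-weighted average of coordinates; merged score is the score sum."""
    w = g.score + p.score

    def avg(u: float, v: float) -> float:
        return (g.score * u + p.score * v) / w

    return Box(avg(g.x1, p.x1), avg(g.y1, p.y1),
               avg(g.x2, p.x2), avg(g.y2, p.y2), w)


def standard_nms(boxes: List[Box], iou_threshold: float) -> List[Box]:
    """Greedy NMS: keep the highest-scoring box, drop boxes overlapping it."""
    keep: List[Box] = []
    for b in sorted(boxes, key=lambda b: b.score, reverse=True):
        if all(iou(b, k) <= iou_threshold for k in keep):
            keep.append(b)
    return keep


def locality_aware_nms(boxes: List[Box], iou_threshold: float = 0.3) -> List[Box]:
    """Merge neighbouring boxes in one pass, then run standard NMS.

    Assumes `boxes` is already in row-first order, so that boxes
    belonging to the same object tend to arrive consecutively.
    """
    merged: List[Box] = []
    current: Optional[Box] = None
    for g in boxes:
        if current is not None and iou(g, current) > iou_threshold:
            current = weighted_merge(g, current)
        else:
            if current is not None:
                merged.append(current)
            current = g
    if current is not None:
        merged.append(current)
    return standard_nms(merged, iou_threshold)


if __name__ == "__main__":
    demo = [Box(0, 0, 10, 10, 0.9), Box(1, 0, 11, 10, 0.8),
            Box(50, 50, 60, 60, 0.7)]
    for b in locality_aware_nms(demo):
        print(b)
```

The design point is the single linear merging pass. When neighbouring predictions are highly redundant, as dense per-pixel outputs usually are, this pass shrinks the candidate set substantially before the quadratic standard NMS runs. In the demo, the two overlapping boxes collapse into one candidate and the distant box survives unchanged.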