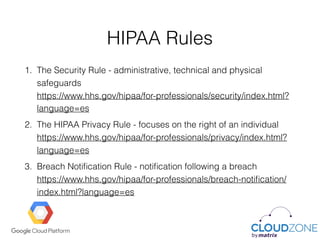

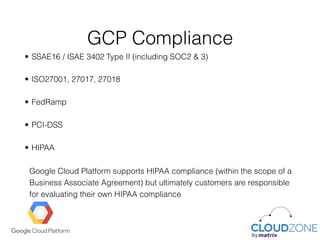

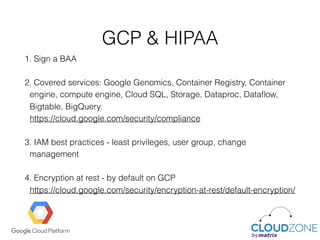

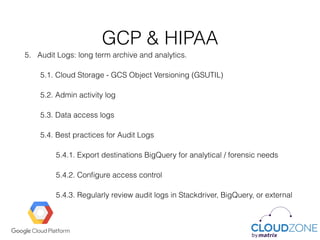

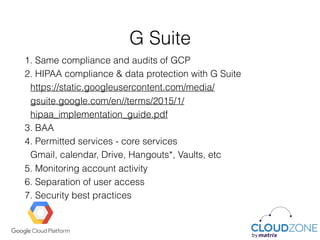

The document outlines HIPAA regulations and compliance requirements for Google Cloud Platform (GCP), emphasizing the shared responsibility model between Google and its customers. It details the key HIPAA rules, each party's responsibilities for protecting protected health information (PHI), and the compliance frameworks that apply to GCP, including required agreements such as the business associate agreement (BAA). It also covers best practices for using GCP and G Suite in a HIPAA-compliant way to protect sensitive healthcare data.