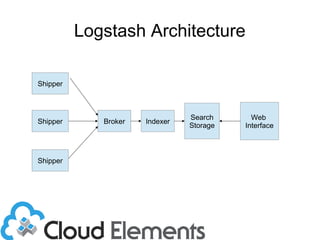

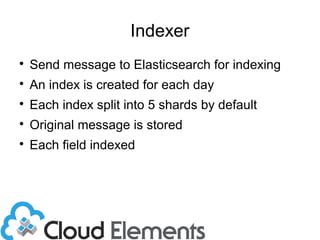

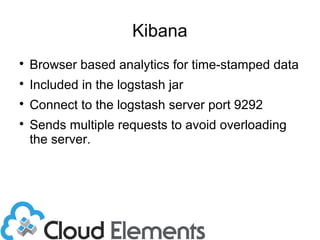

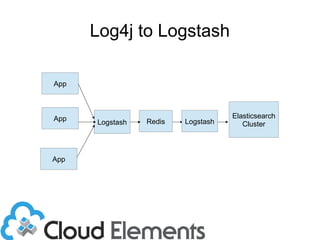

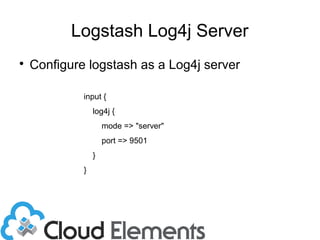

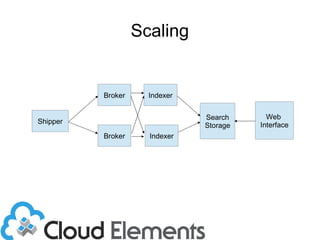

The document outlines log scaling and analytics using Logstash, addressing common logging challenges such as load on databases and difficulties in log format management. It describes Logstash as an open-source tool that processes, filters, and outputs logs to various destinations while integrating with Elasticsearch for indexing and Kibana for analytics. Additionally, it provides details on the architecture, plugins, and configuration necessary for effective log management and data analysis.
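As a rough illustration of the process, filter, and output stages described above, a minimal pipeline might look like the sketch below. It is not taken from the slides: the choice of `stdin` as input, the `COMBINEDAPACHELOG` grok pattern, and the `rubydebug` codec are assumptions picked only to keep the example small.

```
# Minimal sketch of a three-stage Logstash pipeline (illustrative, not from the deck).
input {
  # Read raw log lines from standard input.
  stdin {}
}

filter {
  # Parse each line as an Apache combined access log entry (assumed log format).
  grok {
    match => { "message" => "%{COMBINEDAPACHELOG}" }
  }
}

output {
  # Print the structured event so the parsed fields can be inspected.
  stdout { codec => rubydebug }
}
```

Swapping the `stdout` output for an `elasticsearch` output is what would make the parsed events available for indexing and for Kibana.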

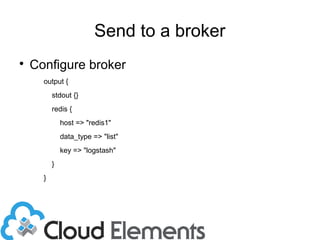

![Sending to Broker

output {

stdout {}

redis {

host => ["redis1", "redis2"]

data_type => "list"

shuffle_hosts => true

key => "logstash"

}

}](https://image.slidesharecdn.com/logstash-140409105729-phpapp02/85/Scalable-Logging-and-Analytics-with-LogStash-21-320.jpg)
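The slide above covers only the shipper side, which pushes events onto a Redis list named `logstash`. A matching indexer that drains that list could be sketched as follows. This is an assumption about how the other half might be configured, not something shown on the slide: the Elasticsearch address is a placeholder, and the `hosts` option name applies to newer Logstash releases (older releases used `host`).

```
# Hypothetical indexer-side config that consumes what the shipper above publishes.
input {
  redis {
    # The redis input takes a single host, so run one input block per broker.
    host => "redis1"
    data_type => "list"
    # Must match the key used by the shipper's redis output.
    key => "logstash"
  }
}

output {
  elasticsearch {
    # Placeholder address; replace with the actual cluster nodes.
    hosts => ["localhost:9200"]
  }
}
```

Because `data_type => "list"` makes Redis act as a queue, each event is delivered to only one indexer, so several indexers can read the same key to share the load.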