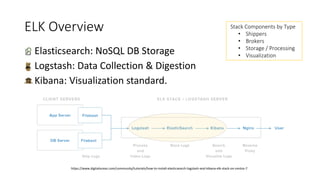

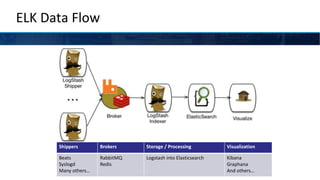

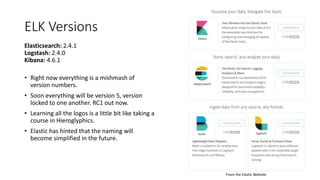

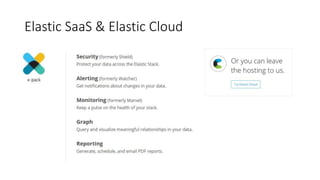

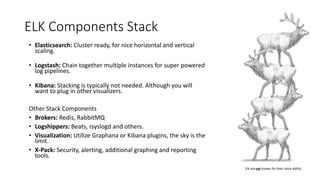

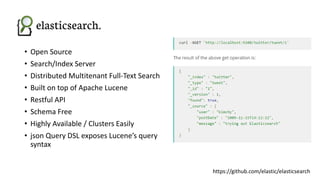

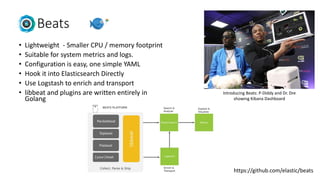

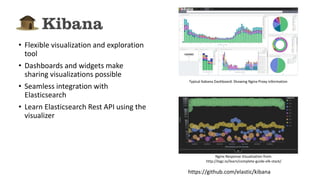

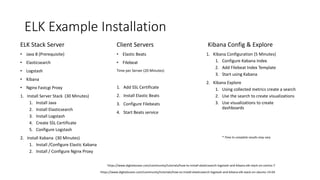

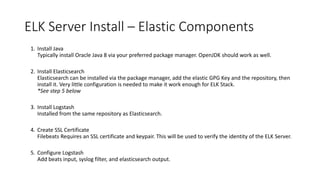

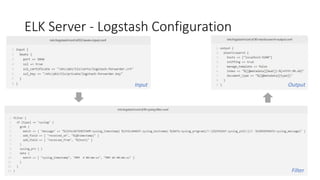

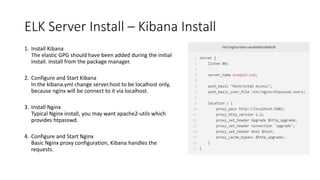

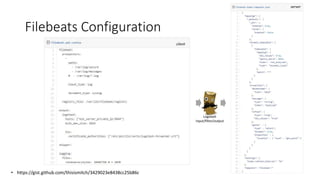

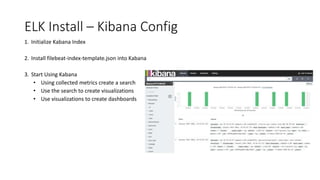

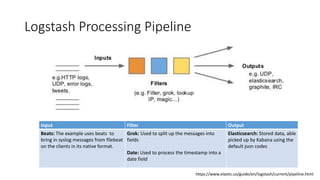

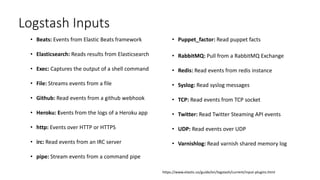

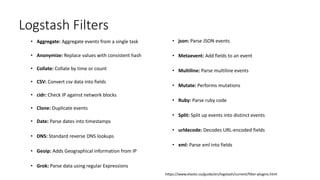

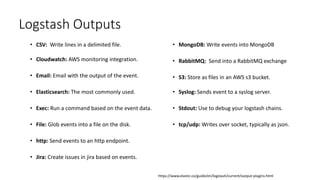

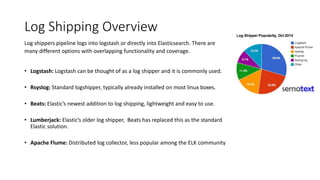

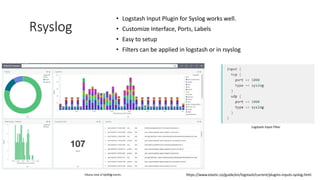

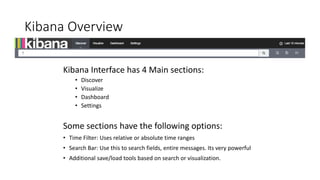

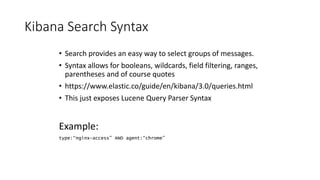

The document provides an overview of the ELK stack (Elasticsearch, Logstash, and Kibana), covering its components, installation process, and production considerations. It describes the architecture and data flow, discusses the challenges of using ELK for logging and analytics, and emphasizes the stack's ability to scale and manage log data effectively. It also addresses the plugins, configurations, and security measures needed to optimize the ELK stack in a production environment.