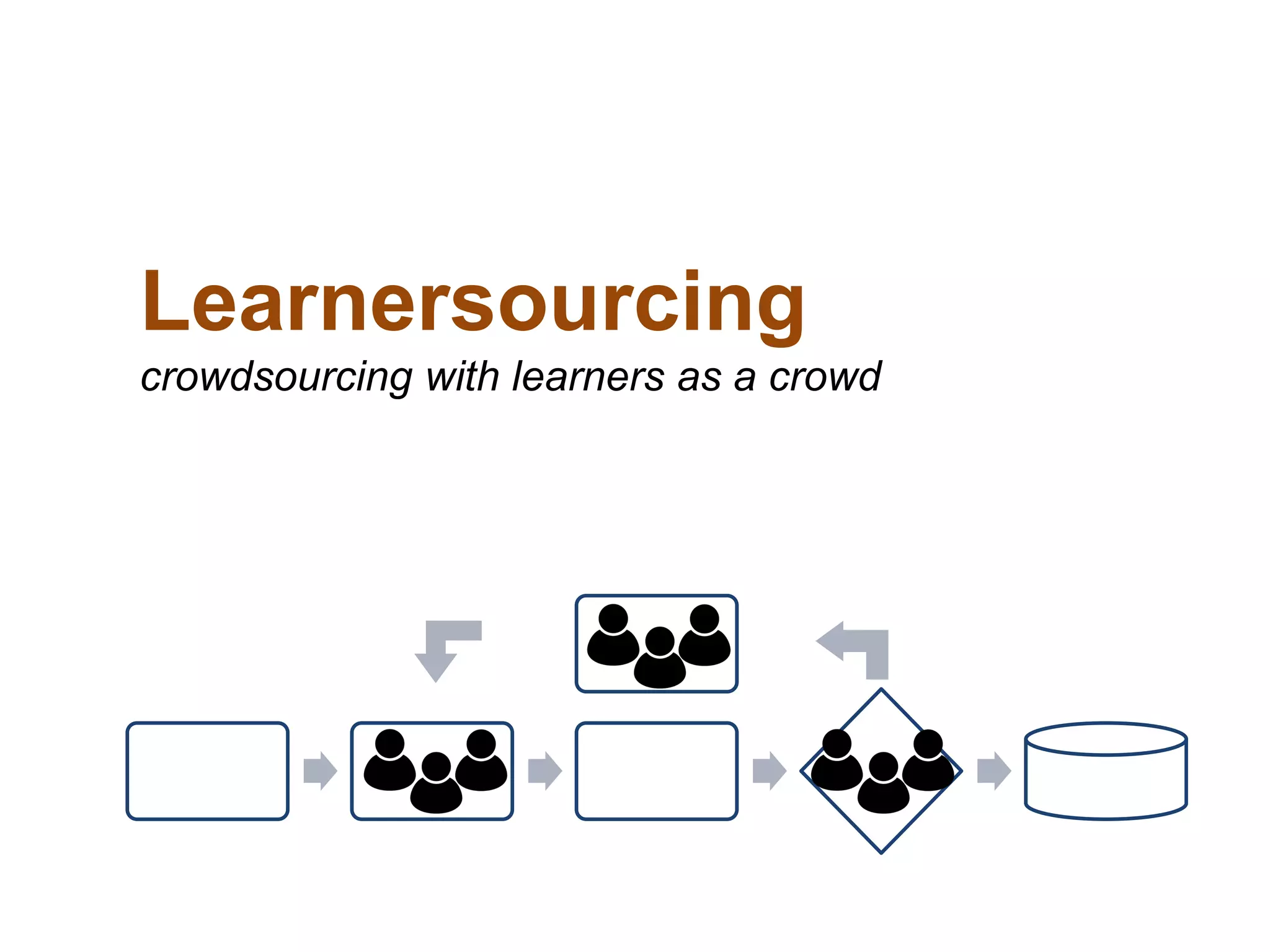

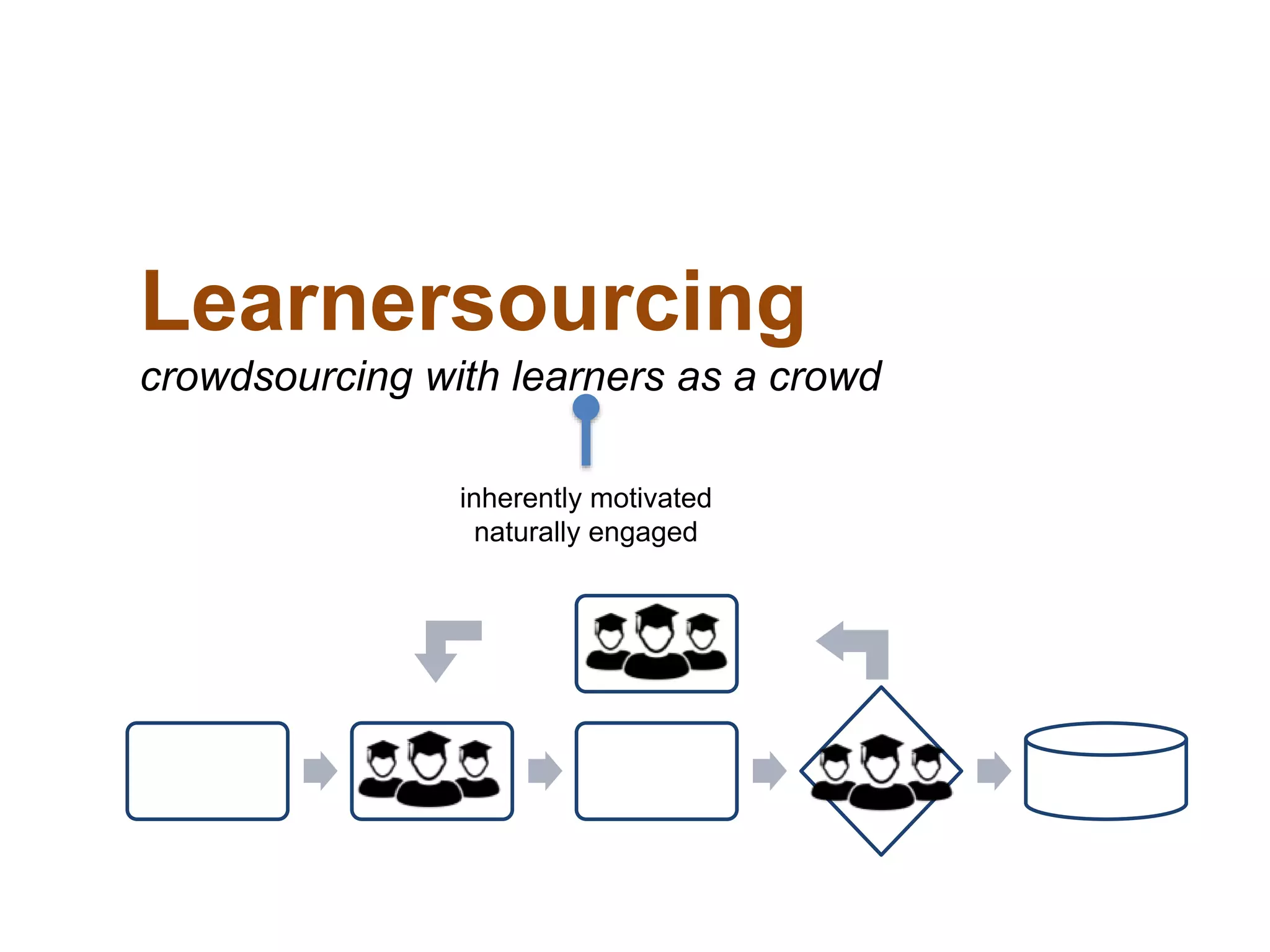

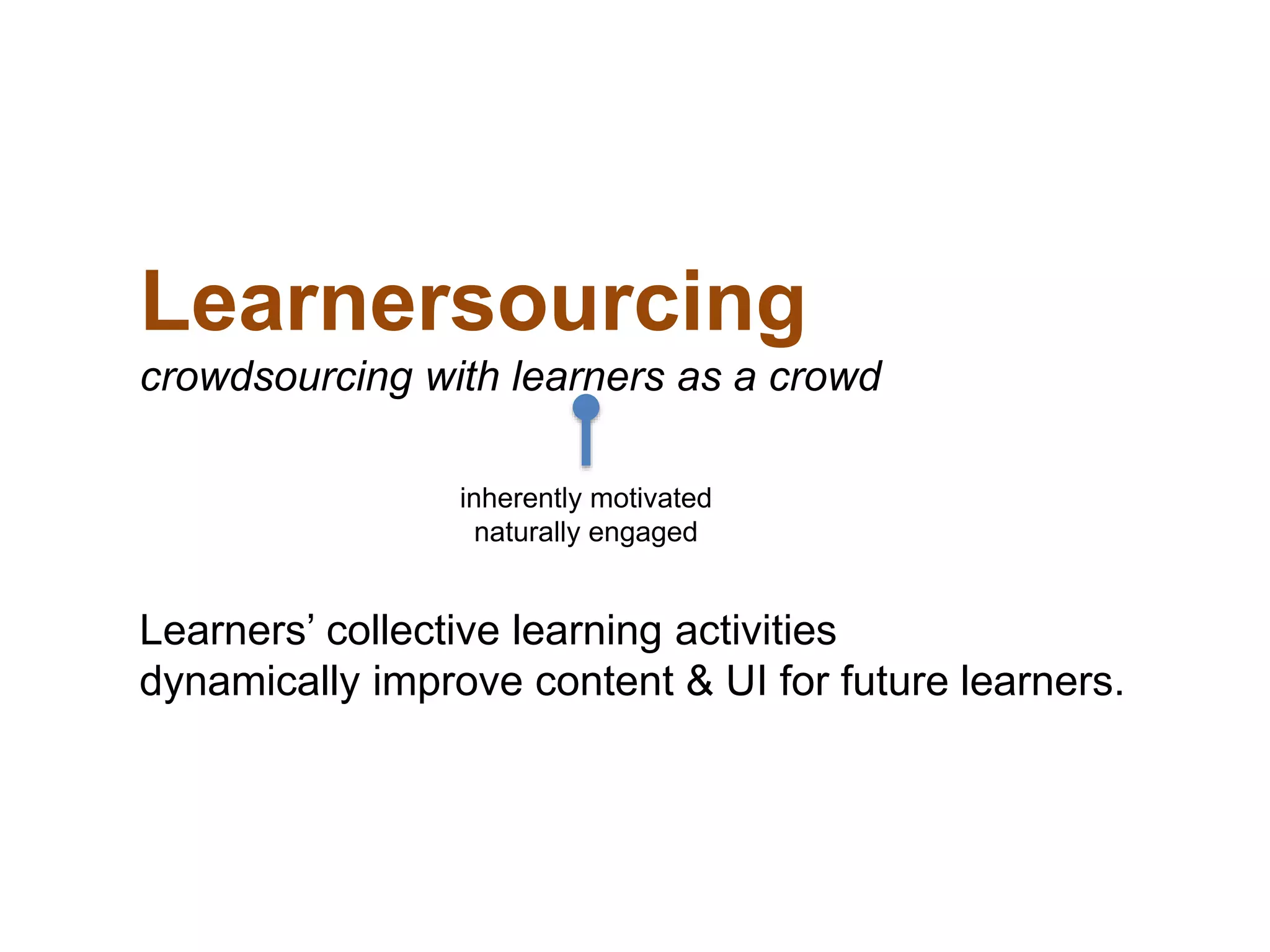

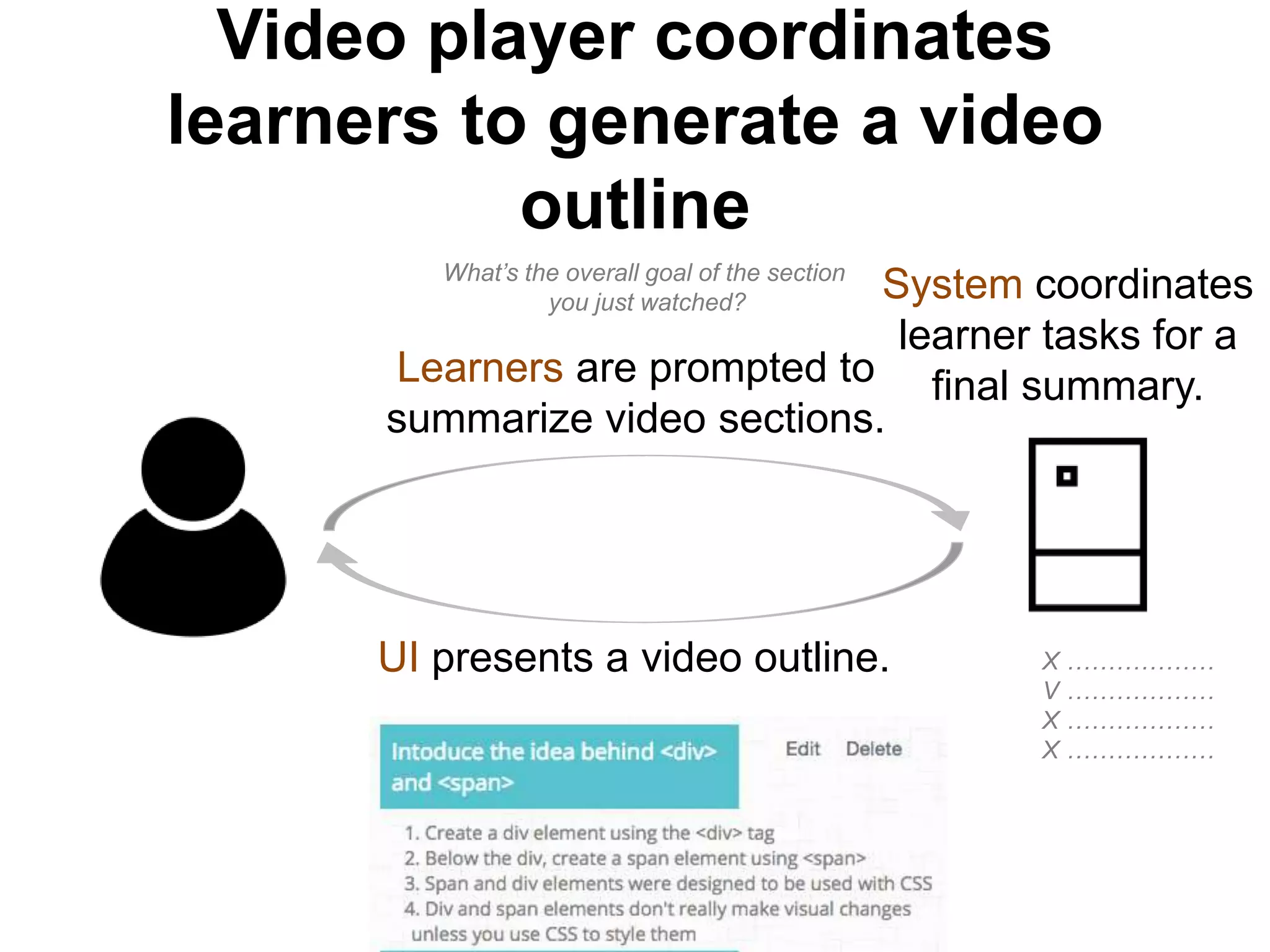

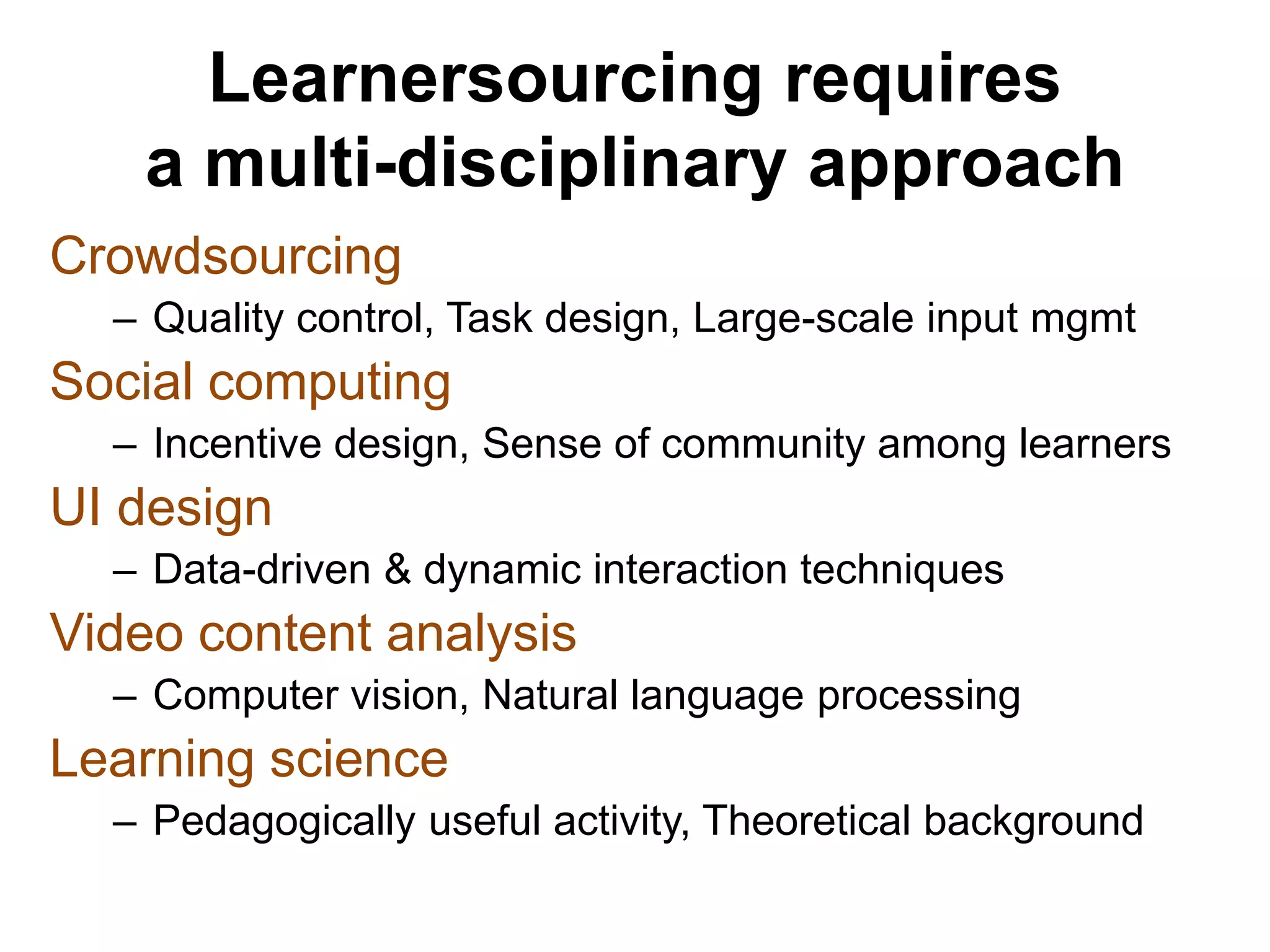

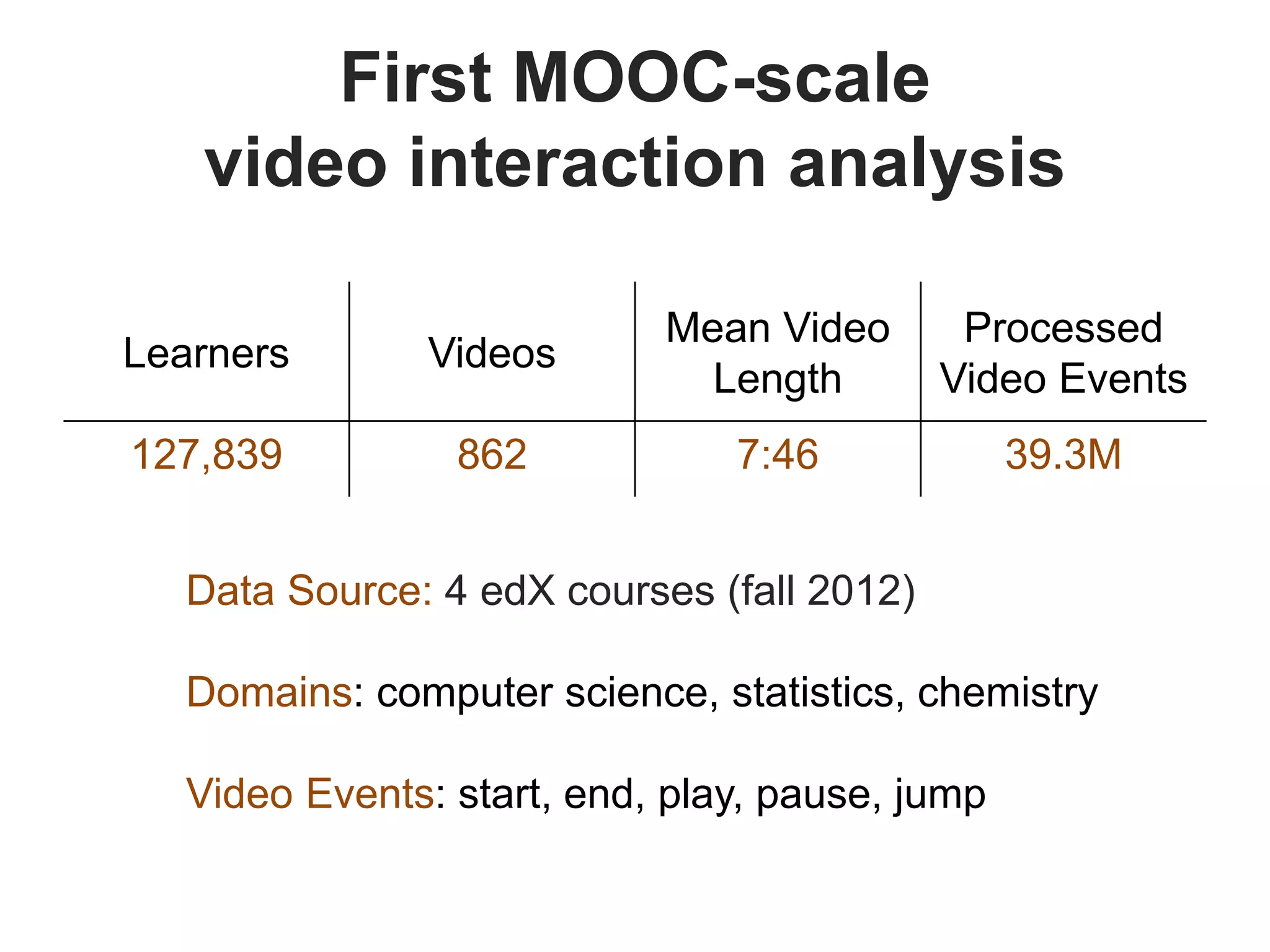

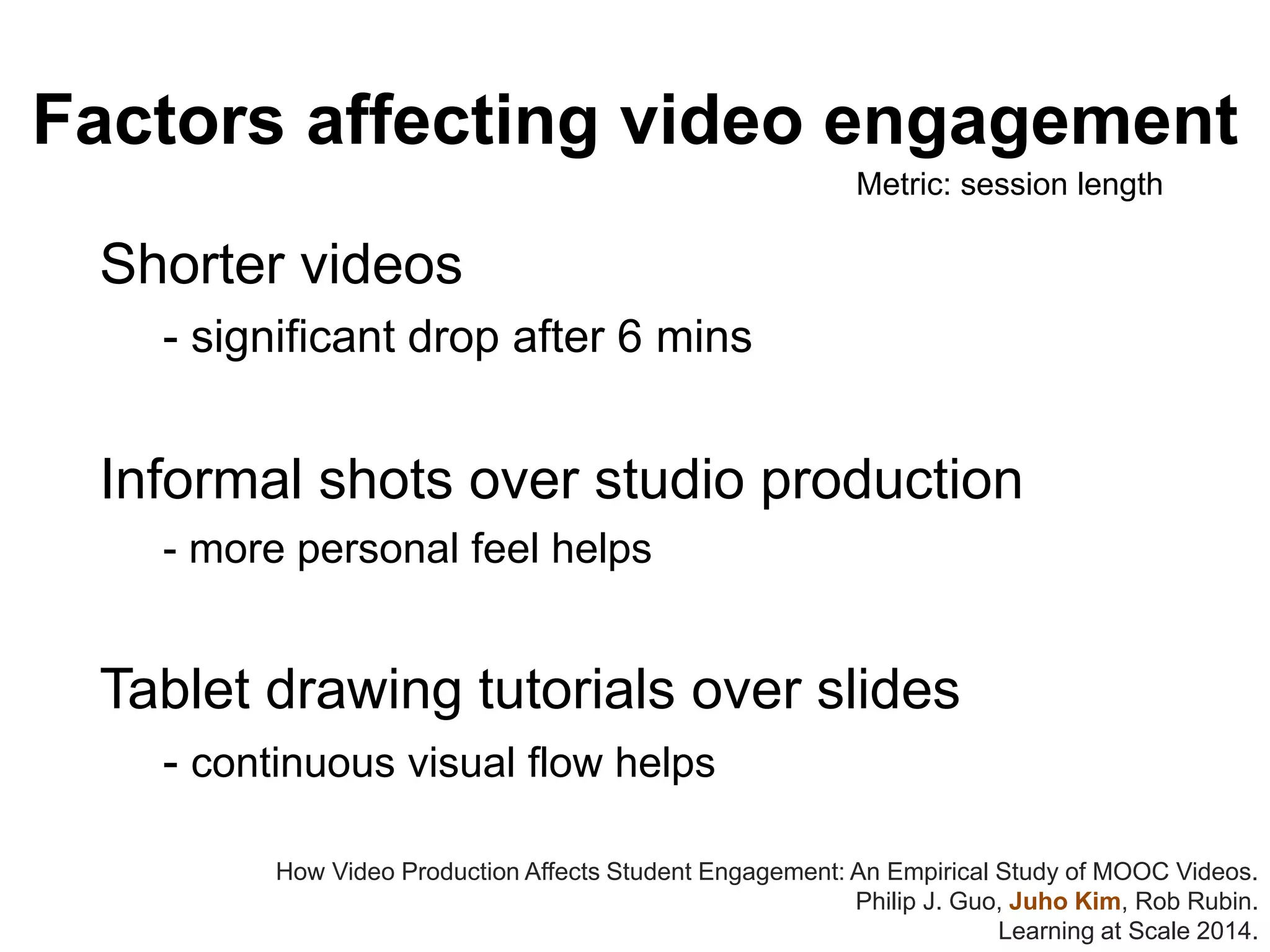

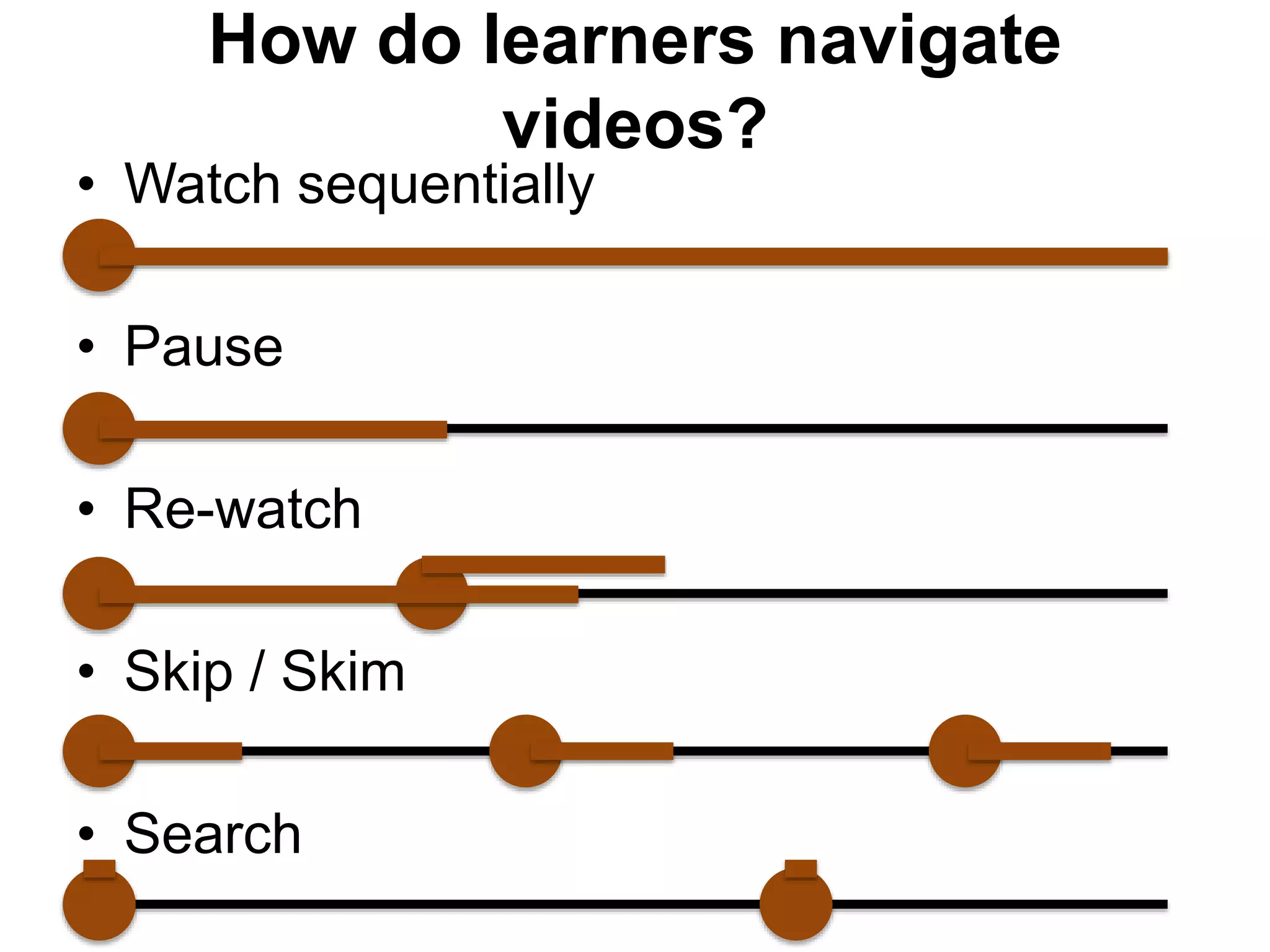

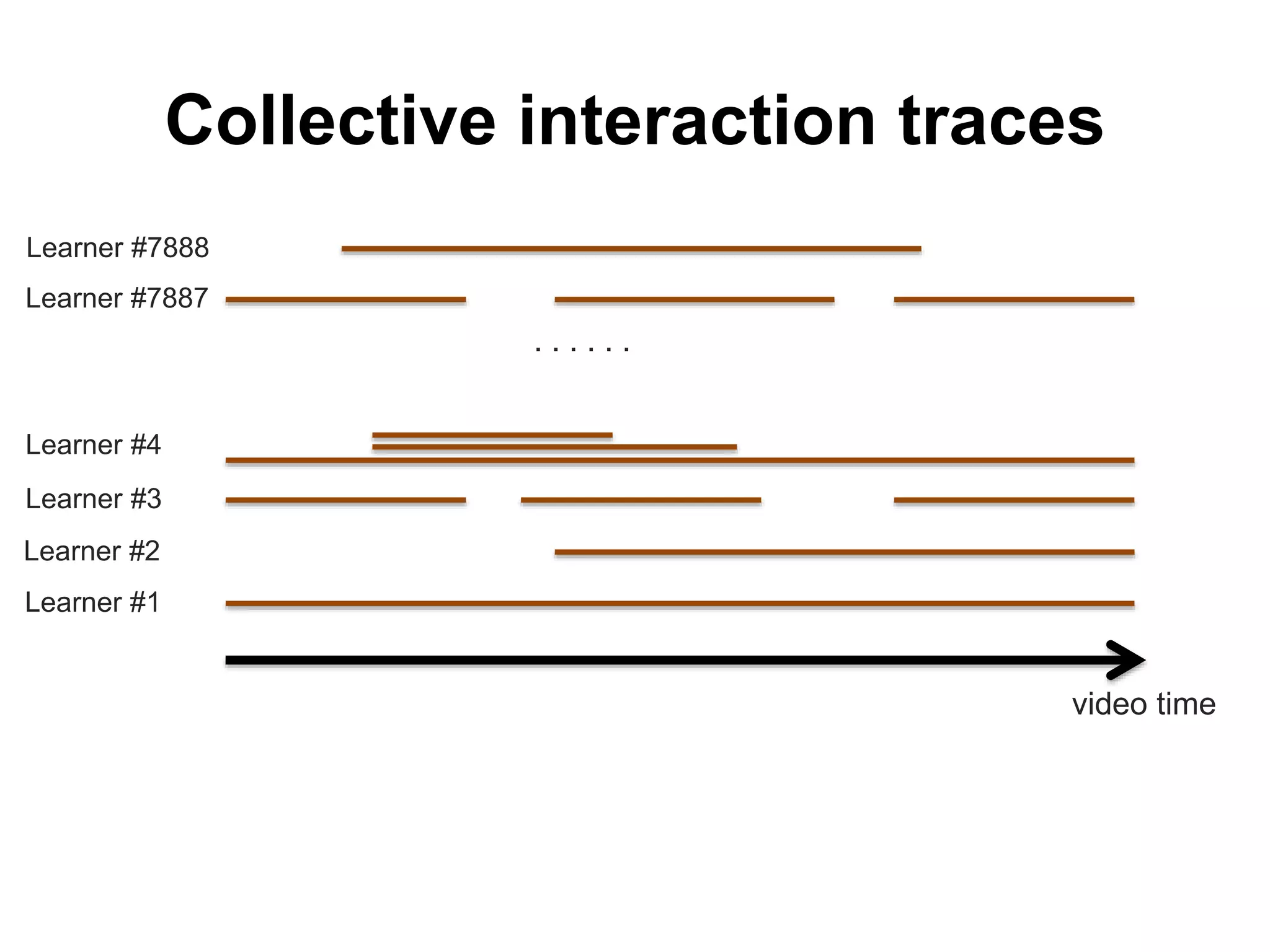

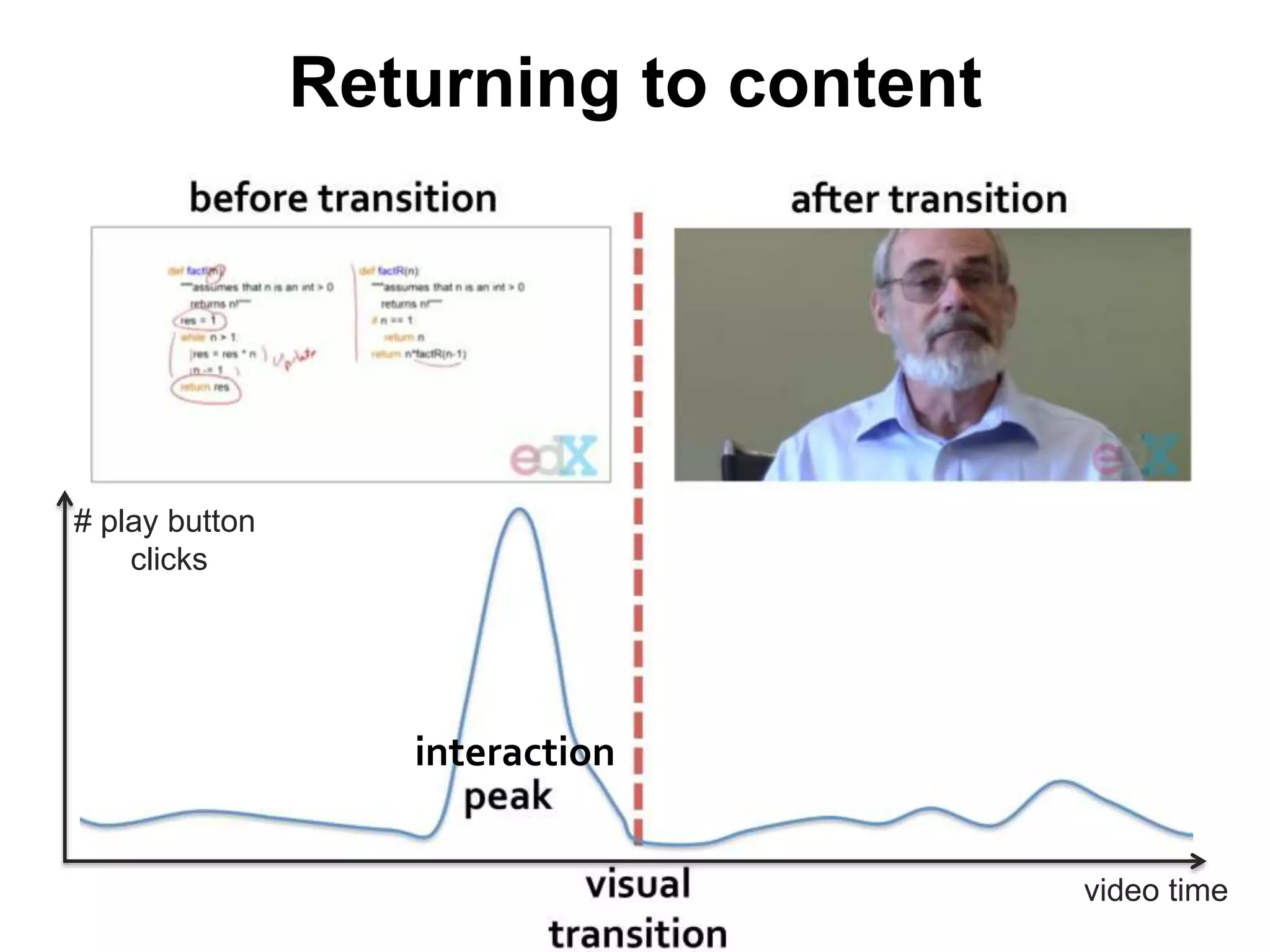

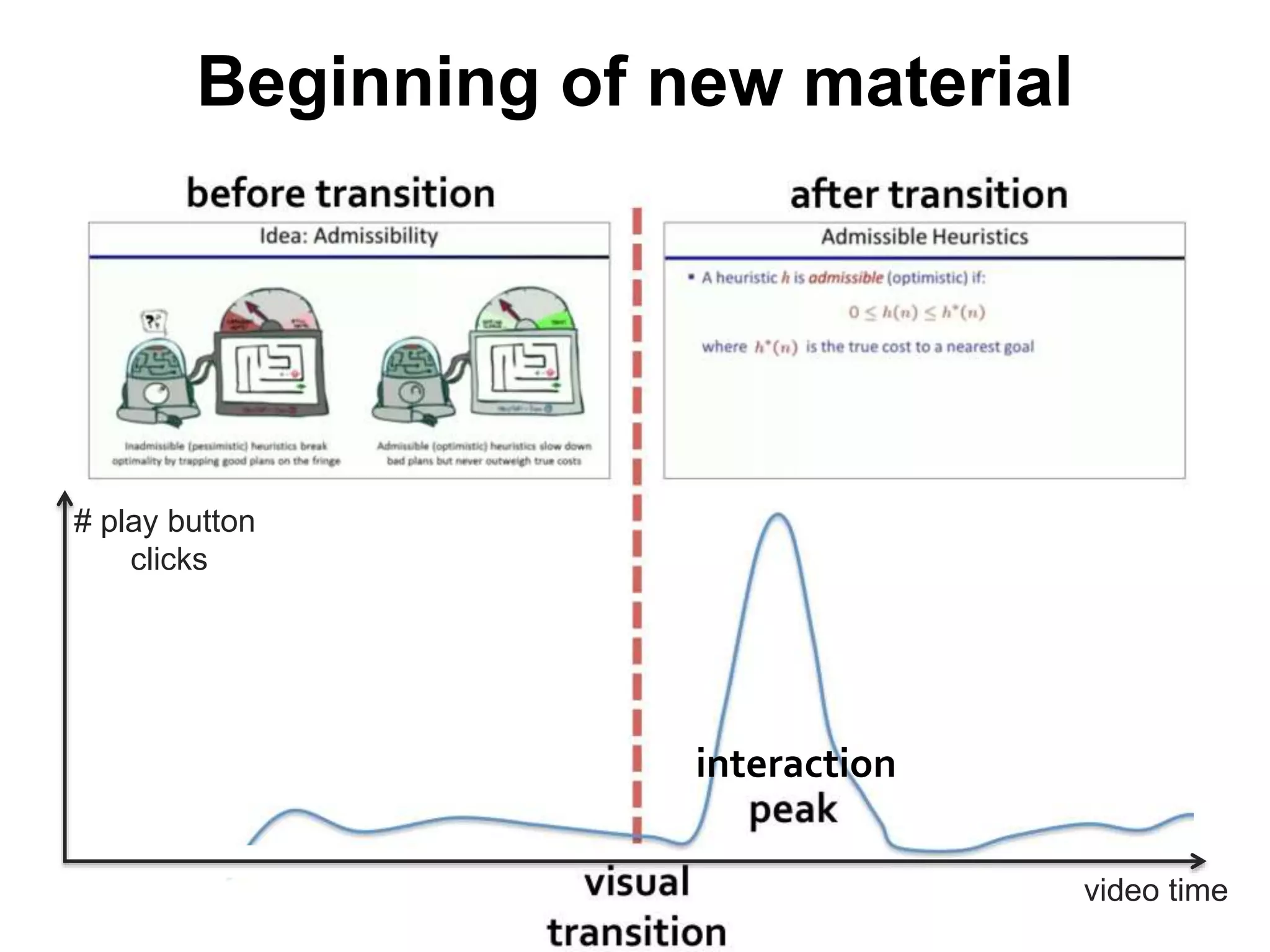

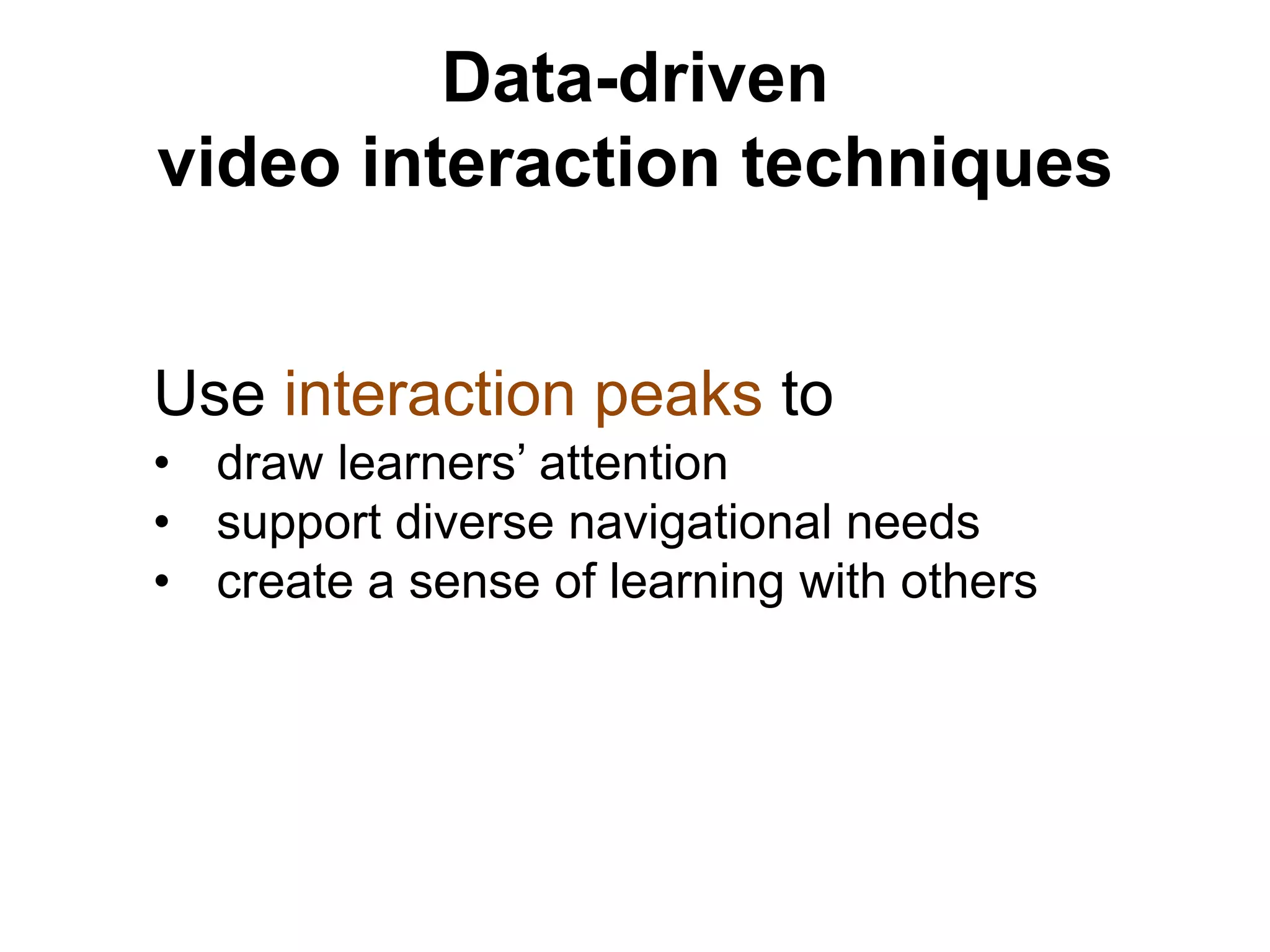

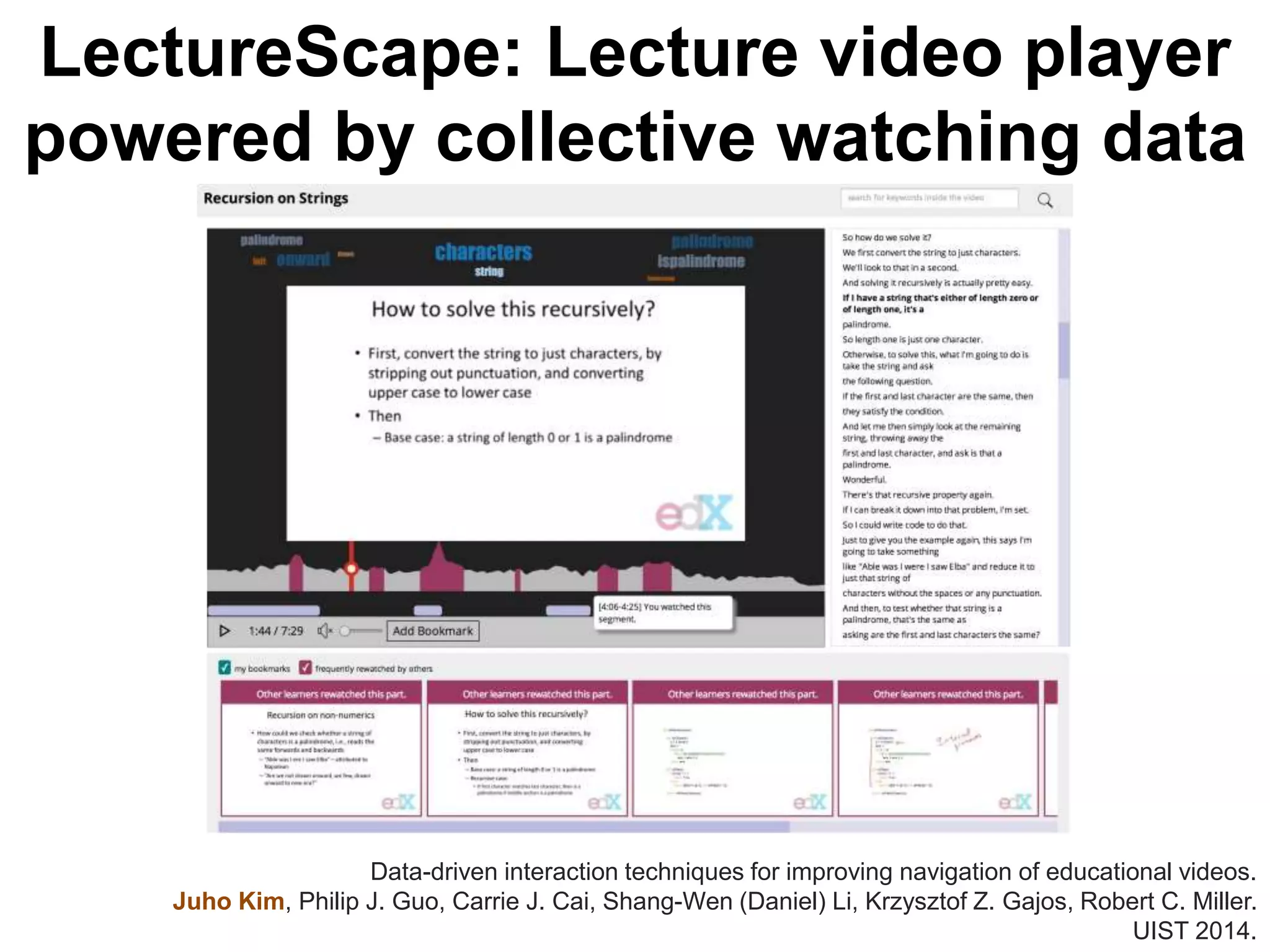

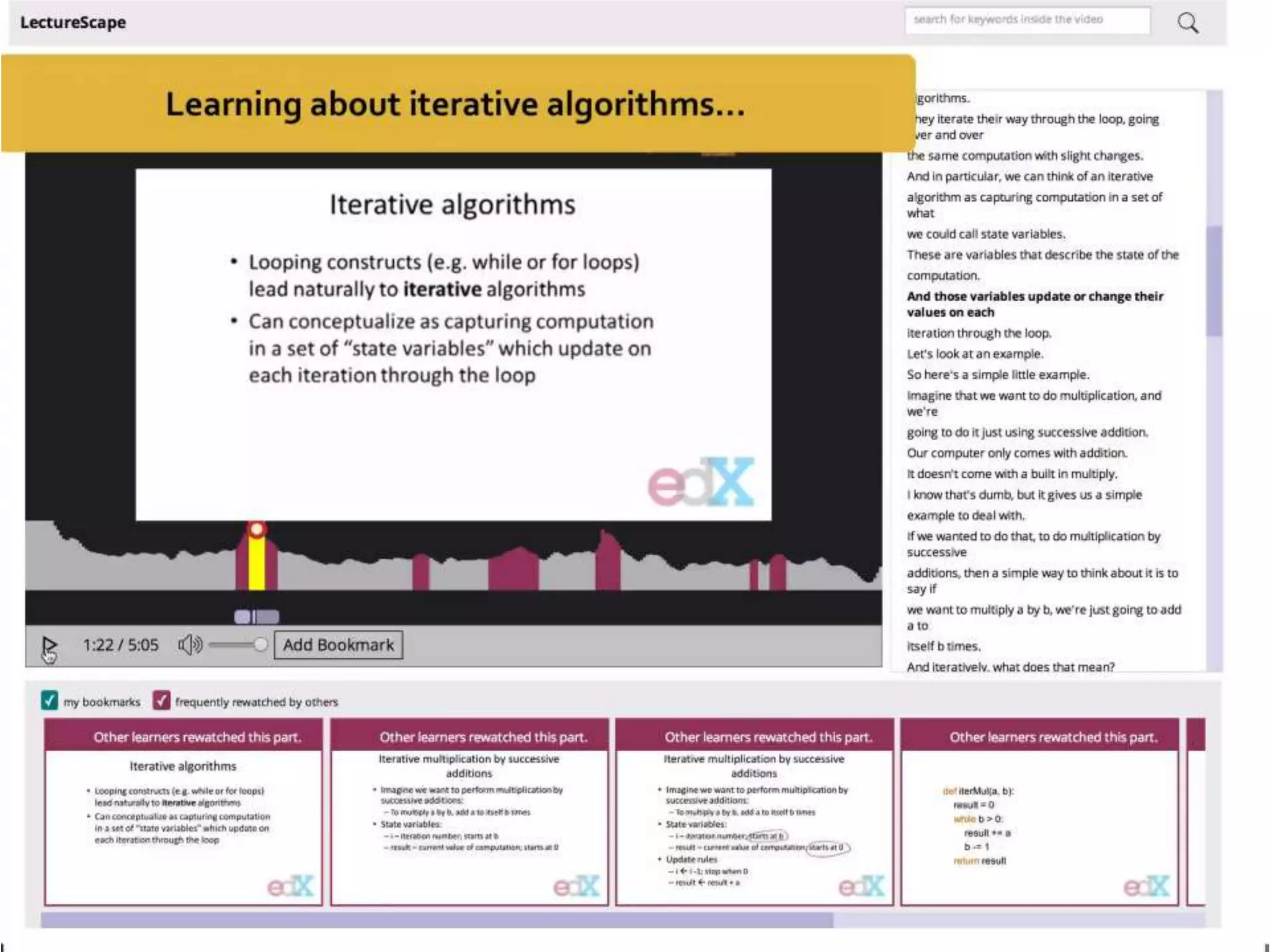

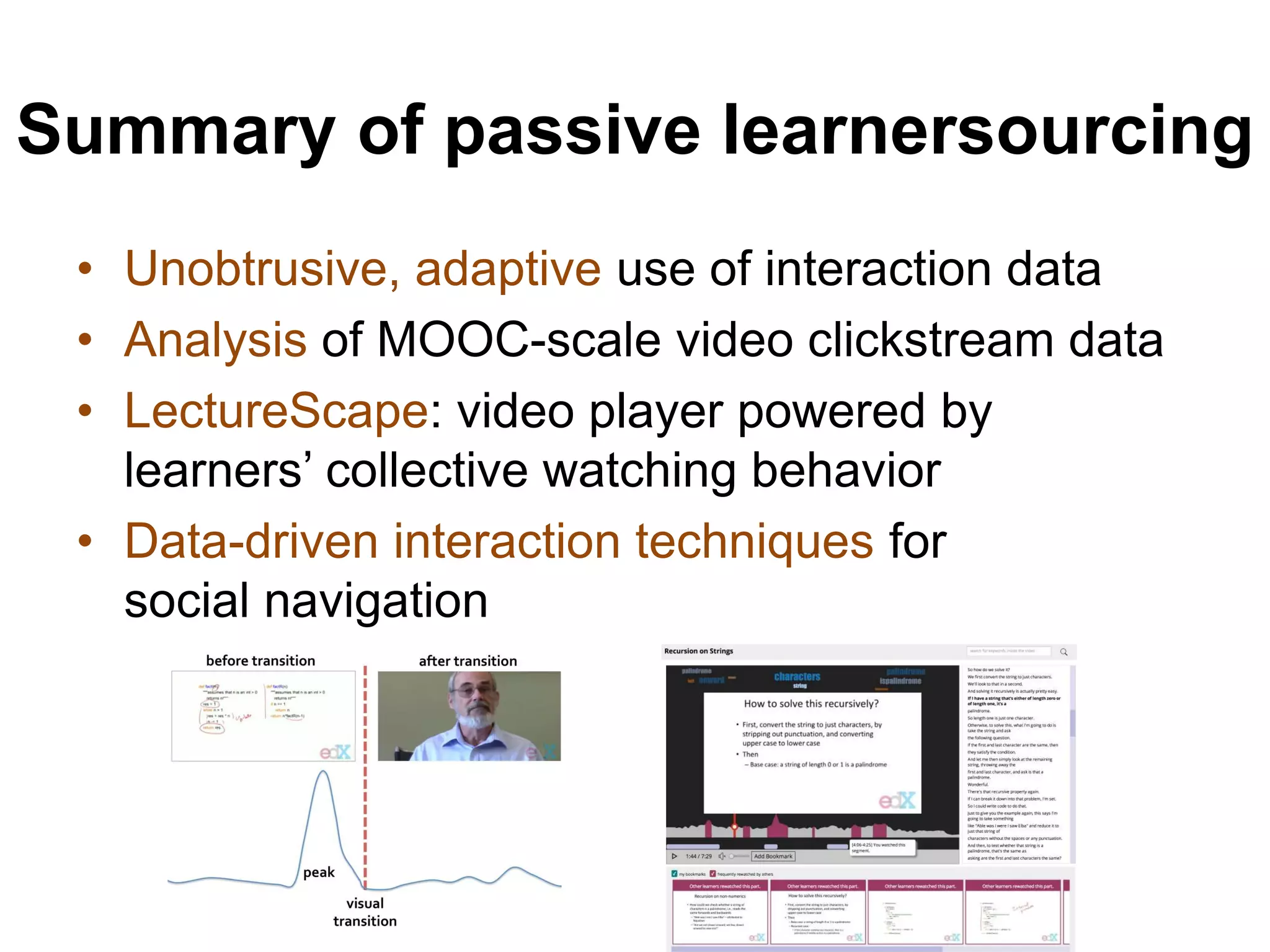

Juho Kim from MIT CSAIL discusses learnersourcing, a method to enhance video learning through collective learner engagement and data-driven interactions. By analyzing learner interactions, this approach seeks to improve video content and user interfaces, fostering better navigation and a collaborative learning environment. The document highlights applications of learnersourcing in educational videos, detailing both passive and active engagement strategies to boost learning outcomes.

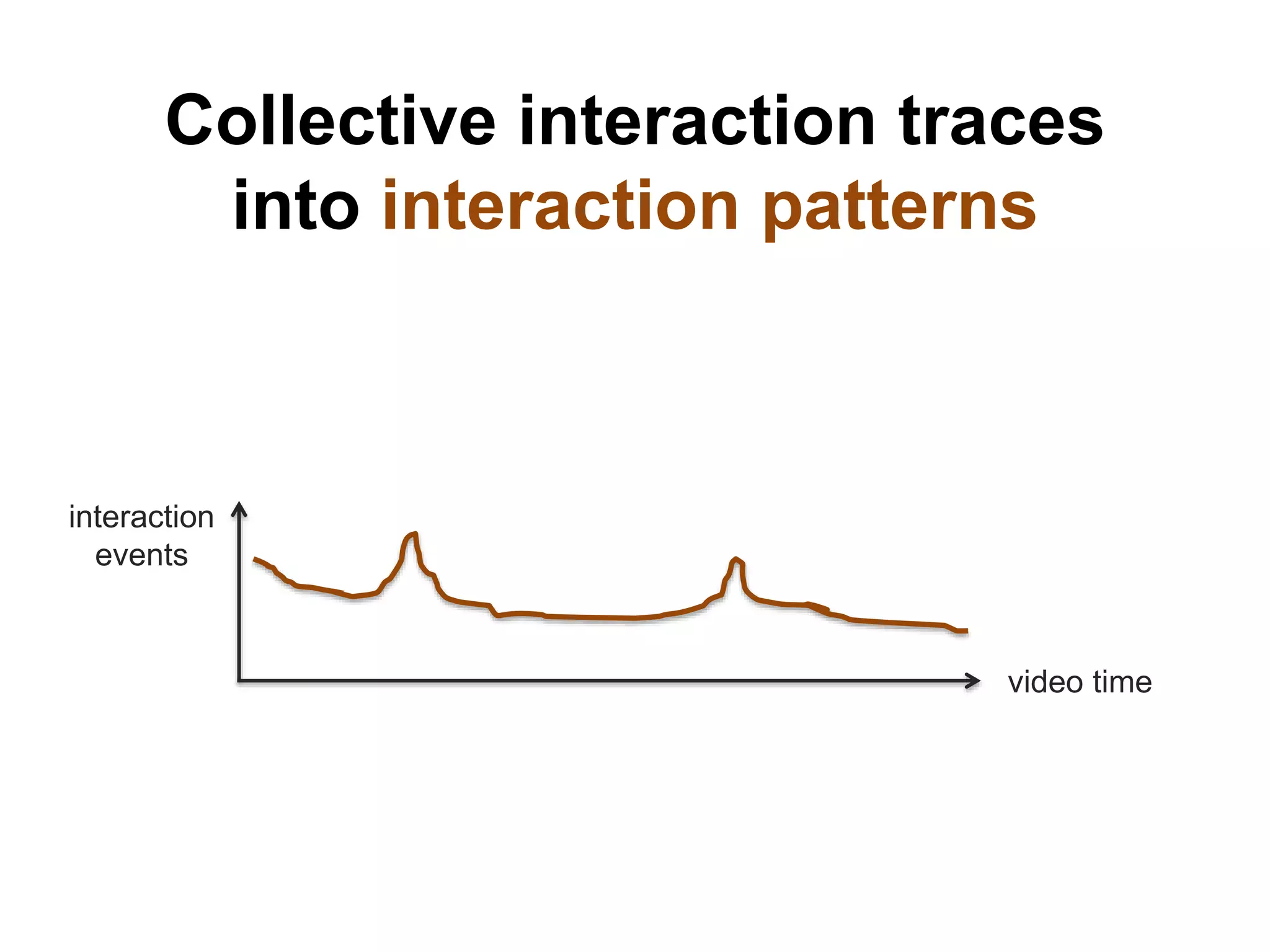

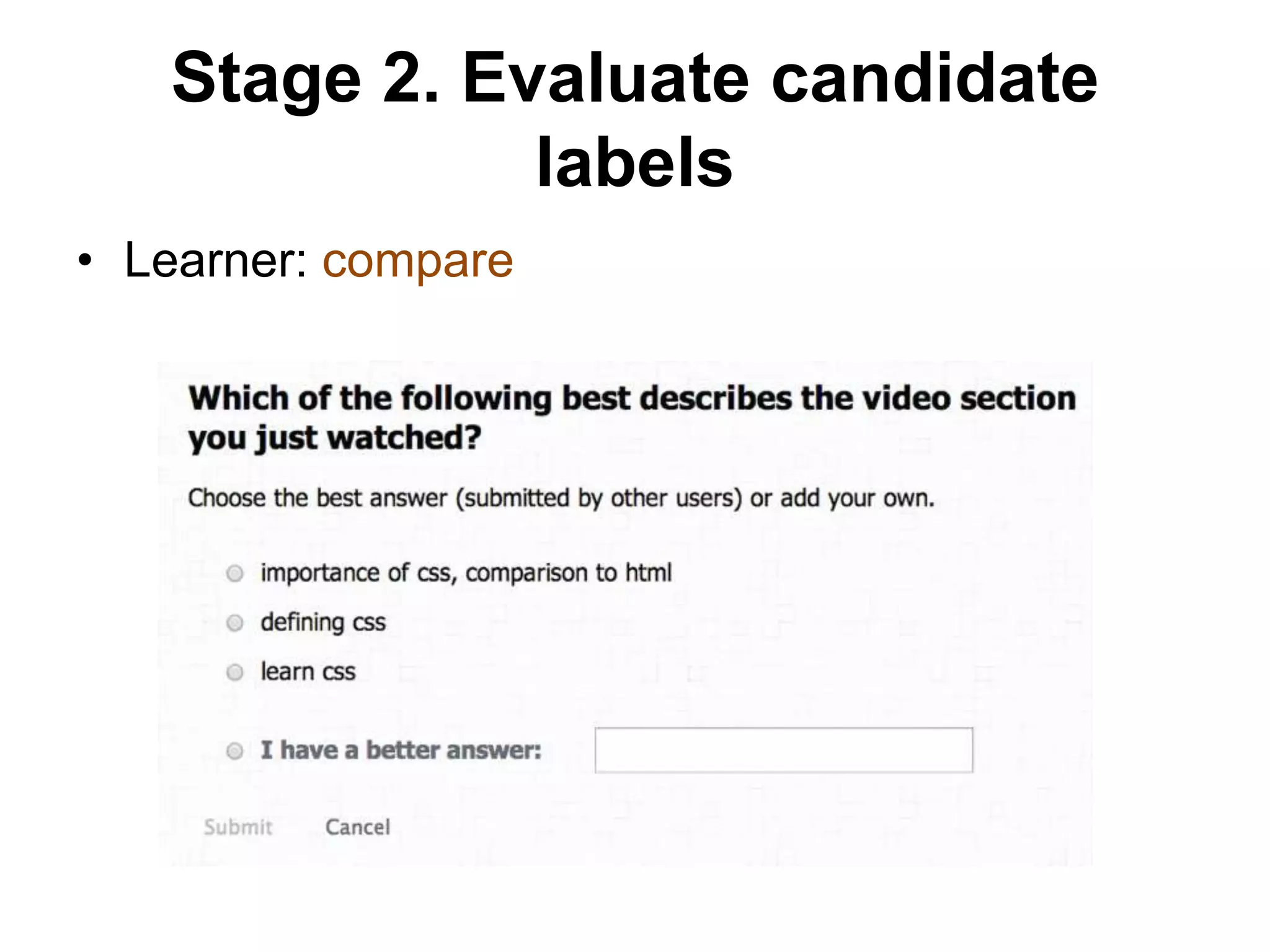

![Learners watch videos.

UI provides

social navigation & recommendation.

System analyzes

interaction traces

for hot spots.

[Learner3879, Video327, “play”, 35.6]

[Learner3879, Video327, “pause”, 47.2]

…

Video player adapts to

collective learner engagement](https://image.slidesharecdn.com/20150730-thesis-defense-web-150805095116-lva1-app6892/75/Learnersourcing-Improving-Learning-with-Collective-Learner-Activity-13-2048.jpg)
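The trace records on this slide (learner, video, action, time) can be aggregated into per-second watch counts to look for hot spots. The following is a minimal sketch under stated assumptions, not the analysis used in the talk: the function names `watch_counts` and `hot_spots`, the fixed `min_count` threshold, and the second learner record in the usage example are all hypothetical, and events are assumed to arrive in time order for each learner.

```python
from collections import Counter


def watch_counts(events):
    """Count how many play segments cover each whole second of each video.

    `events` is a list of (learner, video, action, time) tuples.
    A "play" opens a segment; the next "pause" by the same learner
    on the same video closes it. Unmatched events are ignored.
    """
    counts = Counter()
    open_play = {}  # (learner, video) -> start time of the pending segment
    for learner, video, action, t in events:
        key = (learner, video)
        if action == "play":
            open_play[key] = t
        elif action == "pause" and key in open_play:
            start = open_play.pop(key)
            # Credit every whole second touched by the segment.
            for sec in range(int(start), int(t) + 1):
                counts[(video, sec)] += 1
    return counts


def hot_spots(counts, video, min_count):
    """Return the sorted seconds of `video` watched at least `min_count` times.

    A fixed threshold is an assumption made for illustration only.
    """
    return sorted(sec for (v, sec), n in counts.items()
                  if v == video and n >= min_count)


events = [
    ("Learner3879", "Video327", "play", 35.6),   # record from the slide
    ("Learner3879", "Video327", "pause", 47.2),  # record from the slide
    ("Learner0001", "Video327", "play", 40.0),   # hypothetical second learner
    ("Learner0001", "Video327", "pause", 44.5),  # hypothetical second learner
]
counts = watch_counts(events)
spots = hot_spots(counts, "Video327", min_count=2)
```

With these four records, `spots` contains the seconds that both learners watched. A real system would also need to handle seeks, playback-rate changes, and sessions that end without a pause.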

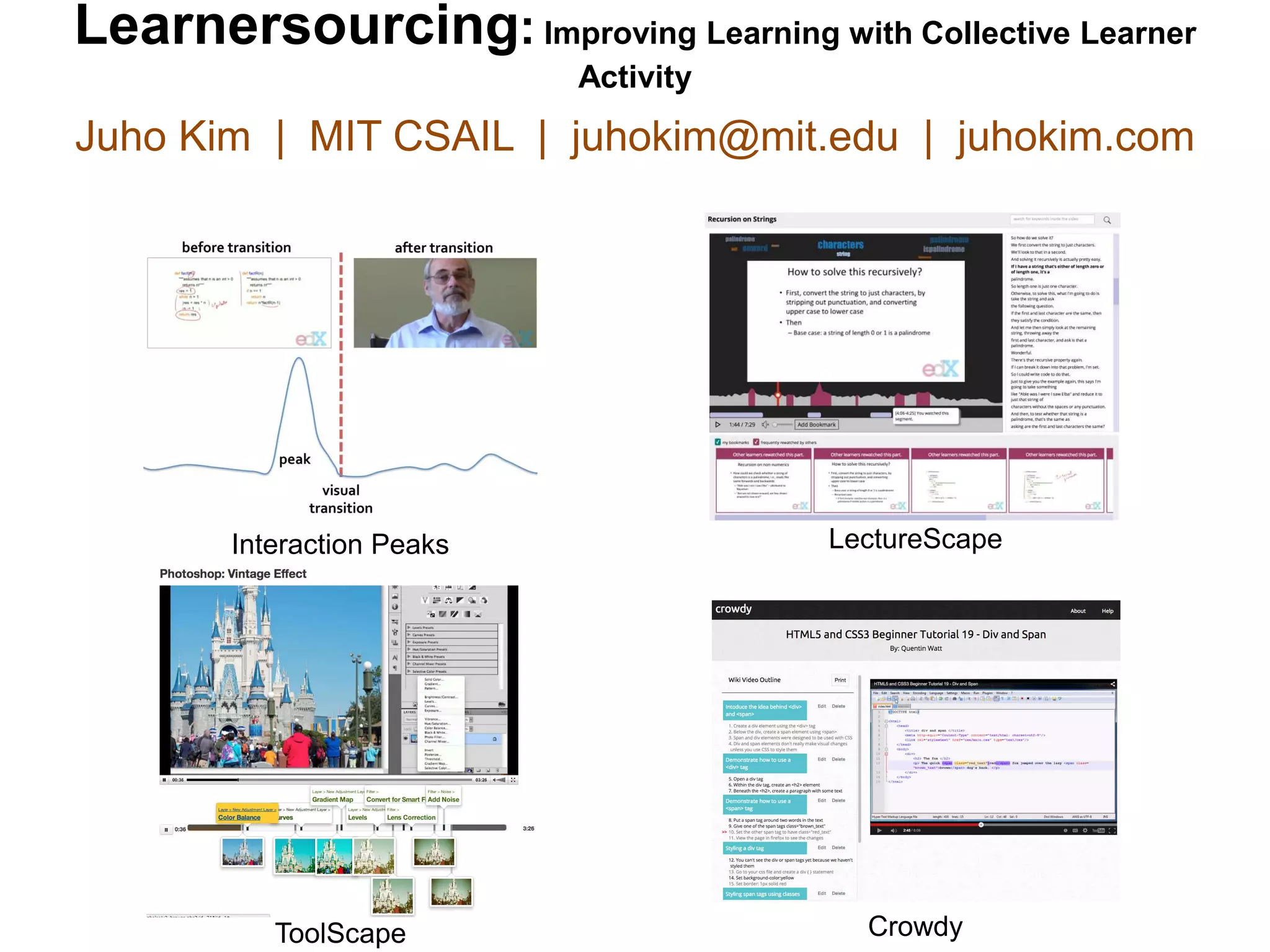

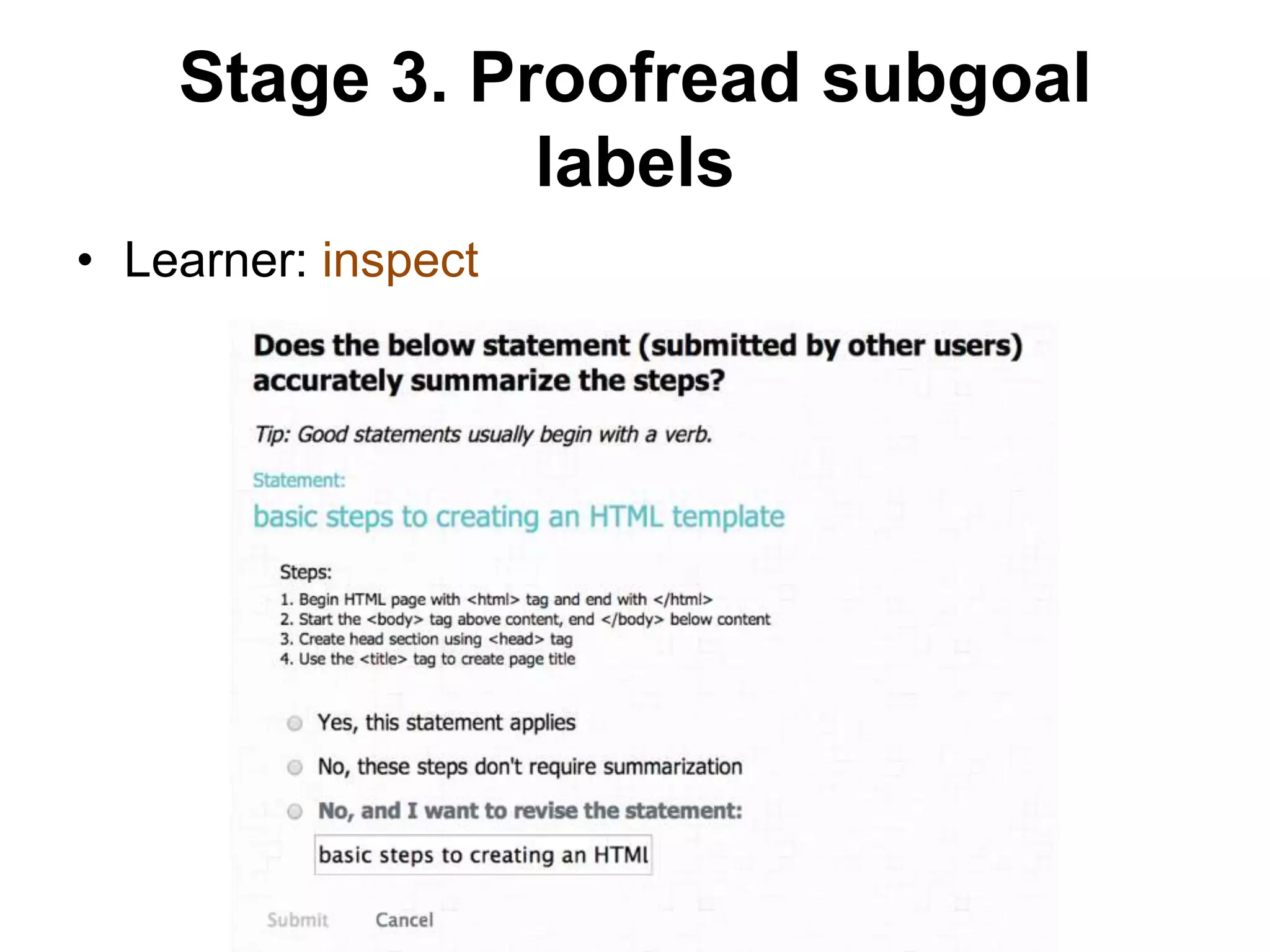

![Learnersourcing applications for

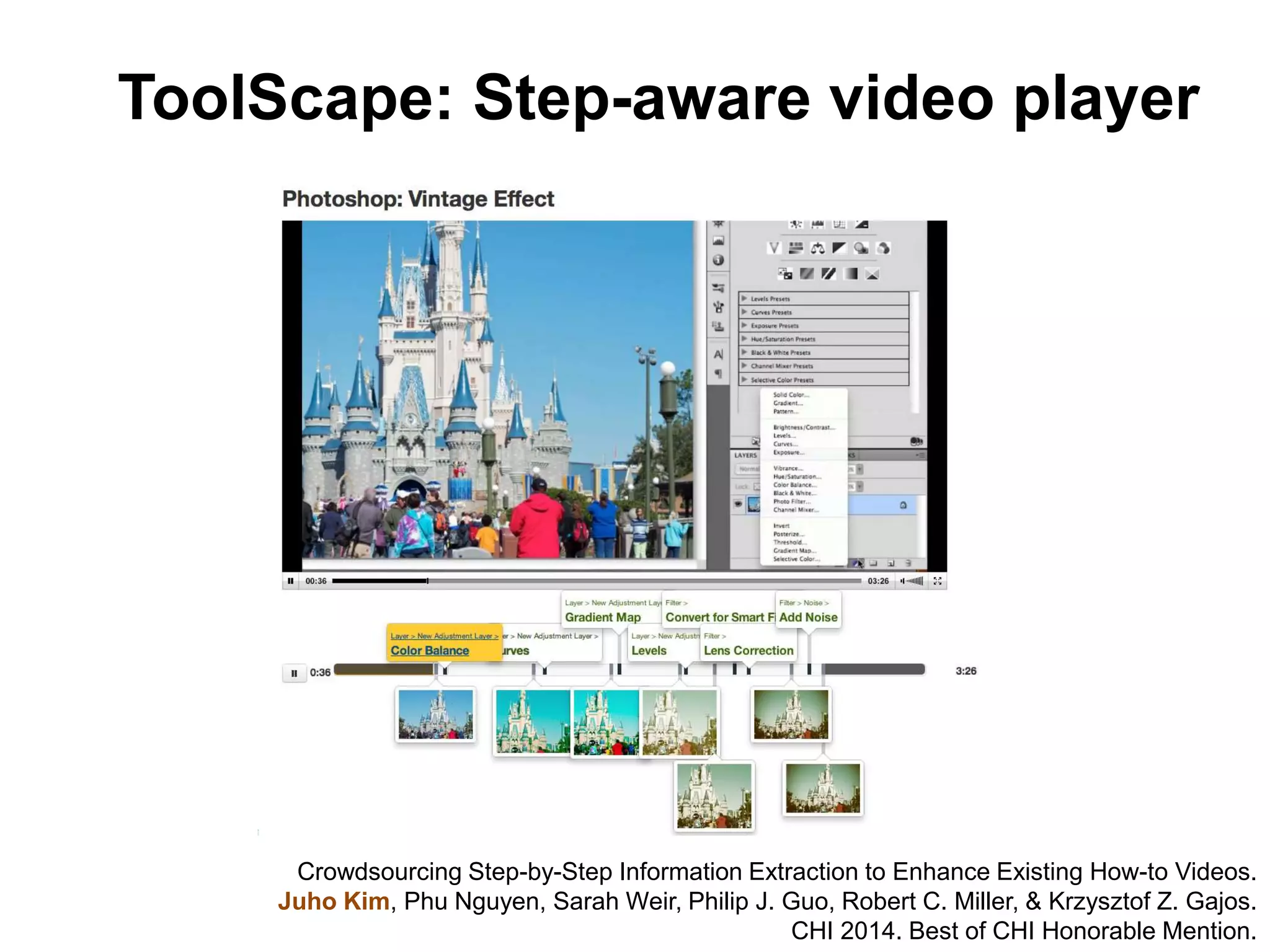

educational videos

ToolScape [CHI 2014]

Interaction Peaks [L@S 2014]

LectureScape [UIST 2014]

RIMES [CHI 2015]

Mudslide [CHI 2015]

Crowdy [CSCW 2015]](https://image.slidesharecdn.com/20150730-thesis-defense-web-150805095116-lva1-app6892/75/Learnersourcing-Improving-Learning-with-Collective-Learner-Activity-16-2048.jpg)

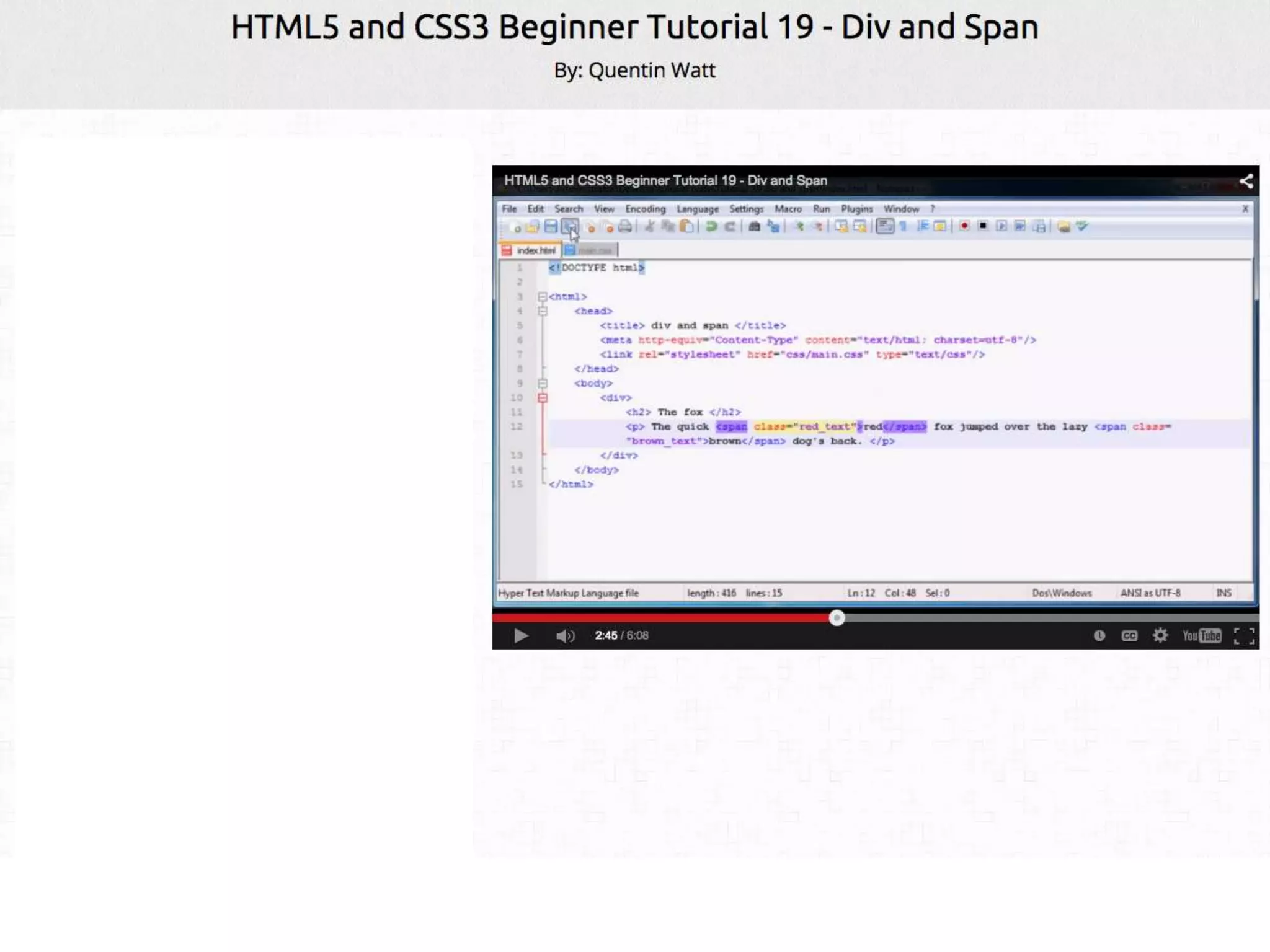

![I. Passive learnersourcing (MOOC videos)

– Video player clickstream analysis [L@S 2014a, L@S 2014b]

– Data-driven content navigation [UIST 2014a, UIST 2014b]

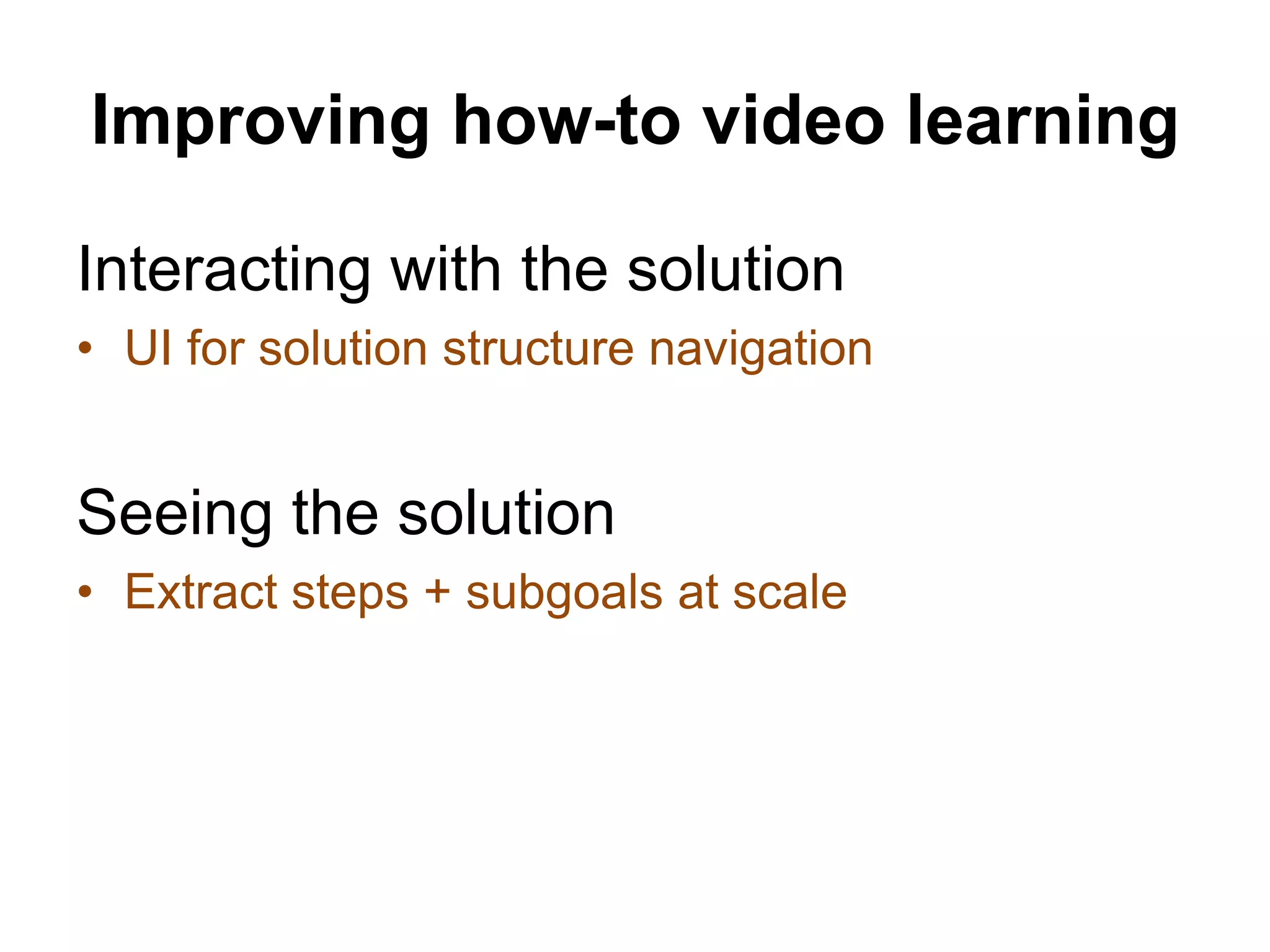

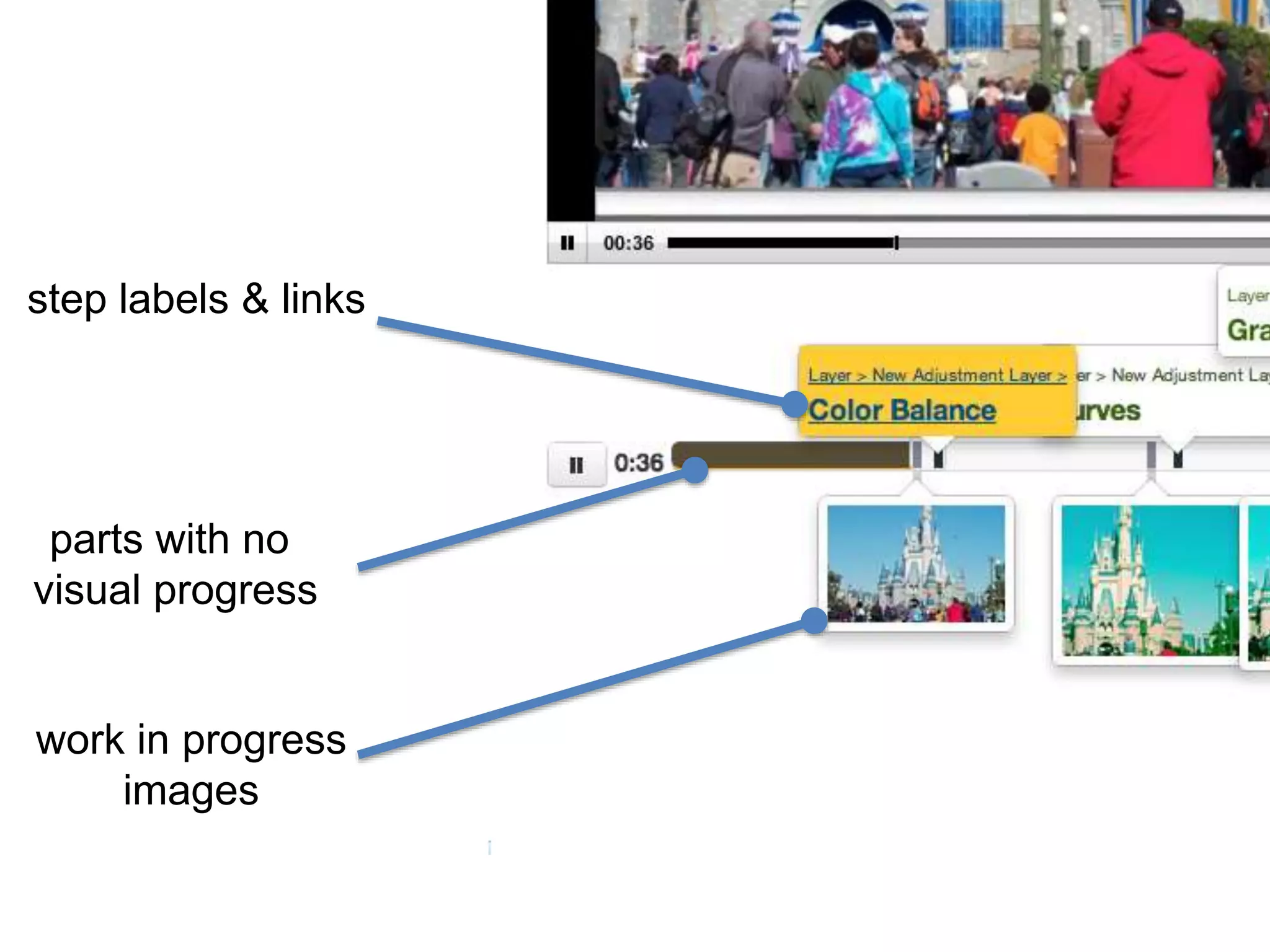

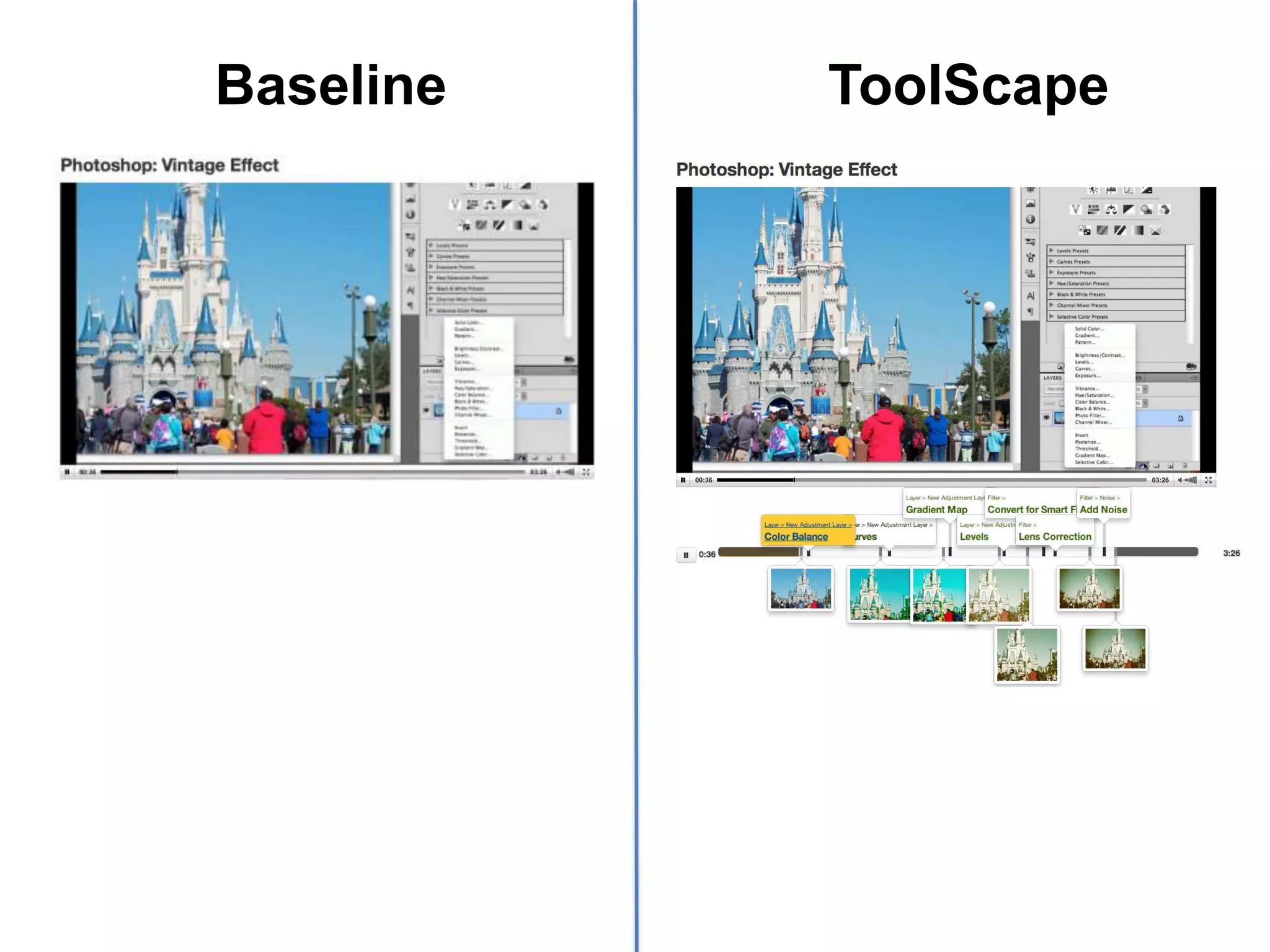

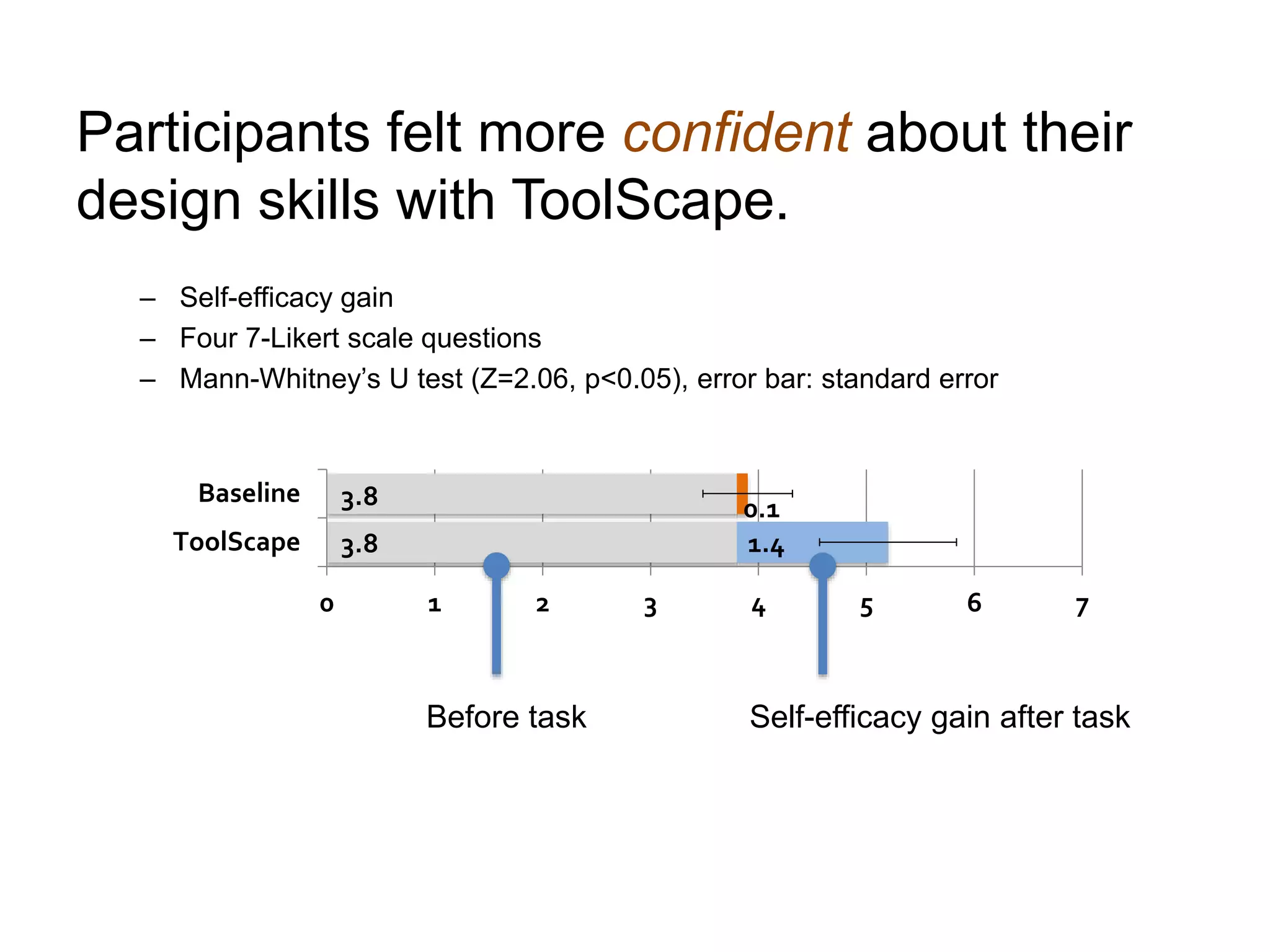

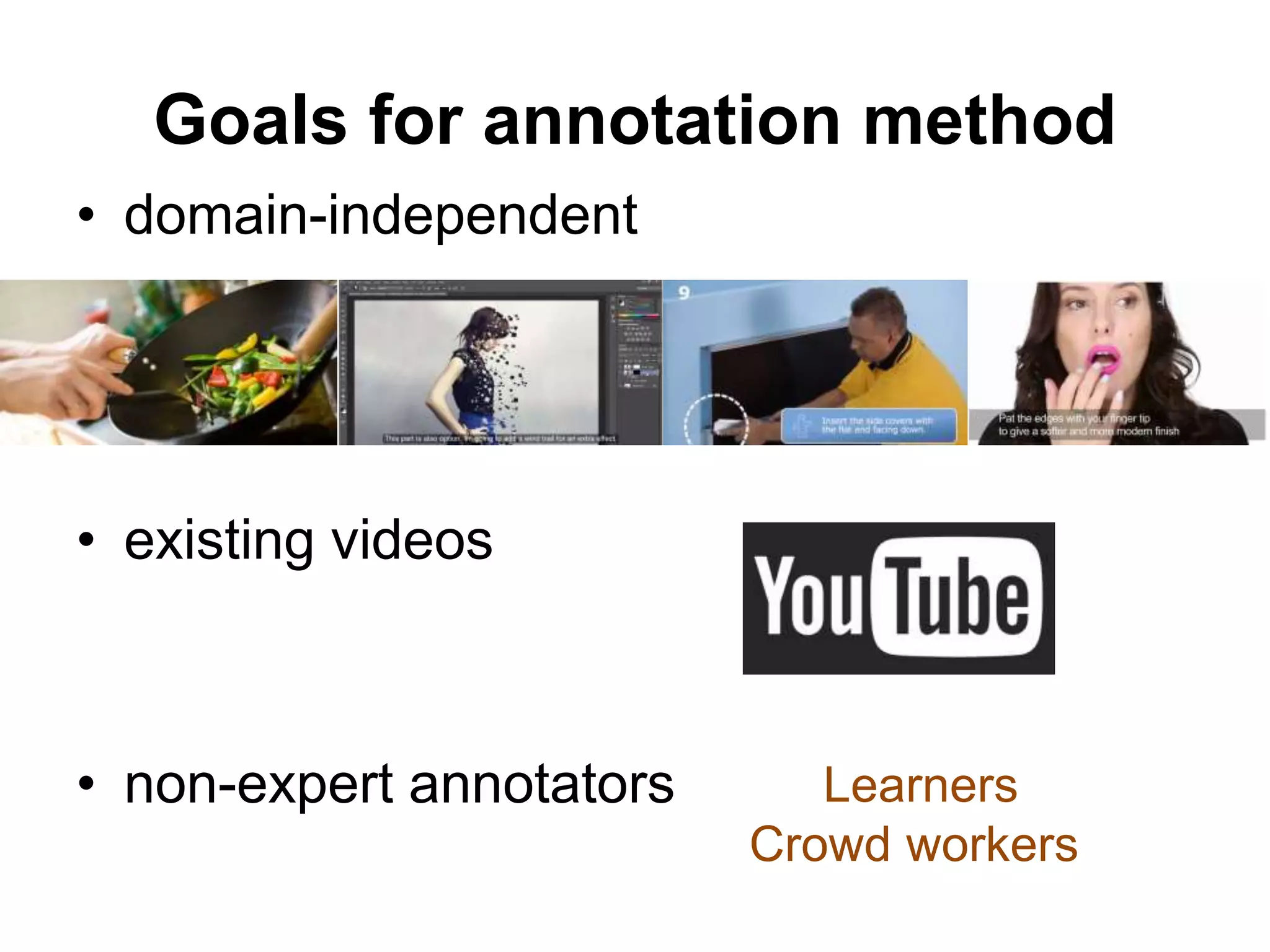

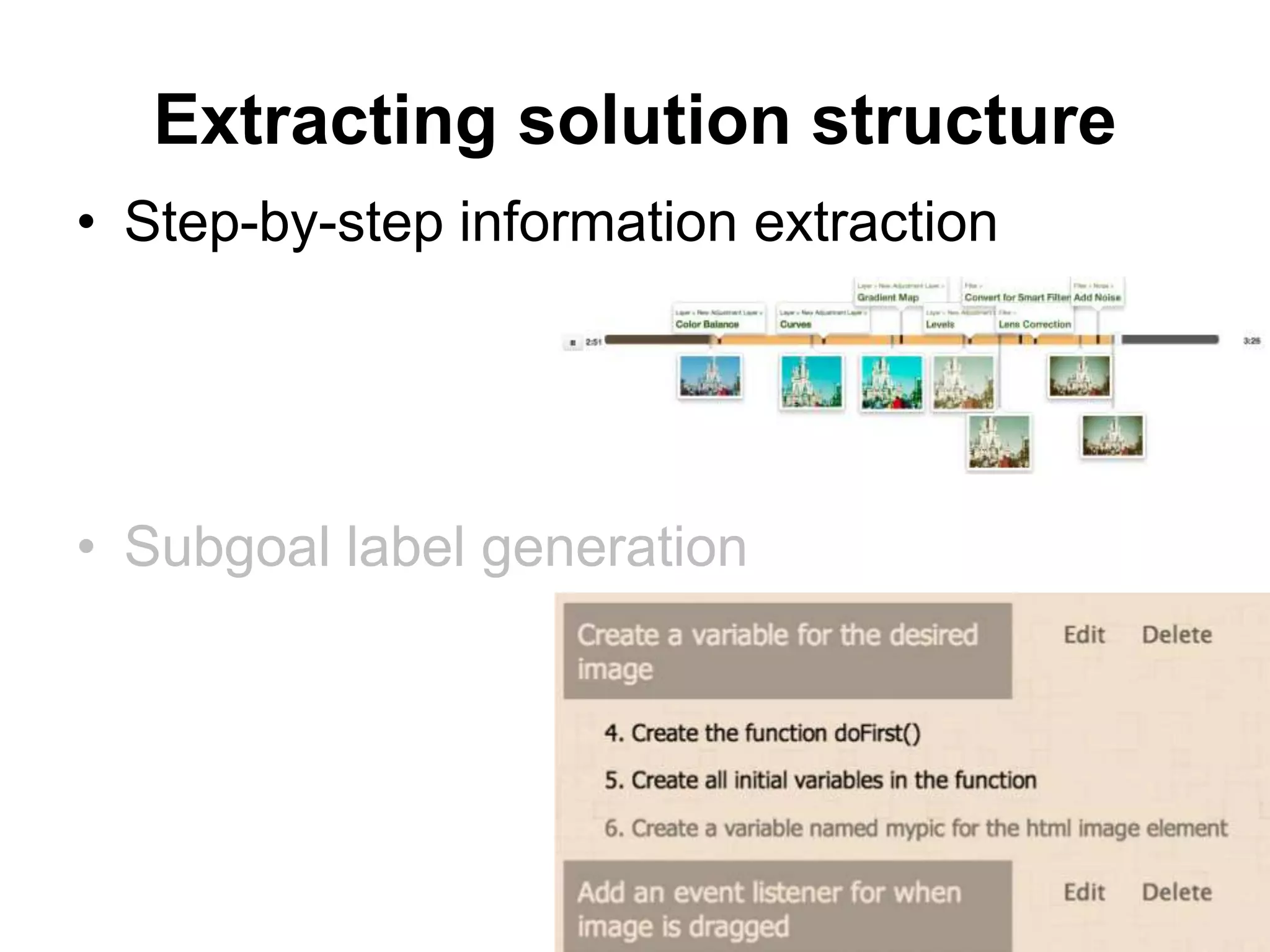

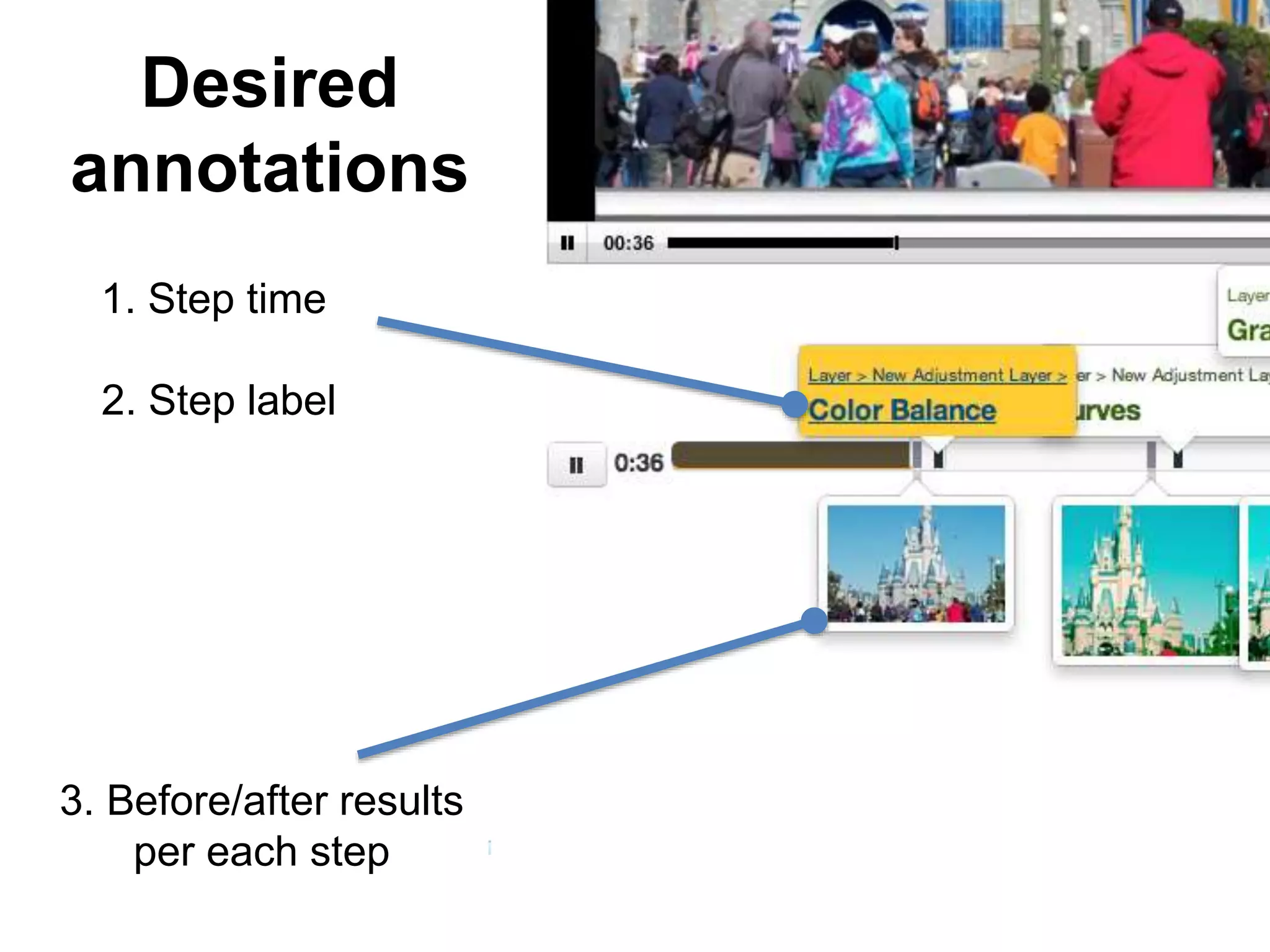

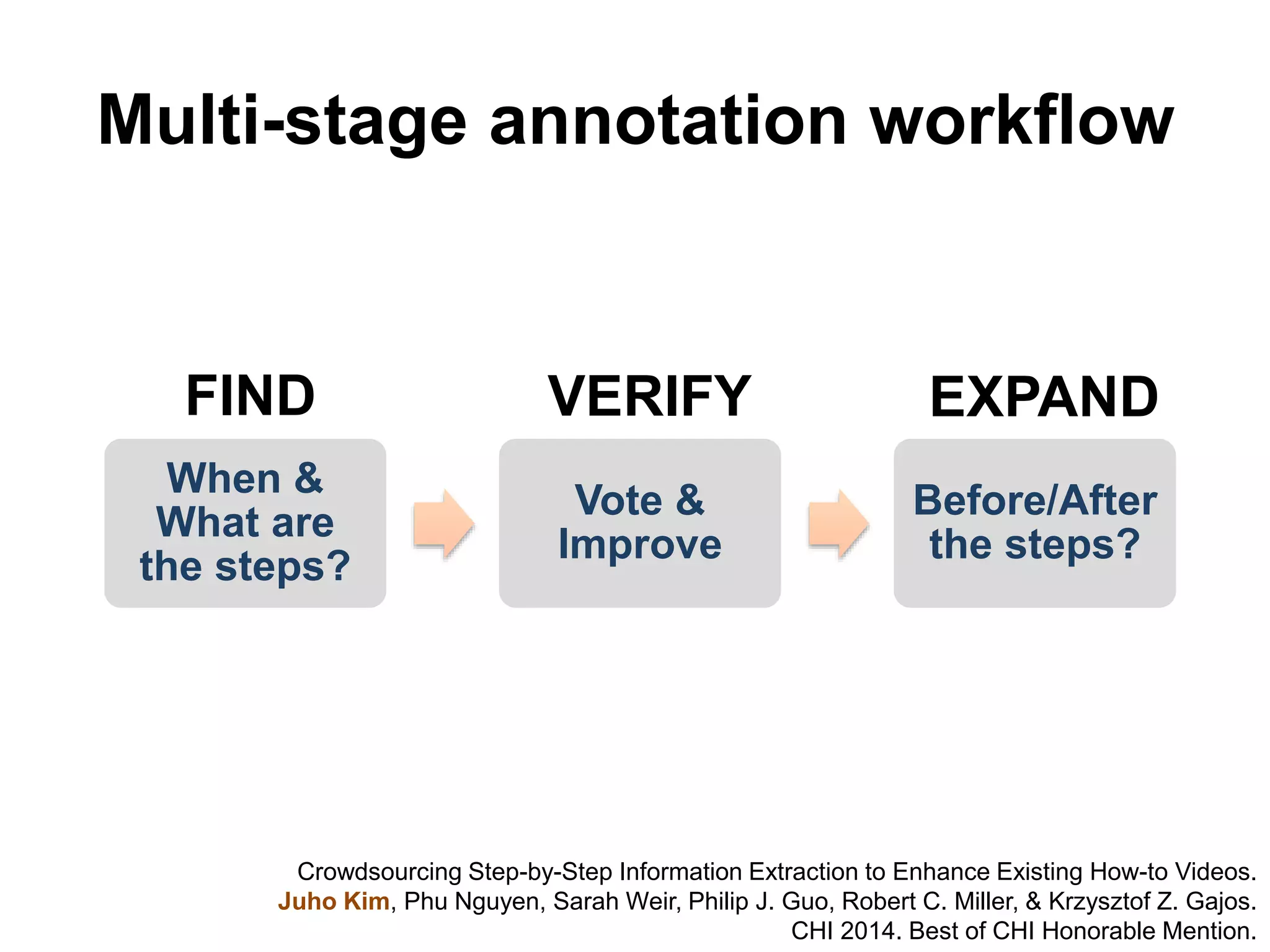

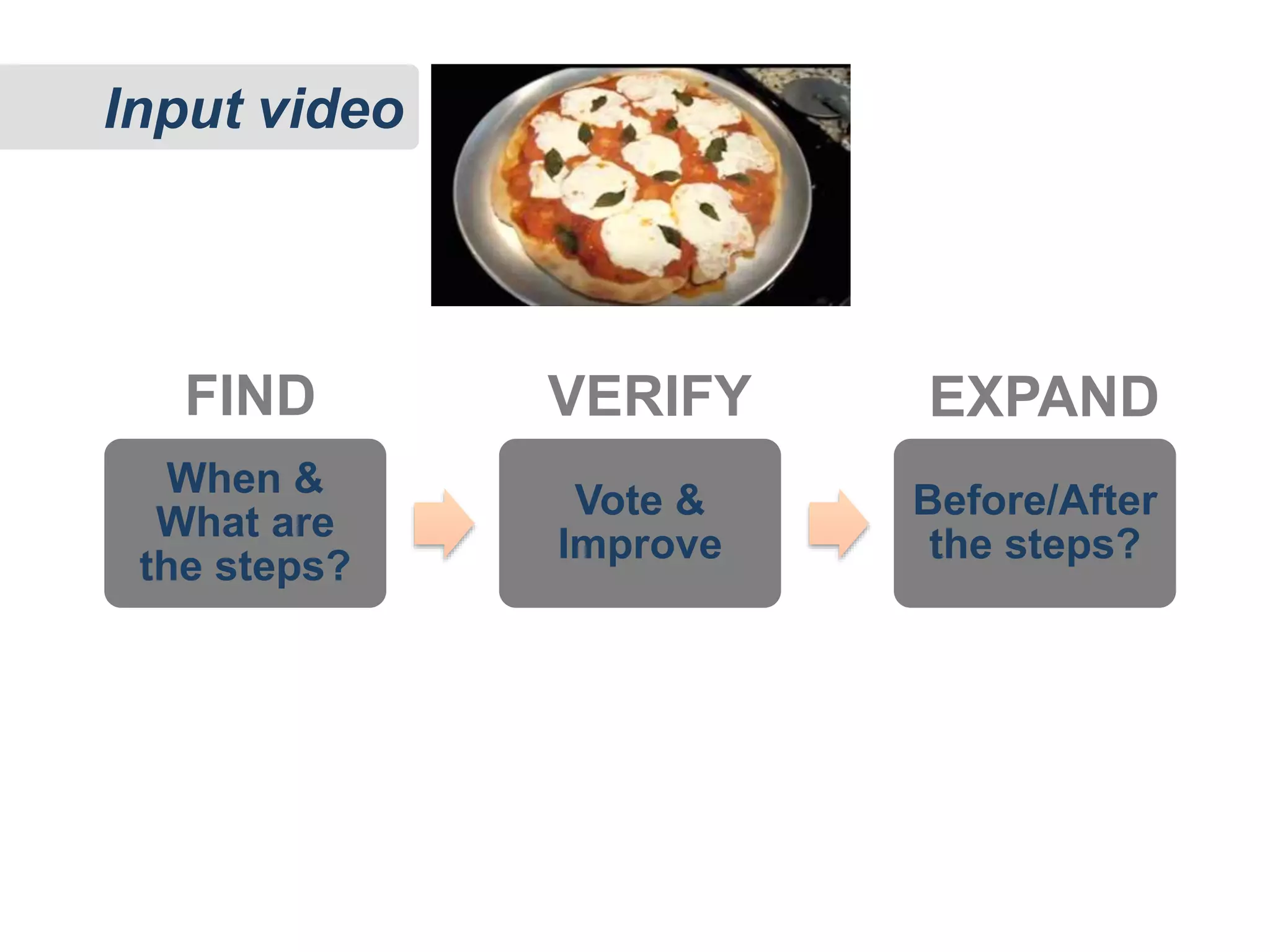

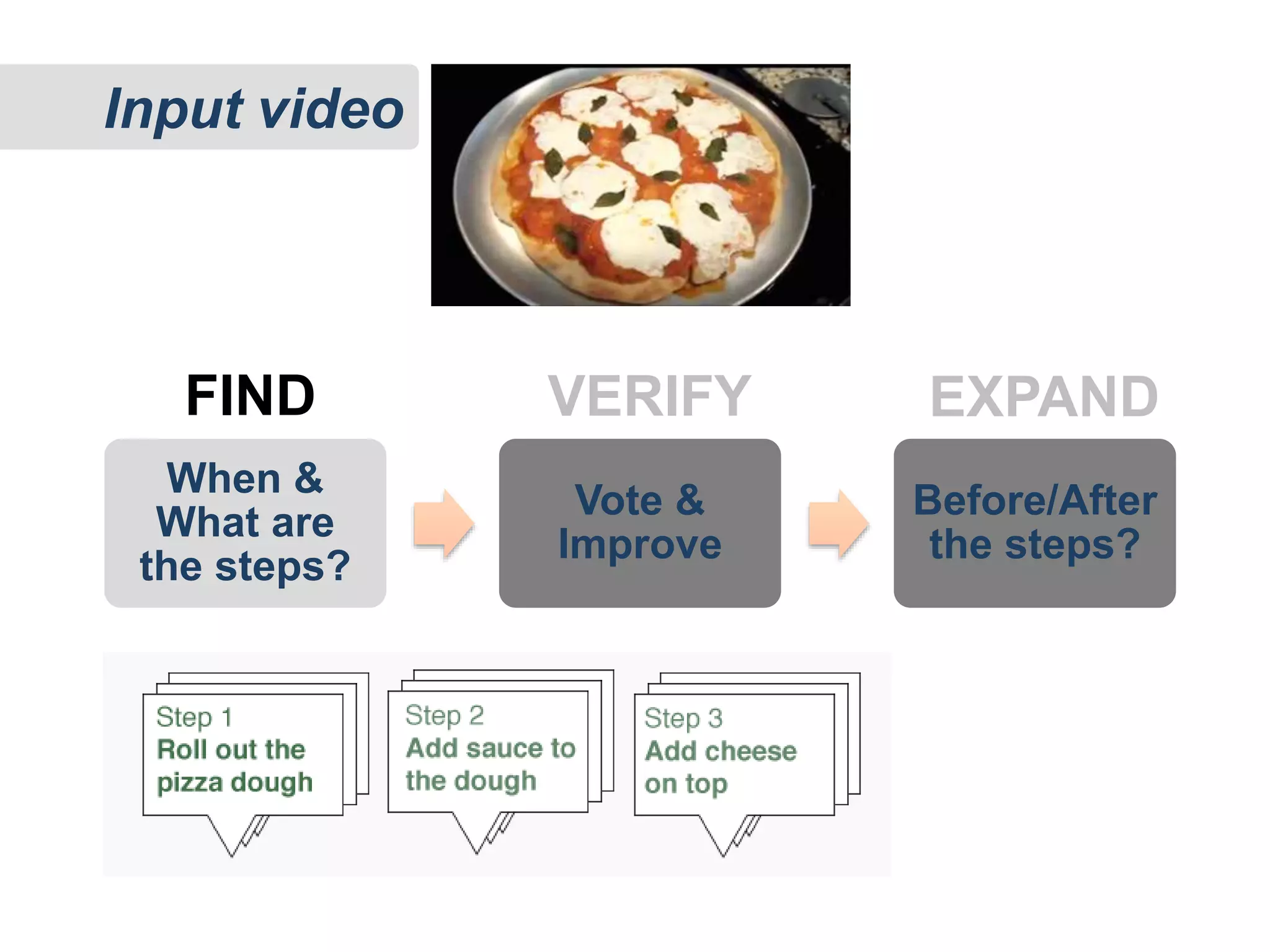

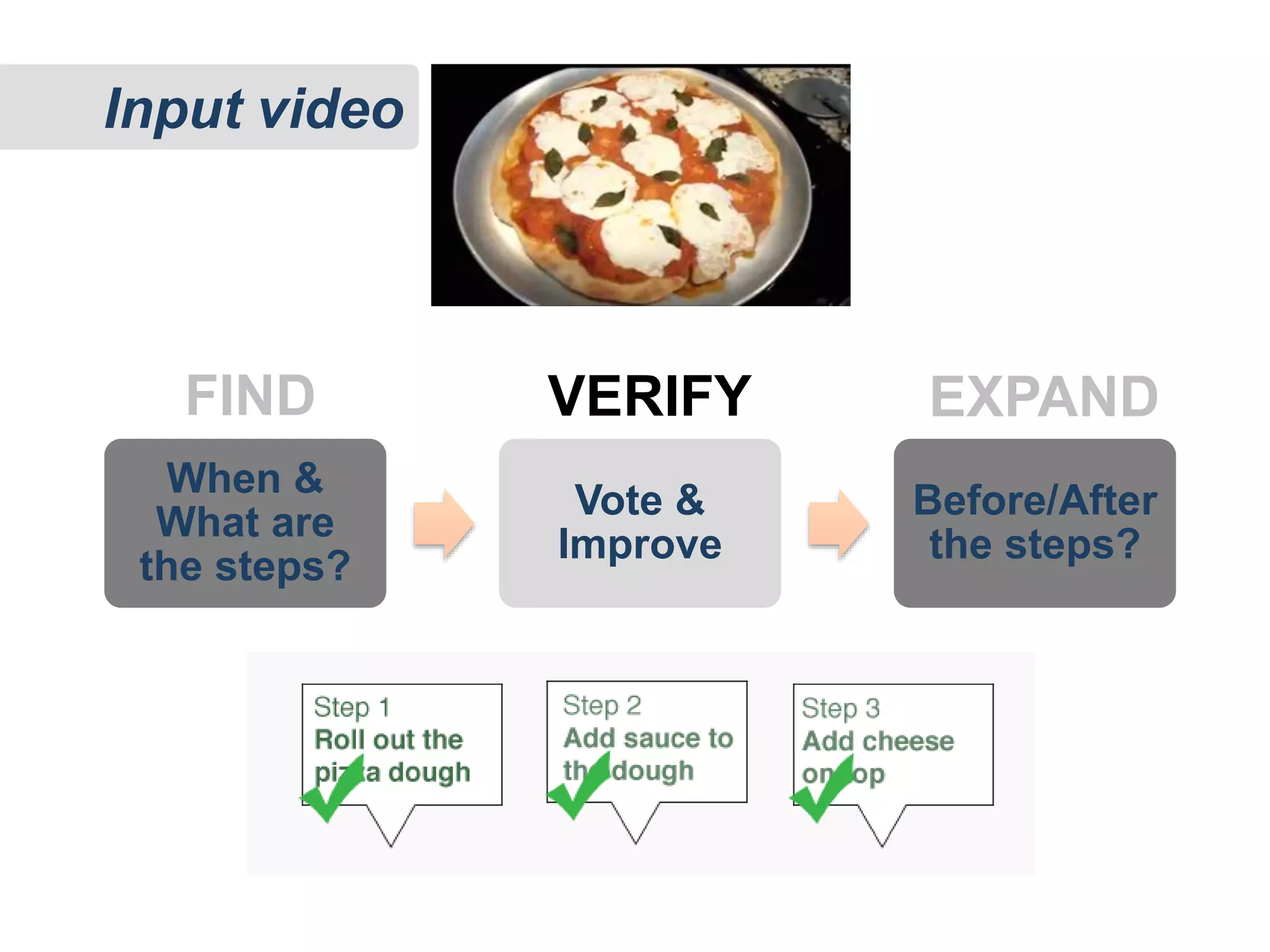

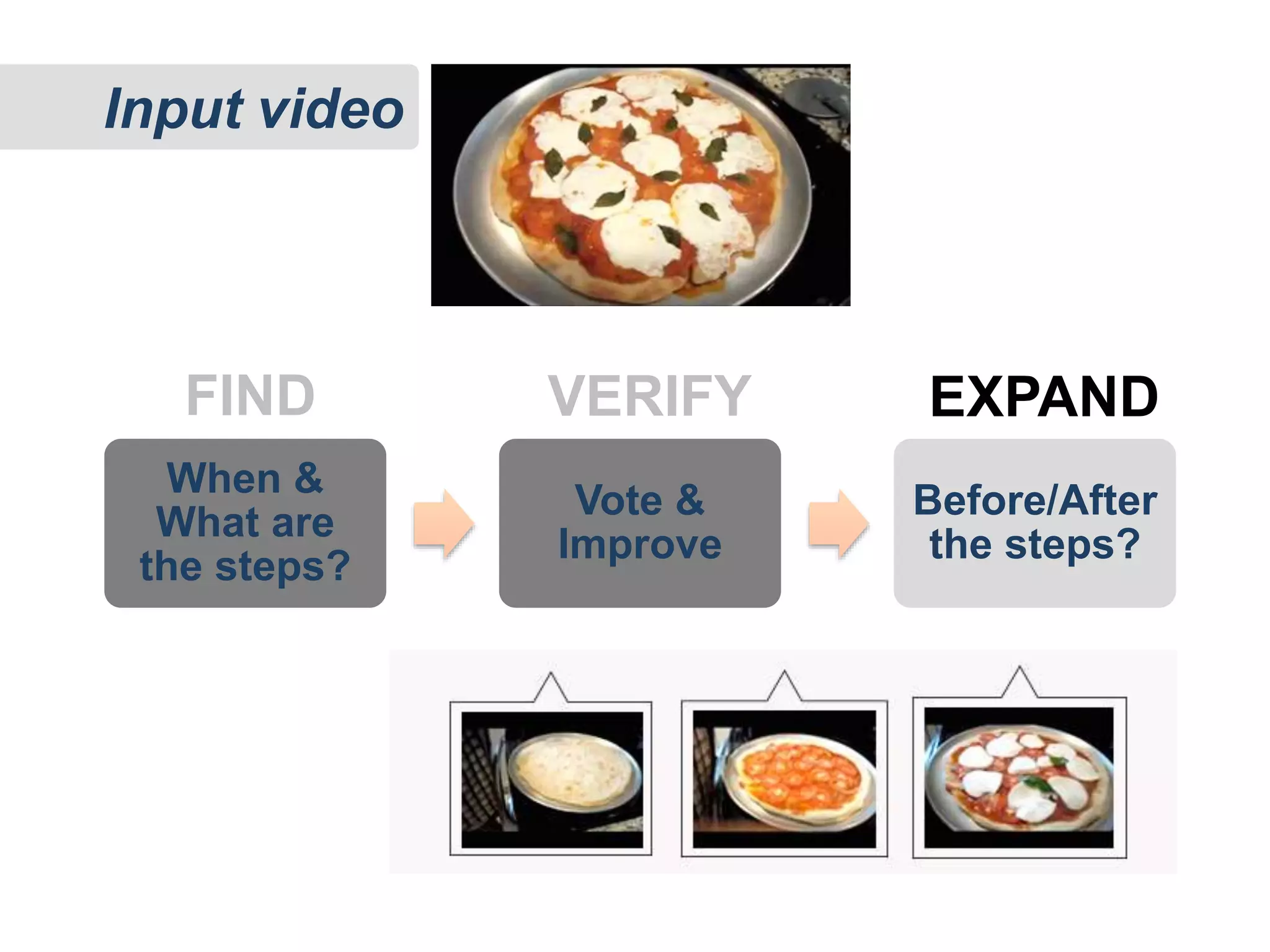

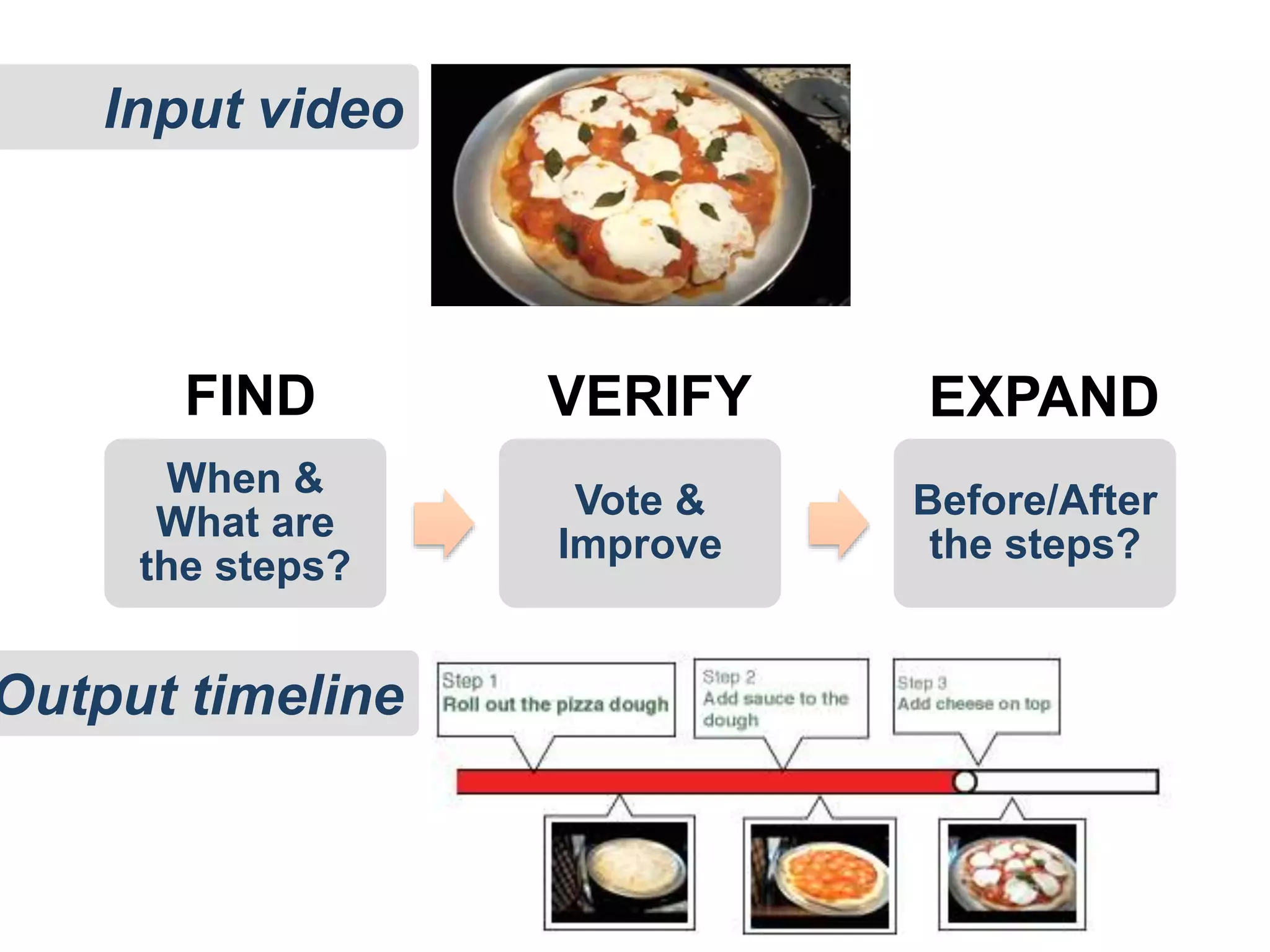

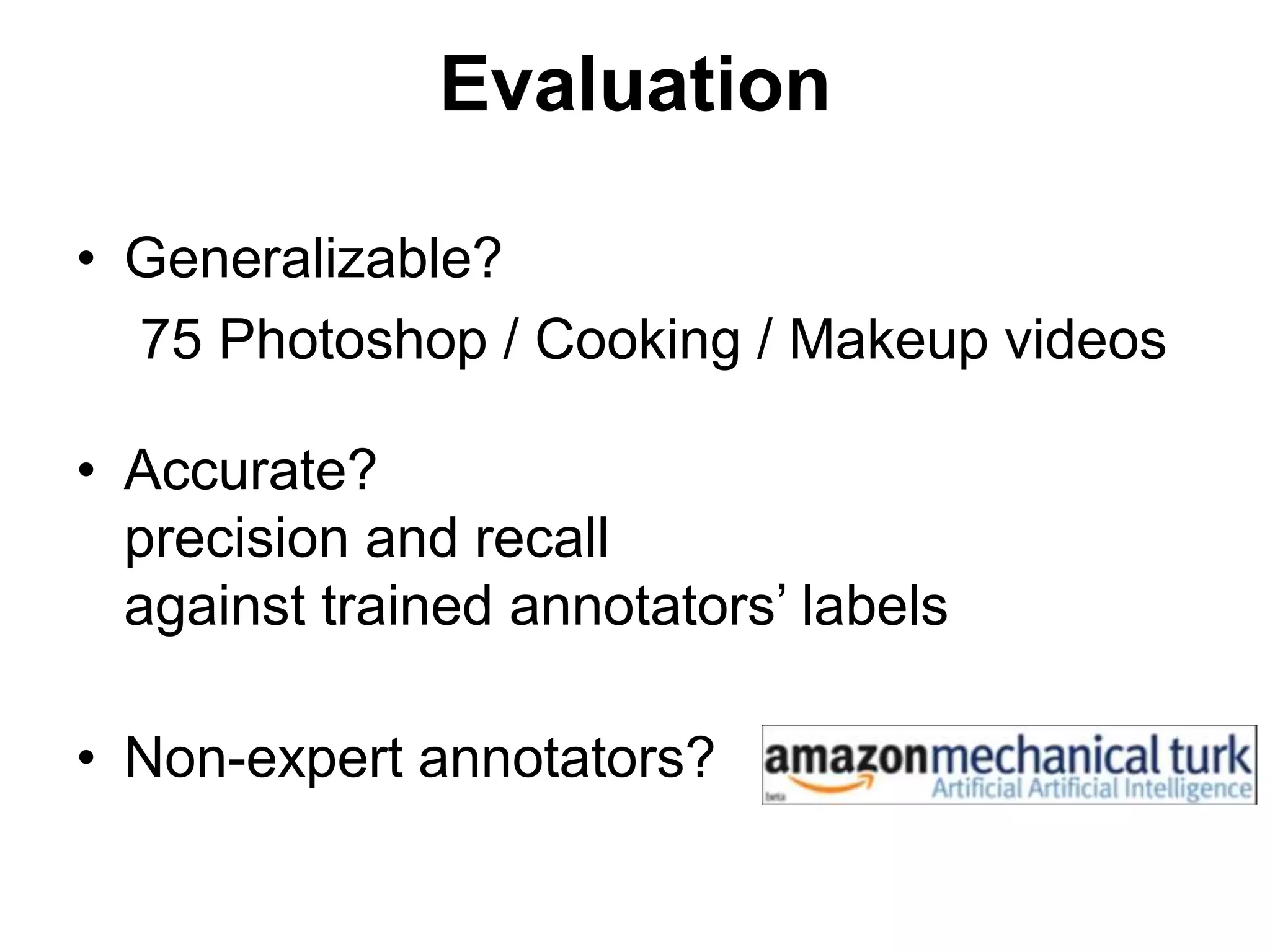

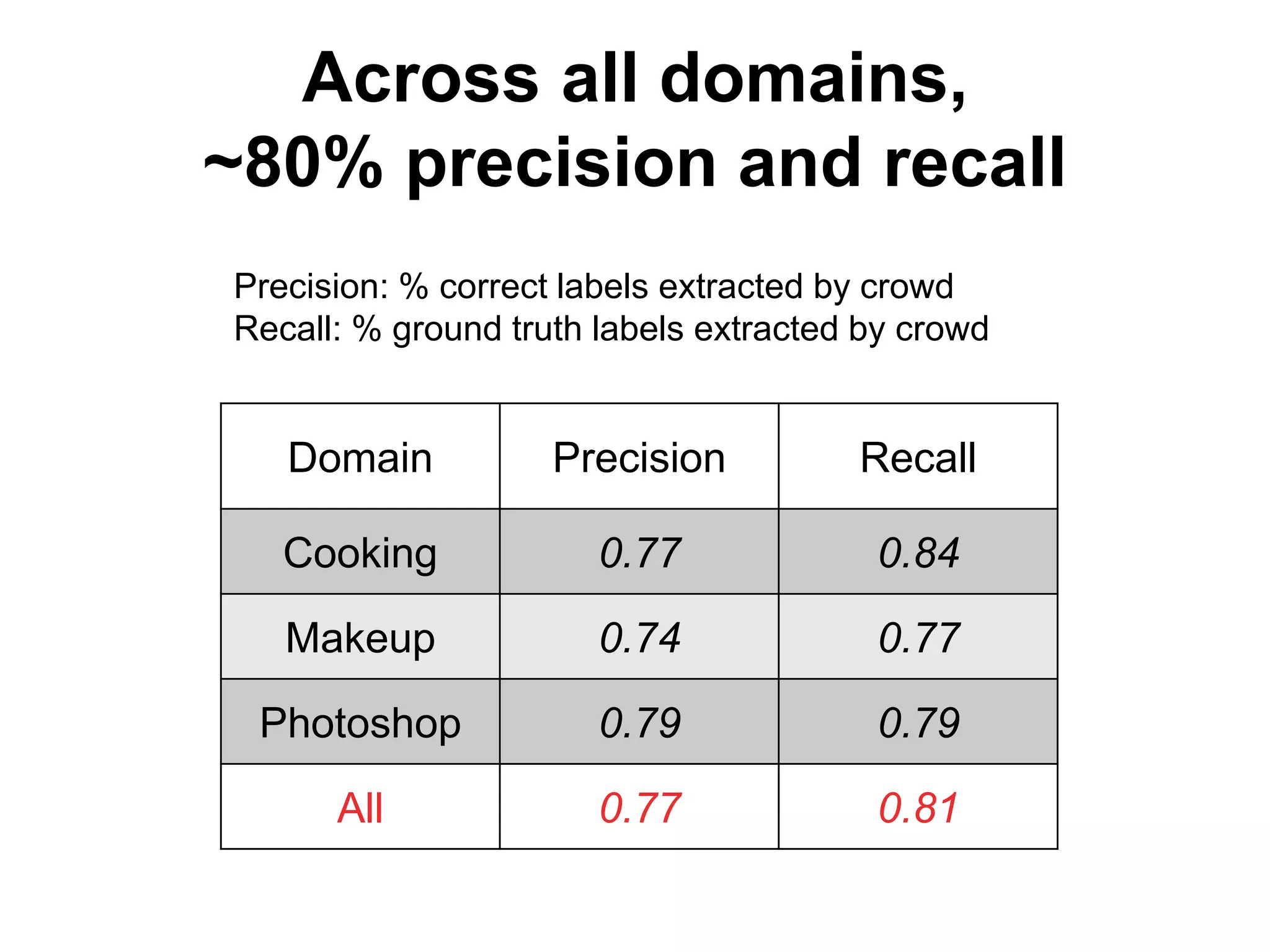

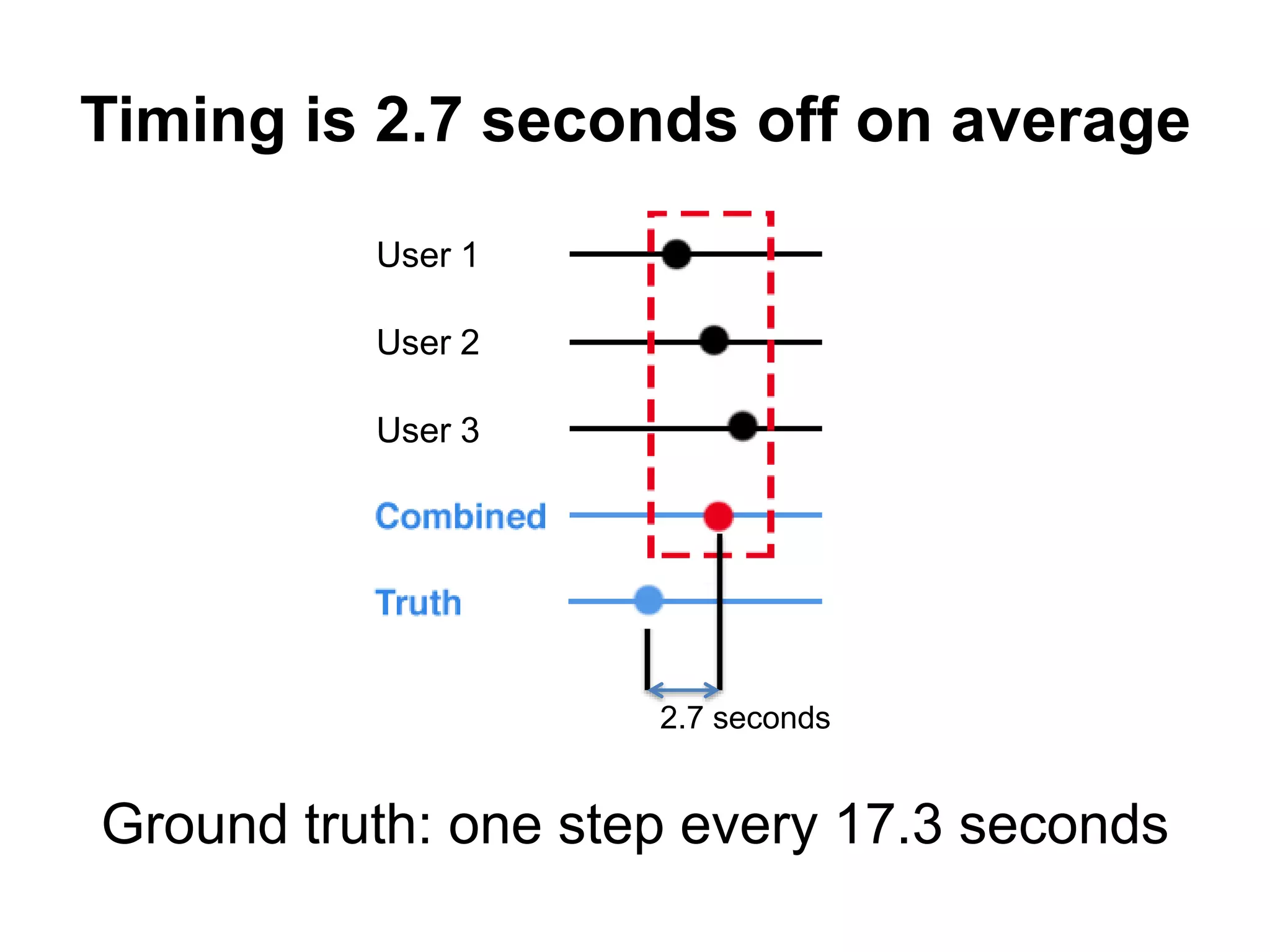

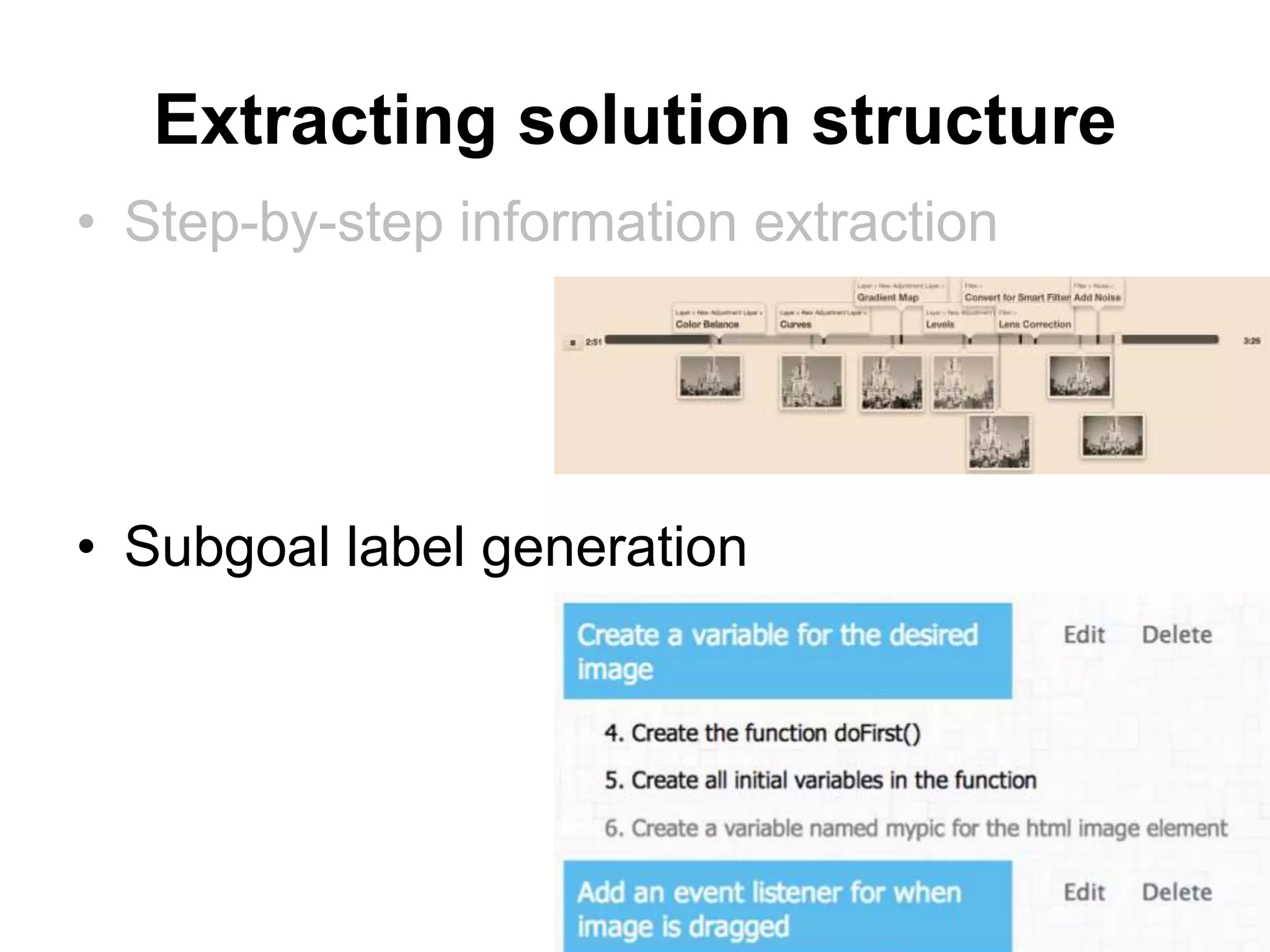

II. Active learnersourcing (how-to videos)

– Step-by-step information [CHI 2014]

– Summary of steps [CSCW 2015]](https://image.slidesharecdn.com/20150730-thesis-defense-web-150805095116-lva1-app6892/75/Learnersourcing-Improving-Learning-with-Collective-Learner-Activity-19-2048.jpg)

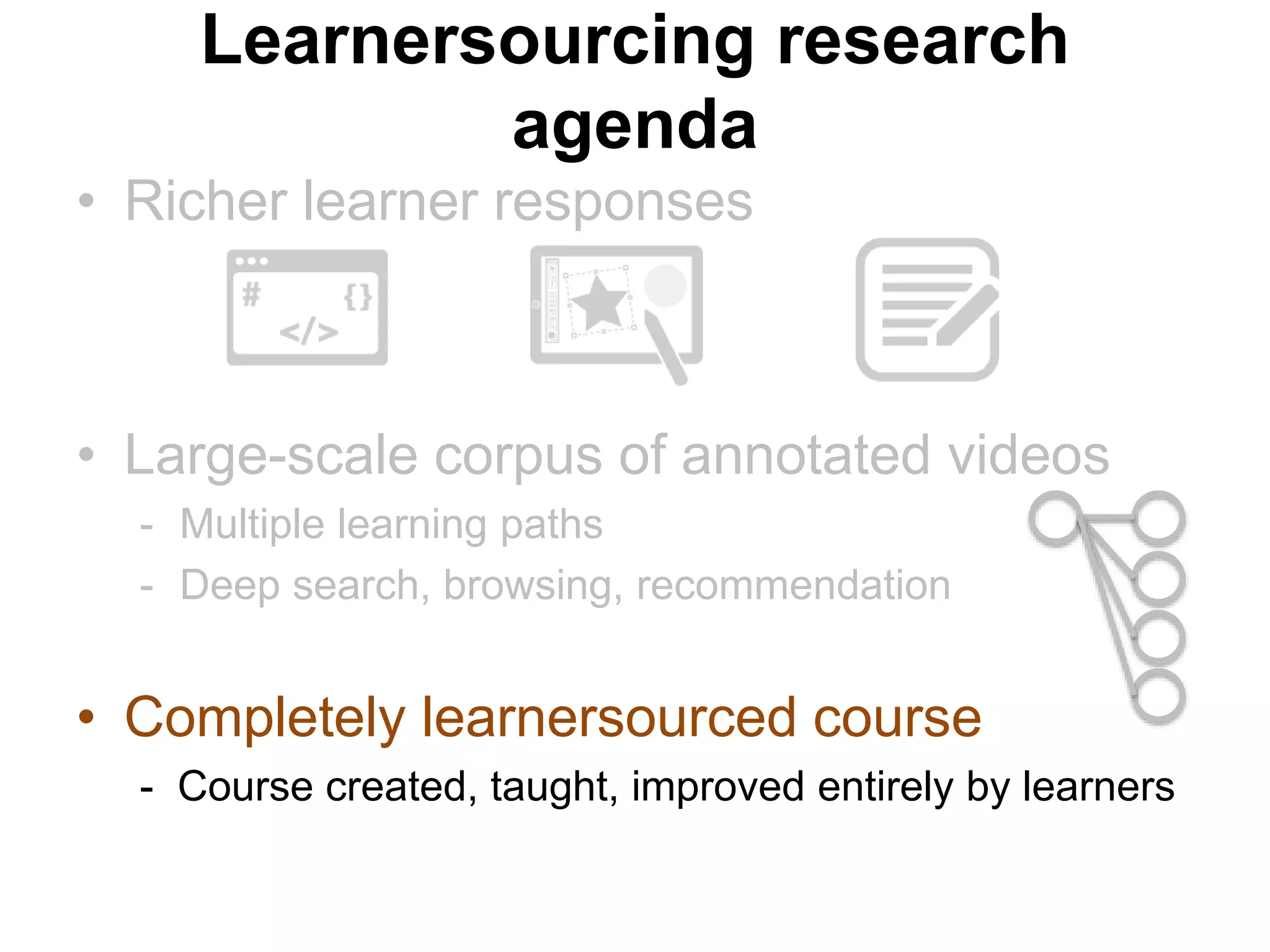

![Phantom cursor

• Visual & physical emphasis on interaction peaks

• Read wear [Hill et al., 1992], Semantic pointing [Blanch et al., 2004],

Pseudo-haptic feedback [Lécuyer et al., 2004]](https://image.slidesharecdn.com/20150730-thesis-defense-web-150805095116-lva1-app6892/75/Learnersourcing-Improving-Learning-with-Collective-Learner-Activity-38-2048.jpg)
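One way to picture "physical emphasis on interaction peaks" is a control-display gain that drops as the scrubbing cursor nears a peak, so the same mouse movement covers less of the timeline there. The sketch below is an illustration of that general pseudo-haptic idea under my own assumptions, not LectureScape's implementation: the names `cursor_gain` and `scrub`, the linear falloff, and the `radius` and `min_gain` defaults are all hypothetical.

```python
def cursor_gain(position, peaks, radius=5.0, min_gain=0.4):
    """Return a gain in [min_gain, 1.0] for a timeline position (seconds).

    The gain is `min_gain` exactly on a peak and rises linearly to 1.0
    at `radius` seconds away from the nearest peak. All parameters are
    illustrative assumptions.
    """
    if not peaks:
        return 1.0
    distance = min(abs(position - p) for p in peaks)
    if distance >= radius:
        return 1.0
    return min_gain + (1.0 - min_gain) * (distance / radius)


def scrub(position, mouse_dx, peaks, radius=5.0, min_gain=0.4):
    """Move the timeline position by a mouse delta scaled by the local gain."""
    return position + mouse_dx * cursor_gain(position, peaks, radius, min_gain)


peaks = [40.0]                       # hypothetical interaction peak at 40 s
far = scrub(10.0, 2.0, peaks)        # far from the peak: full-speed movement
near = scrub(40.0, 2.0, peaks)       # on the peak: movement is damped
```

Here the same mouse delta moves the cursor a full 2 seconds far from the peak but only 0.8 seconds on it, which makes the peak feel "sticky" without blocking navigation.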

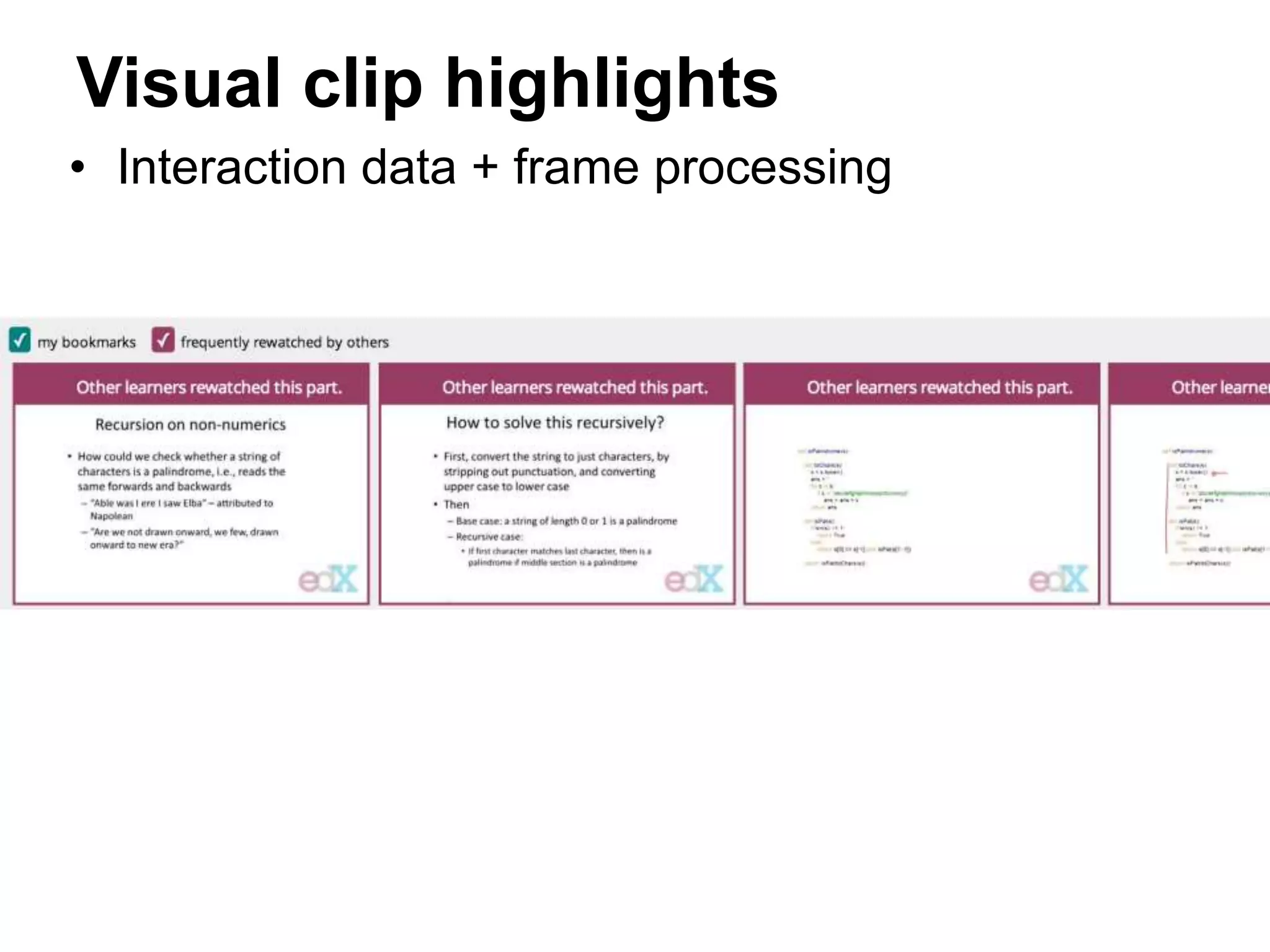

![Diverse navigation patterns

With LectureScape:

• more non-linear jumps in navigation

• more navigation options

- rollercoaster timeline

- phantom cursor

- highlight summary

- pinning

“[LectureScape] gives you more options.

It personalizes the strategy I can use in the task.”](https://image.slidesharecdn.com/20150730-thesis-defense-web-150805095116-lva1-app6892/75/Learnersourcing-Improving-Learning-with-Collective-Learner-Activity-43-2048.jpg)

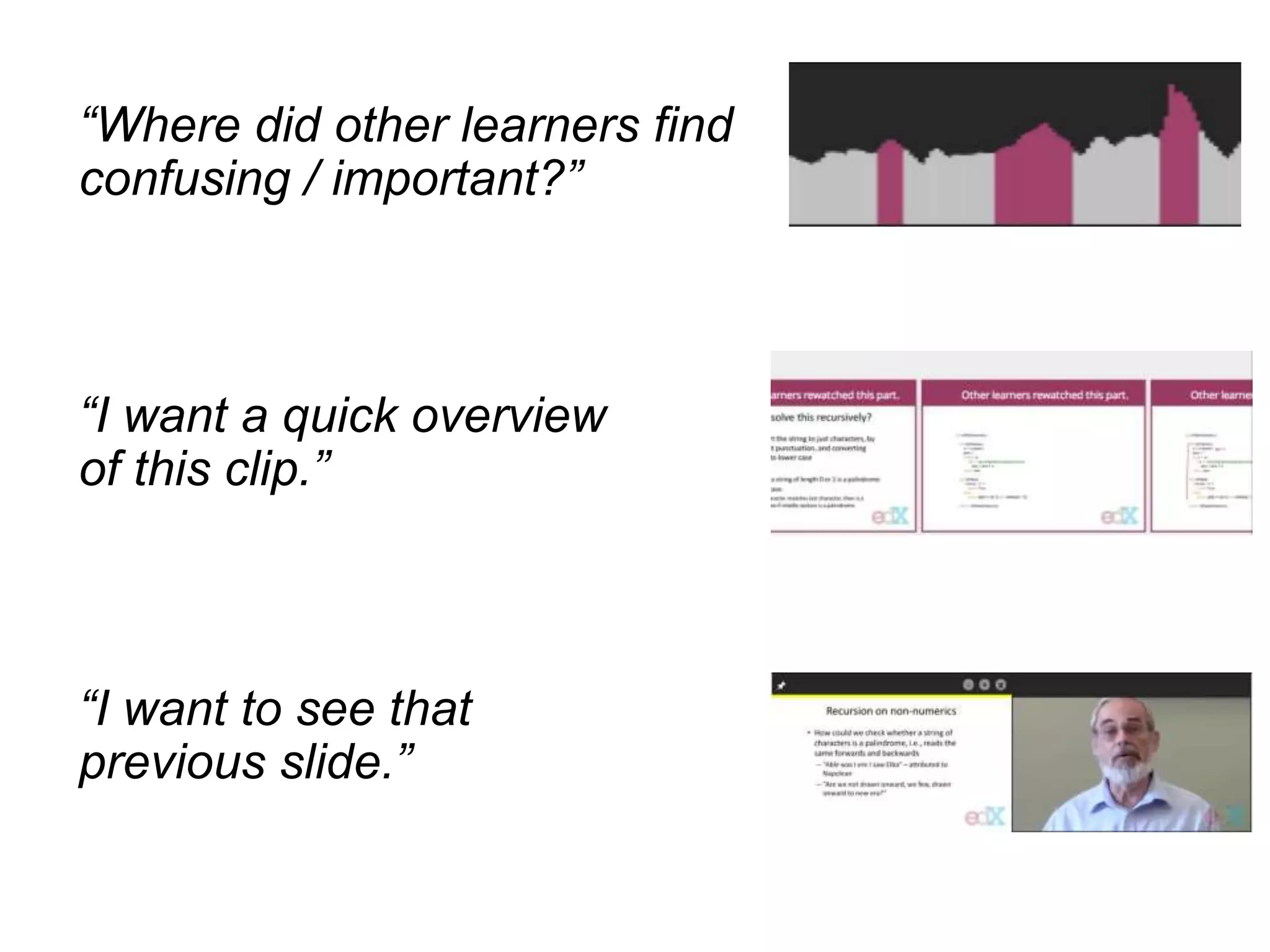

![Interaction data give a sense of “learning together”

Interaction peaks matched with participants’ points of “confusion” (8/12) and “importance” (6/12)

“It’s not like cold-watching. It feels like watching with other students.”

“[interaction data] makes it seem more classroom-y, as in you can compare yourself to how other students are learning and what they need to repeat.”](https://image.slidesharecdn.com/20150730-thesis-defense-web-150805095116-lva1-app6892/75/Learnersourcing-Improving-Learning-with-Collective-Learner-Activity-44-2048.jpg)

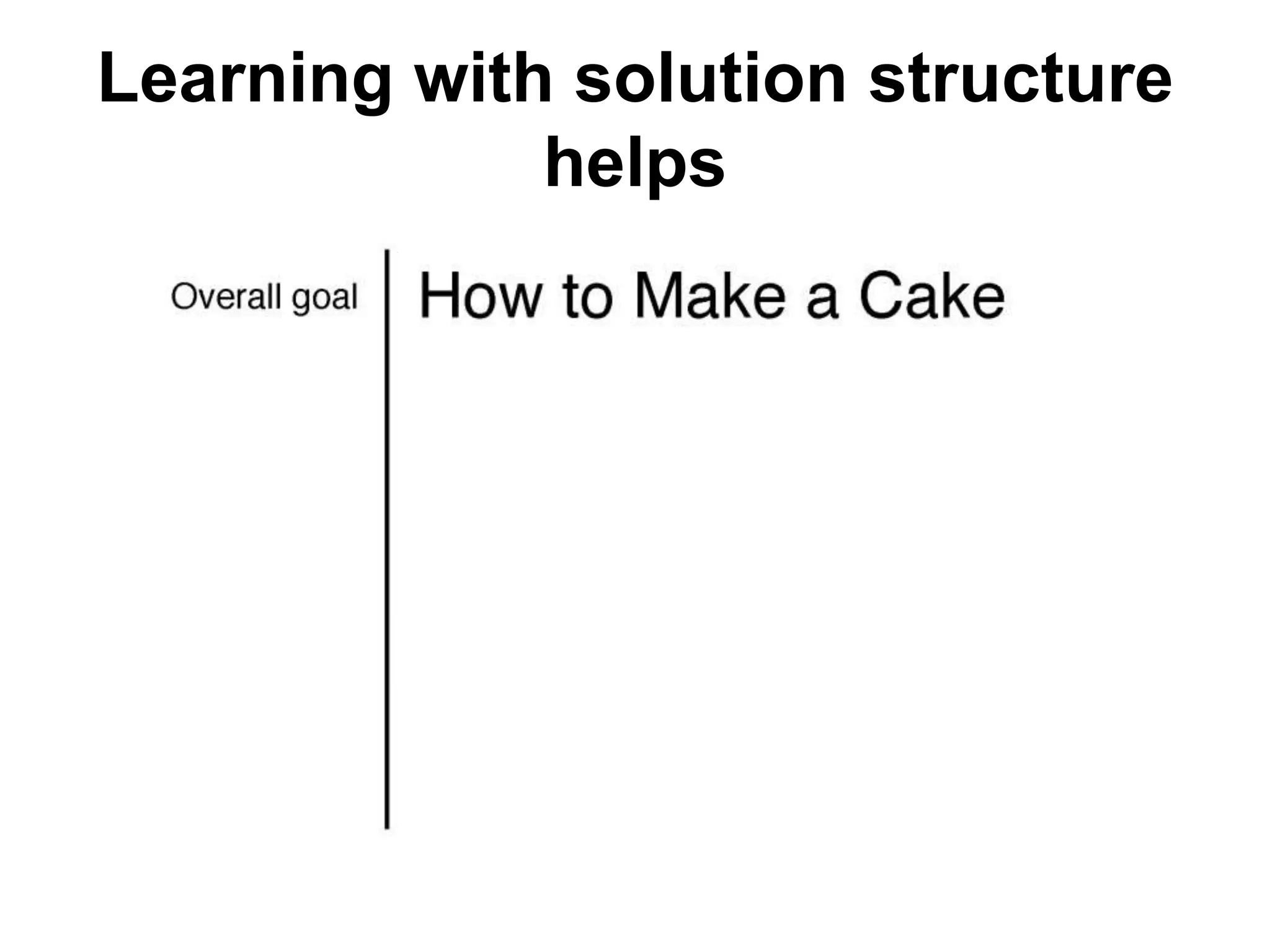

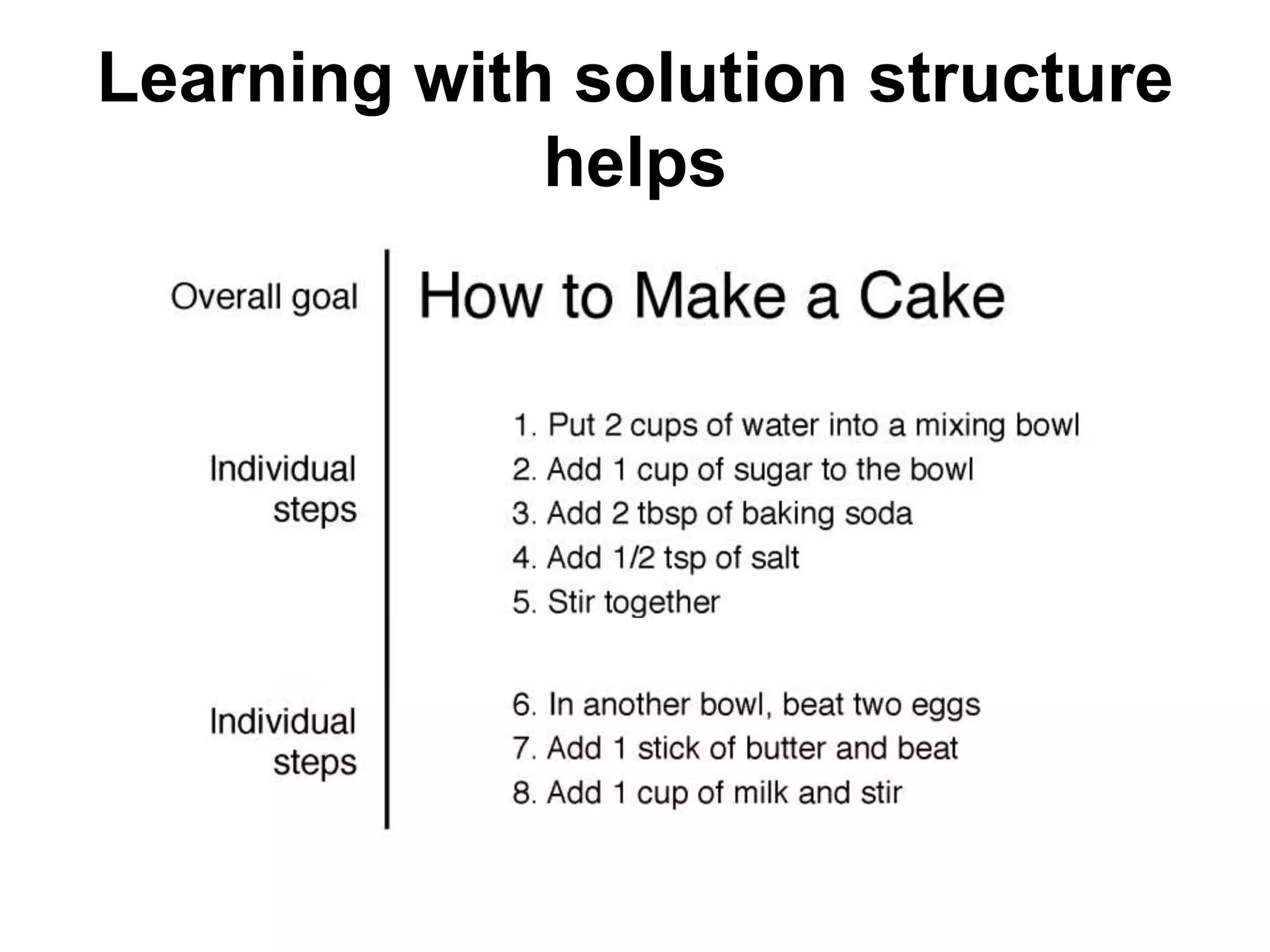

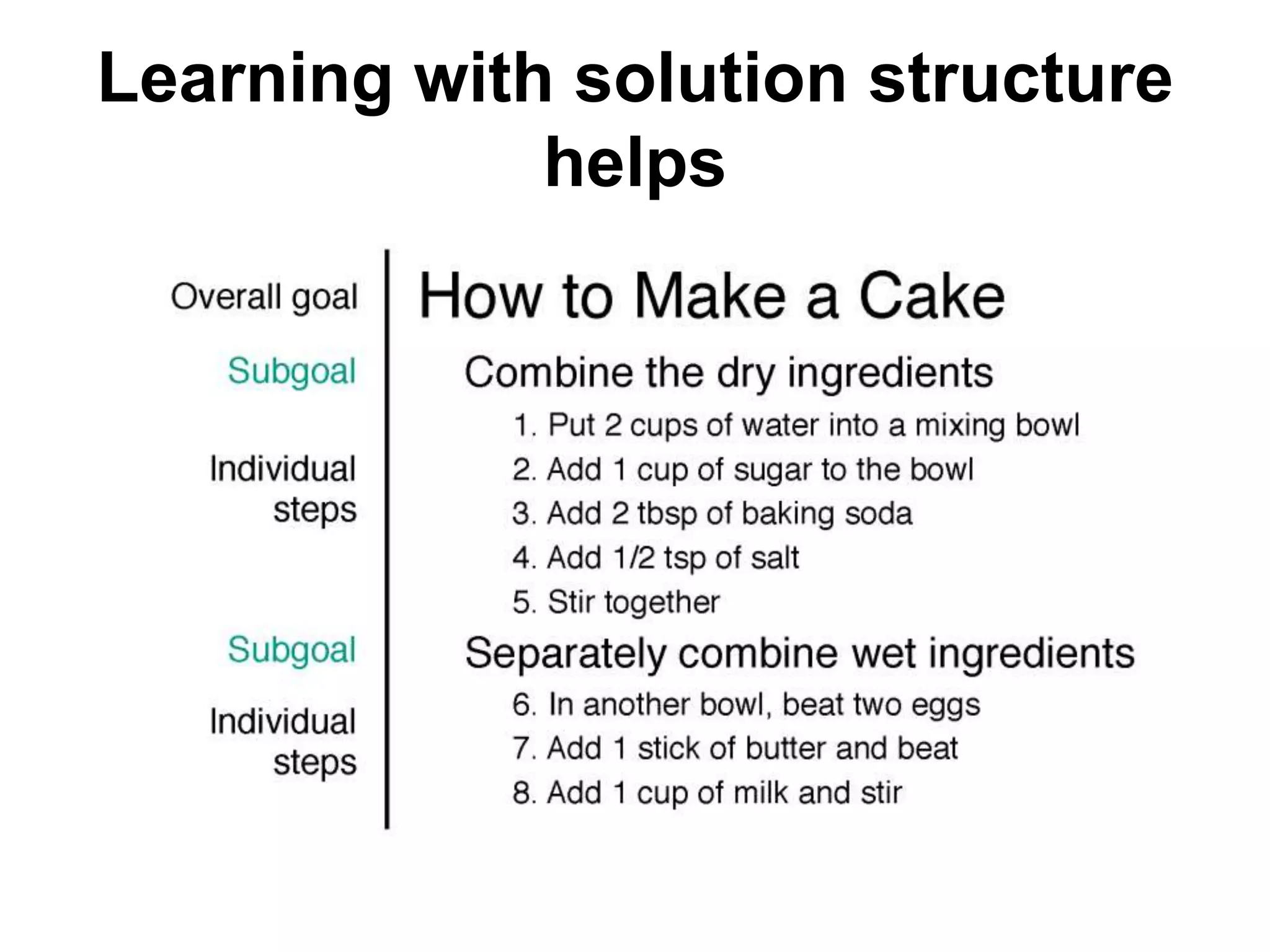

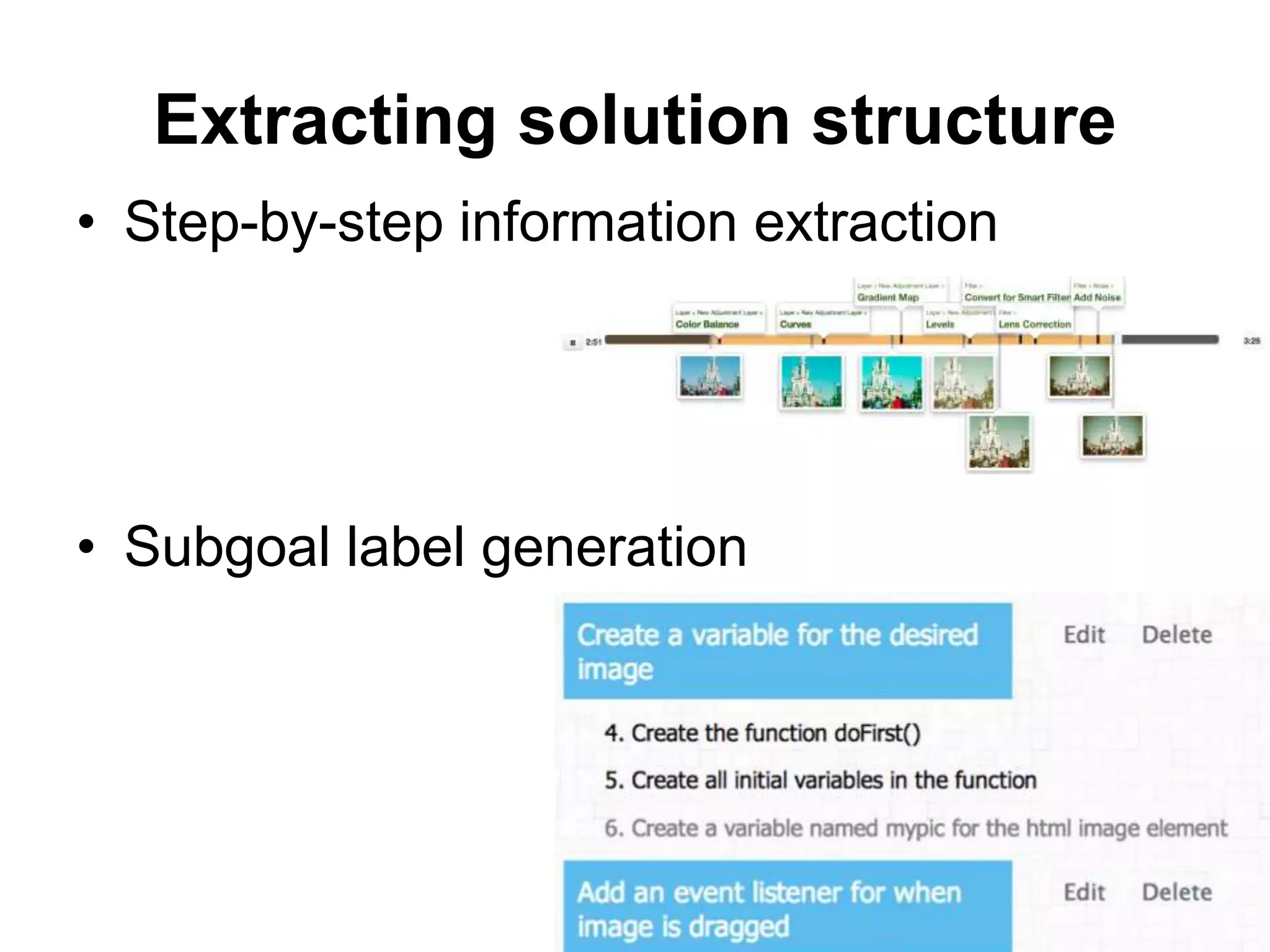

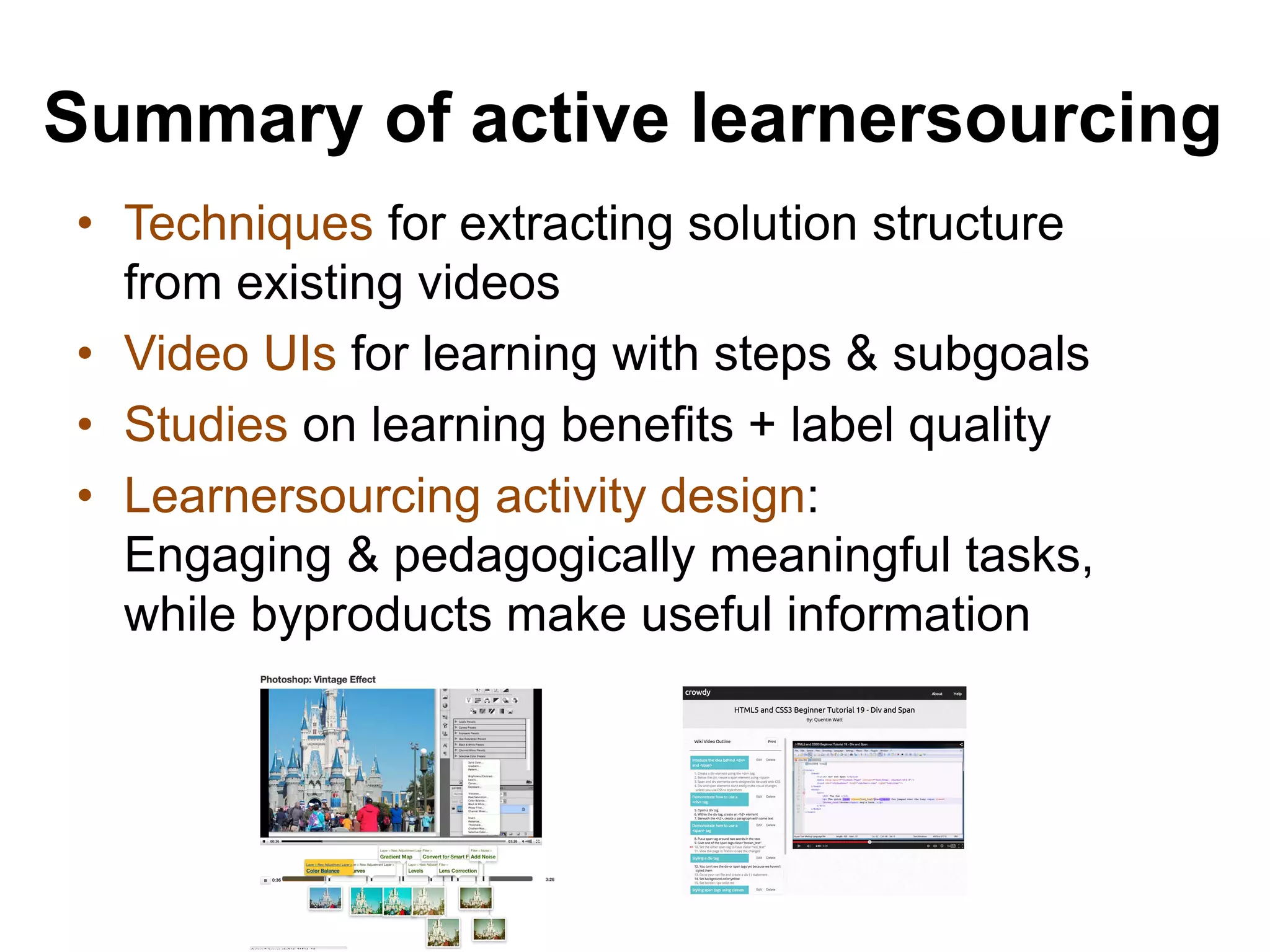

![• Completeness & detail of instructions [Eiriksdottir and Catrambone, 2011]

• Proactive & random access in instructional videos [Zhang et al., 2006]

• Interactivity: stopping, starting and replaying [Tversky et al., 2002]

• Subgoals: a group of steps representing task structures [Catrambone, 1994, 1998]

Seeing and interacting with solution structure helps learning](https://image.slidesharecdn.com/20150730-thesis-defense-web-150805095116-lva1-app6892/75/Learnersourcing-Improving-Learning-with-Collective-Learner-Activity-51-2048.jpg)

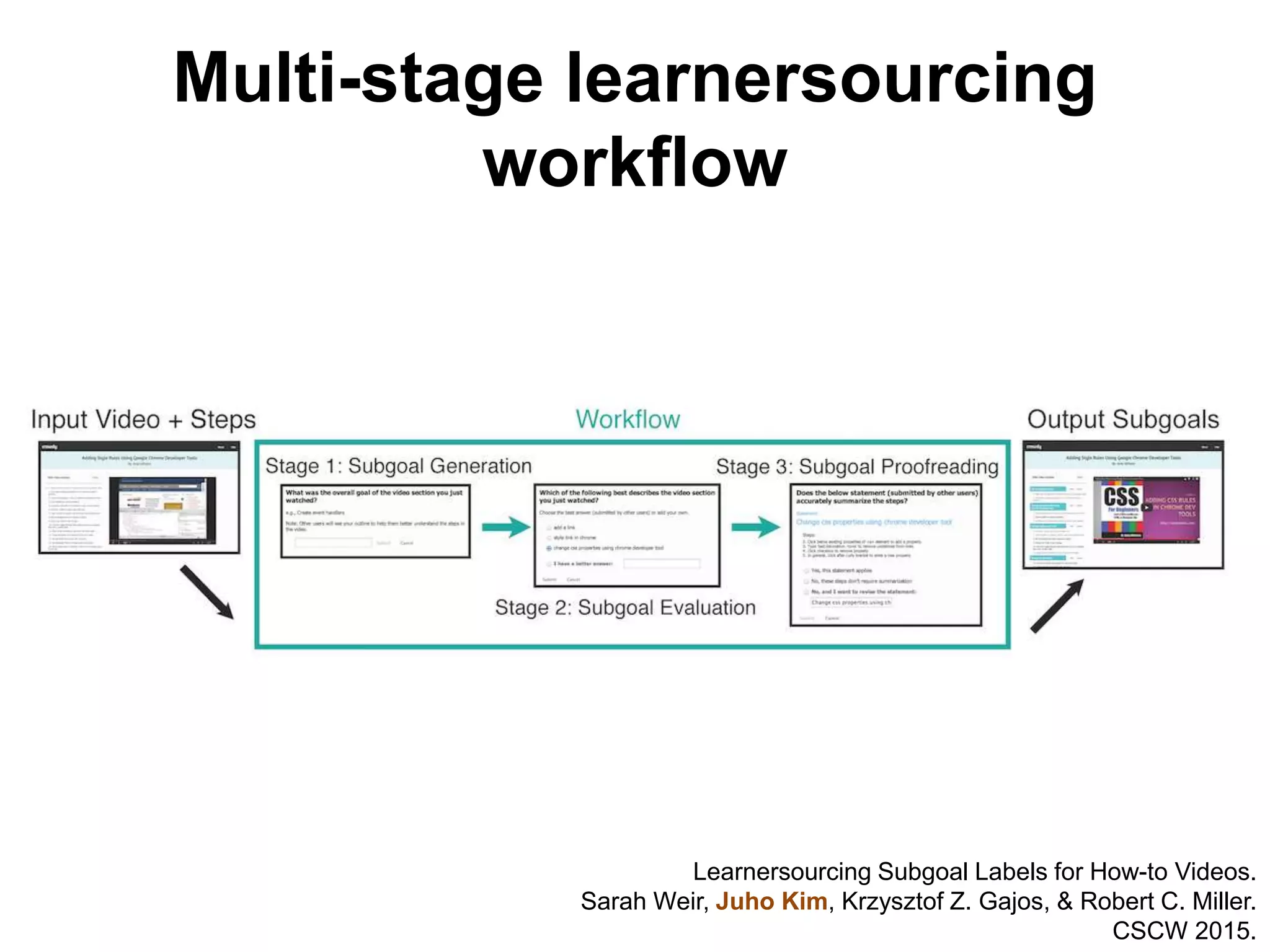

![Crowd-powered algorithms

improvement $0.05 3 votes @ $0.01

…

Crowd workflow for complex tasks

• Soylent [UIST 2010], CrowdForge [UIST 2011],

PlateMate [UIST 2011], Turkomatic [CSCW 2012]](https://image.slidesharecdn.com/20150730-thesis-defense-web-150805095116-lva1-app6892/75/Learnersourcing-Improving-Learning-with-Collective-Learner-Activity-67-2048.jpg)

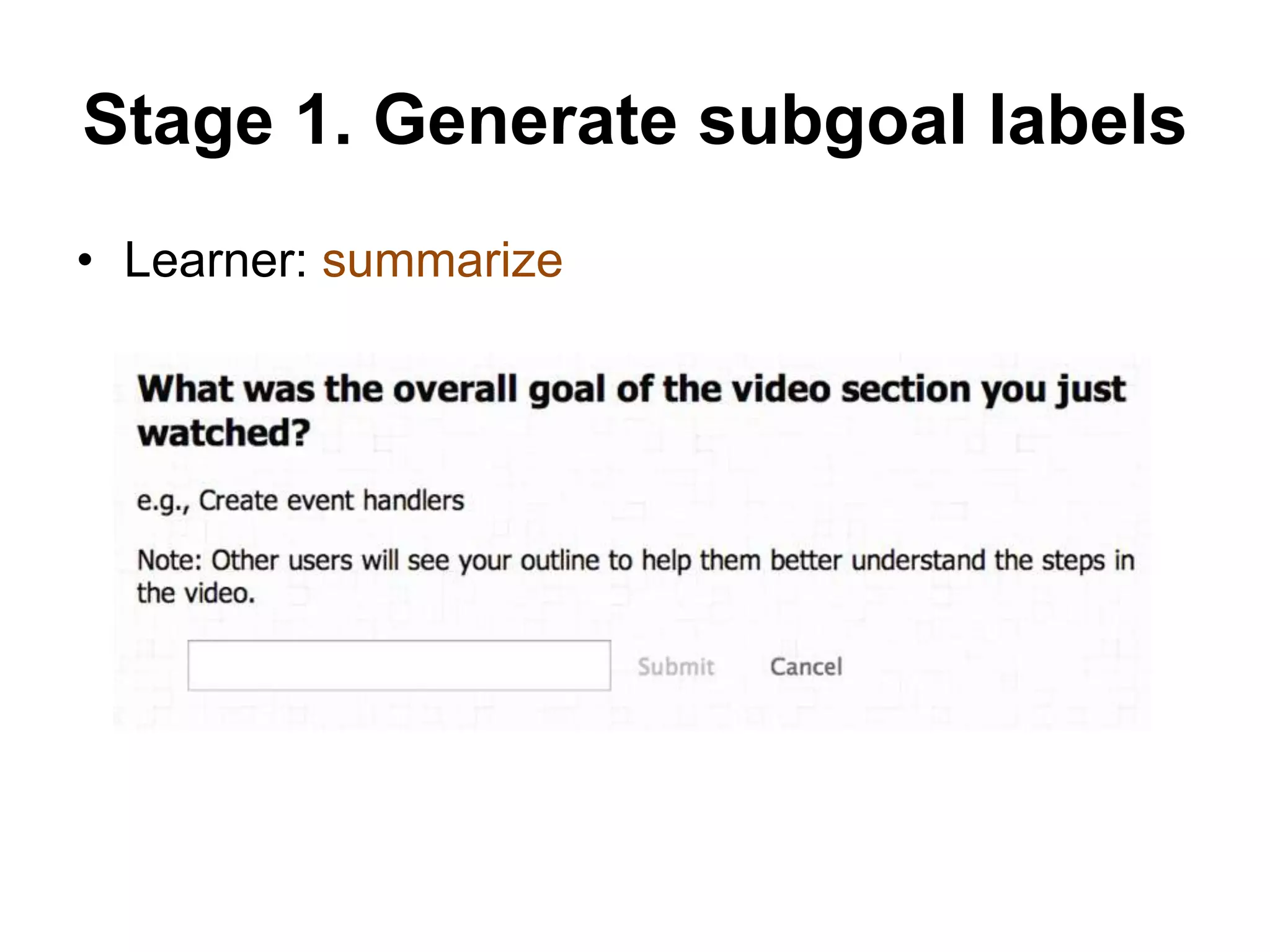

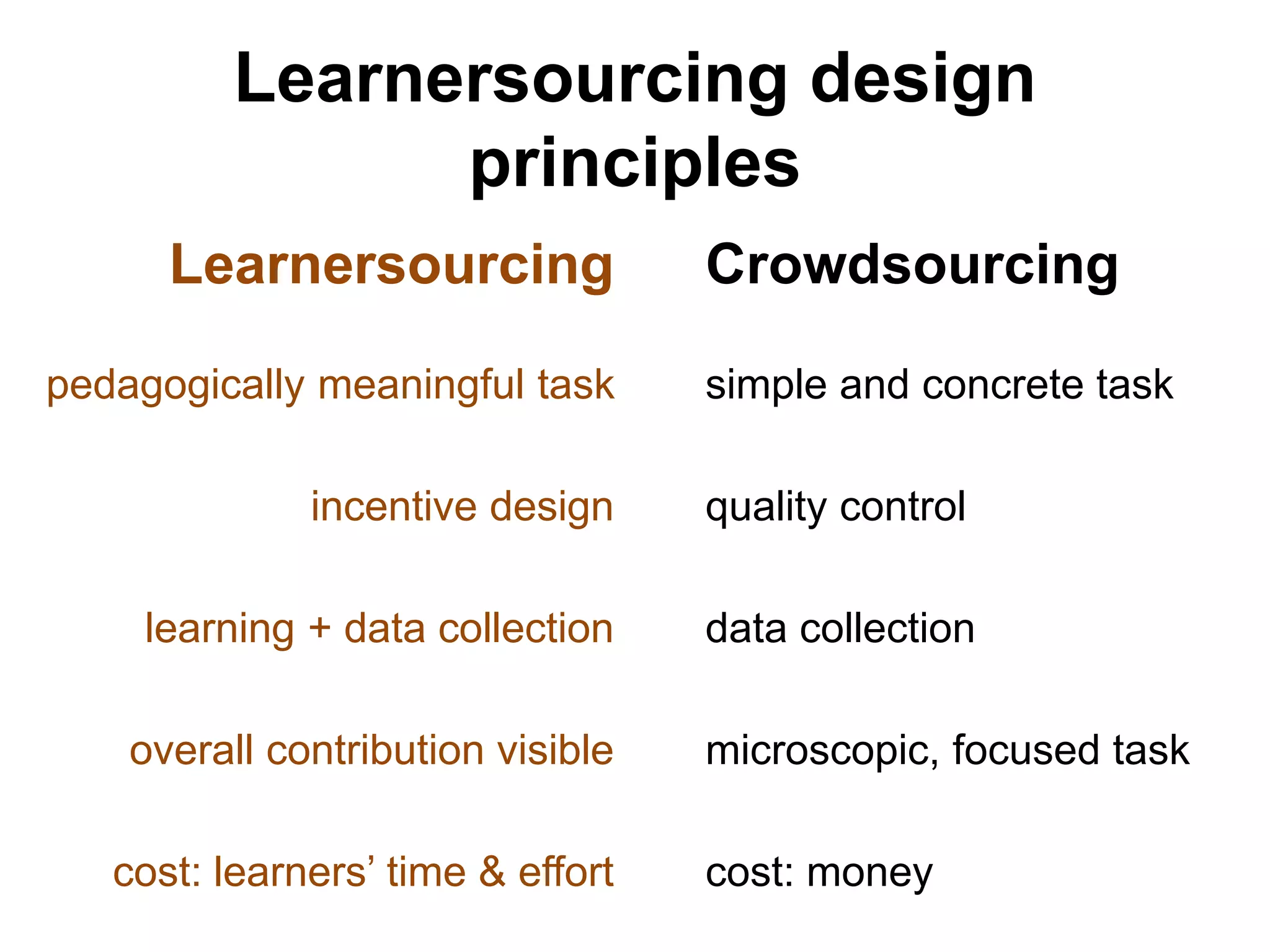

![Generating subgoal labels is difficult

• Requires domain experts and knowledge extraction experts to work together. [Catrambone, 2011]

• Insight: the subgoal labeling process is a good exercise for learning!

– Reflect on

– Explain

– Summarize](https://image.slidesharecdn.com/20150730-thesis-defense-web-150805095116-lva1-app6892/75/Learnersourcing-Improving-Learning-with-Collective-Learner-Activity-80-2048.jpg)

![Generalizing learnersourcing

community-guided planning, discussion,

decision making, collaborative work

- Conference planning [UIST 2013, CHI 2014, HCOMP 2013, HCOMP 2014]

- Civic engagement [CHI 2015, CHI 2015 EA]](https://image.slidesharecdn.com/20150730-thesis-defense-web-150805095116-lva1-app6892/75/Learnersourcing-Improving-Learning-with-Collective-Learner-Activity-105-2048.jpg)