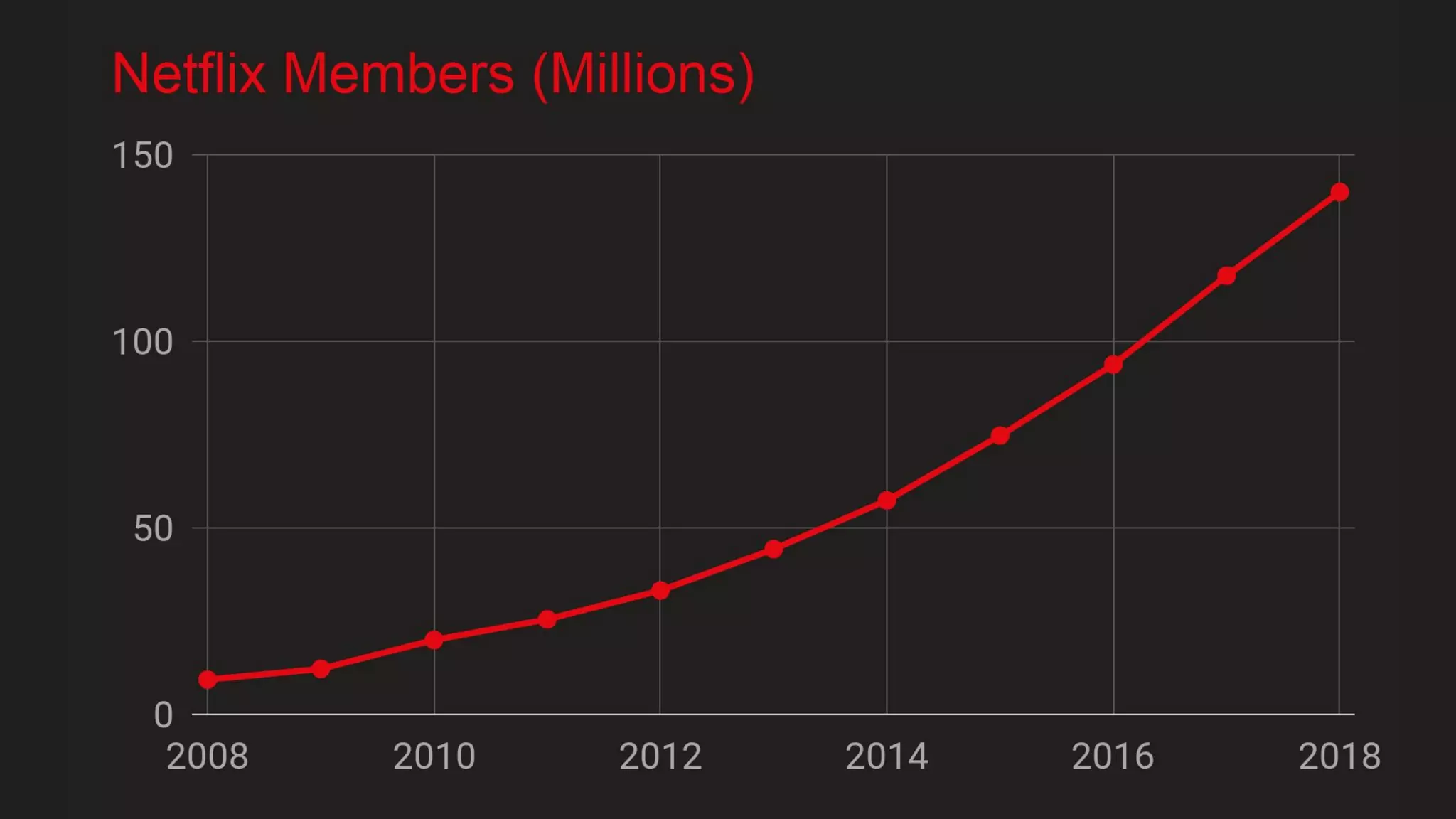

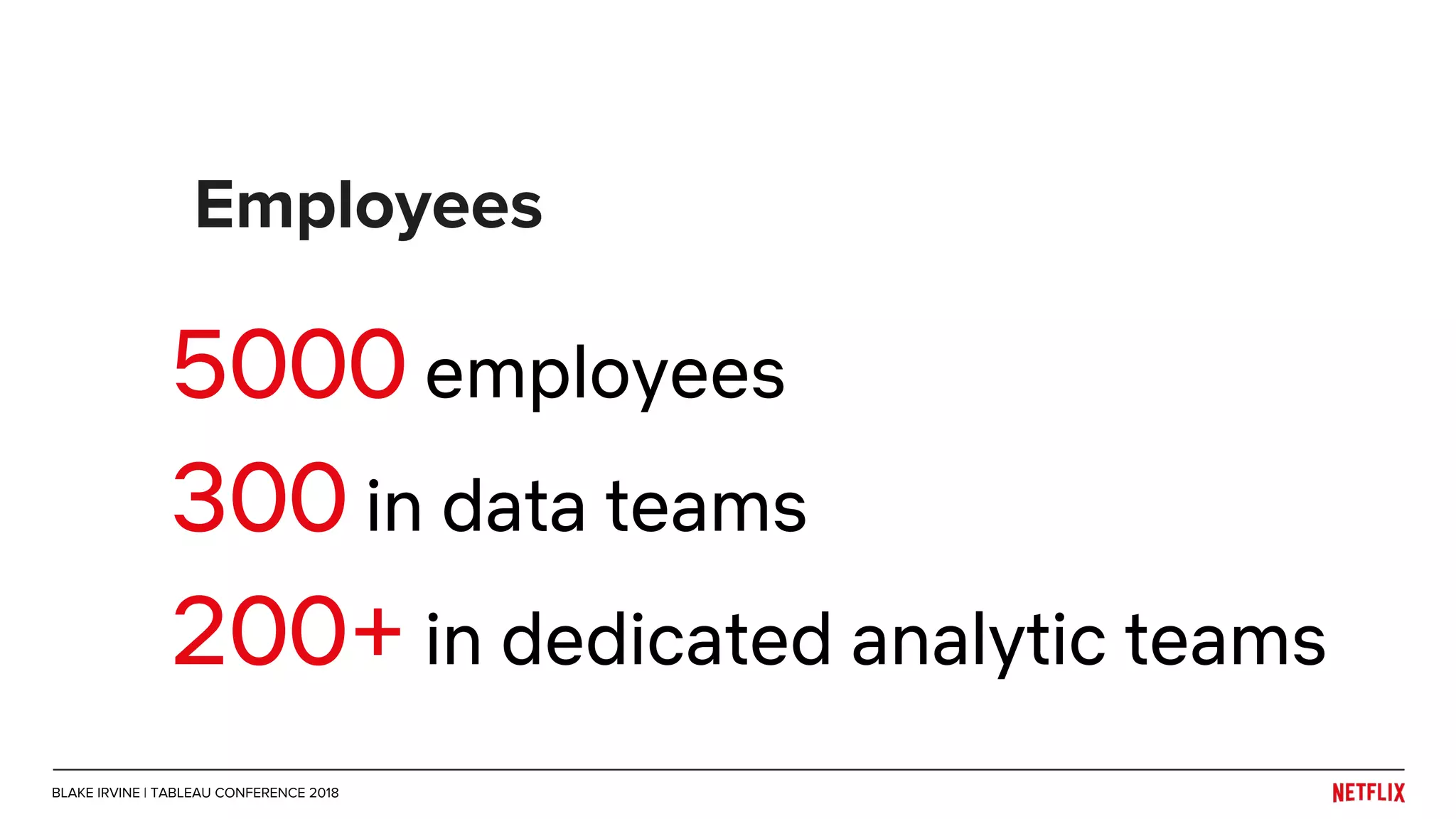

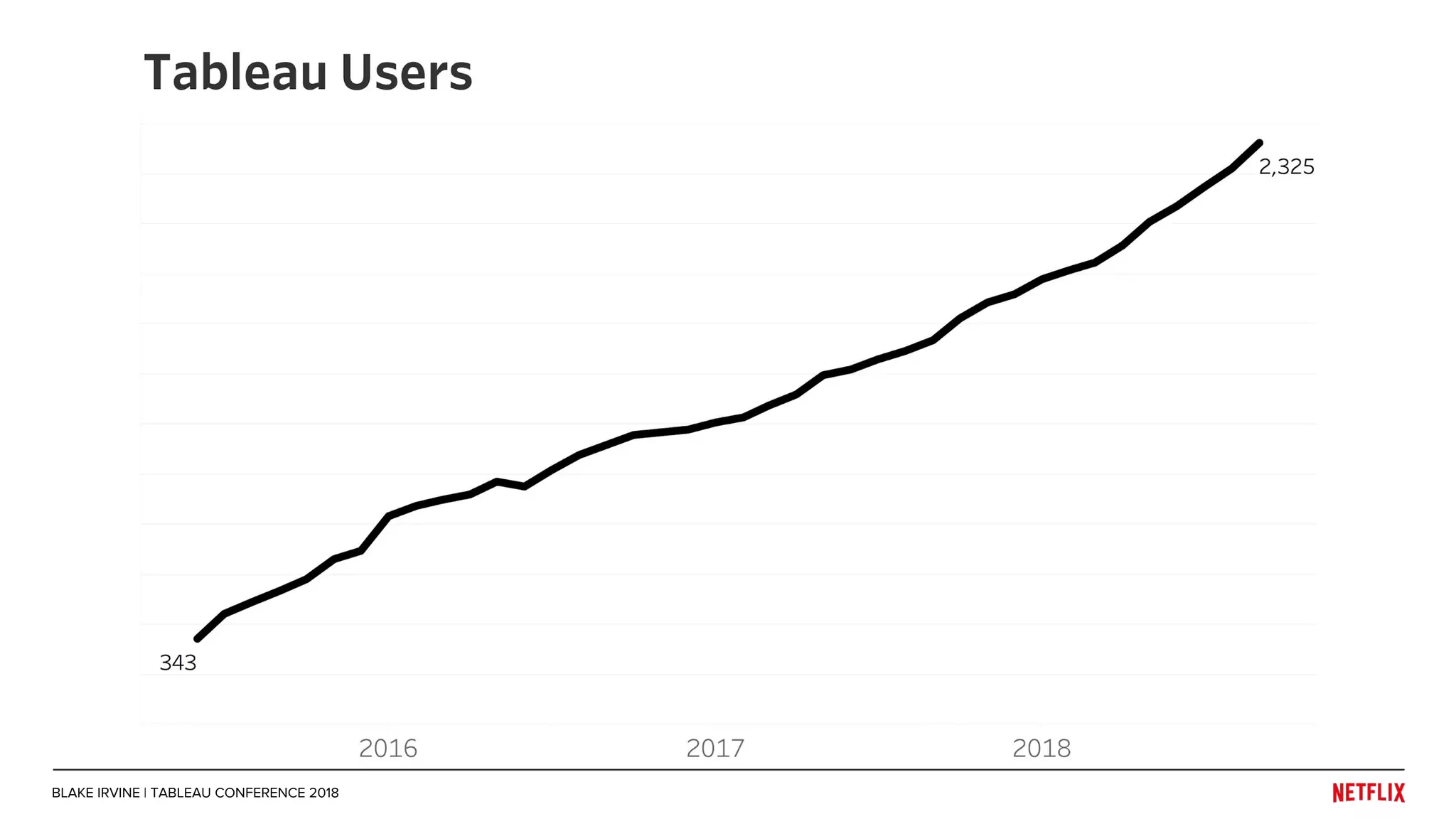

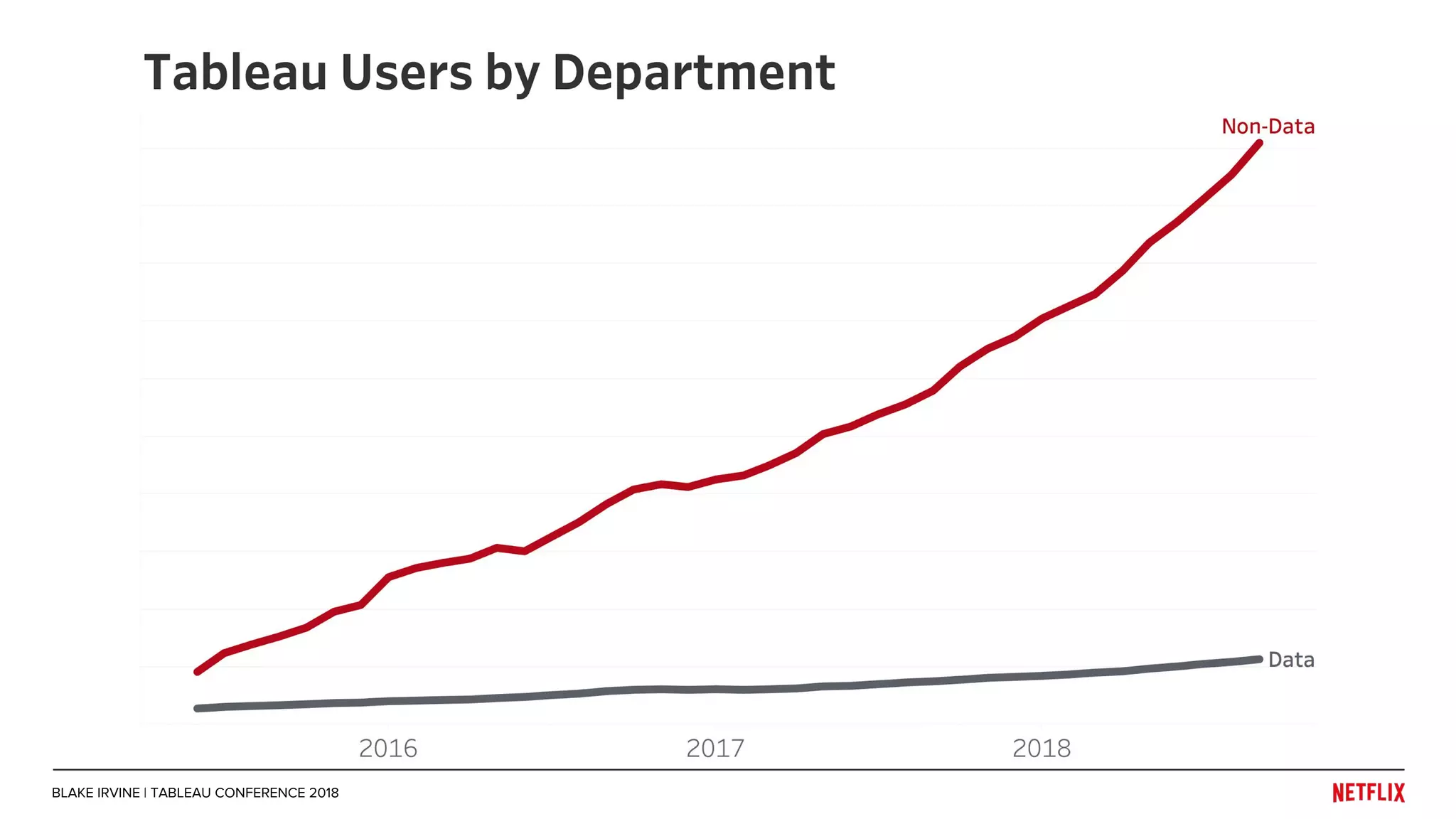

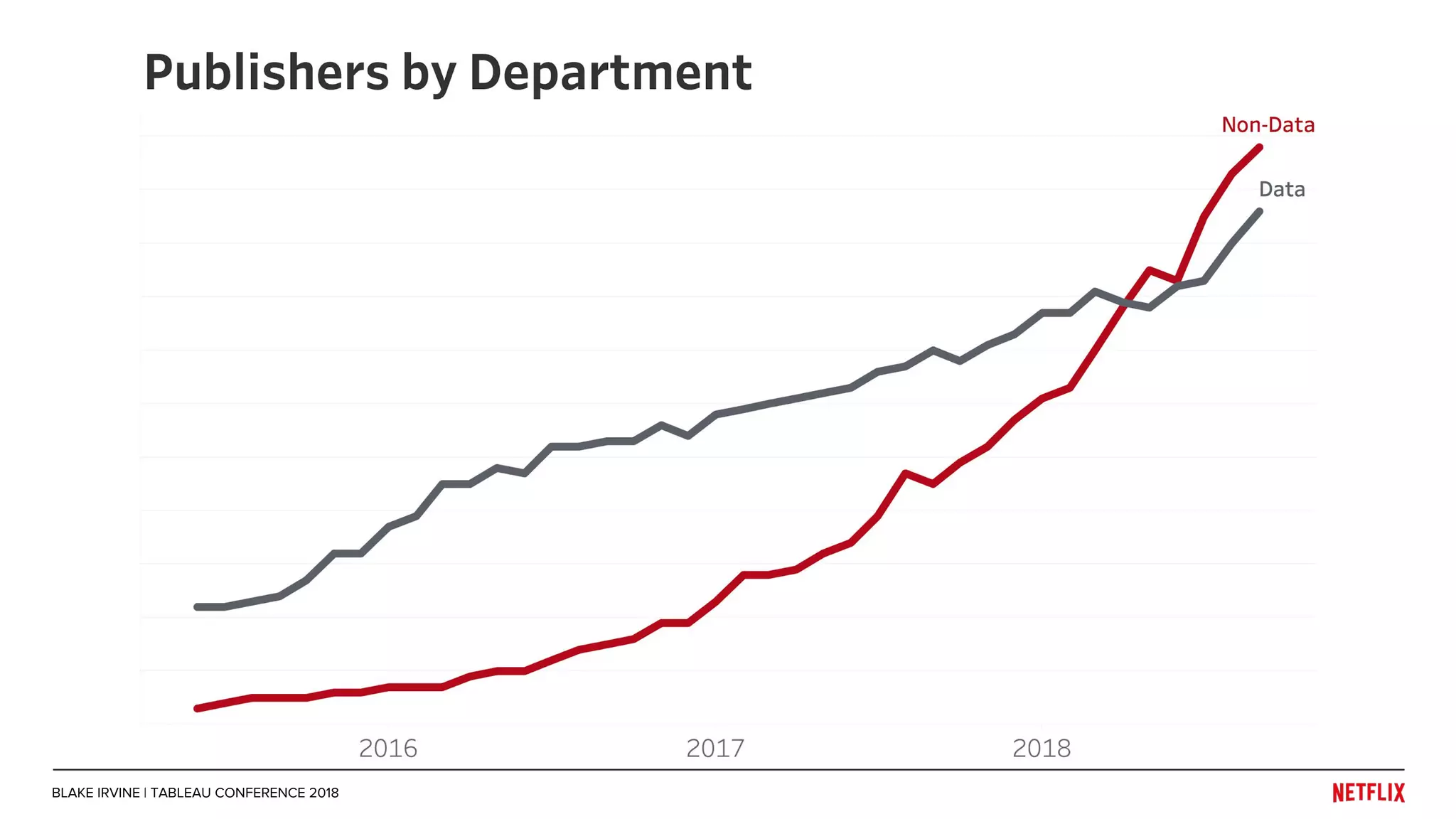

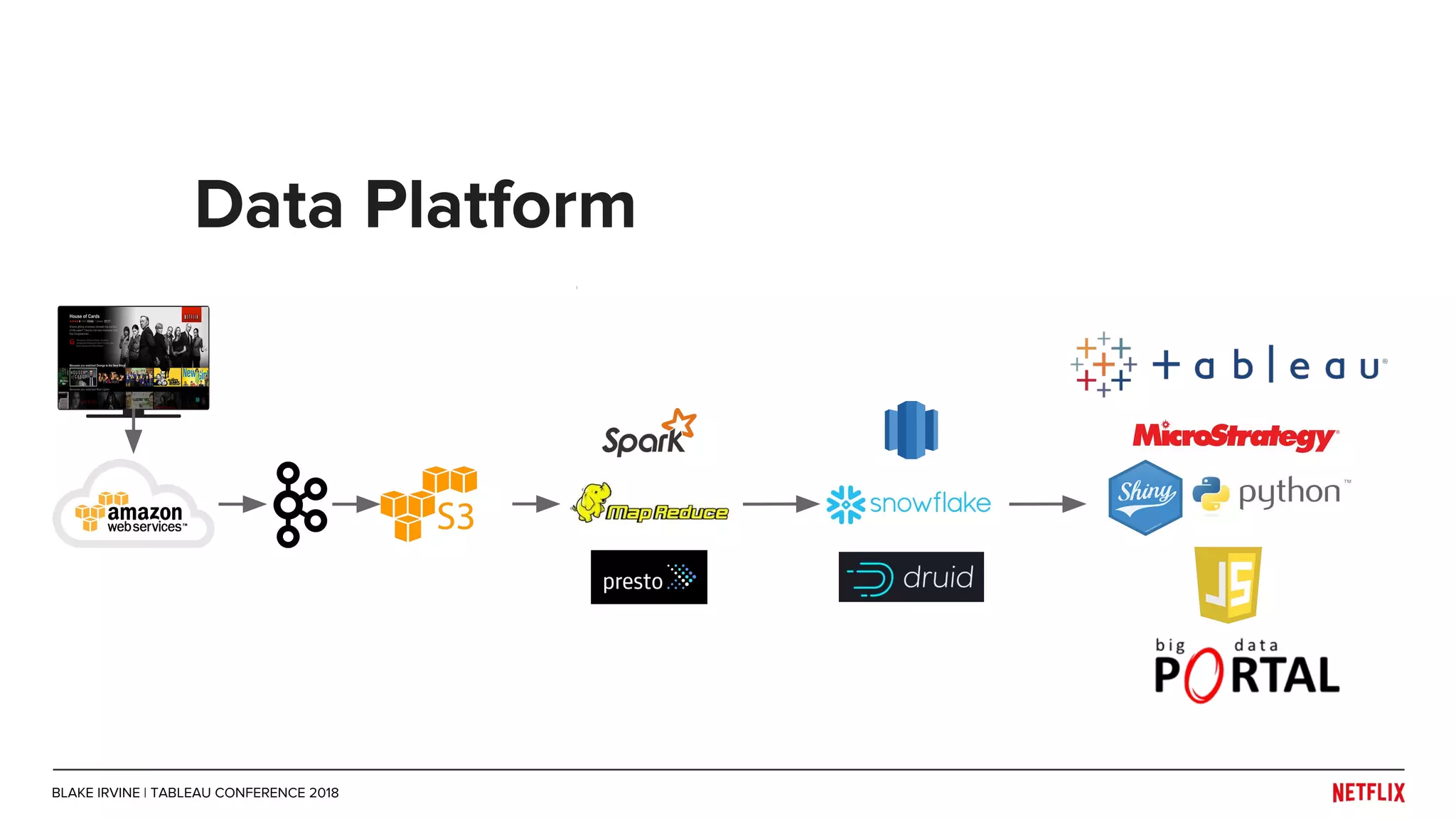

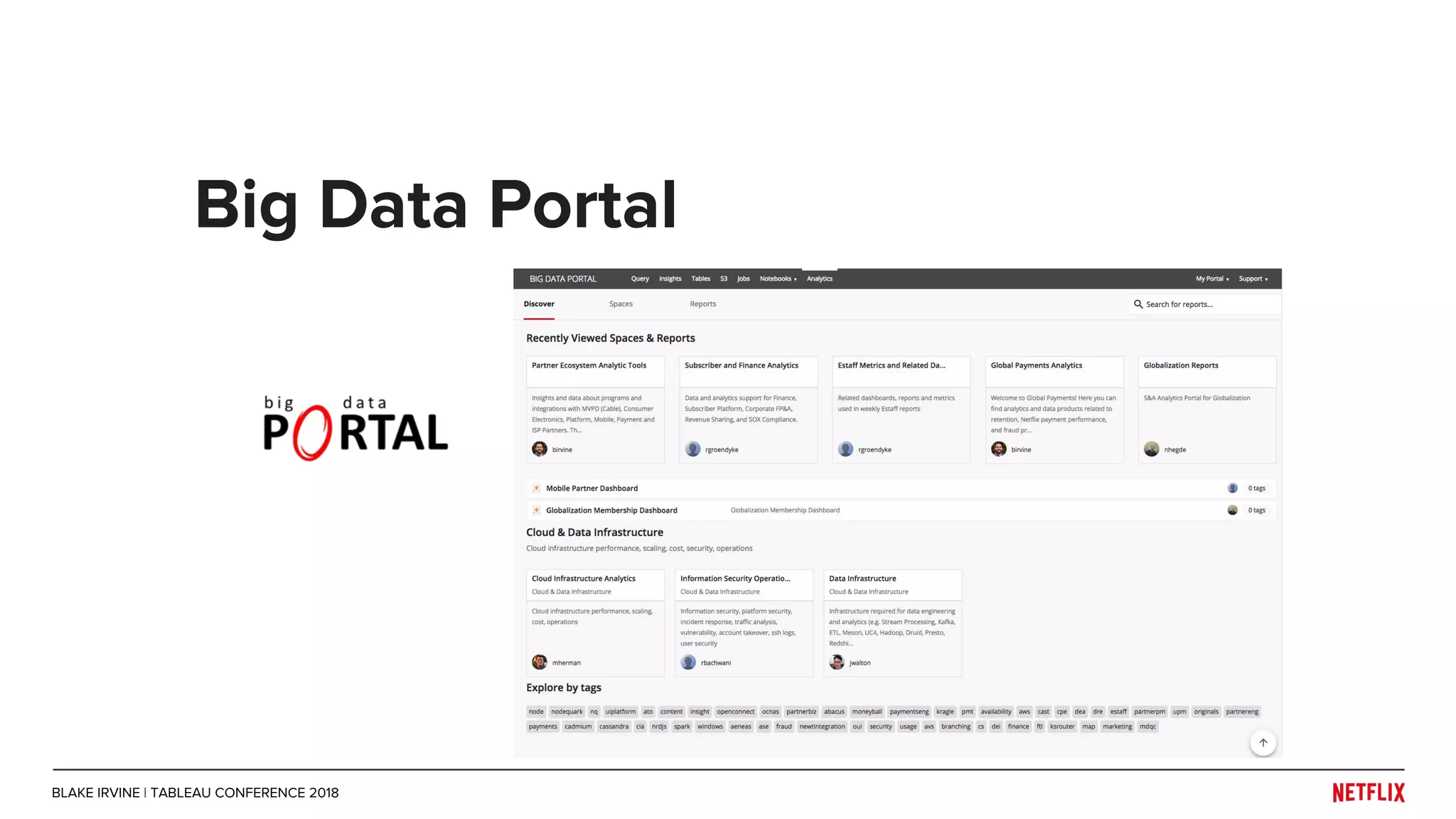

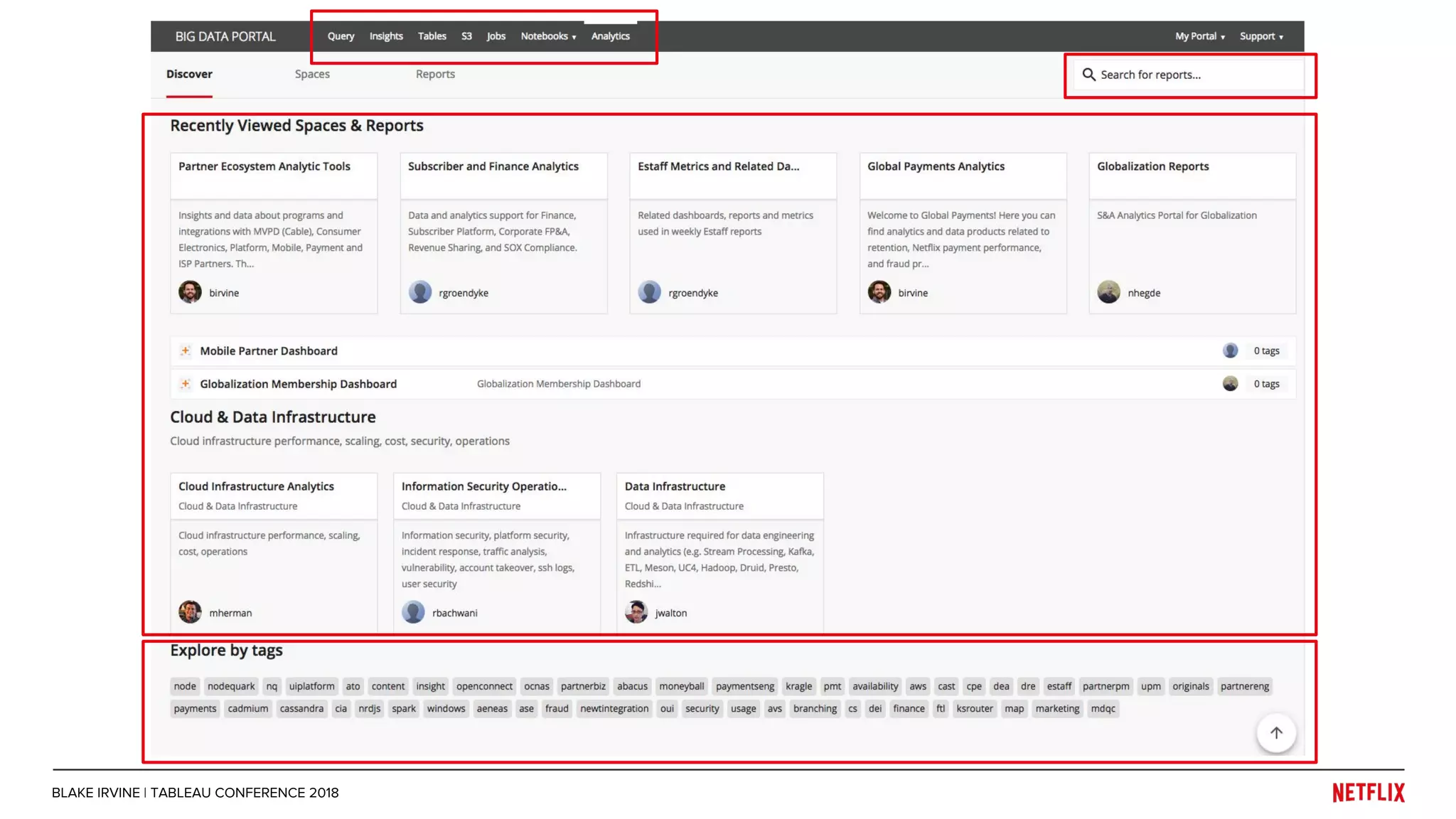

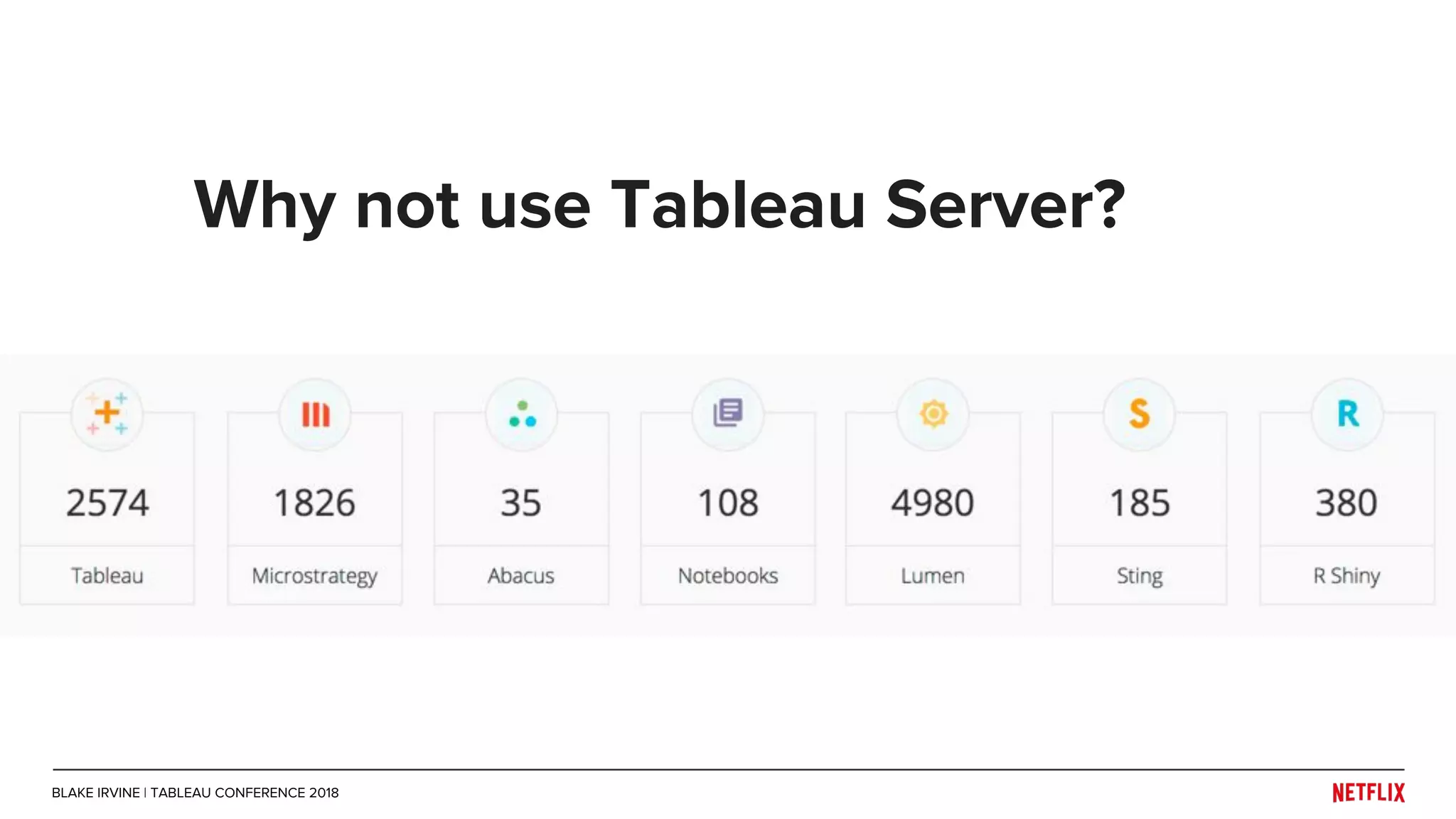

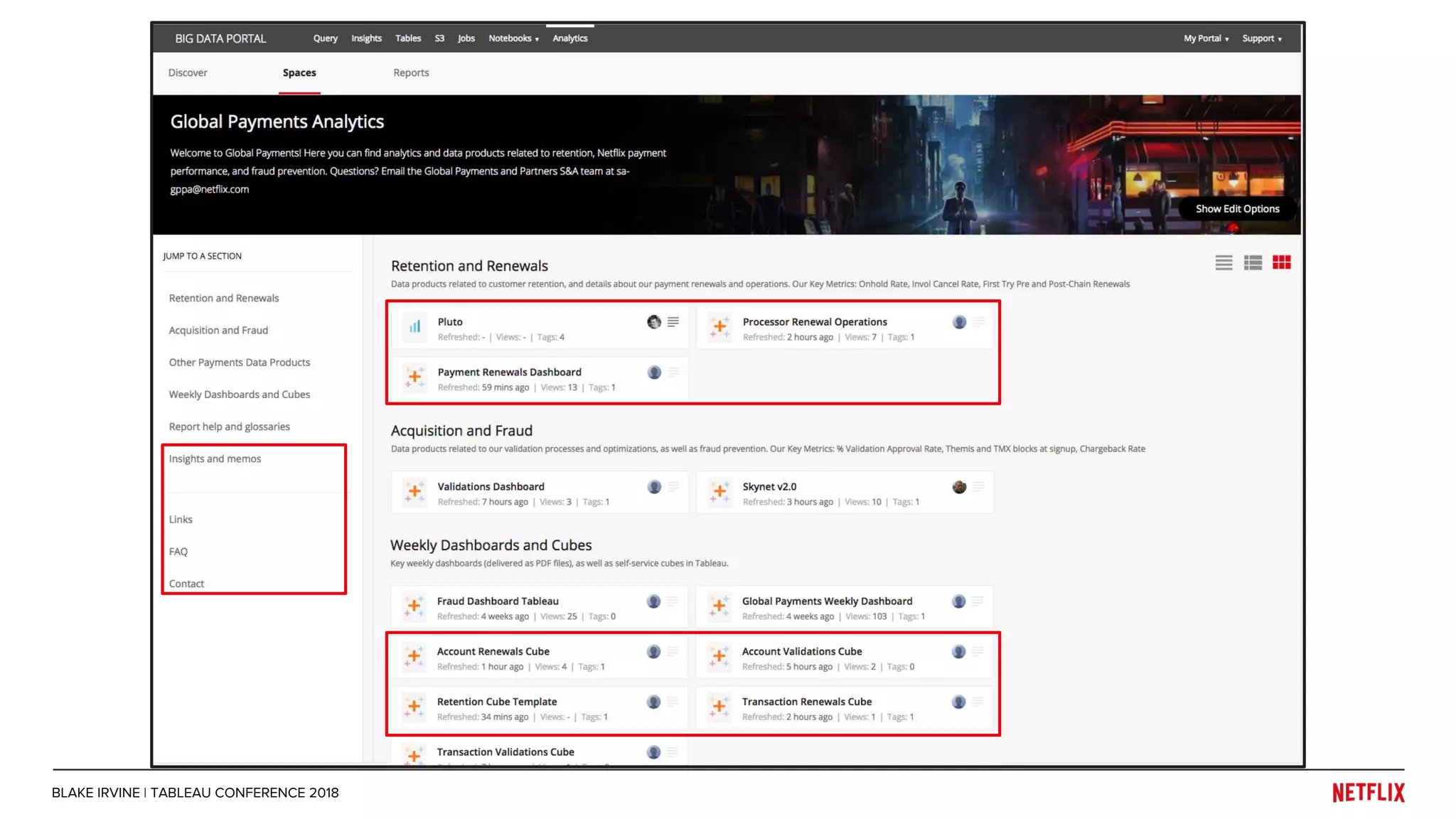

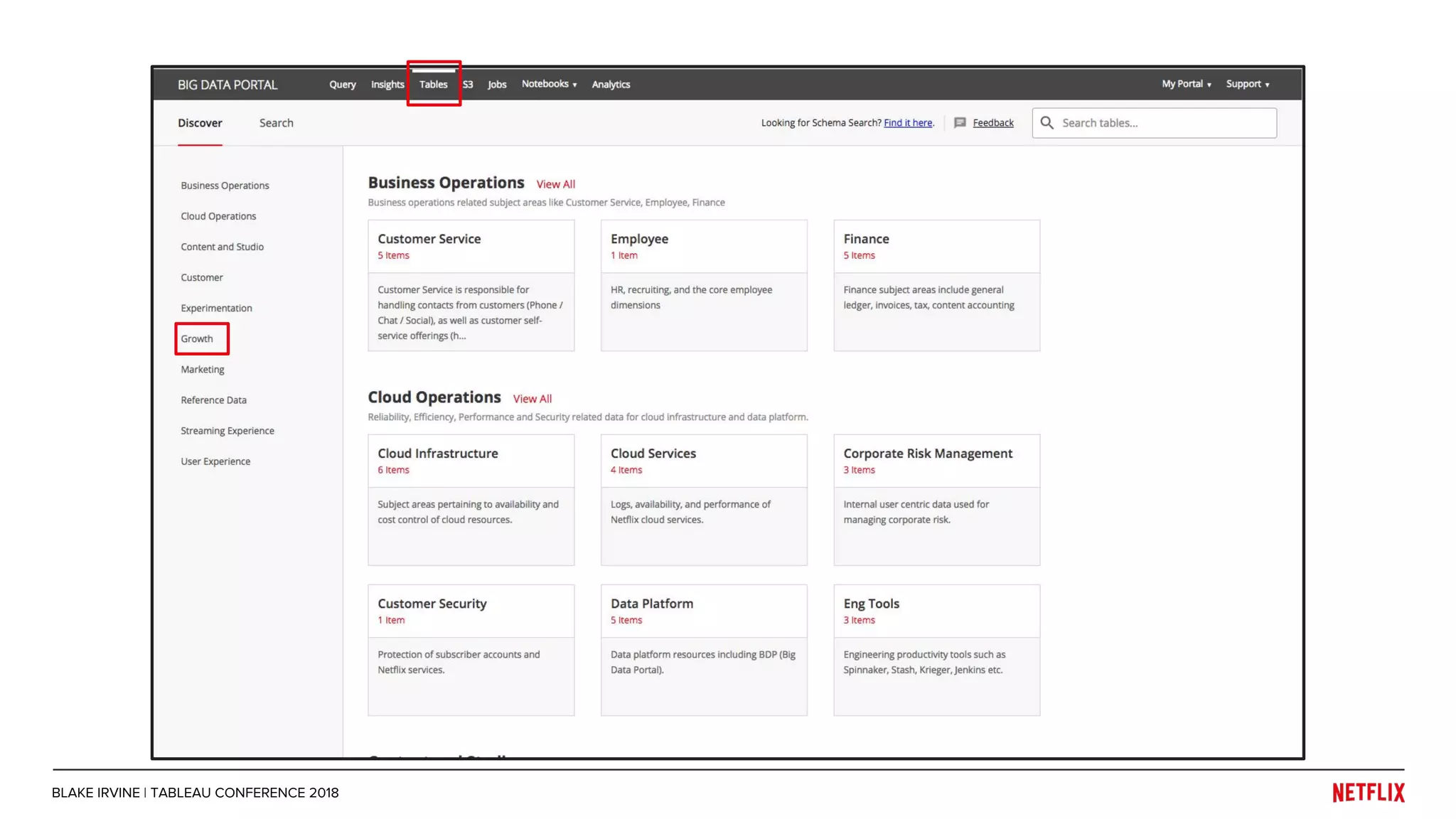

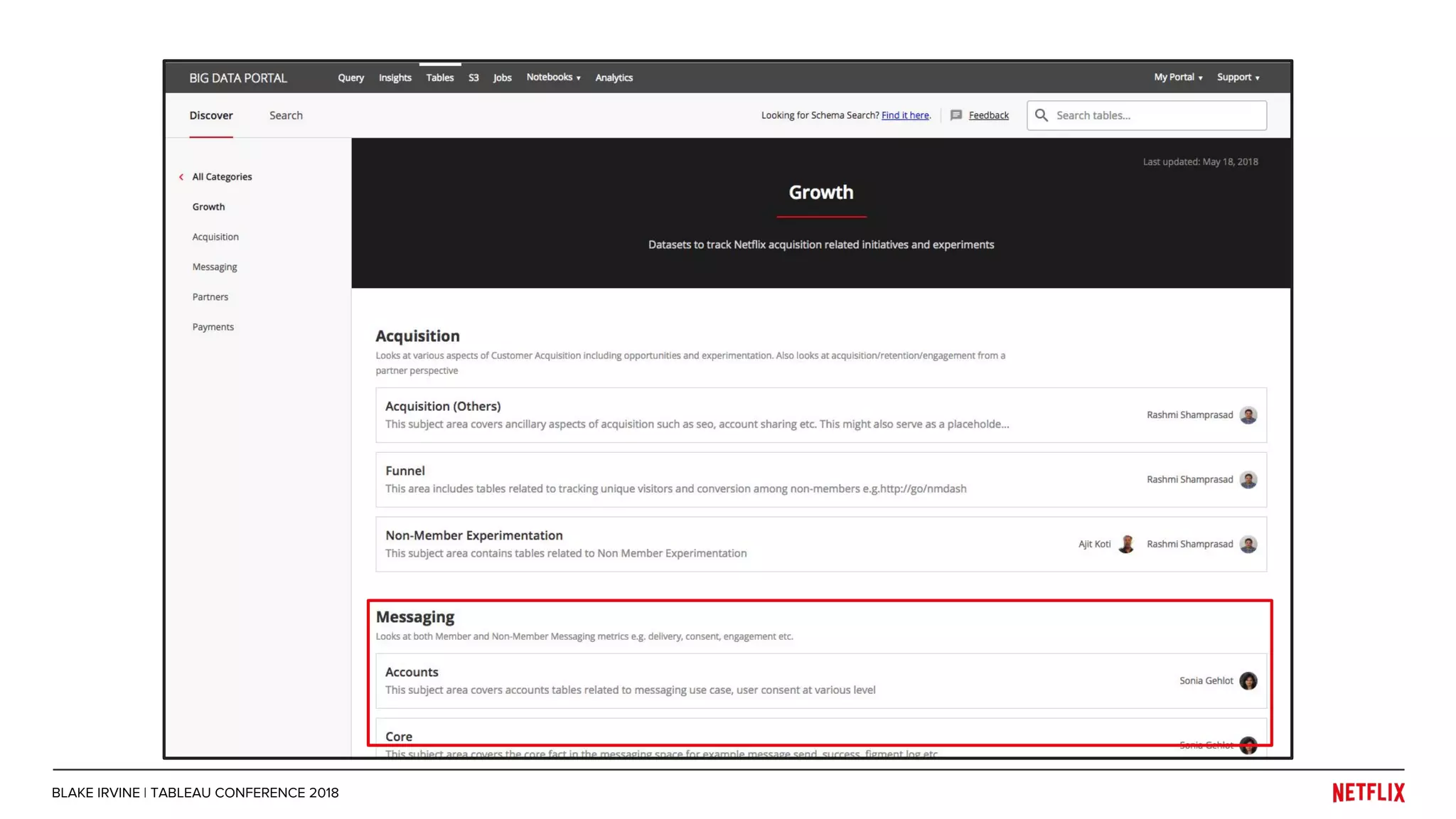

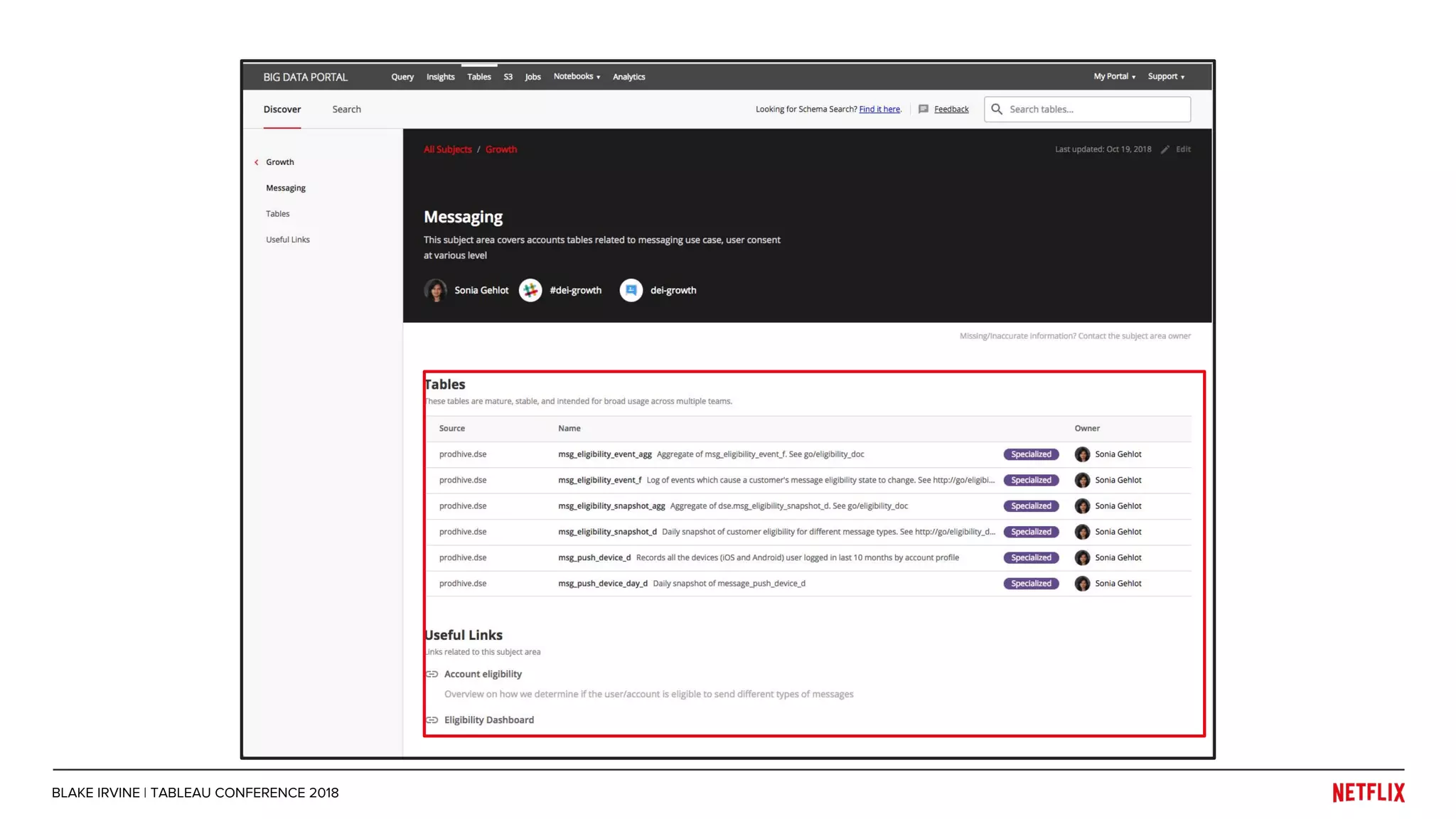

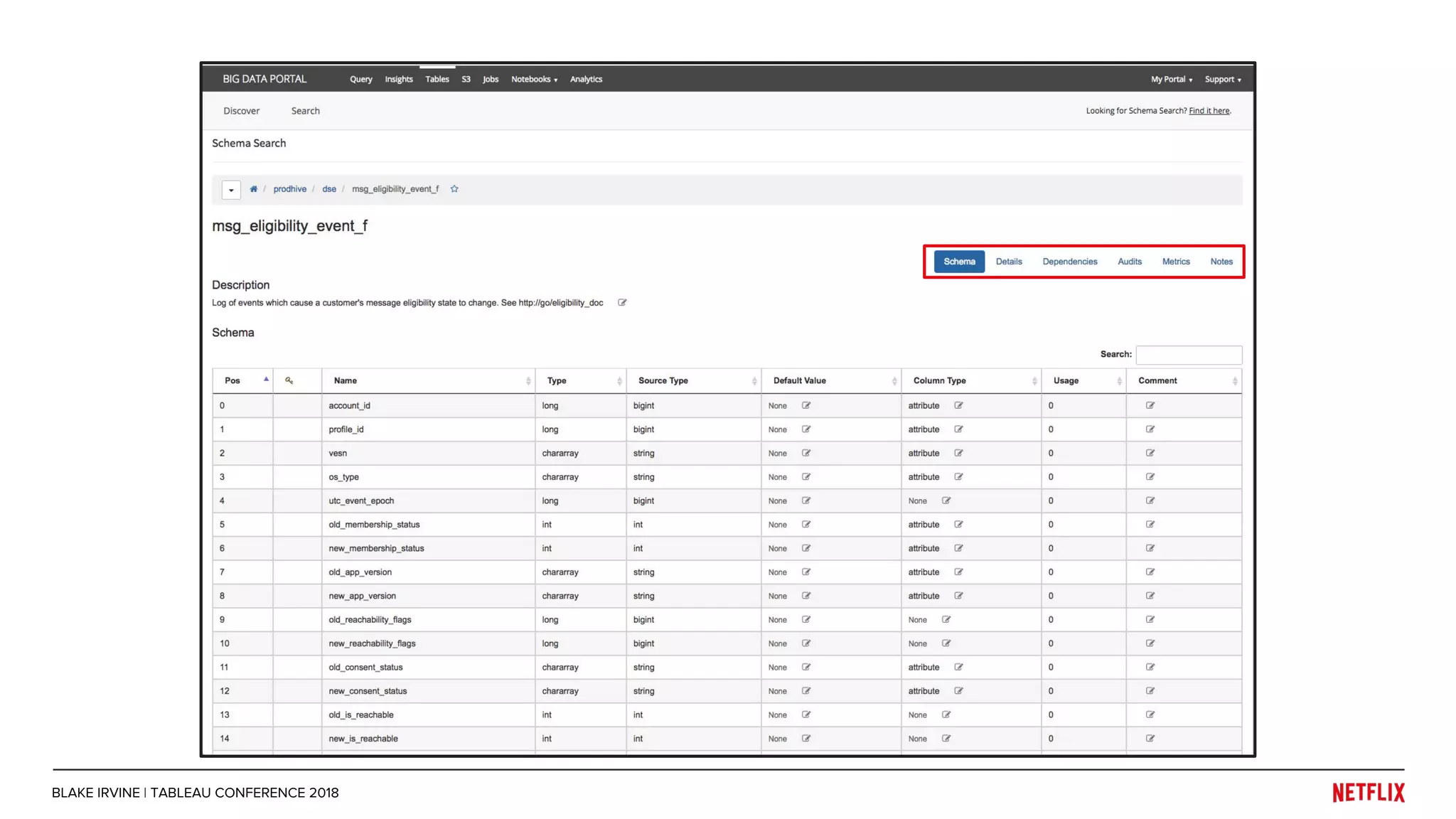

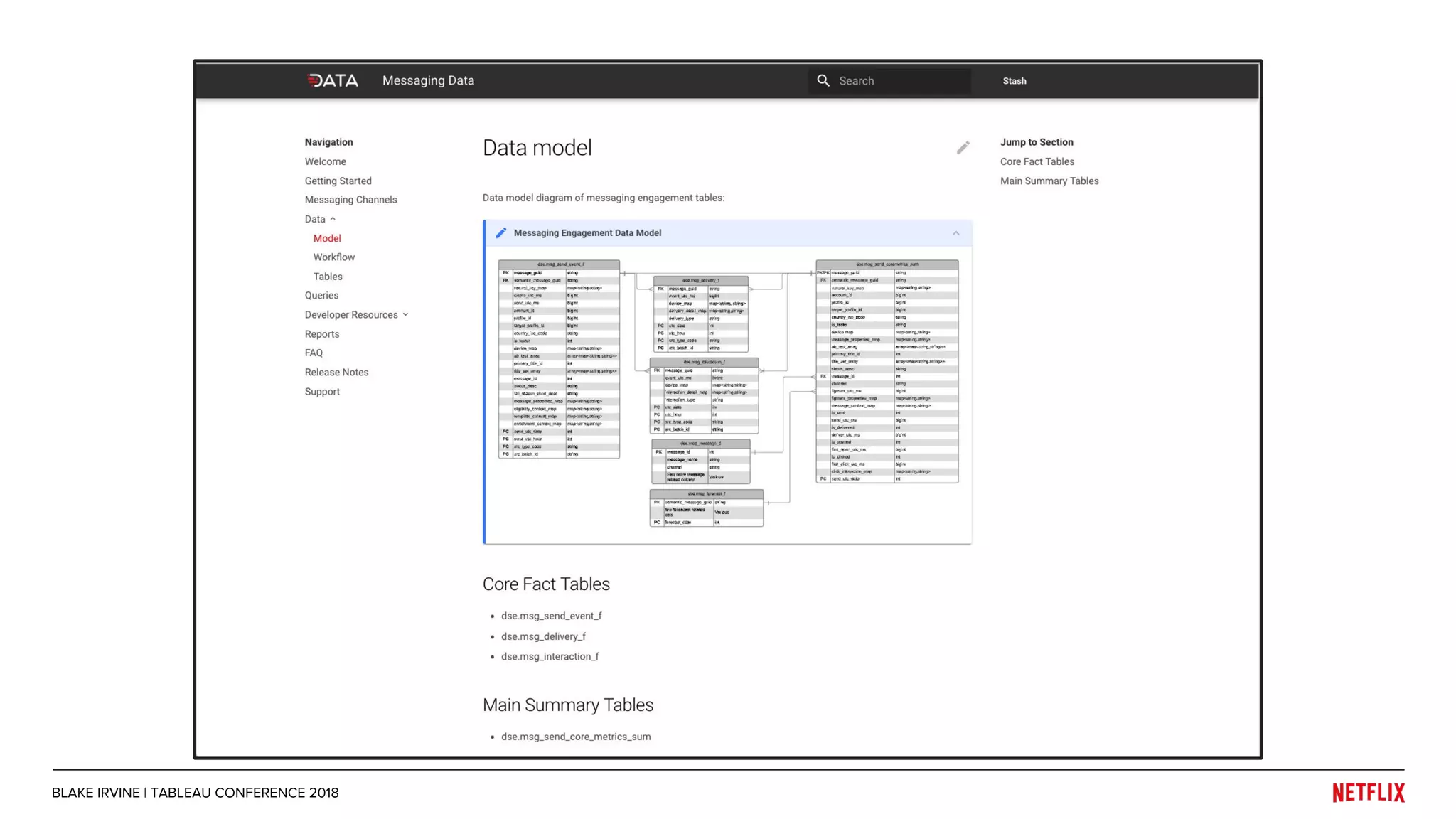

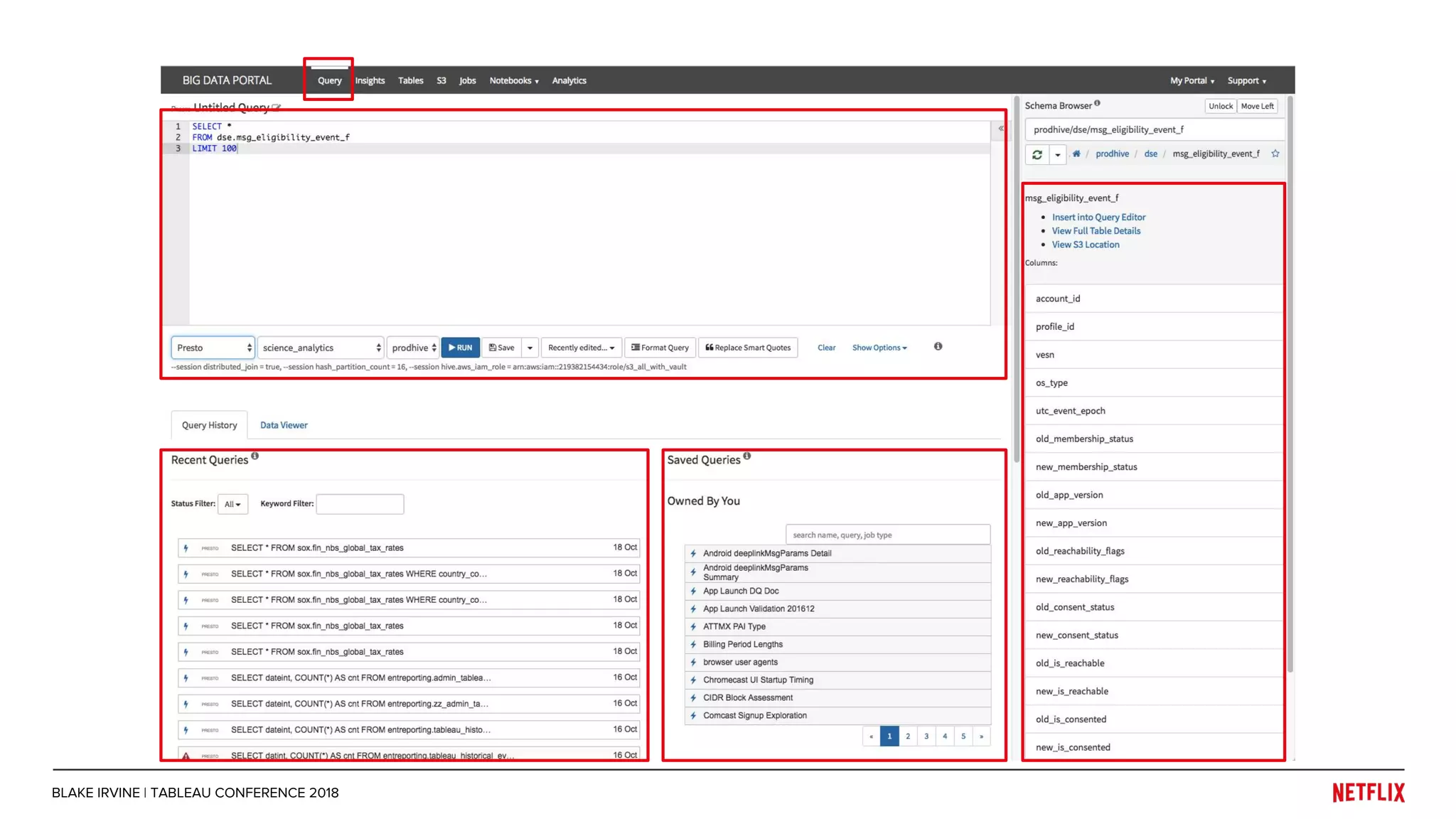

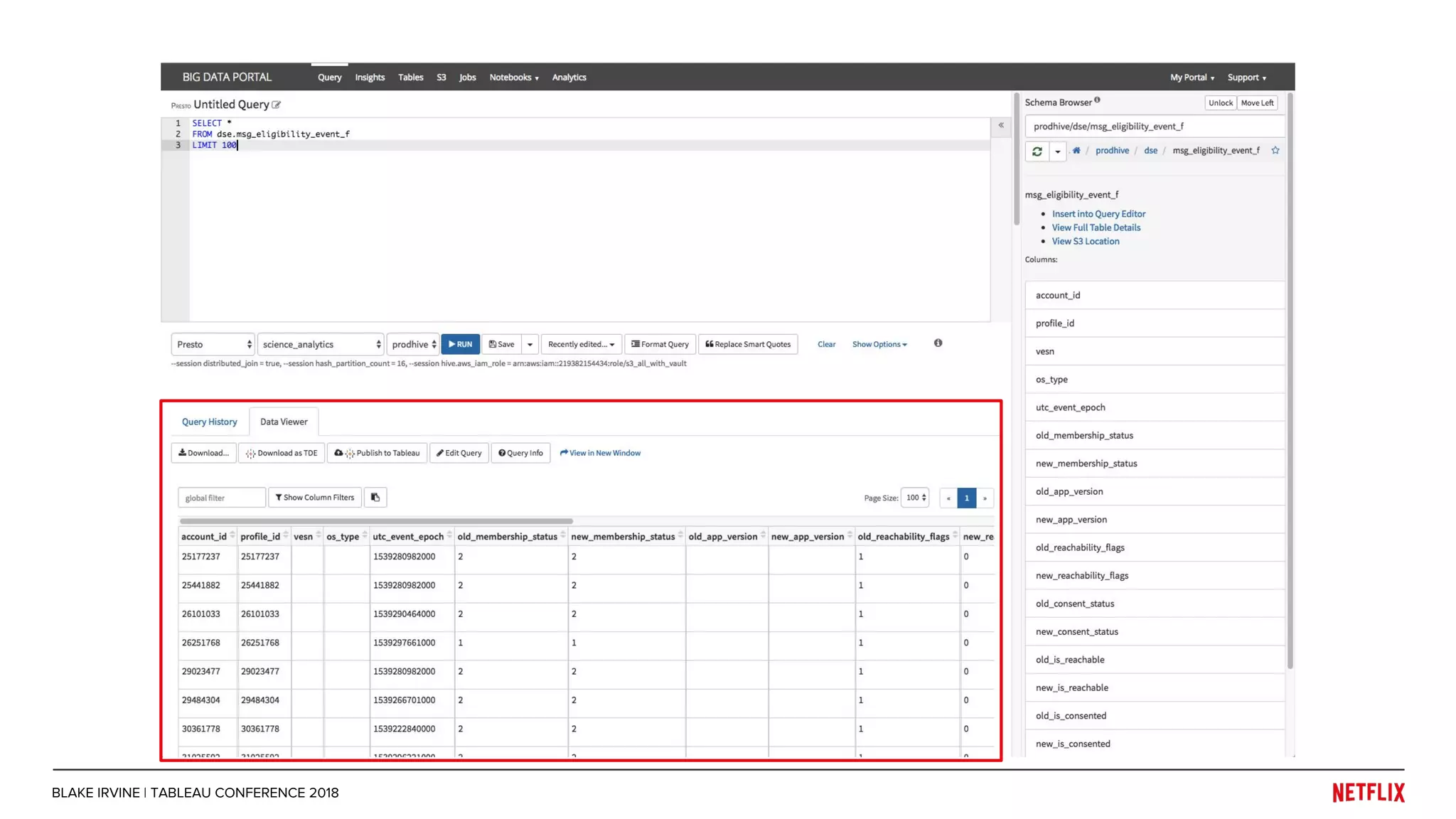

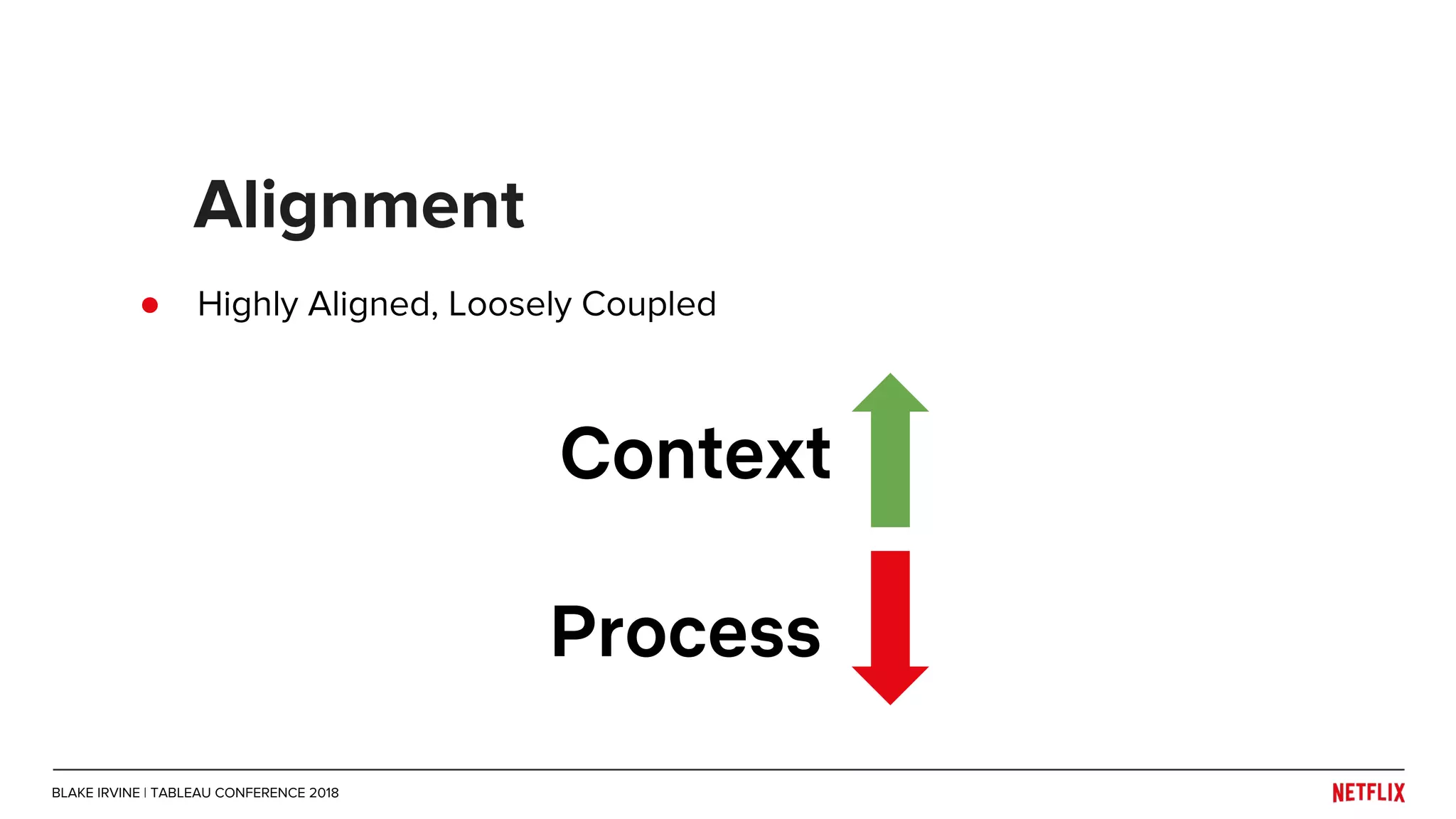

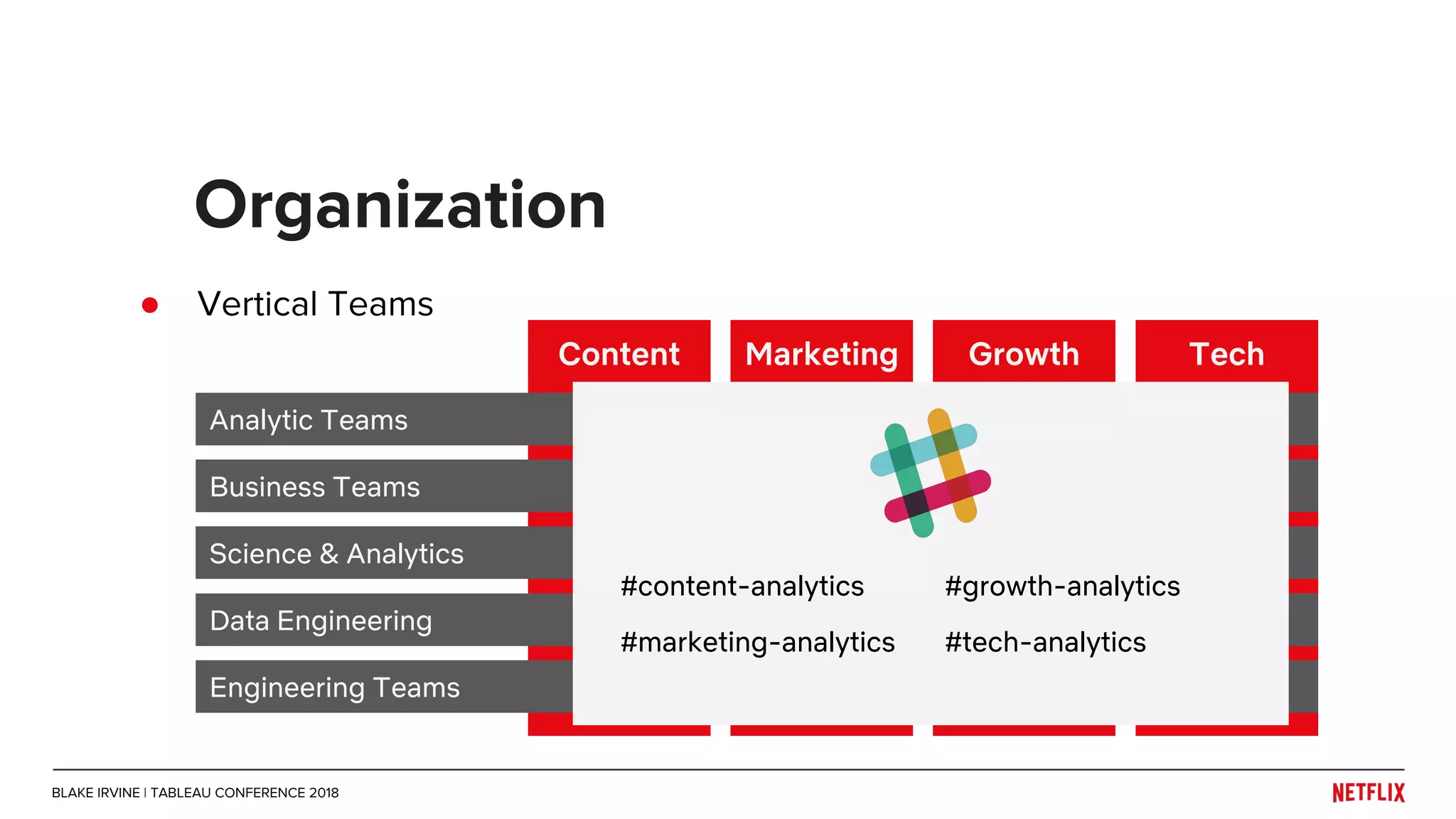

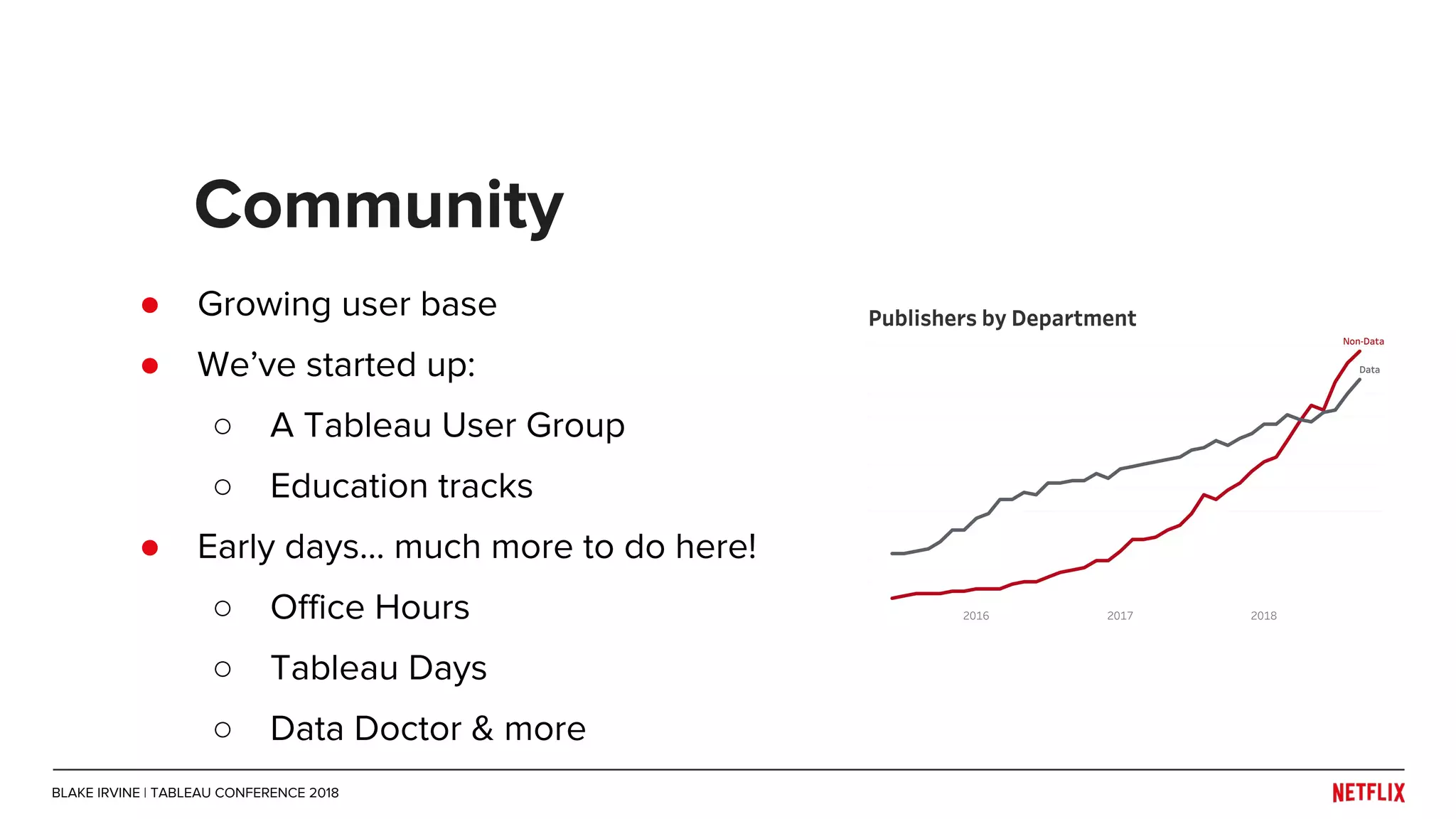

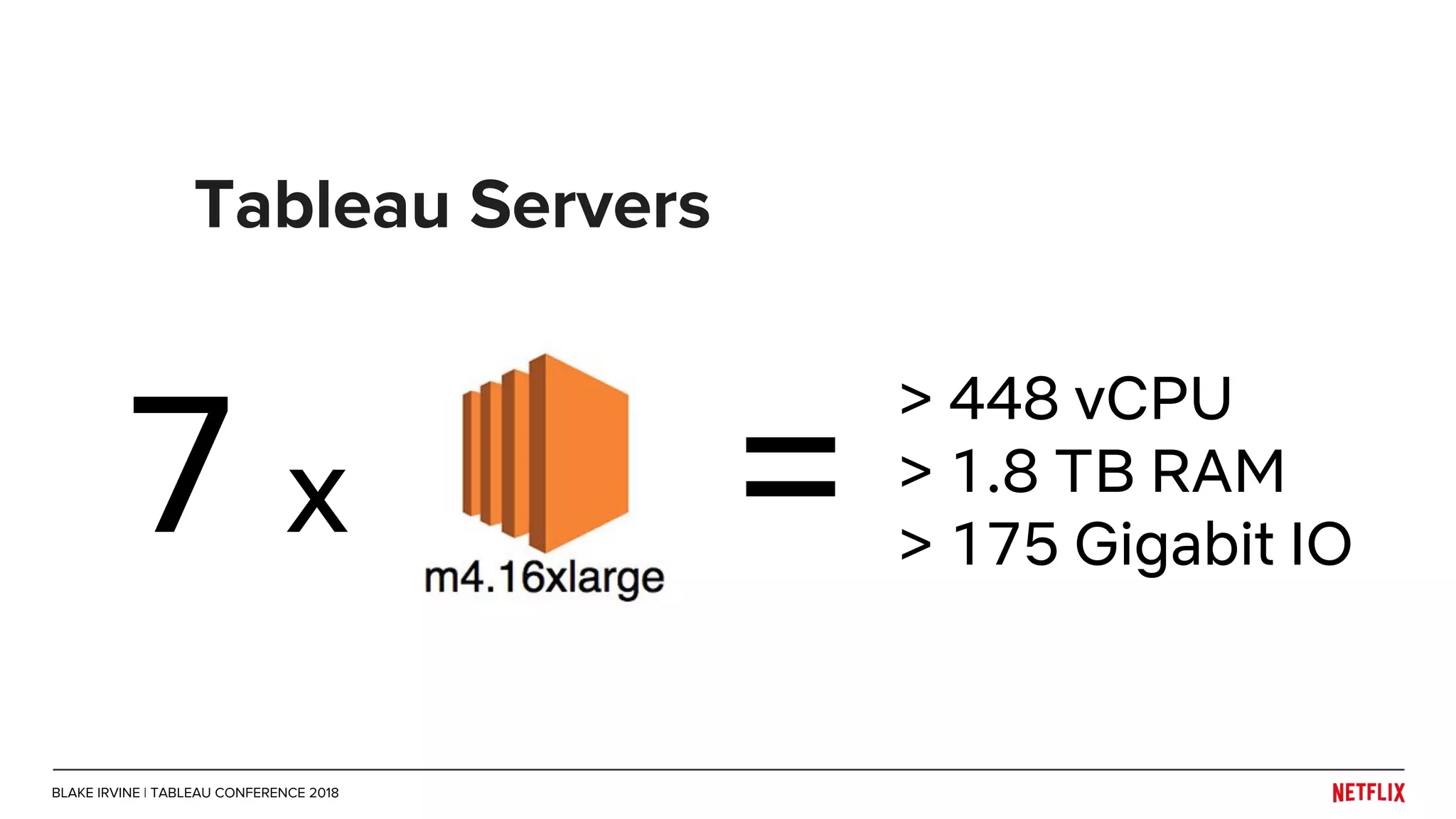

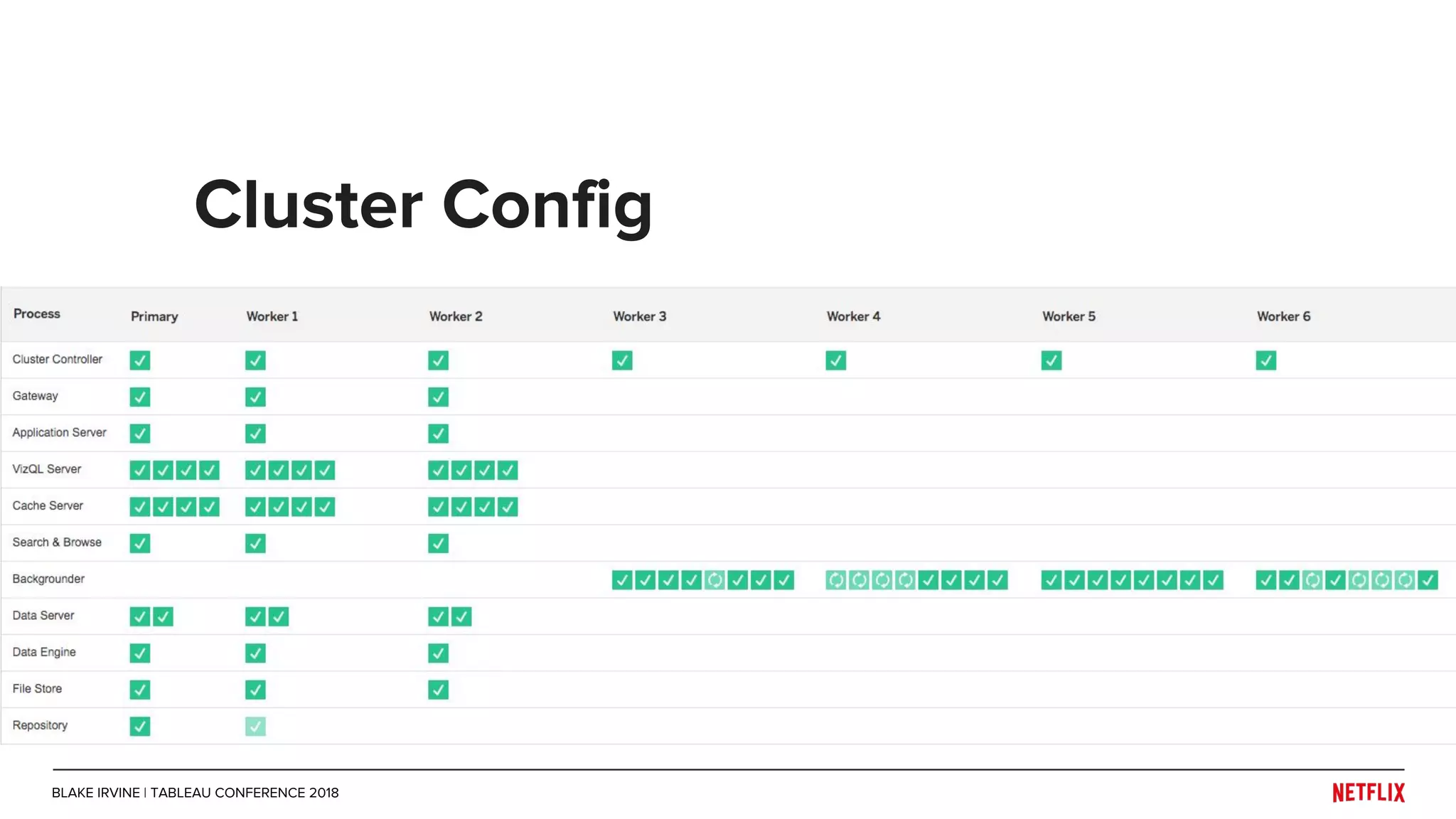

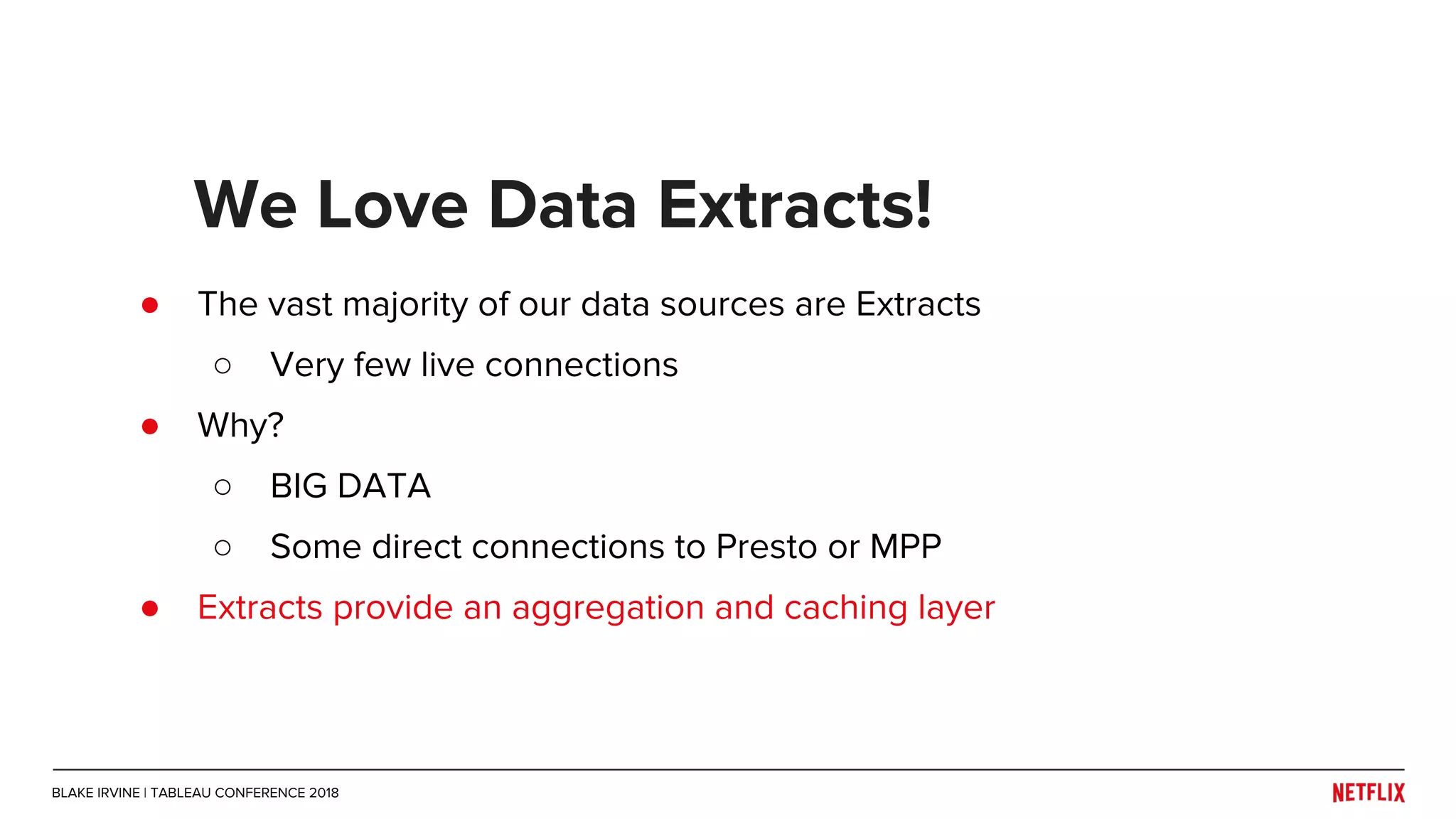

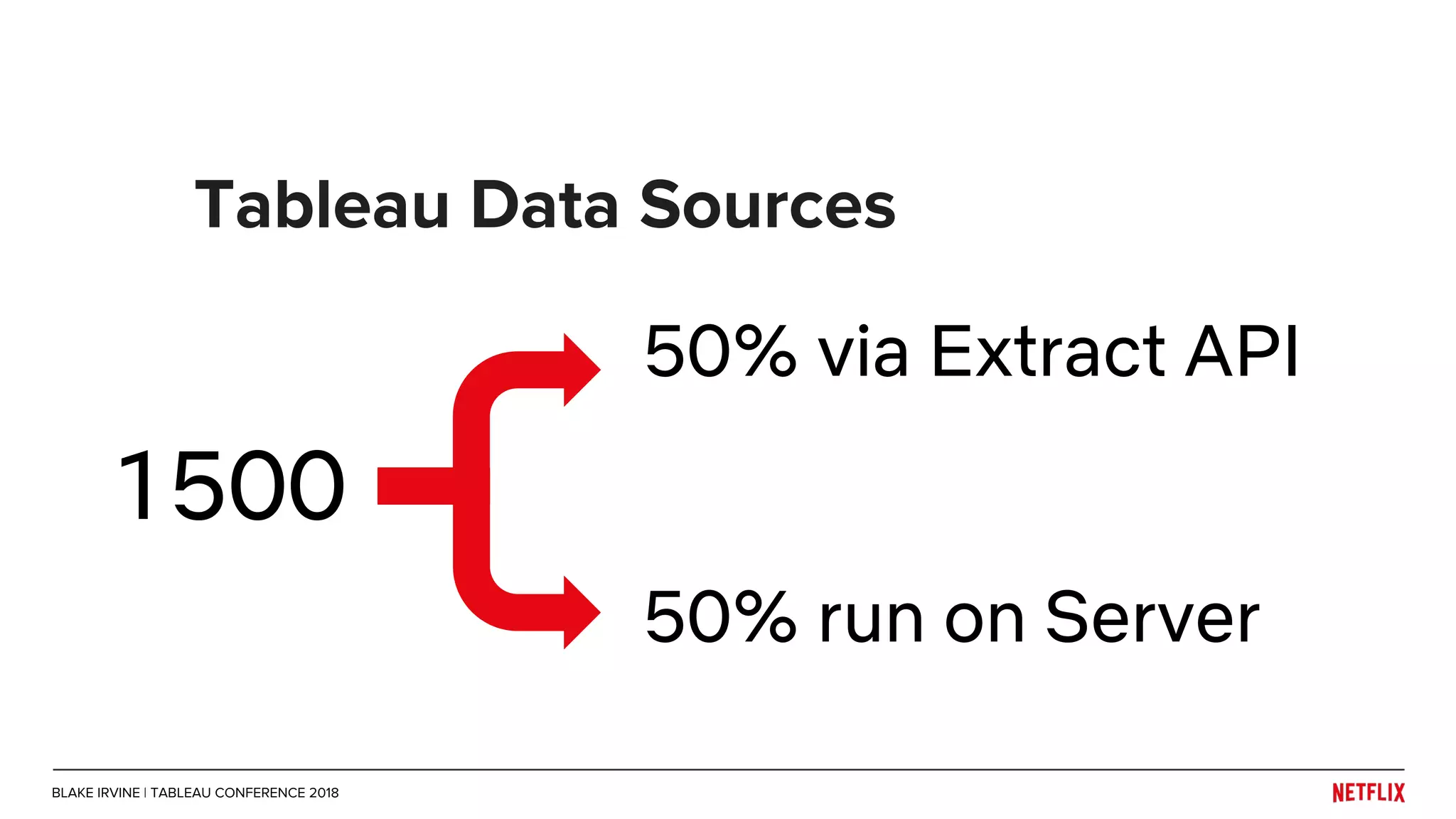

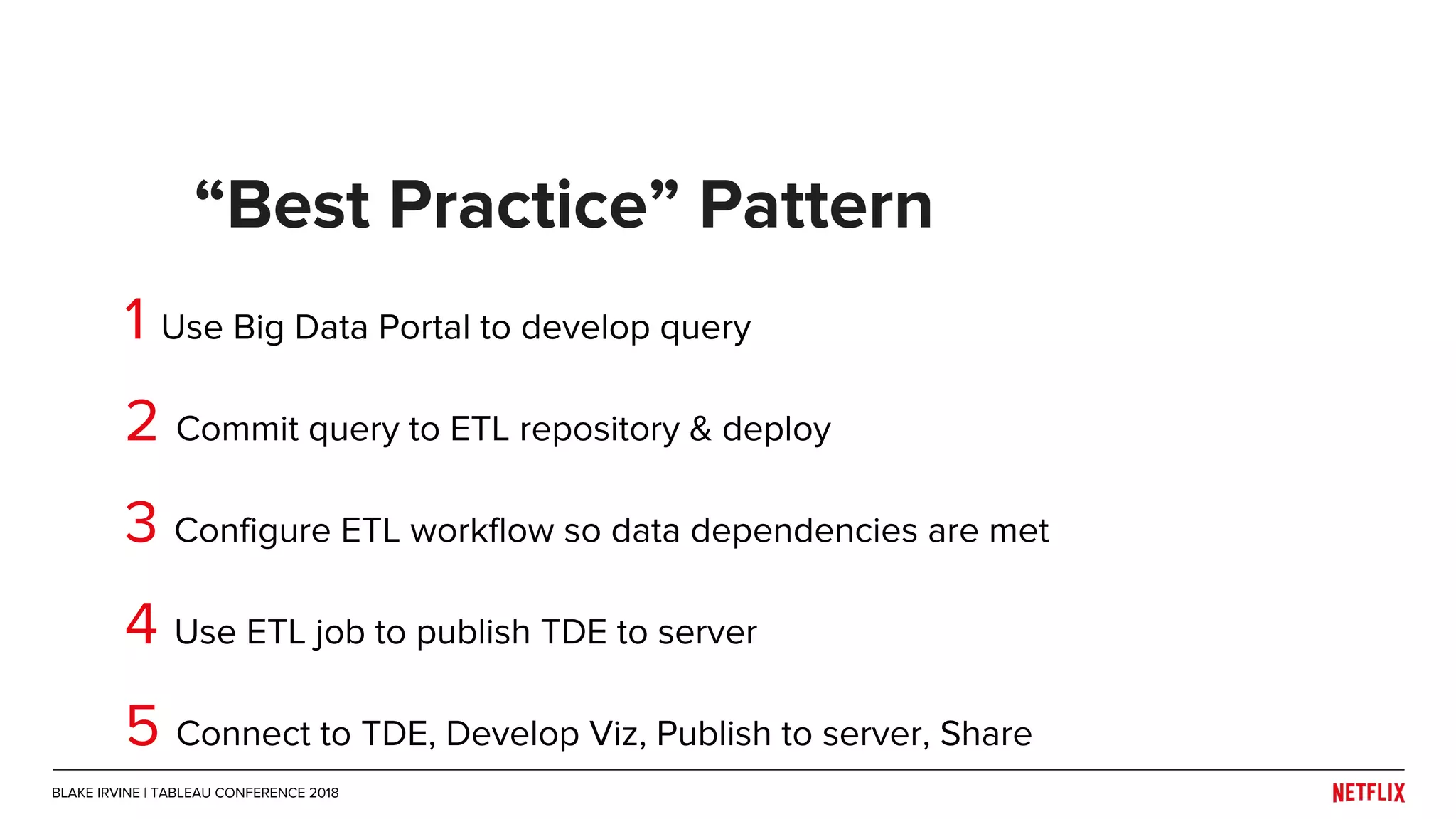

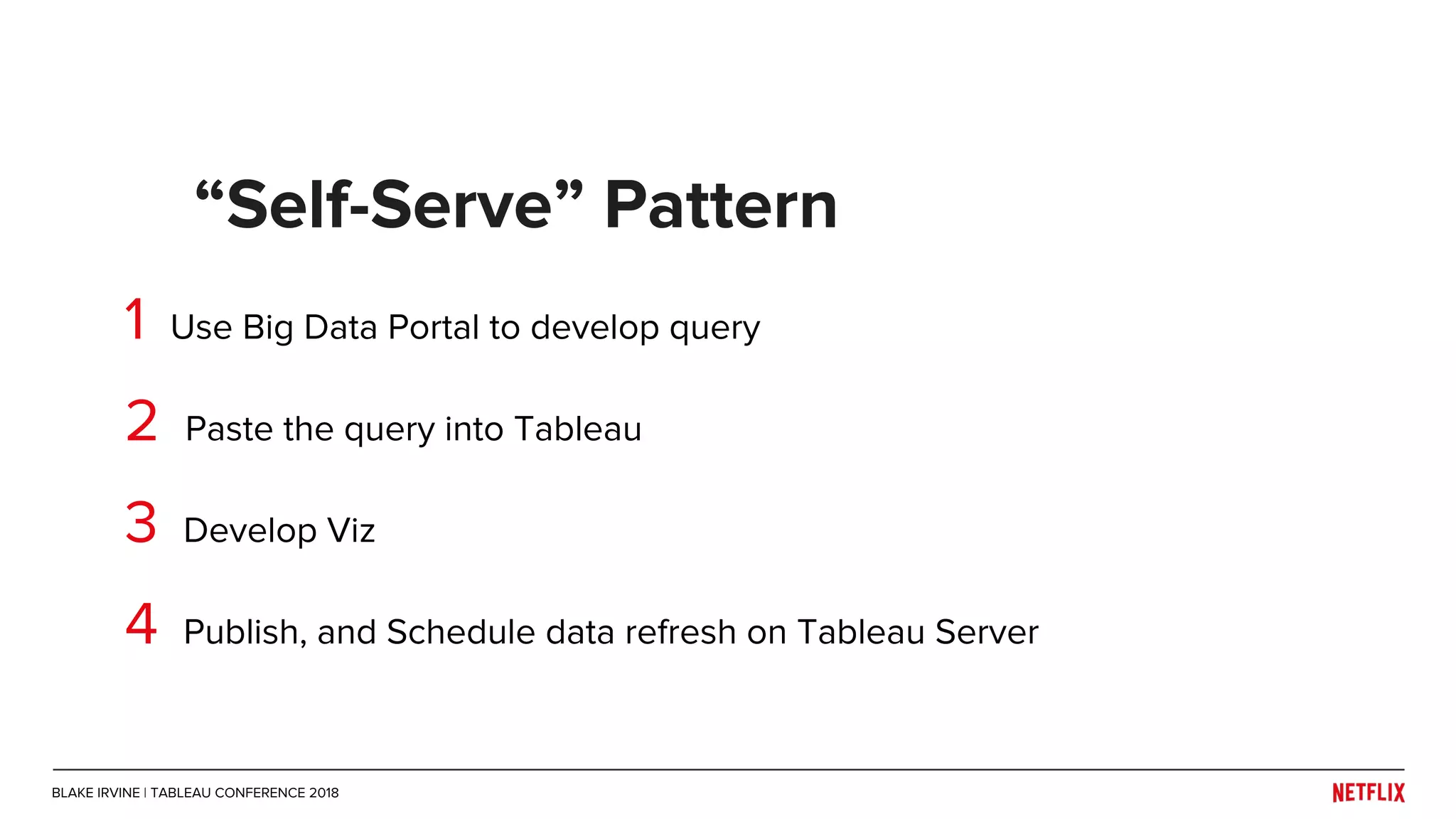

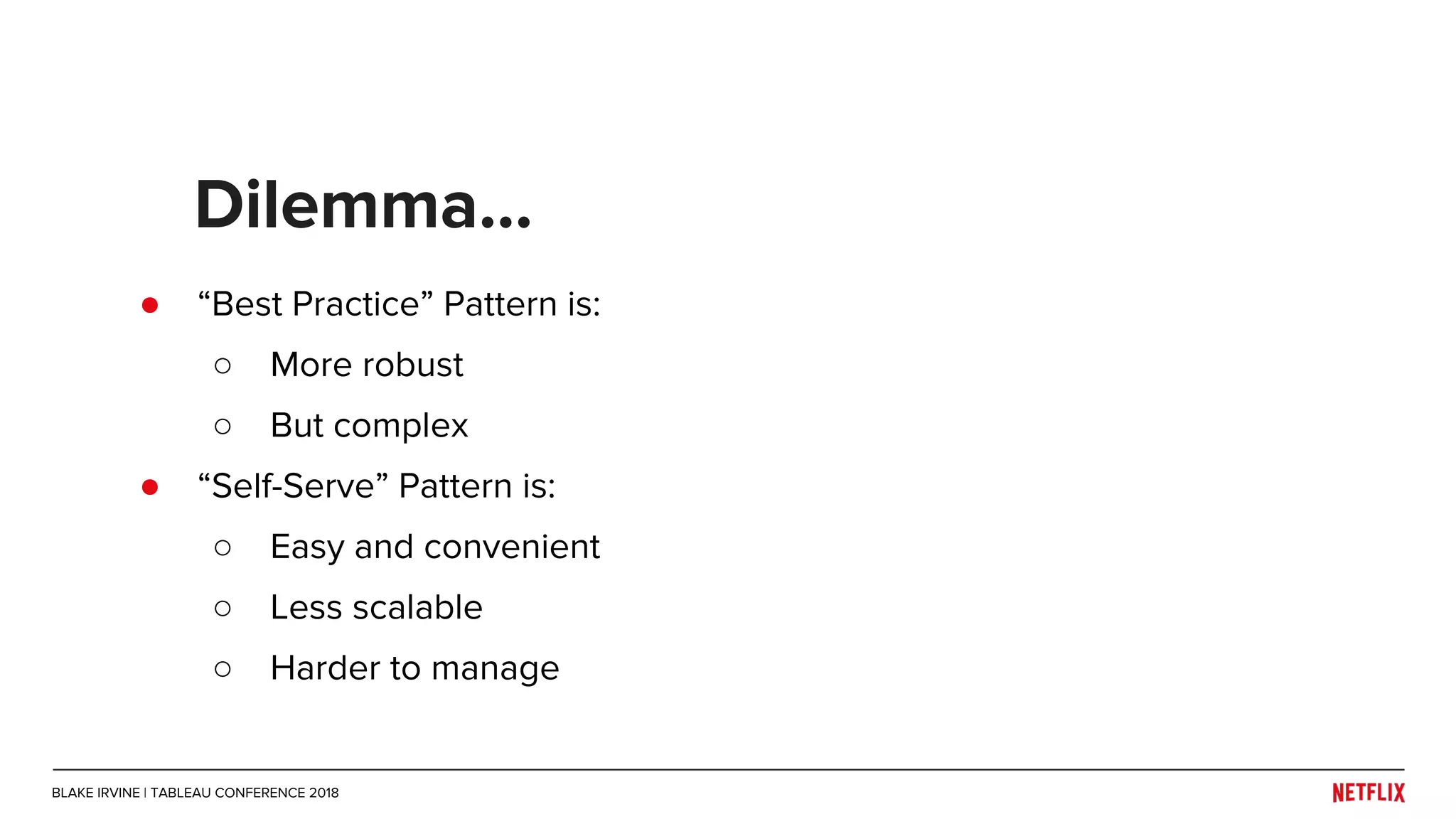

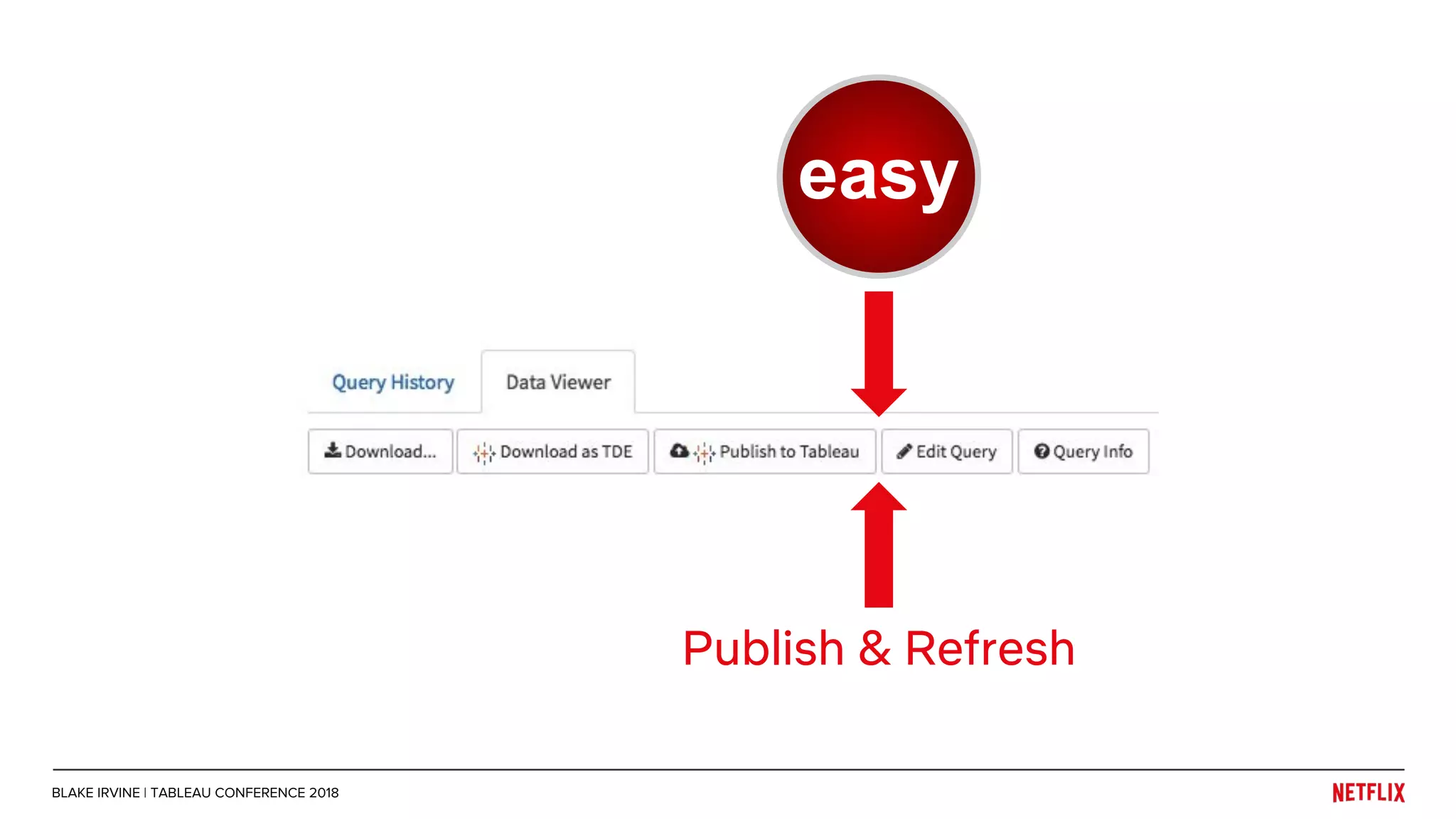

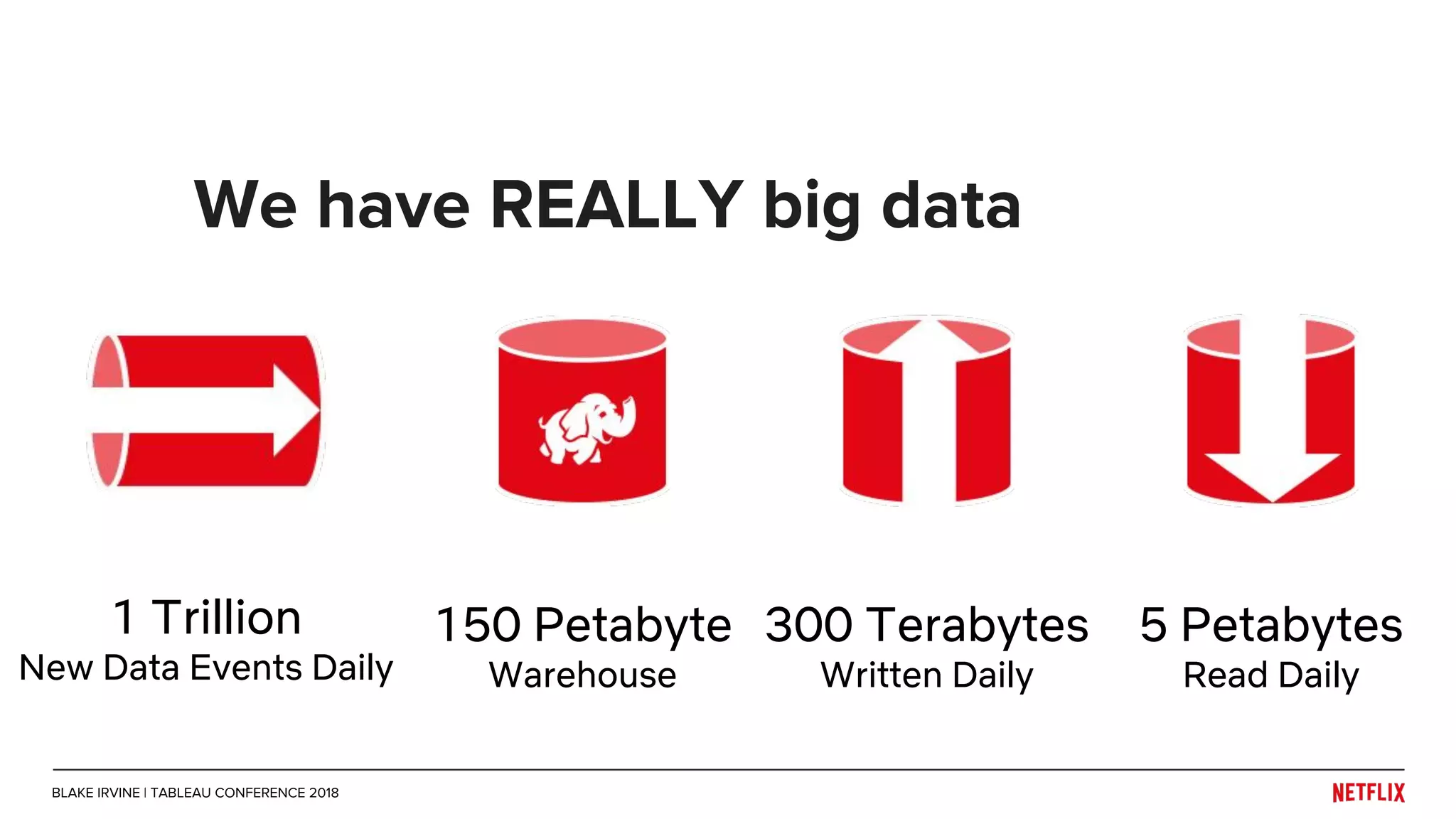

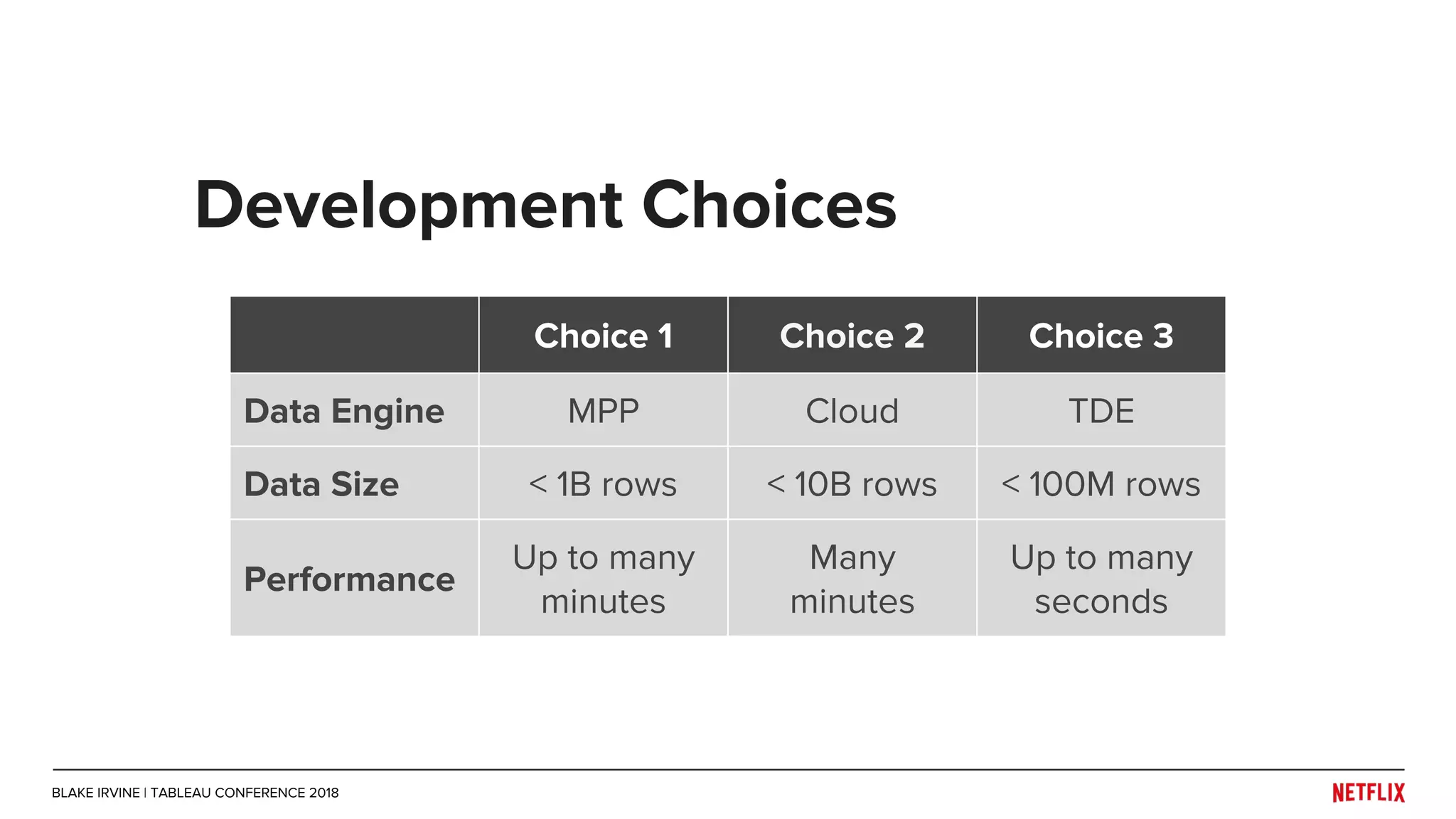

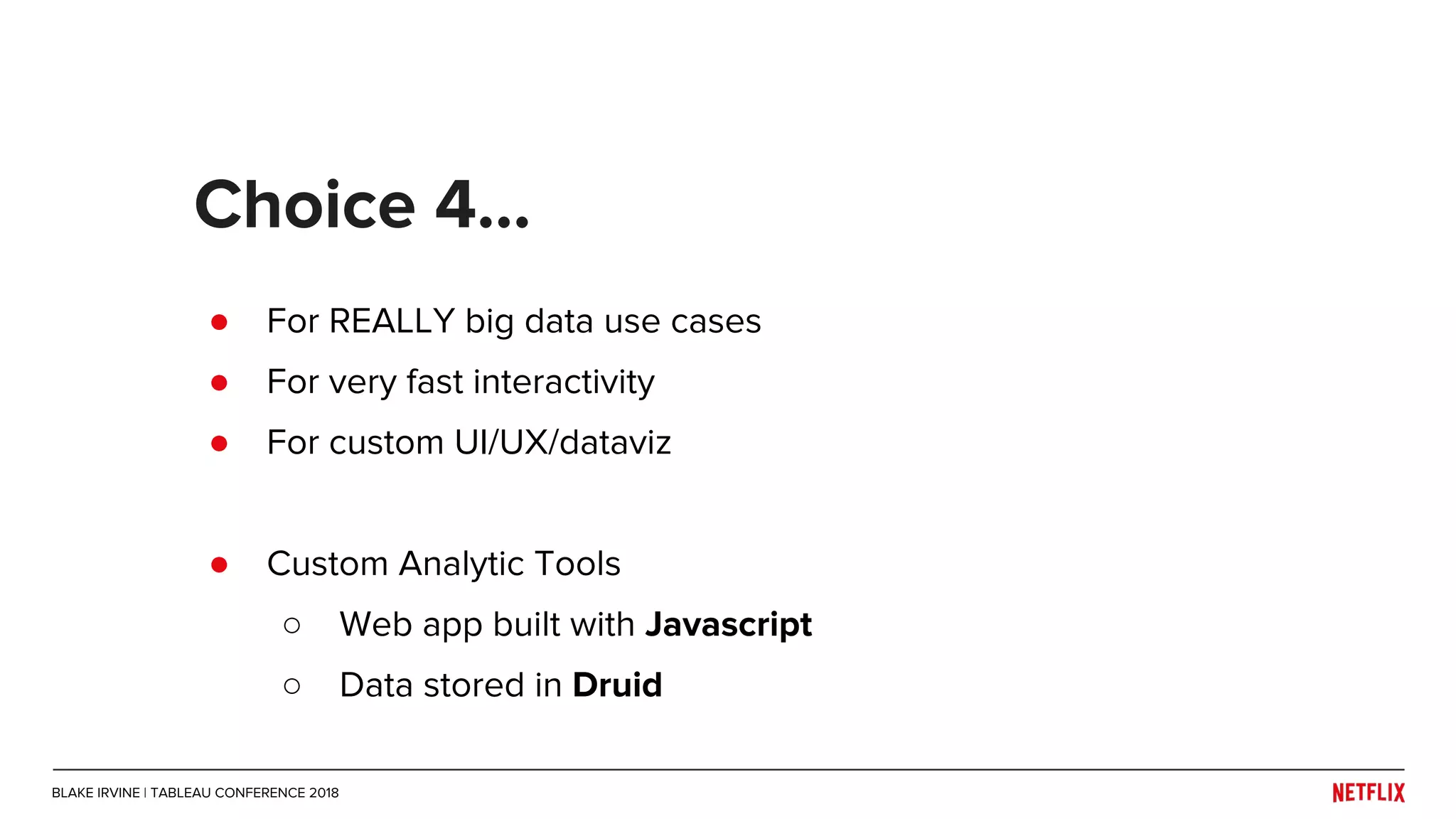

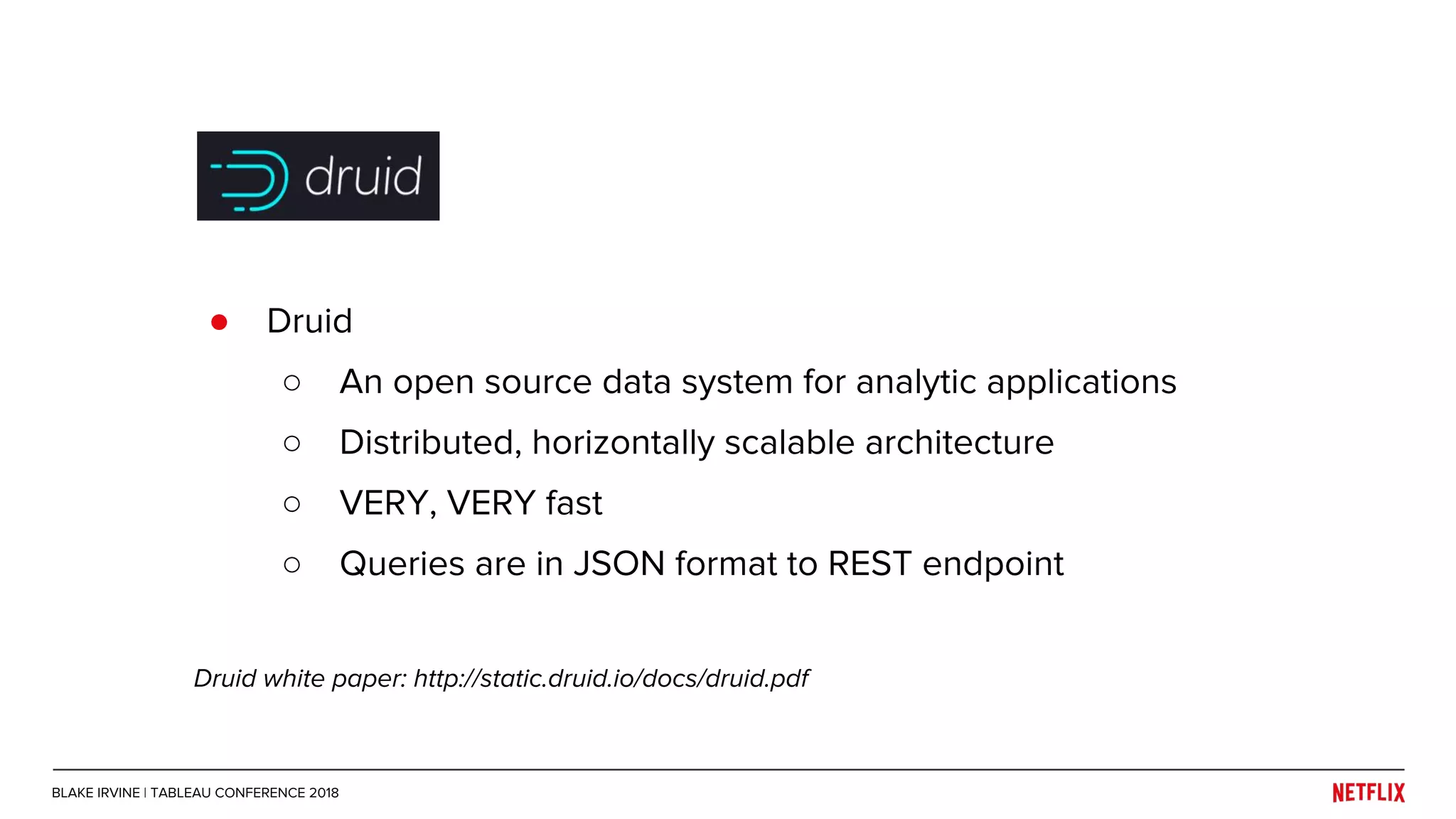

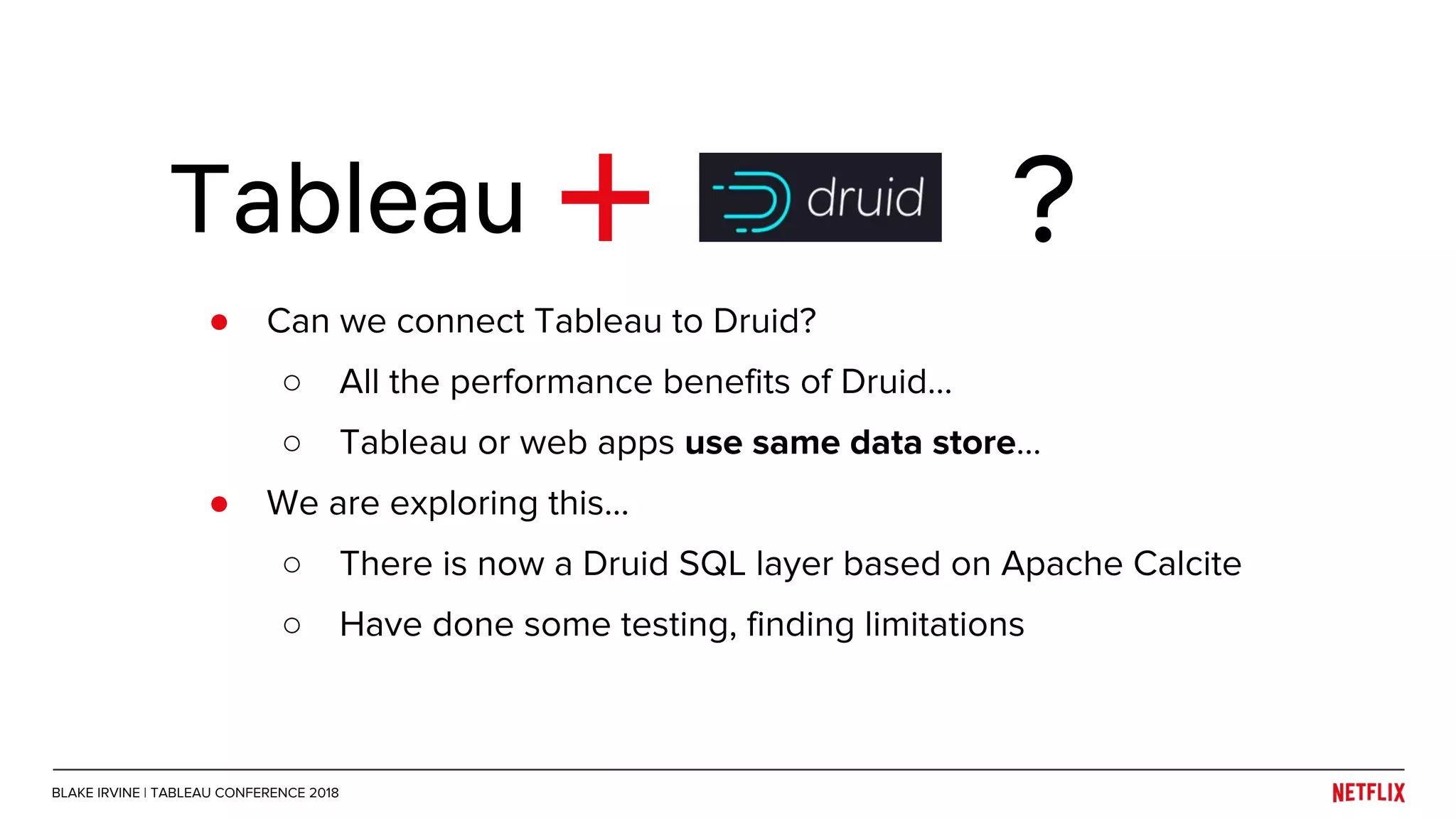

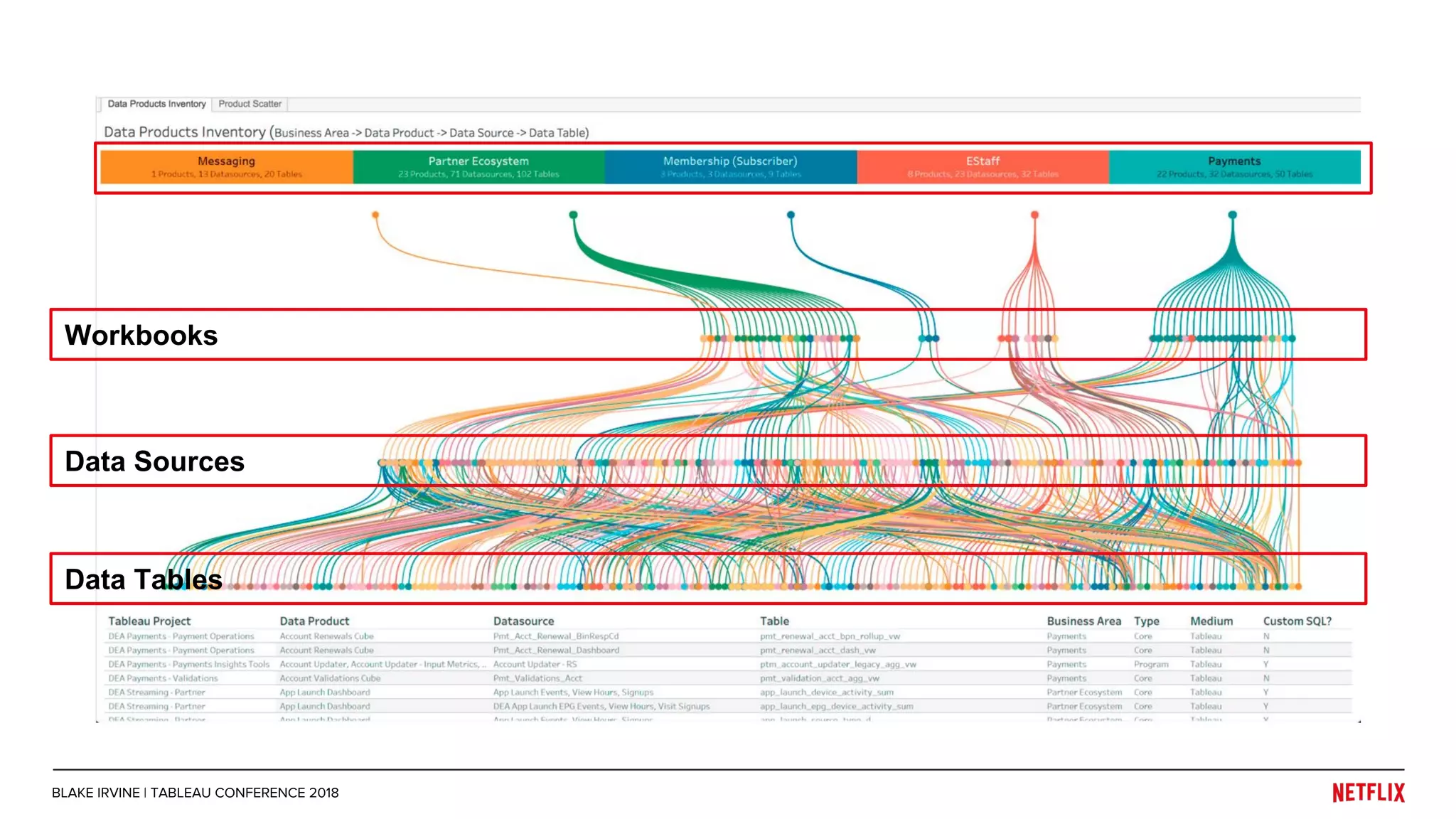

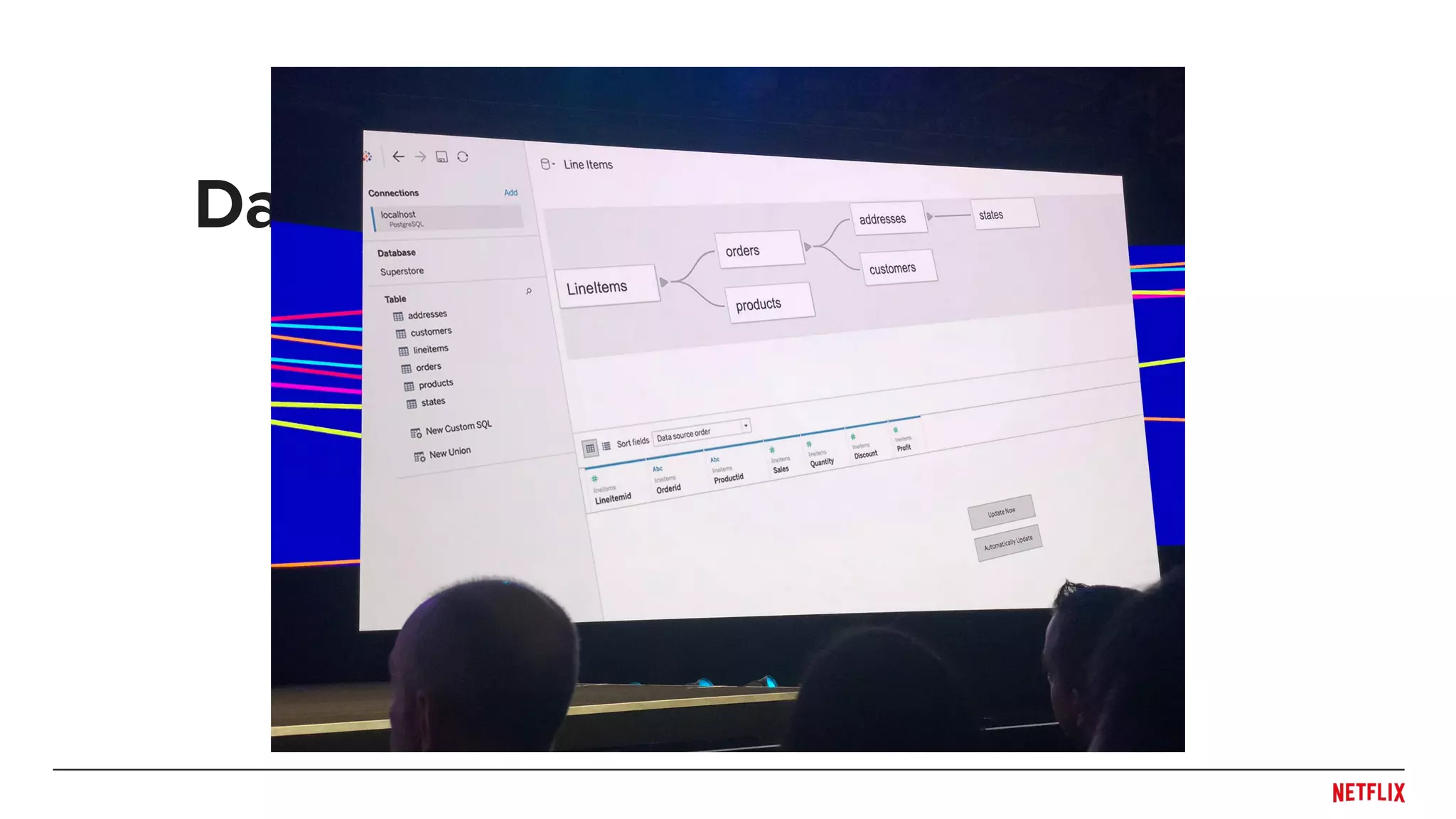

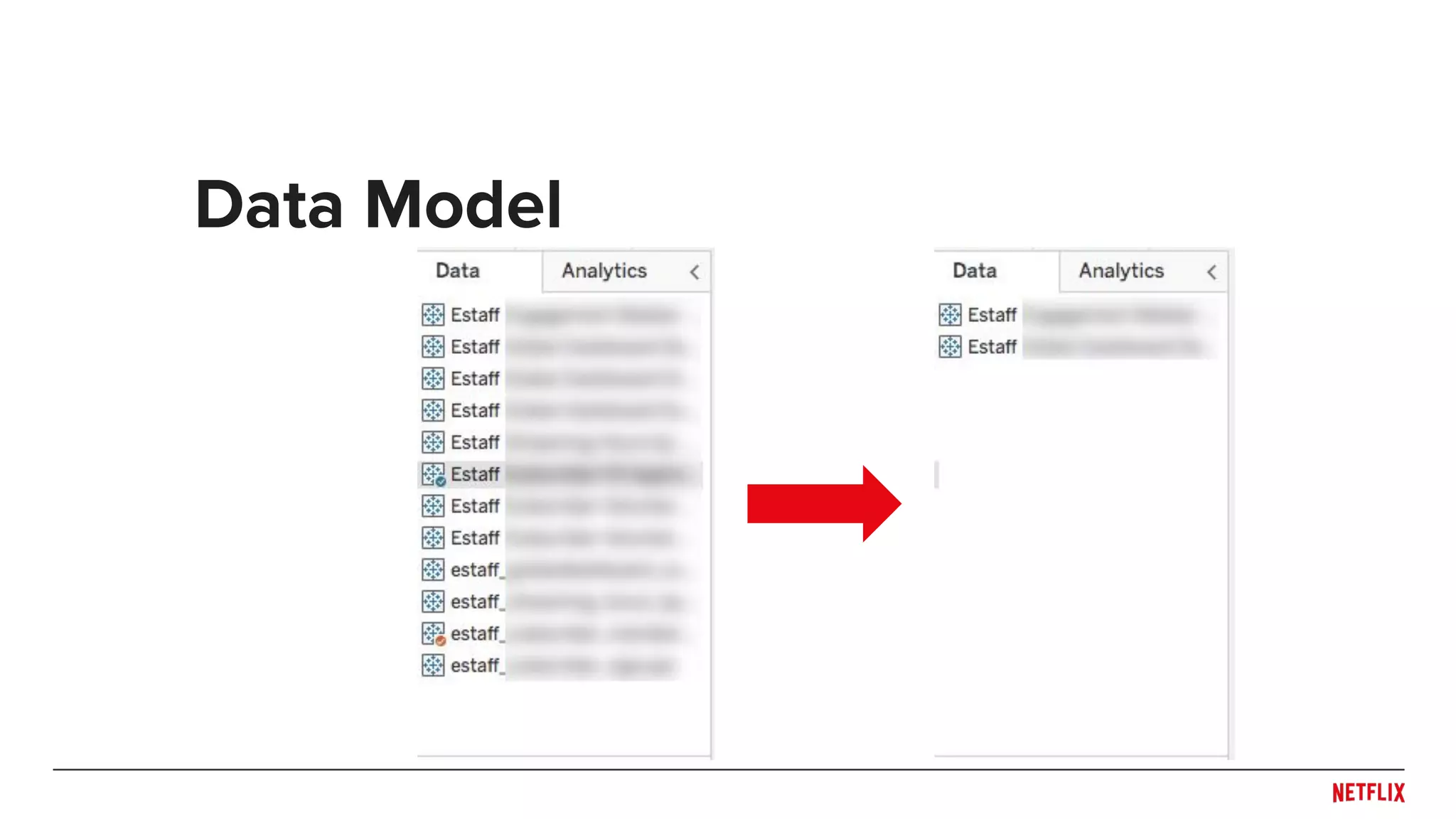

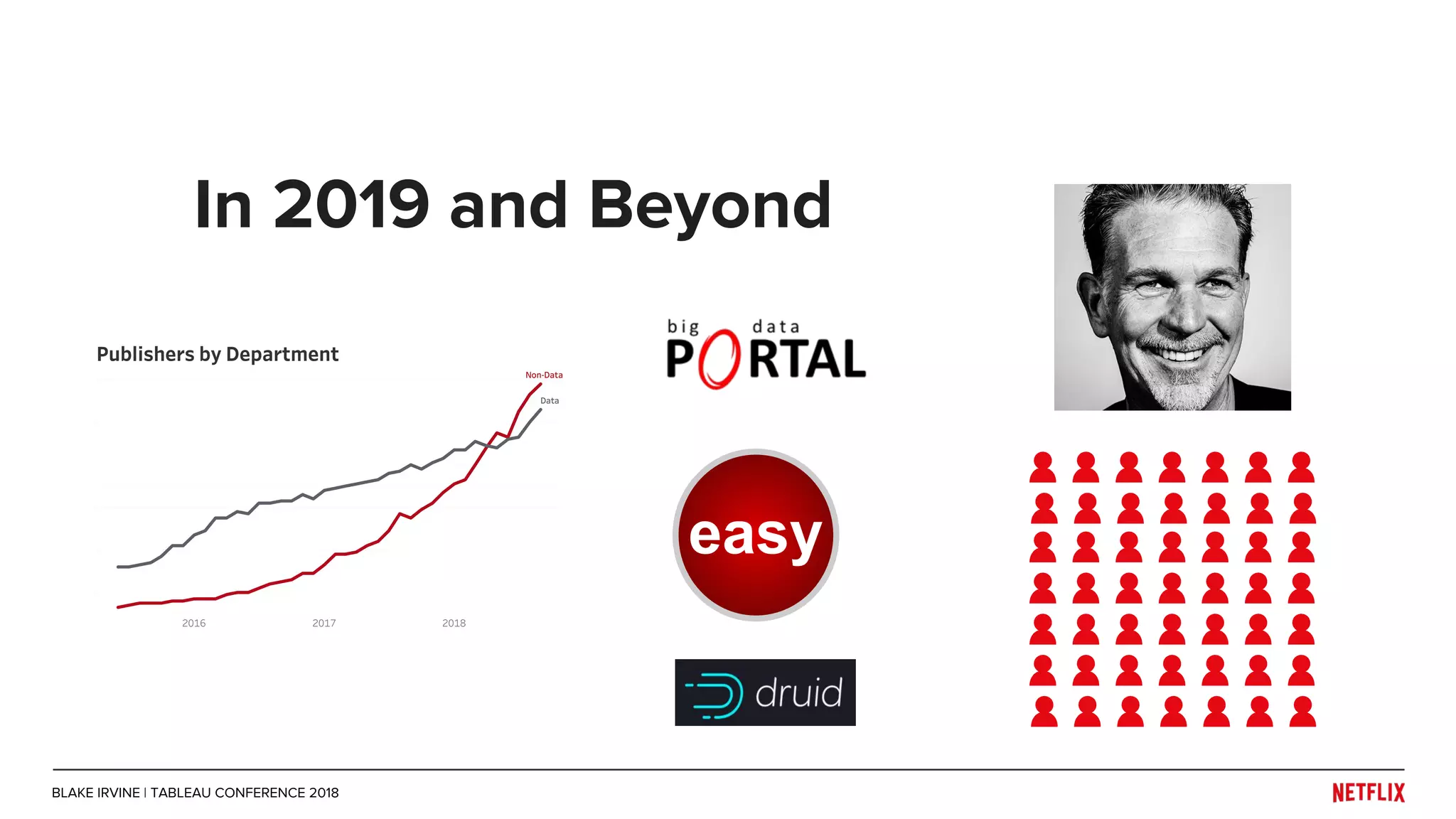

Blake Irvine's presentation at Tableau Conference 2018 describes how Netflix enables analytics across its departments, emphasizing data-driven decisions and A/B testing. It outlines Netflix's analytics ecosystem, the challenges of data scale and data lineage, and how Tableau is used alongside the company's big data portal. It also covers ongoing efforts to improve data reporting practices and to explore newer technologies such as Apache Druid for better analytics performance.