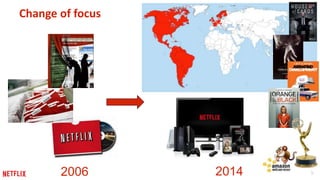

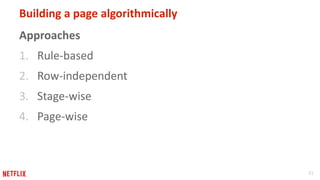

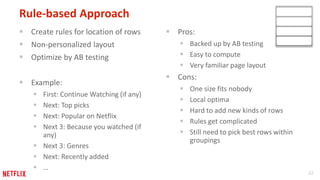

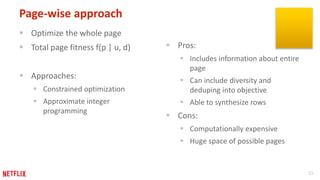

This presentation covers the evolution of personalized recommendation systems at Netflix and the techniques behind them, focusing on how high-quality recommendations improve user satisfaction and retention. It outlines the machine learning models and algorithms used to predict ratings and generate personalized pages for users, emphasizing the balance between accuracy, diversity, and user engagement. It concludes with future research directions and the open challenges in optimizing the recommendation approach.