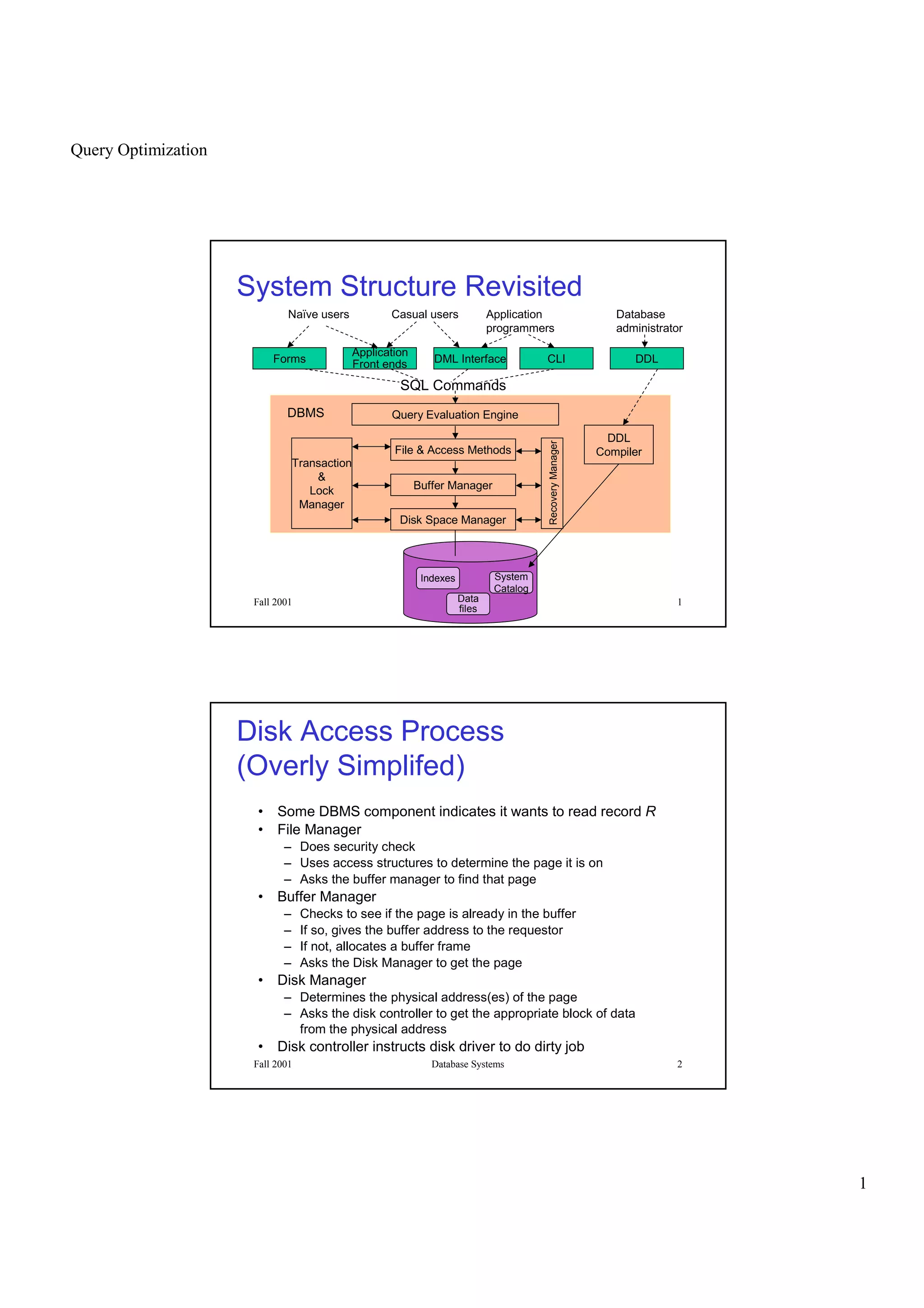

This document discusses query optimization in database systems. It begins by describing the components of a database management system and how queries are processed. It then explains that the goal of query optimization is to reduce the execution cost of a query by choosing efficient access methods and ordering operations. The document outlines different query plans involving table scans, index scans, and joins. It also introduces concepts like filter factors, statistics about tables and indexes, and how these are used to estimate the cost of alternative query execution plans.

![Query Optimization

Fall 2001 Database Systems 13

SELECT * FROM T WHERE P

• Table scan methods

– read the entire table and select tuples that satisfy the predicate P [sequential scan]

– prefetching is used to reduce the read time (read blocks of N pages at once from the same track) [sequential scan with prefetch]

• Index scan methods

– use indices to find tuples that satisfy all of P and then read the tuples from disk [index scan]

– use indices to find tuples that satisfy part of P and then read the tuples from disk and check the rest of P [index scan+select]

– use indices to find tuples that satisfy all of P and output the indexed attributes [index-only scan]

– use indices to find tuples that satisfy part of P and then find the intersection of different sets of tuples [multi-index scan]](https://image.slidesharecdn.com/www-pkbul-blogspot-comdbms13-130615034551-phpapp01/85/Www-pkbulk-blogspot-com-dbms13-7-320.jpg)
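
The access methods listed on the slides can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not how any particular DBMS implements them: the table `T`, its attributes (`id`, `dept`, `year`), the helper `build_index`, and the example predicate `p` are all hypothetical, a list position stands in for a row id, and each row is treated as one "page" for the prefetch variant.

```python
from collections import defaultdict

# Hypothetical toy table T: the list position acts as the row id (RID).
T = [
    {"id": 1, "dept": "CS", "year": 2001},
    {"id": 2, "dept": "EE", "year": 2000},
    {"id": 3, "dept": "CS", "year": 2000},
    {"id": 4, "dept": "CS", "year": 2001},
]

def build_index(table, attr):
    """Map each value of attr to the set of RIDs holding it (stand-in for a real index)."""
    index = defaultdict(set)
    for rid, row in enumerate(table):
        index[row[attr]].add(rid)
    return index

dept_idx = build_index(T, "dept")
year_idx = build_index(T, "year")

def sequential_scan(table, pred):
    """Read every row and keep those that satisfy the predicate P."""
    return [row for row in table if pred(row)]

def sequential_scan_prefetch(table, pred, n_pages=2):
    """Same result, but rows are read in blocks of n_pages (assumption: one row per page)."""
    out = []
    for start in range(0, len(table), n_pages):
        block = table[start:start + n_pages]  # one simulated I/O fetches a whole block
        out.extend(row for row in block if pred(row))
    return out

def index_scan(table, index, value):
    """The index answers all of P; fetch only the matching rows."""
    return [table[rid] for rid in sorted(index.get(value, ()))]

def index_scan_select(table, index, value, rest):
    """The index answers part of P; check the rest of P on each fetched row."""
    return [row for row in index_scan(table, index, value) if rest(row)]

def index_only_scan(index, value):
    """P and the output use only the indexed attribute, so the table is never read."""
    return [value] * len(index.get(value, ()))

def multi_index_scan(table, lookups):
    """Intersect the RID sets from several indexes, then fetch the surviving rows."""
    rid_sets = [index.get(value, set()) for index, value in lookups]
    rids = set.intersection(*rid_sets) if rid_sets else set()
    return [table[rid] for rid in sorted(rids)]

def p(row):
    """Example predicate P: dept = 'CS' AND year = 2001."""
    return row["dept"] == "CS" and row["year"] == 2001

# Four different plans for the same query, SELECT * FROM T WHERE P.
plans = {
    "sequential scan": sequential_scan(T, p),
    "sequential scan with prefetch": sequential_scan_prefetch(T, p),
    "index scan+select": index_scan_select(T, dept_idx, "CS", lambda r: r["year"] == 2001),
    "multi-index scan": multi_index_scan(T, [(dept_idx, "CS"), (year_idx, 2001)]),
}
```

All four plans return the same tuples; they differ only in how much of the table they have to read, which is the quantity an optimizer tries to estimate when it picks among them.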