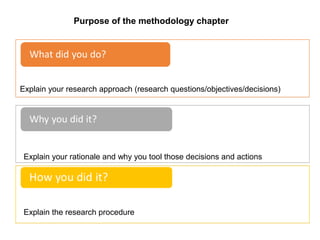

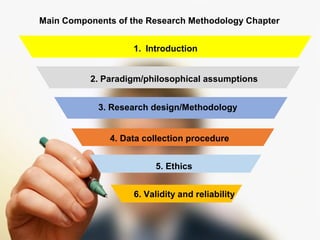

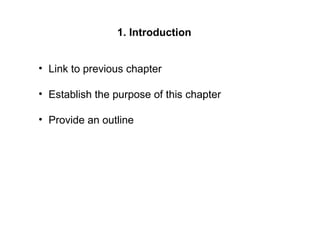

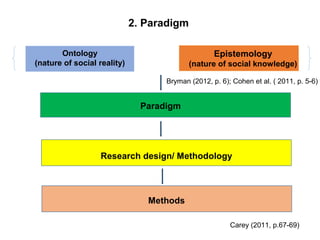

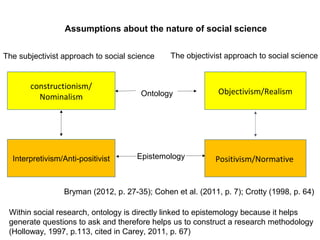

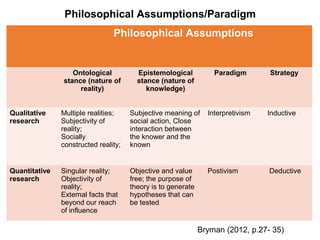

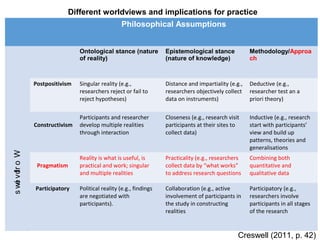

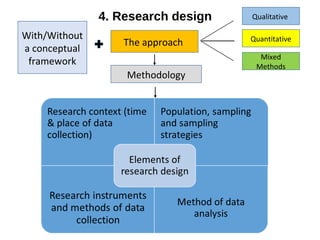

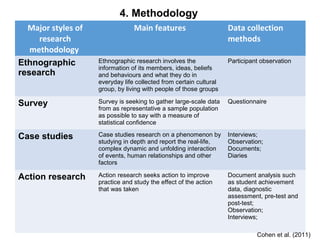

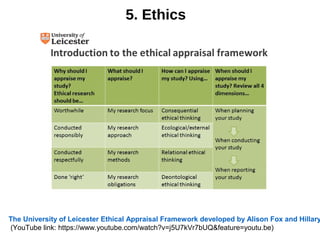

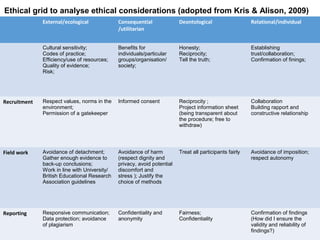

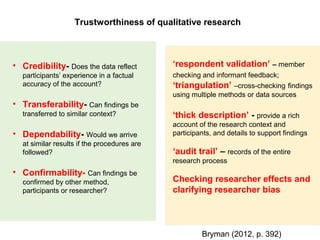

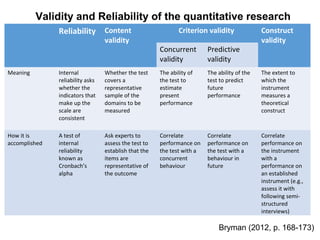

The document provides guidance on writing the methodology chapter of a research paper. It covers the chapter's main components: the introduction, philosophical assumptions, research design and methodology, sampling, data collection, data analysis, ethics, and validity and reliability. The introduction should state the purpose and outline the chapter. The philosophical assumptions section explains the research paradigm, including its ontology and epistemology. The research design section describes the research approach, the methodology, and the research methods. The chapter should also describe sampling, data collection, and data analysis. Finally, it must address ethics and show how the validity and reliability of the research are ensured.