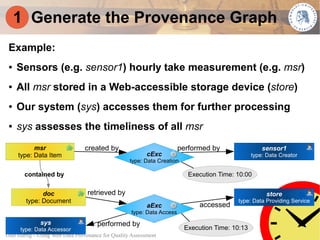

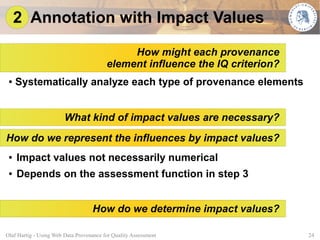

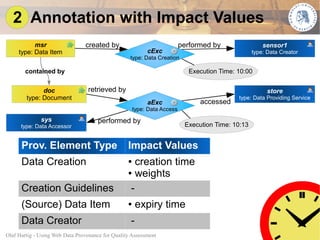

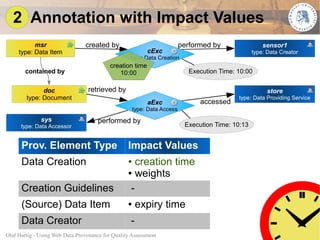

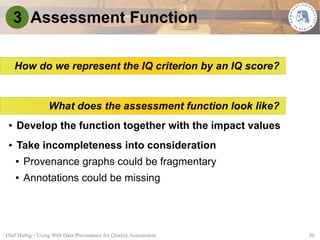

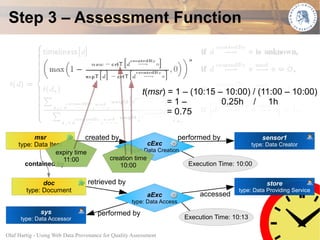

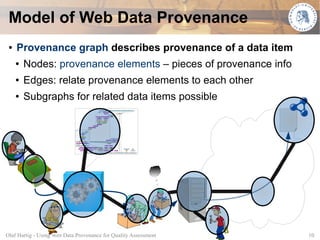

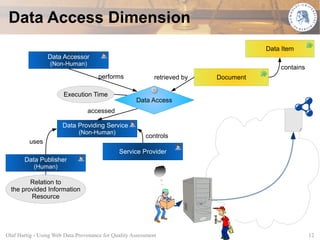

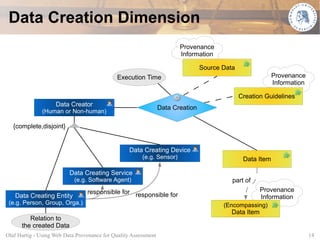

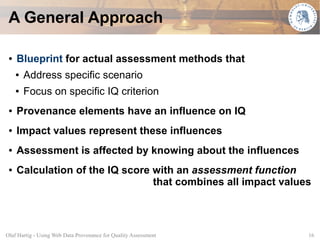

This document proposes using web data provenance for automated quality assessment. It defines provenance as information about the origin and processing of data. The goal is to develop methods to automatically assess quality criteria like timeliness. It outlines a general provenance-based assessment approach involving generating a provenance graph, annotating it with impact values representing how provenance elements influence quality, and calculating a quality score with an assessment function. As an example, it shows how the approach could be applied to assess the timeliness of sensor measurements based on their provenance.
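The three steps of the general approach (build a provenance graph, annotate it with impact values, apply an assessment function) can be sketched as follows. This is a minimal illustration, not the method from the slides: the `ProvenanceGraph` class, the choice of impact values as scores in [0, 1], and the "weakest element wins" assessment function are all assumptions made for the example.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class ProvenanceGraph:
    # derived_from[x] lists the provenance elements that x was derived from.
    derived_from: dict = field(default_factory=dict)
    # impact[x] is the impact value annotated on element x
    # (assumption: a score in [0, 1], higher meaning better quality).
    impact: dict = field(default_factory=dict)

    def ancestors(self, item: str) -> set:
        """Collect every element reachable from `item` via derived_from edges."""
        seen = set()
        stack = [item]
        while stack:
            node = stack.pop()
            for parent in self.derived_from.get(node, []):
                if parent not in seen:
                    seen.add(parent)
                    stack.append(parent)
        return seen


def assess(graph: ProvenanceGraph, item: str) -> Optional[float]:
    """Hypothetical assessment function: the lowest impact value found in
    the item's provenance determines its quality score.
    Returns None when no element in the provenance is annotated."""
    elements = graph.ancestors(item) | {item}
    values = [graph.impact[e] for e in elements if e in graph.impact]
    return min(values) if values else None


# Step 1: provenance graph for a report derived from a sensor measurement.
graph = ProvenanceGraph(
    derived_from={"report": ["measurement"], "measurement": ["sensor"]}
)
# Step 2: annotate elements with impact values (made-up numbers).
graph.impact.update({"sensor": 0.9, "measurement": 0.6})
# Step 3: calculate the quality score.
score = assess(graph, "report")
```

Taking the minimum is only one possible aggregation; a real method would choose the impact values and the assessment function to fit the quality criterion being assessed.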

![Designing Assessment Methods

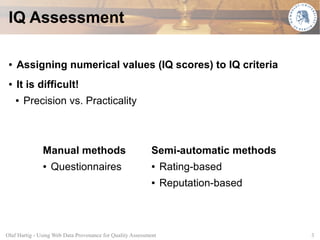

● Developing the general approach into an actual method

● Fundamental design question:

For which IQ criterion do we want to apply the method?

● Timeliness: degree to which the data item is up-to-date

with respect to the task at hand

● Representation* as an absolute measure in [0,1]

● 1 – meeting the most strict timeliness standards

● 0 – unacceptable

*Following Ballou et al., 1998

Olaf Hartig - Using Web Data Provenance for Quality Assessment 20](https://image.slidesharecdn.com/slidesswpm09web-091025105926-phpapp02/85/Using-Web-Data-Provenance-for-Quality-Assessment-20-320.jpg)
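To make the [0,1] representation concrete, here is a small sketch of a timeliness score. The slide only cites Ballou et al. (1998) for the representation and does not give a formula; the sketch assumes the form commonly attributed to that paper, max(0, 1 − currency/volatility)^s. The function name and parameter names are illustrative.

```python
def timeliness(currency: float, volatility: float, sensitivity: float = 1.0) -> float:
    """Timeliness as an absolute measure in [0, 1].

    currency    -- how old the data item is when it is used
    volatility  -- how long such data stays valid (same unit as currency)
    sensitivity -- exponent s controlling how fast the score decays
                   (assumed parameter, task-dependent)
    """
    if volatility <= 0:
        raise ValueError("volatility must be positive")
    if currency < 0:
        raise ValueError("currency must not be negative")
    # The ratio is clamped at 0 so that outdated data scores "unacceptable".
    return max(0.0, 1.0 - currency / volatility) ** sensitivity


fresh = timeliness(currency=0, volatility=10)     # just created
halfway = timeliness(currency=5, volatility=10)   # half of its shelf life used
expired = timeliness(currency=20, volatility=10)  # older than its shelf life
```

Under this assumption a freshly created item scores 1, an item past its shelf life scores 0, and the sensitivity exponent lets the same age be judged more or less strictly depending on the task at hand.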