The document discusses the concept of open data, its significance in enhancing transparency in government, and its potential applications in cultural heritage. It highlights various approaches to implementing open data, including linked open data principles and the use of graph databases for efficient data management. Additionally, it presents case studies of cultural institutions leveraging open data to improve service delivery and public engagement.

![Expected Payoff

• Ubiquitous access

• Reusability

• Optimization

• Social and cultural enrichment

ROI: “A greater than 100X return on investment in direct Federal IT spending through economies of scope is achievable by equipping agencies with an Open Data platform that is the shared foundation for numerous programs that are independently funded today” [http://www.socrata.com/blog/open-data-as-a-platform/]

Open data (OD) turns out to be a formidable tool for:

• Analyzing administrative expenses in spending reviews

• Fact-checking public statements, policies and campaigns

](https://image.slidesharecdn.com/netcamplodpiunti-130412163458-phpapp02/85/Linked_Open_Data_Rome_Netcamp_13-6-320.jpg)

![GLAMS LOD Examples

In Agora, the Rijksmuseum Amsterdam and the Netherlands Institute for Sound and Vision collaborate with the Computer Science and History departments at VU University Amsterdam to integrate their collections and enrich them with historical information, facilitating a more comprehensive understanding of the historical dimension of objects in online heritage collections. [http://agora.cs.vu.nl/]

The Amsterdam Museum was the first museum in the Netherlands to convert its complete collection database to RDF. The resulting resource consists of more than 5 million RDF triples describing over 70,000 cultural heritage objects. Several working applications use this dataset, such as a mobile city guide.

](https://image.slidesharecdn.com/netcamplodpiunti-130412163458-phpapp02/85/Linked_Open_Data_Rome_Netcamp_13-27-320.jpg)
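Converting a collection database to RDF essentially means turning each record into a set of subject-predicate-object triples. The sketch below shows the idea with the standard library only, emitting N-Triples. The base URI, the sample record and the choice of Dublin Core terms as predicates are all assumptions for illustration; they are not the schema the Amsterdam Museum actually used.

```python
from typing import Dict, List

# Hypothetical base URI; NOT the Amsterdam Museum's real URI scheme.
BASE = "http://example.org/collection/object/"
# Dublin Core terms namespace, chosen here only as an example vocabulary.
DCTERMS = "http://purl.org/dc/terms/"


def literal(value: str) -> str:
    """Serialize a string as an N-Triples literal, escaping backslash, quote and line breaks."""
    escaped = (
        value.replace("\\", "\\\\")
        .replace('"', '\\"')
        .replace("\n", "\\n")
        .replace("\r", "\\r")
    )
    return f'"{escaped}"'


def record_to_ntriples(record: Dict[str, str]) -> List[str]:
    """Map one flat record to N-Triples: the id becomes the subject URI and
    every other field becomes a dcterms predicate with a literal value."""
    subject = f"<{BASE}{record['id']}>"
    return [
        f"{subject} <{DCTERMS}{field}> {literal(value)} ."
        for field, value in record.items()
        if field != "id"
    ]


# Hypothetical record whose field names match Dublin Core terms.
sample = {"id": "12345", "title": "View of the Dam", "creator": "Unknown artist"}
for line in record_to_ntriples(sample):
    print(line)
```

At the scale reported above (more than 5 million triples for over 70,000 objects), a real conversion produces on the order of 70 triples per object on average, so actual records are far richer than this three-field example.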

![GLAMS LOD Examples

Europeana is a pan-European initiative that provides access to millions of objects as LOD through an API. The Europeana Thought Lab[5] search interface shows how LOD principles can aid the search process. Europeana has been a strong supporter of the uptake of CC0, the Creative Commons "no rights reserved" dedication. [http://pro.europeana.eu]

Open Images provides access to a large and growing collection of Creative Commons licensed archive material. The metadata is converted to RDF, allowing the creation of rich semantic links to other datasets, such as the Amsterdam Museum dataset. [http://www.openimages.eu/]

](https://image.slidesharecdn.com/netcamplodpiunti-130412163458-phpapp02/85/Linked_Open_Data_Rome_Netcamp_13-28-320.jpg)
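Once two datasets are in RDF, linking them amounts to asserting triples that connect their URIs. A minimal sketch, assuming both datasets describe the same kind of entity (say, places) and that an exact match on a normalized label is good enough to assert `owl:sameAs`. All URIs and labels below are hypothetical, and label matching is a deliberately naive heuristic, not the method Open Images or the Amsterdam Museum used.

```python
import unicodedata
from typing import Dict, List

OWL_SAME_AS = "http://www.w3.org/2002/07/owl#sameAs"


def normalize(label: str) -> str:
    """Strip accents, lowercase and collapse whitespace so near-identical labels compare equal."""
    decomposed = unicodedata.normalize("NFKD", label)
    no_accents = "".join(c for c in decomposed if not unicodedata.combining(c))
    return " ".join(no_accents.lower().split())


def link_by_label(left: Dict[str, str], right: Dict[str, str]) -> List[str]:
    """Emit owl:sameAs N-Triples for every pair of URIs whose normalized labels match."""
    index: Dict[str, List[str]] = {}
    for uri, label in right.items():
        index.setdefault(normalize(label), []).append(uri)
    links = []
    for uri, label in left.items():
        for match in index.get(normalize(label), []):
            links.append(f"<{uri}> <{OWL_SAME_AS}> <{match}> .")
    return links


# Hypothetical place entities from two datasets.
openimages_places = {"http://example.org/openimages/place/1": "Dam Square"}
museum_places = {
    "http://example.org/amsterdam-museum/place/9": "dam  square",
    "http://example.org/amsterdam-museum/place/10": "Rokin",
}
for triple in link_by_label(openimages_places, museum_places):
    print(triple)
```

Exact label matching both misses links (spelling variants) and creates false ones (homonyms), so in practice links are usually built from shared identifiers or checked by curators; the sketch only shows what the resulting link triples look like.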

![PROS and CONS of LOD for GLAMS

PROS

• Driving users to online content held by GLAMS (e.g., through improved search engine optimization);

• Stimulating collaboration in the library, archives and museums domain and beyond, for instance by inviting people to clean or enrich existing data;

• Enabling new scholarship that can only be done with open data;

• Allowing the creation of new services for discovery;

• Quoting Verwayen (2011), “increas[ing] relevance to digital society.”

CONS

• Loss of attribution to the “memory institution”, which may in turn decrease the value of the artworks;

• Loss of potential income: data released as open data can no longer be sold.

](https://image.slidesharecdn.com/netcamplodpiunti-130412163458-phpapp02/85/Linked_Open_Data_Rome_Netcamp_13-29-320.jpg)

![Storing data in Graphs

Graph theory, pioneered by Euler in the 18th century, has received multidisciplinary contributions across the centuries.

• Facebook, Google and Twitter have centered their business models around their own in-house distributed graph technologies (e.g., Facebook TAO, Twitter FlockDB).

Graph databases store information in ways that much more closely resemble the way the world is organized and the way humans “think about” data.

Gartner's top 10 IT technology trends for 2013: “[..] are designed to support new transaction, interaction and observation use cases involving web scale, mobile, cloud and clustered environments”

](https://image.slidesharecdn.com/netcamplodpiunti-130412163458-phpapp02/85/Linked_Open_Data_Rome_Netcamp_13-36-320.jpg)

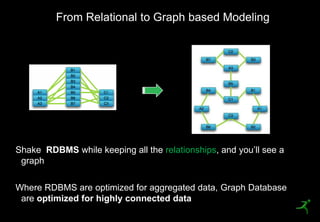

![From Relational to Graph-based Modeling

Graph DBs make relationships first-class abstractions of the data model:

• A graph contains nodes and relationships

• Nodes contain properties (key-value pairs)

• Relationships are named and directed, and always have a start and an end node

• Relationships can also contain properties

A Graph -[:RECORDS_DATA_IN]-> Nodes -[:WHICH_HAVE]-> Properties.

Nodes -[:LINKED_BY]-> Relationships

](https://image.slidesharecdn.com/netcamplodpiunti-130412163458-phpapp02/85/Linked_Open_Data_Rome_Netcamp_13-37-320.jpg)
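The four rules of the property graph model can be made concrete with a small in-memory structure. This is a minimal sketch under our own assumptions (class and method names such as `PropertyGraph` and `relate` are hypothetical), not how any particular graph database stores data internally. It shows nodes carrying key-value properties, and relationships that are named, directed, always have both endpoints, and carry their own properties.

```python
from dataclasses import dataclass, field
from typing import Any, Dict, List, Optional


@dataclass
class Node:
    """A node: an identity plus key-value properties."""
    node_id: int
    properties: Dict[str, Any] = field(default_factory=dict)


@dataclass
class Relationship:
    """A named, directed relationship with a start node, an end node and its own properties."""
    rel_type: str
    start: Node
    end: Node
    properties: Dict[str, Any] = field(default_factory=dict)


class PropertyGraph:
    """Minimal in-memory property graph that stores each node's outgoing relationships."""

    def __init__(self) -> None:
        self.nodes: Dict[int, Node] = {}
        self.outgoing: Dict[int, List[Relationship]] = {}

    def add_node(self, **properties: Any) -> Node:
        node = Node(node_id=len(self.nodes), properties=dict(properties))
        self.nodes[node.node_id] = node
        self.outgoing[node.node_id] = []
        return node

    def relate(self, start: Node, rel_type: str, end: Node, **properties: Any) -> Relationship:
        # A relationship cannot exist without both endpoints already in the graph.
        if start.node_id not in self.nodes or end.node_id not in self.nodes:
            raise KeyError("both endpoints must be nodes of this graph")
        rel = Relationship(rel_type, start, end, dict(properties))
        self.outgoing[start.node_id].append(rel)
        return rel

    def neighbors(self, node: Node, rel_type: Optional[str] = None) -> List[Node]:
        """Follow outgoing relationships, optionally only those with a given name."""
        return [r.end for r in self.outgoing[node.node_id]
                if rel_type is None or r.rel_type == rel_type]


# Usage with hypothetical data: a user who owns a document since 2013.
graph = PropertyGraph()
user = graph.add_node(name="Alice")
doc = graph.add_node(title="Report")
graph.relate(user, "OWNS", doc, since=2013)
print([n.properties["title"] for n in graph.neighbors(user, "OWNS")])  # ['Report']
```

Keeping each node's outgoing relationships next to the node is what lets a traversal hop from neighbor to neighbor without the join tables a relational model would need for the same link.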

![Open Linked Graph

Diagram: a User node -[:OWNS]-> two Document nodes.

](https://image.slidesharecdn.com/netcamplodpiunti-130412163458-phpapp02/85/Linked_Open_Data_Rome_Netcamp_13-45-320.jpg)

![Open Linked Graph

Diagram: a User node -[:OWNS]-> two Document nodes; the Documents -[:INCLUDES]-> several Nodes.

](https://image.slidesharecdn.com/netcamplodpiunti-130412163458-phpapp02/85/Linked_Open_Data_Rome_Netcamp_13-46-320.jpg)

![Open Linked Graph

Diagram: a User node -[:OWNS]-> two Document nodes; the Documents -[:INCLUDES]-> several Nodes; the graph is then extended with Venue nodes (via [:LOCATED]) and DBpedia URIs (via [:DBP_LINKED]).

](https://image.slidesharecdn.com/netcamplodpiunti-130412163458-phpapp02/85/Linked_Open_Data_Rome_Netcamp_13-47-320.jpg)
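The value of the open linked graph is that a question such as "which DBpedia resources are connected to this user's documents?" becomes a walk along named relationships. The sketch below stores the graph as (start, relationship, end) edges and follows a fixed path of relationship names. The node ids, the sample data and, in particular, the assumption that Nodes -[:LOCATED]-> Venues and Venues -[:DBP_LINKED]-> DBpedia URIs are ours for illustration; the diagram does not make the endpoints of every link unambiguous.

```python
from typing import Dict, List, Sequence, Tuple

# (start node id, relationship type, end node id)
Edge = Tuple[str, str, str]
Index = Dict[Tuple[str, str], List[str]]


def build_index(edges: Sequence[Edge]) -> Index:
    """Group end nodes by (start node, relationship type) for direct neighbor lookups."""
    index: Index = {}
    for start, rel, end in edges:
        index.setdefault((start, rel), []).append(end)
    return index


def follow(index: Index, starts: Sequence[str], path: Sequence[str]) -> List[str]:
    """Walk a fixed sequence of relationship types, de-duplicating nodes at each hop."""
    frontier = list(starts)
    for rel in path:
        reached = (end for node in frontier for end in index.get((node, rel), []))
        frontier = list(dict.fromkeys(reached))  # removes duplicates, keeps first-seen order
    return frontier


# Hypothetical data; the Venue -> DBpedia direction is an assumption.
edges: List[Edge] = [
    ("user:1", "OWNS", "doc:a"),
    ("user:1", "OWNS", "doc:b"),
    ("doc:a", "INCLUDES", "node:1"),
    ("doc:a", "INCLUDES", "node:2"),
    ("doc:b", "INCLUDES", "node:3"),
    ("node:1", "LOCATED", "venue:colosseum"),
    ("node:2", "LOCATED", "venue:colosseum"),
    ("node:3", "LOCATED", "venue:pantheon"),
    ("venue:colosseum", "DBP_LINKED", "http://dbpedia.org/resource/Colosseum"),
]
index = build_index(edges)
venues = follow(index, ["user:1"], ["OWNS", "INCLUDES", "LOCATED"])
dbpedia = follow(index, ["user:1"], ["OWNS", "INCLUDES", "LOCATED", "DBP_LINKED"])
print(venues)   # ['venue:colosseum', 'venue:pantheon']
print(dbpedia)  # ['http://dbpedia.org/resource/Colosseum']
```

In a graph database the same walk would typically be a single pattern query in the Cypher-style notation the relationship names already follow, for example `MATCH (:User)-[:OWNS]->(:Document)-[:INCLUDES]->()-[:LOCATED]->(v)-[:DBP_LINKED]->(uri) RETURN DISTINCT uri`, where the `User` and `Document` labels are likewise assumptions.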