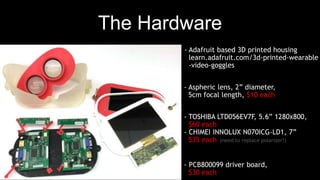

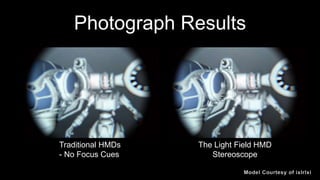

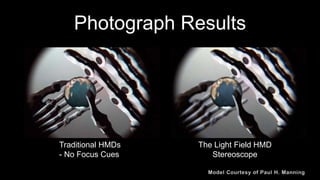

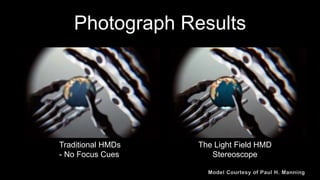

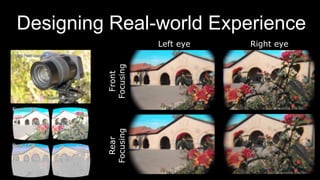

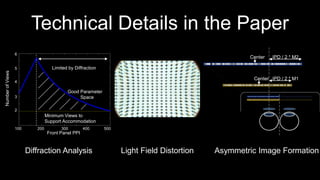

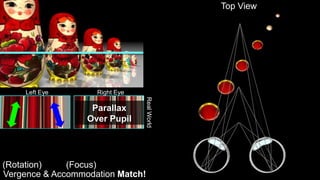

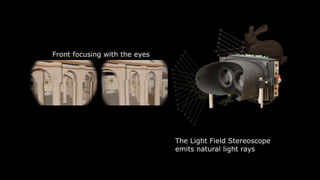

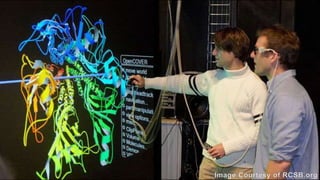

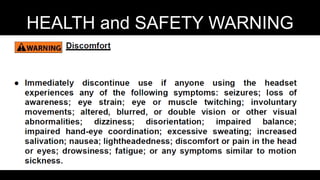

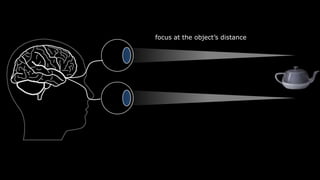

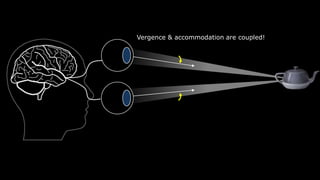

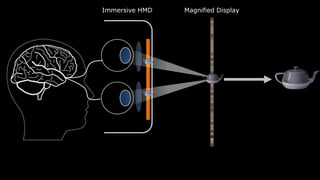

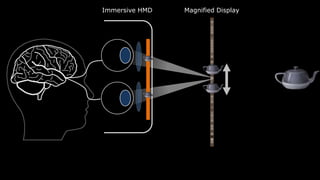

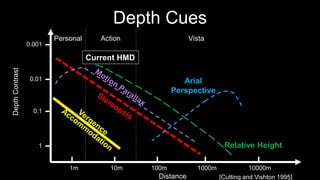

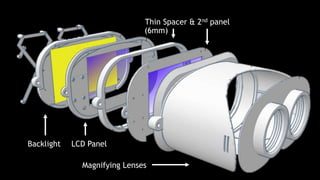

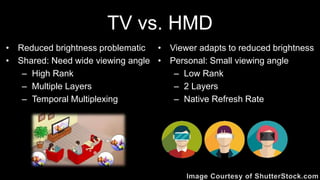

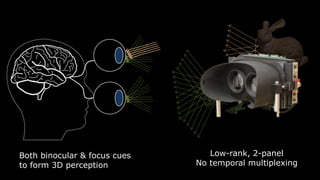

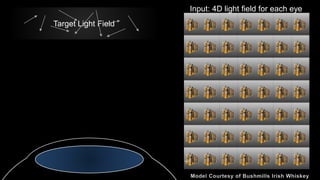

The document discusses the development of a light field stereoscope that utilizes factored near-eye light field displays to enhance immersive computer graphics through proper vergence and accommodation cues. It presents technical specifications and hardware components necessary for constructing such a stereoscope, alongside potential applications in virtual and augmented reality. The document also addresses limitations like reduced brightness and latency while envisioning future enhancements in light field content and human vision compatibility.

![Depth cues: depth contrast (0.001 to 1) plotted against distance (1 m to 10,000 m) across personal, action, and vista space; labels: relative height, aerial perspective, current HMD [Cutting and Vishton 1995]](https://image.slidesharecdn.com/thelightfieldstereoscope-siggraph2015-150822000243-lva1-app6892/85/The-Light-Field-Stereoscope-SIGGRAPH-2015-22-320.jpg)

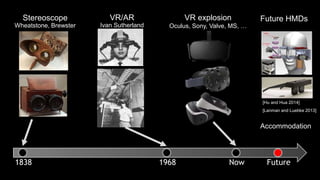

![Accommodation timeline: stereoscope (Wheatstone, Brewster, 1838); VR/AR (Ivan Sutherland, 1968); VR explosion (Oculus, Sony, Valve, MS, …; now); future HMDs [Lanman and Luebke 2013], [Hu and Hua 2014]](https://image.slidesharecdn.com/thelightfieldstereoscope-siggraph2015-150822000243-lva1-app6892/85/The-Light-Field-Stereoscope-SIGGRAPH-2015-28-320.jpg)

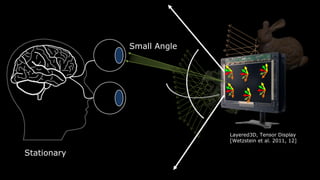

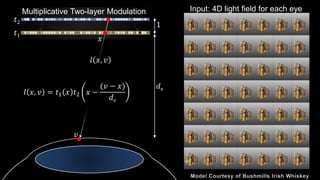

![Layered3D and Tensor Display [Wetzstein et al. 2011, 12]: small angle, stationary](https://image.slidesharecdn.com/thelightfieldstereoscope-siggraph2015-150822000243-lva1-app6892/85/The-Light-Field-Stereoscope-SIGGRAPH-2015-30-320.jpg)

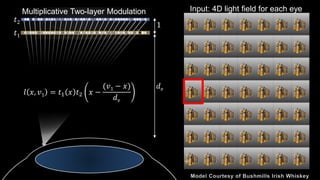

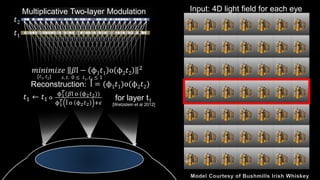

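![Multiplicative two-layer modulation [Wetzstein et al. 2012]](https://image.slidesharecdn.com/thelightfieldstereoscope-siggraph2015-150822000243-lva1-app6892/85/The-Light-Field-Stereoscope-SIGGRAPH-2015-40-320.jpg)

Multiplicative two-layer modulation with layer patterns $t_1$ and $t_2$ [Wetzstein et al. 2012]:

$$l = (\phi_1 t_1) \circ (\phi_2 t_2)$$

Reconstruction (input: a 4D light field for each eye):

$$\underset{\{t_1,\, t_2\}}{\text{minimize}} \; \left\| \beta l - (\phi_1 t_1) \circ (\phi_2 t_2) \right\|_2^2 \quad \text{s.t.} \quad 0 \le t_1, t_2 \le 1$$

Update rule for layer $t_1$, where $\tilde{l} = (\phi_1 t_1) \circ (\phi_2 t_2)$ is the light field emitted by the current layer estimate:

$$t_1 \leftarrow t_1 \circ \frac{\phi_1^T \left( \beta l \circ (\phi_2 t_2) \right)}{\phi_1^T \left( \tilde{l} \circ (\phi_2 t_2) \right) + \epsilon}$$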
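The multiplicative update on this slide can be illustrated with a small sketch. It is not the authors' implementation. It assumes a toy ray model in which each ray is a pair `(i, j)` of the pixel it crosses on layer 1 and on layer 2, so that applying ϕ1 or ϕ2 is a lookup and applying the transpose is a sum over the rays through a pixel. The function names, the initial value of 0.5, and the clamp to [0, 1] after every update are assumptions made for illustration.

```python
def update_layer(t_self, t_other, rays, target, beta, eps, layer):
    """One multiplicative update of a single layer (hypothetical helper).

    layer = 0 updates layer 1 (ray index i); layer = 1 updates layer 2 (ray index j).
    """
    num = [0.0] * len(t_self)  # phi^T (beta * l  o  (phi_other t_other))
    den = [0.0] * len(t_self)  # phi^T (l_est     o  (phi_other t_other))
    for ray, l in zip(rays, target):
        a, b = ray[layer], ray[1 - layer]
        other = t_other[b]
        l_est = t_self[a] * other  # light emitted by the current estimate
        num[a] += beta * l * other
        den[a] += l_est * other
    # Assumption: enforce 0 <= t <= 1 by clamping after the update.
    return [min(1.0, max(0.0, t * n / (d + eps)))
            for t, n, d in zip(t_self, num, den)]


def factor_two_layers(rays, target, n1, n2, beta=1.0, iters=50, eps=1e-9):
    """Alternate the update between the two layers for a fixed number of iterations."""
    t1 = [0.5] * n1
    t2 = [0.5] * n2
    for _ in range(iters):
        t1 = update_layer(t1, t2, rays, target, beta, eps, layer=0)
        t2 = update_layer(t2, t1, rays, target, beta, eps, layer=1)
    return t1, t2


if __name__ == "__main__":
    # Toy light field: every layer-1 pixel paired with every layer-2 pixel.
    true_t1, true_t2 = [0.2, 0.8], [0.5, 1.0]
    rays = [(i, j) for i in range(2) for j in range(2)]
    target = [true_t1[i] * true_t2[j] for i, j in rays]
    t1, t2 = factor_two_layers(rays, target, n1=2, n2=2)
    err = max(abs(t1[i] * t2[j] - l) for (i, j), l in zip(rays, target))
    print("max reconstruction error:", err)
```

The recovered patterns need not equal the ones used to generate the toy target, because scaling one layer up and the other down by the same factor emits the same light field. Only the product along each ray is constrained.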

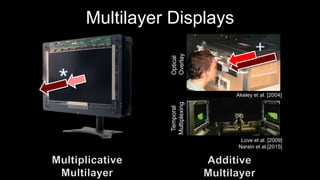

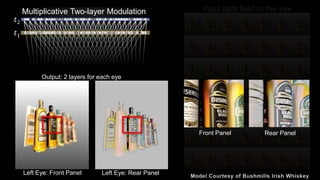

![Multilayer displays: Akeley et al. [2004], Love et al. [2009], Narain et al. [2015]; optical overlay, temporal multiplexing](https://image.slidesharecdn.com/thelightfieldstereoscope-siggraph2015-150822000243-lva1-app6892/85/The-Light-Field-Stereoscope-SIGGRAPH-2015-43-320.jpg)