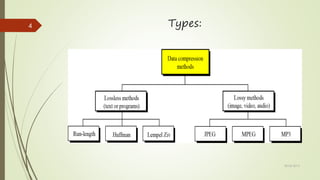

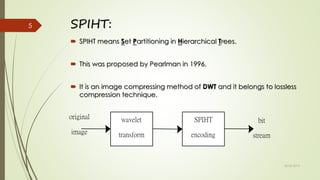

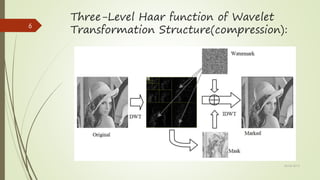

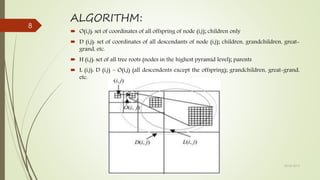

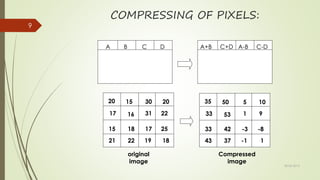

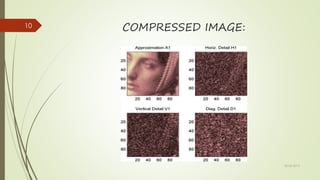

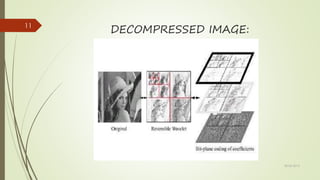

The document discusses image compression, focusing on SPIHT (Set Partitioning in Hierarchical Trees), the wavelet-based coding method proposed by Said and Pearlman in 1996, which supports both lossy and lossless compression. It outlines why image compression matters: it reduces the amount of data that must be stored and transmitted, which is particularly important in domains such as medicine. It also describes the properties of SPIHT, including high image quality, fast coding and decoding, and the ability to be combined with error protection.