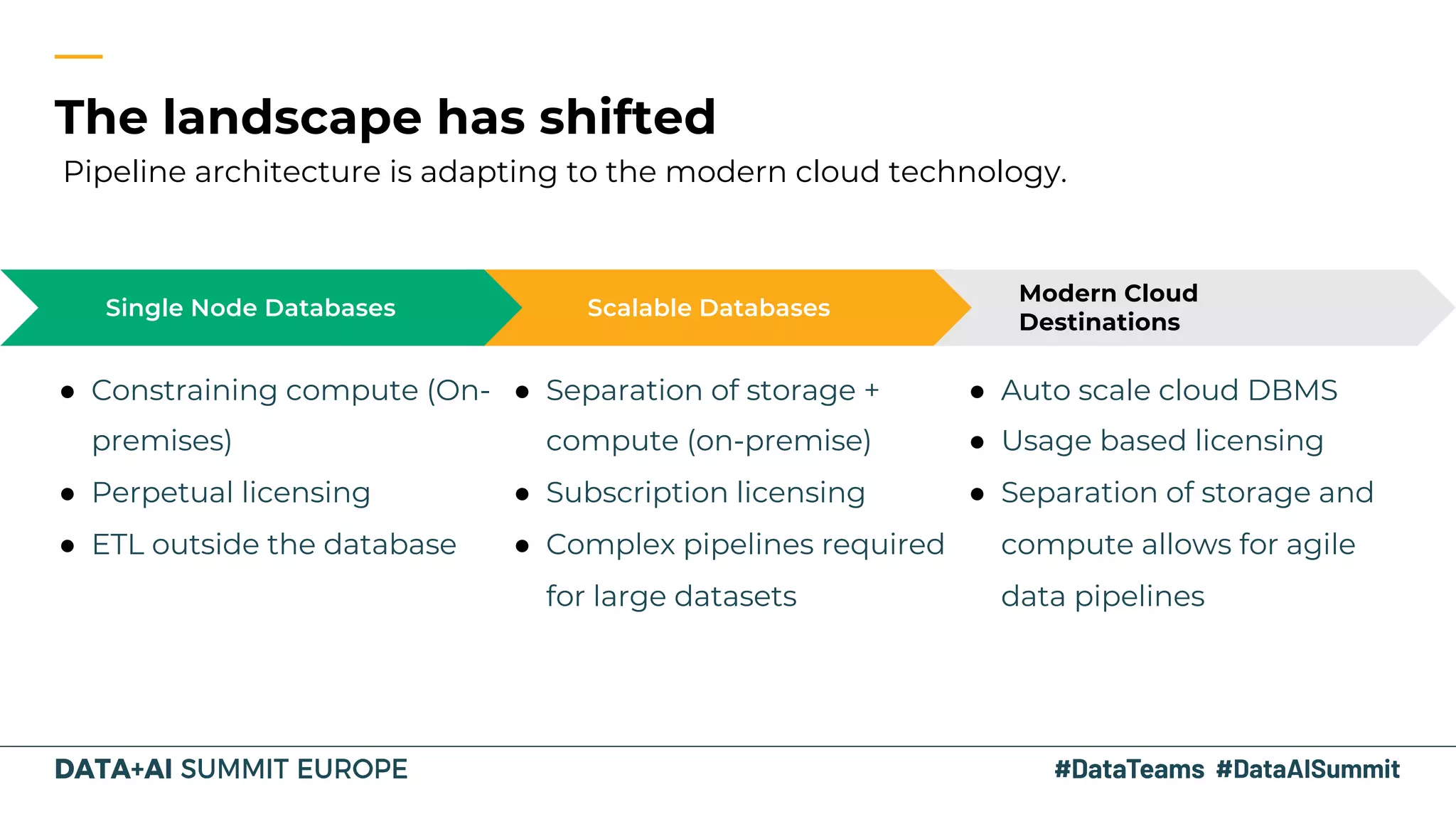

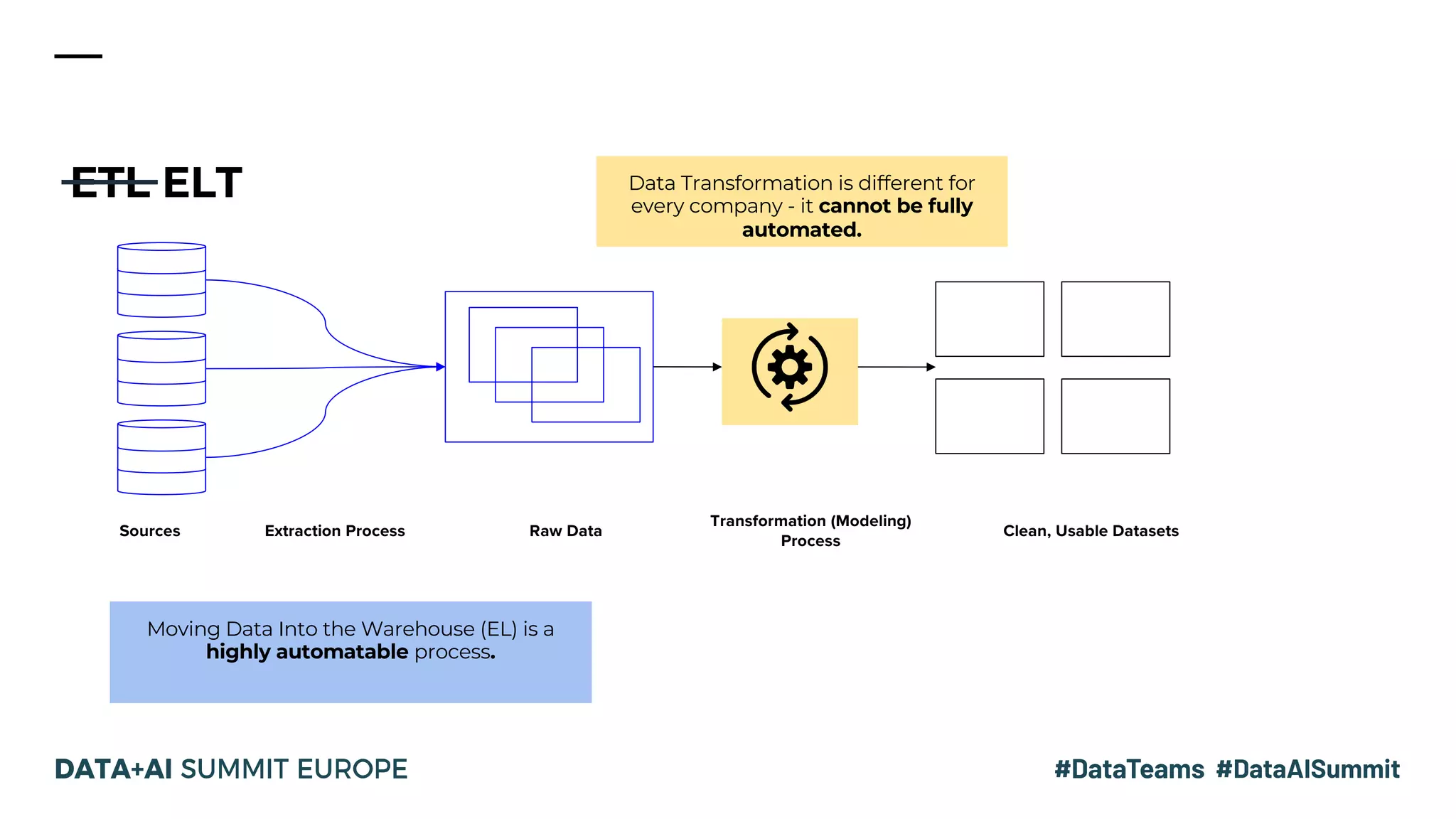

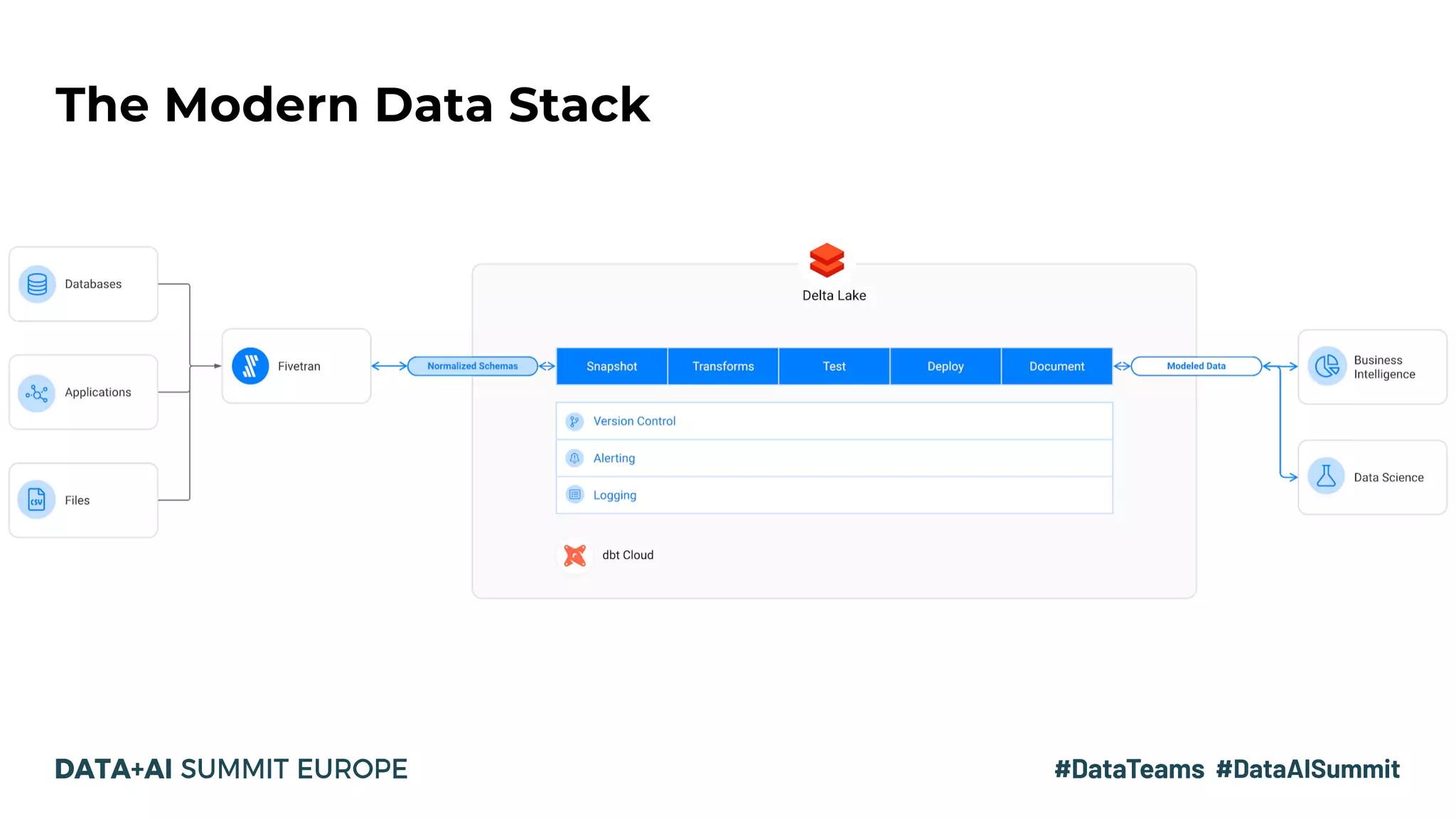

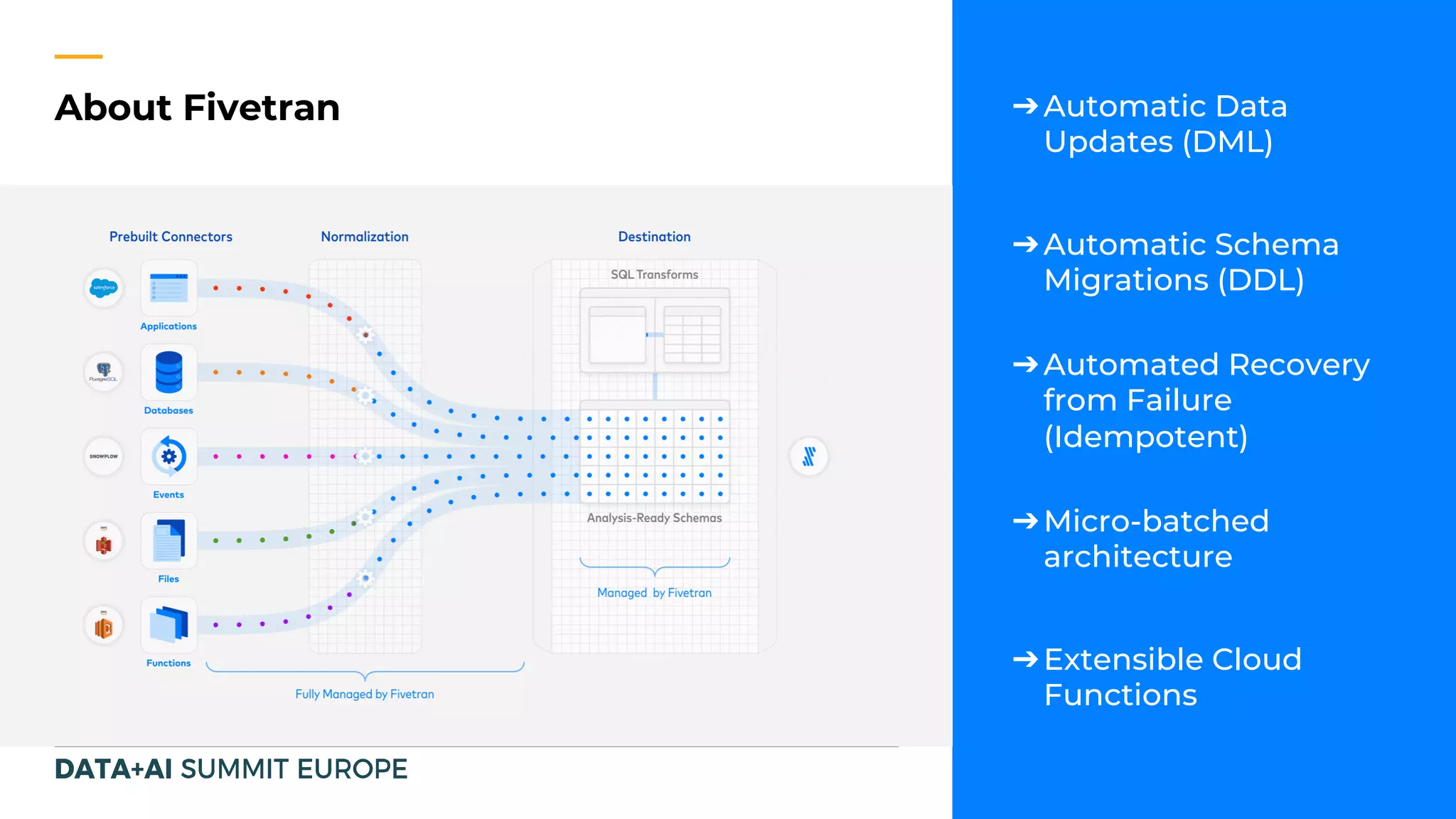

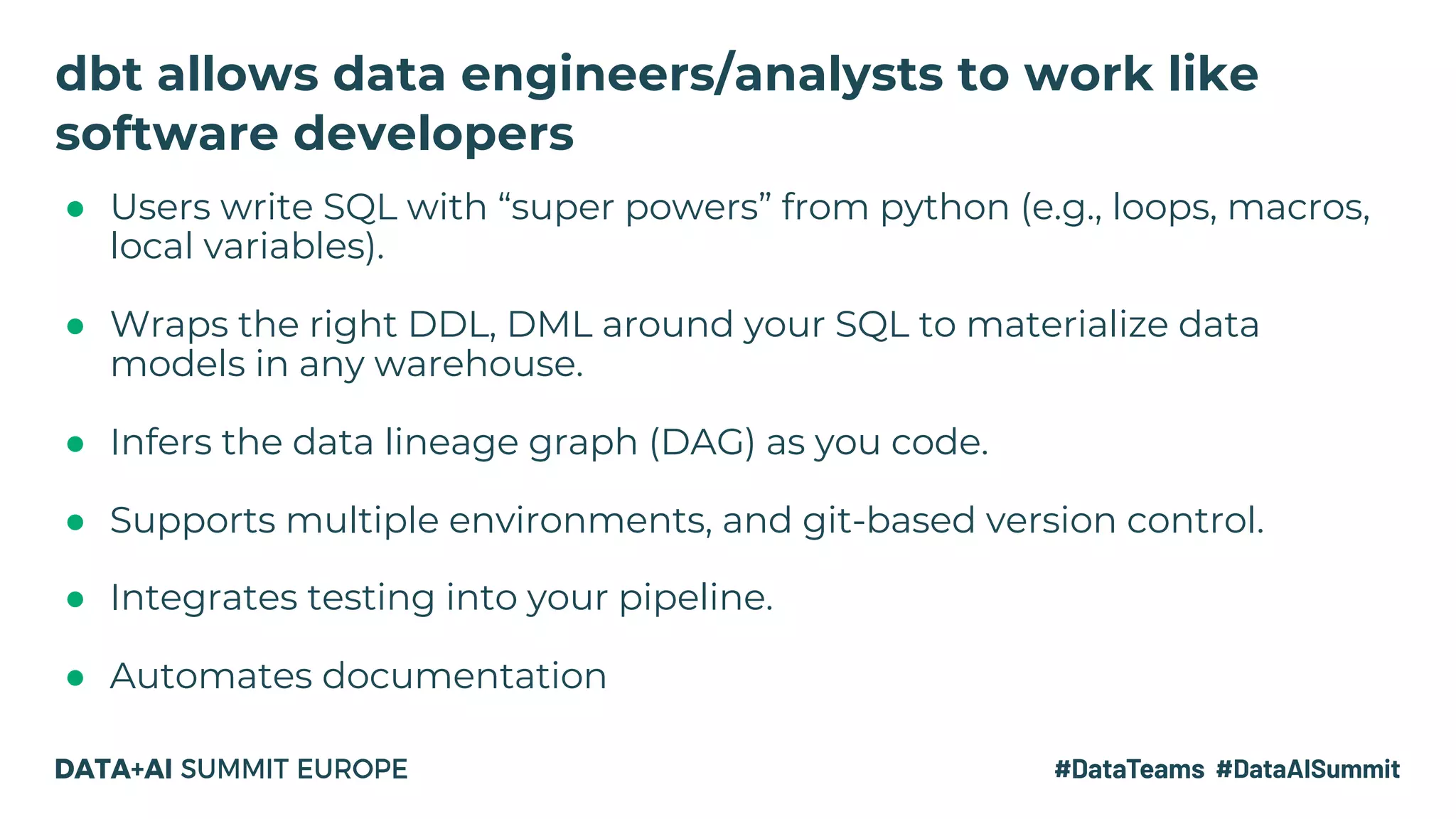

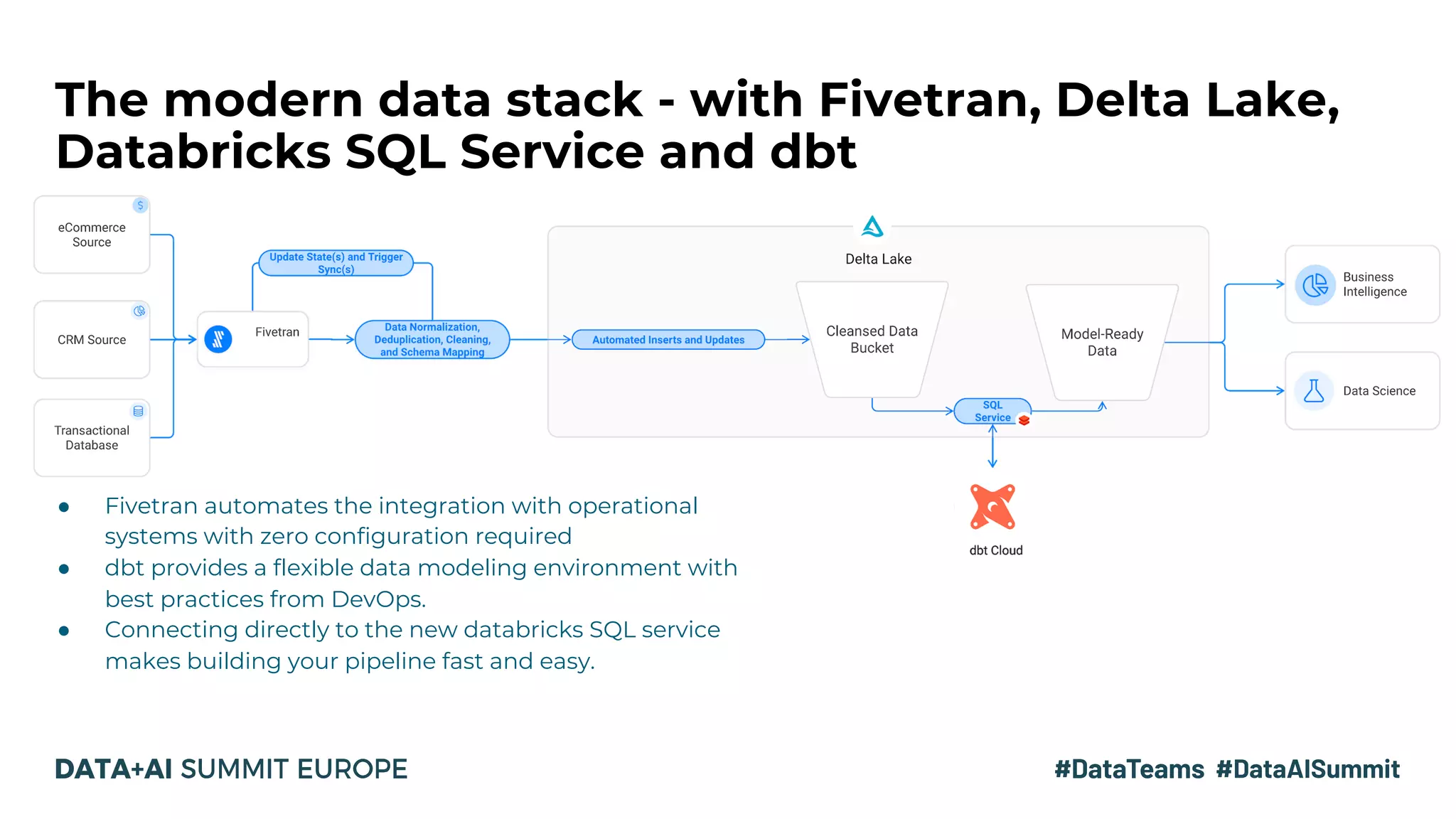

The document discusses the evolution of data analytics infrastructure and emphasizes a modern ELT (extract, load, transform) approach built on tools such as Fivetran, Databricks SQL Service, and dbt. It highlights the separation of storage and compute, which allows for agile data pipelines and broadens data access across the team. Because the transformation step moves into the warehouse, companies can apply automation and best practices to model data efficiently.
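
To make the ELT ordering concrete, here is a minimal sketch of "load first, transform inside the warehouse". It is an illustration under stated assumptions, not the tooling the document describes: Python's built-in `sqlite3` stands in for the warehouse, and the table names (`raw_orders`, `customer_revenue`), the function names, and the sample rows are all hypothetical. In a stack like the one summarized above, the load step would be handled by an ingestion tool and the SQL model would be managed by a transformation tool.

```python
import sqlite3

# Hypothetical source records: (id, customer, amount_cents, status).
SAMPLE_ROWS = [
    (1, "acme", 1250, "completed"),
    (2, "acme", 750, "completed"),
    (3, "globex", 500, "refunded"),
    (4, "globex", 2000, "completed"),
]


def load_raw(conn, rows):
    """Load step: land source records unchanged in a raw table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS raw_orders "
        "(id INTEGER, customer TEXT, amount_cents INTEGER, status TEXT)"
    )
    conn.executemany("INSERT INTO raw_orders VALUES (?, ?, ?, ?)", rows)


def transform_in_warehouse(conn):
    """Transform step: build a modeled table with SQL run inside the warehouse."""
    conn.execute("DROP TABLE IF EXISTS customer_revenue")
    conn.execute(
        """
        CREATE TABLE customer_revenue AS
        SELECT customer, SUM(amount_cents) / 100.0 AS revenue
        FROM raw_orders
        WHERE status = 'completed'
        GROUP BY customer
        """
    )


def run_pipeline(rows):
    """Run load then transform against an in-memory stand-in warehouse."""
    conn = sqlite3.connect(":memory:")
    load_raw(conn, rows)
    transform_in_warehouse(conn)
    result = conn.execute(
        "SELECT customer, revenue FROM customer_revenue ORDER BY customer"
    ).fetchall()
    conn.close()
    return result


if __name__ == "__main__":
    for customer, revenue in run_pipeline(SAMPLE_ROWS):
        print(customer, revenue)
```

Because the raw table is kept as loaded, the transformation can be rewritten and rerun without extracting the data again, which is the main practical difference from transforming before the load.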