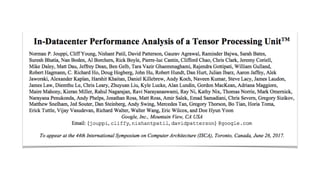

Slides for In-Datacenter Performance Analysis of a Tensor Processing Unit

•

2 likes•1,412 views

The document discusses the motivation for developing the Tensor Processing Unit (TPU), which was that DNN-based workloads were consuming a large and growing portion of datacenter compute resources. It describes how the TPU was developed by Norman Jouppi and others at Google to be much more efficient than CPUs and GPUs for DNN workloads, with up to 80x higher performance per watt. It provides details on the TPU architecture and experimental results showing it significantly outperformed GPUs on latency for DNN inference tasks.

Report

Share

Report

Share

Download to read offline

Recommended

In datacenter performance analysis of a tensor processing unit

Tensorflow-KR 논문읽기모임 85번째 발표영상입니다 Google의 TPU v1논문에 대해서 발표했습니다(그런데 발표하고 하루만에 Google I/O에서 TPU v3가 발표됐네요... 논문이 나오길 기대합니다)

발표영상 : https://youtu.be/7WhWkhFAIO4

논문링크 : https://arxiv.org/abs/1704.04760

TPU paper slide

This slide is presented in the class of high-performance computing 2017 at Tokyo Institute of technology.

Tensor Processing Unit (TPU)

This is an overview of google's draft paper that will be presented at ISCA 2017.

High performance computing with accelarators

Seminar Presentation on High Performance With Accelerators.

Google TPU

Summary and discussion about Google's paper of TPU.

Dose TPU really end the life of GPU? No, it could only do inference currently.

Architecture of TPU, GPU and CPU

This webinar by Dov Nimratz (Senior Solution Architect, Consultant, GlobalLogic) was delivered at Embedded Community Webinar #1 on July 7, 2020.

Webinar agenda:

- CPU / GPU / TPU architectures

- Historical context

- CPU and their variations

- GPU or gin in a bottle for artificial intelligence tasks

- TPU architecture specialized artificial intelligence accelerator

- What's next in technology

More details and presentation: https://www.globallogic.com/ua/about/events/embedded-community-webinar-1/

データ爆発時代のネットワークインフラ

2022 年 4 月 26 日 (火) に開催した「GTC 2022 テクニカルフォローアップセミナー 〜新アーキテクチャ & HPC〜」にて、NVIDIA 愛甲が講演した「データ爆発時代のネットワークインフラ」のスライドです。

The Google File System (GFS)

The Google File System (GFS) presented in 2003 is the inspiration for the Hadoop Distributed File System (HDFS). Let's take a deep dive into GFS to better understand Hadoop.

Recommended

In datacenter performance analysis of a tensor processing unit

Tensorflow-KR 논문읽기모임 85번째 발표영상입니다 Google의 TPU v1논문에 대해서 발표했습니다(그런데 발표하고 하루만에 Google I/O에서 TPU v3가 발표됐네요... 논문이 나오길 기대합니다)

발표영상 : https://youtu.be/7WhWkhFAIO4

논문링크 : https://arxiv.org/abs/1704.04760

TPU paper slide

This slide is presented in the class of high-performance computing 2017 at Tokyo Institute of technology.

Tensor Processing Unit (TPU)

This is an overview of google's draft paper that will be presented at ISCA 2017.

High performance computing with accelarators

Seminar Presentation on High Performance With Accelerators.

Google TPU

Summary and discussion about Google's paper of TPU.

Dose TPU really end the life of GPU? No, it could only do inference currently.

Architecture of TPU, GPU and CPU

This webinar by Dov Nimratz (Senior Solution Architect, Consultant, GlobalLogic) was delivered at Embedded Community Webinar #1 on July 7, 2020.

Webinar agenda:

- CPU / GPU / TPU architectures

- Historical context

- CPU and their variations

- GPU or gin in a bottle for artificial intelligence tasks

- TPU architecture specialized artificial intelligence accelerator

- What's next in technology

More details and presentation: https://www.globallogic.com/ua/about/events/embedded-community-webinar-1/

データ爆発時代のネットワークインフラ

2022 年 4 月 26 日 (火) に開催した「GTC 2022 テクニカルフォローアップセミナー 〜新アーキテクチャ & HPC〜」にて、NVIDIA 愛甲が講演した「データ爆発時代のネットワークインフラ」のスライドです。

The Google File System (GFS)

The Google File System (GFS) presented in 2003 is the inspiration for the Hadoop Distributed File System (HDFS). Let's take a deep dive into GFS to better understand Hadoop.

Nvidia (History, GPU Architecture and New Pascal Architecture)

This presentation focuses on Nvidia GPUs and explores the topics of what a GPU is, its basic architecture, how it is different from a CPU, its basic working, and what new Nvidia has to offer in consumer as well as server market

Linux memory consumption

Linux memory consumption - Why memory utilities show a little amount of free RAM? How does Linux kernel utilizes free RAM? What is the real amount of free RAM in the system?

Wireshark入門 (2014版)

2014年10月31日開催「ネットワーク パケットを読む会 (仮)」でのセッション「いま改めて Wireshark 入門」の発表スライドです。

Wireshark の利用方法について、インストールから初期設定、パケットキャプチャの開始とフィルタリングまで解説しています。

Multicore computers

A multi-core processor is a single computing component with two or more independent actual processing units (called "cores"), which are units that read and execute program instructions. The instructions are ordinary CPU instructions (such as add, move data, and branch), but the multiple cores can run multiple instructions at the same time, increasing overall speed for programs amenable to parallel computing. Manufacturers typically integrate the cores onto a single integrated circuit die (known as a chip multiprocessor or CMP), or onto multiple dies in a single chip package.

Intel® hyper threading technology

This is a presentation about Intel Nehalm Micro-architecture and the reference of the slides is Intel white paper

GPU Architecture NVIDIA (GTX GeForce 480)

GPU architecture of NVIDIA's GPUs of series GeForce 400 - series GeForce 500. As both use same architecture i.e. Fermi Architecture.

Multi core processors

This presentation describes the processors, its types and how it is categorized on a basic level.

Doom Technical Review

The technical review I made for the DOOM series, presented at IranGDI's summer FPS games workshop.

Infrastructure and Tooling - Full Stack Deep Learning

The Training and Evaluation Phases of Your Machine Learning Workflow.

See more at https://fullstackdeeplearning.com

[Hadoop Meetup] Tensorflow on Apache Hadoop YARN - Sunil Govindan![[Hadoop Meetup] Tensorflow on Apache Hadoop YARN - Sunil Govindan](data:image/gif;base64,R0lGODlhAQABAIAAAAAAAP///yH5BAEAAAAALAAAAAABAAEAAAIBRAA7)

![[Hadoop Meetup] Tensorflow on Apache Hadoop YARN - Sunil Govindan](data:image/gif;base64,R0lGODlhAQABAIAAAAAAAP///yH5BAEAAAAALAAAAAABAAEAAAIBRAA7)

Tensorflow on Apache Hadoop YARN during the Bangalore Hadoop Meetup by Sunil Govindan

More Related Content

What's hot

Nvidia (History, GPU Architecture and New Pascal Architecture)

This presentation focuses on Nvidia GPUs and explores the topics of what a GPU is, its basic architecture, how it is different from a CPU, its basic working, and what new Nvidia has to offer in consumer as well as server market

Linux memory consumption

Linux memory consumption - Why memory utilities show a little amount of free RAM? How does Linux kernel utilizes free RAM? What is the real amount of free RAM in the system?

Wireshark入門 (2014版)

2014年10月31日開催「ネットワーク パケットを読む会 (仮)」でのセッション「いま改めて Wireshark 入門」の発表スライドです。

Wireshark の利用方法について、インストールから初期設定、パケットキャプチャの開始とフィルタリングまで解説しています。

Multicore computers

A multi-core processor is a single computing component with two or more independent actual processing units (called "cores"), which are units that read and execute program instructions. The instructions are ordinary CPU instructions (such as add, move data, and branch), but the multiple cores can run multiple instructions at the same time, increasing overall speed for programs amenable to parallel computing. Manufacturers typically integrate the cores onto a single integrated circuit die (known as a chip multiprocessor or CMP), or onto multiple dies in a single chip package.

Intel® hyper threading technology

This is a presentation about Intel Nehalm Micro-architecture and the reference of the slides is Intel white paper

GPU Architecture NVIDIA (GTX GeForce 480)

GPU architecture of NVIDIA's GPUs of series GeForce 400 - series GeForce 500. As both use same architecture i.e. Fermi Architecture.

Multi core processors

This presentation describes the processors, its types and how it is categorized on a basic level.

Doom Technical Review

The technical review I made for the DOOM series, presented at IranGDI's summer FPS games workshop.

What's hot (20)

High performance computing - building blocks, production & perspective

High performance computing - building blocks, production & perspective

Nvidia (History, GPU Architecture and New Pascal Architecture)

Nvidia (History, GPU Architecture and New Pascal Architecture)

Similar to Slides for In-Datacenter Performance Analysis of a Tensor Processing Unit

Infrastructure and Tooling - Full Stack Deep Learning

The Training and Evaluation Phases of Your Machine Learning Workflow.

See more at https://fullstackdeeplearning.com

[Hadoop Meetup] Tensorflow on Apache Hadoop YARN - Sunil Govindan![[Hadoop Meetup] Tensorflow on Apache Hadoop YARN - Sunil Govindan](data:image/gif;base64,R0lGODlhAQABAIAAAAAAAP///yH5BAEAAAAALAAAAAABAAEAAAIBRAA7)

![[Hadoop Meetup] Tensorflow on Apache Hadoop YARN - Sunil Govindan](data:image/gif;base64,R0lGODlhAQABAIAAAAAAAP///yH5BAEAAAAALAAAAAABAAEAAAIBRAA7)

Tensorflow on Apache Hadoop YARN during the Bangalore Hadoop Meetup by Sunil Govindan

Deep Learning with Spark and GPUs

Spark is a powerful, scalable real-time data analytics engine that is fast becoming the de facto hub for data science and big data. However, in parallel, GPU clusters is fast becoming the default way to quickly develop and train deep learning models. As data science teams and data savvy companies mature, they will need to invest in both platforms if they intend to leverage both big data and artificial intelligence for competitive advantage.

This talk will discuss and show in action:

* Leveraging Spark and Tensorflow for hyperparameter tuning

* Leveraging Spark and Tensorflow for deploying trained models

* An examination of DeepLearning4J, CaffeOnSpark, IBM's SystemML, and Intel's BigDL

* Sidecar GPU cluster architecture and Spark-GPU data reading patterns

* Pros, cons, and performance characteristics of various approaches

Attendees will leave this session informed on:

* The available architectures for Spark and Deep Learning and Spark with and without GPUs for Deep Learning

* Several deep learning software frameworks, their pros and cons in the Spark context and for various use cases, and their performance characteristics

* A practical, applied methodology and technical examples for tackling big data deep learning

GPU accelerated Large Scale Analytics

This is an excellent overview of how the GPU has been used to accelerate large scale data mining. Published by Ren

Monitoring of GPU Usage with Tensorflow Models Using Prometheus

Understanding the dynamics of GPU utilization and workloads in containerized systems is critical to creating efficient software systems. We create a set of dashboards to monitor and evaluate GPU performance in the context of TensorFlow. We monitor performance in real time to gain insight into GPU load, GPU memory and temperature metrics in a Kubernetes GPU enabled system. Visualizing TensorFlow training job metrics in real time using Prometheus allows us to tune and optimize GPU usage. Also, because Tensor flow jobs can have both GPU and CPU implementations it is useful to view detailed real time performance data from each implementation and choose the best implementation. To illustrate our system, we will show a live demo gathering and visualizing GPU metrics on a GPU enabled Kubernetes cluster with Prometheus and Grafana.

Deep Learning with Apache Spark and GPUs with Pierce Spitler

Apache Spark is a powerful, scalable real-time data analytics engine that is fast becoming the de facto hub for data science and big data. However, in parallel, GPU clusters are fast becoming the default way to quickly develop and train deep learning models. As data science teams and data savvy companies mature, they will need to invest in both platforms if they intend to leverage both big data and artificial intelligence for competitive advantage.

This session will cover:

– How to leverage Spark and TensorFlow for hyperparameter tuning and for deploying trained models

– DeepLearning4J, CaffeOnSpark, IBM’s SystemML and Intel’s BigDL

– Sidecar GPU cluster architecture and Spark-GPU data reading patterns

– The pros, cons and performance characteristics of various approaches

You’ll leave the session better informed about the available architectures for Spark and deep learning, and Spark with and without GPUs for deep learning. You’ll also learn about the pros and cons of deep learning software frameworks for various use cases, and discover a practical, applied methodology and technical examples for tackling big data deep learning.

IRJET-A Study on Parallization of Genetic Algorithms on GPUS using CUDA

https://irjet.net/archives/V5/i6/IRJET-V5I6166.pdf

Fugaku, the Successes and the Lessons Learned

The 4th R-CCS International Symposium

Satoshi Matsuoka

Director, R-CCS RIKEN

An Introduction to H2O4GPU

This talk was given at H2O World 2018 NYC and can be viewed here: https://youtu.be/rKoBJcnsFpM

Speaker's Bio:

Mateusz is a software developer who loves all things distributed, machine learning and hates buzzwords. His favourite hobby data juggling. He obtained his M.Sc. in Computer Science from AGH UST in Krakow, Poland, during which he did an exchange at L’ECE Paris in France and worked on distributed flight booking systems. After graduation he move to Tokyo to work as a researcher at Fujitsu Laboratories on machine learning and NLP projects, where he is still currently based. In his spare time he tries to be part of the IT community by organizing, attending and speaking at conferences and meet ups.

Intel python 2017

Out of the box usability, scaling for HPC and big data, optimized for Intel processors.

If you like what you read be sure you ♥ it below. Thank you!

Python* Scalability in Production Environments

Avoid rewriting Python* applications using tools and optimizations from Intel.

Deep Learning on the SaturnV Cluster

In this deck from Switzerland HPC Conference, Gunter Roeth from NVIDIA presents: Deep Learning on the SaturnV Cluster.

"Machine Learning is among the most important developments in the history of computing. Deep learning is one of the fastest growing areas of machine learning and a hot topic in both academia and industry. It has dramatically improved the state-of-the-art in areas such as speech recognition, computer vision, predicting the activity of drug molecules, and many other machine learning tasks. The basic idea of deep learning is to automatically learn to represent data in multiple layers of increasing abstraction, thus helping to discover intricate structure in large datasets. NVIDIA has invested in SaturnV, a large GPU-accelerated cluster, (#28 on the November 2016 Top500 list) to support internal machine learning projects. After an introduction to deep learning on GPUs, we will address a selection of open questions programmers and users may face when using deep learning for their work on these clusters."

Watch the video: http://wp.me/p3RLHQ-gDv

Learn more: http://www.nvidia.com/object/dgx-saturnv.html

and

http://hpcadvisorycouncil.com/events/2017/swiss-workshop/agenda.php

Sign up for our insideHPC Newsletter: http://insidehpc.com/newsletter

Advancing GPU Analytics with RAPIDS Accelerator for Spark and Alluxio

Alluxio Day III

April 27, 2021

Speakers:

Karthikeyan Rajendra & Dong Meng, NVIDIA

Similar to Slides for In-Datacenter Performance Analysis of a Tensor Processing Unit (20)

Infrastructure and Tooling - Full Stack Deep Learning

Infrastructure and Tooling - Full Stack Deep Learning

[Hadoop Meetup] Tensorflow on Apache Hadoop YARN - Sunil Govindan![[Hadoop Meetup] Tensorflow on Apache Hadoop YARN - Sunil Govindan](data:image/gif;base64,R0lGODlhAQABAIAAAAAAAP///yH5BAEAAAAALAAAAAABAAEAAAIBRAA7)

![[Hadoop Meetup] Tensorflow on Apache Hadoop YARN - Sunil Govindan](data:image/gif;base64,R0lGODlhAQABAIAAAAAAAP///yH5BAEAAAAALAAAAAABAAEAAAIBRAA7)

[Hadoop Meetup] Tensorflow on Apache Hadoop YARN - Sunil Govindan

Monitoring of GPU Usage with Tensorflow Models Using Prometheus

Monitoring of GPU Usage with Tensorflow Models Using Prometheus

Deep Learning with Apache Spark and GPUs with Pierce Spitler

Deep Learning with Apache Spark and GPUs with Pierce Spitler

IRJET-A Study on Parallization of Genetic Algorithms on GPUS using CUDA

IRJET-A Study on Parallization of Genetic Algorithms on GPUS using CUDA

Advancing GPU Analytics with RAPIDS Accelerator for Spark and Alluxio

Advancing GPU Analytics with RAPIDS Accelerator for Spark and Alluxio

Recently uploaded

欧洲杯冠军-欧洲杯冠军网站-欧洲杯冠军|【网址🎉ac123.net🎉】领先全球的买球投注平台

欧洲杯冠军是世界上最大的网上博彩公司之一,于直布罗陀注册,在200个国家拥有超过3500万客户。在国际上都可以称得上是极其优秀的博彩公司。总部位于英国Stoke-on-Trent,欧洲杯冠军在世界各地的雇员超过600名。该公司登录亚洲市场多年,近年针对亚洲的市场拓展的很快。它的特点是投注赔率富于变化,同时对于冷门赛事,赔率变化幅度会大于其它博彩公司。欧洲杯冠军在终赔阶段往往向立博、韦德等靠拢,一旦差异很大,往往会出现问题。

LORRAINE ANDREI_LEQUIGAN_GOOGLE CALENDAR

Google Calendar is a versatile tool that allows users to manage their schedules and events effectively. With Google Calendar, you can create and organize calendars, set reminders for important events, and share your calendars with others. It also provides features like creating events, inviting attendees, and accessing your calendar from mobile devices. Additionally, Google Calendar allows you to embed calendars in websites or platforms like SlideShare, making it easier for others to view and interact with your schedules.

一比一原版(UCSB毕业证)圣塔芭芭拉社区大学毕业证如何办理

UCSB毕业证文凭证书【微信95270640】☀《圣塔芭芭拉社区大学毕业证购买》Q微信95270640《UCSB毕业证可查真实》文凭、本科、硕士、研究生学历都可以做,留信认证的作用:

1:该专业认证可证明留学生真实留学身份。

2:同时对留学生所学专业等级给予评定。

3:国家专业人才认证中心颁发入库证书

4:这个入网证书并且可以归档到地方

5:凡是获得留信网入网的信息将会逐步更新到个人身份内,将在网内查询个人身份证信息后,同步读取人才网入库信息。

6:个人职称评审加20分。

7:个人信誉贷款加10分。

8:在国家人才网主办的全国网络招聘大会中纳入资料,供国家500强等高端企业选择人才《文凭UCSB毕业证书原版制作UCSB成绩单》仿制UCSB毕业证成绩单圣塔芭芭拉社区大学学位证书pdf电子图》。

如果您是以下情况,我们都能竭诚为您解决实际问题:【公司采用定金+余款的付款流程,以最大化保障您的利益,让您放心无忧】

1、在校期间,因各种原因未能顺利毕业,拿不到官方毕业证+微信95270640

2、面对父母的压力,希望尽快拿到圣塔芭芭拉社区大学圣塔芭芭拉社区大学本科毕业证成绩单;

3、不清楚流程以及材料该如何准备圣塔芭芭拉社区大学圣塔芭芭拉社区大学本科毕业证成绩单;

4、回国时间很长,忘记办理;

5、回国马上就要找工作,办给用人单位看;

6、企事业单位必须要求办理的;

面向美国乔治城大学毕业留学生提供以下服务:

【★圣塔芭芭拉社区大学圣塔芭芭拉社区大学本科毕业证成绩单毕业证、成绩单等全套材料,从防伪到印刷,从水印到钢印烫金,与学校100%相同】

【★真实使馆认证(留学人员回国证明),使馆存档可通过大使馆查询确认】

【★真实教育部认证,教育部存档,教育部留服网站可查】

【★真实留信认证,留信网入库存档,可查圣塔芭芭拉社区大学圣塔芭芭拉社区大学本科毕业证成绩单】

我们从事工作十余年的有着丰富经验的业务顾问,熟悉海外各国大学的学制及教育体系,并且以挂科生解决毕业材料不全问题为基础,为客户量身定制1对1方案,未能毕业的回国留学生成功搭建回国顺利发展所需的桥梁。我们一直努力以高品质的教育为起点,以诚信、专业、高效、创新作为一切的行动宗旨,始终把“诚信为主、质量为本、客户第一”作为我们全部工作的出发点和归宿点。同时为海内外留学生提供大学毕业证购买、补办成绩单及各类分数修改等服务;归国认证方面,提供《留信网入库》申请、《国外学历学位认证》申请以及真实学籍办理等服务,帮助众多莘莘学子实现了一个又一个梦想。

专业服务,请勿犹豫联系我

如果您真实毕业回国,对于学历认证无从下手,请联系我,我们免费帮您递交

诚招代理:本公司诚聘当地代理人员,如果你有业余时间,或者你有同学朋友需要,有兴趣就请联系我

你赢我赢,共创双赢

你做代理,可以帮助圣塔芭芭拉社区大学同学朋友

你做代理,可以拯救圣塔芭芭拉社区大学失足青年

你做代理,可以挽救圣塔芭芭拉社区大学一个个人才

你做代理,你将是别人人生圣塔芭芭拉社区大学的转折点

你做代理,可以改变自己,改变他人,给他人和自己一个机会娃于是天天扳着手指算计着读书也格外刻苦无奈时间总过得太慢太慢每次父亲往家打电话山娃总抢着接听一个劲地提醒父亲别忘了正月说的话电话那头总会传来父亲嘿嘿的笑连连说记得记得但别忘了拿奖状进城啊考试一结束山娃就迫不及待地给父亲挂电话:爸我拿奖了三好学生接我进城吧父亲果然没有食言第二天就请假回家接山娃离开爷爷奶奶的那一刻山娃又伤心得泪如雨下宛如军人奔赴前线般难舍和悲壮卧空调大巴挤长蛇列车山娃发现车上挤满了乡

加急办理美国南加州大学毕业证文凭毕业证原版一模一样

原版一模一样【微信:741003700 】【美国南加州大学毕业证文凭】【微信:741003700 】学位证,留信认证(真实可查,永久存档)offer、雅思、外壳等材料/诚信可靠,可直接看成品样本,帮您解决无法毕业带来的各种难题!外壳,原版制作,诚信可靠,可直接看成品样本。行业标杆!精益求精,诚心合作,真诚制作!多年品质 ,按需精细制作,24小时接单,全套进口原装设备。十五年致力于帮助留学生解决难题,包您满意。

本公司拥有海外各大学样板无数,能完美还原海外各大学 Bachelor Diploma degree, Master Degree Diploma

1:1完美还原海外各大学毕业材料上的工艺:水印,阴影底纹,钢印LOGO烫金烫银,LOGO烫金烫银复合重叠。文字图案浮雕、激光镭射、紫外荧光、温感、复印防伪等防伪工艺。材料咨询办理、认证咨询办理请加学历顾问Q/微741003700

留信网认证的作用:

1:该专业认证可证明留学生真实身份

2:同时对留学生所学专业登记给予评定

3:国家专业人才认证中心颁发入库证书

4:这个认证书并且可以归档倒地方

5:凡事获得留信网入网的信息将会逐步更新到个人身份内,将在公安局网内查询个人身份证信息后,同步读取人才网入库信息

6:个人职称评审加20分

7:个人信誉贷款加10分

8:在国家人才网主办的国家网络招聘大会中纳入资料,供国家高端企业选择人才

一比一原版(IIT毕业证)伊利诺伊理工大学毕业证如何办理

IIT本科学位证成绩单【微信95270640】(伊利诺伊理工大学毕业证成绩单本科学历)Q微信95270640(补办IIT学位文凭证书)伊利诺伊理工大学留信网学历认证怎么办理伊利诺伊理工大学毕业证成绩单精仿本科学位证书硕士文凭证书认证Seneca College diplomaoffer,Transcript办理硕士学位证书造假伊利诺伊理工大学假文凭学位证书制作IIT本科毕业证书硕士学位证书精仿伊利诺伊理工大学学历认证成绩单修改制作,办理真实认证、留信认证、使馆公证、购买成绩单,购买假文凭,购买假学位证,制造假国外大学文凭、毕业公证、毕业证明书、录取通知书、Offer、在读证明、雅思托福成绩单、假文凭、假毕业证、请假条、国际驾照、网上存档可查!

办国外伊利诺伊理工大学伊利诺伊理工大学毕业证offer教育部学历学位认证留信认证大使馆认证留学回国人员证明修改成绩单信封申请学校offer录取通知书在读证明offer letter。

快速办理高仿国外毕业证成绩单:

1伊利诺伊理工大学毕业证+成绩单+留学回国人员证明+教育部学历认证(全套留学回国必备证明材料给父母及亲朋好友一份完美交代);

2雅思成绩单托福成绩单OFFER在读证明等留学相关材料(申请学校转学甚至是申请工签都可以用到)。

3.毕业证 #成绩单等全套材料从防伪到印刷从水印到钢印烫金高精仿度跟学校原版100%相同。

专业服务请勿犹豫联系我!联系人微信号:95270640诚招代理:本公司诚聘当地代理人员如果你有业余时间有兴趣就请联系我们。

国外伊利诺伊理工大学伊利诺伊理工大学毕业证offer办理过程:

1客户提供办理信息:姓名生日专业学位毕业时间等(如信息不确定可以咨询顾问:我们有专业老师帮你查询);

2开始安排制作毕业证成绩单电子图;

3毕业证成绩单电子版做好以后发送给您确认;

4毕业证成绩单电子版您确认信息无误之后安排制作成品;

5成品做好拍照或者视频给您确认;

6快递给客户(国内顺丰国外DHLUPS等快读邮寄)。哪里父母对我们的爱和思念为我们的生命增加了光彩给予我们自由追求的力量生活的力量我们也不忘感恩正因为这股感恩的线牵着我们使我们在一年的结束时刻义无反顾的踏上了回家的旅途人们常说父母恩最难回报愿我能以当年爸爸妈妈对待小时候的我们那样耐心温柔地对待我将渐渐老去的父母体谅他们以反哺之心奉敬父母以感恩之心孝顺父母哪怕只为父母换洗衣服为父母喂饭送汤按摩酸痛的腰背握着父母的手扶着他们一步一步地慢慢散步.让我们间

一比一原版(UMich毕业证)密歇根大学|安娜堡分校毕业证如何办理

UMich硕士学位证成绩单【微信95270640】做UMich文凭、办UMich文凭、买UMich文凭Q微信95270640买办国外文凭UMich毕业证买学历咨询/代办美国毕业证成绩单文凭、办澳洲文凭毕业证、办加拿大大学毕业证文凭英国毕业证学历认证-毕业证文凭成绩单、假文凭假毕业证假学历书制作仿制、改成绩、教育部学历学位认证、毕业证、成绩单、文 凭、UMich学历文凭、UMich假学位证书、毕业证文凭、、文凭毕业证、毕业证认证、留服认证、使馆认证、使馆证明 、使馆留学回国人员证明、留学生认证、学历认证、文凭认证、学位认证

[留学文凭学历认证(留信认证使馆认证)密歇根大学|安娜堡分校毕业证成绩单毕业证证书大学Offer请假条成绩单语言证书国际回国人员证明高仿教育部认证申请学校等一切高仿或者真实可查认证服务。

多年留学服务公司,拥有海外样板无数能完美1:1还原海外各国大学degreeDiplomaTranscripts等毕业材料。海外大学毕业材料都有哪些工艺呢?工艺难度主要由:烫金.钢印.底纹.水印.防伪光标.热敏防伪等等组成。而且我们每天都在更新海外文凭的样板以求所有同学都能享受到完美的品质服务。

国外毕业证学位证成绩单办理方法:

1客户提供办理密歇根大学|安娜堡分校密歇根大学|安娜堡分校毕业证假文凭信息:姓名生日专业学位毕业时间等(如信息不确定可以咨询顾问:我们有专业老师帮你查询);

2开始安排制作毕业证成绩单电子图;

3毕业证成绩单电子版做好以后发送给您确认;

4毕业证成绩单电子版您确认信息无误之后安排制作成品;

5成品做好拍照或者视频给您确认;

6快递给客户(国内顺丰国外DHLUPS等快读邮寄)

— — — — 我们是挂科和未毕业同学们的福音我们是实体公司精益求精的工艺! — — — -

一真实留信认证的作用(私企外企荣誉的见证):

1:该专业认证可证明留学生真实留学身份同时对留学生所学专业等级给予评定。

2:国家专业人才认证中心颁发入库证书这个入网证书并且可以归档到地方。

3:凡是获得留信网入网的信息将会逐步更新到个人身份内将在公安部网内查询个人身份证信息后同步读取人才网入库信息。

4:个人职称评审加20分个人信誉贷款加10分。

5:在国家人才网主办的全国网络招聘大会中纳入资料供国家500强等高端企业选择人才。听话天天呆在小屋里除了看书写作业就是睡觉看电视屋里很黑很闷白天也得开灯开风扇山娃不想浪费电总将小方桌搁在门口看书写作业有一次山娃坐在门口写作业写着写着竟伏在桌上睡着了迷迷糊糊中山娃似乎听到了父亲的脚步声当他晃晃悠悠站起来时才诧然发现一位衣衫破旧的妇女挎着一只硕大的蛇皮袋手里拎着长铁钩正站在门口朝黑色的屋内张望不好坏人小偷山娃一怔却也灵机一动立马仰起头双手拢在嘴边朝楼上大喊:“爸爸爸——有人找——喜

Building a Raspberry Pi Robot with Dot NET 8, Blazor and SignalR - Slides Onl...

In this session delivered at Leeds IoT, I talk about how you can control a 3D printed Robot Arm with a Raspberry Pi, .NET 8, Blazor and SignalR.

I also show how you can use a Unity app on an Meta Quest 3 to control the arm VR too.

You can find the GitHub repo and workshop instructions here;

https://bit.ly/dotnetrobotgithub

Recently uploaded (9)

Building a Raspberry Pi Robot with Dot NET 8, Blazor and SignalR - Slides Onl...

Building a Raspberry Pi Robot with Dot NET 8, Blazor and SignalR - Slides Onl...

Production.pptxd dddddddddddddddddddddddddddddddddd

Production.pptxd dddddddddddddddddddddddddddddddddd

Slides for In-Datacenter Performance Analysis of a Tensor Processing Unit

- 2. Motivation for TPU • 2006: “Just run DNNs on our CPU datacenter. It’s basically free.” • 2013: “3 minutes of DNN-based voice search == 2x more datacenter compute.”

- 3. The Players Norman P. Jouppi and his two musketeers. David Patterson 70+ other Google engineers

- 4. • 30-80x TOPS/watt vs. 2015 CPUs and GPUs. • 8 GiB DRAM. • 8-bit fixed point. • 256x256 MAC unit. • Support for data reordering, matrix multiply, activation, pooling, and normalization. Tensor Processing Unit (TPU)

- 5. “The unexpected desire for TPUs by many Google services combined with the preference for low response time changed the equation, with application writers often opting for reduced latency over waiting for bigger batches to accumulate.” Application Testbed

- 6. TPU Block Diagram & Floor Plan

- 7. Experimental Testbed 8x K80 GPUs

- 9. Rooflines of TPU with DNN Apps

- 10. App breakdown by Performance Counters

- 12. Programming the TPU Programming FPGAs TensorFlow graph TPU host instructions TPU bitstream

- 13. NVIDIA’s Rebuttal to the TPU https://blogs.nvidia.com/blog/2017/04/10/ai-drives-rise-accelerated-computing-datacenter/

- 14. “Patterson” Discussion 1. Fallacy: NN inference applications in data centers value throughput as much as response time. 2. Fallacy: The K80 GPU architecture is a good match to NN inference. 3. Pitfall: Architects have neglected important NN tasks. 4. Pitfall: For NN hardware, Inferences Per Second (IPS) is an inaccurate summary performance metric. 5. Fallacy: The K80 GPU results would be much better if Boost mode were enabled. 6. Fallacy: CPU and GPU results would be comparable to the TPU if we used them more efficiently or compared to newer versions. 7. Pitfall: Performance counters added as an afterthought for NN hardware. 8. Fallacy: After two years of software tuning, the only path left to increase TPU performance is hardware upgrades.

- 15. “CNNs constitute only about 5% of the representative NN workload for Google. More attention should be paid to MLPs and LSTMs. Repeating history, it’s similar to when many architects concentrated on floating- point performance when most mainstream workloads turned out to be dominated by integer operations.” Interesting quote