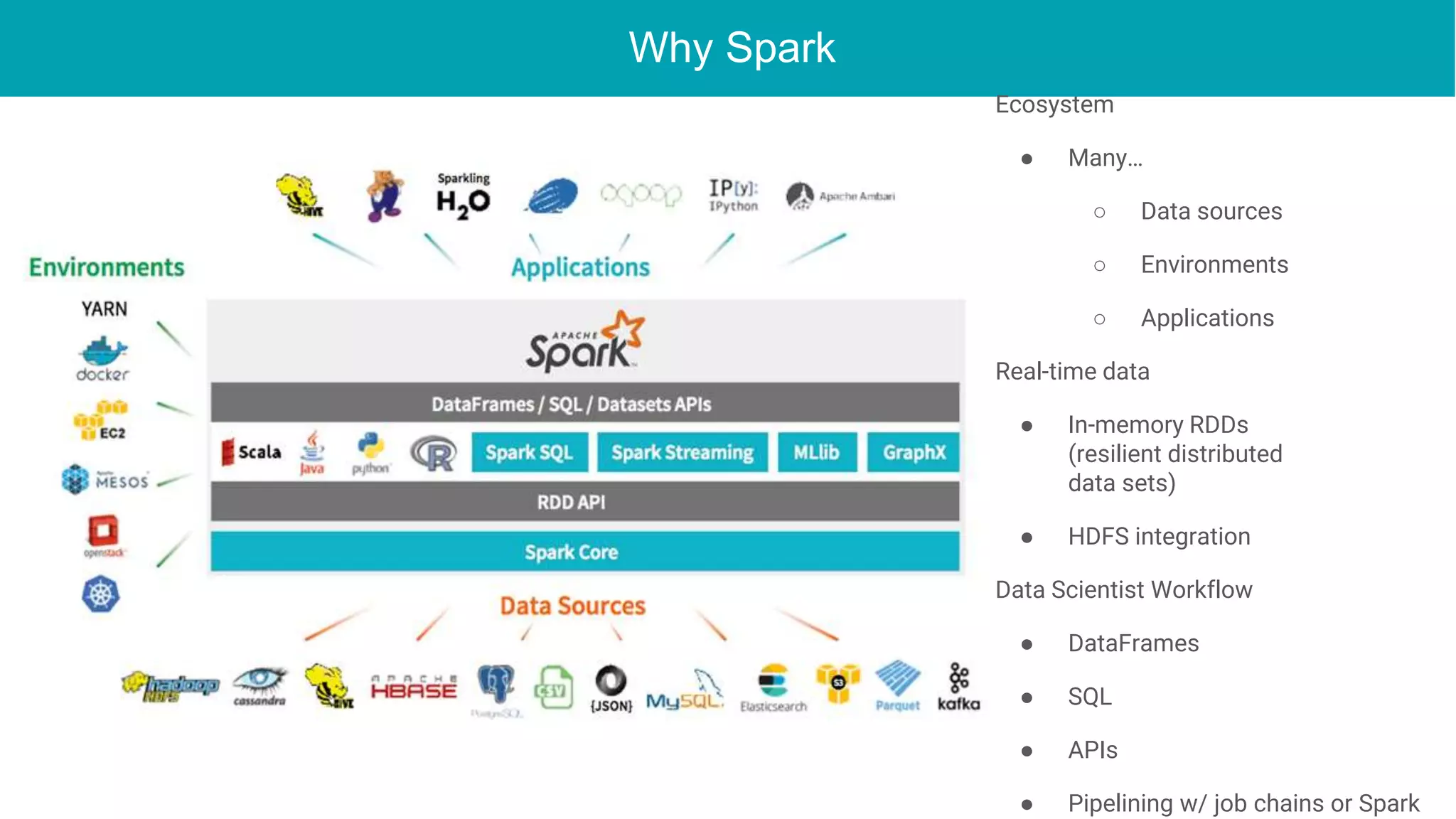

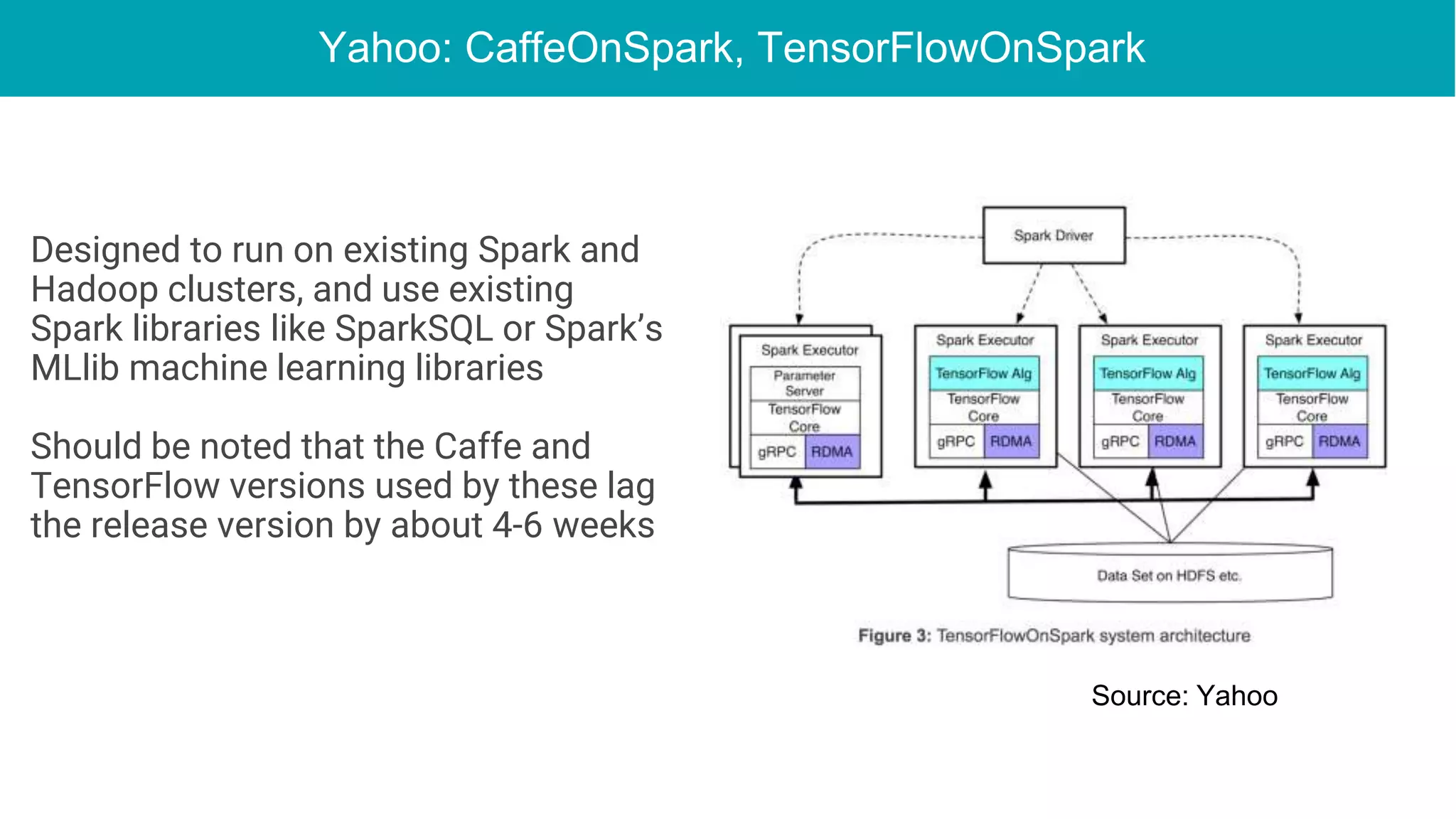

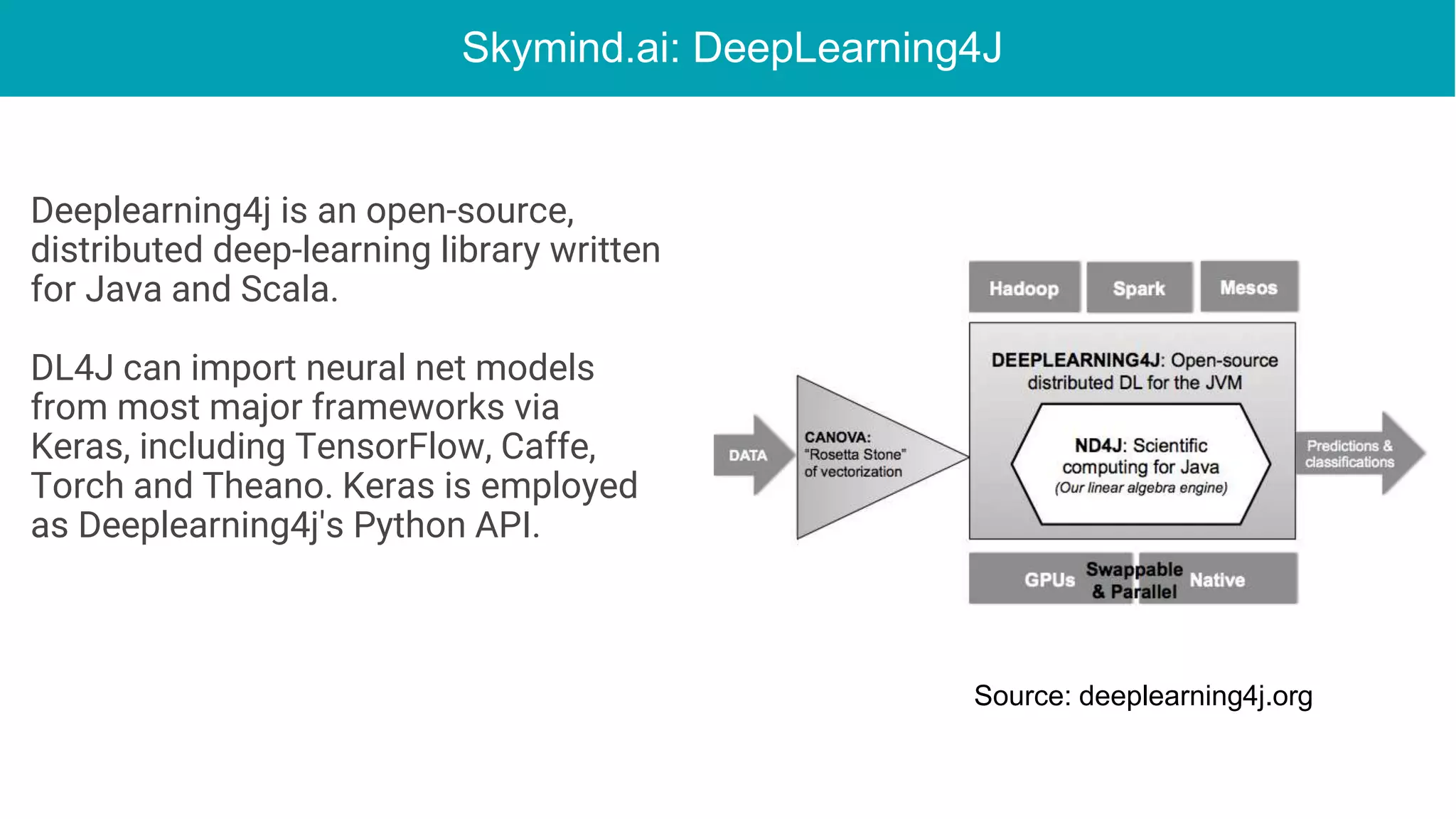

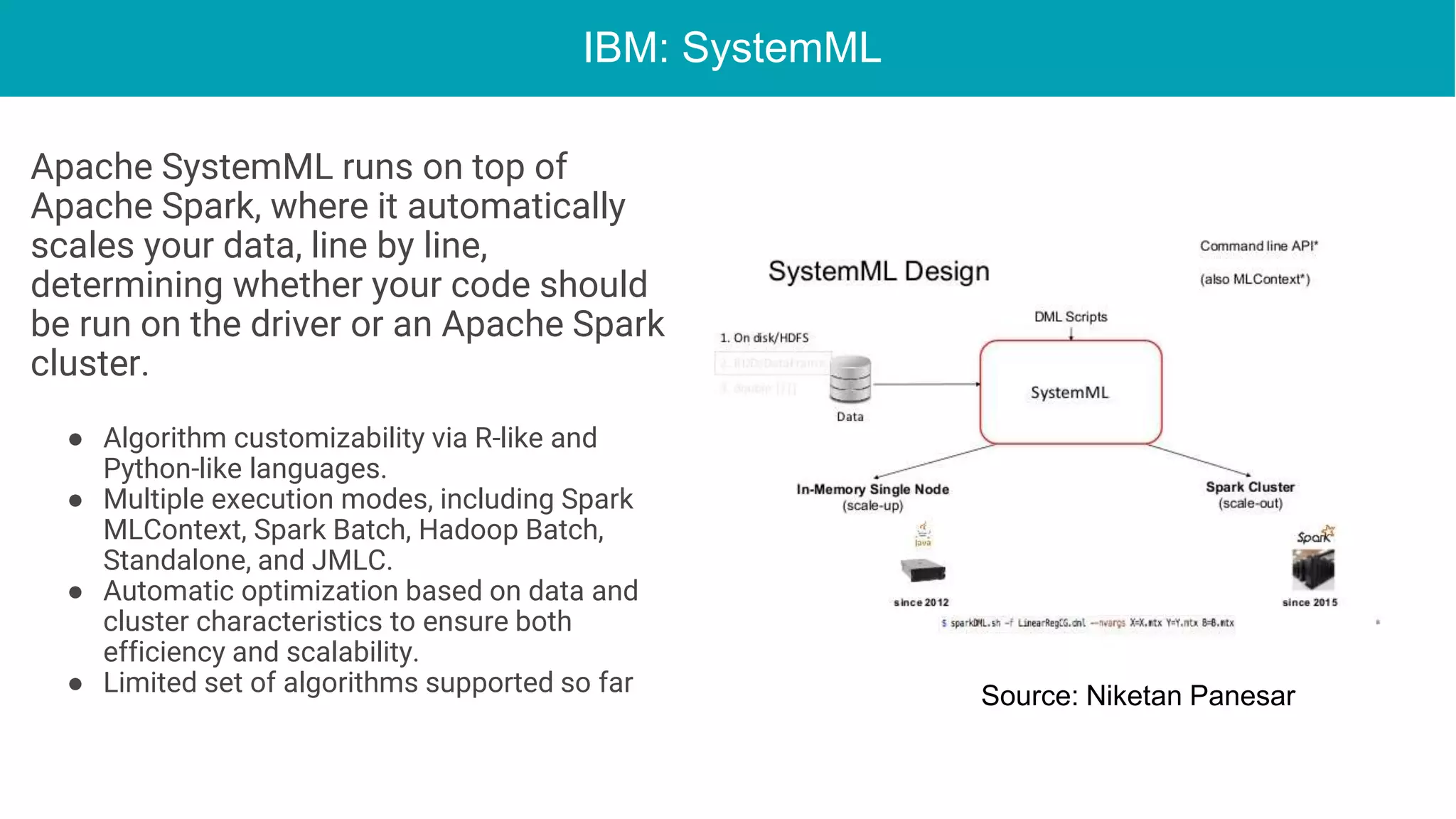

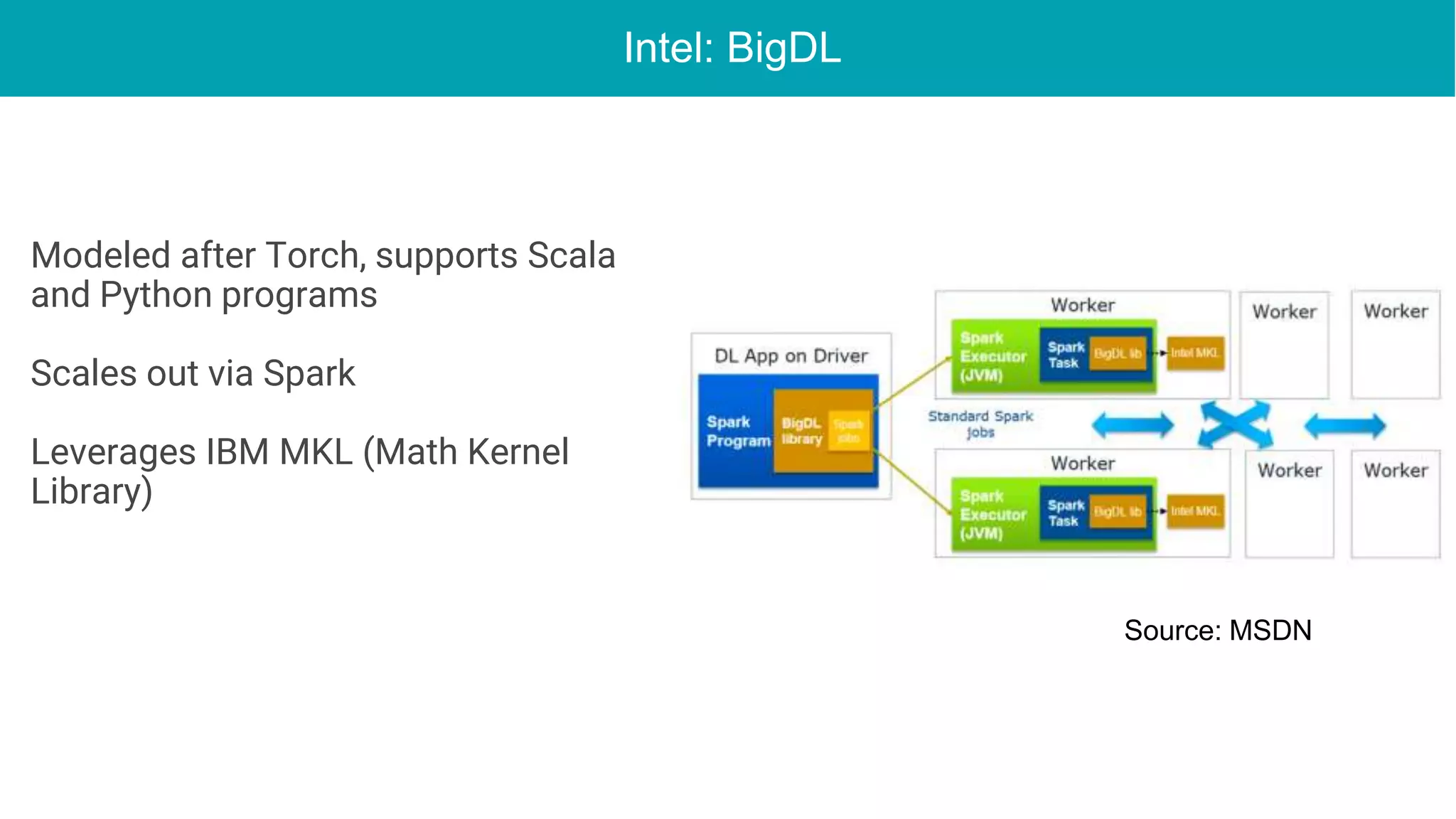

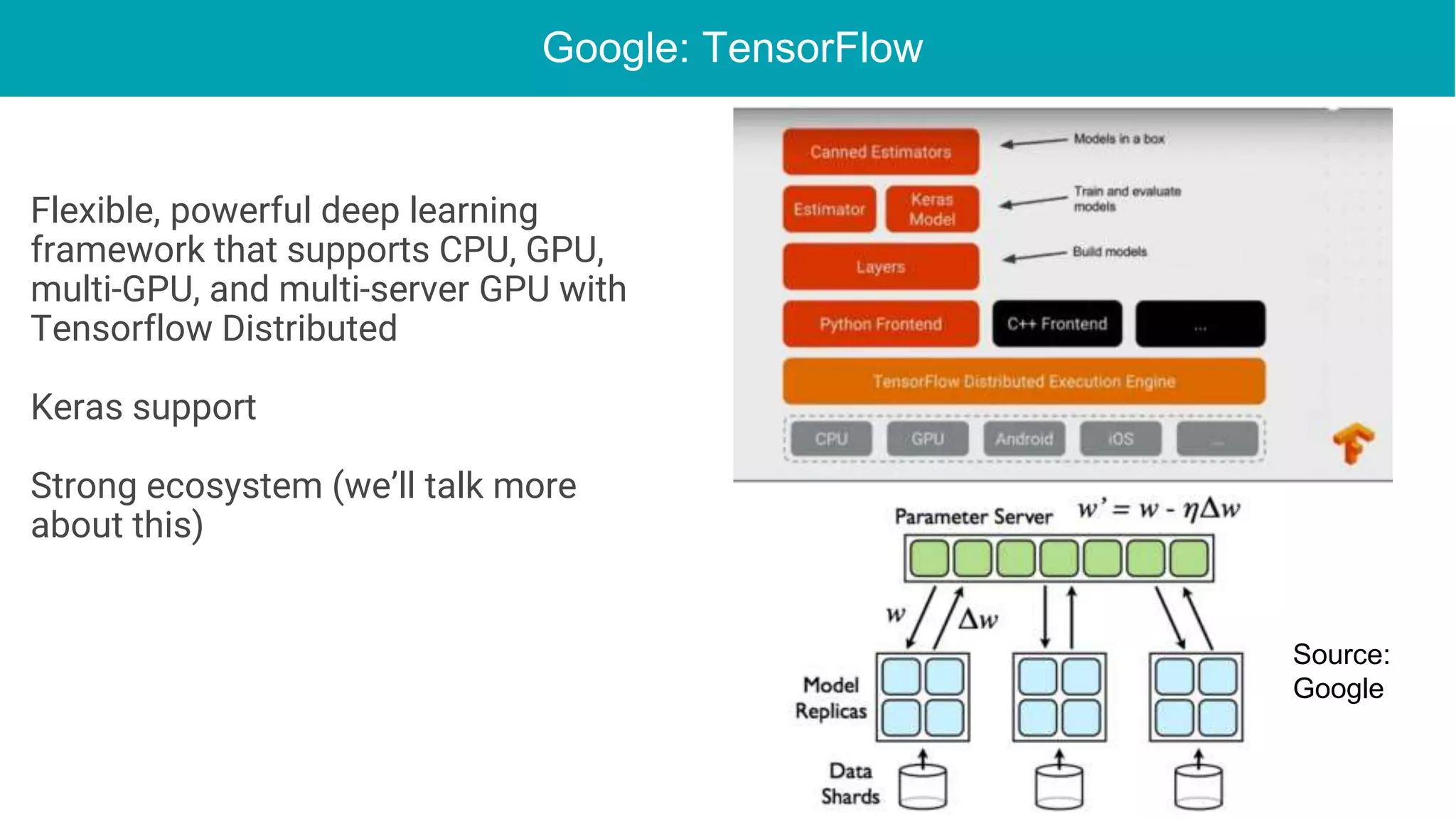

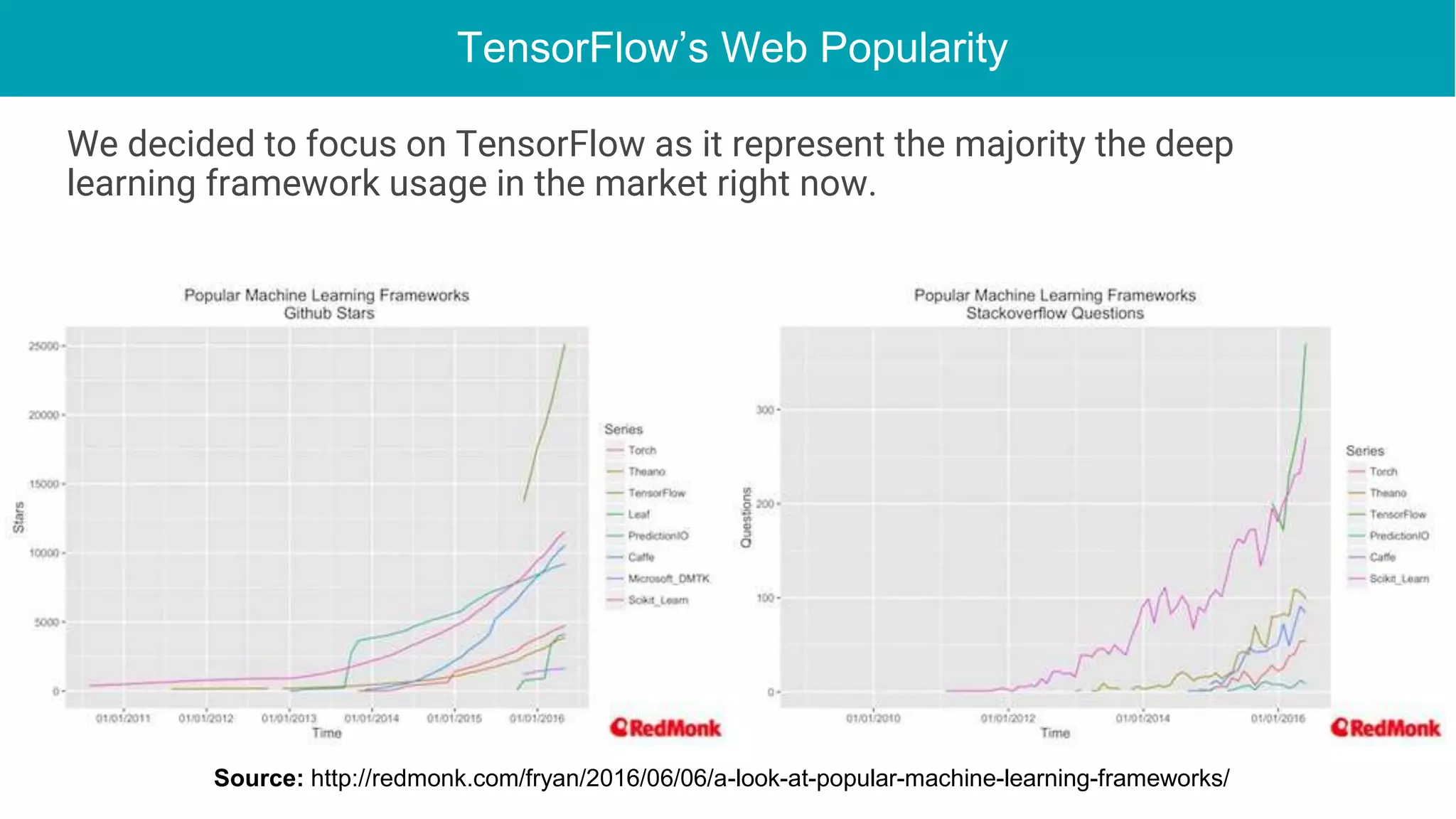

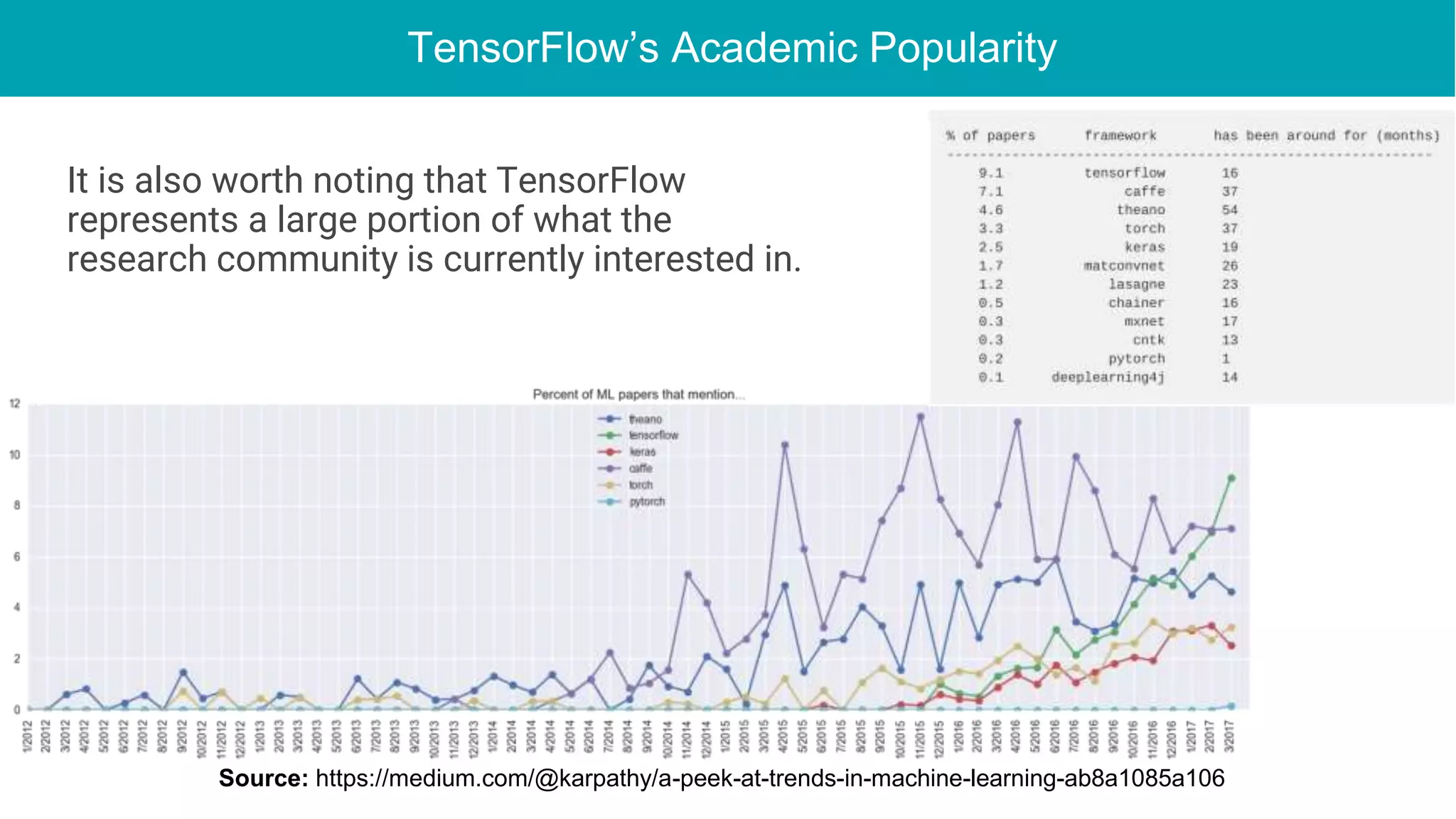

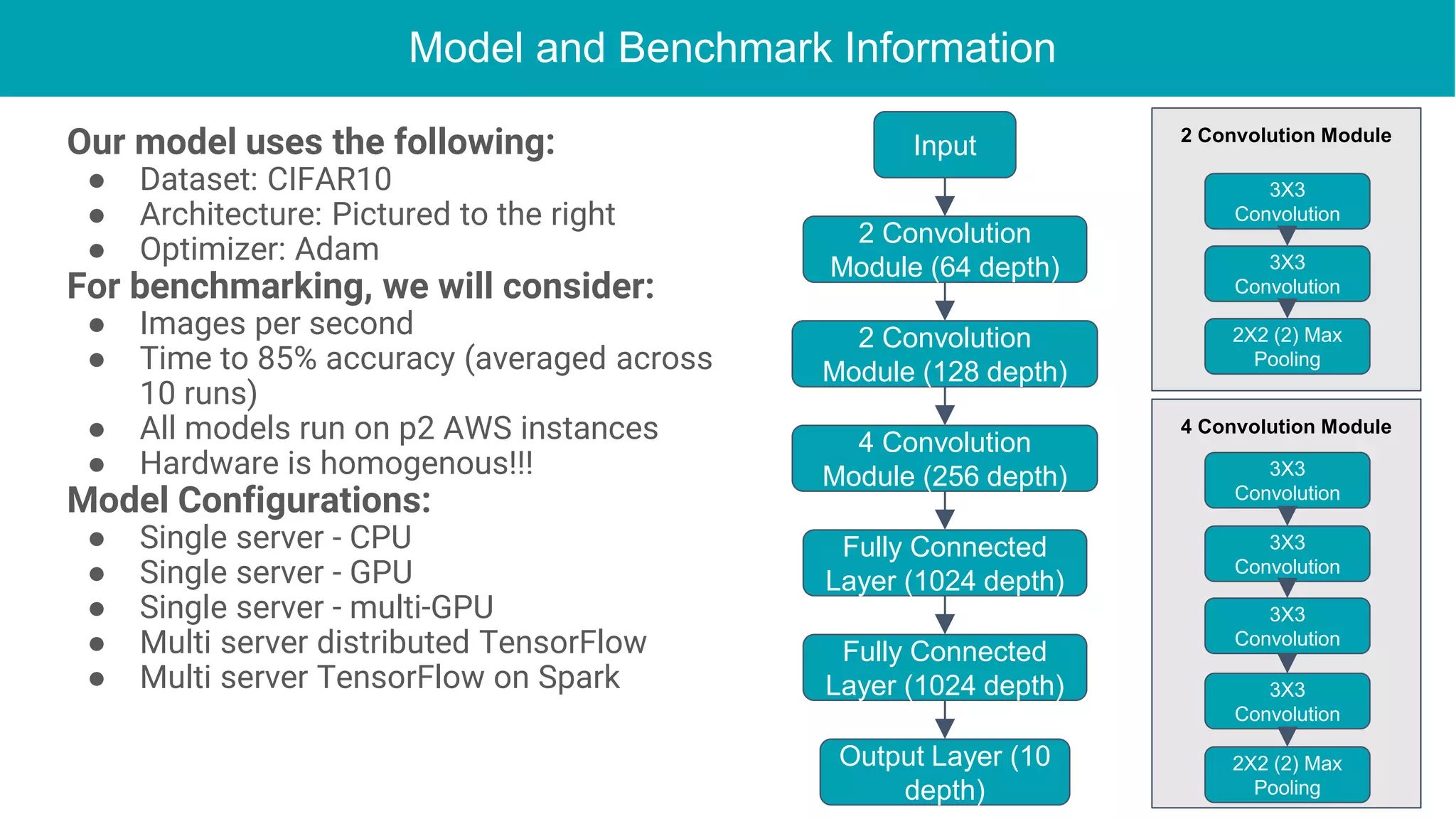

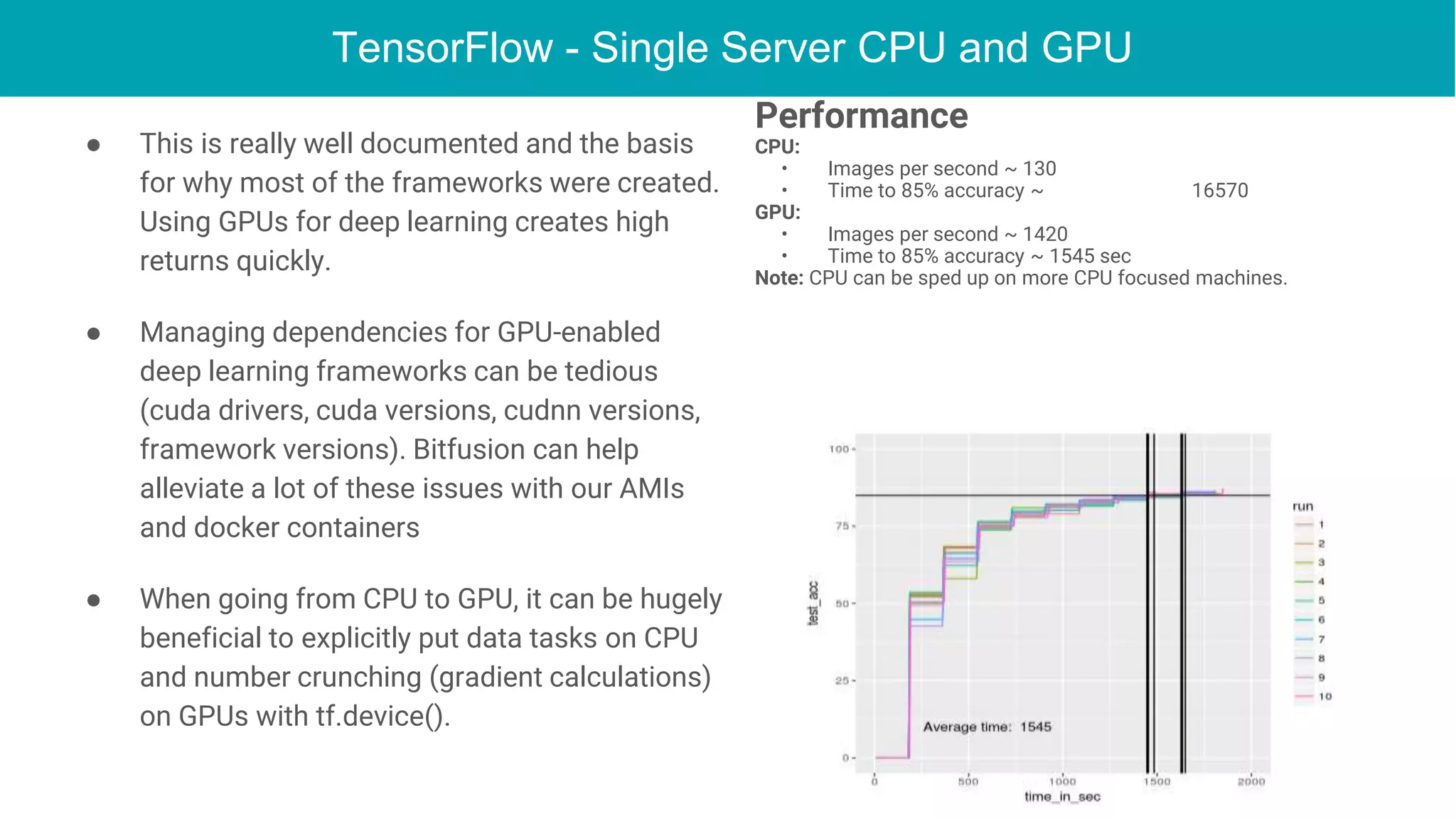

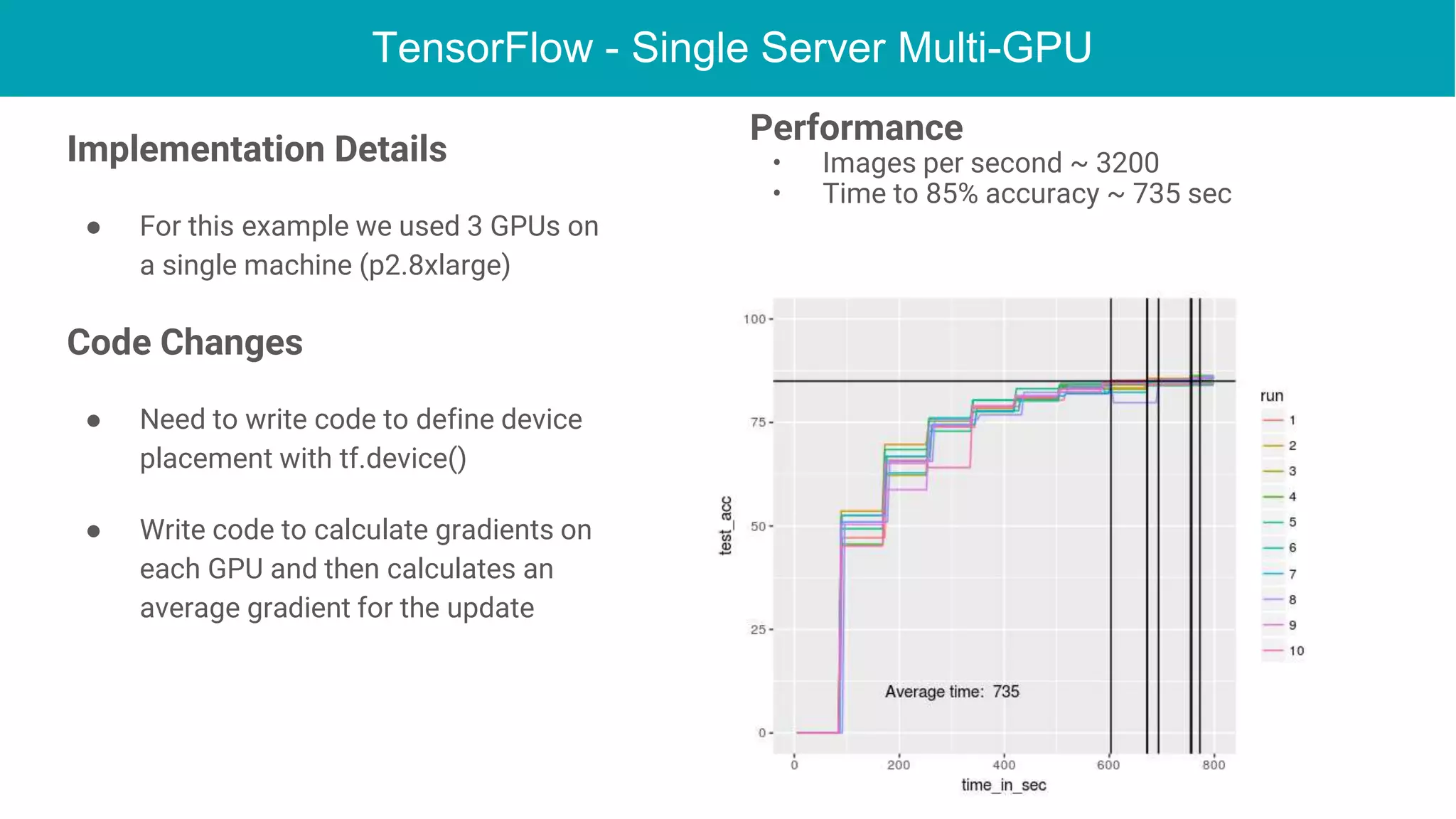

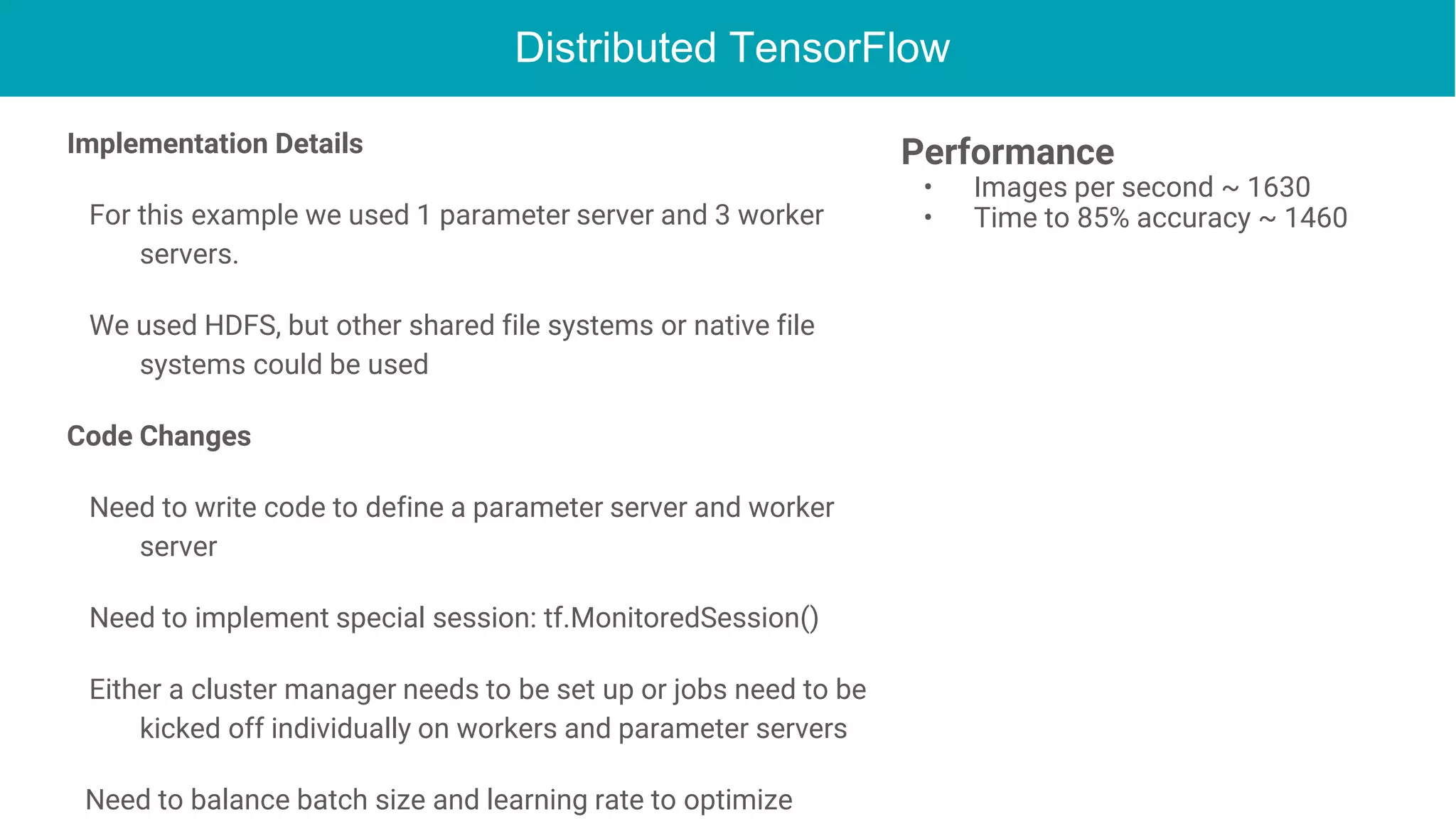

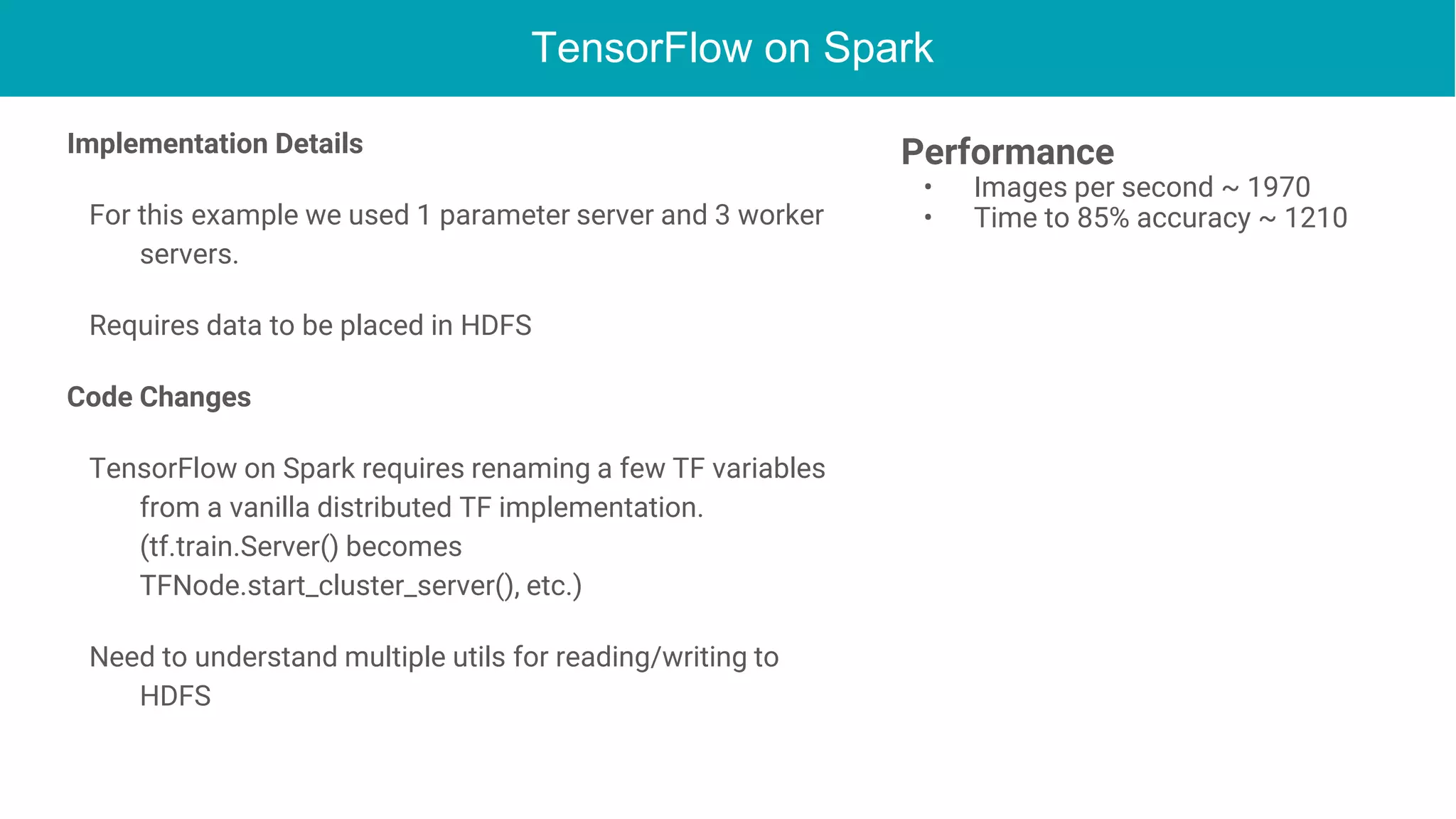

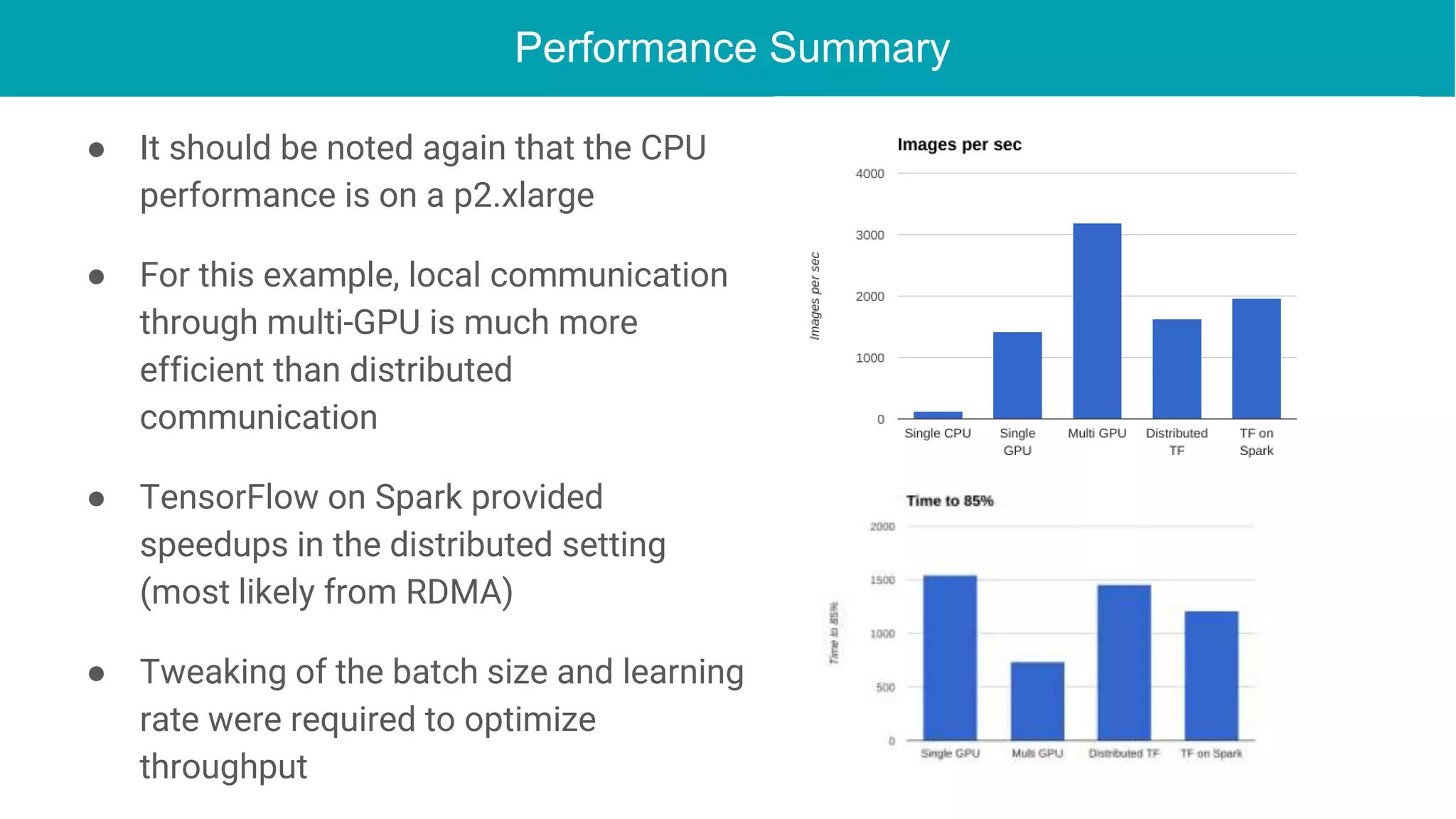

This document discusses the integration of deep learning frameworks with Apache Spark and GPU clusters, emphasizing their importance for data science and AI. It reviews frameworks such as TensorFlow, Caffe, and Deeplearning4j, detailing their functionality and performance in single-server and distributed configurations. It also outlines practical considerations for optimizing performance based on hardware and network setup, and notes future research directions in the field.